For the past few years, the artificial intelligence industry has focused on one goal above all others: generation. Large Language Models (LLMs) were trained to solve the “blank page” problem, allowing businesses, marketers, and developers to produce thousands of words in a matter of seconds. In that regard, the technology has been a staggering success.

However, as we move deeper into 2026, the novelty of instant generation has worn off. We have solved the blank page problem, but we have accidentally created a new one: the “sea of sameness.”

When everyone has access to the exact same generative models, the competitive advantage of simply producing content drops to zero. Audiences, search engines, and enterprise clients are becoming highly sensitive to the robotic, mathematically perfect cadence of machine-generated text. The next frontier in AI development isn’t just about creating content faster; it is about creating content that actually sounds human.

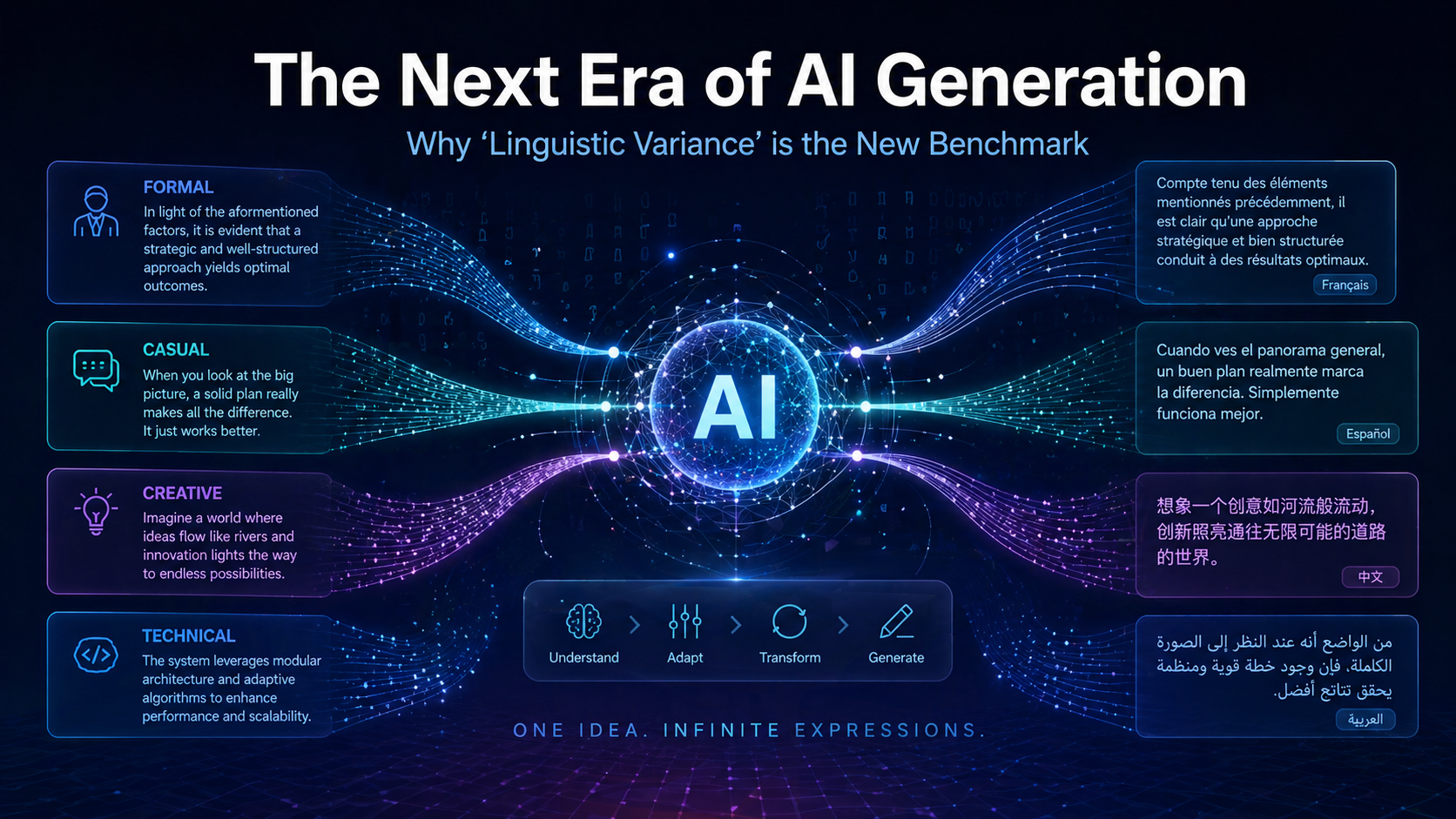

To achieve this, the tech industry is turning its attention to a new benchmark: Linguistic Variance.

The Anatomy of Machine Prose: Why AI Sounds Like AI

To understand why AI humanization is becoming a critical sector of the tech industry, we first have to understand how LLMs write. AI models do not “think” or craft narratives; they operate on statistical probabilities, predicting the most likely next token (roughly, a word or word fragment) in a sequence based on patterns learned from their massive training datasets.

Because they are designed to be helpful, safe, and universally understood, LLMs naturally gravitate toward the statistical middle. This results in text that suffers from a lack of variance, measured by two primary metrics:

1. Low Perplexity: Perplexity measures how predictable a piece of text is to a language model; the more predictable the text, the lower its perplexity. If a sentence uses common vocabulary and standard phrasing, it has low perplexity. AI models default to low perplexity because they are built to favor high-probability word choices. Humans, on the other hand, frequently use idiosyncratic vocabulary, surprising metaphors, and unconventional phrasing.

2. Low Burstiness: Burstiness refers to the variation in sentence length and structure. Human thought is erratic; we write long, complex, explanatory sentences and follow them immediately with short, punchy statements. AI models tend to produce text with high uniformity: sentences that are all roughly the same length, leading to a monotonous, drone-like reading experience.
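Both metrics can be approximated in a few lines of Python. The sketch below rests on assumptions that are not part of the article: perplexity is computed from per-token probabilities that some language model has already assigned to the text (the model itself is not shown), and burstiness, which has no single standard formula, is taken here as the coefficient of variation of sentence lengths. The function names are hypothetical.

```python
import math
import re
import statistics


def perplexity(token_probs):
    """Perplexity given the probability a model assigned to each token.

    Assumption: token_probs comes from some language model scoring the
    text; obtaining those probabilities is outside this sketch.
    """
    # Perplexity is exp of the average negative log-probability per token.
    avg_neg_log = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(avg_neg_log)


def sentence_lengths(text):
    """Word count of each sentence, using a naive split on . ! ?"""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return [len(s.split()) for s in sentences]


def burstiness(text):
    """Illustrative burstiness score: stdev / mean of sentence lengths.

    Assumption: the coefficient of variation is one plausible proxy,
    not an agreed-upon definition.
    """
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0  # not enough sentences to measure variation
    return statistics.stdev(lengths) / statistics.mean(lengths)
```

A model that gives every token a probability of 0.5 yields a perplexity of 2, and three sentences of identical length yield a burstiness of 0. Higher values on either score indicate less predictable, more varied text.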

When a piece of text has low perplexity and low burstiness, it carries a “synthetic signature.” It may be grammatically flawless, but it lacks the rhythm of human communication.

The Business Impact of the “Synthetic Signature”

The inability of raw AI to mimic human linguistic variance is no longer just an academic observation; it has severe business implications.

First, there is the issue of Brand Trust. In marketing and business communications, empathy and authenticity are the primary drivers of conversion. When a potential client reads an email outreach campaign or a landing page that feels artificially generated, trust evaporates. The reader feels processed rather than spoken to.

Second, there is the SEO and Discoverability Impact. Search engines are continuously updating their algorithms to prioritize “Helpful Content” and E-E-A-T (Experience, Expertise, Authoritativeness, and Trustworthiness). While search engines do not outright penalize AI content simply for being AI, they do penalize content that offers no unique value or reads like a generic compilation of existing data. Text that lacks linguistic variance tends to read as low-effort or unhelpful, which can lead to poor search visibility.

The Rise of Auditing and Humanization Tools

Because of these challenges, a new sub-industry of AI tools has emerged. The focus has shifted from “Text Generation” to “Text Orchestration.”

Initially, the market saw a flood of AI detectors designed to catch synthetic signatures. However, detection alone is a passive process. The real value lies in active refinement. This has led to the development of sophisticated humanization platforms that programmatically restructure AI drafts.

Instead of just swapping words with a thesaurus, modern utility tools like the Text2Human platform are engineered to address the core mathematical issues of burstiness and perplexity. By analyzing a raw AI draft, these tools can dynamically inject structural variance, breaking up monotonous sentence patterns and introducing the natural inconsistencies that define human writing. This effectively bridges the gap between machine efficiency and authentic communication.

Building a “Human-First” AI Workflow

For developers, content teams, and businesses looking to leverage AI without sacrificing their brand voice, it is time to implement a structured, human-first workflow. Relying on “zero-shot” prompting (asking the AI to write a final draft in one go) is a recipe for generic output.

Instead, modern workflows should follow a four-step orchestration process:

Step 1: The Generative Scaffold. Use your preferred LLM (like ChatGPT, Claude, or Gemini) for heavy lifting. Let the AI conduct research, outline the structure, and generate the foundational draft. At this stage, do not worry about the tone; focus purely on the accuracy of the information.

Step 2: The Transparency Audit. Run the initial draft through an AI auditing tool. This is not about policing the text; it is a diagnostic step. The audit will highlight the “robotic hot zones,” the paragraphs that suffer from severe structural uniformity and low burstiness.

Step 3: Algorithmic Humanization. Use a humanization engine to process the draft. By instructing the tool to increase linguistic variance, you can automatically restructure the monotonous sections. The goal here is to elevate the text from a statistical average to a piece of writing with natural rhythm and flow.

Step 4: The Personal Injection. This is the final, irreplaceable step. A human editor must review the varied draft and inject elements that an AI simply cannot know. This includes proprietary data, first-hand case studies, subjective opinions, and personal anecdotes. This is how you fulfill the “Experience” requirement of modern content standards.
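To make the audit in Step 2 concrete, here is a minimal sketch of how a script might flag “robotic hot zones” in a draft. It is not how any particular auditing tool works. It assumes paragraphs are separated by blank lines, scores each paragraph by the coefficient of variation of its sentence lengths (an illustrative proxy for burstiness), and uses a hypothetical threshold of 0.25 that would need tuning against real text.

```python
import re
import statistics


def sentence_lengths(paragraph):
    """Word count of each sentence, using a naive split on . ! ?"""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", paragraph.strip()) if s]
    return [len(s.split()) for s in sentences]


def find_hot_zones(draft, threshold=0.25, min_sentences=3):
    """Return indexes of paragraphs whose sentence lengths are too uniform.

    Assumptions: threshold and min_sentences are illustrative defaults,
    and paragraphs are separated by blank lines.
    """
    paragraphs = [p for p in draft.split("\n\n") if p.strip()]
    hot_zones = []
    for index, paragraph in enumerate(paragraphs):
        lengths = sentence_lengths(paragraph)
        if len(lengths) < min_sentences:
            continue  # too short to judge rhythm either way
        score = statistics.stdev(lengths) / statistics.mean(lengths)
        if score < threshold:
            hot_zones.append(index)
    return hot_zones


draft = (
    "The cat sat down. The dog ran off. The bird flew up.\n\n"
    "Stop. This sentence, by contrast, wanders on for quite a while "
    "before it ends. Short again."
)
print(find_hot_zones(draft))  # prints [0]
```

The flagged indexes can then be handed to Step 3, so that only the uniform paragraphs are restructured and the sections that already have natural rhythm are left alone.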

Conclusion: The Human Element as a Premium Feature

Artificial intelligence is arguably the greatest productivity multiplier of our generation. However, as AI-generated text becomes a ubiquitous commodity, the standard of quality will inevitably shift.

In a digital landscape overflowing with mathematically perfect, highly predictable content, the messy, varied, and surprising nature of human prose will become a premium feature. Businesses and developers who understand the mechanics of linguistic variance and who actively use tools to humanize their digital workflows will be the ones who successfully capture audience attention and trust in the years to come.

The future of AI isn’t just about sounding smart; it’s about sounding real.