Key Takeaways

- Traditional app advertising platforms were built to capture demand, not to understand it, which is why ROAS is degrading across most major channels.

- The “black box” era of mobile advertising is ending. Advertisers now demand not just results, but traceable logic behind every match and install.

- AI-driven campaign infrastructure, not AI as a bolt-on feature, is what’s separating sustainable ROAS from budget waste in 2026.

- Rising user acquisition costs, post-IDFA signal loss, and shorter retention windows have made creative intelligence and intent-based targeting non-negotiable.

- The next competitive advantage in app marketing isn’t bigger budgets; it’s better inputs: sharper signals, smarter systems, and infrastructure that optimizes toward outcomes that actually matter.

Introduction

Here’s the uncomfortable truth that most mobile marketing teams are sitting with right now: they’re spending more, and getting less.

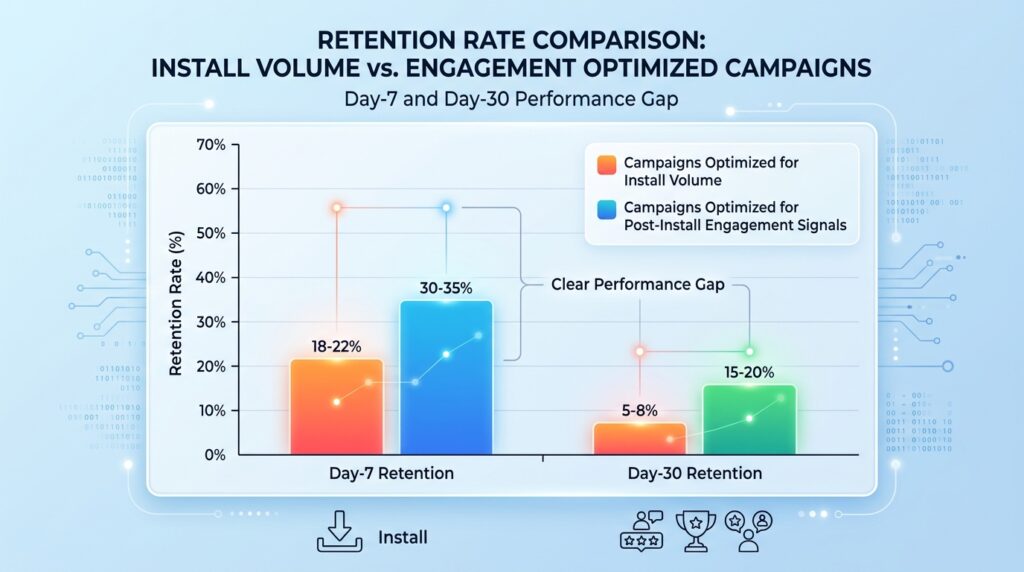

Cost-per-install across major verticals climbed steadily through 2025. Day-7 retention rates for most app categories hover below 25%. And the platforms that dominate the bulk of app advertising budgets are still running on targeting logic that was designed for a different era of the mobile internet, one where users moved predictably, privacy signals were abundant, and install volume was a reliable proxy for business value.

That era is over.

In 2026, ROAS isn’t a metric you optimize at the margin. It’s a structural question about whether the advertising infrastructure you’re using was ever designed to deliver the kind of return you actually need. The marketers pulling ahead aren’t doing it by squeezing better performance out of systems that weren’t built for this environment. They’re switching to systems that were.

This article breaks down exactly why traditional app advertising is struggling to hold its ground and how AI-driven discovery is changing the math.

Why Traditional App Advertising Is Losing Ground

Let’s start with what changed, because the problem isn’t new spending habits. It’s a compounding set of infrastructure failures that most marketing teams have been patching around rather than solving.

Signal Loss Changed the Game Permanently

Apple’s App Tracking Transparency framework didn’t just reduce signal volume — it changed the fundamental relationship between advertisers and user data. When IDFA became opt-in, most major platforms lost the cross-app behavioral thread that made lookalike audiences and retargeting actually work.

The industry has spent several years adapting, but the adaptations have been uneven. SKAdNetwork attribution is limited by design. Google’s Privacy Sandbox is still evolving. And the platforms that depended most heavily on deterministic user-level data are still figuring out what probabilistic modeling actually looks like at scale.

The result? Advertisers end up bidding against audiences that look right on paper but convert at rates that no longer justify the cost.

Install Volume Stopped Predicting Revenue

For most of the 2010s, user acquisition teams ran on a simple hypothesis: more installs equal more revenue. Scale the volume, improve the funnel, and LTV will follow. The platforms that dominated that era (Meta’s app install ads, today’s Advantage+ app campaigns; Google UAC; Apple Search Ads) were all optimized around that hypothesis.

It no longer holds.

High-volume, low-intent installs are cheap to buy and expensive to carry. A user who installs because of a broadly targeted ad, engages for 36 hours, and then churns generates a negative return once you factor in server costs, onboarding infrastructure, and the customer support burden. In subscription, gaming, fintech, and ecommerce apps, the gap between an install that generates LTV and one that doesn’t has never been wider.
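To make “cheap to buy and expensive to carry” concrete, here is a back-of-the-envelope sketch in Python. Every figure in it is a hypothetical placeholder rather than benchmark data, and the cost categories simply mirror the ones named above (media cost, server costs, onboarding, support).

```python
from dataclasses import dataclass


@dataclass
class InstallEconomics:
    """Per-user economics of one acquired install (all inputs hypothetical)."""
    cost_per_install: float  # media cost paid for the install
    server_cost: float       # infrastructure cost while the user is active
    onboarding_cost: float   # per-user share of onboarding infrastructure
    support_cost: float      # expected customer-support cost per user
    revenue: float           # revenue the user generates before churning

    def net_return(self) -> float:
        """Revenue minus every cost attributable to this install."""
        total_cost = (self.cost_per_install + self.server_cost
                      + self.onboarding_cost + self.support_cost)
        return self.revenue - total_cost


# Hypothetical low-intent user: cheap install, churns after ~36 hours.
low_intent = InstallEconomics(cost_per_install=1.50, server_cost=0.20,
                              onboarding_cost=0.40, support_cost=0.30, revenue=0.25)
# Hypothetical high-intent user: pricier install that converts to a paid plan.
high_intent = InstallEconomics(cost_per_install=6.00, server_cost=1.50,
                               onboarding_cost=0.40, support_cost=0.50, revenue=19.99)

for label, user in [("low-intent", low_intent), ("high-intent", high_intent)]:
    print(f"{label}: net return {user.net_return():+.2f}")
```

Swap in your own per-user cost estimates; the point is only that a low cost-per-install says nothing about whether the install ever pays back.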

Optimizing for install volume in that environment isn’t a growth strategy. It’s a slow drain.

The Attribution Window Problem

Privacy changes didn’t just reduce signal breadth. They compressed attribution windows in ways that make full-funnel analysis nearly impossible on major platforms.

When a user installs on day one, engages on day three, and converts on day twelve, that conversion is increasingly invisible to the platform that drove the original install. The marketer sees a cost-per-install without a clear LTV signal attached to it. Scaling that campaign becomes a leap of faith.
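The day-one/day-twelve scenario amounts to a windowing filter applied before the platform ever sees the outcome. The sketch below assumes a hypothetical fixed 7-day window; real windows differ by platform and measurement framework, and `UserEvent` and `visible_events` are illustrative names, not any SDK’s API.

```python
from dataclasses import dataclass


@dataclass
class UserEvent:
    name: str
    day: int  # day of the user journey; day 1 is the install day


def visible_events(events: list[UserEvent], window_days: int) -> list[UserEvent]:
    """Return only the events that fall inside the attribution window."""
    return [e for e in events if e.day <= window_days]


journey = [UserEvent("install", 1), UserEvent("engage", 3), UserEvent("purchase", 12)]

# Hypothetical 7-day window: the day-12 purchase never reaches the ad platform,
# so the install shows up as a cost with no revenue attached.
seen = visible_events(journey, window_days=7)
print([e.name for e in seen])  # ['install', 'engage']
```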

This is part of why ROAS feels so volatile in 2026. The same campaign, running the same creative, on the same platform, can look like it’s working one week and broken the next — not because the campaign changed, but because the measurement infrastructure can’t see the full outcome.

How AI-Driven Discovery Actually Changes the Equation

The phrase “AI-powered advertising” gets applied to almost everything now, which has made it nearly meaningless as a differentiator. The relevant distinction isn’t whether a platform uses AI (they all do). It’s what the AI is optimizing toward, and how deeply that logic runs through the system.

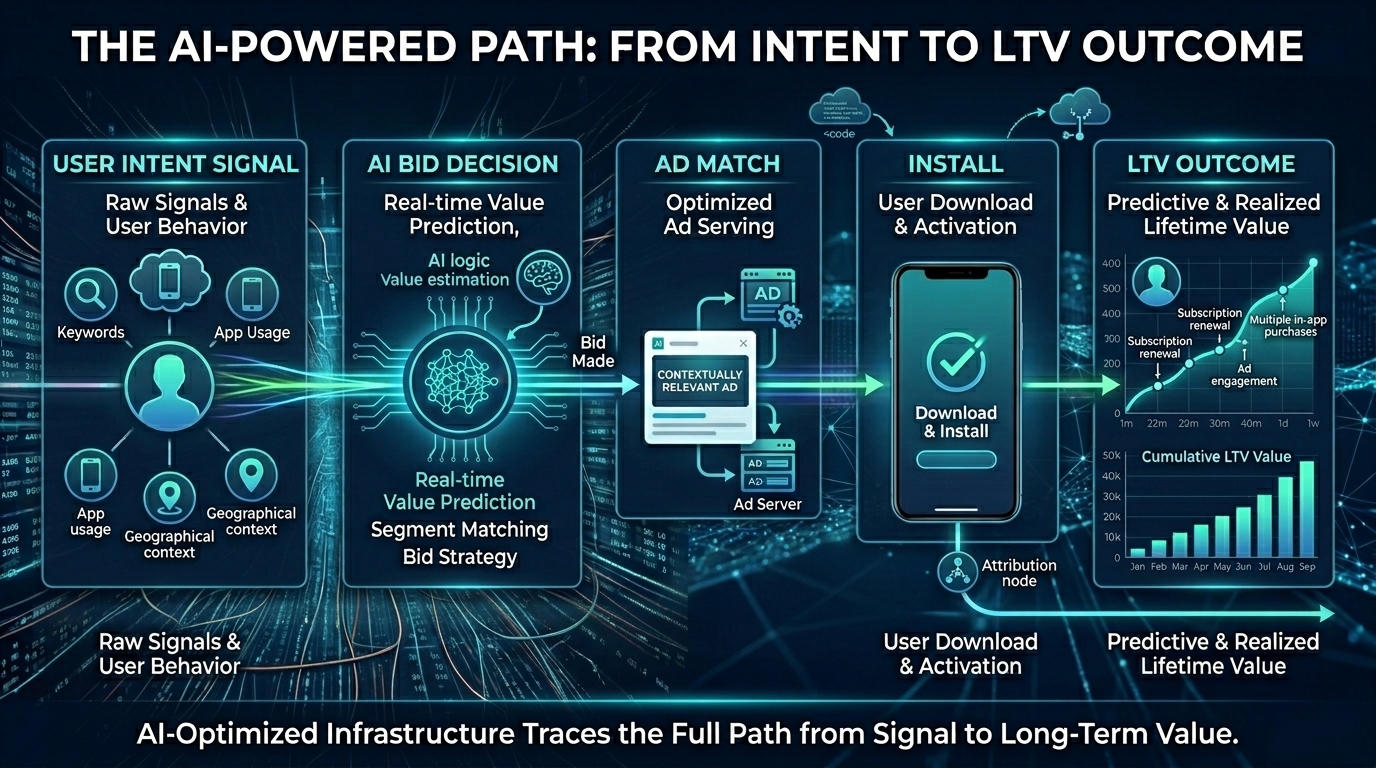

Intent Signals vs. Demographic Proxies

Legacy targeting is built on proxies. Age, gender, interest categories, device type, and purchase history are all reasonable approximations of who might want an app. But they’re approximations, and approximations get expensive when user acquisition costs are high.

Genuine intent signals look different. They’re drawn from real behavioral patterns: what a user has searched for in the last 72 hours, what apps they’ve opened and abandoned, what content formats they engage with before making a download decision. That kind of signal is richer, more perishable, and harder to scale — but it’s also dramatically more predictive of post-install behavior.

AI systems that are trained on intent signals rather than demographic proxies consistently produce lower-churn cohorts. The match quality is higher because the signal quality is higher.

Real-Time Budget and Bid Optimization

One of the most underappreciated advantages of AI-driven campaign management is the speed of iteration. A traditional campaign management workflow runs on a human timescale: pull data, form a hypothesis, adjust targeting or creative, and wait for the next cycle to validate. That loop might run weekly, or only every two weeks on large campaigns.

AI-optimized systems don’t wait for a human to form a hypothesis. They adjust bid strategy, creative rotation, and budget allocation in real time, based on signals that accumulate between the moment an impression is served and the moment a downstream action occurs. That’s a fundamentally different feedback loop, and in fast-moving categories the advantage compounds quickly.

Consider what this means in practice: a gaming app running AI-optimized UA might shift 35% of its budget away from an inventory source within hours of the first signals that day-3 retention for that source’s cohort is falling, before a human analyst would even have noticed the trend in the data.
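The reallocation described above can be approximated with a simple rule, shown here as a minimal sketch. It is a hand-written stand-in, not how any particular AI bidding system works: the 15% retention floor, the 35% shift, the network names, and the proportional redistribution are all hypothetical choices.

```python
def reallocate(budgets: dict[str, float],
               d3_retention: dict[str, float],
               floor: float,
               shift_fraction: float = 0.35) -> dict[str, float]:
    """Move `shift_fraction` of the budget away from every source whose day-3
    retention is below `floor`, and spread the freed budget across the
    remaining sources in proportion to their retention. Total spend is unchanged."""
    weak = [s for s in budgets if d3_retention[s] < floor]
    healthy = [s for s in budgets if d3_retention[s] >= floor]
    total_retention = sum(d3_retention[s] for s in healthy)
    if not weak or total_retention <= 0:
        return dict(budgets)  # nothing to move, or nowhere sensible to move it

    new_budgets = dict(budgets)
    freed = 0.0
    for s in weak:
        cut = budgets[s] * shift_fraction
        new_budgets[s] -= cut
        freed += cut

    for s in healthy:
        new_budgets[s] += freed * d3_retention[s] / total_retention
    return new_budgets


# Hypothetical daily budgets and the latest day-3 retention read per source.
budgets = {"network_a": 10_000.0, "network_b": 6_000.0, "network_c": 4_000.0}
d3 = {"network_a": 0.09, "network_b": 0.21, "network_c": 0.28}
print(reallocate(budgets, d3, floor=0.15))
```

A real system would typically learn thresholds and shift sizes rather than hard-code them, and would account for how noisy early retention reads are on small cohorts.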

Creative Intelligence Is Now a ROAS Variable

Most ROAS conversations focus on targeting and bid strategy. Creative is treated as a separate workstream: the design team produces variants, the performance team tests them, and the best one gets scaled. That model works reasonably well when testing cycles are long and inventory is relatively stable.

In 2026, creative is a real-time performance variable, not a separate workstream.

AI systems can now run granular creative testing at the cohort level: not just “does version A outperform version B overall?” but “does version A outperform version B specifically for users aged 25-34 in Southeast Asia who discovered the app through a short-form video surface?” That level of granularity turns creative from a fixed input into an adaptive element of the performance equation.
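Cohort-level testing mostly comes down to not pooling results too early. In this sketch (hypothetical numbers and cohort labels, with installs per impression standing in for whatever outcome you actually optimize), variant A wins on pooled data while variant B wins for the short-form-video cohort in Southeast Asia.

```python
from collections import defaultdict

# Hypothetical test log: (creative_variant, cohort, impressions, installs)
results = [
    ("A", "25-34 / SEA / short-form video", 20_000, 300),
    ("B", "25-34 / SEA / short-form video", 20_000, 420),
    ("A", "35-44 / US / search", 40_000, 1_200),
    ("B", "35-44 / US / search", 40_000, 800),
]


def install_rate(impressions: int, installs: int) -> float:
    return installs / impressions if impressions else 0.0


# Overall comparison: pool every cohort together.
overall = defaultdict(lambda: [0, 0])  # variant -> [impressions, installs]
for variant, _cohort, imps, inst in results:
    overall[variant][0] += imps
    overall[variant][1] += inst

# Cohort-level comparison: keep each cohort separate.
by_cohort = defaultdict(dict)  # cohort -> {variant: install rate}
for variant, cohort, imps, inst in results:
    by_cohort[cohort][variant] = install_rate(imps, inst)

overall_winner = max(overall, key=lambda v: install_rate(*overall[v]))
cohort_winners = {c: max(rates, key=rates.get) for c, rates in by_cohort.items()}
print("overall winner:", overall_winner)
print("per-cohort winners:", cohort_winners)
```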

The brands that have recognized this are producing creative in modular formats specifically designed for AI-driven assembly and testing: swappable headlines, multiple visual hooks, different CTA variants. It’s a different creative philosophy, and it’s producing measurably better ROAS on platforms that support that level of real-time optimization.

The Transparency Deficit: Why Auditable ROAS Is the New Benchmark

There’s a conversation happening in performance marketing teams right now that didn’t exist three years ago. It’s not “what was our ROAS?” It’s “can we actually explain what produced it?”

This isn’t a philosophical question. It has direct operational consequences.

When you cannot trace the logic from signal to match to install to downstream conversion, you cannot intelligently scale a winning campaign. You’re extrapolating from a result you don’t fully understand. And when the result shifts, as it will, you don’t have a framework for diagnosing why, which means you’re starting from zero every time you need to course-correct.

Auditable ROAS means being able to answer these questions:

- What intent signal or behavioral indicator triggered this user match?

- What quality filters determined this inventory was appropriate for this bid?

- Which creative variant drove engagement, and at what point in the discovery funnel?

- What is the downstream LTV signal for cohorts acquired through this campaign?
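One way to make those four questions answerable is to store the answers with each install, then aggregate ROAS (revenue divided by ad spend) along any of them. The record below is a hypothetical schema, not any platform’s export format, and `revenue_to_date` is only a partial LTV signal.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class AcquisitionTrace:
    """One install with the answer to each audit question stored alongside it.
    Field names are hypothetical, not any platform's schema."""
    match_signal: str                 # intent signal or behavior behind the match
    quality_filters: tuple[str, ...]  # inventory checks passed before the bid
    creative_variant: str             # creative that drove engagement
    funnel_stage: str                 # where in discovery that engagement happened
    spend: float                      # media cost attributed to this install
    revenue_to_date: float            # downstream revenue observed so far


def roas_by(traces: list[AcquisitionTrace], key: str) -> dict:
    """Group traces by one audit dimension and compute ROAS = revenue / spend."""
    spend = defaultdict(float)
    revenue = defaultdict(float)
    for t in traces:
        group = getattr(t, key)
        spend[group] += t.spend
        revenue[group] += t.revenue_to_date
    return {g: revenue[g] / spend[g] for g in spend if spend[g] > 0}


traces = [
    AcquisitionTrace("searched 'budget tracker'", ("brand_safe",), "hook_v2", "search", 4.0, 12.0),
    AcquisitionTrace("abandoned competitor app", ("brand_safe",), "hook_v1", "video", 3.0, 0.0),
    AcquisitionTrace("searched 'budget tracker'", ("brand_safe",), "hook_v1", "search", 4.0, 8.0),
]
print(roas_by(traces, "match_signal"))
print(roas_by(traces, "creative_variant"))
```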

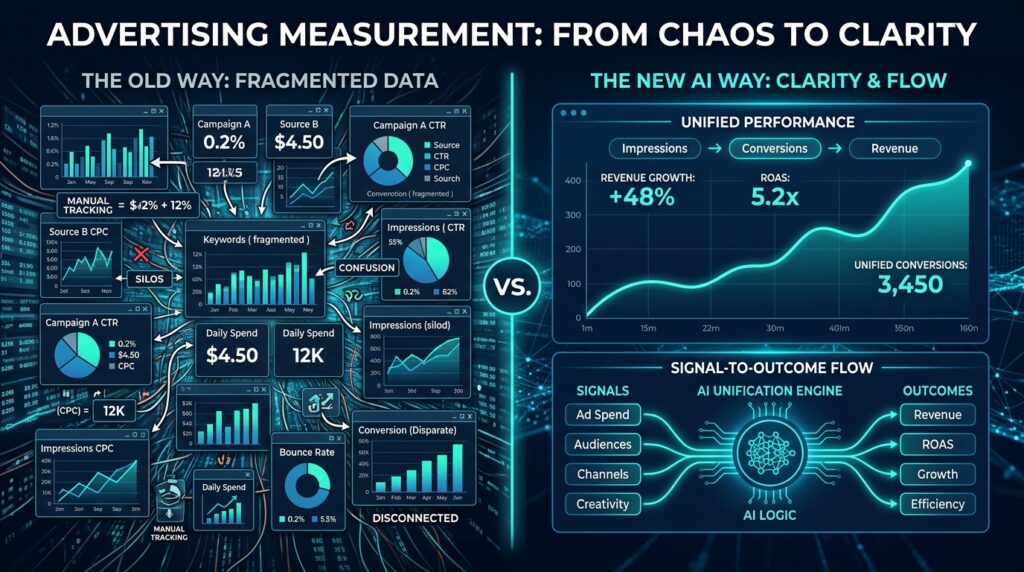

Platforms built on unified AI infrastructure can answer these questions because the optimization logic is traceable. The signal path from impression to outcome can be examined, refined, and replicated. That’s not just a transparency feature; it’s the foundation of systematic performance improvement.

Unified Infrastructure vs. Performance as a Feature

This is the structural divide that matters most in 2026, and it’s worth spelling out clearly.

There are two categories of platforms offering AI optimization in app advertising. The first has built its systems primarily for reach, engagement time, or content consumption, and has layered AI performance features on top. Its optimization logic competes with the platform’s other objectives: keeping users on-platform, maximizing time-in-feed, and managing its own inventory yield.

The second category has built performance as the foundational layer. Every element (bidding strategy, budget allocation, creative testing, inventory selection, attribution) runs through the same optimization framework, oriented toward the same goal: delivering the outcomes the advertiser defines.

The difference shows up in results, but it also shows up in diagnostics. When performance is the infrastructure, you can interrogate the system. When it’s a feature, you’re trusting that the platform’s other objectives don’t pull the algorithm away from yours.

What This Means for App Marketers Right Now

The practical implications of this shift aren’t abstract. Here’s what they look like in day-to-day campaign management.

Redefine what success signals you’re feeding the system. If your optimization goal is cost-per-install, you’ll get cheap installs. If your optimization goal is cost-per-paying-user or cost-per-day-30-retained-user, the AI will optimize toward that instead. Your outcome ceiling is directly tied to the quality of the success signal you’ve defined.
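A quick illustration of why the success signal matters, using two hypothetical campaigns with identical spend: the broad campaign wins on cost-per-install, while the intent-based one wins on cost-per-paying-user. The campaign names and figures are invented for the example.

```python
from dataclasses import dataclass


@dataclass
class CampaignStats:
    name: str
    spend: float
    installs: int
    paying_users: int

    def cost_per_install(self) -> float:
        return self.spend / self.installs

    def cost_per_paying_user(self) -> float:
        return self.spend / self.paying_users


# Hypothetical campaigns with the same spend but very different user quality.
broad = CampaignStats("broad_reach", spend=10_000, installs=8_000, paying_users=80)
intent = CampaignStats("intent_based", spend=10_000, installs=2_500, paying_users=200)

best_by_cpi = min([broad, intent], key=CampaignStats.cost_per_install)
best_by_cppu = min([broad, intent], key=CampaignStats.cost_per_paying_user)
print("best by cost-per-install:", best_by_cpi.name)
print("best by cost-per-paying-user:", best_by_cppu.name)
```

Whichever of these two functions the optimizer minimizes decides which campaign gets scaled.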

Treat creative as a performance variable, not a creative deliverable. Build in modular formats. Produce more variants with faster cycles. Give your AI optimization layer enough creative surface area to find what actually works by cohort, by surface, by region.

Demand signal traceability from your platform partners. If a campaign is performing well and you can’t explain why, you’re one algorithm update away from not being able to explain why it stopped. Traceability isn’t a nice-to-have — it’s risk management.

Consolidate measurement where possible. Fragmented attribution across multiple platforms produces conflicting ROAS signals that make it nearly impossible to allocate budget intelligently. Unified measurement, even if imperfect, gives you a consistent baseline to optimize against.
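The conflicting-signal problem is easy to reproduce. In the sketch below (hypothetical touches, revenue, and spend), each platform credits itself with every conversion it touched, so self-reported revenue adds up to more than the business actually earned. A single measurement layer credits each conversion once; last-touch is used here only because it is the simplest rule to show, and it carries well-known biases of its own.

```python
from collections import defaultdict

# Hypothetical touch log: (conversion_id, platform, day of touch).
touches = [
    ("c1", "social", 1), ("c1", "search", 3),
    ("c2", "search", 2),
    ("c3", "social", 1), ("c3", "search", 2), ("c3", "ctv", 4),
]
conversion_revenue = {"c1": 20.0, "c2": 10.0, "c3": 30.0}
spend = {"social": 15.0, "search": 10.0, "ctv": 5.0}


def self_reported_revenue() -> dict:
    """Each platform credits itself with every conversion it touched."""
    rev = defaultdict(float)
    for cid, platform, _day in touches:
        rev[platform] += conversion_revenue[cid]
    return rev


def unified_last_touch_revenue() -> dict:
    """One measurement layer: each conversion is credited once, to its latest touch."""
    last = {}
    for cid, platform, day in touches:
        if cid not in last or day > last[cid][1]:
            last[cid] = (platform, day)
    rev = defaultdict(float)
    for cid, (platform, _day) in last.items():
        rev[platform] += conversion_revenue[cid]
    return rev


def roas(revenue_by_platform: dict) -> dict:
    return {p: revenue_by_platform.get(p, 0.0) / spend[p] for p in spend}


print("self-reported ROAS:", roas(self_reported_revenue()))
print("unified ROAS:", roas(unified_last_touch_revenue()))
```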

Where App Advertising Is Heading: The Next 18 Months

The structural changes underway in app advertising aren’t at a tipping point — they’ve already tipped. But the full implications are still working through the industry.

A few trends to watch:

Predictive LTV modeling will move from aspirational to operational. The combination of better AI models and more sophisticated first-party data infrastructure means that predicting which users will generate long-term value — not just install — will become a standard input to bid strategy rather than a post-campaign analysis exercise.
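Feeding predicted LTV into bidding can be as simple as turning a ROAS target into a bid ceiling. Because ROAS is revenue over cost, the highest cost-per-install that still meets a target ROAS is predicted LTV divided by that target. The sketch assumes the LTV prediction and the install-probability estimate come from models you already have; both function names and the segment figures are hypothetical.

```python
def max_cost_per_install(predicted_ltv: float, target_roas: float) -> float:
    """Highest CPI that still meets the ROAS target if the LTV prediction holds.

    ROAS = revenue / cost, so LTV / CPI >= target_roas  =>  CPI <= LTV / target_roas.
    """
    if target_roas <= 0:
        raise ValueError("target_roas must be positive")
    return predicted_ltv / target_roas


def max_cpm_bid(predicted_ltv: float, target_roas: float,
                predicted_install_rate: float) -> float:
    """Convert the CPI ceiling into a bid per 1,000 impressions, using the
    predicted probability that a single impression leads to an install."""
    return max_cost_per_install(predicted_ltv, target_roas) * predicted_install_rate * 1000


# Hypothetical segments scored by an upstream LTV model, bidding to a 150% ROAS target.
for segment, ltv, install_rate in [("high_pltv", 30.0, 0.005), ("low_pltv", 3.0, 0.008)]:
    print(segment, round(max_cost_per_install(ltv, 1.5), 2),
          round(max_cpm_bid(ltv, 1.5, install_rate), 2))
```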

Cross-channel AI optimization will become table stakes. Right now, most AI-driven campaign management still operates channel by channel. The next evolution is systems that can intelligently allocate across channels — social, in-app, CTV, search — in real time based on a unified intent signal layer. The performance gap between marketers running siloed channel strategies and those running unified cross-channel AI optimization will widen significantly.
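Cross-channel allocation is often framed as putting each next dollar where it earns the most. The sketch below does that greedily across four channels. The logarithmic response curves and their parameters are invented for illustration (they only encode diminishing returns); in practice those curves would have to be estimated from the unified signal layer described above.

```python
import math

# Hypothetical response curves: revenue(spend) = scale * log(1 + spend / saturation).
CHANNELS = {
    "social": (9_000.0, 4_000.0),
    "in_app": (6_000.0, 2_000.0),
    "ctv": (5_000.0, 8_000.0),
    "search": (7_000.0, 3_000.0),
}


def revenue(channel: str, spend: float) -> float:
    scale, saturation = CHANNELS[channel]
    return scale * math.log1p(spend / saturation)


def allocate(total_budget: float, step: float = 100.0) -> dict[str, float]:
    """Greedy allocation: give each budget increment to the channel whose
    next `step` of spend adds the most modelled revenue."""
    spend = {c: 0.0 for c in CHANNELS}
    remaining = total_budget
    while remaining >= step:
        best = max(CHANNELS,
                   key=lambda c: revenue(c, spend[c] + step) - revenue(c, spend[c]))
        spend[best] += step
        remaining -= step
    return spend


plan = allocate(20_000.0)
print({c: round(s) for c, s in plan.items()})
```

With concave curves like these, greedy increments approximate the optimal split; the step size trades precision for speed.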

Privacy-preserving AI will unlock intent signal depth that current systems can’t access. Federated learning and on-device AI processing are still early, but they represent a path to intent-level signal quality without centralized data collection. The platforms investing in this infrastructure now are building a long-term competitive position.

Creative AI will close the gap between testing speed and campaign velocity. As AI-generated creative variants become more sophisticated and faster to produce, the constraint on creative testing will no longer be production capacity. It will be the quality of the brief and the sophistication of the evaluation framework.

Conclusion

The ROAS problem in 2026 isn’t a targeting problem or a budget problem. It’s an infrastructure problem.

The platforms and tools built for the last decade of app advertising were designed for a world with abundant signals, predictable user behavior, and install volume as the primary currency. That world is gone. What’s replaced it requires a different kind of system: one that’s built on intent rather than demographics, performance rather than reach, and transparency rather than aggregate reporting.

The marketers who are winning right now aren’t spending more. They’re spending smarter — on infrastructure that can actually explain its own outputs. In a market where every percentage point of ROAS improvement has direct budget consequences, the quality of the engine underneath the campaign matters as much as the quality of the strategy above it.

The question for every app marketing team heading into the second half of 2026 isn’t “are we using AI?” It’s “is the AI we’re using actually oriented toward the outcomes we care about?” That distinction will define who scales and who stalls.