Search engines like Google use website crawlers to read and understand webpages.

But SEO professionals can also use web crawlers to uncover issues and opportunities within their own sites. Or to extract information from competing websites.

There are tons of crawling and scraping tools available online. While some are useful for SEO and data collection, others may have questionable intentions or pose potential risks.

To help you navigate the world of website crawlers, we’ll walk you through what crawlers are, how they work, and how you can safely use the right tools to your advantage.

What Is a Website Crawler?

A web crawler is a bot that automatically accesses and processes webpages to understand their content.

They go by many names, like:

- Crawler

- Bot

- Spider

- Spiderbot

The spider nicknames come from the fact that these bots crawl across the World Wide Web.

Search engines use crawlers to discover and categorize webpages. Then, serve the ones they deem best to users in response to search queries.

For example, Google’s web crawlers are key players in the search engine process:

- You publish or update content on your website

- Bots crawl your site’s new or updated pages

- Google indexes the pages crawlers find, though some issues can prevent indexing in certain cases

- Google (hopefully) presents your page in search results based on its relevance to a user’s query

But search engines aren’t the only parties that use website crawlers. You can also deploy web crawlers yourself to gather information about webpages.

Publicly available crawlers are slightly different from search engine crawlers like Googlebot or Bingbot (the unique web crawlers that Google and Bing use). But they work in a similar way: they access a website and “read” it as a search engine crawler would.

And you can use information from these types of crawlers to improve your website. Or to better understand other websites.

How Do Web Crawlers Work?

Web crawlers scan three major elements on a webpage: content, code, and links.

By reading the content, bots can assess what a page is about. This information helps search engine algorithms determine which pages have the answers users are looking for when they make a search.

That’s why using SEO keywords strategically is so important. They help improve an algorithm’s ability to connect that page to related searches.

While reading a page’s content, web spiders are also crawling a page’s HTML code. (All websites are composed of HTML code that structures each webpage and its content.)

And you can use certain HTML code (like meta tags) to help crawlers better understand your page’s content and purpose.

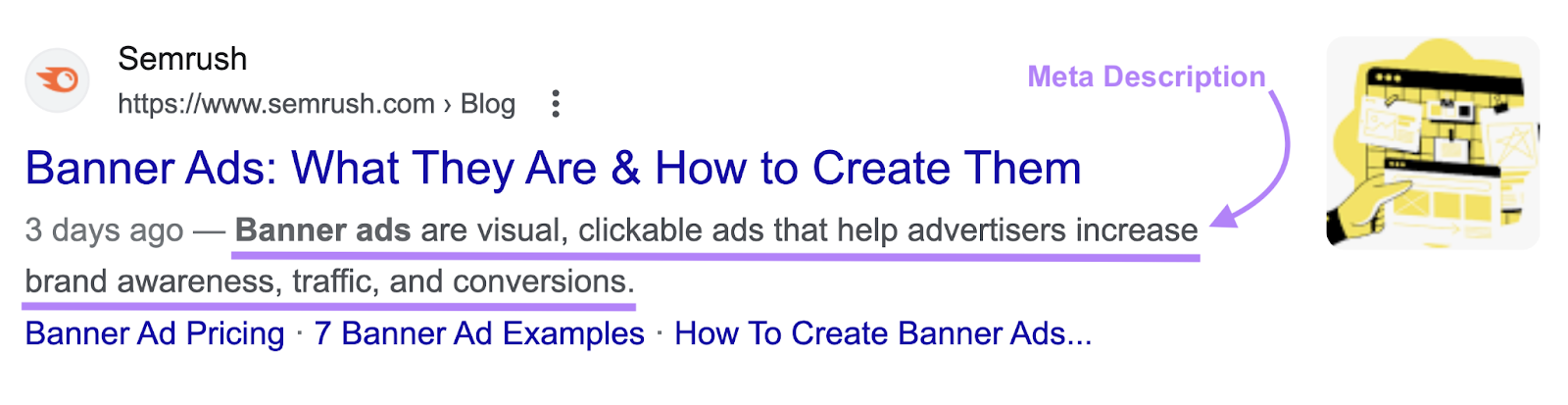

For example, you can influence how your page might appear in Google search results using a meta description tag.

Here’s a meta description:

And here’s the code for that meta description tag:

Leveraging meta tags is just another way to give search engine crawlers useful information about your page so it can get indexed appropriately.
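
To make this concrete, here is a minimal Python sketch of how a crawler might read a meta description out of a page’s HTML. It uses only the standard library. The sample page and the names `MetaDescriptionParser` and `extract_meta_description` are made up for illustration, and real search engine crawlers are far more sophisticated.

```python
from html.parser import HTMLParser


class MetaDescriptionParser(HTMLParser):
    """Collects the content of the first <meta name="description"> tag."""

    def __init__(self):
        super().__init__()
        self.description = None

    def handle_starttag(self, tag, attrs):
        # Ignore everything except the first description meta tag.
        if tag != "meta" or self.description is not None:
            return
        attributes = dict(attrs)
        if (attributes.get("name") or "").lower() == "description":
            self.description = attributes.get("content")


def extract_meta_description(html):
    """Return the meta description text, or None if the page has none."""
    parser = MetaDescriptionParser()
    parser.feed(html)
    return parser.description


# Hypothetical page used only for illustration.
SAMPLE_PAGE = """
<html>
  <head>
    <title>Example page</title>
    <meta name="description" content="A short summary of the page.">
  </head>
  <body><p>Hello</p></body>
</html>
"""

print(extract_meta_description(SAMPLE_PAGE))
```

A page without the tag returns `None`, which is the kind of gap a site audit would typically flag.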

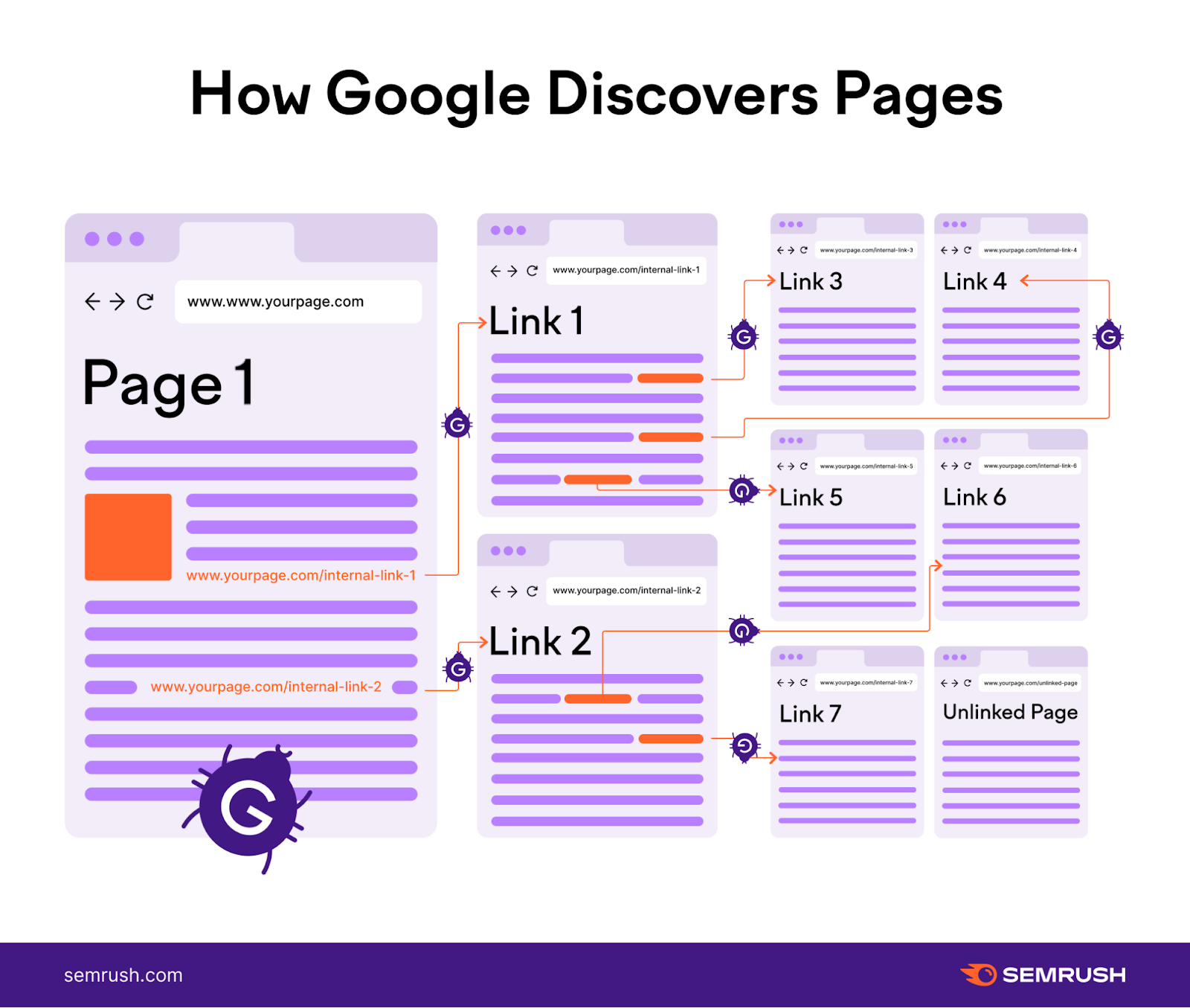

Crawlers need to scour billions of webpages. To accomplish this, they follow pathways. These pathways are largely determined by internal links.

If Page A links to Page B within its content, the bot can follow the link from Page A to Page B. And then process Page B.

This is why internal linking is so important for SEO. It helps search engine crawlers find and index all the pages on your site.
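
The link-following behavior described above can be sketched in a few lines of Python. This is a simplified illustration, not how any particular search engine works: the tiny in-memory `SITE` dictionary stands in for real HTTP requests, and the function names are hypothetical.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class LinkParser(HTMLParser):
    """Collects the href value of every <a> tag on a page."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.hrefs.append(href)


def internal_links(page_url, html):
    """Return absolute URLs of links that stay on the same host."""
    parser = LinkParser()
    parser.feed(html)
    host = urlparse(page_url).netloc
    links = []
    for href in parser.hrefs:
        absolute = urljoin(page_url, href)
        if urlparse(absolute).netloc == host:
            links.append(absolute)
    return links


def crawl(start_url, fetch, max_pages=100):
    """Breadth-first crawl that follows internal links from start_url."""
    seen = {start_url}
    queue = deque([start_url])
    order = []
    while queue and len(order) < max_pages:
        url = queue.popleft()
        html = fetch(url)
        if html is None:  # page could not be fetched
            continue
        order.append(url)
        for link in internal_links(url, html):
            if link not in seen:
                seen.add(link)
                queue.append(link)
    return order


# Hypothetical four-page site; a dict lookup replaces real network requests.
SITE = {
    "https://example.com/": '<a href="/a">A</a> <a href="https://other.example/x">ext</a>',
    "https://example.com/a": '<a href="/b">B</a> <a href="/">home</a>',
    "https://example.com/b": "<p>No links here</p>",
    "https://example.com/orphan": "<p>Nothing links to this page</p>",
}

crawled = crawl("https://example.com/", SITE.get)
print(crawled)
```

The `/orphan` page never shows up in the result because no other page links to it. That is the practical risk of weak internal linking.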

Why You Should Crawl Your Own Website

Auditing your own site using a web crawler allows you to find crawlability and indexability issues that might otherwise slip through the cracks.

Crawling your own site also allows you to see your site the way a search engine crawler would. To help you optimize it.

Here are just a few examples of important use cases for a personal site audit:

Ensuring Google Crawlers Can Easily Navigate Your Site

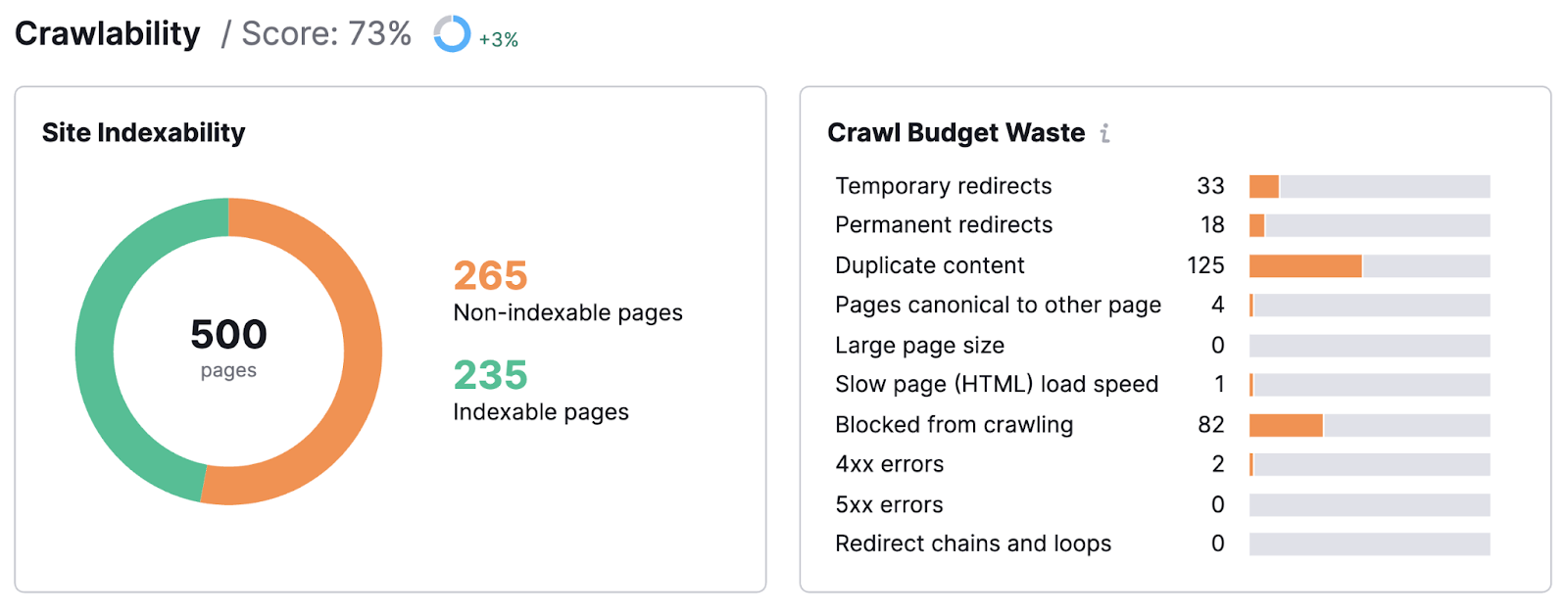

A site audit can tell you exactly how easy it is for Google bots to navigate your site. And process its content.

For example, you can find which types of issues prevent your site from being crawled effectively. Like temporary redirects, duplicate content, and more.

Your site audit may even uncover pages that Google isn’t able to index.

This might be due to any number of reasons. But whatever the cause, you need to fix it. Or risk losing time, money, and ranking power.

The good news is once you’ve identified problems, you can resolve them. And get back on the path to SEO success.

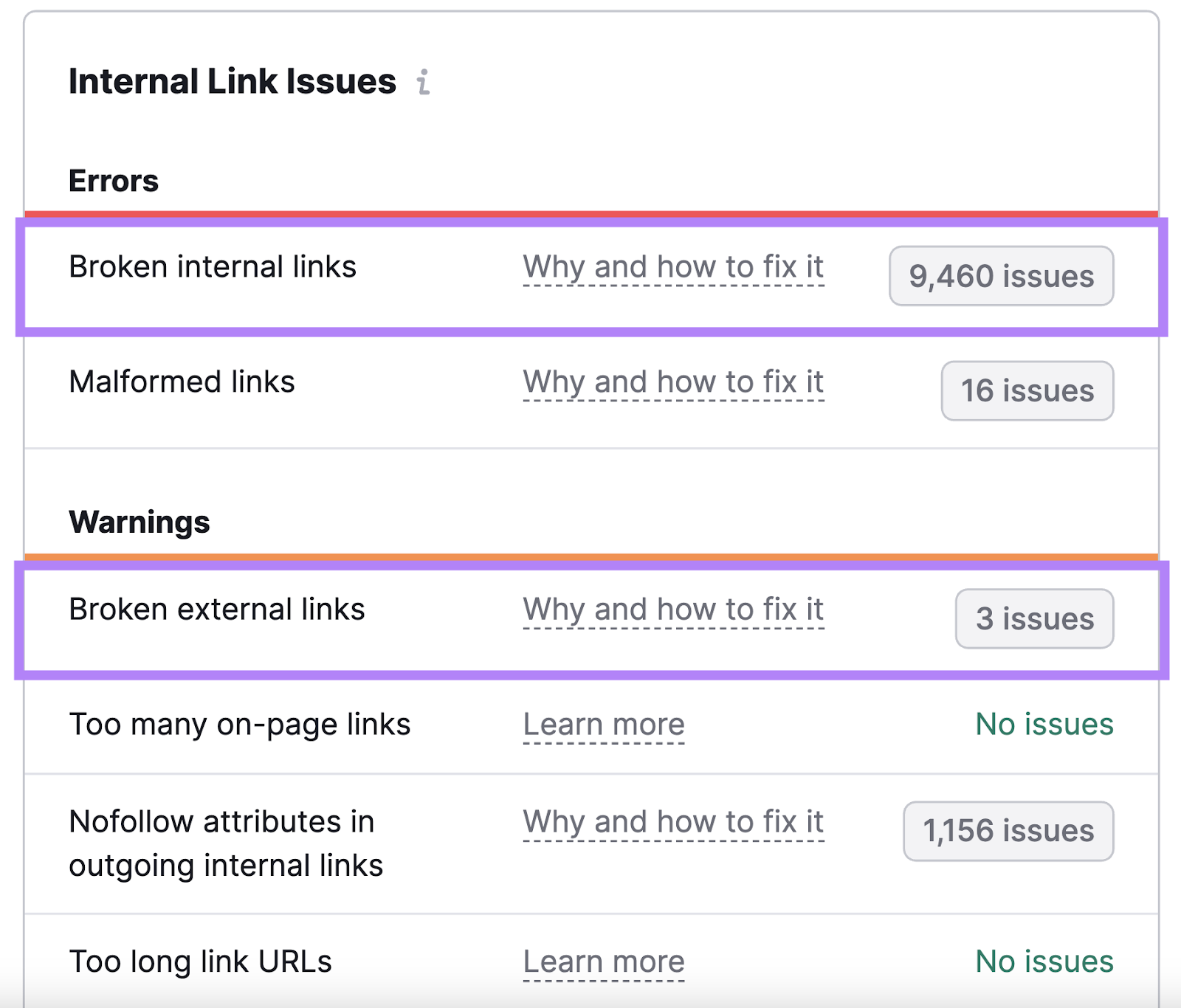

Identifying Broken Links to Improve Site Health and Link Equity

Broken links are one of the most common linking mistakes.

They’re a nuisance to users. And to Google’s web crawlers, because they make your site appear poorly maintained or coded.

Find broken links and fix them to ensure strong site health.

The fixes themselves can be straightforward: remove the link, replace it, or contact the owner of the website you’re linking to (if it’s an external link) and report the issue.
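
As a rough illustration of what a broken-link check does, the Python sketch below flags any link whose HTTP status is 400 or higher, or that cannot be reached at all. The function names and sample data are hypothetical. `http_status` shows one way to look up a live status with the standard library, while the example itself uses a stubbed lookup so it runs without network access.

```python
import urllib.error
import urllib.request


def http_status(url, timeout=10):
    """Return the HTTP status code for url, or None if it is unreachable."""
    request = urllib.request.Request(url, method="HEAD")
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as error:
        return error.code  # e.g. 404 or 500
    except OSError:
        return None  # DNS failure, refused connection, timeout, etc.


def find_broken_links(links_by_page, get_status=http_status):
    """Return (page, link, status) for every link that looks broken."""
    broken = []
    for page, links in links_by_page.items():
        for link in links:
            status = get_status(link)
            if status is None or status >= 400:
                broken.append((page, link, status))
    return broken


# Hypothetical crawl output: each page mapped to the links found on it.
LINKS_BY_PAGE = {
    "/blog/": ["/blog/post-1", "/old-page"],
    "/about/": ["/team", "https://partner.example/gone"],
}

# Stubbed statuses so the example does not touch the network.
FAKE_STATUSES = {"/blog/post-1": 200, "/old-page": 404, "/team": 200}

broken = find_broken_links(LINKS_BY_PAGE, FAKE_STATUSES.get)
print(broken)
```

Some servers reject `HEAD` requests, so a production checker would likely fall back to `GET` before declaring a link broken.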

Finding Duplicate Content to Fix Chaotic Rankings

Duplicate content (identical or nearly identical content that can be found elsewhere on your site) can cause major SEO issues by confusing search engines.

It may cause the wrong version of a page to show up in the search results. Or, it may even look like you’re using bad-faith practices to manipulate Google.

A site audit can help you find duplicate content.

Then, you can fix it. So the right page can claim its spot in search results.
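
One simple way to spot identical content is to fingerprint each page’s text and group pages that share a fingerprint. The Python sketch below assumes the page text has already been extracted; the function names and sample pages are hypothetical. It only catches copies that match after whitespace and case are normalized, so nearly identical pages would need a fuzzier comparison.

```python
import hashlib
import re
from collections import defaultdict


def fingerprint(text):
    """Hash the text after collapsing whitespace and lowercasing it."""
    normalized = re.sub(r"\s+", " ", text).strip().lower()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def find_duplicates(pages):
    """Return groups of URLs whose text has the same fingerprint."""
    groups = defaultdict(list)
    for url, text in pages.items():
        groups[fingerprint(text)].append(url)
    return [sorted(urls) for urls in groups.values() if len(urls) > 1]


# Hypothetical extracted page text, keyed by URL.
PAGES = {
    "/shoes": "Red  running shoes",
    "/shoes?ref=nav": "red running shoes ",
    "/hats": "Blue hats",
}

duplicates = find_duplicates(PAGES)
print(duplicates)
```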

Content crawlers and content scrapers are often referred to interchangeably.

But crawlers access and index website content. While scrapers are used to extract data from webpages or even whole websites.

Some malicious actors use scrapers to rip off and republish other websites’ content. Which violates those sites’ copyrights and can steal from their SEO efforts.

That said, there are legitimate use cases for scrapers.

Like scraping data for collective analysis (e.g., scraping competitors’ product listings to assess the best way to describe, price, and present similar items). Or scraping and lawfully republishing content on your own website (like by asking for explicit permission from the original publisher).

Here are some examples of good tools that fall under both categories.

3 Scraper Tools

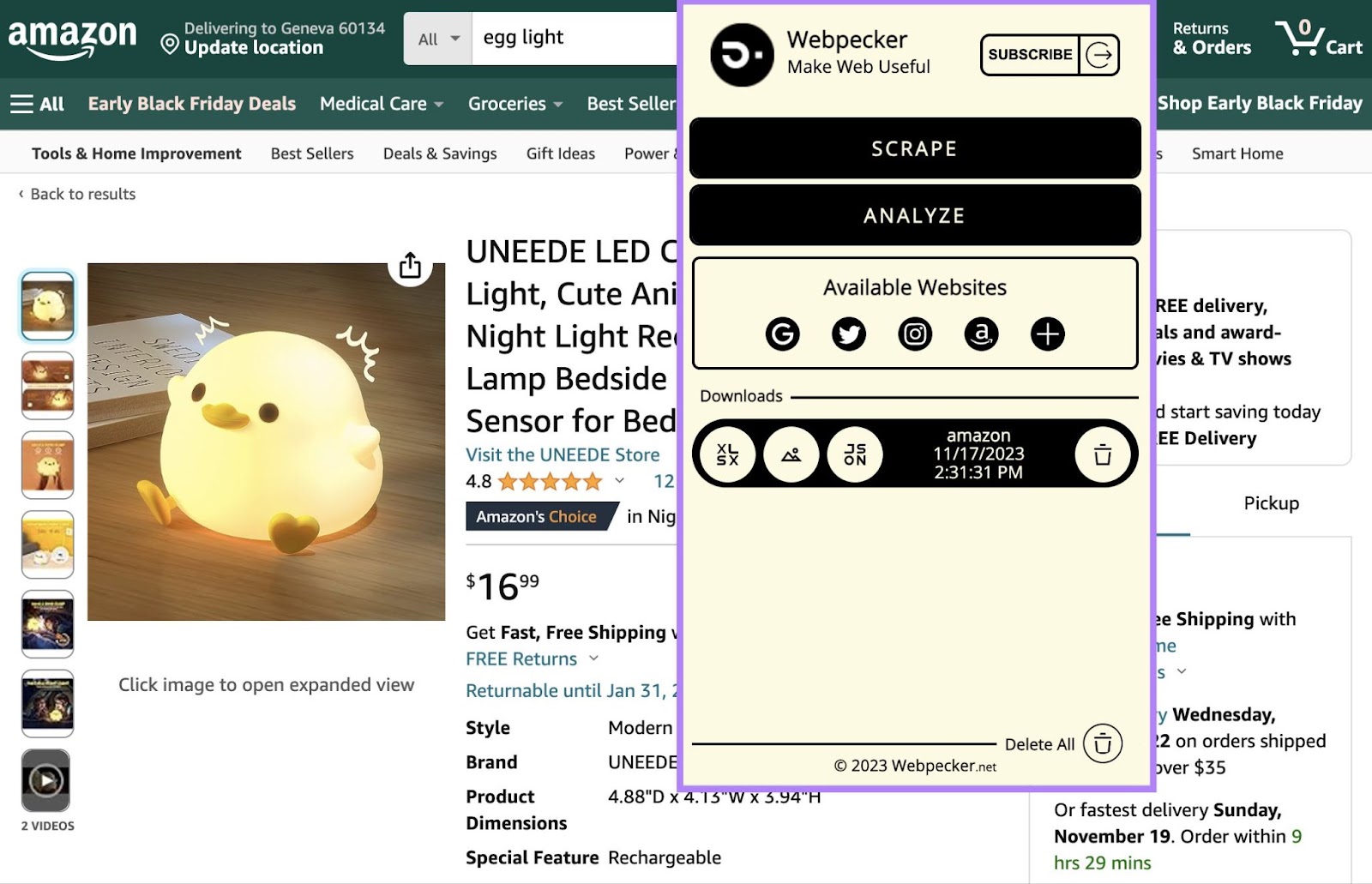

Webpecker

Webpecker is a Chrome extension that lets you scrape data from major websites like Amazon and from social networks. Then, you can download the data in XLSX, JSON, or ZIP formats.

For example, you can scrape the following from Amazon:

- Links

- Prices

- Images

- Image URLs

- Data URLs

- Titles

- Ratings

- Coupons

Or, you can scrape the following from Instagram:

- Links

- Images

- Image URLs

- Data URLs

- Alt text (the text that’s read aloud by screen readers and that displays when an image fails to load)

- Avatars

- Likes

- Comments

- Date and time

- Titles

- Mentions

- Hashtags

- Locations

Collecting this data allows you to analyze competitors and find inspiration for your own website or social media presence.

For instance, consider hashtags on Instagram.

Using them strategically can put your content in front of your target audience and increase user engagement. But with endless possibilities, choosing the right hashtags can be a challenge.

By compiling a list of hashtags your competitors use on high-performing posts, you can jumpstart your own hashtag success.

This kind of tool can be especially useful if you’re just starting out and aren’t sure how to approach product listings or social media postings.

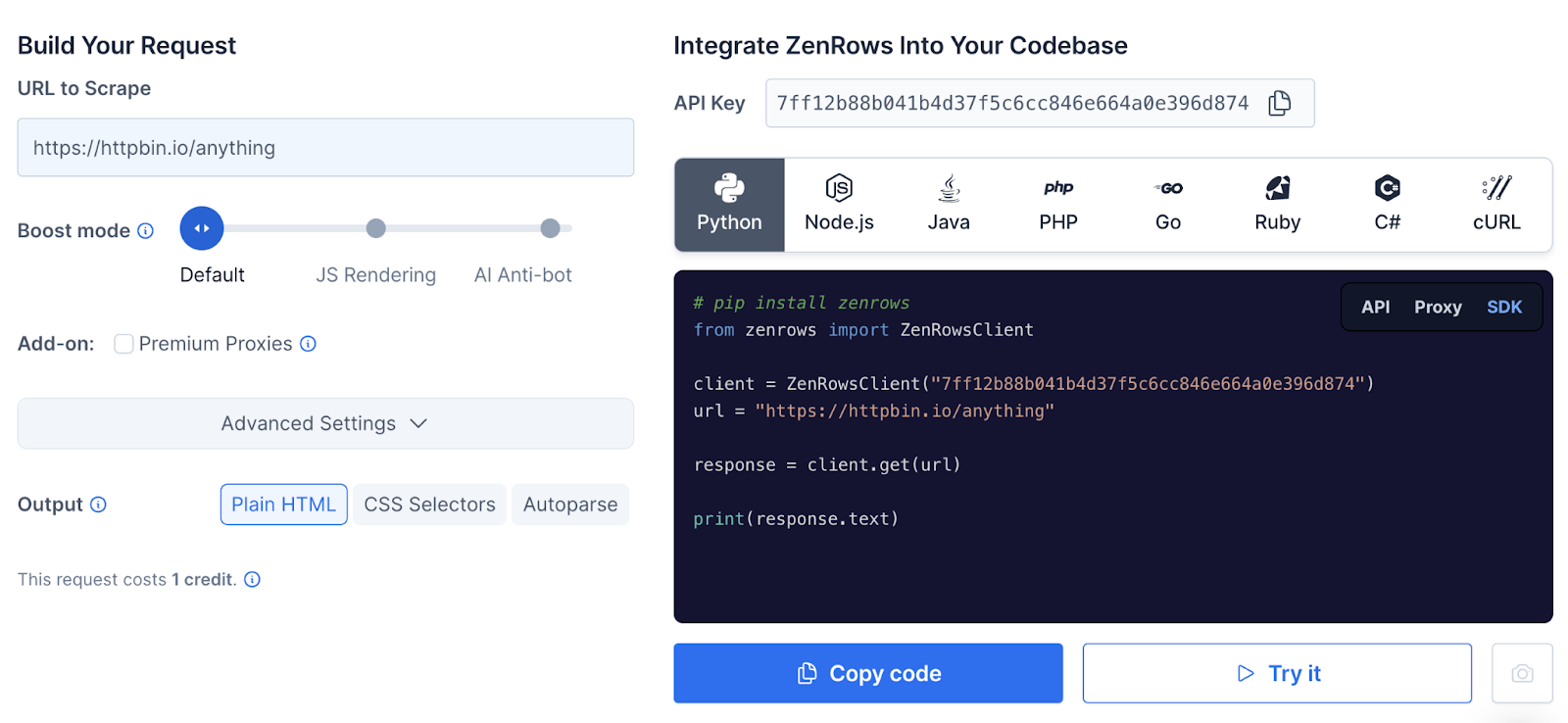

ZenRows

ZenRows is capable of scraping millions of webpages and bypassing barriers such as Completely Automated Public Turing tests to tell Computers and Humans Apart (CAPTCHAs).

ZenRows is best deployed by someone on your web development team. But once the parameters have been set, the tool says it can save thousands of development hours.

And it’s able to bypass “Access Denied” screens that typically block bots.

ZenRows’ auto-parsing tool lets you scrape pages or sites you’re interested in. And compiles the data into a JSON file for you to assess.

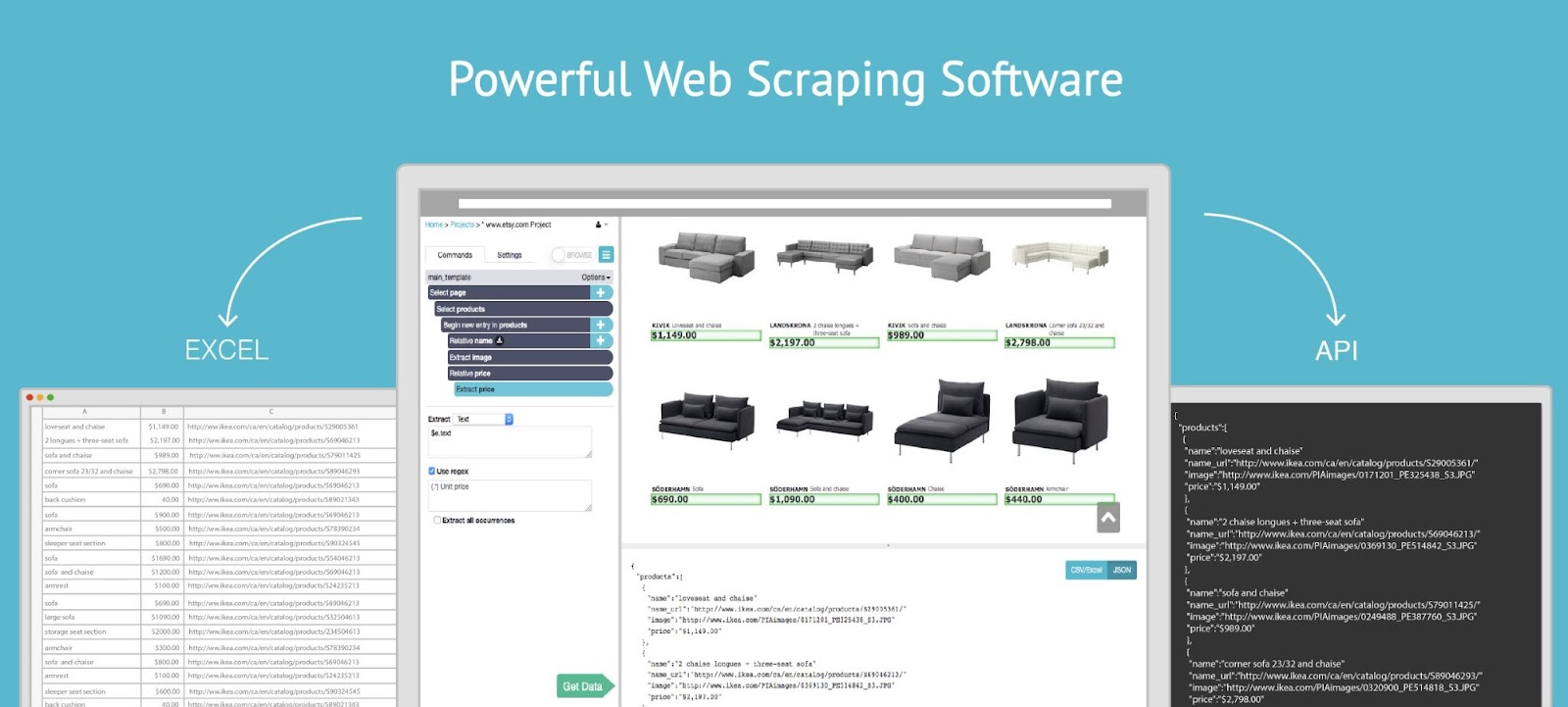

ParseHub

ParseHub is a no-code way to scrape any website for important data.

Image Source: ParseHub

It can collect difficult-to-access data from:

- Forms

- Drop-downs

- Infinite scroll pages

- Pop-ups

- JavaScript

- Asynchronous JavaScript and XML (AJAX), a combination of programming tools used to exchange data

You can download the scraped data. Or, import it into Google Sheets or Tableau.

Using scrapers like this can help you study the competition. But don’t forget to make use of crawlers.

3 Web Crawler Tools

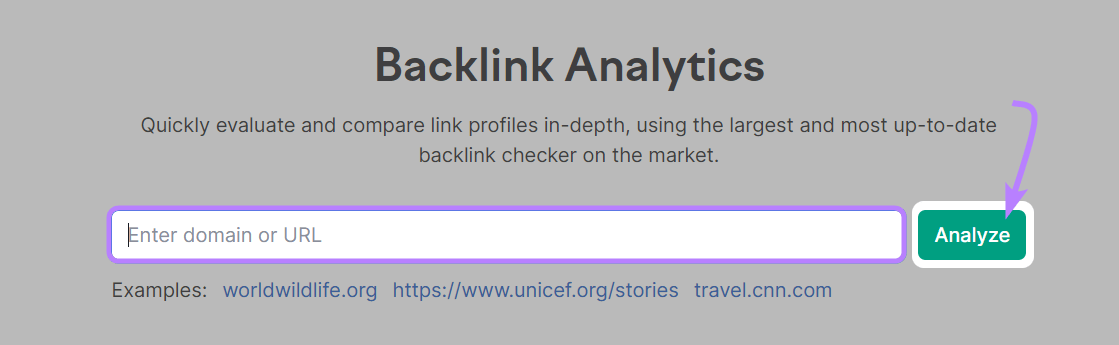

Backlink Analytics

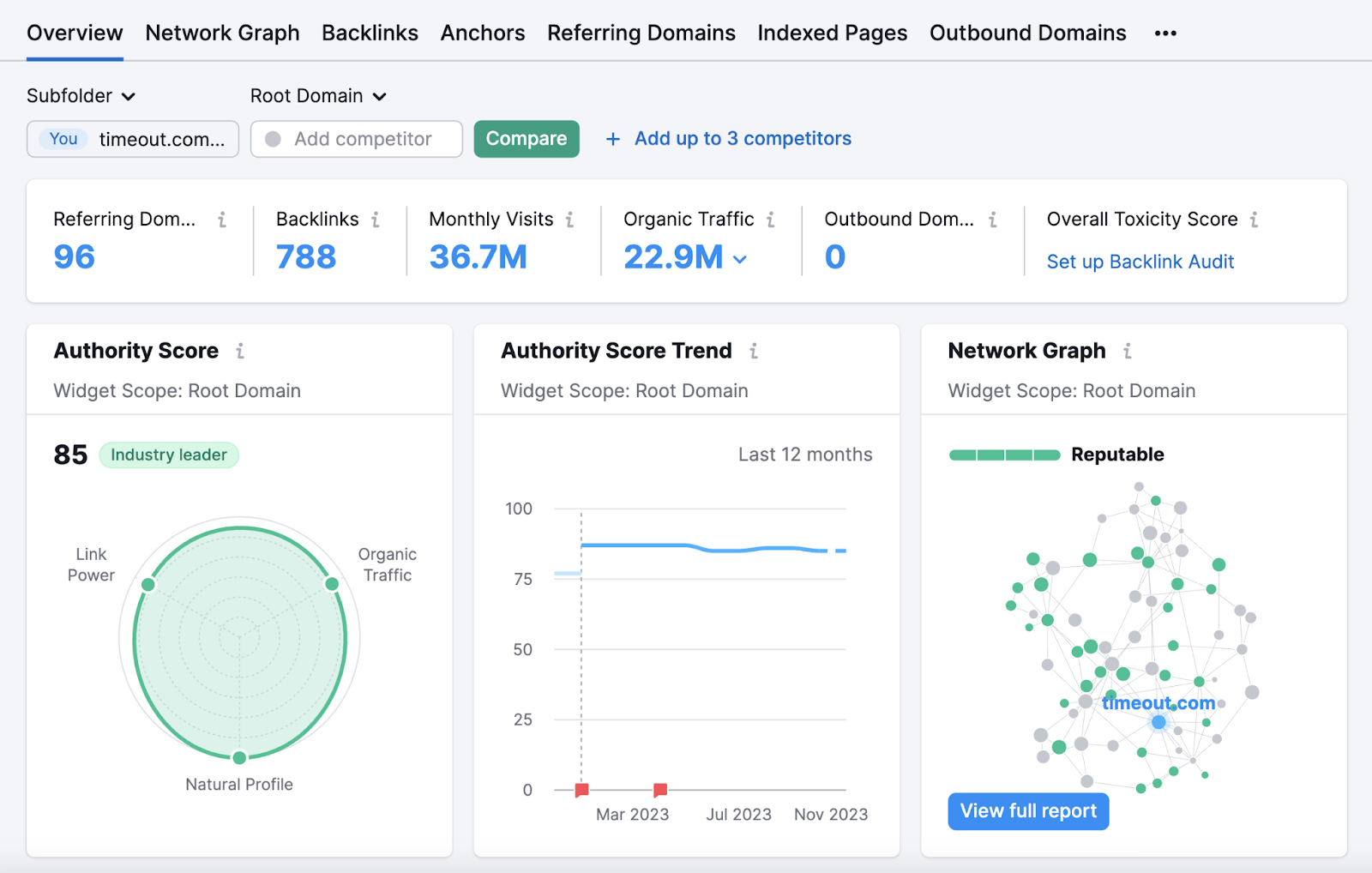

Backlink Analytics uses crawlers to study your and your competitors’ incoming links. Which allows you to analyze the backlink profiles of your competitors or other industry leaders.

Semrush’s backlinks database regularly updates with new information about links to and from crawled pages. So the information is always up to date.

Open the tool, enter a domain name, and click “Analyze.”

You’ll then be taken to the “Overview” report. Where you can look into the domain’s total number of backlinks, total number of referring domains, estimated organic traffic, and more.

Backlink Audit

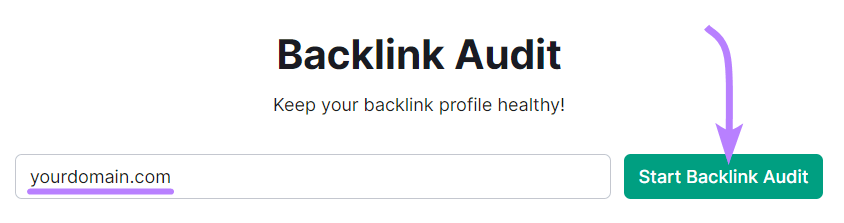

Semrush’s Backlink Audit tool lets you crawl your own site to get an in-depth look at how healthy your backlink profile is. To help you understand whether you can improve your ranking potential.

Open the tool, enter your domain, and click “Start Backlink Audit.”

Then, follow the Backlink Audit configuration guide to set up your project and start the audit.

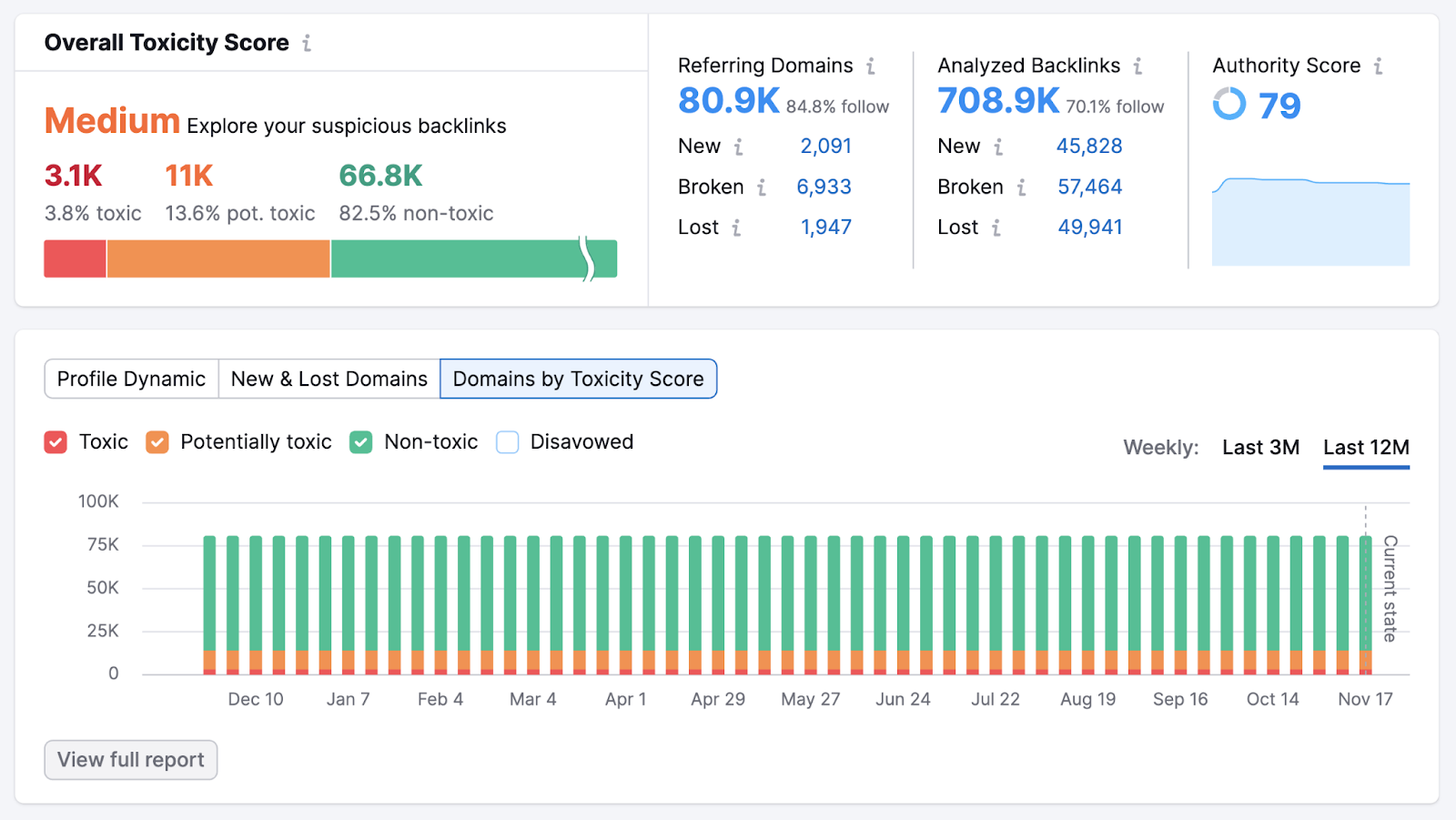

Once the tool is done gathering information, you’ll see the “Overview” report. Which gives you a holistic look at your backlink profile.

Look at the “Overall Toxicity Score” (a metric based on the number of low-quality domains linking to your website) section. A “Medium” or “High” score indicates you have room for improvement.

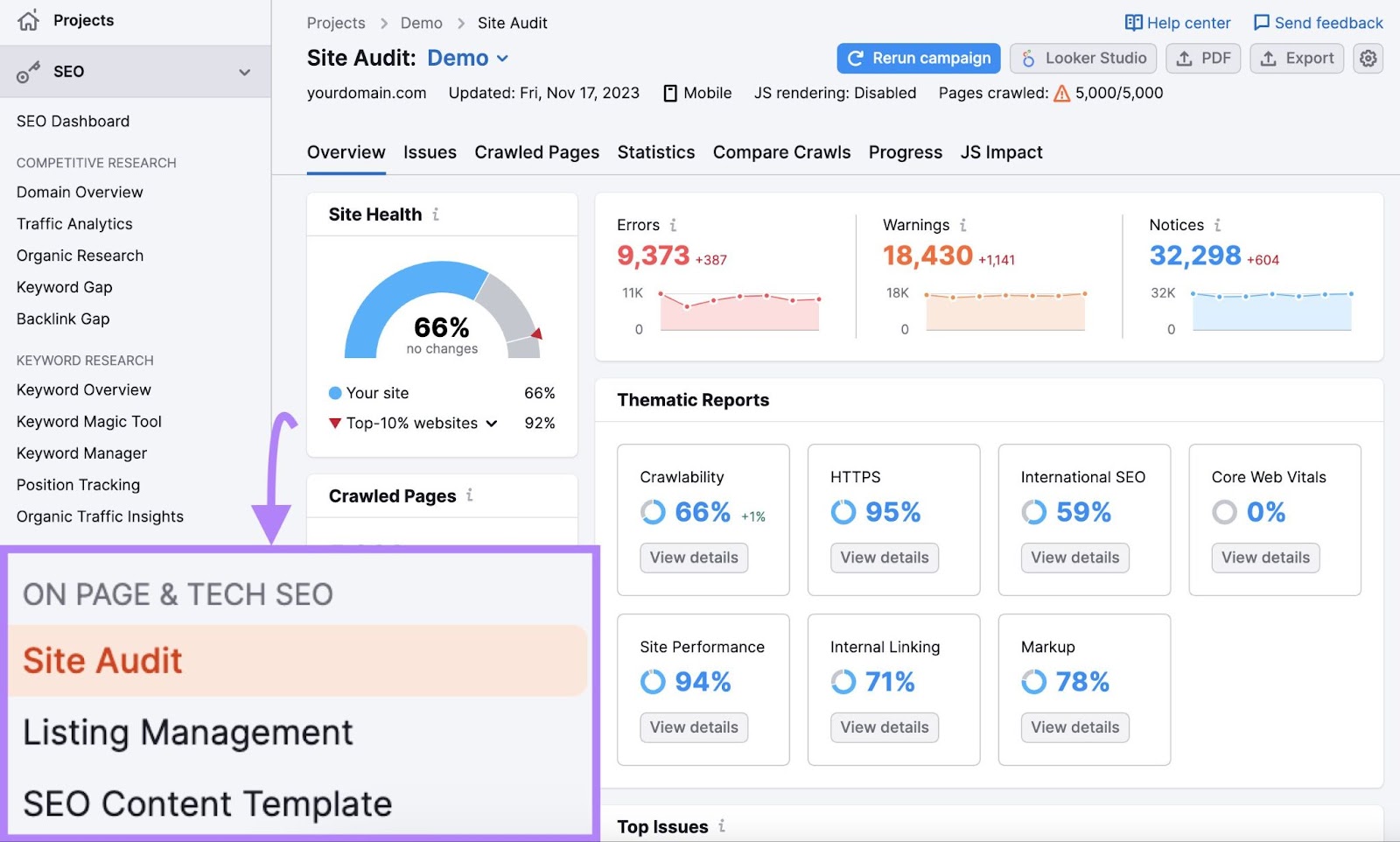

Site Audit

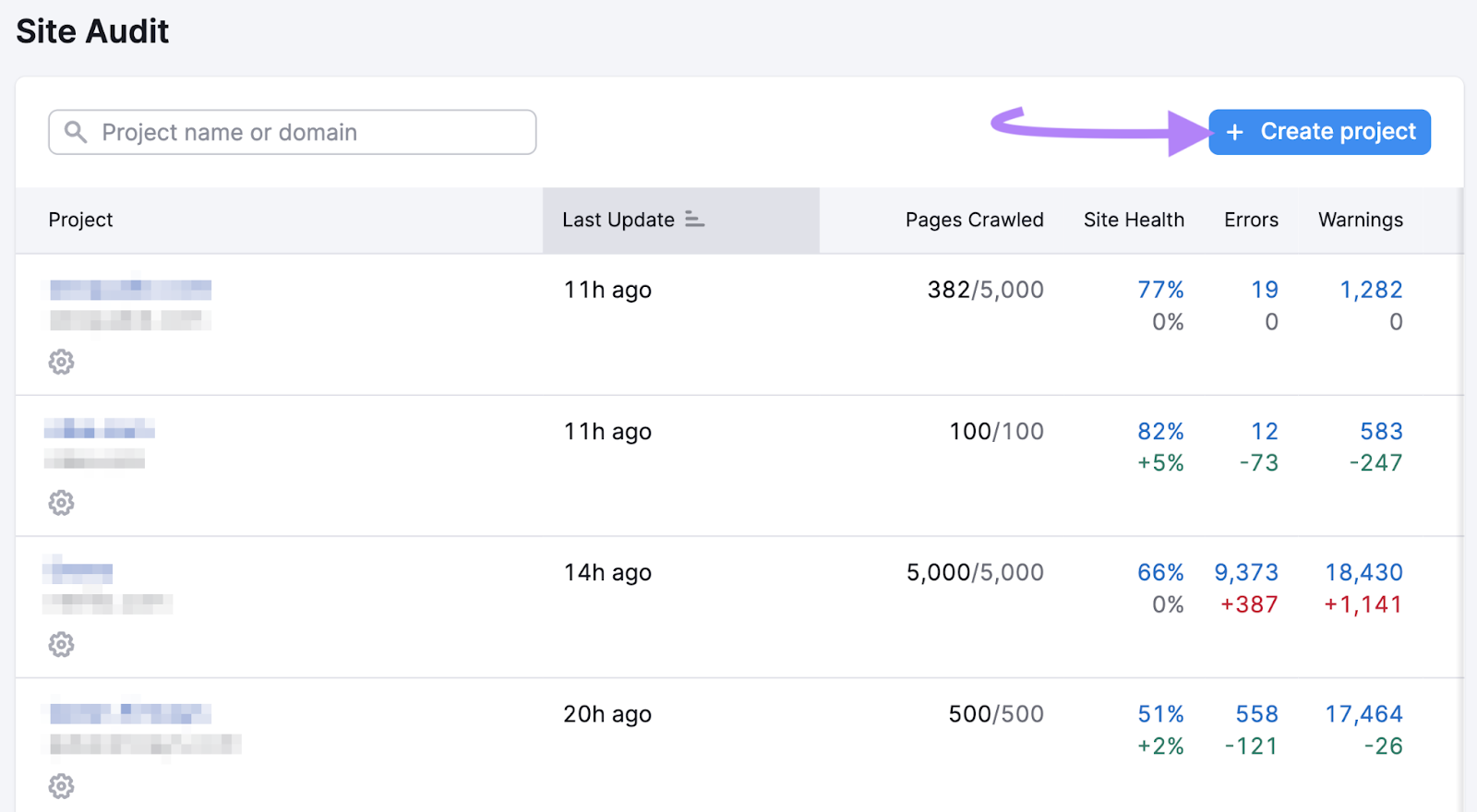

Site Audit uses crawlers to access your website and analyze its overall health. And gives you a report of the technical issues that could be affecting your site’s ability to rank well in Google’s search results.

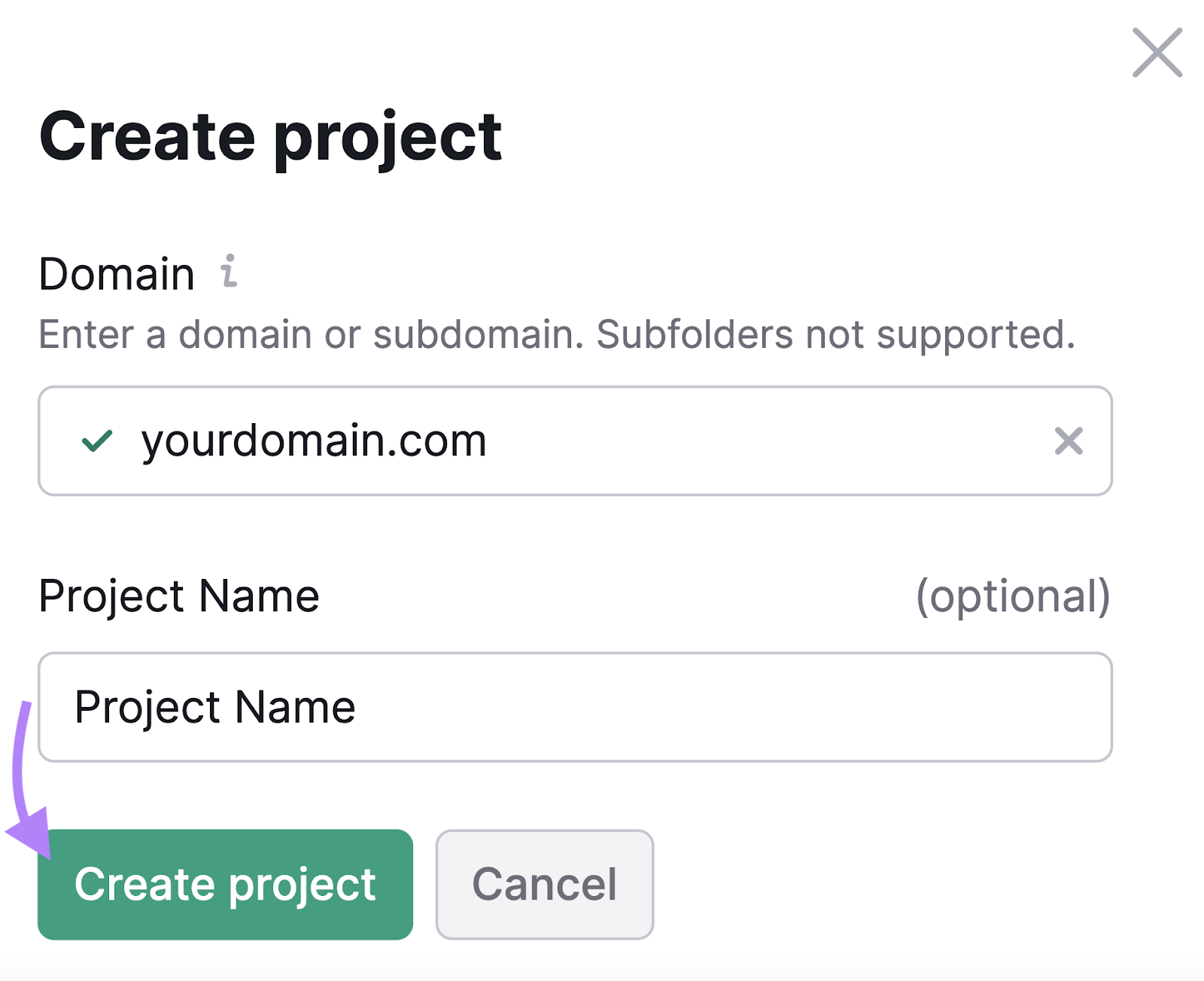

To crawl your site, open the tool and click “+ Create project.”

Enter your domain and an optional project name. Then, click “Create project.”

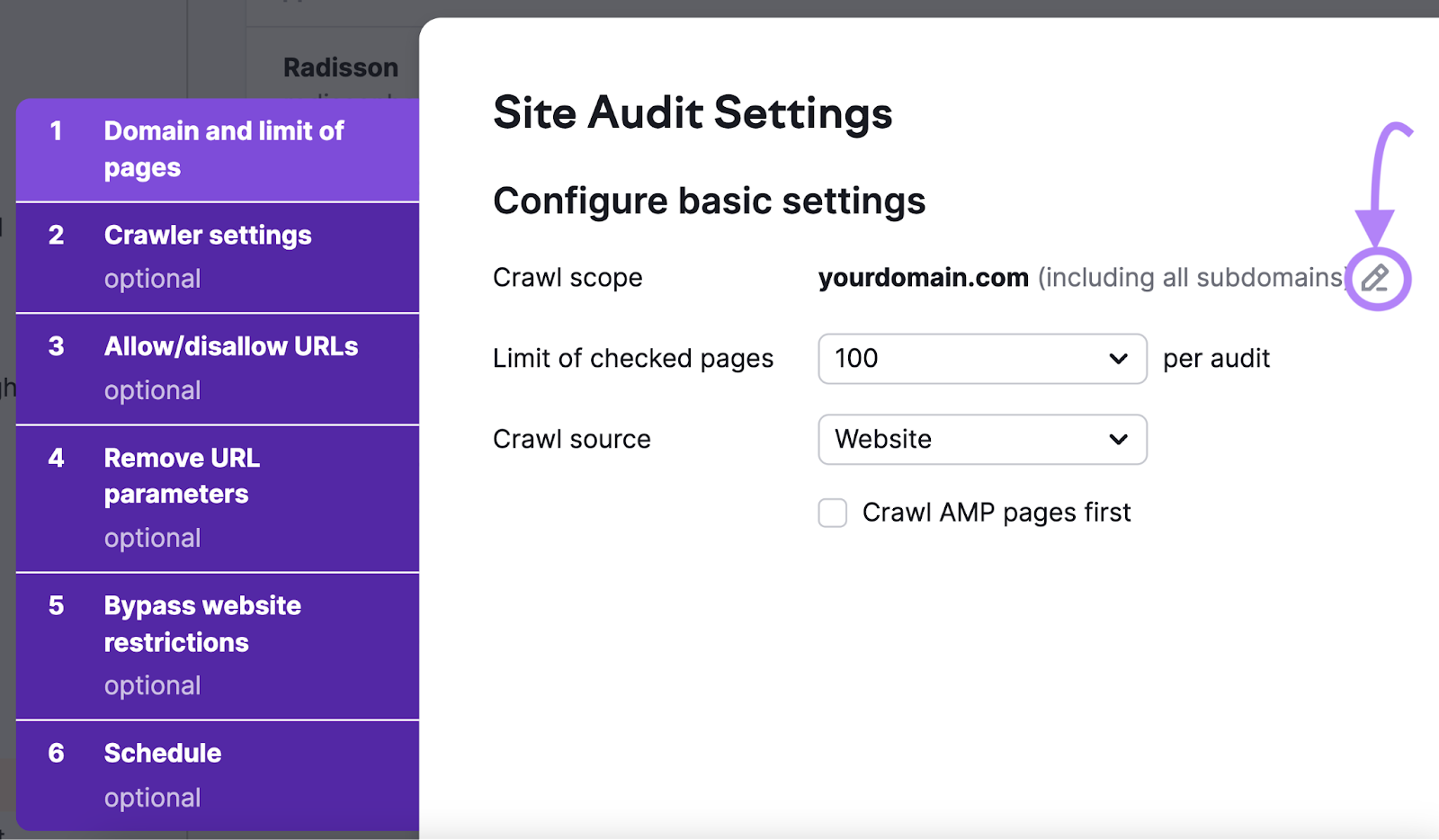

Now, it’s time to configure your basic settings.

First, define the scope of your crawl. The default setting is to crawl the domain you entered along with its subdomains and subfolders. To edit this, click the pencil icon and change your settings.

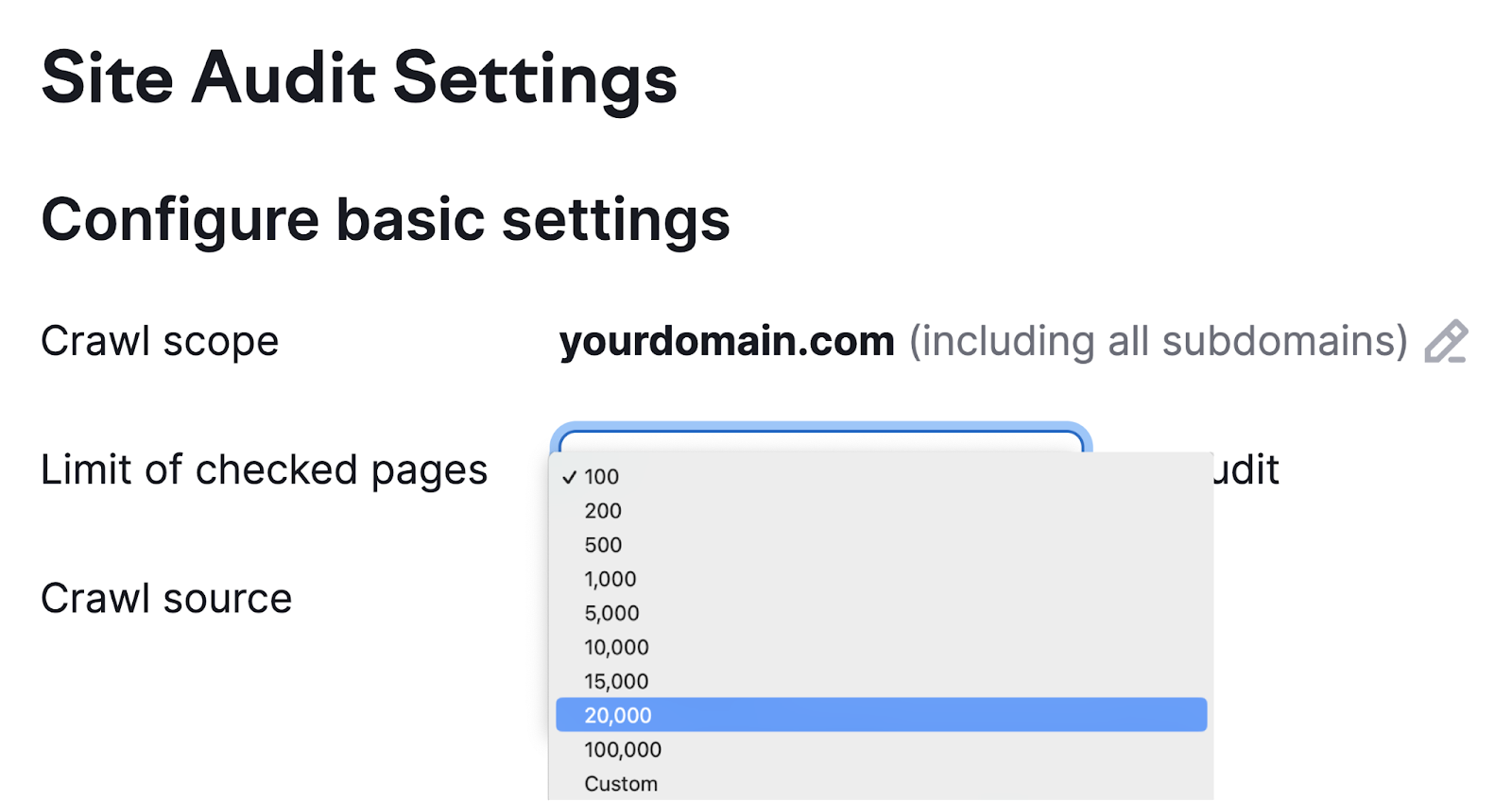
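
To show what a crawl scope means in practice, here is a small Python sketch of a scope check a crawler could apply to each URL before visiting it. It is an assumption-laden illustration, not Semrush’s implementation: `in_scope` and its `include_subdomains` flag are hypothetical names.

```python
from urllib.parse import urlparse


def in_scope(url, domain, include_subdomains=True):
    """Return True if url belongs to domain (optionally its subdomains)."""
    host = (urlparse(url).hostname or "").lower()
    domain = domain.lower()
    if host == domain:
        return True
    # "blog.example.com" ends with ".example.com"; "notexample.com" does not.
    return include_subdomains and host.endswith("." + domain)


print(in_scope("https://blog.example.com/post", "example.com"))
print(in_scope("https://blog.example.com/post", "example.com", include_subdomains=False))
```

Matching on the leading dot matters: without it, an unrelated host such as `notexample.com` would wrongly count as part of `example.com`.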

Next, set the maximum number of pages you want crawled per audit. The more pages you crawl, the more accurate your audit will be. But you also need to account for your own capacity and your subscription level.

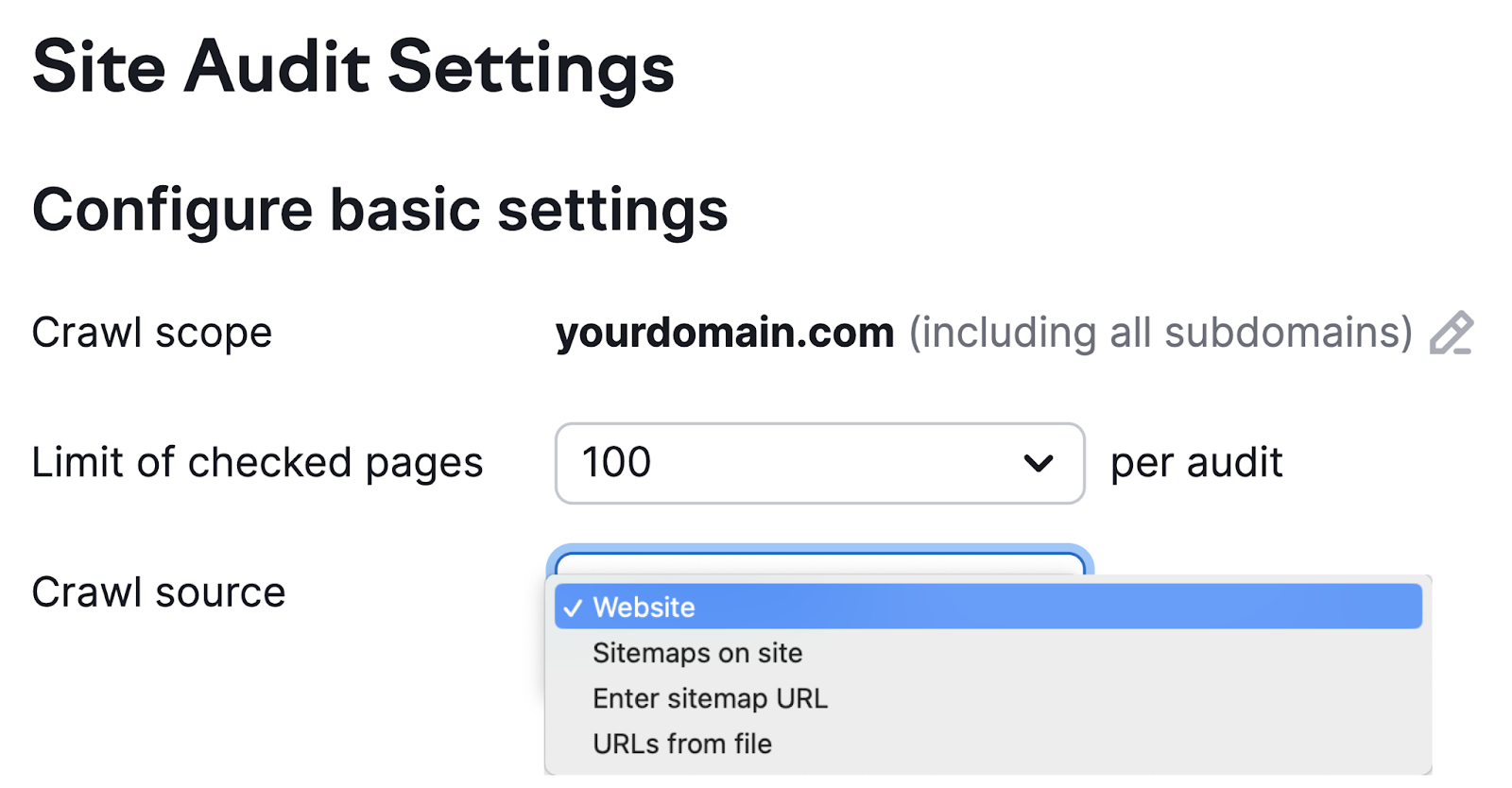

Then, choose your crawl source.

There are four options:

- Website: This initiates an algorithm that travels around your site like a search engine crawler would. It’s a good choice if you’re interested in crawling the pages on your site that are most accessible from the homepage.

- Sitemaps on site: This initiates a crawl of the URLs found in the sitemap from your robots.txt file

- Enter sitemap URL: This source allows you to enter your own sitemap URL, making your audit more specific

- URLs from file: This allows you to get really specific about which pages you want to audit. You just need to have them saved as CSV or TXT files that you can upload directly to Semrush. This option is great for when you don’t need a general overview. For example, when you’ve made changes to specific pages and want to see how they perform.
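
For a sense of what the sitemap-based sources involve, the Python sketch below finds the `Sitemap:` lines in a robots.txt file and then lists the page URLs in a sitemap that follows the standard sitemaps.org XML format. The sample robots.txt, the sample sitemap, and the function names are hypothetical, and this is not a description of how Site Audit itself is built.

```python
import xml.etree.ElementTree as ET

# Namespace used by sitemaps that follow the sitemaps.org protocol.
SITEMAP_NS = "{http://www.sitemaps.org/schemas/sitemap/0.9}"


def sitemap_urls_from_robots(robots_txt):
    """Return every sitemap URL declared in a robots.txt file."""
    urls = []
    for line in robots_txt.splitlines():
        field, _, value = line.partition(":")
        if field.strip().lower() == "sitemap" and value.strip():
            urls.append(value.strip())
    return urls


def page_urls_from_sitemap(sitemap_xml):
    """Return the page URLs listed in a sitemap's <loc> elements."""
    root = ET.fromstring(sitemap_xml)
    return [loc.text.strip() for loc in root.iter(SITEMAP_NS + "loc") if loc.text]


# Hypothetical files used only for illustration.
ROBOTS_TXT = """User-agent: *
Disallow: /admin/
Sitemap: https://example.com/sitemap.xml
"""

SITEMAP_XML = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/</loc></url>
  <url><loc>https://example.com/blog/</loc></url>
</urlset>"""

print(sitemap_urls_from_robots(ROBOTS_TXT))
print(page_urls_from_sitemap(SITEMAP_XML))
```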

The remaining settings on tabs two through six in the setup wizard are optional. But make sure to specify any parts of your website you don’t want to be crawled. And provide login credentials if your website is protected by a password.

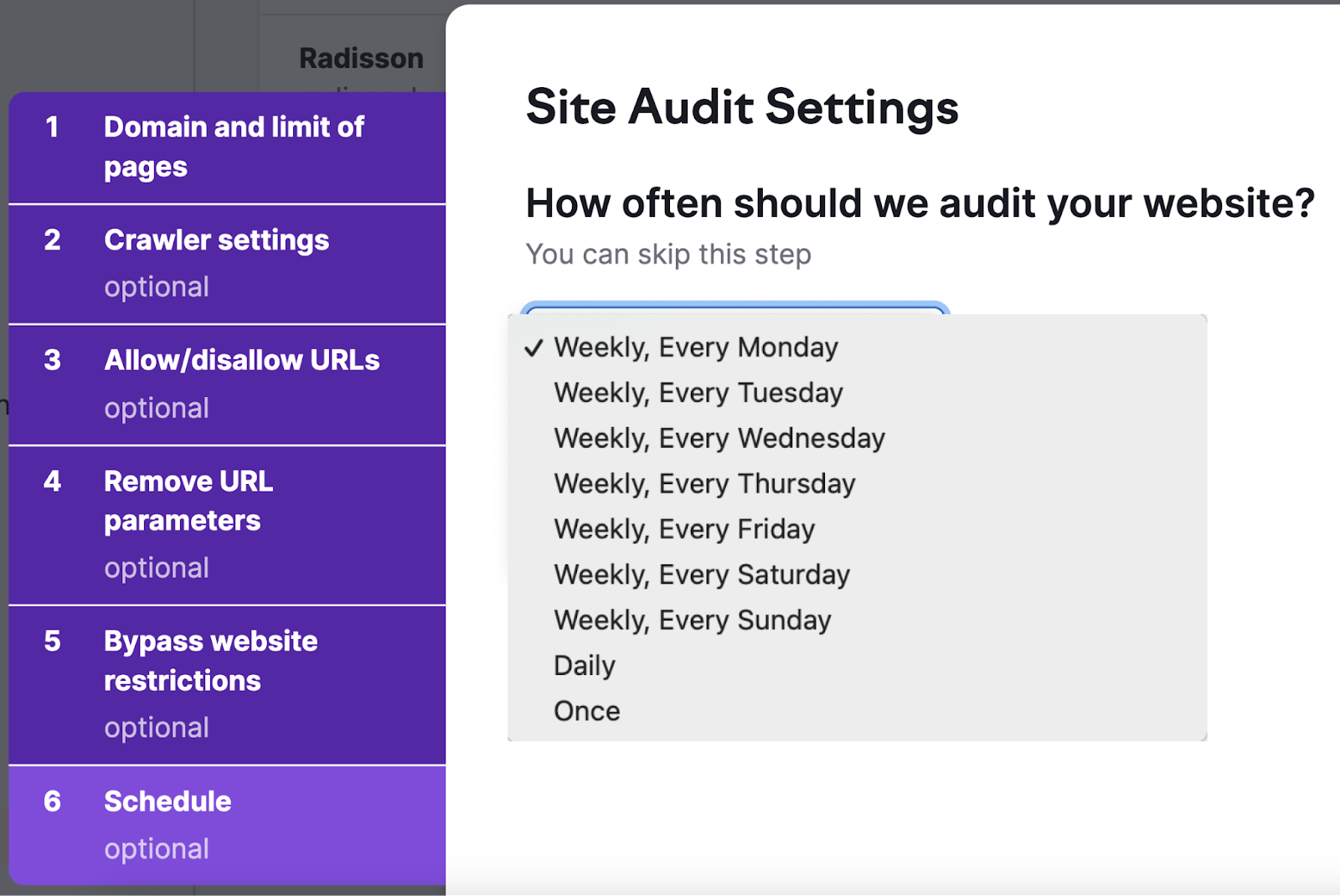

We also recommend scheduling regular site audits in the “Schedule” tab. Because auditing your site regularly helps you monitor your site’s health and gauge the impact of recent changes.

Scheduling options include weekly, daily, or just once.

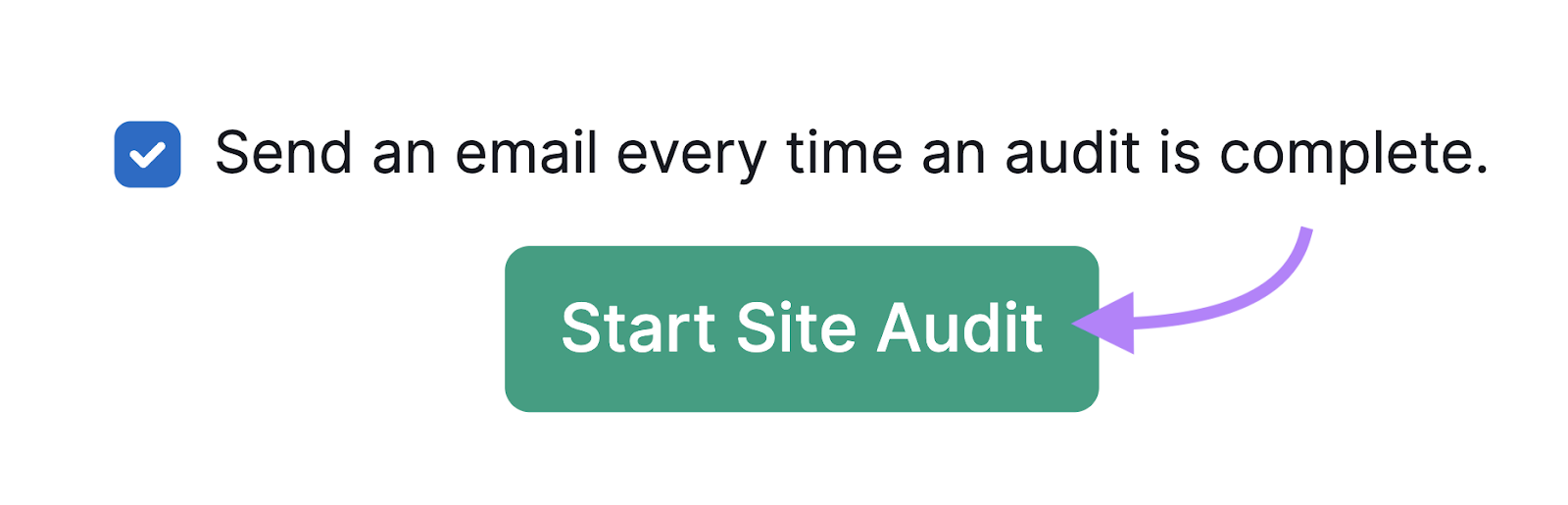

Finally, click “Start Site Audit” to get your crawl underway. And don’t forget to check the box next to “Send an email every time an audit is complete.” So you’re notified when your audit report is ready.

Now just wait for the email notification that your audit is complete. You can then start reviewing your results.

How to Evaluate Your Website Crawl Data

Once you’ve conducted a crawl, analyze the results. To find out what you can do to improve.

You can go to your project in Site Audit.

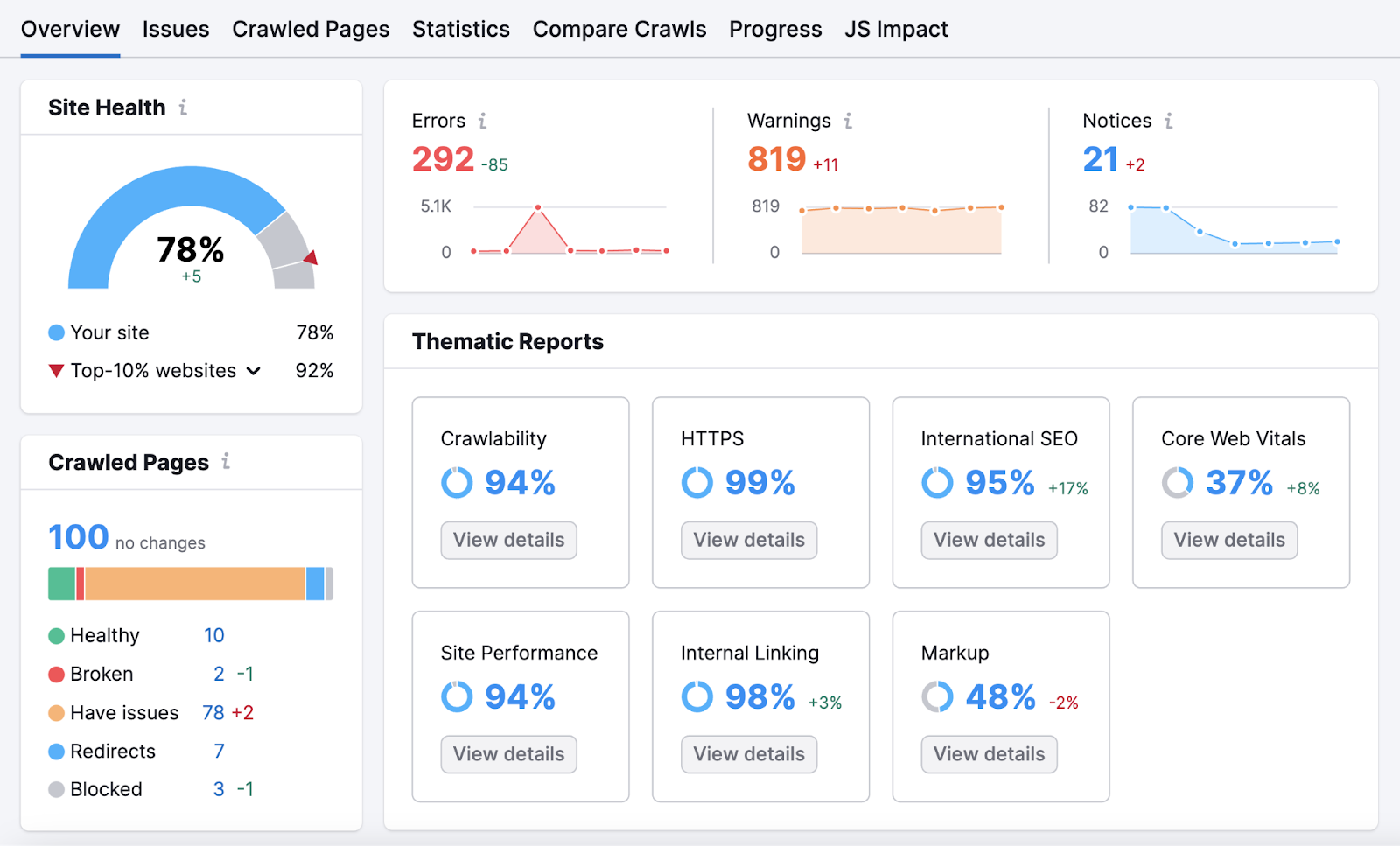

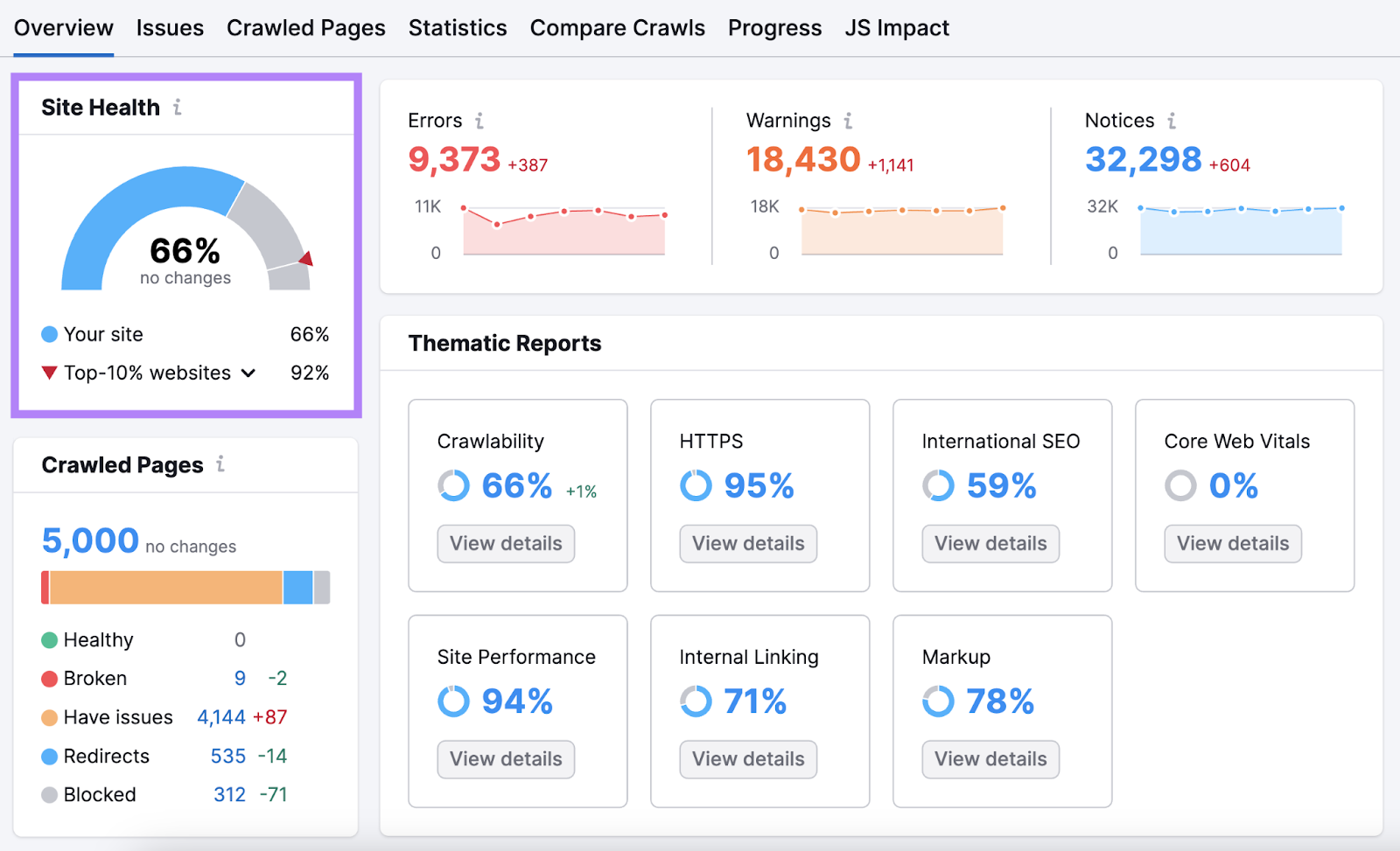

You’ll be taken to the “Overview” report. Where you’ll find your “Site Health” score. The higher your score, the more user-friendly and search-engine-optimized your site is.

Below that, you’ll see the number of crawled pages. And a distribution of pages by page status.

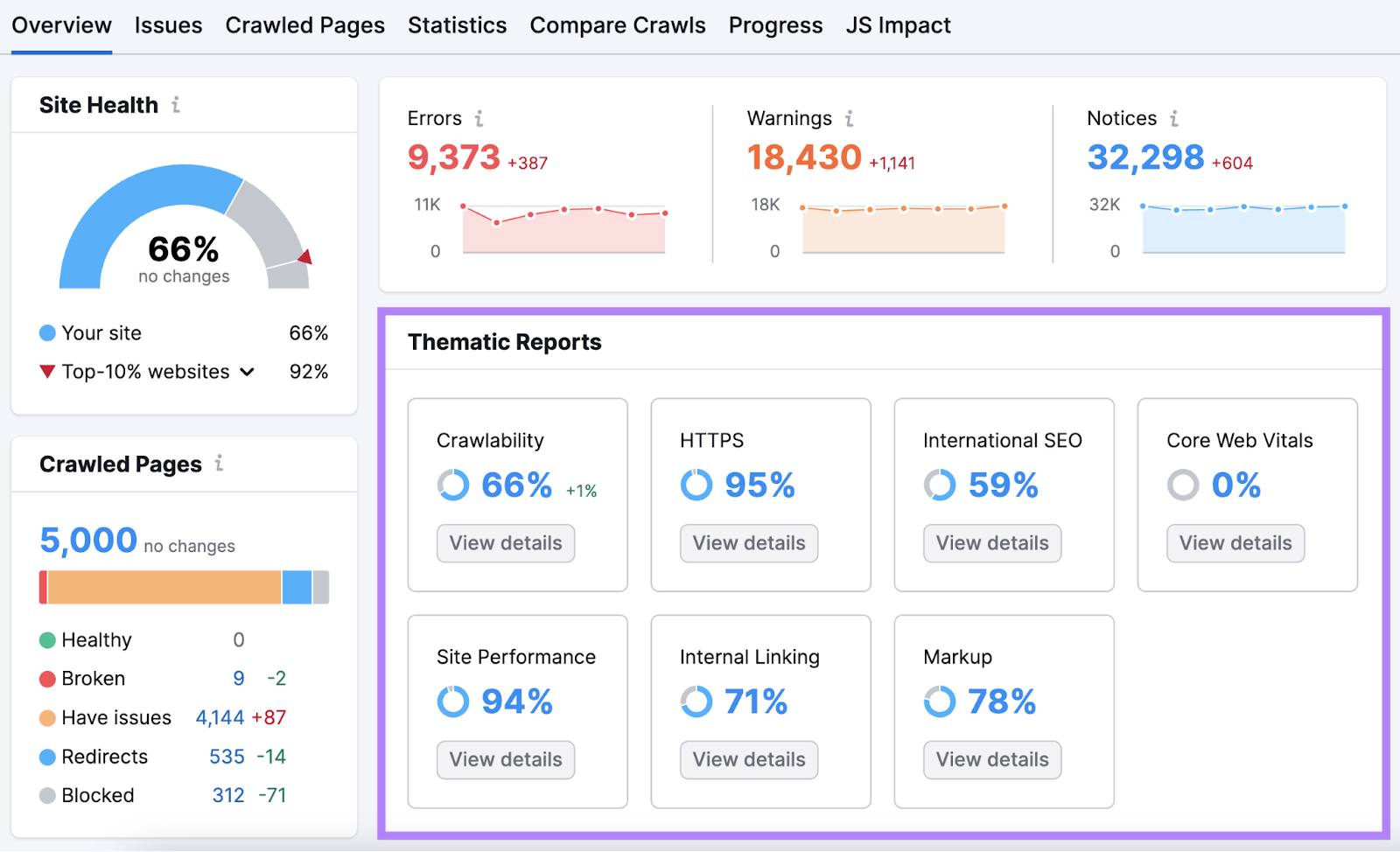

Scroll down to “Thematic Reports.” This section details room for improvement in areas like Core Web Vitals, internal linking, and crawlability.

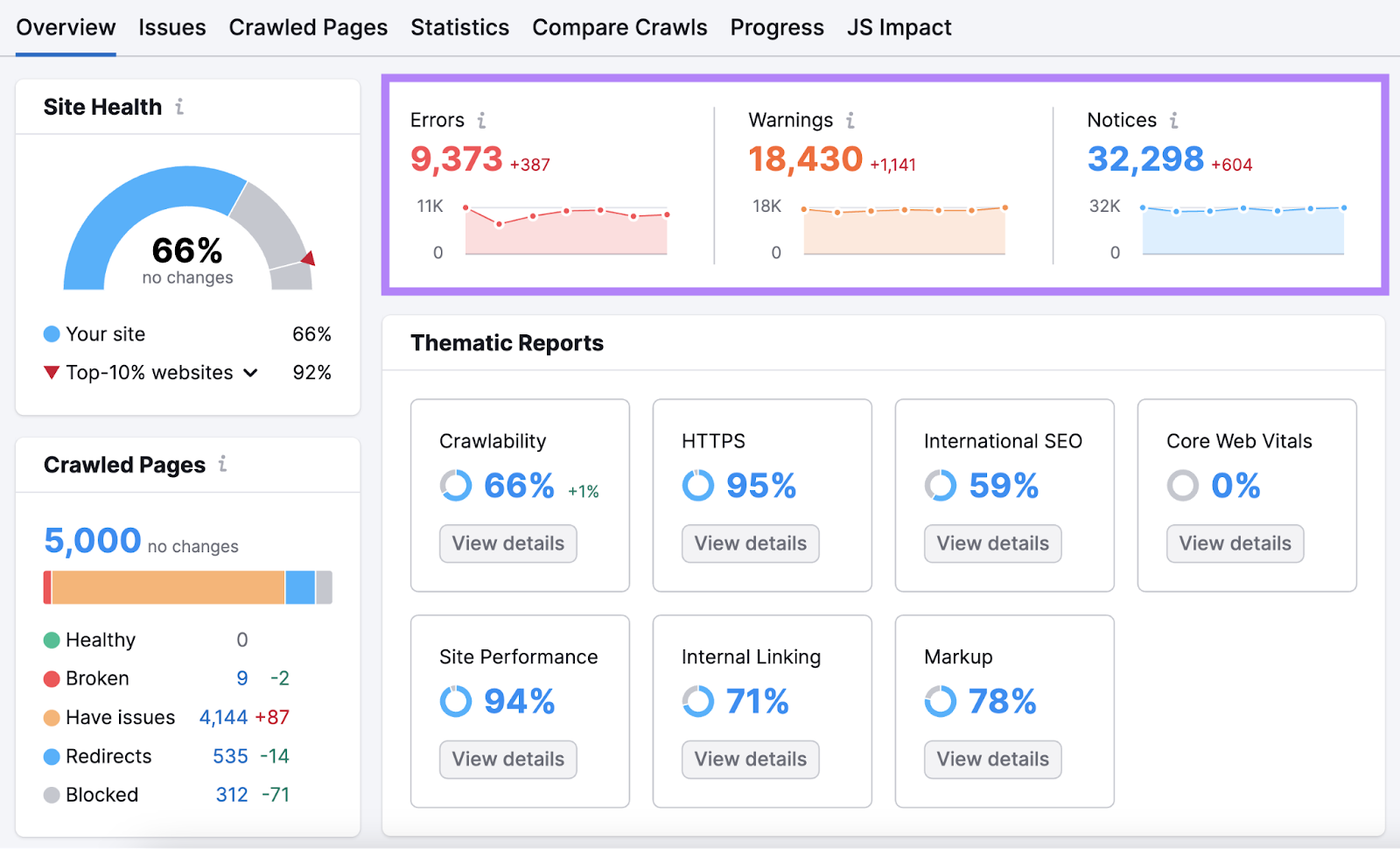

To the right, you’ll find “Errors,” “Warnings,” and “Notices.” Which are issues categorized by severity.

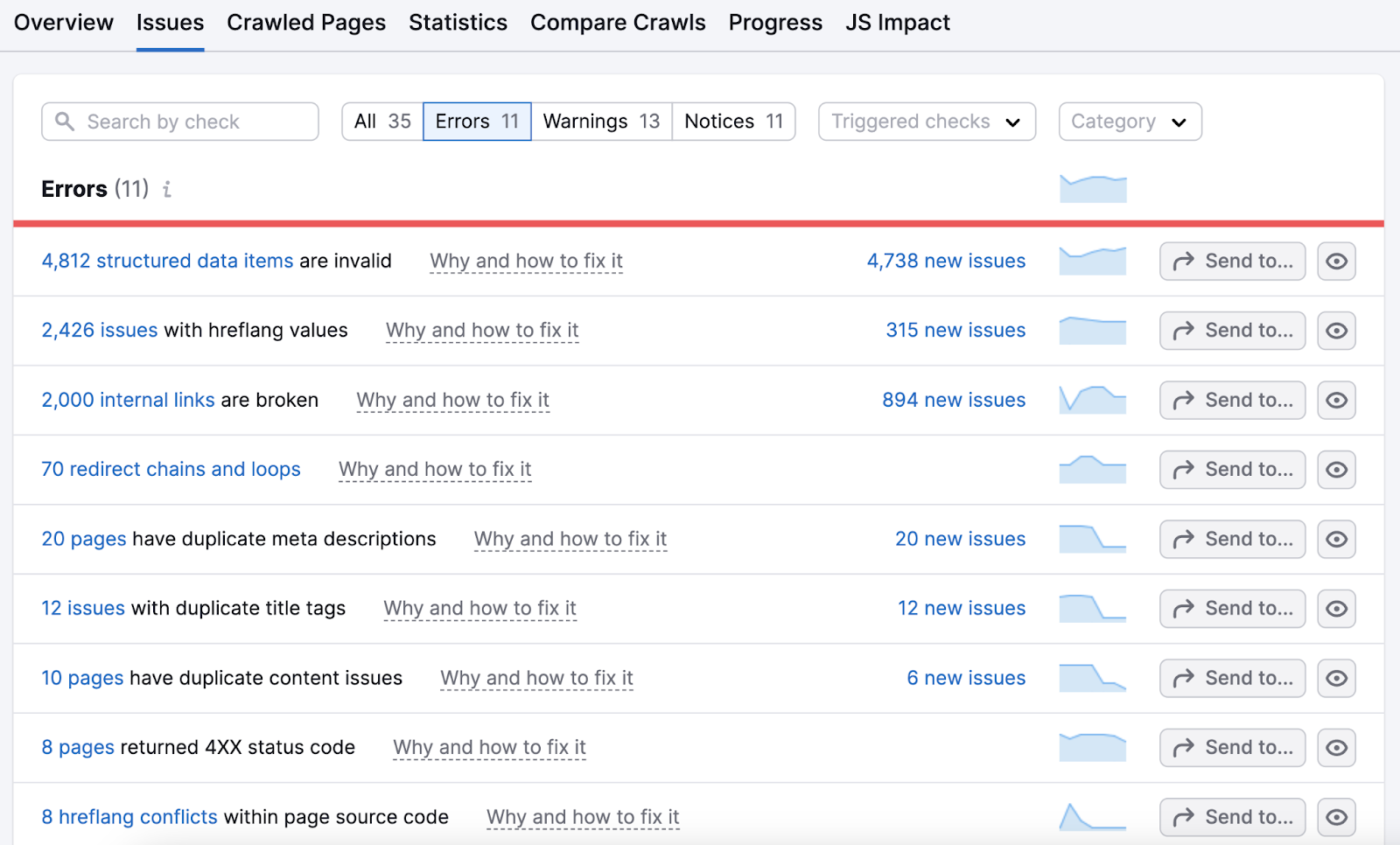

Click the number highlighted in “Errors” to get detailed breakdowns of the biggest problems. And suggestions for fixing them.

Work your way through this list. And then move on to address warnings and notices.

Keep Crawling to Stay Ahead of the Competition

Search engines like Google never stop crawling webpages.

Including yours. And your competitors’.

Keep an edge over the competition by regularly crawling your website to stop problems in their tracks.

You can schedule automatic recrawls and reports with Site Audit. Or, manually run future web crawls to keep your website in top shape, from both users’ and Google’s perspective.