What Is a Robots.txt File?

A robots.txt file is a set of directions telling search engines like google which pages ought to and shouldn’t be crawled on a web site. Which guides crawler entry however shouldn’t be used to maintain pages out of Google’s index.

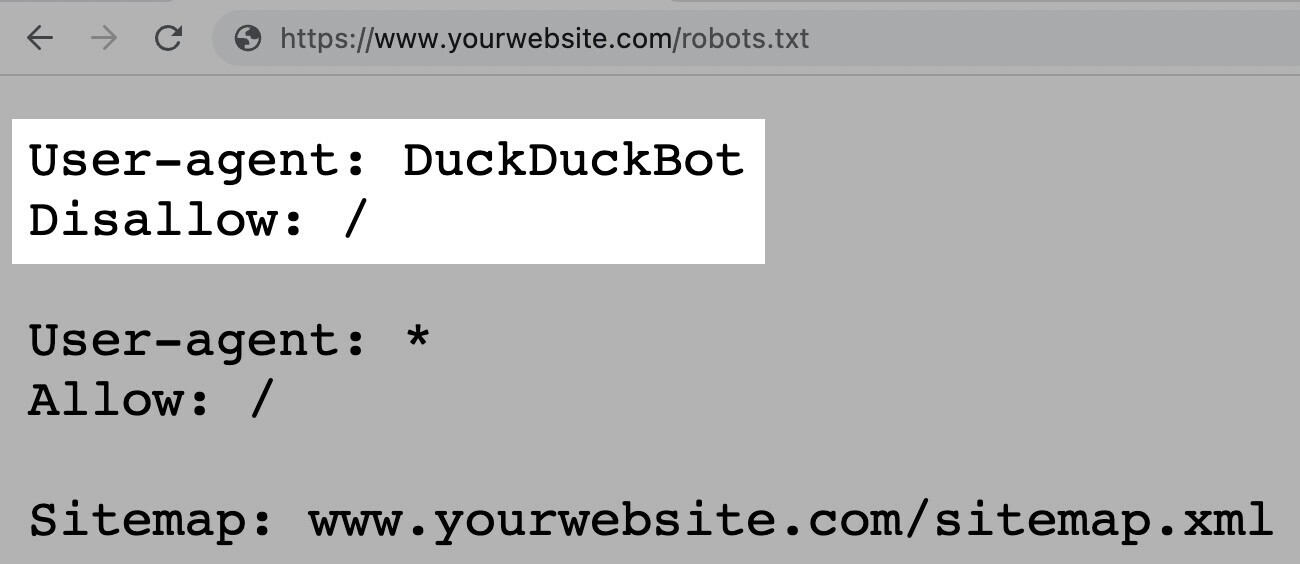

A robots.txt file appears like this:

Robots.txt recordsdata may appear difficult, however the syntax (pc language) is simple.

Earlier than we get into these particulars, let’s provide some clarification on how robots.txt differs from some phrases that sound comparable.

Robots.txt recordsdata, meta robots tags, and x-robots tags all information search engines like google about the best way to deal with your web site’s content material.

However they differ of their stage of management, the place they’re situated, and what they management.

Listed below are the specifics:

- Robots.txt: This file is situated in your web site’s root listing and acts as a gatekeeper to supply normal, site-wide directions to go looking engine crawlers on which areas of your web site they need to and shouldn’t crawl

- Meta robots tags: These are snippets of code that reside inside the <head> part of particular person webpages. And supply page-specific directions to search engines like google on whether or not to index (embody in search outcomes) and observe (crawl hyperlinks inside) every web page.

- X-robot tags: These are code snippets which can be primarily used for non-HTML recordsdata like PDFs and pictures. And are carried out within the file’s HTTP header.

Additional studying: Meta Robots Tag & X-Robots-Tag Defined

Why Is Robots.txt Vital for search engine optimization?

A robots.txt file helps handle net crawler actions, in order that they don’t overwork your web site or hassle with pages not meant for public view.

Beneath are a couple of causes to make use of a robots.txt file:

1. Optimize Crawl Funds

Crawl price range refers back to the variety of pages Google will crawl in your web site inside a given timeframe.

The quantity can differ based mostly in your web site’s measurement, well being, and variety of backlinks.

In case your web site’s variety of pages exceeds your web site’s crawl price range, you possibly can have necessary pages that fail to get listed.

These unindexed pages received’t rank. That means you wasted time creating pages customers received’t see.

Blocking pointless pages with robots.txt permits Googlebot (Google’s net crawler) to spend extra crawl price range on pages that matter.

2. Block Duplicate and Non-Public Pages

Crawl bots don’t have to sift by means of each web page in your web site. As a result of not all of them have been created to be served within the search engine outcomes pages (SERPs).

Like staging websites, inner search outcomes pages, duplicate pages, or login pages. Some content material administration techniques deal with these inner pages for you.

WordPress, for instance, routinely disallows the login web page “/wp-admin/” for all crawlers.

Robots.txt means that you can block these pages from crawlers.

3. Disguise Assets

Generally, you wish to exclude sources corresponding to PDFs, movies, and pictures from search outcomes.

To maintain them non-public or have Google give attention to extra necessary content material.

In both case, robots.txt retains them from being crawled.

How Does a Robots.txt File Work?

Robots.txt recordsdata inform search engine bots which URLs they need to crawl and (extra importantly) which of them to disregard.

As they crawl webpages, search engine bots uncover and observe hyperlinks. This course of takes them from web site A to web site B to web site C throughout hyperlinks, pages, and web sites.

But when a bot finds a robots.txt file, it would learn it earlier than doing the rest.

The syntax is simple.

You assign guidelines by figuring out the “user-agent” (search engine bot) and specifying the directives (guidelines).

You can even use an asterisk (*) to assign directives to each user-agent, which applies the rule for all bots.

For instance, the beneath instruction permits all bots besides DuckDuckGo to crawl your web site:

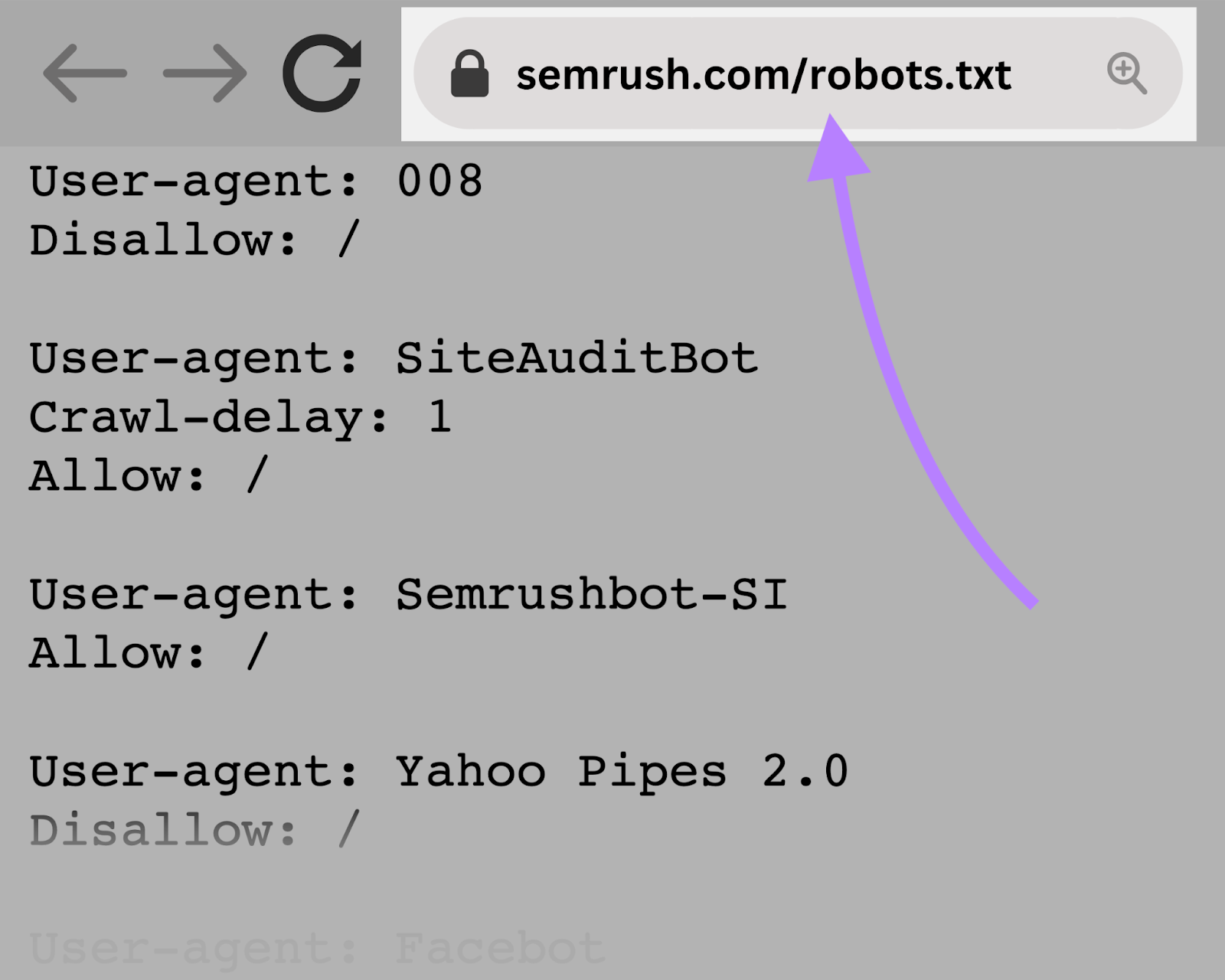

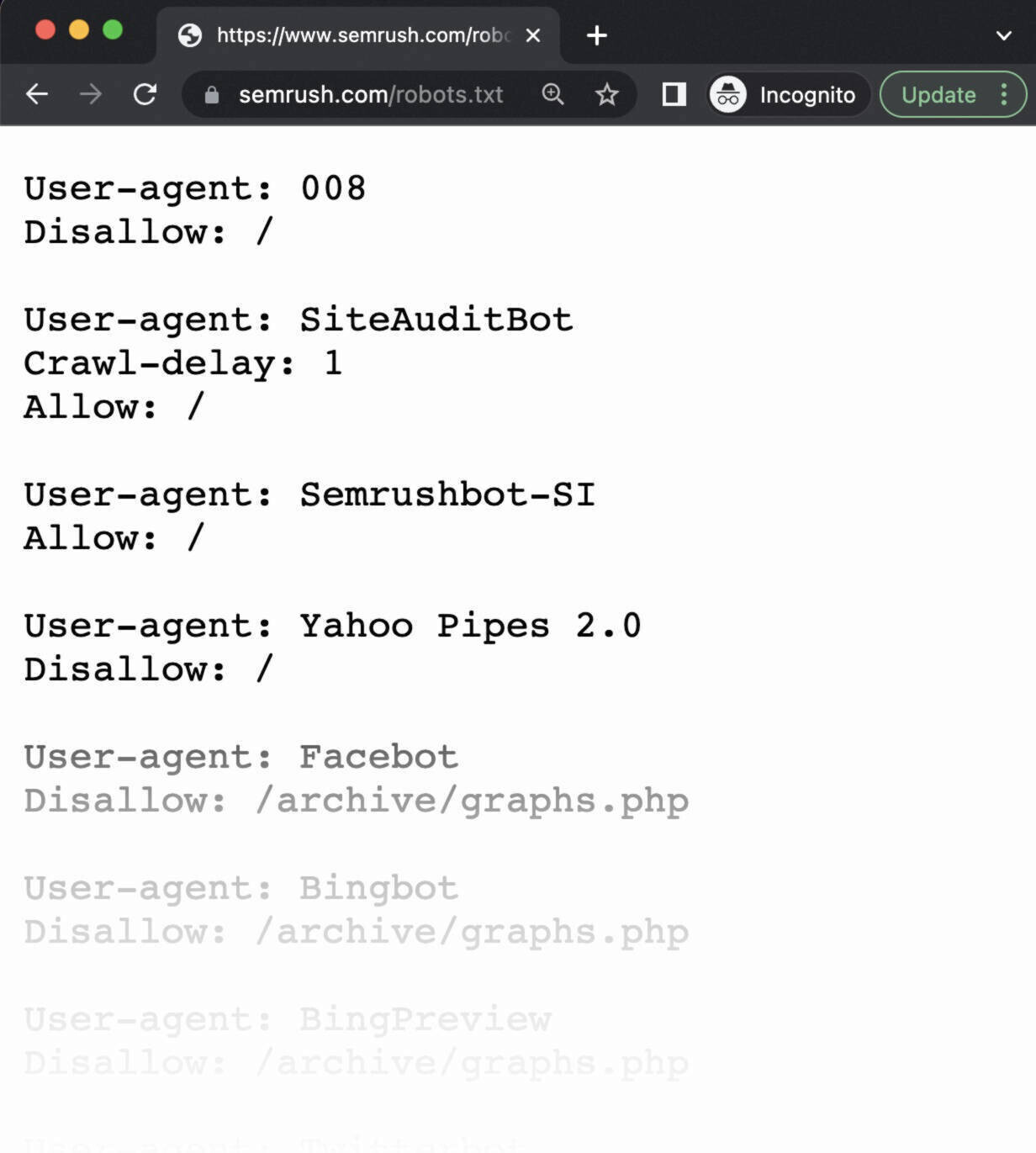

Semrush bots crawl the net to collect insights for our web site optimization instruments, corresponding to Website Audit, Backlink Audit, and On Web page search engine optimization Checker.

Our bots respect the principles outlined in your robots.txt file. So, for those who block our bots from crawling your web site, they received’t.

However doing that additionally means you’ll be able to’t use a few of our instruments to their full potential.

For instance, for those who blocked our SiteAuditBot from crawling your web site, you couldn’t audit your web site with our Website Audit device. To investigate and repair technical points in your web site.

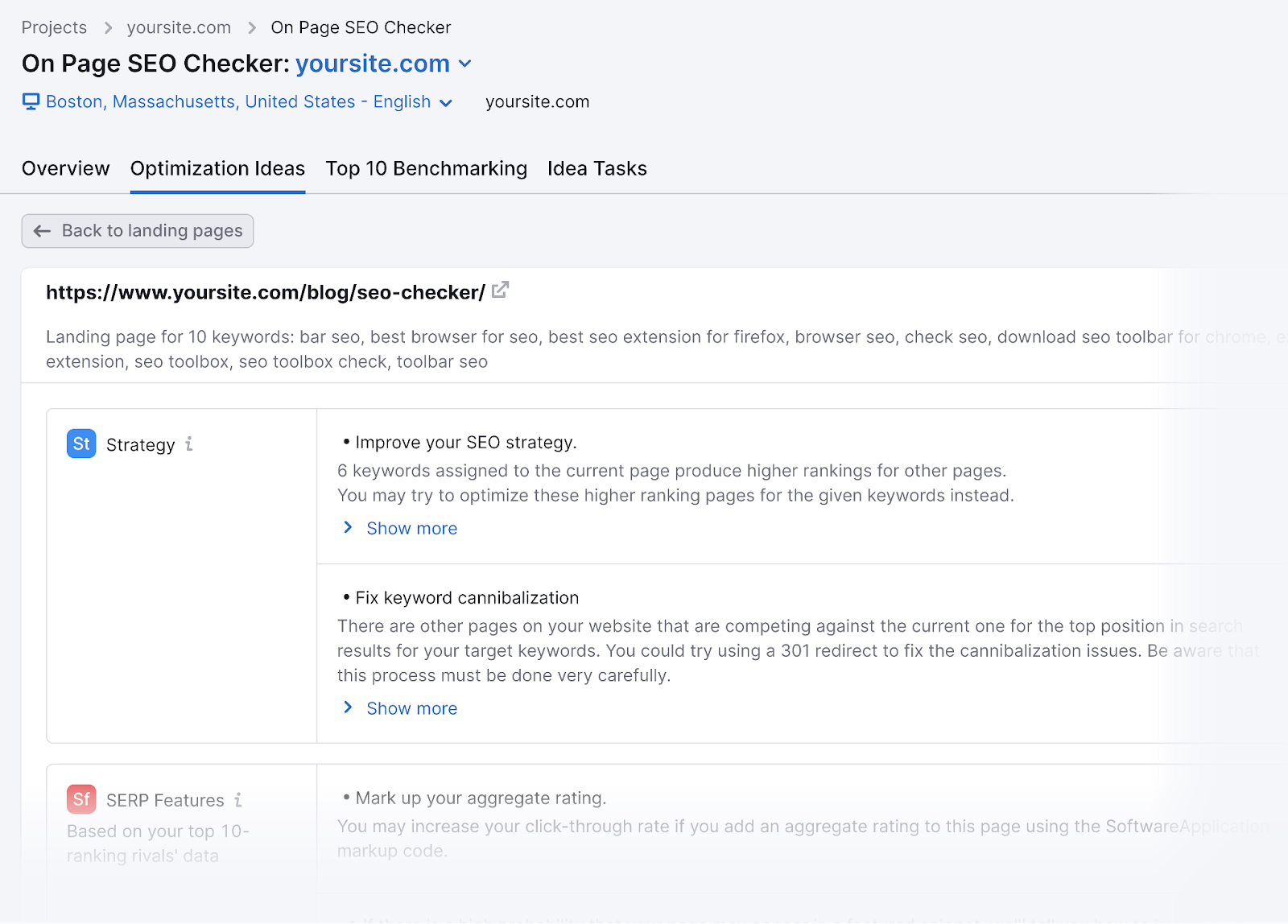

For those who blocked our SemrushBot-SI from crawling your web site, you couldn’t use the On Web page search engine optimization Checker device successfully.

And also you’d lose out on producing optimization concepts to enhance your webpages’ rankings.

How one can Discover a Robots.txt File

Your robots.txt file is hosted in your server, identical to another file in your web site.

You may view the robots.txt file for any given web site by typing the complete URL for the homepage and including “/robots.txt” on the finish.

Like this: “https://semrush.com/robots.txt.”

Earlier than studying the best way to create a robots.txt file or going into the syntax, let’s first have a look at some examples.

Examples of Robots.txt Recordsdata

Listed below are some real-world robots.txt examples from standard web sites.

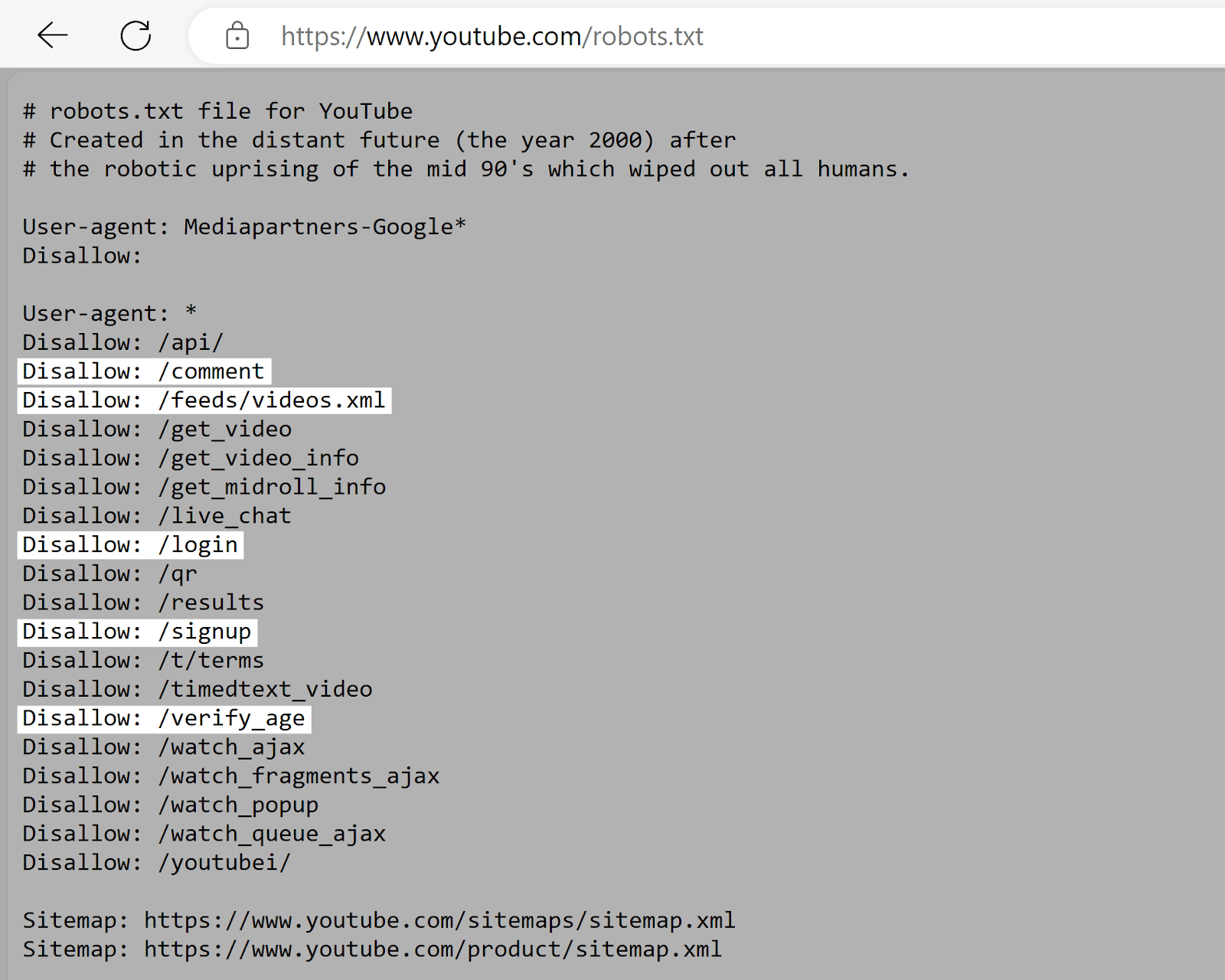

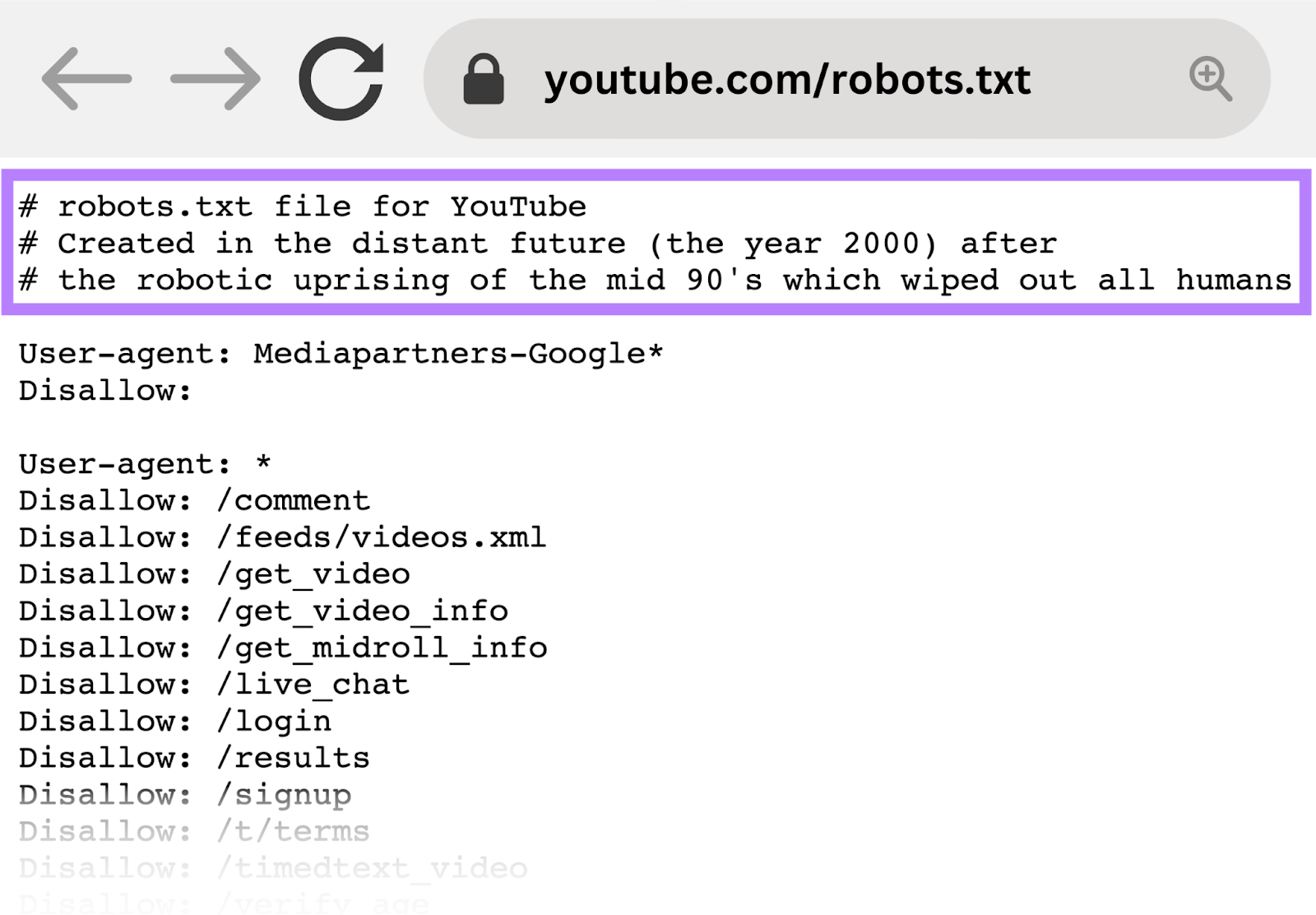

YouTube

YouTube’s robots.txt file tells crawlers to not entry consumer feedback, video feeds, login/signup pages, and age verification pages.

This discourages the indexing of user-specific or dynamic content material that’s usually irrelevant to go looking outcomes and will increase privateness considerations.

G2

G2’s robots.txt file tells crawlers to not entry sections with user-generated content material. Like survey responses, feedback, and contributor profiles.

This helps shield consumer privateness by defending doubtlessly delicate private data. And in addition prevents customers from making an attempt to govern search outcomes.

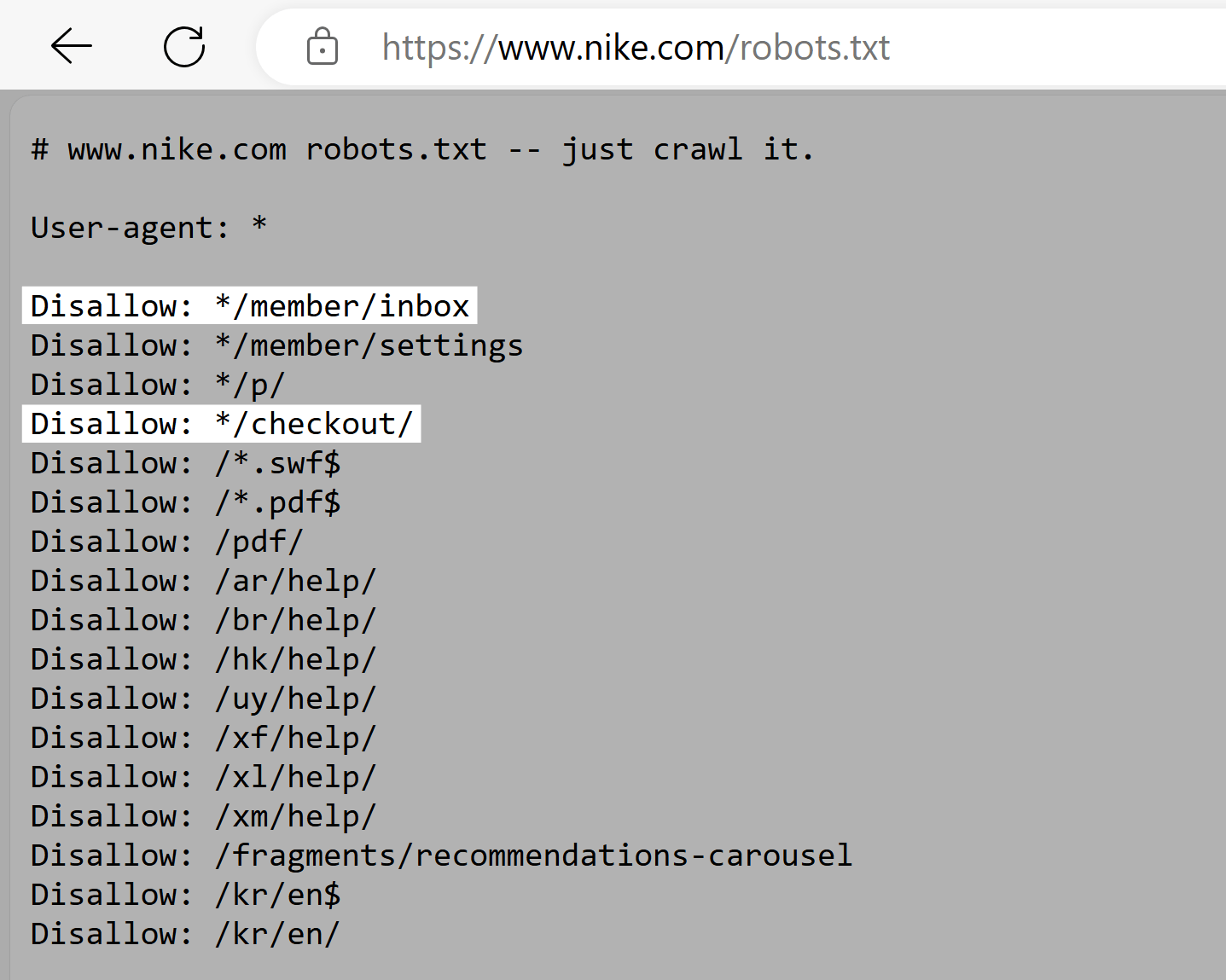

Nike

Nike’s robots.txt file makes use of the disallow directive to dam crawlers from accessing user-generated directories. Like “/checkout/” and “*/member/inbox.”

This ensures that doubtlessly delicate consumer knowledge isn’t uncovered in search outcomes. And prevents makes an attempt to govern search engine optimization rankings.

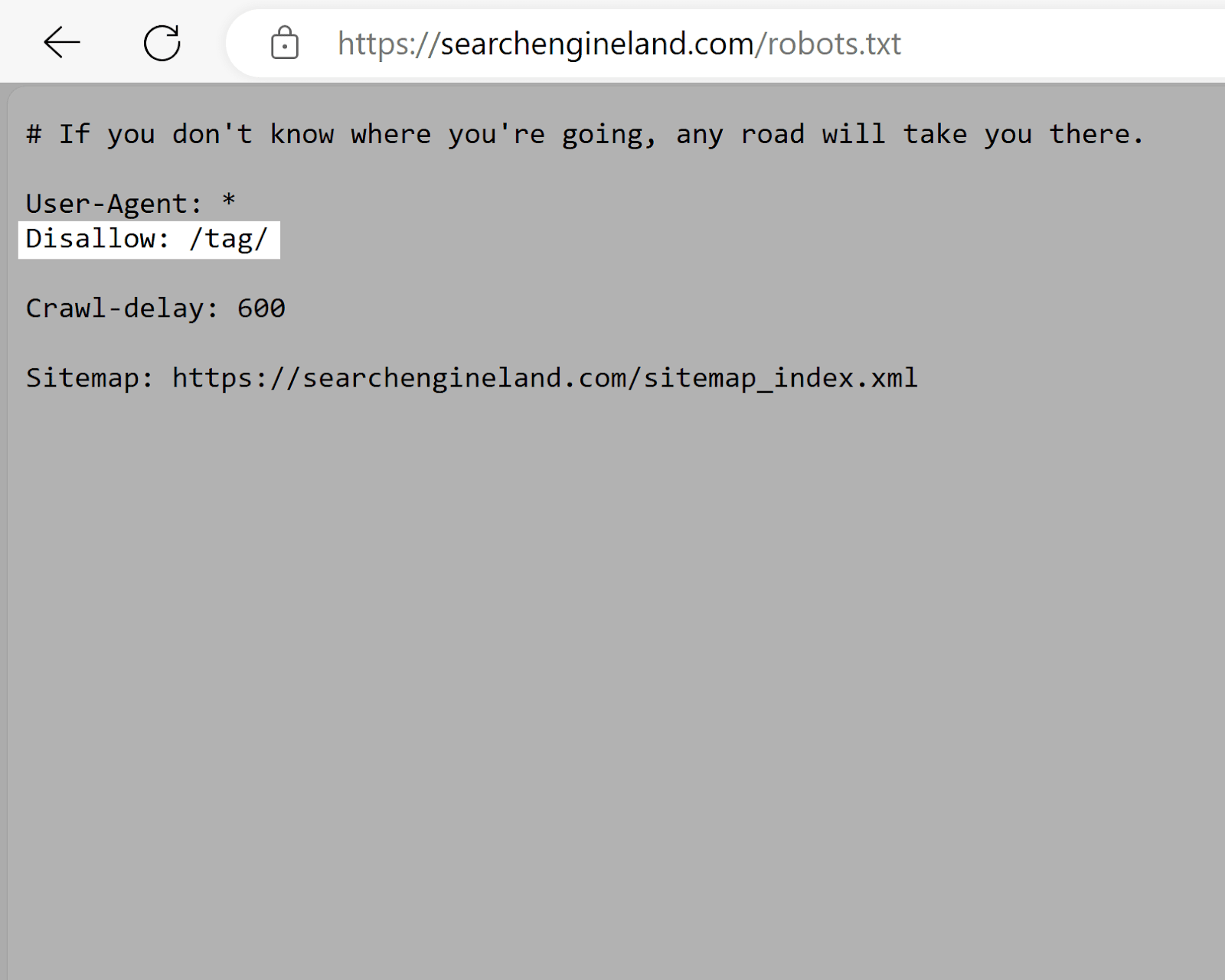

Search Engine Land

Search Engine Land’s robots.txt file makes use of the disallow tag to discourage the indexing of “/tag/” listing pages. Which are inclined to have low search engine optimization worth in comparison with precise content material pages. And might trigger duplicate content material points.

This encourages search engines like google to prioritize crawling higher-quality content material, maximizing the web site’s crawl price range.

Which is particularly necessary given what number of pages Search Engine Land has.

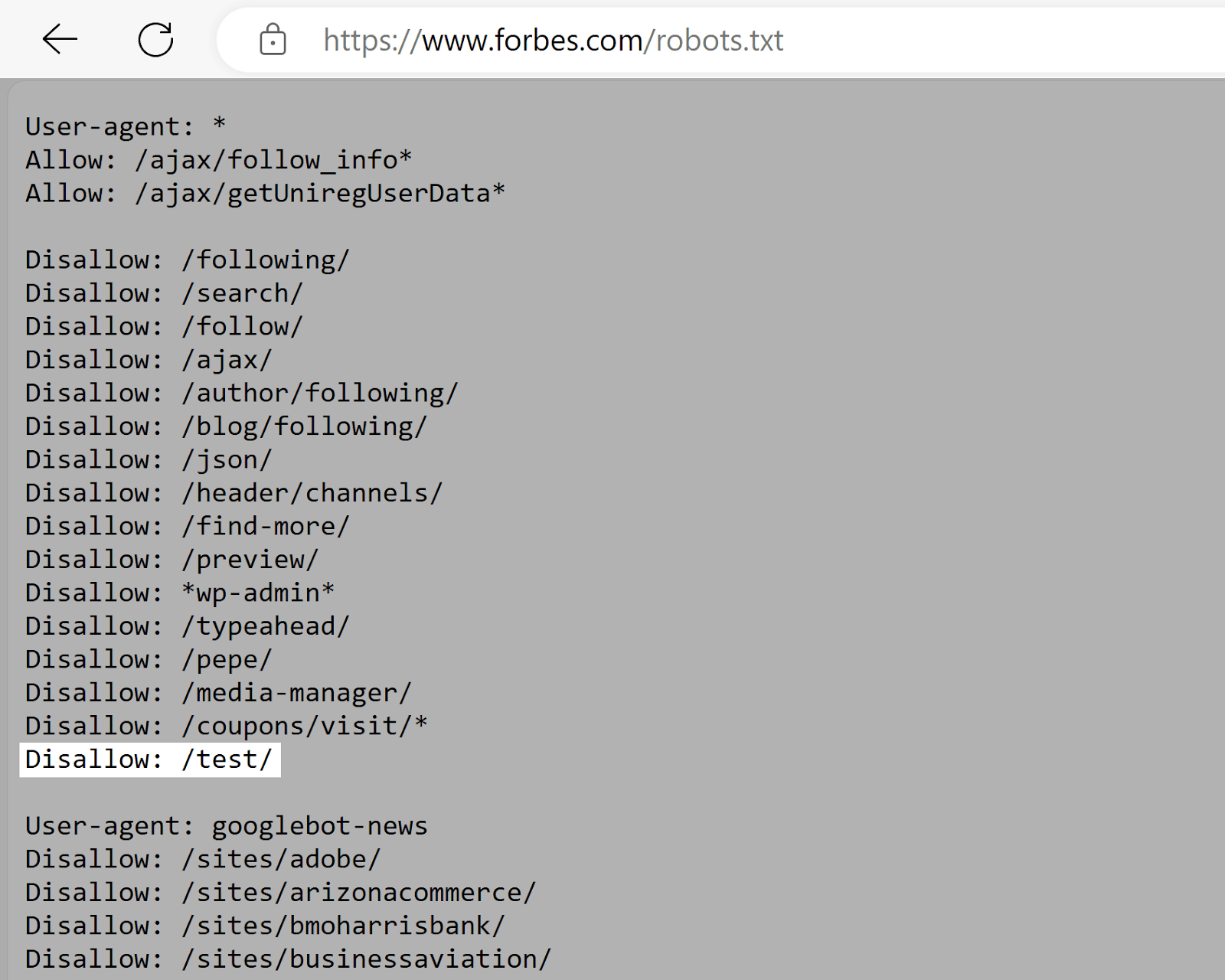

Forbes

Forbes’s robots.txt file instructs Google to keep away from the “/check/” listing. Which probably accommodates testing or staging environments.

This prevents unfinished or delicate content material from being listed (assuming it isn’t linked to elsewhere.)

Explaining Robots.txt Syntax

A robots.txt file is made up of:

- A number of blocks of “directives” (guidelines)

- Every with a specified “user-agent” (search engine bot)

- And an “permit” or “disallow” instruction

A easy block can seem like this:

Consumer-agent: Googlebot

Disallow: /not-for-google

Consumer-agent: DuckDuckBot

Disallow: /not-for-duckduckgo

Sitemap: https://www.yourwebsite.com/sitemap.xml

The Consumer-Agent Directive

The primary line of each directive block is the user-agent, which identifies the crawler.

If you wish to inform Googlebot to not crawl your WordPress admin web page, for instance, your directive will begin with:

Consumer-agent: Googlebot

Disallow: /wp-admin/

When a number of directives are current, the bot might select probably the most particular block of directives obtainable.

Let’s say you may have three units of directives: one for *, one for Googlebot, and one for Googlebot-Picture.

If the Googlebot-Information consumer agent crawls your web site, it would observe the Googlebot directives.

However, the Googlebot-Picture consumer agent will observe the extra particular Googlebot-Picture directives.

The Disallow Robots.txt Directive

The second line of a robots.txt directive is the “disallow” line.

You may have a number of disallow directives that specify which elements of your web site the crawler can’t entry.

An empty disallow line means you’re not disallowing something—a crawler can entry all sections of your web site.

For instance, for those who needed to permit all search engines like google to crawl your total web site, your block would seem like this:

Consumer-agent: *

Permit: /

For those who needed to dam all search engines like google from crawling your web site, your block would seem like this:

Consumer-agent: *

Disallow: /

The Permit Directive

The “permit” directive permits search engines like google to crawl a subdirectory or particular web page, even in an in any other case disallowed listing.

For instance, if you wish to forestall Googlebot from accessing each submit in your weblog aside from one, your directive would possibly seem like this:

Consumer-agent: Googlebot

Disallow: /weblog

Permit: /weblog/example-post

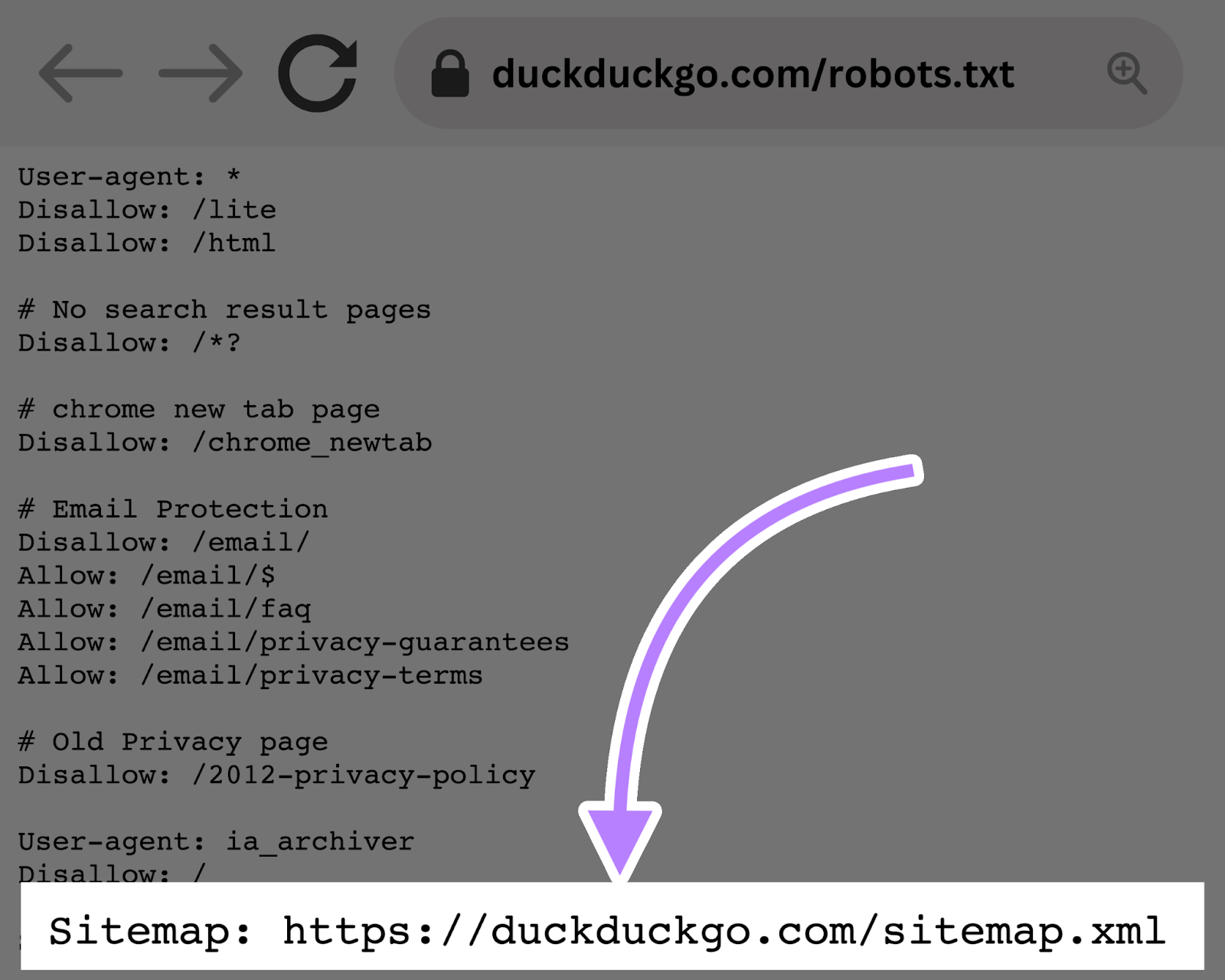

The Sitemap Directive

The Sitemap directive tells search engines like google—particularly Bing, Yandex, and Google—the place to seek out your XML sitemap.

Sitemaps usually embody the pages you need search engines like google to crawl and index.

This directive lives on the high or backside of a robots.txt file and appears like this:

Including a Sitemap directive to your robots.txt file is a fast different. However you’ll be able to (and will) additionally submit your XML sitemap to every search engine utilizing their webmaster instruments.

Search engines like google and yahoo will crawl your web site finally, however submitting a sitemap accelerates the crawling course of.

The Crawl-Delay Directive

The “crawl-delay” directive instructs crawlers to delay their crawl charges. To keep away from overtaxing a server (i.e., slowing down your web site).

Google now not helps the crawl-delay directive. And if you wish to set your crawl charge for Googlebot, you’ll must do it in Search Console.

However Bing and Yandex do help the crawl-delay directive. Right here’s the best way to use it.

Let’s say you need a crawler to attend 10 seconds after every crawl motion. You’ll set the delay to 10 like so:

Consumer-agent: *

Crawl-delay: 10

Additional studying: 15 Crawlability Issues & How one can Repair Them

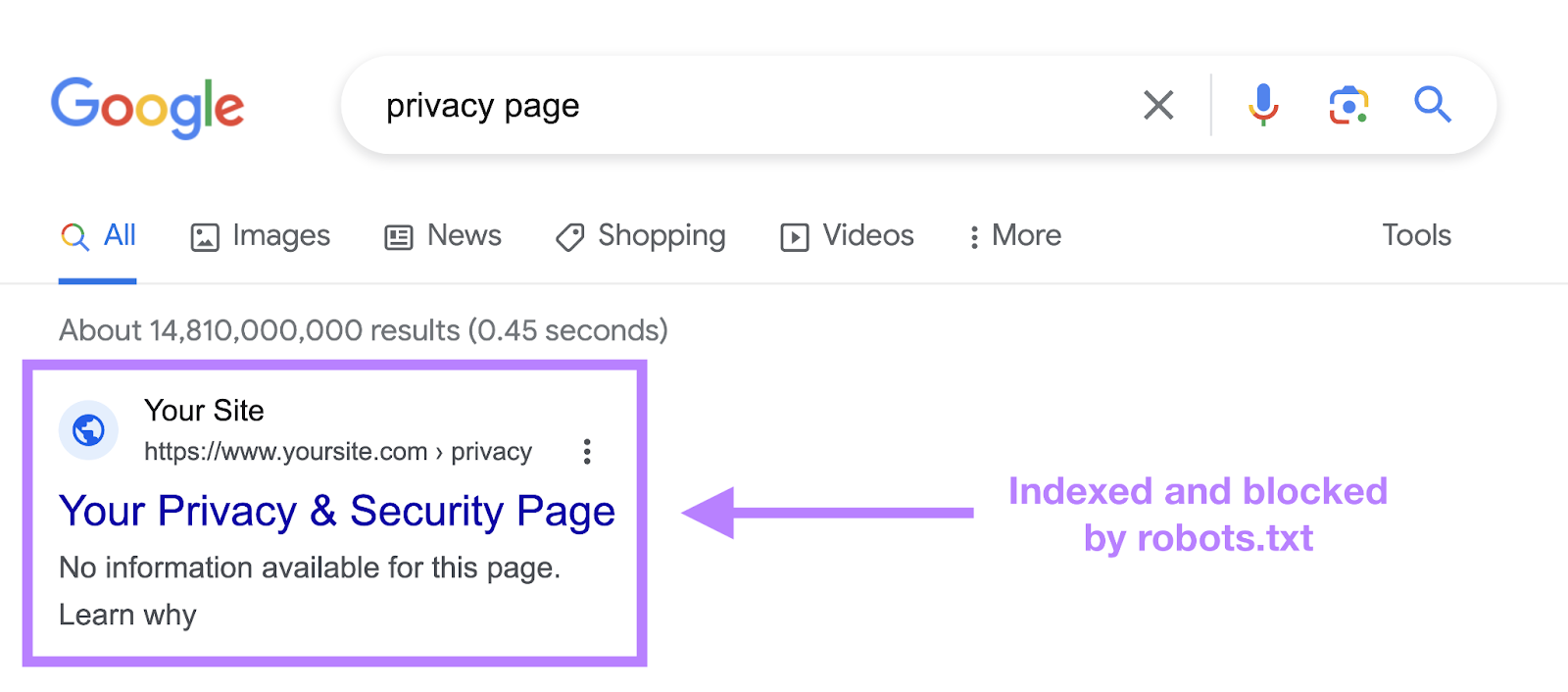

The Noindex Directive

A robots.txt file tells a bot what it ought to or shouldn’t crawl. However it may possibly’t inform a search engine which URLs to not index and serve in search outcomes.

Utilizing the noindex tag in your robots.txt file might block a bot from realizing what’s in your web page. However the web page can nonetheless present up in search outcomes. Albeit with no data.

Like this:

Google by no means formally supported this directive. And on September 1, 2019, Google even introduced that they certainly don’t help the noindex directive in robots.txt.

If you wish to reliably exclude a web page or file from showing in search outcomes, keep away from this directive altogether and use a meta robots noindex tag as an alternative.

How one can Create a Robots.txt File

Use a robots.txt generator device or create one your self.

Right here’s the best way to create one from scratch:

1. Create a File and Identify It Robots.txt

Begin by opening a .txt doc inside a textual content editor or net browser.

Subsequent, title the doc “robots.txt.”

You’re now prepared to begin typing directives.

2. Add Directives to the Robots.txt File

A robots.txt file consists of a number of teams of directives. And every group consists of a number of traces of directions.

Every group begins with a user-agent and has the next data:

- Who the group applies to (the user-agent)

- Which directories (pages) or recordsdata the agent ought to entry

- Which directories (pages) or recordsdata the agent shouldn’t entry

- A sitemap (non-obligatory) to inform search engines like google which pages and recordsdata you deem necessary

Crawlers ignore traces that don’t match these directives.

Let’s say you don’t need Google crawling your “/shoppers/” listing as a result of it’s only for inner use.

The primary group would look one thing like this:

Consumer-agent: Googlebot

Disallow: /shoppers/

Further directions may be added in a separate line beneath, like this:

Consumer-agent: Googlebot

Disallow: /shoppers/

Disallow: /not-for-google

When you’re accomplished with Google’s particular directions, hit enter twice to create a brand new group of directives.

Let’s make this one for all search engines like google and forestall them from crawling your “/archive/” and “/help/” directories as a result of they’re for inner use solely.

It could seem like this:

Consumer-agent: Googlebot

Disallow: /shoppers/

Disallow: /not-for-google

Consumer-agent: *

Disallow: /archive/

Disallow: /help/

When you’re completed, add your sitemap.

Your completed robots.txt file would look one thing like this:

Consumer-agent: Googlebot

Disallow: /shoppers/

Disallow: /not-for-google

Consumer-agent: *

Disallow: /archive/

Disallow: /help/

Sitemap: https://www.yourwebsite.com/sitemap.xml

Then, save your robots.txt file. And do not forget that it should be named “robots.txt.”

3. Add the Robots.txt File

After you’ve saved the robots.txt file to your pc, add it to your web site and make it obtainable for search engines like google to crawl.

Sadly, there’s no common device for this step.

Importing the robots.txt file relies on your web site’s file construction and hosting.

Search on-line or attain out to your internet hosting supplier for assistance on importing your robots.txt file.

For instance, you’ll be able to seek for “add robots.txt file to WordPress.”

Beneath are some articles explaining the best way to add your robots.txt file in the preferred platforms:

After importing the file, examine if anybody can see it and if Google can learn it.

Right here’s how.

4. Check Your Robots.txt File

First, check whether or not your robots.txt file is publicly accessible (i.e., if it was uploaded appropriately).

Open a non-public window in your browser and seek for your robots.txt file.

For instance, “https://semrush.com/robots.txt.”

For those who see your robots.txt file with the content material you added, you’re prepared to check the markup (HTML code).

Google gives two choices for testing robots.txt markup:

- The robots.txt report in Search Console

- Google’s open-source robots.txt library (superior)

As a result of the second choice is geared towards superior builders, let’s check with Search Console.

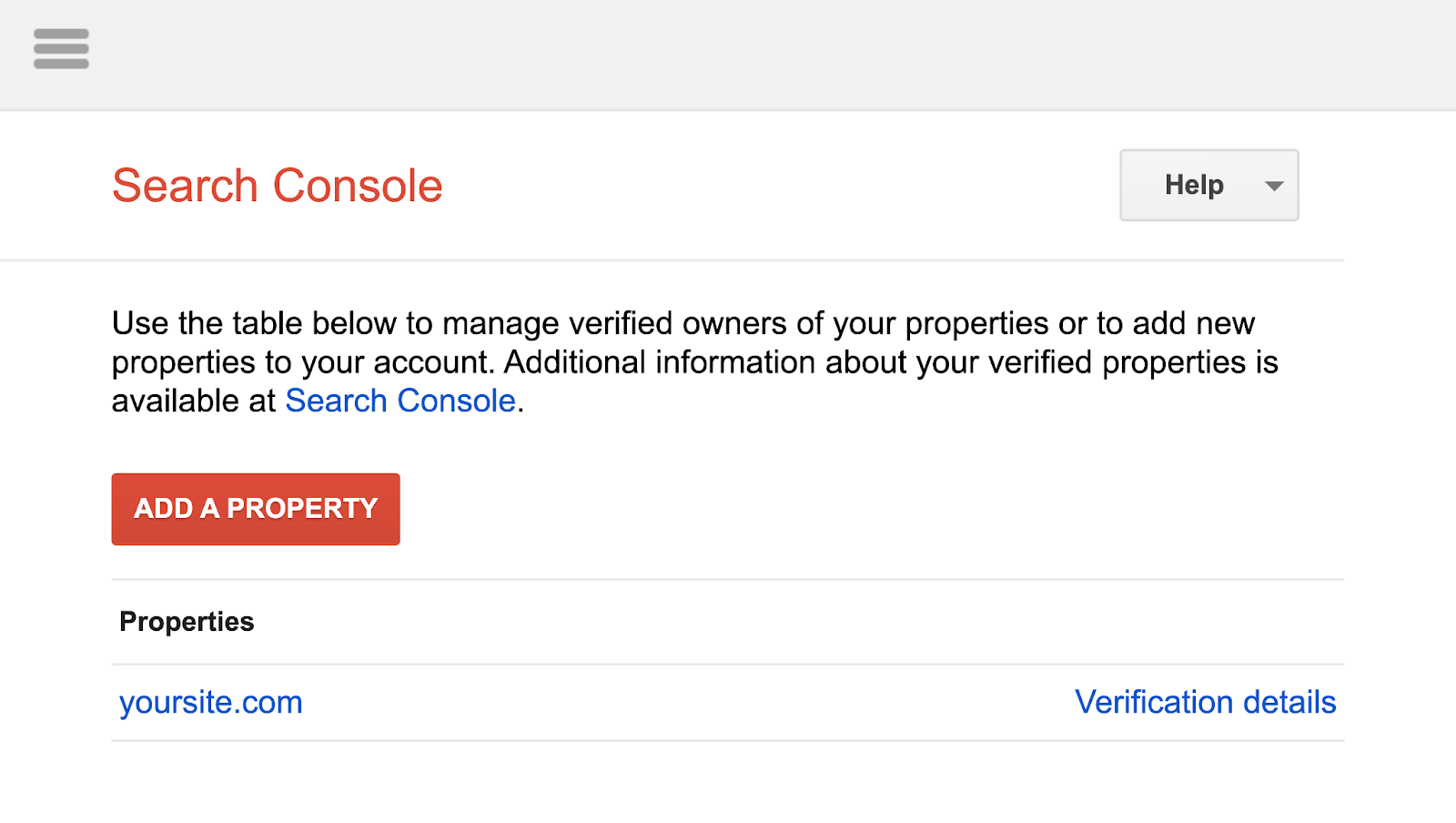

Go to the robots.txt report by clicking the hyperlink.

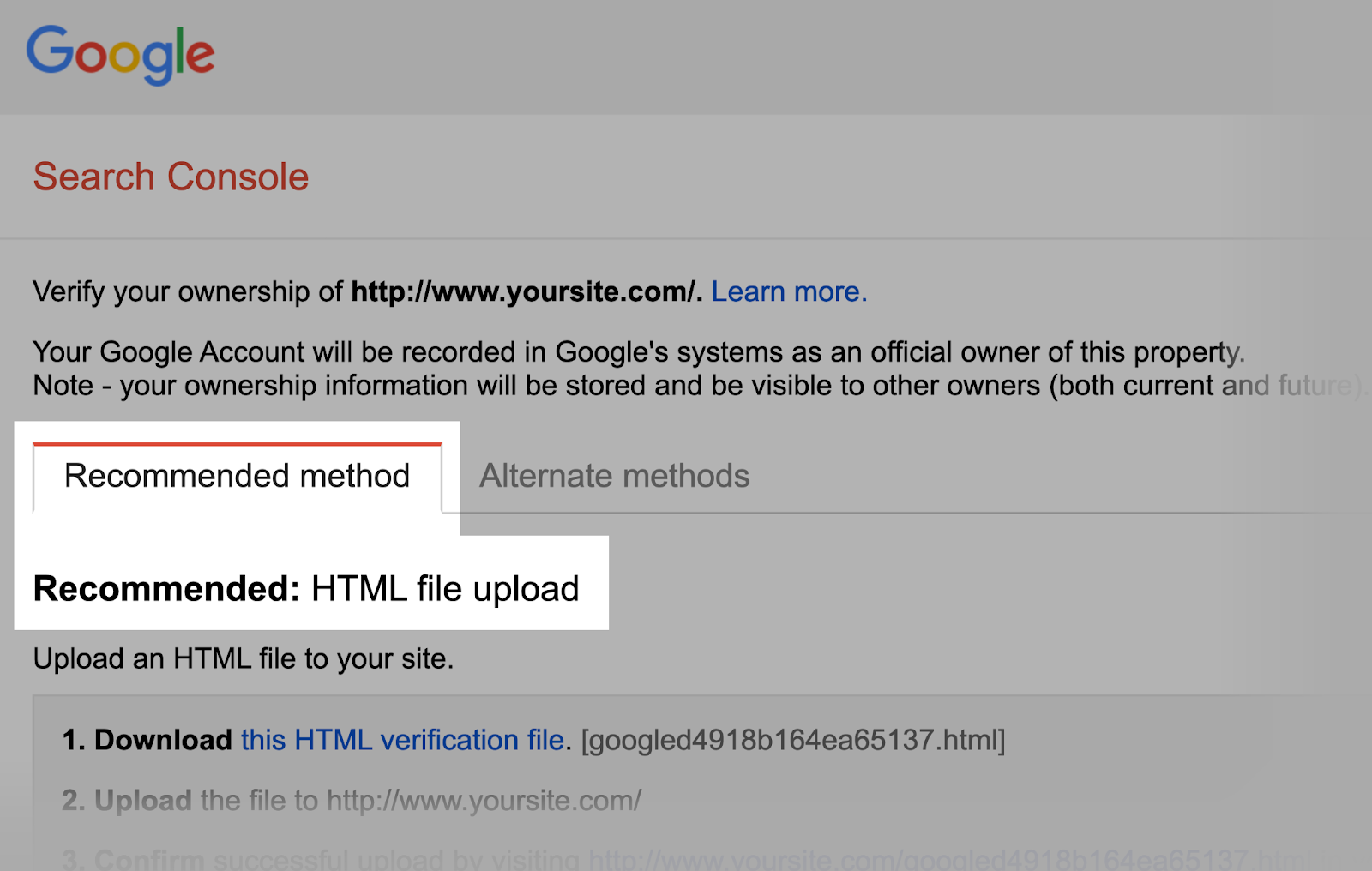

For those who haven’t linked your web site to your Google Search Console account, you’ll want so as to add a property first.

Then, confirm that you simply’re the positioning’s proprietor.

When you have present verified properties, choose one from the drop-down record.

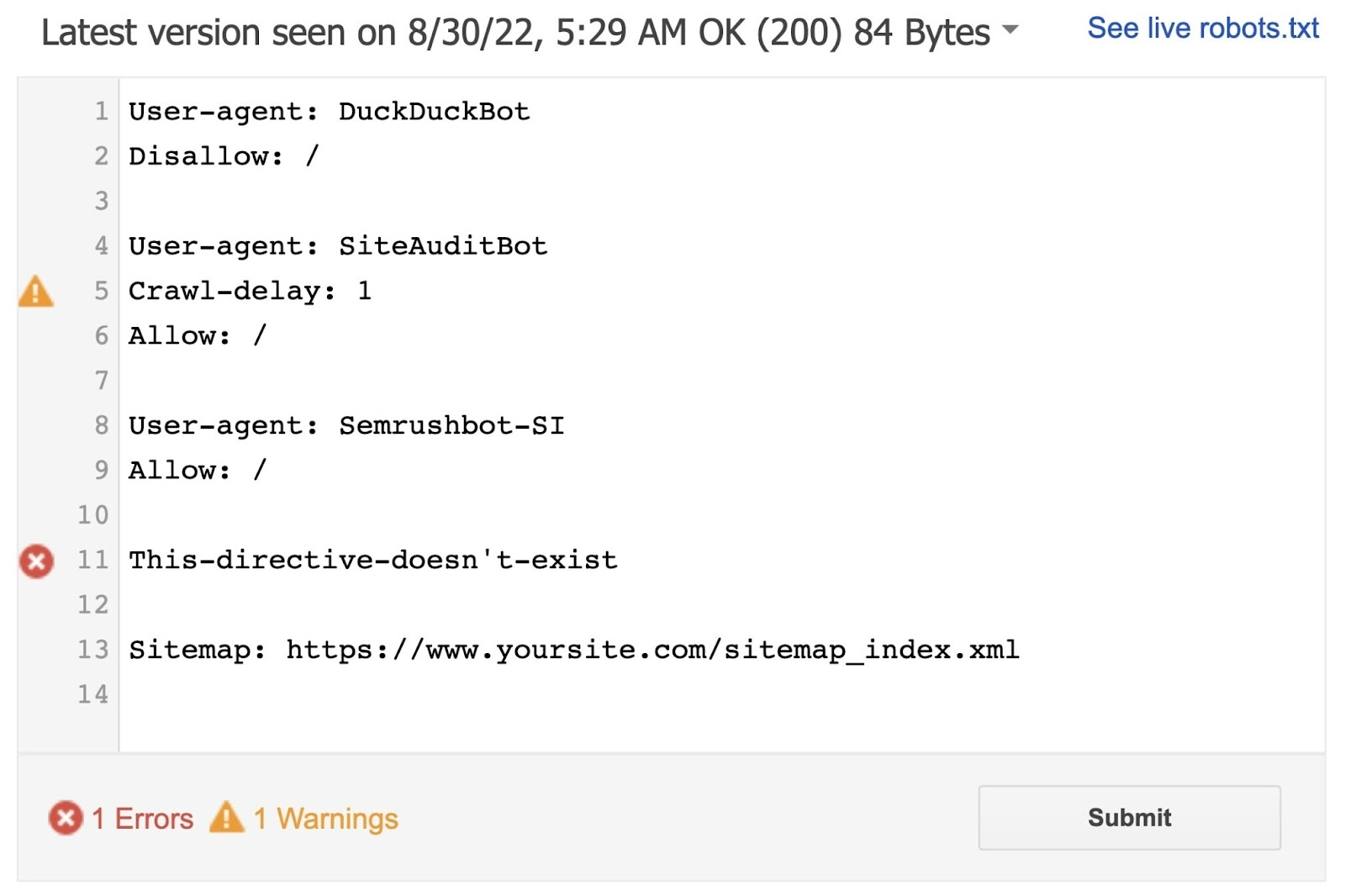

The device will determine syntax warnings and logic errors.

And show the whole variety of warnings and errors beneath the editor.

You may edit errors or warnings instantly on the web page and retest as you go.

Any modifications made on the web page aren’t saved to your web site. So, copy and paste the edited check copy into the robots.txt file in your web site.

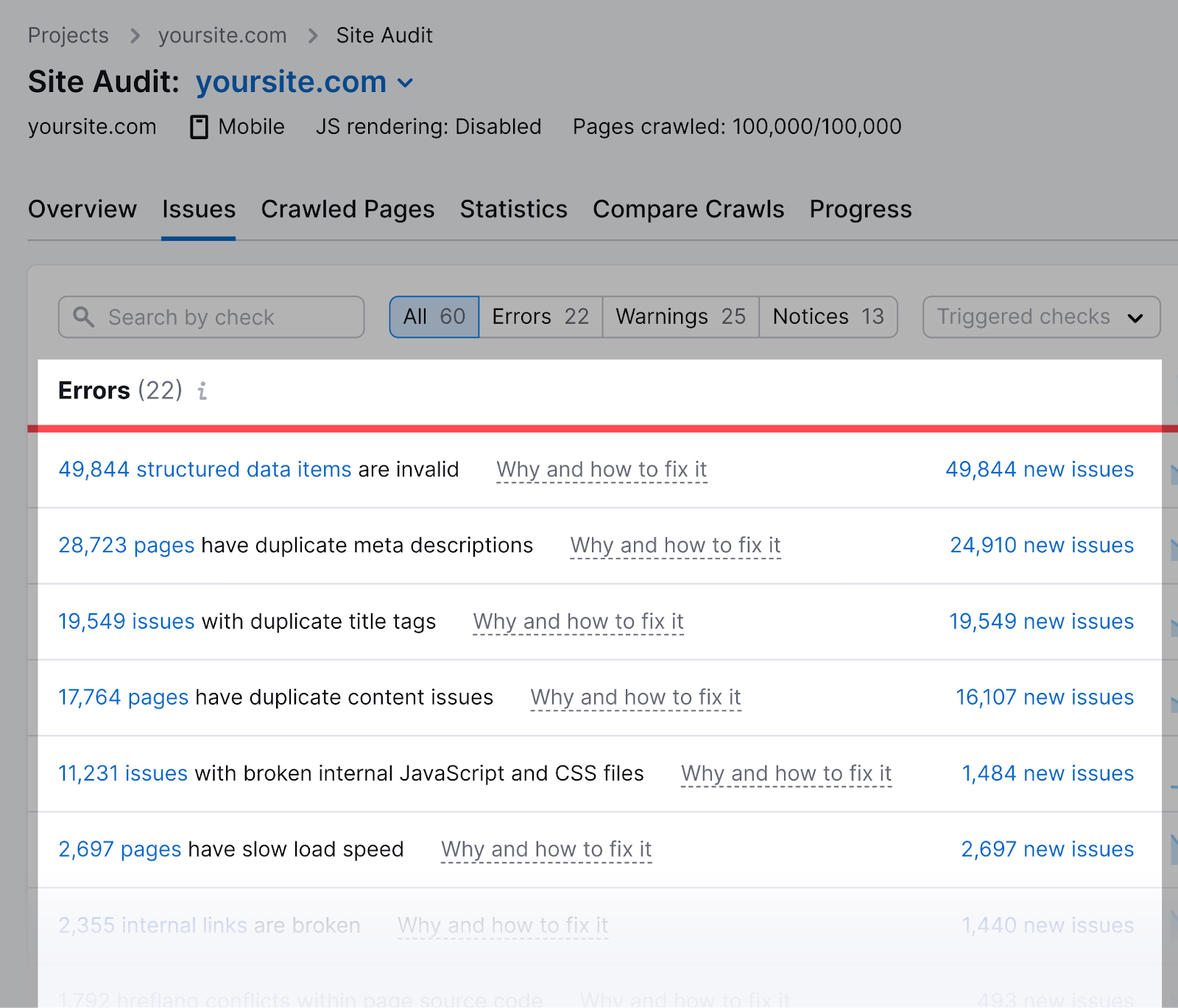

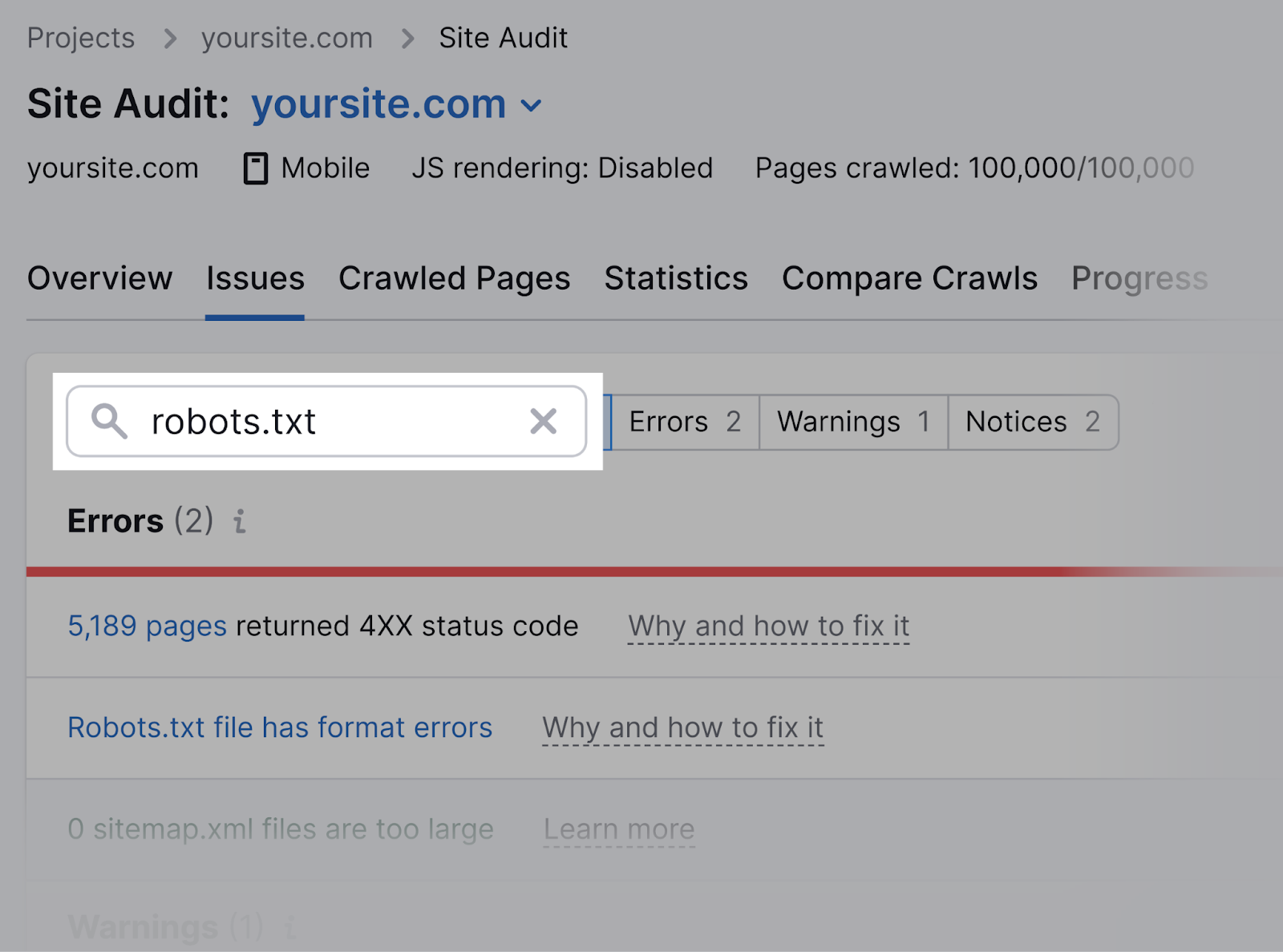

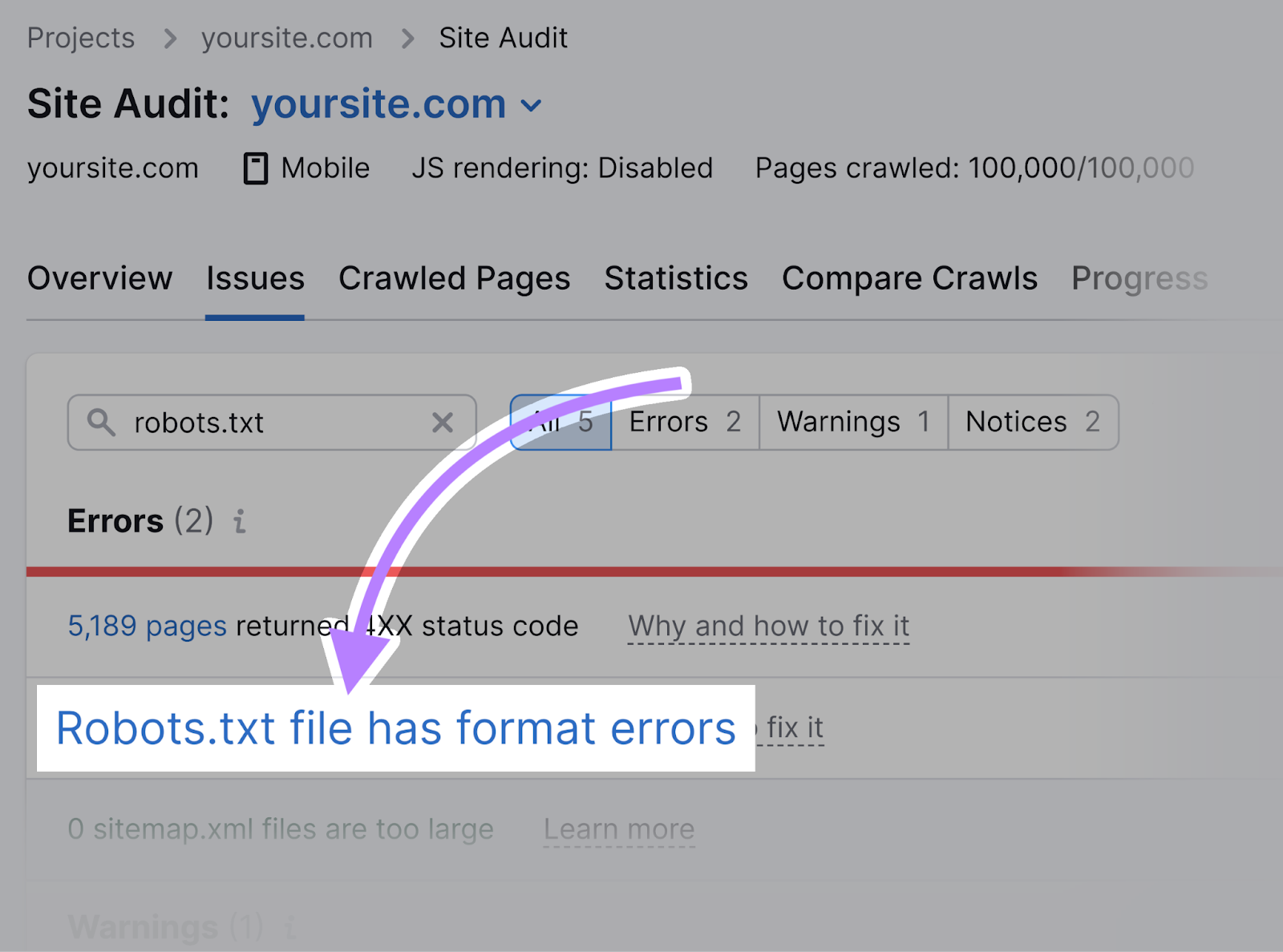

Semrush’s Website Audit device also can examine for points concerning your robots.txt file.

First, arrange a challenge within the device to audit your web site.

As soon as the audit is full, navigate to the “Points” tab and seek for “robots.txt.”

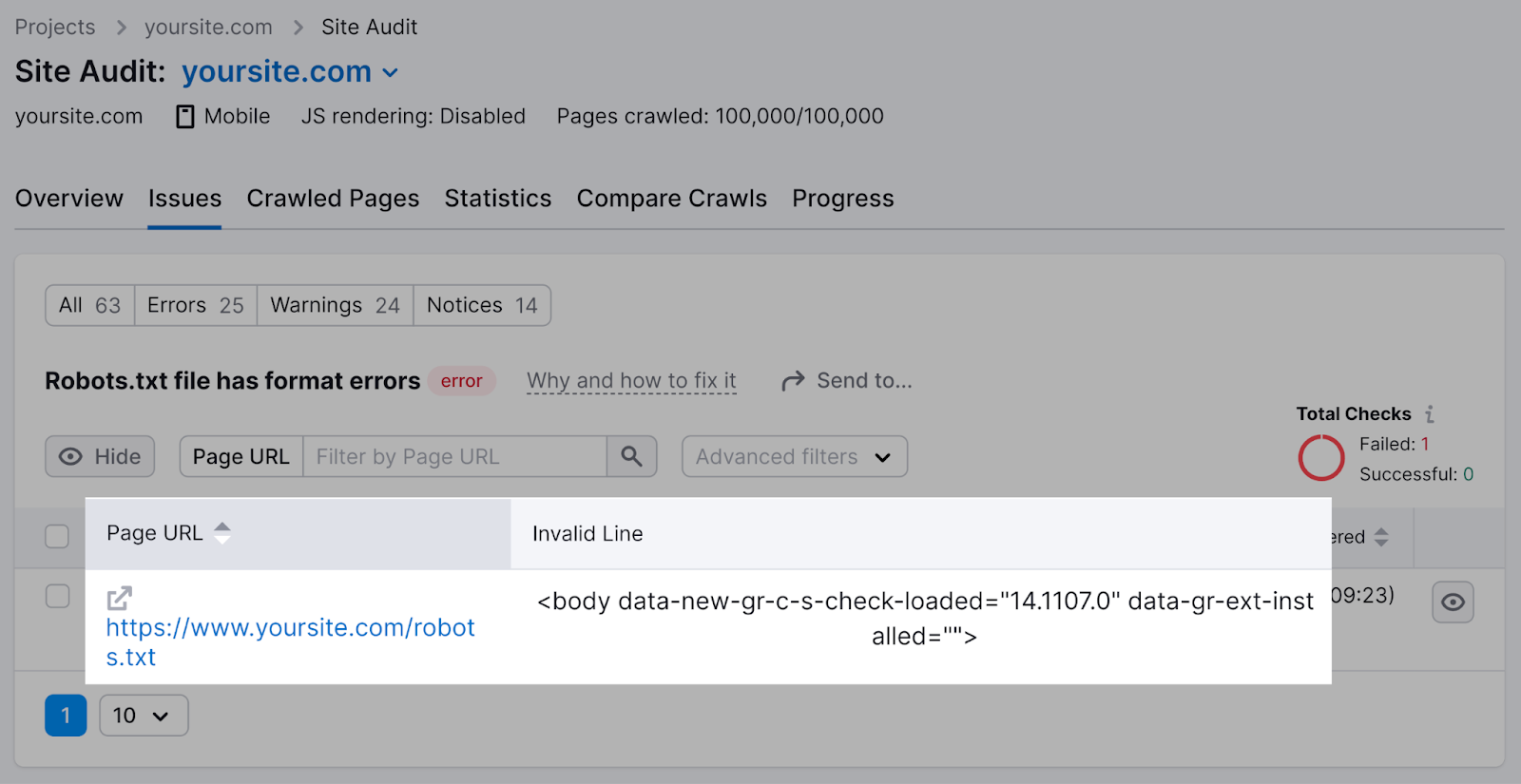

Click on on the “Robots.txt file has format errors” hyperlink if it seems that your file has format errors.

You’ll see a listing of invalid traces.

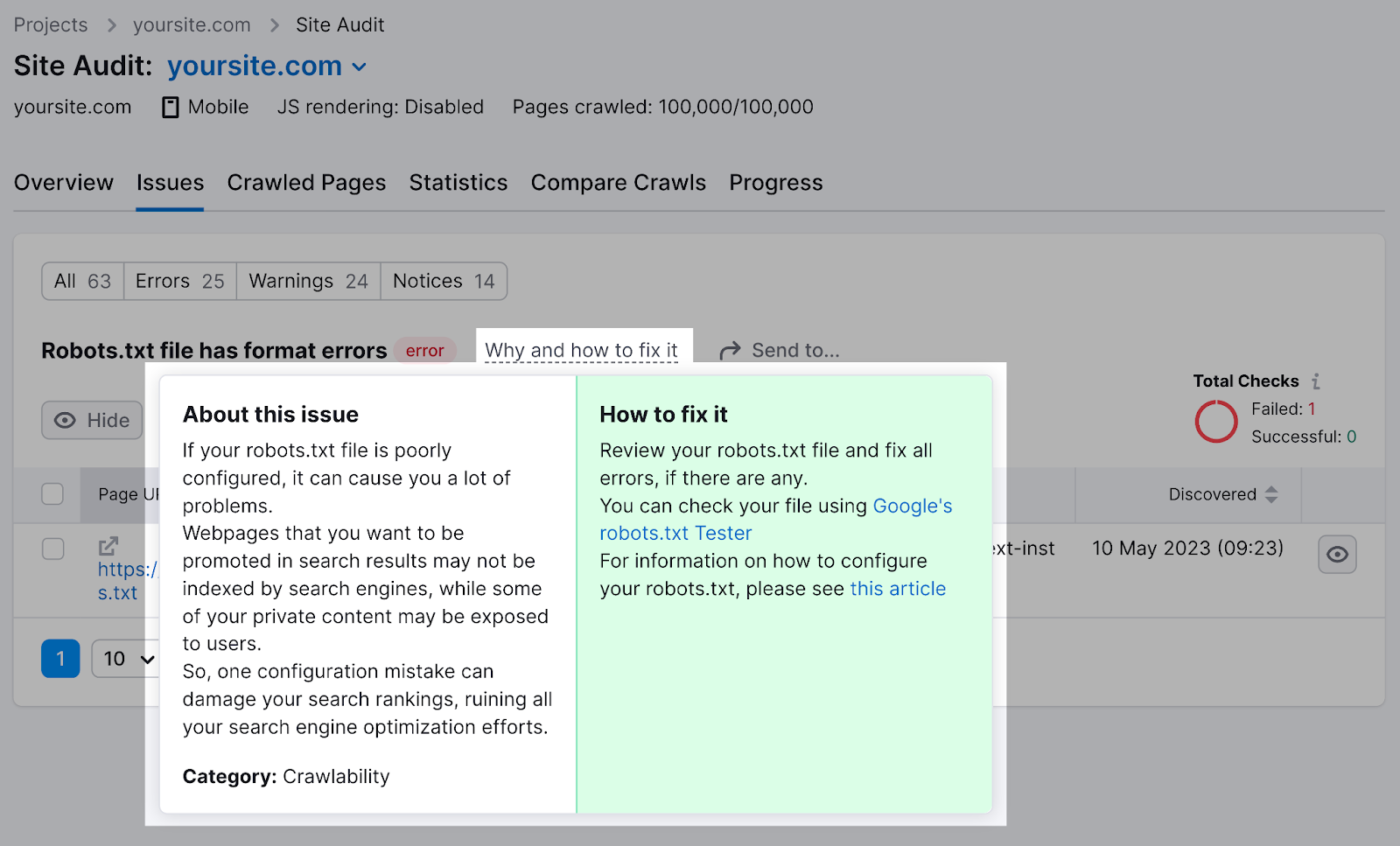

You may click on “Why and the best way to repair it” to get particular directions on the best way to repair the error.

Checking your robots.txt file for points is necessary, as even minor errors can negatively have an effect on your web site’s indexability.

Robots.txt Finest Practices

Use a New Line for Every Directive

Every directive ought to sit on a brand new line.

In any other case, search engines like google received’t have the ability to learn them. And your directions might be ignored.

Incorrect:

Consumer-agent: * Disallow: /admin/

Disallow: /listing/

Right:

Consumer-agent: *

Disallow: /admin/

Disallow: /listing/

Use Every Consumer-Agent Solely As soon as

Bots don’t thoughts for those who enter the identical user-agent a number of occasions.

However referencing it solely as soon as retains issues neat and easy. And reduces the possibilities of human error.

Complicated:

Consumer-agent: Googlebot

Disallow: /example-page

Consumer-agent: Googlebot

Disallow: /example-page-2

Discover how the Googlebot user-agent is listed twice?

Clear:

Consumer-agent: Googlebot

Disallow: /example-page

Disallow: /example-page-2

Within the first instance, Google would nonetheless observe the directions. However writing all directives underneath the identical user-agent is cleaner and helps you keep organized.

Use Wildcards to Make clear Instructions

You should use wildcards (*) to use a directive to all user-agents and match URL patterns.

To stop search engines like google from accessing URLs with parameters, you possibly can technically record them out one after the other.

However that’s inefficient. You may simplify your instructions with a wildcard.

Inefficient:

Consumer-agent: *

Disallow: /sneakers/vans?

Disallow: /sneakers/nike?

Disallow: /sneakers/adidas?

Environment friendly:

Consumer-agent: *

Disallow: /sneakers/*?

The above instance blocks all search engine bots from crawling all URLs underneath the “/sneakers/” subfolder with a query mark.

Use ‘$’ to Point out the Finish of a URL

Including the “$” signifies the top of a URL.

For instance, if you wish to block search engines like google from crawling all .jpg recordsdata in your web site, you’ll be able to record them individually.

However that might be inefficient.

Inefficient:

Consumer-agent: *

Disallow: /photo-a.jpg

Disallow: /photo-b.jpg

Disallow: /photo-c.jpg

As an alternative, add the “$” function:

Environment friendly:

Consumer-agent: *

Disallow: /*.jpg$

The “$” expression is a useful function in particular circumstances like above. However it can be harmful.

You may simply unblock belongings you didn’t imply to, so be prudent in its utility.

Crawlers ignore every little thing that begins with a hash (#).

So, builders usually use a hash so as to add a remark within the robots.txt file. It helps preserve the file organized and simple to learn.

So as to add a remark, start the road with a hash (#).

Like this:

Consumer-agent: *

#Touchdown Pages

Disallow: /touchdown/

Disallow: /lp/

#Recordsdata

Disallow: /recordsdata/

Disallow: /private-files/

#Web sites

Permit: /web site/*

Disallow: /web site/search/*

Builders often embody humorous messages in robots.txt recordsdata as a result of they know customers not often see them.

For instance, YouTube’s robots.txt file reads: “Created within the distant future (the yr 2000) after the robotic rebellion of the mid 90’s which worn out all people.”

And Nike’s robots.txt reads “simply crawl it” (a nod to its “simply do it” tagline) and its brand.

Use Separate Robots.txt Recordsdata for Totally different Subdomains

Robots.txt recordsdata management crawling conduct solely on the subdomain through which they’re hosted.

To regulate crawling on a distinct subdomain, you’ll want a separate robots.txt file.

So, in case your fundamental web site lives on “area.com” and your weblog lives on the subdomain “weblog.area.com,” you’d want two robots.txt recordsdata. One for the principle area’s root listing and the opposite to your weblog’s root listing.

5 Robots.txt Errors to Keep away from

When creating your robots.txt file, listed here are some widespread errors it’s best to be careful for.

1. Not Together with Robots.txt within the Root Listing

Your robots.txt file ought to at all times be situated in your web site’s root listing. In order that search engine crawlers can discover your file simply.

For instance, in case your web site is “www.instance.com,” your robots.txt file must be situated at “www.instance.com/robots.txt.”

For those who put your robots.txt file in a subdirectory, corresponding to “www.instance.com/contact/robots.txt,” search engine crawlers might not discover it. And should assume that you have not set any crawling directions to your web site.

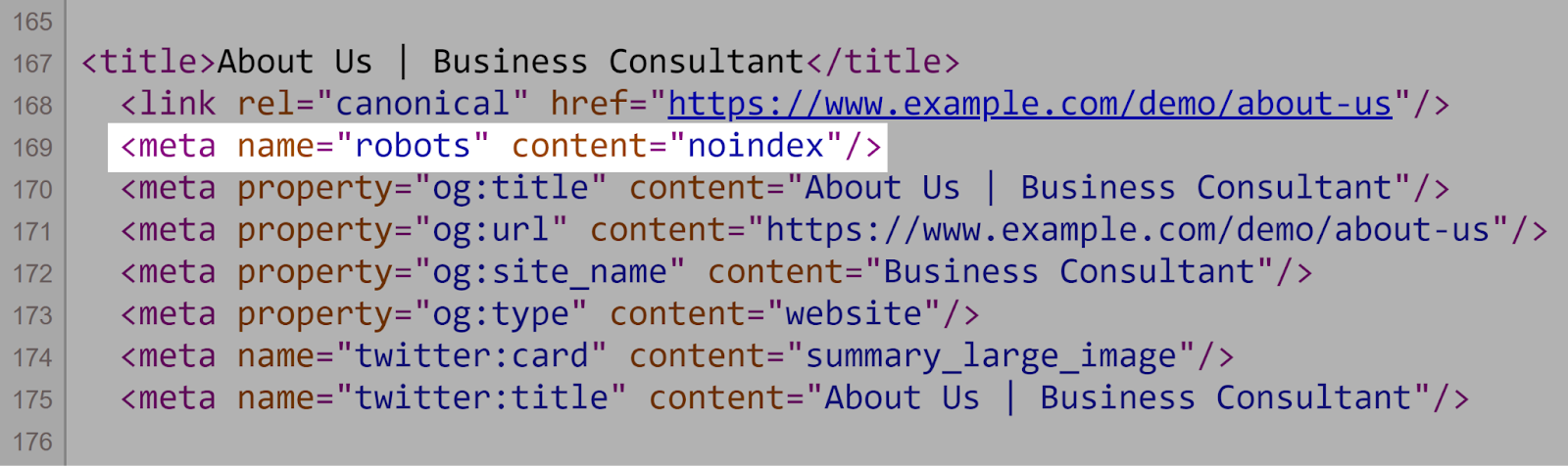

2. Utilizing Noindex Directions in Robots.txt

Robots.txt ought to give attention to crawling directives, not indexing ones. Once more, Google doesn’t help the noindex rule within the robots.txt file.

As an alternative, use meta robots tags (e.g., <meta title=”robots” content material=”noindex”>) on particular person pages to regulate indexing.

Like so:

3. Blocking JavaScript and CSS

Watch out to not block search engines like google from accessing JavaScript and CSS recordsdata by way of robots.txt. Until you may have a particular purpose for doing so, corresponding to proscribing entry to delicate knowledge.

Blocking search engines like google from crawling these recordsdata utilizing your robots.txt could make it tougher for these search engines like google to know your web site’s construction and content material.

Which may doubtlessly hurt your search rankings. As a result of search engines like google might not have the ability to absolutely render your pages.

Additional studying: JavaScript search engine optimization: How one can Optimize JS for Search Engines

4. Not Blocking Entry to Your Unfinished Website or Pages

When creating a brand new model of your web site, it’s best to use robots.txt to dam search engines like google from discovering it prematurely. To stop unfinished content material from being proven in search outcomes.

Search engines like google and yahoo crawling and indexing an in-development web page can result in poor consumer expertise. And potential duplicate content material points.

By blocking entry to your unfinished web site with robots.txt, you make sure that solely your web site’s closing, polished model seems in search outcomes.

5. Utilizing Absolute URLs

Use relative URLs in your robots.txt file to make it simpler to handle and preserve.

Absolute URLs are pointless and might introduce errors in case your area modifications.

❌ Right here’s an instance of a robots.txt file with absolute URLs:

Consumer-agent: *

Disallow: https://www.instance.com/private-directory/

Disallow: https://www.instance.com/temp/

Permit: https://www.instance.com/important-directory/

✅ And one with out:

Consumer-agent: *

Disallow: /private-directory/

Disallow: /temp/

Permit: /important-directory/

Preserve Your Robots.txt File Error-Free

Now that you simply perceive how robots.txt recordsdata work, it is necessary to optimize your individual robots.txt file. As a result of even small errors can negatively influence your web site’s capability to be correctly crawled, listed, and displayed in search outcomes.

Semrush’s Website Audit device makes it simple to investigate your robots.txt file for errors and get actionable suggestions to repair any points.