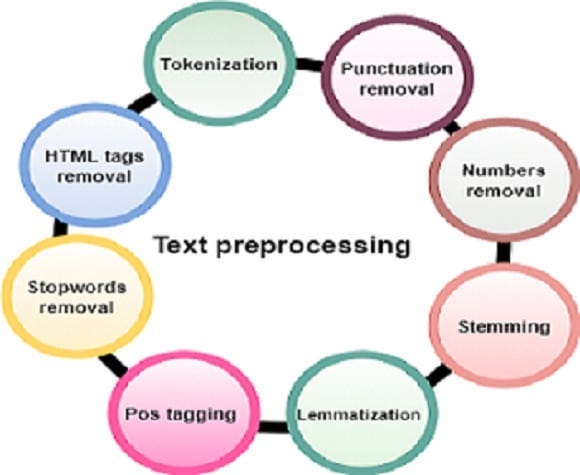

Natural Language Processing (NLP) covers a range of techniques for handling and analyzing human language data. In this blog, we will explore three essential techniques: tokenization, stemming, and lemmatization. These techniques are foundational for many NLP applications, such as text preprocessing, sentiment analysis, and machine translation. Let’s delve into each technique, understand its purpose and its pros and cons, and see how it can be implemented using Python’s NLTK library.

What is Tokenization?

Tokenization is the process of splitting text into individual units, called tokens. These tokens can be words, sentences, or subwords. Tokenization breaks complex text down into manageable pieces for further processing and analysis.
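To make the idea concrete, here is a minimal sketch of word and sentence tokenization using only Python’s re module. The function names simple_word_tokenize and simple_sent_tokenize and both regular expressions are illustrative assumptions, not NLTK’s implementation, and they ignore abbreviations, contractions, and other edge cases that a real tokenizer handles.

```python
import re


def simple_word_tokenize(text):
    """Split text into word tokens and single punctuation tokens."""
    # \w+ matches a run of letters, digits, or underscores;
    # [^\w\s] matches one character that is neither a word character nor whitespace.
    return re.findall(r"\w+|[^\w\s]", text)


def simple_sent_tokenize(text):
    """Split text after '.', '!' or '?' when whitespace follows."""
    return [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]


print(simple_word_tokenize("Hello! how are you?"))
# ['Hello', '!', 'how', 'are', 'you', '?']
print(simple_sent_tokenize("Tokens can be words. They can also be sentences!"))
# ['Tokens can be words.', 'They can also be sentences!']
```

The same input can be tokenized at different granularities, which is why the token type (word, sentence, or subword) is chosen to suit the downstream task.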

Why is Tokenization Used?

Tokenization is the first step in text preprocessing. It transforms raw text into a format that can be analyzed. This process is essential for tasks such as text mining, information retrieval, and text classification.
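As a small illustration of why tokens are easier to analyze than raw text, the sketch below turns a token list into word counts, a simple representation often used as input for text classification. The helper name bag_of_words and the sample tokens are assumptions made for this example.

```python
from collections import Counter


def bag_of_words(tokens):
    """Count how often each lowercased token occurs."""
    return Counter(token.lower() for token in tokens)


# Tokens as a word tokenizer might return them for "The cat sat on the mat."
tokens = ["The", "cat", "sat", "on", "the", "mat", "."]
counts = bag_of_words(tokens)

print(counts["the"])  # 2
print(counts["cat"])  # 1
```

Once text is a list of tokens, counting, filtering, and lookup become ordinary list and dictionary operations.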

Pros and Cons of Tokenization

Pros:

- Simplifies text processing by breaking text into smaller units.

- Facilitates further text analysis and NLP tasks.

Cons:

- Can be complex for languages without clear word boundaries.

- May not handle special characters and punctuation well.
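The punctuation problem is easy to reproduce. The following sketch compares two naive strategies on the same sentence; the sample text and the regular expression are assumptions chosen to expose the edge cases, and neither strategy is what NLTK does internally.

```python
import re

text = "Don't panic, it's 3.5% cheaper!"

# Strategy 1: split on whitespace. Punctuation stays glued to the words.
whitespace_tokens = text.split()

# Strategy 2: separate every punctuation mark. Contractions and decimals get broken apart.
regex_tokens = re.findall(r"\w+|[^\w\s]", text)

print(whitespace_tokens)
# ["Don't", 'panic,', "it's", '3.5%', 'cheaper!']
print(regex_tokens)
# ['Don', "'", 't', 'panic', ',', 'it', "'", 's', '3', '.', '5', '%', 'cheaper', '!']
```

Neither result is ideal: the first keeps ‘panic,’ and ‘cheaper!’ as single tokens, while the second splits ‘3.5’ into three pieces. Dedicated tokenizers add rules for such cases.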

Code Implementation

Here is an example of tokenization using the NLTK library:

# Install NLTK library

!pip install nltk

Explanation:

!pip install nltk: This command installs the NLTK library, a powerful toolkit for NLP in Python. The leading ! runs the command from a notebook cell; in a terminal, use pip install nltk.

# Sample text

tweet = "Sometimes to understand a word's meaning you need more than a definition. you need to see the word used in a sentence."

Explanation:

tweet: This is the sample text we will use for tokenization. It contains multiple sentences and words.

# Importing required modules

import nltk

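nltk.download('punkt')

Explanation:

- import nltk: This imports the NLTK library.

- nltk.download('punkt'): This downloads the ‘punkt’ tokenizer models, which are necessary for tokenization. Recent NLTK releases load the ‘punkt_tab’ resource instead, so if the tokenizers raise a LookupError, run nltk.download('punkt_tab') as well.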

from nltk.tokenize import word_tokenize, sent_tokenize

Explanation:

from nltk.tokenize import word_tokenize, sent_tokenize: This imports the word_tokenize and sent_tokenize functions from the NLTK library for word and sentence tokenization, respectively.

# Word Tokenization

text = "Hello! how are you?"

word_tok = word_tokenize(text)

print(word_tok)

Explanation:

- text: This is a simple sentence we will tokenize into words.

- word_tok = word_tokenize(text): This tokenizes the text into individual words and punctuation marks.

- print(word_tok): This prints the list of word tokens.

Output: ['Hello', '!', 'how', 'are', 'you', '?']

# Sentence Tokenization

sent_tok = sent_tokenize(tweet)

print(sent_tok)

Explanation:

- sent_tok = sent_tokenize(tweet): This tokenizes the tweet into individual sentences.

- print(sent_tok): This prints the list of sentence tokens.

Output: ["Sometimes to understand a word's meaning you need more than a definition.", 'you need to see the word used in a sentence.']

What is Stemming?

Stemming is the process of reducing a word to its base or root form. It involves removing suffixes and prefixes from words to derive the stem.

Why is Stemming Used?

Stemming helps normalize words to their root form, which is useful in text mining and search engines. It reduces inflectional forms and derivationally related forms of a word to a common base form.
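The sketch below shows why this normalization helps retrieval: terms that share a stem end up under the same index key, so a query for one form finds the others. The toy_stem function, its suffix list, and the vocabulary are assumptions for illustration only; real stemmers such as Porter apply far more careful rules.

```python
from collections import defaultdict


def toy_stem(word, suffixes=("ing", "ed", "s")):
    """Strip the first matching suffix, keeping at least three characters."""
    for suffix in suffixes:
        if word.endswith(suffix) and len(word) - len(suffix) >= 3:
            return word[: -len(suffix)]
    return word


vocabulary = ["connect", "connected", "connecting", "connects", "banana"]

# Group every term under its stem, like a tiny search index.
index = defaultdict(set)
for term in vocabulary:
    index[toy_stem(term)].add(term)

query = "connecting"
matches = sorted(index[toy_stem(query)])
print(matches)
# ['connect', 'connected', 'connecting', 'connects']
```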

Pros and Cons of Stemming

Pros:

- Reduces the complexity of text by normalizing words.

- Improves the performance of search engines and information retrieval systems.

Cons:

- Can lead to incorrect base forms (e.g., ‘running’ becomes ‘run’, but ‘flying’ becomes ‘fli’).

- Different stemming algorithms may produce different results.
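One way to see both drawbacks is to run the same words through several NLTK stemmers side by side. This is a sketch that assumes NLTK is installed; the word list is an arbitrary choice, and no stemmer data download is needed for these three classes.

```python
from nltk.stem import LancasterStemmer, PorterStemmer, SnowballStemmer

stemmers = {
    "porter": PorterStemmer(),
    "lancaster": LancasterStemmer(),
    "snowball": SnowballStemmer("english"),
}
words = ["running", "flying", "happiness"]

# results[word][stemmer_name] holds the stem each algorithm produces.
results = {
    word: {name: stemmer.stem(word) for name, stemmer in stemmers.items()}
    for word in words
}

for word, row in results.items():
    print(word, row)
```

Comparing the rows shows where a stem is not a dictionary word (Porter turns ‘flying’ into ‘fli’) and where the algorithms disagree with each other.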

Code Implementation

Let’s see how to perform stemming using different algorithms:

Porter Stemmer:

from nltk.stem import PorterStemmer

stemming = PorterStemmer()

word = 'danced'

print(stemming.stem(word))

Explanation:

- from nltk.stem import PorterStemmer: This imports the PorterStemmer class from NLTK.

- stemming = PorterStemmer(): This creates an instance of the PorterStemmer.

- word = 'danced': This is the word we want to stem.

- print(stemming.stem(word)): This prints the stemmed form of the word ‘danced’.

Output: danc

word = 'replacement'

print(stemming.stem(word))

Explanation:

- word = 'replacement': This is another word we want to stem.

- print(stemming.stem(word)): This prints the stemmed form of the word ‘replacement’.

Output: replac

word = 'happiness'

print(stemming.stem(word))

Explanation:

- word = 'happiness': This is another word we want to stem.

- print(stemming.stem(word)): This prints the stemmed form of the word ‘happiness’.

Output: happi

Lancaster Stemmer:

from nltk.stem import LancasterStemmer

stemming1 = LancasterStemmer()

word = 'happily'

print(stemming1.stem(word))

Explanation:

- from nltk.stem import LancasterStemmer: This imports the LancasterStemmer class from NLTK.

- stemming1 = LancasterStemmer(): This creates an instance of the LancasterStemmer.

- word = 'happily': This is the word we want to stem.

- print(stemming1.stem(word)): This prints the stemmed form of the word ‘happily’.

Output: happy

Regular Expression Stemmer:

from nltk.stem import RegexpStemmer

stemming2 = RegexpStemmer('ing$|s$|e$|able$|ness$', min=3)

word = 'raining'

print(stemming2.stem(word))

Explanation:

- from nltk.stem import RegexpStemmer: This imports the RegexpStemmer class from NLTK.

- stemming2 = RegexpStemmer('ing$|s$|e$|able$|ness$', min=3): This creates an instance of the RegexpStemmer with a regular expression that matches the suffixes to remove. Words shorter than min=3 characters are returned unchanged.

- word = 'raining': This is the word we want to stem.

- print(stemming2.stem(word)): This prints the stemmed form of the word ‘raining’.

Output: rain

word = 'flying'

print(stemming2.stem(word))

Explanation:

- word = 'flying': This is another word we want to stem.

- print(stemming2.stem(word)): This prints the stemmed form of the word ‘flying’.

Output: fly

word = 'happiness'

print(stemming2.stem(word))

Explanation:

- word = 'happiness': This is another word we want to stem.

- print(stemming2.stem(word)): This prints the stemmed form of the word ‘happiness’. The pattern removes the ‘ness’ suffix and leaves the rest untouched, so the trailing ‘i’ is not rewritten to ‘y’.

Output: happi
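The behavior of a regular expression stemmer can be approximated in a few lines of plain Python. This is a sketch under the assumption that the stemmer simply deletes whatever the pattern matches and skips words shorter than the minimum length; the function name regexp_stem and its parameter names are made up for this example.

```python
import re


def regexp_stem(word, pattern=r"ing$|s$|e$|able$|ness$", min_length=3):
    """Delete the suffix matched by `pattern`; leave short words unchanged."""
    if len(word) < min_length:
        return word
    return re.sub(pattern, "", word)


for word in ["raining", "flying", "happiness", "is"]:
    print(word, "->", regexp_stem(word))
# raining -> rain
# flying -> fly
# happiness -> happi
# is -> is
```

Because the rule is pure deletion, the result depends entirely on the suffix list, which makes this kind of stemmer easy to customize but also easy to over-apply.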

Snowball Stemmer:

nltk.download("snowball_data")

from nltk.stem import SnowballStemmer

stemming3 = SnowballStemmer("english")

word = 'happiness'

print(stemming3.stem(word))

Explanation:

- nltk.download("snowball_data"): This downloads the Snowball stemmer data.

- from nltk.stem import SnowballStemmer: This imports the SnowballStemmer class from NLTK.

- stemming3 = SnowballStemmer("english"): This creates an instance of the SnowballStemmer for the English language.

- word = 'happiness': This is the word we want to stem.

- print(stemming3.stem(word)): This prints the stemmed form of the word ‘happiness’.

Output: happi

stemming3 = SnowballStemmer("arabic")

word = 'تحلق'

print(stemming3.stem(word))

Explanation:

- stemming3 = SnowballStemmer("arabic"): This creates an instance of the SnowballStemmer for the Arabic language.

- word = 'تحلق': This is an Arabic word we want to stem.

- print(stemming3.stem(word)): This prints the stemmed form of the word ‘تحلق’.

Output: تحل

What is Lemmatization?

Lemmatization is the process of reducing a word to its base or dictionary form, known as a lemma. Unlike stemming, lemmatization considers the context and converts the word to its meaningful base form.
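The role of context can be sketched with a tiny lookup table keyed by word and part of speech. TOY_LEXICON and toy_lemmatize are hypothetical names, and the handful of entries stands in for a full dictionary such as WordNet; this is an illustration of the idea, not how NLTK implements it.

```python
# Hypothetical mini-lexicon mapping (word, part of speech) -> lemma.
TOY_LEXICON = {
    ("went", "v"): "go",
    ("going", "v"): "go",
    ("better", "a"): "good",
    ("meeting", "v"): "meet",
    ("meeting", "n"): "meeting",
    ("mice", "n"): "mouse",
}


def toy_lemmatize(word, pos="n"):
    """Return the lemma from the lexicon, or the word itself if it is unknown."""
    return TOY_LEXICON.get((word.lower(), pos), word)


print(toy_lemmatize("meeting", pos="v"))  # meet
print(toy_lemmatize("meeting", pos="n"))  # meeting
print(toy_lemmatize("went", pos="v"))     # go
print(toy_lemmatize("blorf", pos="n"))    # blorf (not in the lexicon)
```

The same surface form ‘meeting’ maps to different lemmas depending on its part of speech, and irregular forms such as ‘went’ resolve to a real word, which suffix stripping alone cannot do.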

Why is Lemmatization Used?

Lemmatization provides more accurate base forms than stemming. It is widely used in text analysis, chatbots, and NLP applications where understanding the context of words is essential.

Pros and Cons of Lemmatization

Pros:

- Produces more accurate base forms by considering the context.

- Useful for tasks requiring semantic understanding.

Cons:

- Requires more computational resources than stemming.

- Dependent on language-specific dictionaries.

Code Implementation

Here is how to perform lemmatization using the NLTK library:

# Download necessary data

nltk.download('wordnet')

Explanation:

nltk.download('wordnet'): This command downloads the WordNet corpus, which the WordNetLemmatizer uses to find the lemmas of words.

from nltk.stem import WordNetLemmatizer

lemmatizer = WordNetLemmatizer()

Explanation:

- from nltk.stem import WordNetLemmatizer: This imports the WordNetLemmatizer class from NLTK.

- lemmatizer = WordNetLemmatizer(): This creates an instance of the WordNetLemmatizer.

print(lemmatizer.lemmatize('going', pos='v'))

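Explanation:

lemmatizer.lemmatize('going', pos='v'): This lemmatizes the word ‘going’ with the part-of-speech (POS) tag ‘v’ (verb). Without the pos argument, lemmatize treats the word as a noun by default and would return ‘going’ unchanged.

Output: go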

# Lemmatizing a list of words with their respective POS tags

words = [("eating", 'v'), ("playing", 'v')]

for word, pos in words:
    print(lemmatizer.lemmatize(word, pos=pos))

Explanation:

- words = [("eating", 'v'), ("playing", 'v')]: This is a list of tuples where each tuple contains a word and its corresponding POS tag.

- for word, pos in words: This iterates through each tuple in the list.

- print(lemmatizer.lemmatize(word, pos=pos)): This prints the lemmatized form of each word based on its POS tag.

Outputs: eat, play
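In practice the POS tags usually come from a tagger rather than being typed by hand. Taggers such as nltk.pos_tag emit Penn Treebank tags (for example ‘VBG’), while the lemmatizer expects the single letters ‘n’, ‘v’, ‘a’, or ‘r’. The helper below is a sketch of one common way to bridge the two; the name penn_to_wordnet, the noun fallback, and the hand-written tags are assumptions for this example.

```python
def penn_to_wordnet(tag, default="n"):
    """Map a Penn Treebank tag such as 'VBG' to a WordNet POS letter."""
    prefix_map = {"JJ": "a", "VB": "v", "NN": "n", "RB": "r"}
    # Only the first two characters decide the coarse word class.
    return prefix_map.get(tag[:2], default)


# Tags written by hand here; a tagger would normally supply them.
tagged = [("eating", "VBG"), ("apples", "NNS"), ("quickly", "RB"), ("happier", "JJR")]
pairs = [(word, penn_to_wordnet(tag)) for word, tag in tagged]

print(pairs)
# [('eating', 'v'), ('apples', 'n'), ('quickly', 'r'), ('happier', 'a')]
```

Each resulting pair can be passed to lemmatizer.lemmatize(word, pos=pos) in the same way as the loop above.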

- Tokenization is used in text preprocessing, sentiment analysis, and language modeling.

- Stemming is useful for search engines, information retrieval, and text mining.

- Lemmatization is essential for chatbots, text classification, and semantic analysis.

Tokenization, stemming, and lemmatization are crucial techniques in NLP. They transform raw text into a format suitable for analysis and help in understanding the structure and meaning of the text. By applying these techniques, we can improve the performance of various NLP applications.

Feel free to experiment with the provided code snippets and explore these techniques further. Happy coding!