Language models (LMs) are a cornerstone of artificial intelligence research, focusing on the ability to understand and generate human language. Researchers aim to enhance these models to perform a range of complex tasks, including natural language processing, translation, and creative writing. This field examines how LMs learn, adapt, and scale their capabilities with increasing computational resources. Understanding these scaling behaviors is essential for predicting future capabilities and optimizing the resources required to train and deploy these models.

The primary challenge in language model research is understanding how model performance scales with the amount of computational power and data used during training. Traditional methods require extensive training across multiple scales, which is computationally expensive and time-consuming. This creates a significant barrier for the many researchers and engineers who need to understand these relationships to improve model development and application.

Existing research includes various frameworks and models for understanding language model performance. Notable among these are compute scaling laws, which analyze the relationship between computational resources and model capabilities. Tools like the Open LLM Leaderboard and LM Eval Harness, along with benchmarks like MMLU, ARC-C, and HellaSwag, are commonly used. Moreover, model families such as LLaMA, GPT-Neo, and BLOOM provide diverse examples of how scaling laws can be applied in practice. These frameworks and benchmarks help researchers evaluate and optimize language model performance across different computational scales and tasks.

Researchers from Stanford University, the University of Toronto, and the Vector Institute introduced observational scaling laws to improve predictions of language model performance. This method builds scaling laws from publicly available models, reducing the need for extensive training. By leveraging existing data from roughly 80 models, the researchers were able to build a generalized scaling law that accounts for variations in training compute efficiency. This approach offers a cost-effective and efficient way to predict model performance across different scales and capabilities, setting it apart from traditional scaling methods.

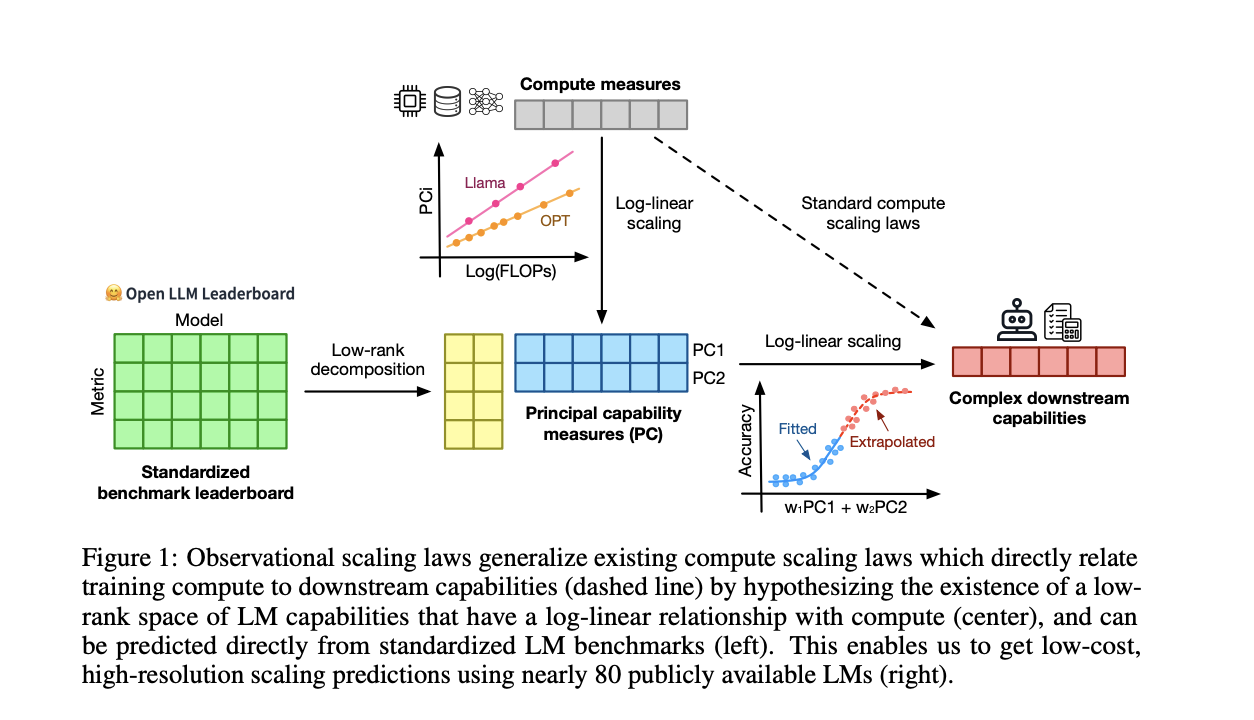

The methodology analyzes performance data from about 80 publicly available language models, drawing on the Open LLM Leaderboard and standardized benchmarks such as MMLU, ARC-C, and HellaSwag. The researchers hypothesized that model performance could be mapped to a low-dimensional capability space. They developed a generalized scaling law by examining variations in training compute efficiency among different model families. This process involved using principal component analysis (PCA) to identify key capability measures and fitting these measures to a log-linear relationship with compute resources, enabling accurate and high-resolution performance predictions.
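The two steps described above (PCA over benchmark scores, then a log-linear fit against compute) can be illustrated with a small sketch. This is not the researchers' code: the score matrix, compute values, and loadings below are synthetic, the function names `capability_components` and `fit_log_linear` are hypothetical, and the sketch assumes a single model family with one principal component, whereas the paper fits the relationship across many families.

```python
import numpy as np

def capability_components(scores, n_components=1):
    """Project a (models x benchmarks) score matrix onto its top principal components."""
    centered = scores - scores.mean(axis=0)
    # SVD of the centered matrix: rows of vt are the principal directions.
    _, singular_values, vt = np.linalg.svd(centered, full_matrices=False)
    explained = singular_values**2 / np.sum(singular_values**2)
    return centered @ vt[:n_components].T, explained[:n_components]

def fit_log_linear(compute_flops, capability):
    """Least-squares fit of capability = slope * log10(compute) + intercept."""
    slope, intercept = np.polyfit(np.log10(compute_flops), capability, deg=1)
    return slope, intercept

# Synthetic stand-in data (assumption): 12 models from one family, 3 benchmarks.
rng = np.random.default_rng(0)
compute = np.logspace(20, 24, 12)           # training FLOPs, made up
latent = 0.5 * np.log10(compute) - 10.0     # hidden capability, made up
loadings = np.array([1.0, 0.8, 0.6])        # how each benchmark reflects it
scores = np.outer(latent, loadings) + rng.normal(0.0, 0.01, size=(12, 3))

pcs, explained = capability_components(scores)
pc1 = pcs[:, 0]
# The sign of a principal component is arbitrary; orient it to grow with compute.
if np.corrcoef(np.log10(compute), pc1)[0, 1] < 0:
    pc1 = -pc1

slope, intercept = fit_log_linear(compute, pc1)
print(f"PC1 explains {explained[0]:.1%} of variance; slope per decade of compute = {slope:.3f}")
```

Because the synthetic scores are driven by a single latent factor, the first component captures almost all of the variance here; with real leaderboard data, how many components to keep is an empirical question.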

The research demonstrated significant success with observational scaling laws. For instance, using simpler models, the method accurately predicted the performance of advanced models like GPT-4. Quantitatively, the scaling laws showed a high correlation (R² > 0.9) with actual performance across various benchmarks. Emergent phenomena, such as language understanding and reasoning abilities, followed a predictable sigmoidal pattern. The results also indicated that the impact of post-training interventions, like Chain-of-Thought and Self-Consistency, could be reliably predicted, showing performance improvements of up to 20% on specific tasks.
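To make the "predictable sigmoidal pattern" concrete, the sketch below fits a sigmoid that maps a capability score to task accuracy using only weaker models, then checks the extrapolation on stronger held-out ones. It is an illustration under stated assumptions, not the paper's procedure: the data is synthetic and noise-free, the helper names are hypothetical, and the fit uses a simple logit transform followed by linear regression.

```python
import numpy as np

def fit_sigmoid(capability, accuracy, eps=1e-6):
    """Fit accuracy = sigmoid(weight * capability + bias) via a linear fit in logit space."""
    clipped = np.clip(accuracy, eps, 1.0 - eps)
    logits = np.log(clipped / (1.0 - clipped))
    weight, bias = np.polyfit(capability, logits, deg=1)
    return weight, bias

def predict_accuracy(capability, weight, bias):
    """Evaluate the fitted sigmoid at the given capability scores."""
    return 1.0 / (1.0 + np.exp(-(weight * capability + bias)))

def r_squared(y_true, y_pred):
    """Coefficient of determination between observed and predicted values."""
    ss_res = np.sum((y_true - y_pred) ** 2)
    ss_tot = np.sum((y_true - np.mean(y_true)) ** 2)
    return 1.0 - ss_res / ss_tot

# Synthetic stand-in data (assumption): 15 models with made-up capability scores.
capability = np.linspace(-3.0, 3.0, 15)
true_accuracy = 1.0 / (1.0 + np.exp(-(1.5 * capability - 0.5)))

# Fit on the weaker models only, then extrapolate to the stronger ones.
weak = capability < 1.0
weight, bias = fit_sigmoid(capability[weak], true_accuracy[weak])
held_out_pred = predict_accuracy(capability[~weak], weight, bias)

print(f"weight={weight:.3f}, bias={bias:.3f}")
print(f"held-out R^2 = {r_squared(true_accuracy[~weak], held_out_pred):.4f}")
```

With noise-free synthetic data the fit recovers the generating parameters almost exactly; real benchmark accuracies are noisy and may have a chance-level floor, so the extrapolation quality reported in the article should not be inferred from this toy example.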

To conclude, the research introduces observational scaling laws, leveraging publicly available data from around 80 models to predict language model performance efficiently. By identifying a low-dimensional capability space and using generalized scaling laws, the study reduces the need for extensive model training. The results showed high predictive accuracy for advanced model performance and for post-training interventions. This approach saves computational resources and improves the ability to forecast model capabilities, offering a valuable tool for researchers and engineers optimizing language model development.

Check out the Paper. All credit for this research goes to the researchers of this project.

Nikhil is an intern consultant at Marktechpost. He is pursuing an integrated dual degree in Materials at the Indian Institute of Technology, Kharagpur. Nikhil is an AI/ML enthusiast who is always researching applications in fields like biomaterials and biomedical science. With a strong background in Material Science, he is exploring new advancements and creating opportunities to contribute.