Creating general-purpose assistants that can effectively perform varied real-world tasks by following users' (multimodal) instructions has long been a goal in artificial intelligence. The field has recently seen increased interest in developing foundation models with emerging multimodal understanding and generation skills for open-world tasks. Large language models (LLMs) like ChatGPT have proven effective for building general-purpose assistants for natural language tasks, but how to create multimodal, general-purpose assistants for computer vision and vision-language tasks remains an open question.

Current efforts to create multimodal agents can generally be divided into two groups:

(i) End-to-end training with LLMs, in which a series of Large Multimodal Models (LMMs) is created by continually training LLMs to interpret visual information using image-text data and multimodal instruction-following data. Both open-source models like LLaVA and MiniGPT-4 and proprietary models like Flamingo and multimodal GPT-4 have shown impressive visual understanding and reasoning skills. While these end-to-end training approaches work well for helping LMMs acquire emergent skills (such as in-context learning), building a cohesive architecture that can smoothly integrate the broad range of abilities essential for real-world multimodal applications, such as image segmentation and generation, is still a difficult task.

(ii) Tool chaining with LLMs, in which prompts are carefully designed to let LLMs call on various tools (such as vision models that have already been trained) to perform the desired (sub-)tasks, all without requiring further model training. VisProg, ViperGPT, Visual ChatGPT, X-GPT, and MM-REACT are well-known works. The strength of these approaches is their ability to handle a wide range of visual tasks using (new) tools that can be developed cheaply and integrated into an AI agent. Prompting, however, needs to become more flexible and dependable before multimodal agents can reliably select and activate the right tools (from a broad and diverse toolset) and compose their results on the fly into final solutions for real-world multimodal tasks.
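As a rough illustration of the tool chaining idea, the minimal sketch below lists the available tools in a prompt, asks a language model to name one, and dispatches to it without any training. Everything here is hypothetical: the tool functions, the prompt wording, and `stub_llm` (a keyword rule standing in for a real LLM call) are assumptions for illustration and are not taken from VisProg, ViperGPT, or any of the other systems named above.

```python
import json
from typing import Callable, Dict


# Hypothetical stand-ins for already-trained vision models.
def caption_image(image: str) -> str:
    return f"a caption for {image}"


def detect_objects(image: str) -> str:
    return f"boxes found in {image}"


TOOLS: Dict[str, Callable[[str], str]] = {
    "caption": caption_image,
    "detect": detect_objects,
}


def build_prompt(instruction: str) -> str:
    """Describe the toolset in the prompt so the LLM can pick a tool."""
    tool_list = ", ".join(sorted(TOOLS))
    return (
        f"Available tools: {tool_list}.\n"
        'Reply with JSON like {"tool": "<name>"}.\n'
        f"User: {instruction}"
    )


def stub_llm(prompt: str) -> str:
    """Placeholder for a real LLM call (assumption): a simple keyword rule."""
    user_line = prompt.splitlines()[-1].lower()
    wants_location = ("where" in user_line) or ("find" in user_line)
    return json.dumps({"tool": "detect" if wants_location else "caption"})


def run_agent(instruction: str, image: str) -> str:
    """Prompt the (stub) LLM, parse its tool choice, and run that tool."""
    reply = stub_llm(build_prompt(instruction))
    tool_name = json.loads(reply)["tool"]
    if tool_name not in TOOLS:
        raise ValueError(f"LLM chose an unknown tool: {tool_name}")
    return TOOLS[tool_name](image)
```

The fragile step is parsing the model's reply: with a real LLM, a malformed or unexpected tool name is exactly the kind of reliability problem that prompt-only tool selection runs into.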

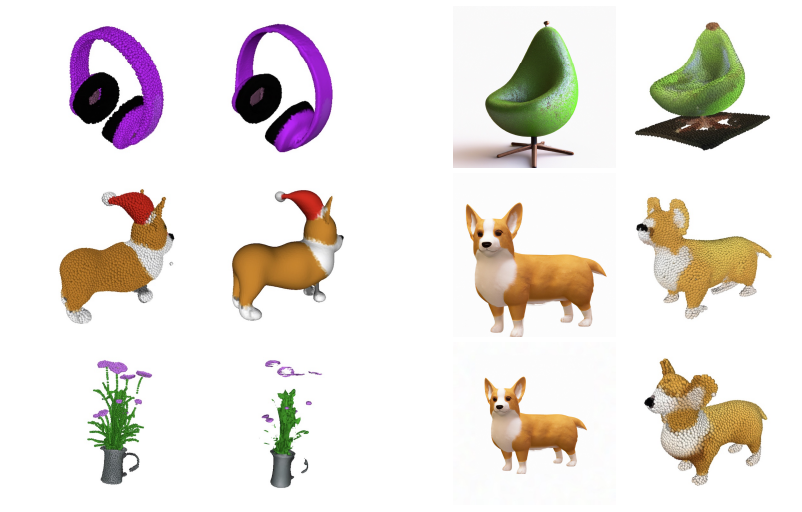

Figure 1: A graphic illustration of the capabilities of LLaVA-Plus made possible through skill acquisition.

In this paper, researchers from Tsinghua University, Microsoft Research, the University of Wisconsin-Madison, HKUST, and IDEA Research introduce LLaVA-Plus (Large Language and Vision Assistants that Plug and Learn to Use Skills), a general-purpose multimodal assistant that acquires tool-use skills through an end-to-end training method that systematically expands LMMs' capabilities via visual instruction tuning. To their knowledge, this is the first documented attempt to combine the advantages of the tool chaining and end-to-end training approaches described above. LLaVA-Plus comes with a skill repository containing a wide variety of vision and vision-language tools. The design is an example of the "Society of Mind" theory, in which individual tools are built for specific tasks and have limited use on their own; when these tools are combined, however, they give rise to emergent skills that show greater intelligence.

For instance, given users' multimodal inputs, LLaVA-Plus can create a new workflow on the fly, select and activate relevant tools from the skill repository, and assemble their execution results to complete various real-world tasks not seen during model training. Through instruction tuning, LLaVA-Plus can be improved over time by adding more skills or tools. Consider a brand-new multimodal tool created for a particular use case or skill. To build instruction-following data for tuning, the researchers collect relevant user instructions that require this tool, together with the tool's execution results or the responses that follow. After instruction tuning, LLaVA-Plus gains new capabilities as it learns to use the new tool to accomplish tasks that were previously impossible.
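The skill-acquisition loop described above can be sketched as follows, under loud assumptions: `SkillRepository`, `make_training_sample`, the `segment` tool, and the four-turn record layout are all hypothetical names and structures chosen for illustration, not the data format or code used by LLaVA-Plus. The sketch only shows the shape of the idea: register a new tool, run it on an input, and pair the user instruction with the tool's execution result and the final response to form one instruction-following example.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class SkillRepository:
    """Hypothetical registry mapping skill names to callable tools."""
    skills: Dict[str, Callable[[str], str]] = field(default_factory=dict)

    def register(self, name: str, fn: Callable[[str], str]) -> None:
        self.skills[name] = fn

    def run(self, name: str, image: str) -> str:
        return self.skills[name](image)


def make_training_sample(
    instruction: str, tool_name: str, tool_output: str, answer: str
) -> List[dict]:
    """Pair an instruction with the tool call, its result, and the reply.

    The turn layout is an assumption made for this sketch.
    """
    return [
        {"role": "user", "content": instruction},
        {"role": "assistant", "tool_call": tool_name},
        {"role": "tool", "content": tool_output},
        {"role": "assistant", "content": answer},
    ]


repo = SkillRepository()
# A brand-new (hypothetical) tool is plugged into the repository.
repo.register("segment", lambda image: f"mask for {image}")

# Execute the tool and record the result as one tuning example.
output = repo.run("segment", "cat.png")
sample = make_training_sample(
    "Cut out the cat.", "segment", output, "Here is the cat mask."
)
```

Collecting many such records for a tool, then tuning on them, is what would let the model learn when to call that tool and how to use its output.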

Moreover, LLaVA-Plus differs from earlier work on tool-use training for LLMs, in which visual signals are used only when the multimodal tools are engaged. LLaVA-Plus, in contrast, uses the raw visual signals throughout the entire human-AI interaction session to improve the LMM's capacity for planning and reasoning. To summarize, the contributions of the paper are as follows:

• New multimodal instruction-following tool-use data. Using ChatGPT and GPT-4 as labeling tools, they describe a new pipeline for curating vision-language instruction-following data intended for tool use in human-AI interaction sessions.

• A new large multimodal assistant. They have created LLaVA-Plus, a general-purpose multimodal assistant that extends LLaVA by integrating a large and diverse set of external tools that can be quickly selected, composed, and engaged to complete tasks. Figure 1 illustrates how LLaVA-Plus greatly expands the capabilities of an LMM. Their empirical study verifies the effectiveness of LLaVA-Plus by showing consistently better results on several benchmarks, notably a new SoTA on VisiT-Bench, which covers a wide range of real-world tasks.

• Open source. The materials they will make publicly available are the generated multimodal instruction data, the codebase, the LLaVA-Plus checkpoints, and a visual chat demo.

Check out the Paper and Project. All credit for this research goes to the researchers of this project.

Aneesh Tickoo is a consulting intern at MarktechPost. He is currently pursuing his undergraduate degree in Data Science and Artificial Intelligence at the Indian Institute of Technology (IIT), Bhilai. He spends most of his time working on projects aimed at harnessing the power of machine learning. His research interest is image processing, and he is passionate about building solutions around it. He loves to connect with people and collaborate on interesting projects.