What Is the Google Index?

The Google index is a database of all of the webpages the search engine has crawled and saved to make use of in search outcomes.

It acts like an enormous, searchable library of internet content material. And shops the textual content from every webpage, together with vital metadata like titles, headers, hyperlinks, photos, and extra.

All of this knowledge will get compiled right into a structured index that permits Google to immediately scan its contents and match search queries with related outcomes.

So when customers seek for one thing in Google, they’re looking out its highly effective index to search out the perfect webpages on that matter.

Each web page that seems in Google’s search outcomes must be listed first. In case your web page isn’t listed, it gained’t present in search outcomes.

This is how indexing matches into the entire course of (assuming there aren’t points alongside the best way):

- Crawling: Googlebot crawls the online and appears for brand new or up to date pages

- Indexing: Google analyzes the pages and shops them in its database

- Rating: Google’s algorithm picks the perfect and most related pages from its index and reveals them as search outcomes

Predetermined algorithms management Google indexing. However there are issues you are able to do to affect indexing.

How Do You Verify If Google Has Listed Your Web site?

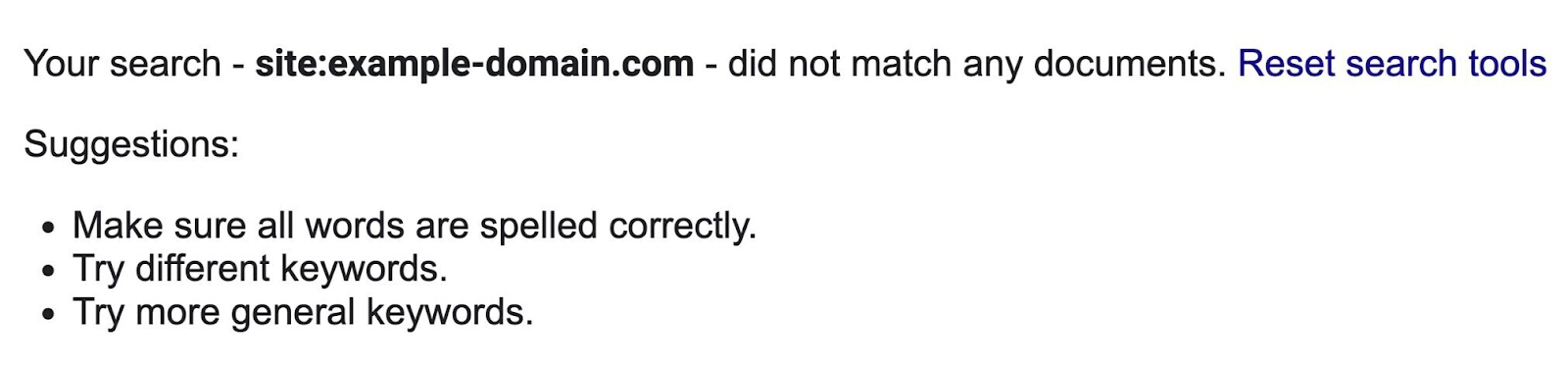

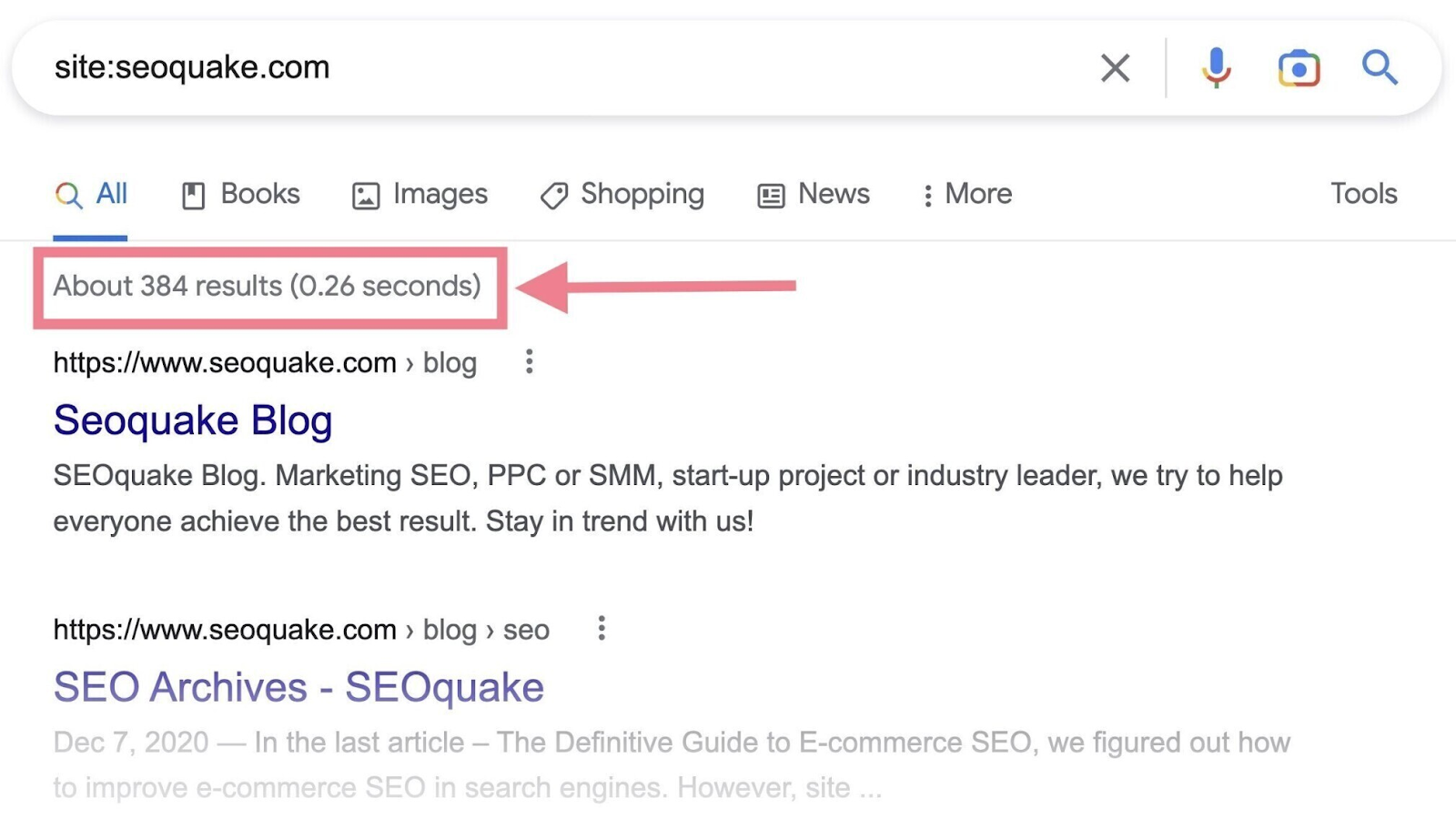

Google makes it simple to search out out whether or not your website has been listed—by utilizing the positioning: search operator.

Right here’s how you can verify:

- Go to Google

- Within the search bar, kind within the website: search operator adopted by your area (e.g., “website:yourdomain.com”)

- Whenever you look under the search bar, you’ll see an estimate of what number of of your pages Google has listed

If zero outcomes present up, none of your pages are listed.

If there are listed pages, Google will present them as search outcomes.

That’s the way you shortly verify the indexing standing of your pages. However it’s not probably the most sensible manner, as it might be tough to identify particular pages that have not been listed.

The choice (and preferable) approach to verify if Google has listed your web site is to make use of Google Search Console (GSC). We’ll take a more in-depth take a look at it and how you can index your web site on Google within the subsequent part.

How Do You Get Google to Index Your Web site?

When you have a brand new web site, it will possibly take Google a while to index it as a result of it must be crawled first. And crawling can take anyplace from a couple of days to some weeks.

(Indexing normally occurs proper after that, however it’s not assured.)

However you’ll be able to pace up the method.

The simplest manner is to request indexing in Google Search Console. GSC is a free toolset that means that you can verify your web site’s presence on Google and troubleshoot any associated points.

If you do not have a GSC account but, you may must:

- Sign up along with your Google account

- Add a brand new property (your web site) to your account

- Confirm possession of the web site

Need assistance? Learn our detailed information that will help you arrange Google Search Console.

Then, observe these steps:

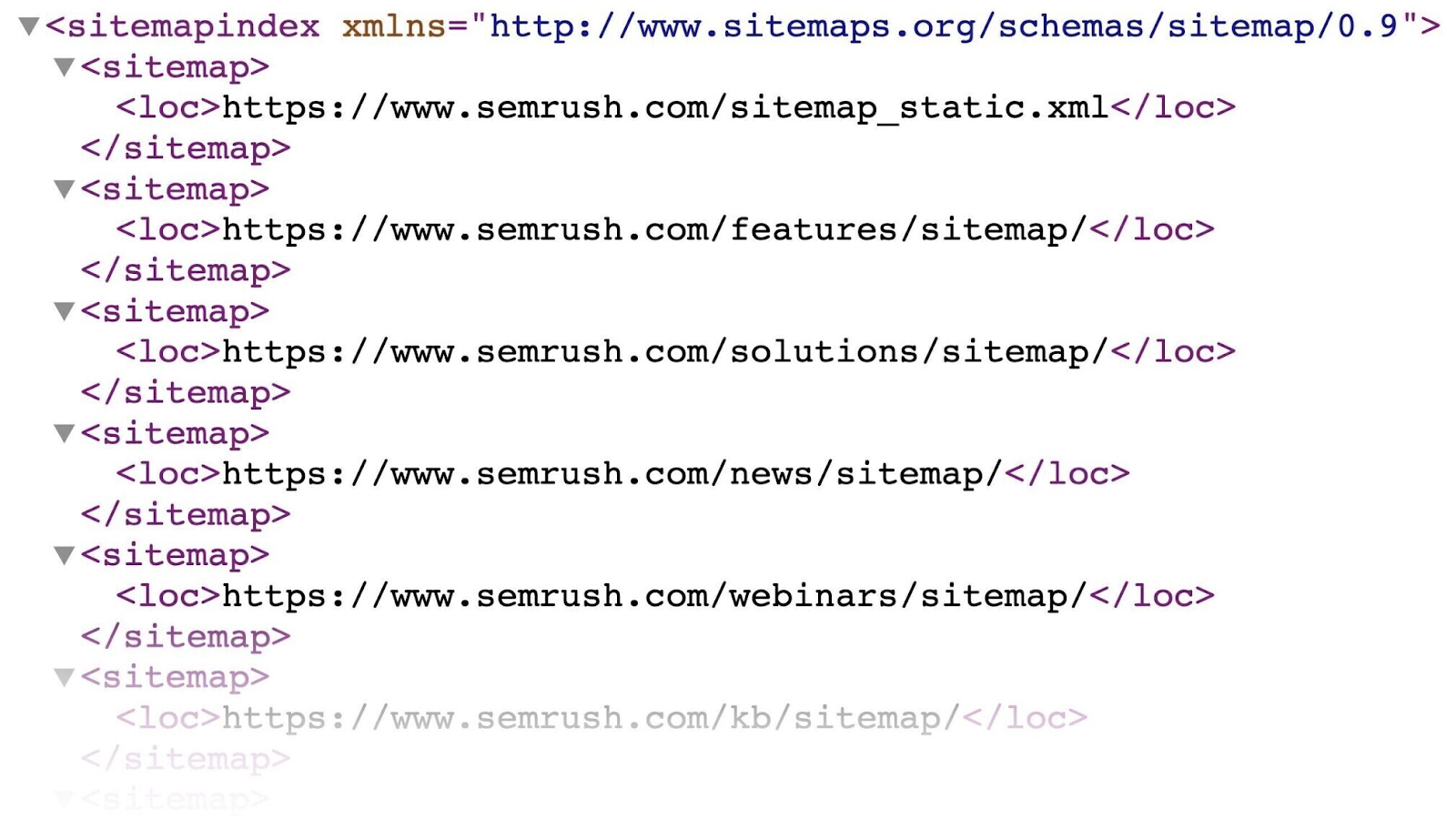

Create and Submit a Sitemap

An XML sitemap is a file that lists all of the URLs you need Google to index. Which helps crawlers discover your primary pages quicker.

It appears to be like one thing like this:

You will probably discover your sitemap on this URL: “https://yourdomain.com/sitemap.xml”

If you do not have one, learn our information to creating an XML sitemap (or this information to WordPress sitemaps in case your web site runs on WordPress).

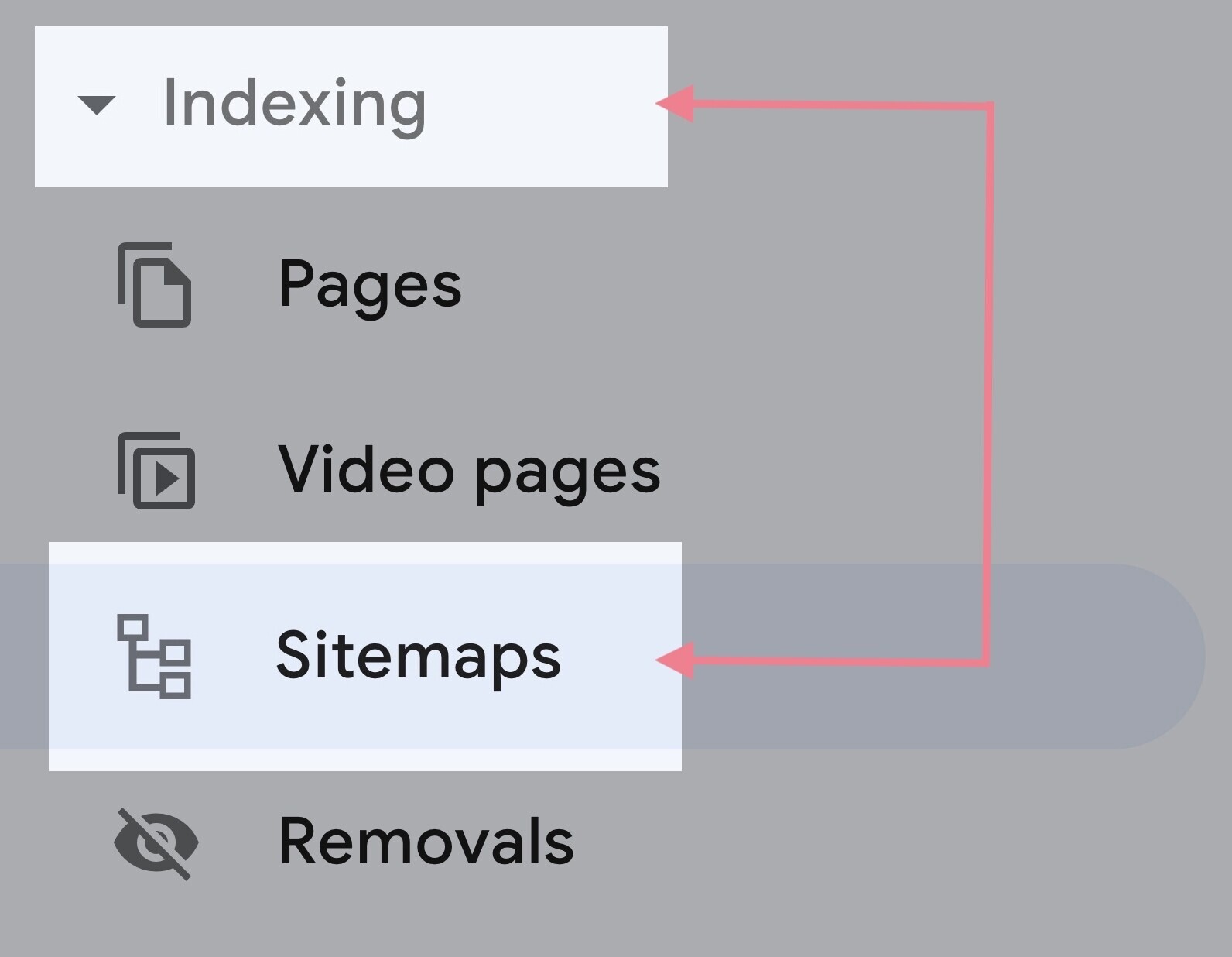

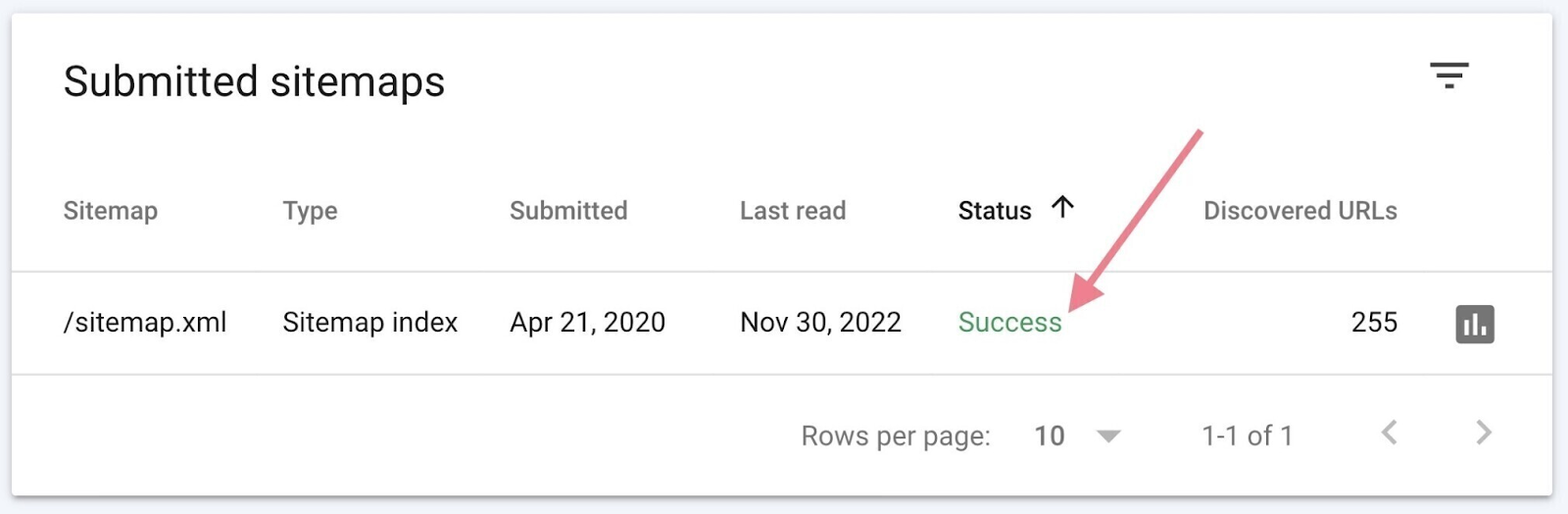

After getting the your sitemap URL, go to “Sitemaps” in GSC. You will discover it beneath the “Indexing” part within the left menu.

Enter your sitemap URL and hit “Submit.”

It might take a few days on your sitemap to be processed. When it’s performed, you need to see the hyperlink to your sitemap and a inexperienced “Success” standing within the report.

Submitting the sitemap may help Google uncover all of the pages you deem vital. And pace up the method of indexing them.

Use the URL Inspection Device

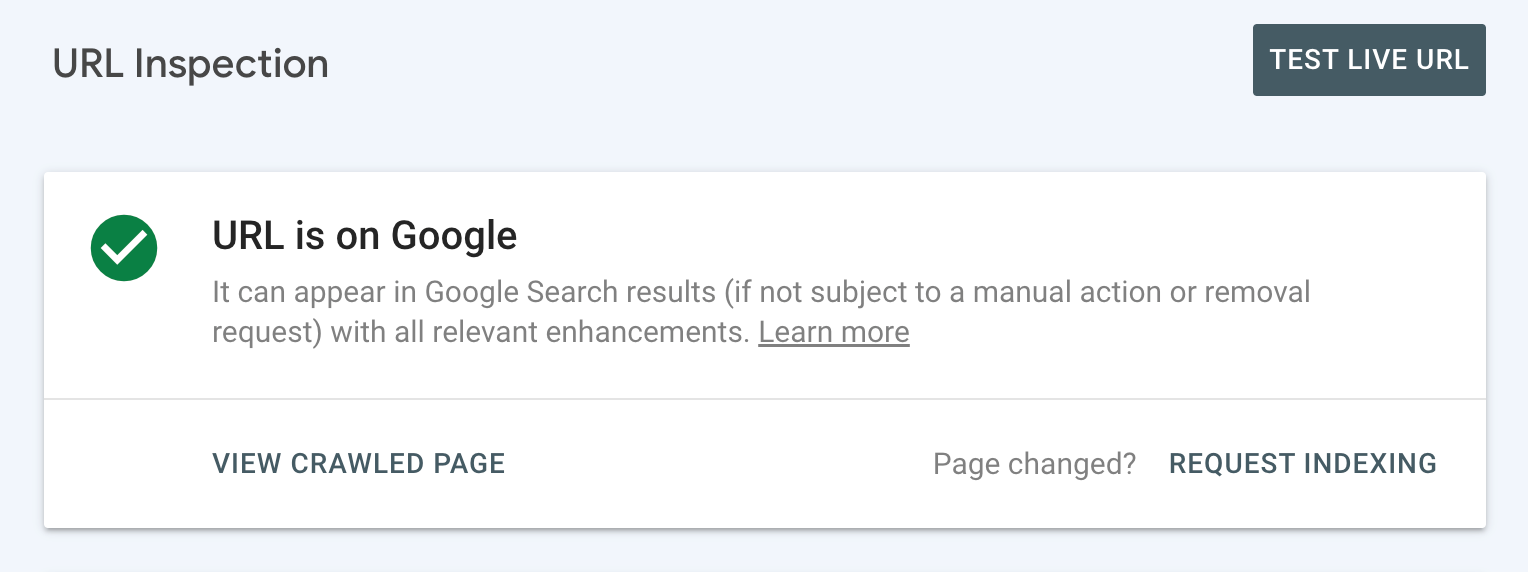

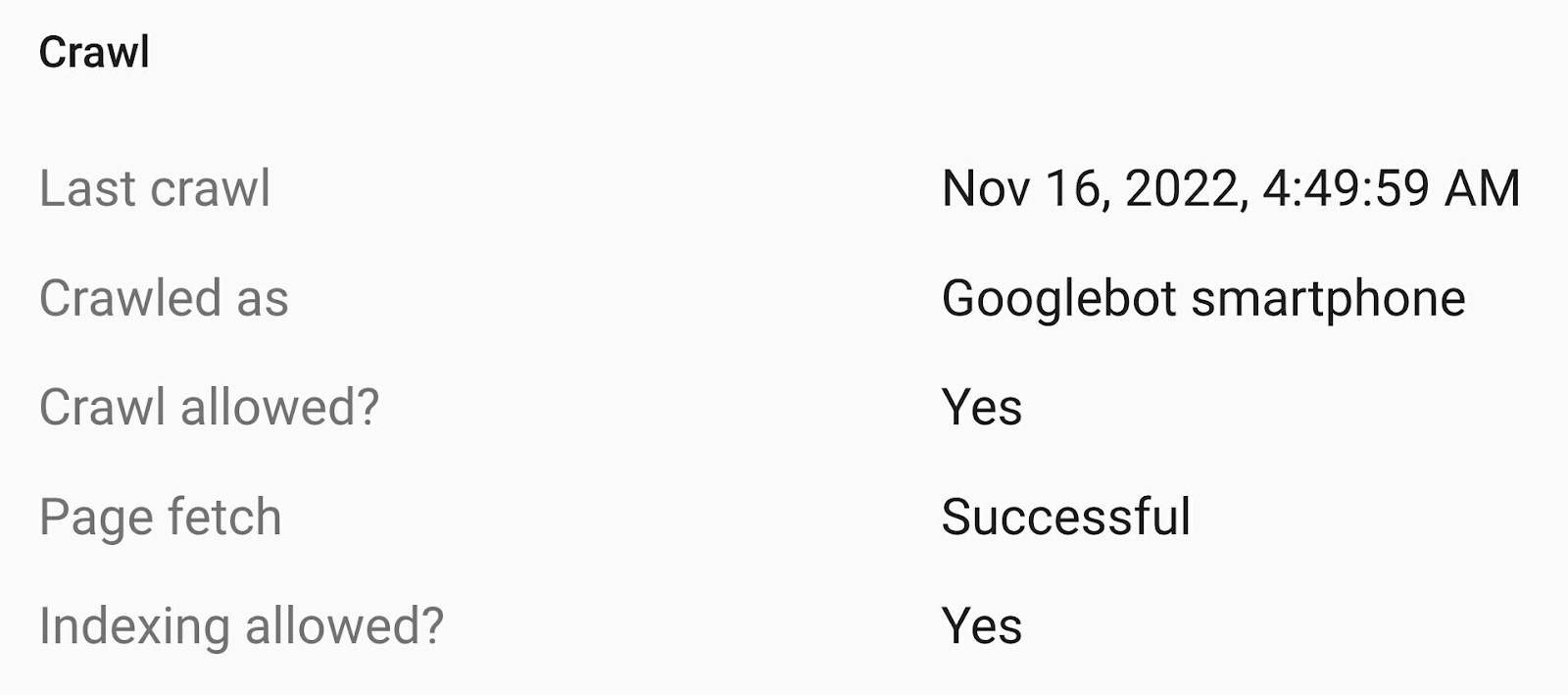

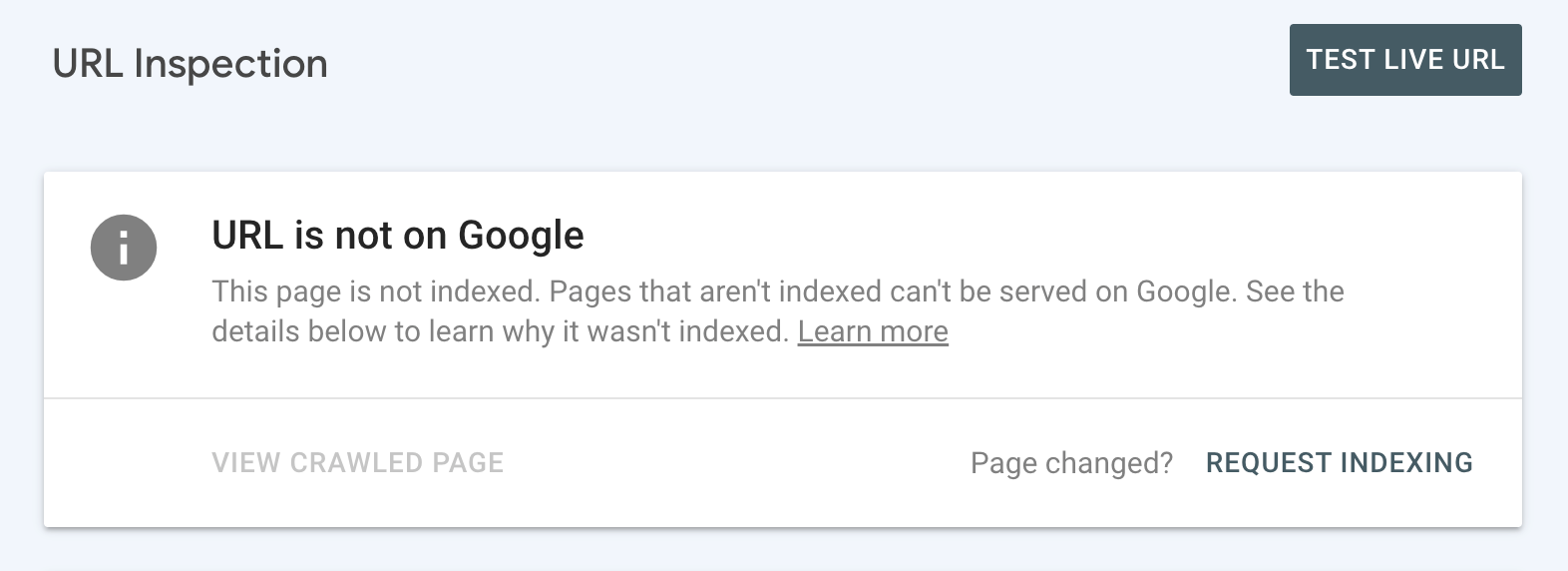

To verify the standing of a selected URL, use the URL inspection device in GSC.

Begin by coming into the URL within the search bar on the prime.

If you happen to see the “URL is on Google” standing, it means Google has crawled and listed it.

You possibly can verify the small print to see when it was final crawled and in addition get different useful data.

If that is so, you are all set and do not must do something.

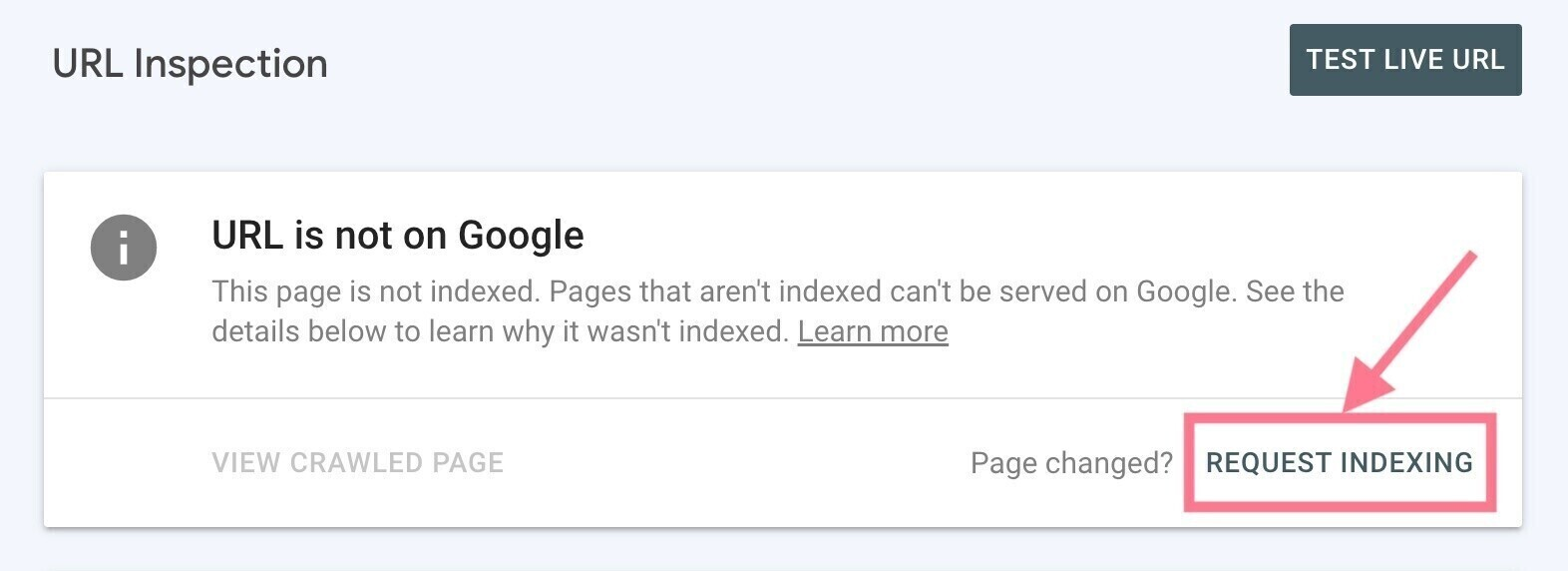

However in the event you see the “URL is just not on Google” standing, it means the inspected URL isn’t listed and might’t seem in Google’s search engine outcomes pages (SERPs).

You will most likely see the rationale why the web page hasn’t been listed. And you will want to deal with the problem (see subsequent part for a way to do that).

As soon as that’s performed, you’ll be able to request indexing by clicking the “Request Indexing” hyperlink.

Frequent Indexing Points to Discover and Repair

Generally, there could also be points along with your web site’s technical search engine optimization that maintain your website (or a selected web page) from being listed—even in the event you request it.

This may occur in case your website isn’t mobile-friendly, masses too slowly, has redirect points, and many others.

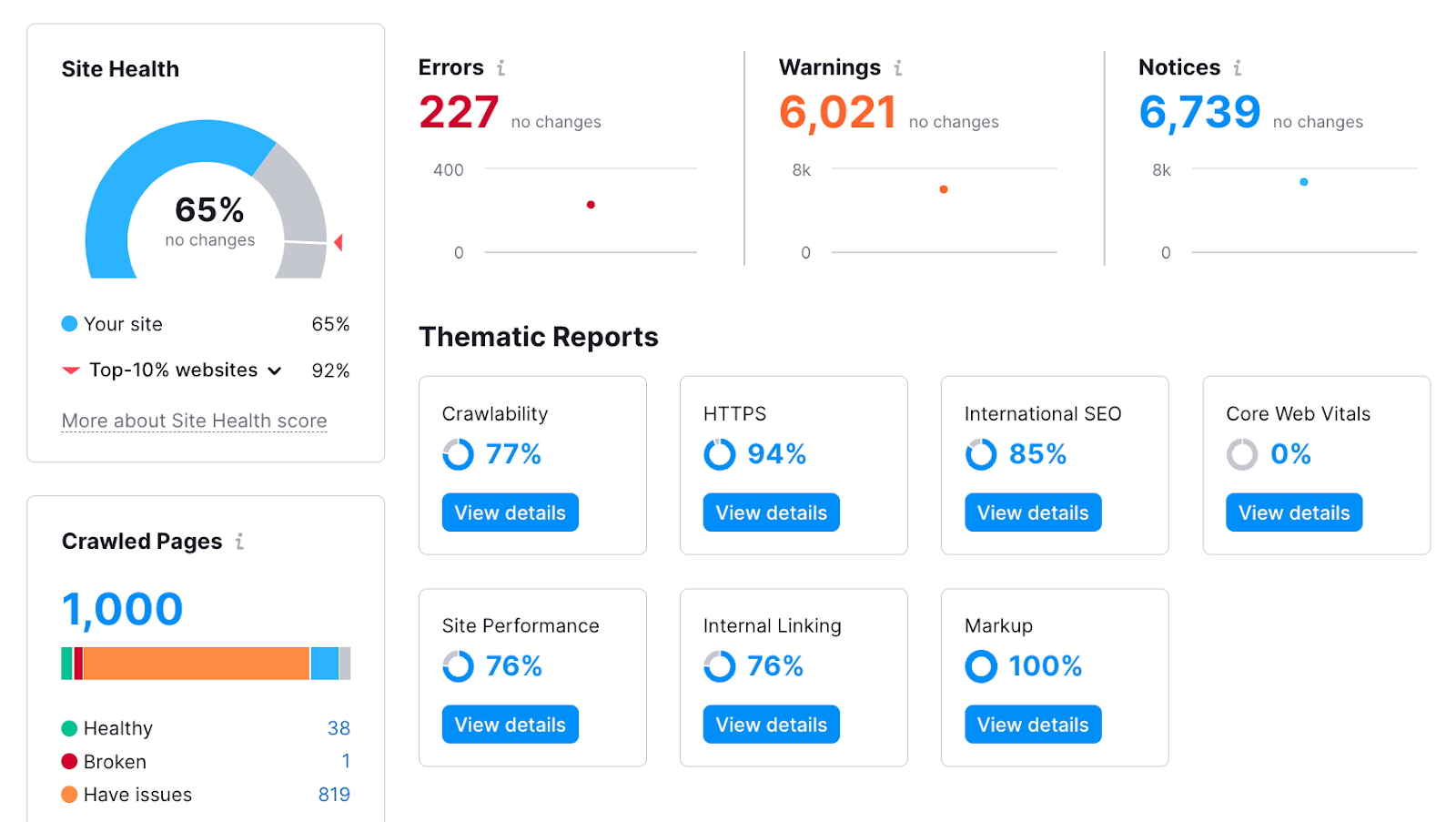

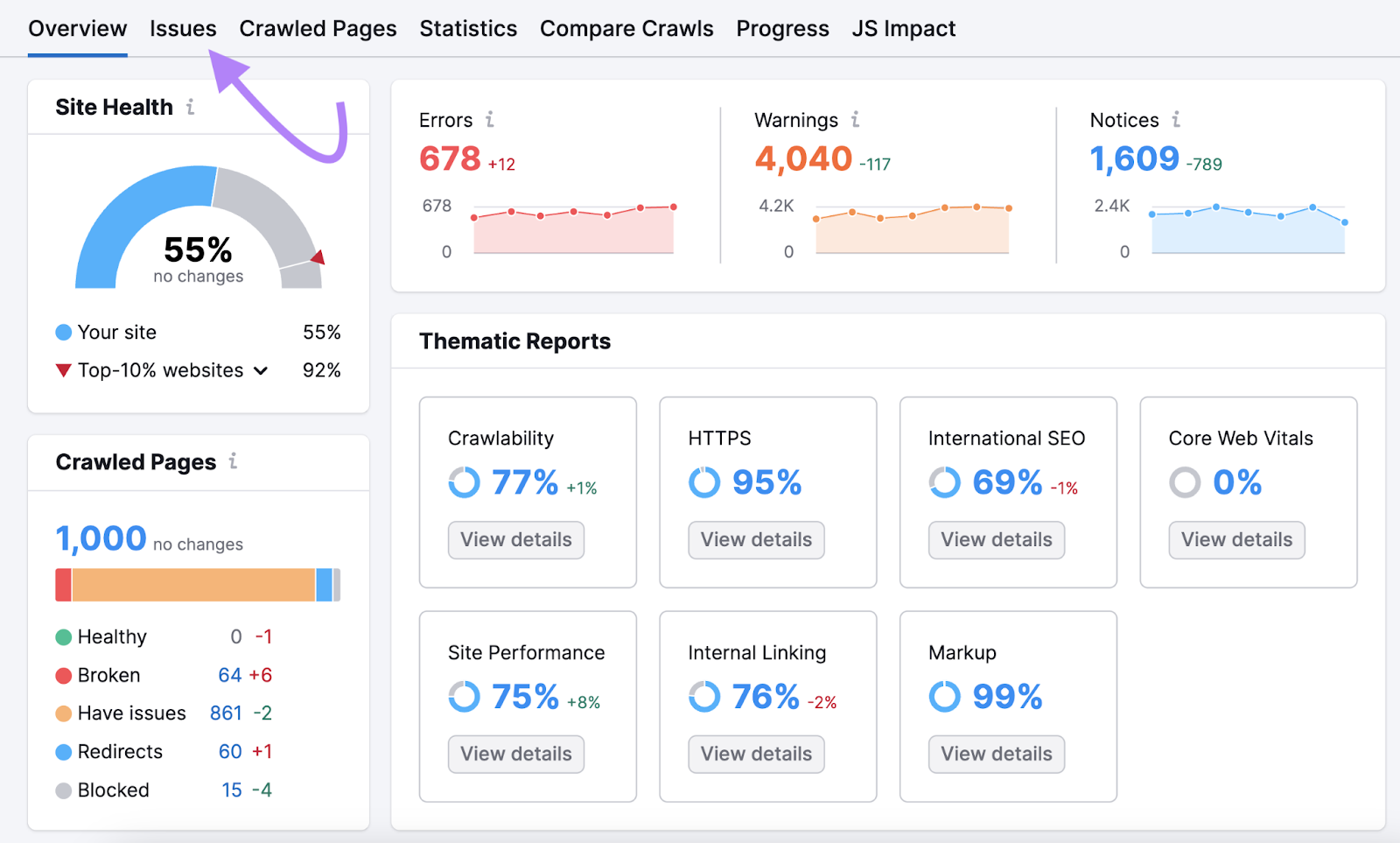

Carry out a technical search engine optimization audit with Semrush’s Web site Audit to search out out why Google has not listed your pages.

Right here’s how:

- Create a free Semrush account (no bank card wanted)

- Arrange your first crawl (we now have an in depth setup information that will help you)

- Click on the “Begin Web site Audit” button

After you run the audit, you may get an in-depth view of your website’s well being.

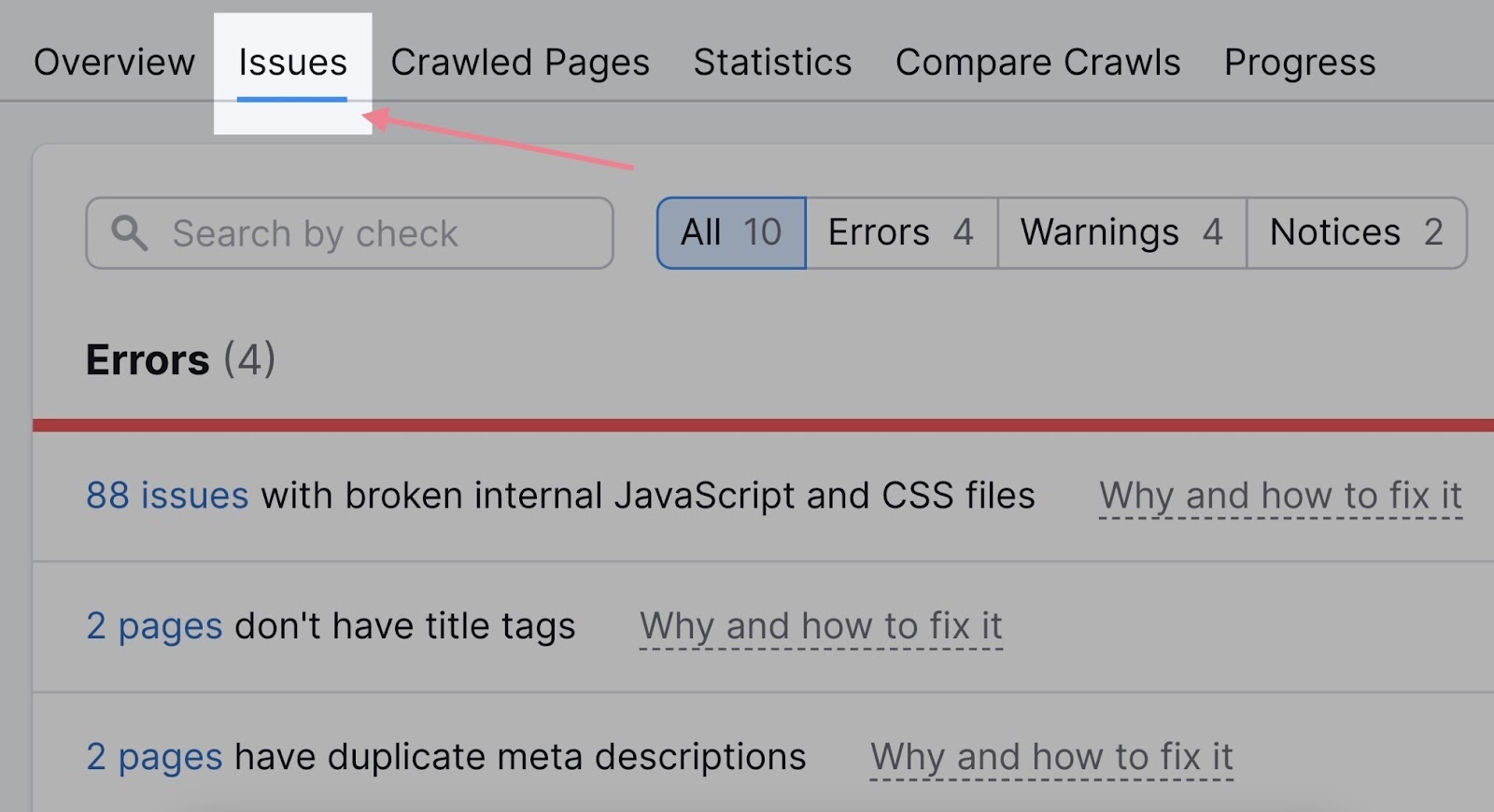

You may also see a listing of all the issues by clicking the “Points” tab:

The problems associated to indexing will virtually at all times seem on the prime of the record—within the “Errors” part.

Let’s check out some frequent explanation why your website is probably not listed and how you can repair the issues.

Errors with Your Robots.txt File

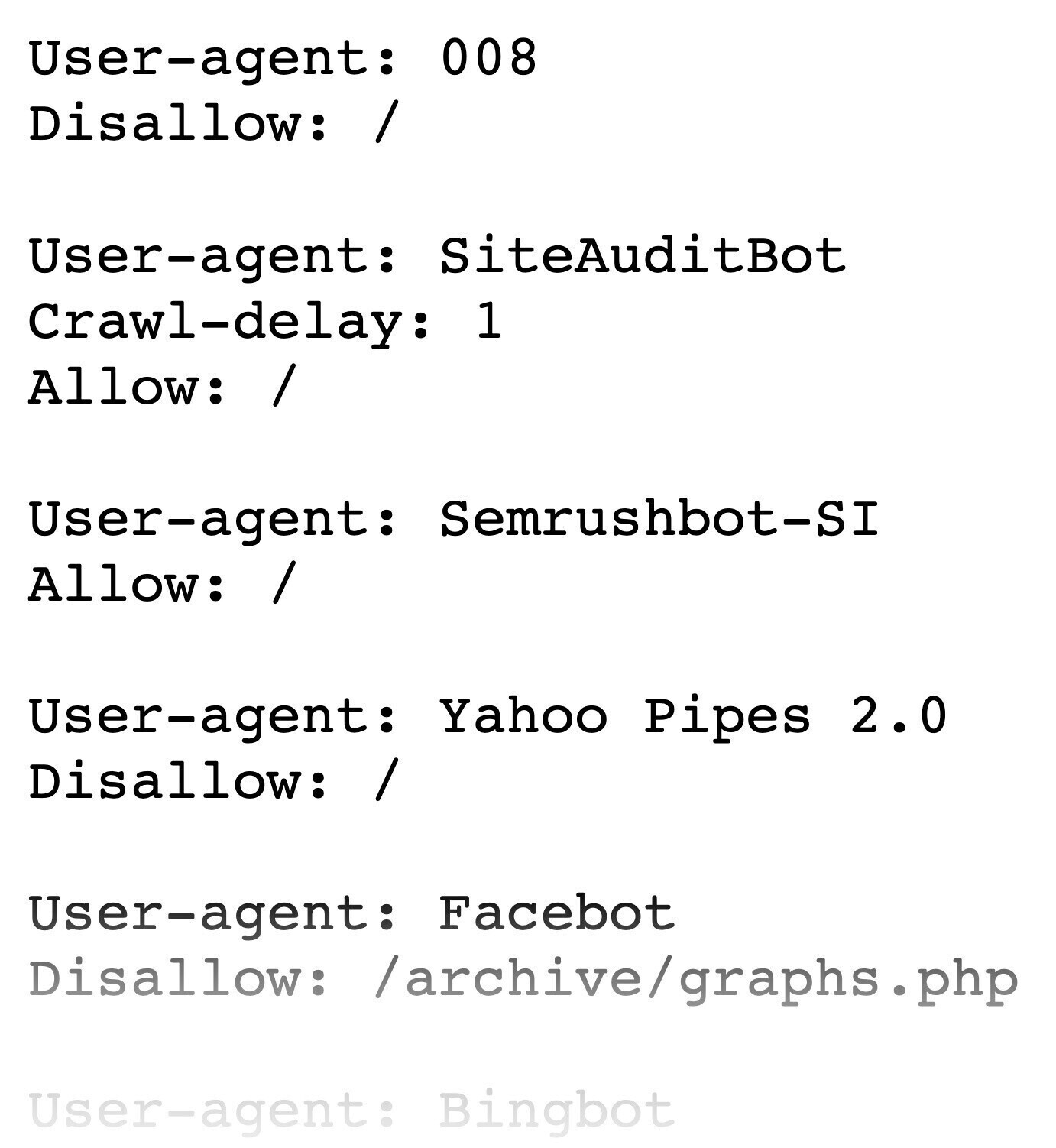

Your robots.txt file offers directions to search engines like google and yahoo about which components of an internet site they shouldn’t crawl. And it appears to be like one thing like this:

You will discover yours at “https://yourdomain.com/robots.txt.”

(Observe our information to create a robots.txt file if you do not have one.)

Chances are you’ll need to use directives to dam Google from crawling duplicate pages, non-public pages, or assets like PDFs and movies.

But when your robots.txt file tells Googlebot (or internet crawlers normally) that your total website shouldn’t be crawled, there is a excessive likelihood it will not be listed both.

Every directive in robots.txt consists of two components:

- “Person-agent” identifies the crawler

- The “Permit” or “Disallow” instruction signifies what ought to and shouldn’t be crawled on the positioning (or a part of it)

For instance:

Person-agent: *

Disallow: /

This directive says all crawlers (represented by an asterisk) shouldn’t crawl (indicated by “disallow:”) the entire website (represented by a slash image).

Examine your robots.txt to verify there’s no directive that would forestall Google from crawling your website or pages/folders you need to have listed.

Unintended Use of Noindex Tags

One approach to inform search engines like google and yahoo to not index your pages is to make use of the robots meta tag with a “noindex” attribute.

It appears to be like like this:

<meta identify="robots" content material="noindex">

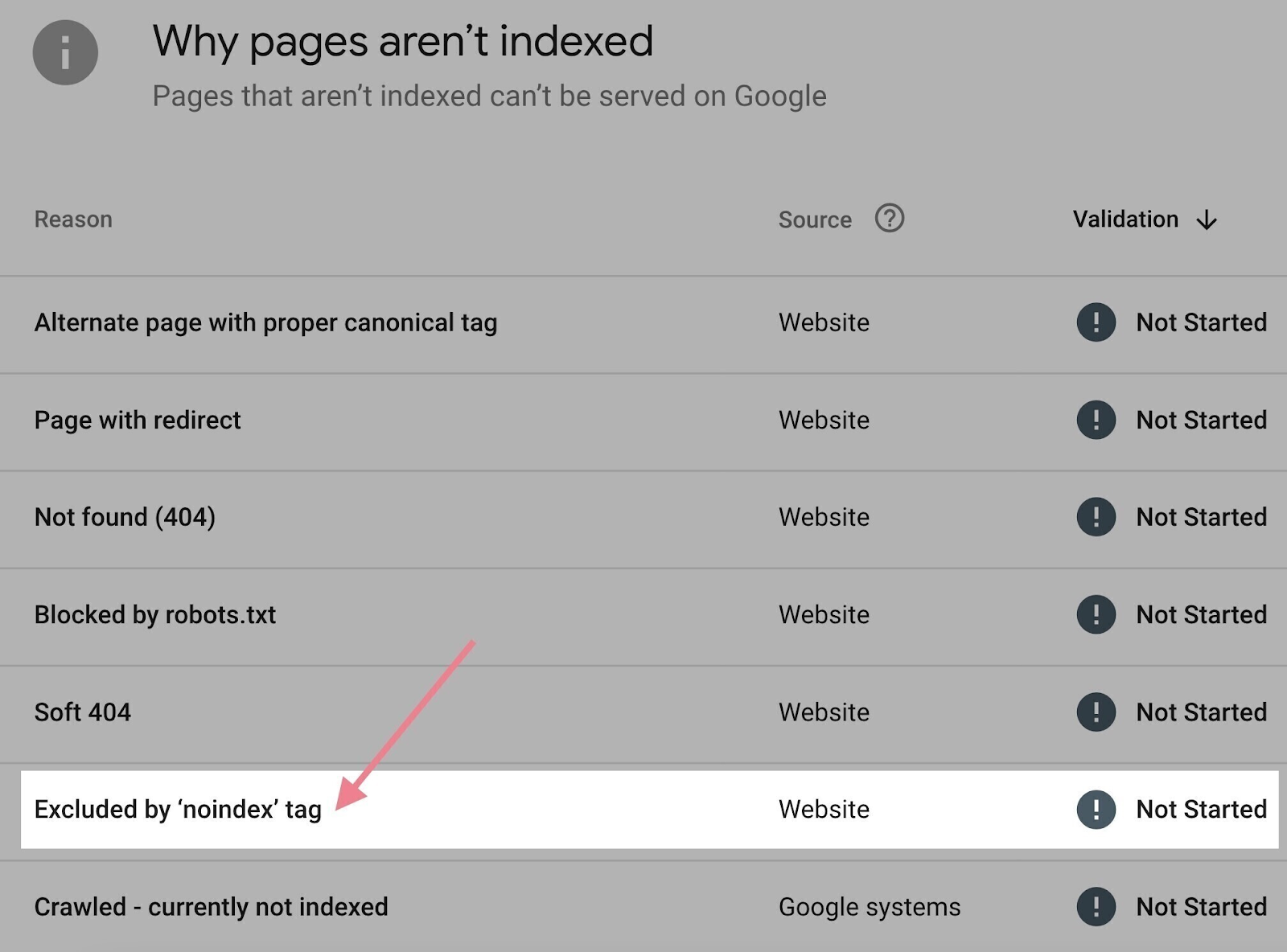

You possibly can verify what pages in your web site have noindex meta tags in Google Search Console:

- Click on the “Pages” report beneath the “Indexing” part within the left menu

- Scroll all the way down to the “Why pages aren’t listed” part

- Click on “Excluded by ‘noindex’ tag” in the event you see it

If the record of URLs accommodates a web page you need listed, merely take away the noindex meta tag from the supply code of that web page.

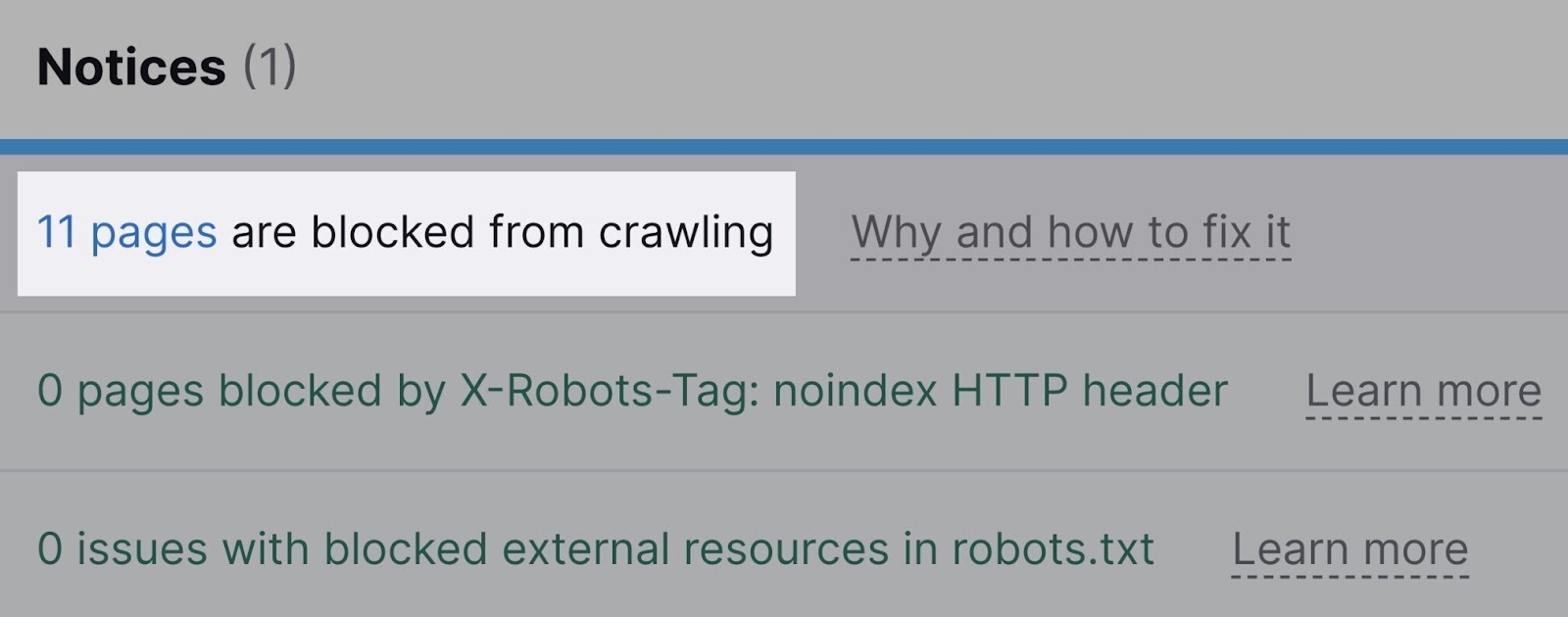

Semrush’s Web site Audit may also warn you about pages which can be blocked both via the robots.txt file or the noindex tag.

It’s going to additionally notify you about assets blocked by the x-robots-tag, which is normally used for non-HTML paperwork (corresponding to PDF recordsdata).

Improper Canonical Tags

One more reason your web page is probably not listed is that it mistakenly accommodates a canonical tag.

Canonical tags inform crawlers if a sure model of a web page is most well-liked. To forestall points attributable to duplicate content material showing on a number of URLs.

If a web page has a canonical tag pointing to a different URL, Googlebot assumes there’s a most well-liked model of that web page. And won’t index the web page in query, even when there isn’t a alternate model.

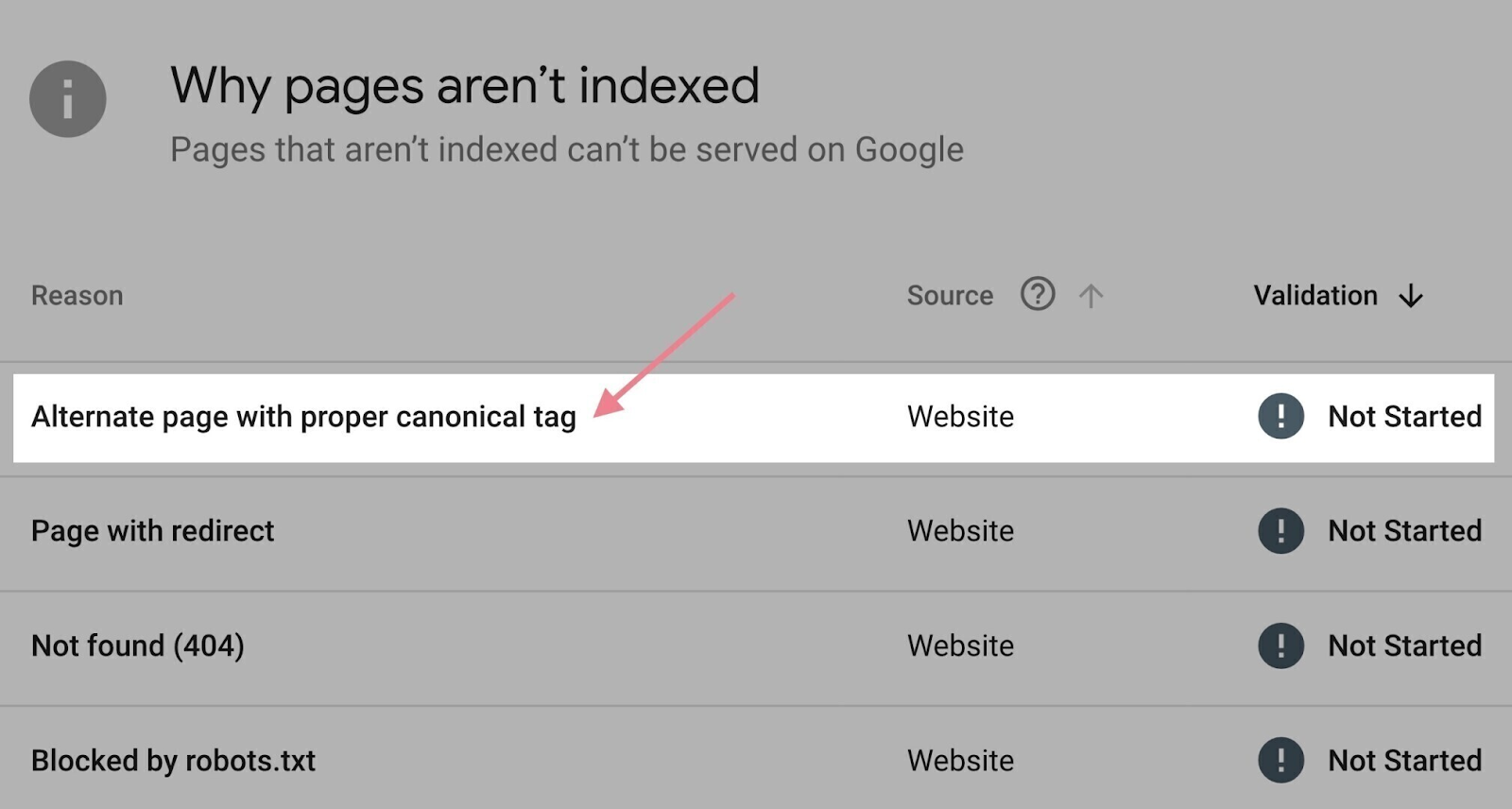

The “Pages” report in Google Search Console may help right here.

Scroll all the way down to the “Why pages aren’t listed” part. Click on the “Alternate web page with correct canonical tag” motive.

You will see a listing of affected pages to undergo.

If there’s a web page you need to have listed (that means the canonical is used incorrectly), take away the canonical tag from that web page. Or ensure that it factors to itself.

Inside Hyperlink Issues

Inside hyperlinks assist crawlers discover your webpages. Which might pace up the method of indexing.

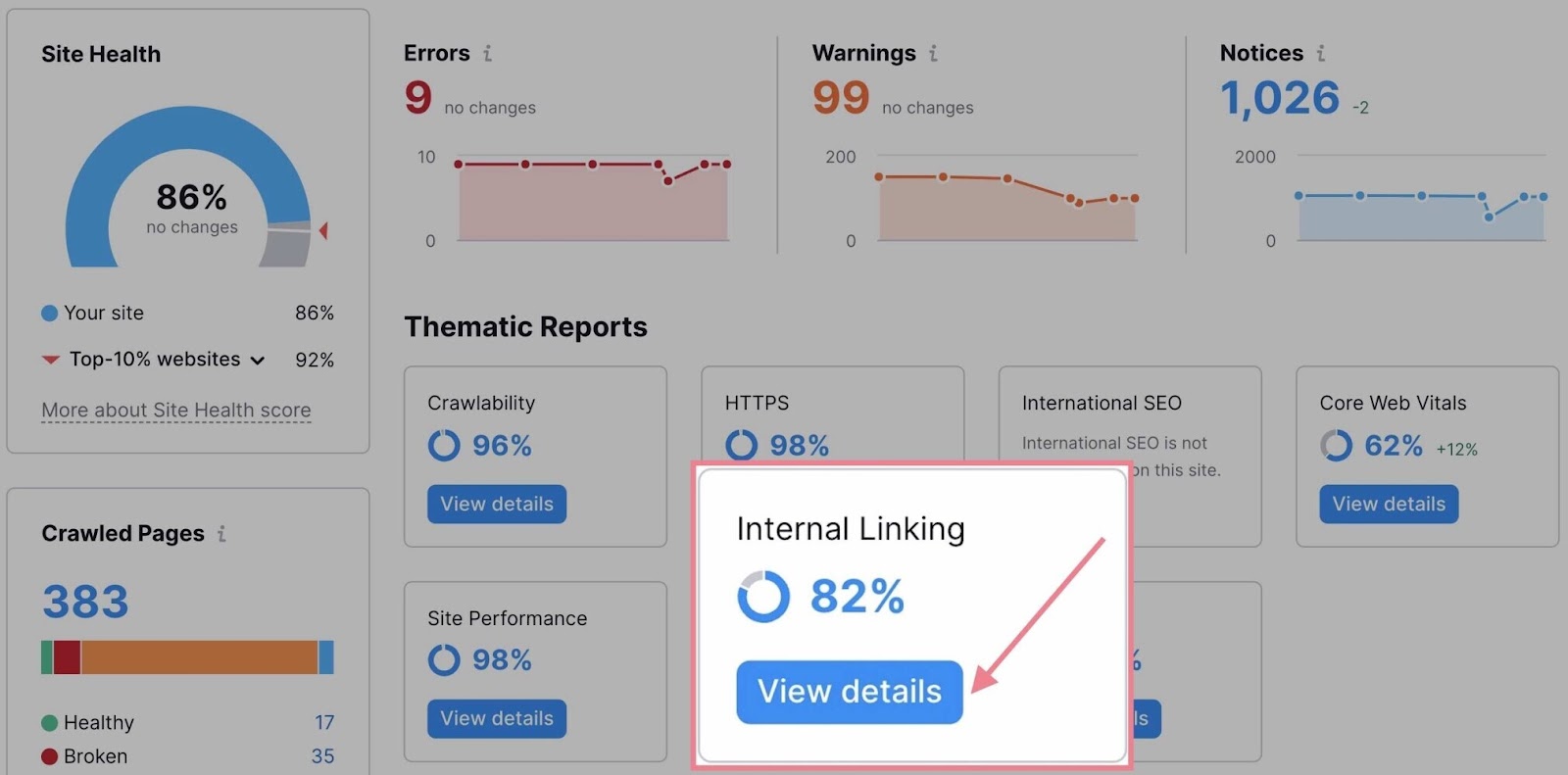

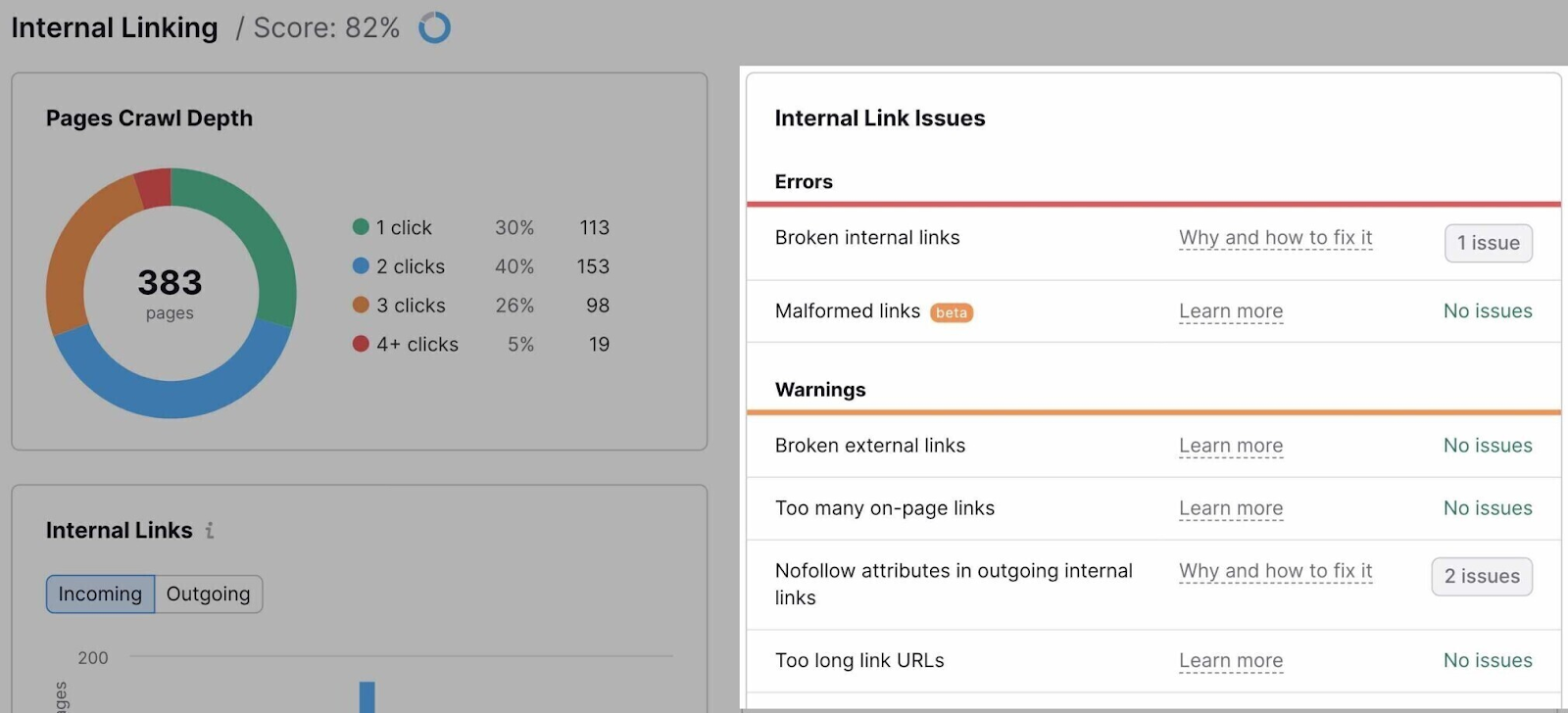

If you wish to audit your inside hyperlinks, go to the “Inside Linking” thematic report in Web site Audit.

The report will record all the problems associated to inside linking.

It will assist to repair all of them, after all. However these are among the most vital points to deal with in terms of crawling and indexing:

- Outgoing inside hyperlinks include nofollow attribute: Nofollow hyperlinks usually do not move authority. In the event that they’re inside, Google could select to disregard the goal web page when crawling your website. Be sure you do not use them for pages you need to have listed.

- Pages want greater than 3 clicks to be reached: If pages want greater than three clicks to be reached from the homepage, there’s an opportunity they will not be crawled and listed. Add extra inside hyperlinks to those pages (and overview your web site structure).

- Orphaned pages in sitemap: Pages that haven’t any inside hyperlinks pointing to them are often known as “orphaned pages.” They’re not often listed. Repair this challenge by linking to any orphaned pages.

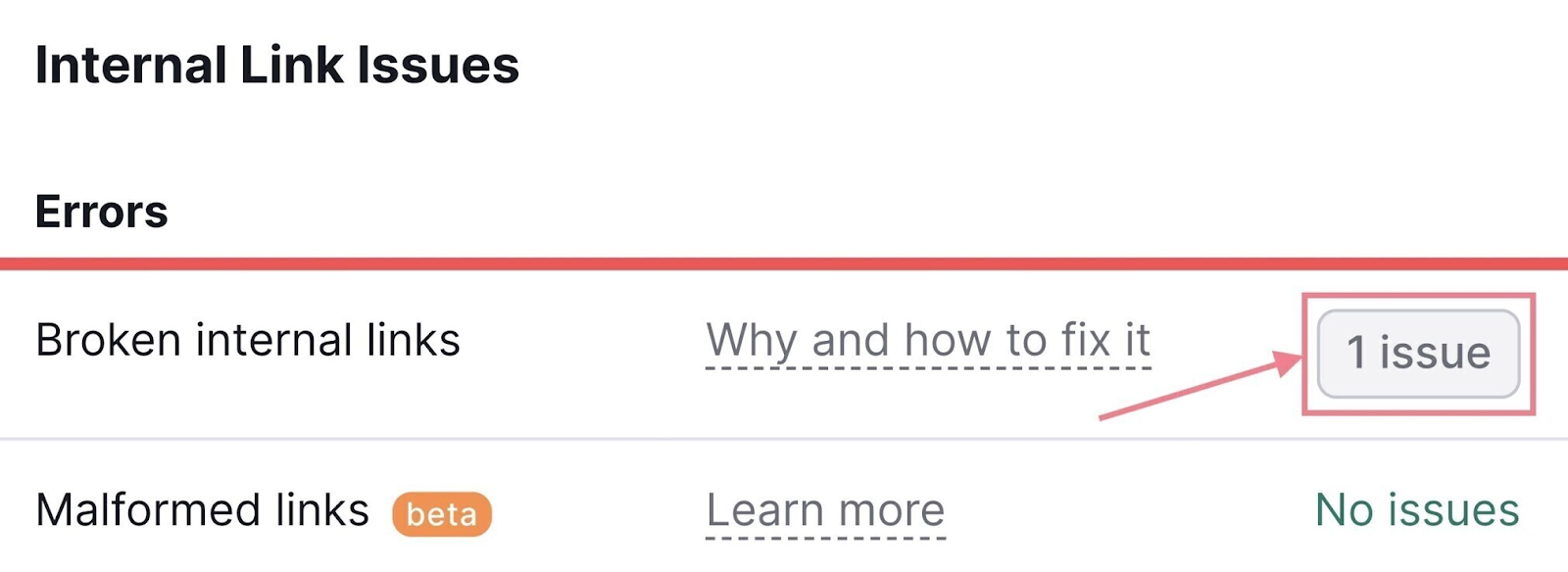

To see pages affected by a selected drawback, click on the hyperlink stating the variety of discovered points subsequent to it.

Final however not least, remember to make use of inside linking strategically:

- Hyperlink to your most vital pages: Google acknowledges that pages are vital to you if they’ve extra inside hyperlinks

- Hyperlink to your new pages: Make inside linking a part of your content material creation course of to hurry up the indexing of your new pages

404 Errors

A 404 error reveals up when an online server can’t discover a web page at a sure URL.

Which might occur for quite a lot of causes. Like an incorrect URL, a deleted web page, a change in URL, or an internet site misconfiguration.

And 404 errors can forestall Google from discovering, indexing, and rating your pages. In addition they hurt the person expertise.

That’s why you need to verify for 404 errors and repair them.

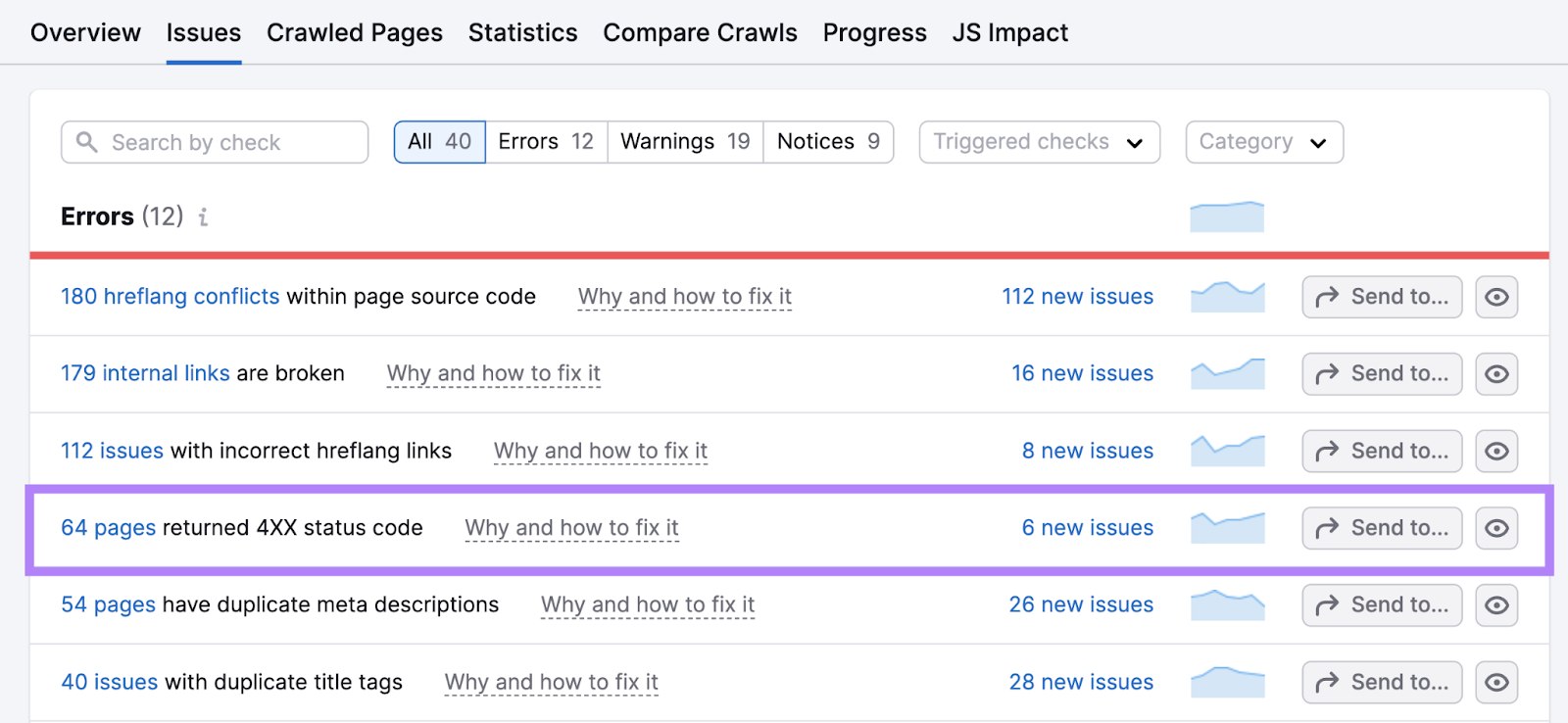

In your Web site Audit report, click on “Points.”

Discover and click on on the hyperlink in “# pages returned a 4XX standing code.”

For any pages which have “404” indicated because the error, click on “View damaged hyperlinks” to see all of the pages that embrace a hyperlink to that damaged URL.

Then, change these hyperlinks to the right URLs by fixing typos in ones that have been mistyped. Or linking to the brand new pages the place the content material is now positioned.

If there’s content material from any damaged URLs that now not exists, change the hyperlinks with the very best substitutes.

Duplicate Content material

Duplicate content material is when equivalent or extremely related content material seems in a couple of place in your website. And it will possibly confuse search engines like google and yahoo, resulting in indexing a web page you don’t need to be the first web page for search rankings.

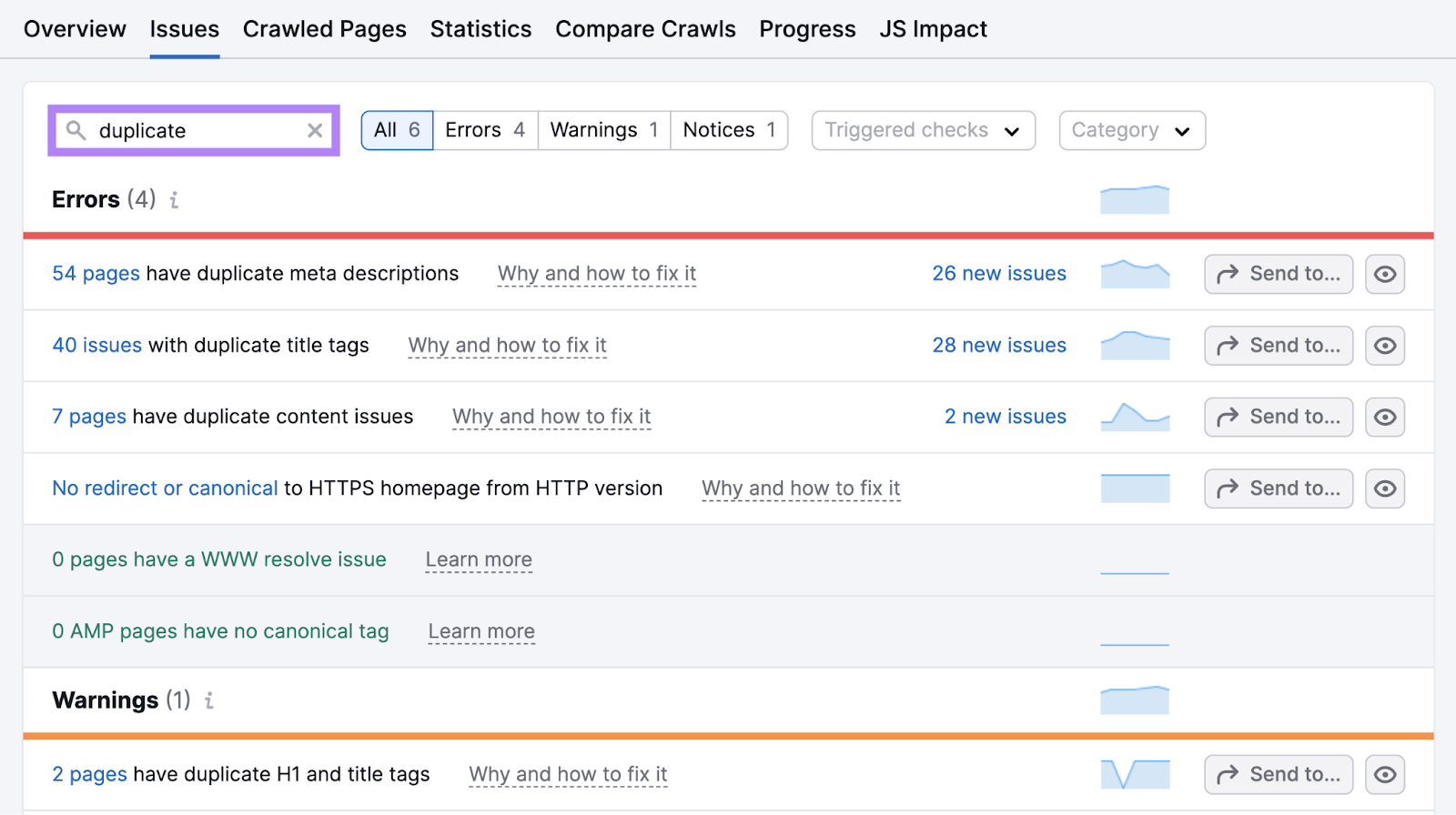

Discover duplicate content material points by clicking “Points” in your Web site Audit challenge and trying to find “duplicate.”

Click on the hyperlink in “# pages have duplicate content material points” to see a listing of affected pages.

When you have duplicates that aren’t serving a objective, embrace any content material from these pages on the principle web page. Then, delete the duplicates and implement a 301 redirects to the principle web page.

If it is advisable to maintain the duplicates, use canonical tags to point which one is the principle one.

Poor Web site High quality

Even when your web site meets all technical necessities, Google could not index all of your pages. Particularly if it would not contemplate your website to be prime quality.

In an episode of search engine optimization Workplace Hours, John Mueller from Google advises prioritizing website high quality:

When you have a smaller website and also you’re seeing a major a part of your pages should not being listed, then I’d take a step again and attempt to rethink the general high quality of the web site and never focus a lot on technical points for these pages.

If this appears like your scenario, observe the three finest practices under to boost it.

Create Excessive-High quality Content material

High quality content material that’s “useful, dependable, and people-first” is extra more likely to be listed and served in search outcomes.

Listed below are some suggestions to enhance the standard of the content material you publish in your website:

- Heart your content material round clients’ wants and ache factors. Deal with pertinent issues and questions and supply actionable options.

- Showcase your experience. Publish content material written by or together with insights from material specialists. Share real-life examples and your model’s expertise with the subject.

- Replace your content material commonly. Make sure that what you put up is related and updated. Run common content material audits to determine errors, outdated data, and alternatives for enchancment.

Construct Related Backlinks

Google views backlinks (hyperlinks on different websites that time to your website) from industry-relevant, high-quality web sites as suggestions. So, the extra profitable your hyperlink constructing efforts (proactively taking steps to achieve backlinks) are, the higher your possibilities of rating.

And having extra backlinks helps with indexing. As a result of Google’s crawler finds new pages to index via hyperlinks.

You need to use completely different hyperlink constructing techniques to achieve extra high-quality hyperlinks. For instance, doing focused outreach to journalists and bloggers, writing articles for different websites, and analyzing rivals’ backlinks for alternatives you’ll be able to replicate.

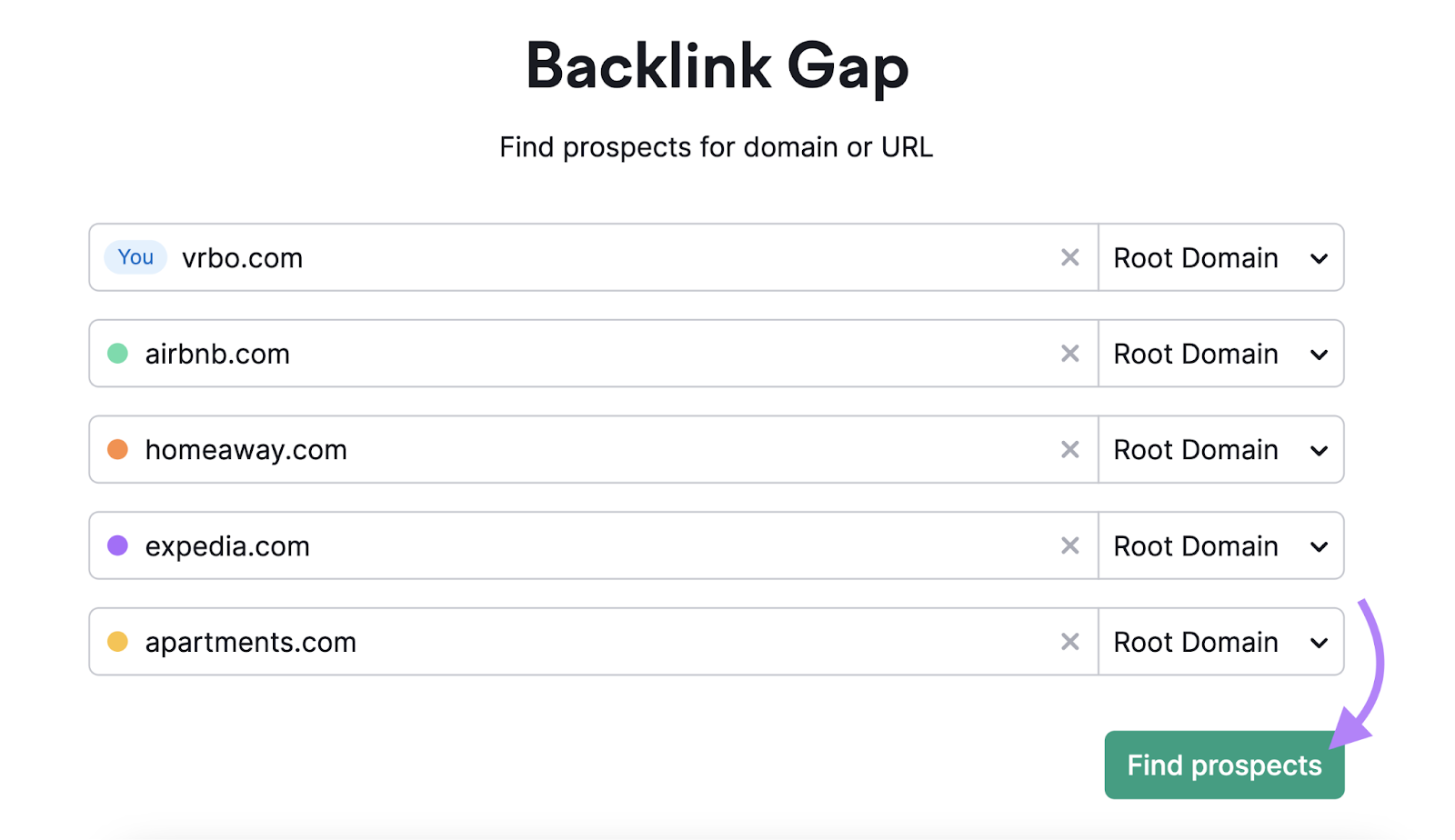

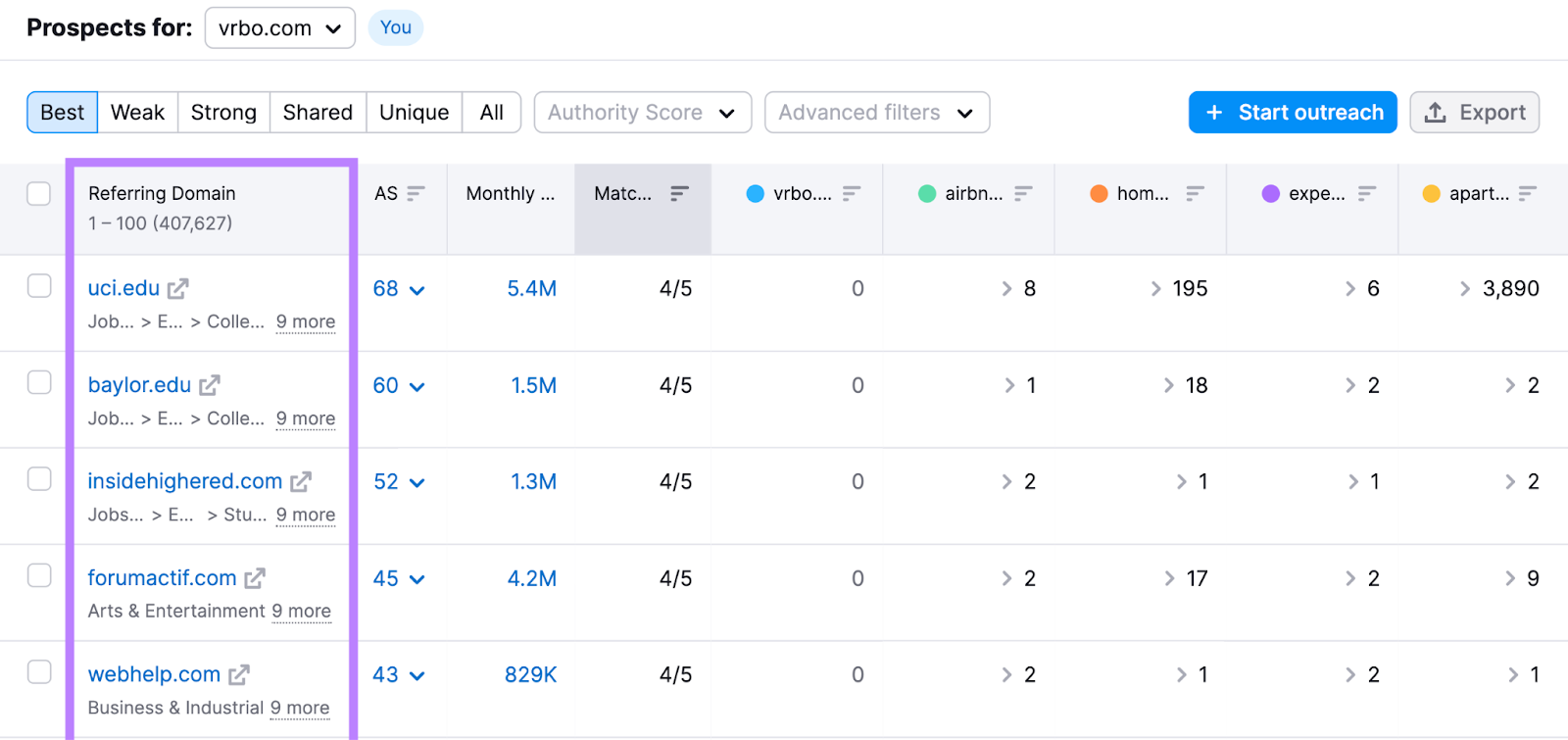

Use Backlink Hole to dive deeper into competitor backlinks.

Enter your area and as much as 4 rivals’s domains. Click on “Discover prospects.”

The “Greatest” tab reveals you web sites that hyperlink to all of your rivals however to not you.

Look via your rivals’ pages and discover how one can replicate among the backlinks. Listed below are a couple of examples:

- Contributing knowledgeable insights: Discover web sites the place rival manufacturers publish visitor articles, get cited as material specialists, or seem as podcast company. Attain out to these web sites to discover how one can be featured.

- Create higher content material: See which industry-leading on-line publications your rivals seem on. Think about creating an analogous however higher web page with unique insights, after which pitch it to these publications as a alternative hyperlink.

Additional studying: The right way to Discover Your Opponents’ Backlinks: A Step-by-Step Information

Enhance E-E-A-T Alerts

E-E-A-T stands for “Expertise, Experience, Authoritativeness, and Trustworthiness.” These are a part of Google’s Search High quality Rater Tips that actual individuals use to judge search outcomes.

This implies creating pages with E-E-A-T in thoughts is extra probably to assist your search efficiency.

To enhance your website’s E-E-A-T, goal to:

- Present clear creator data. Spotlight your contributors’ private experiences and experience regarding the matters they write about.

- Collaborate with material specialists. Embrace insights from {industry} specialists. And even rent them to overview your content material and guarantee its accuracy.

- Assist the claims you make. Cite credible sources throughout all of your printed content material. So readers know the knowledge you present is respected.

Additional studying: What Are E-E-A-T and YMYL in search engine optimization & The right way to Optimize for Them

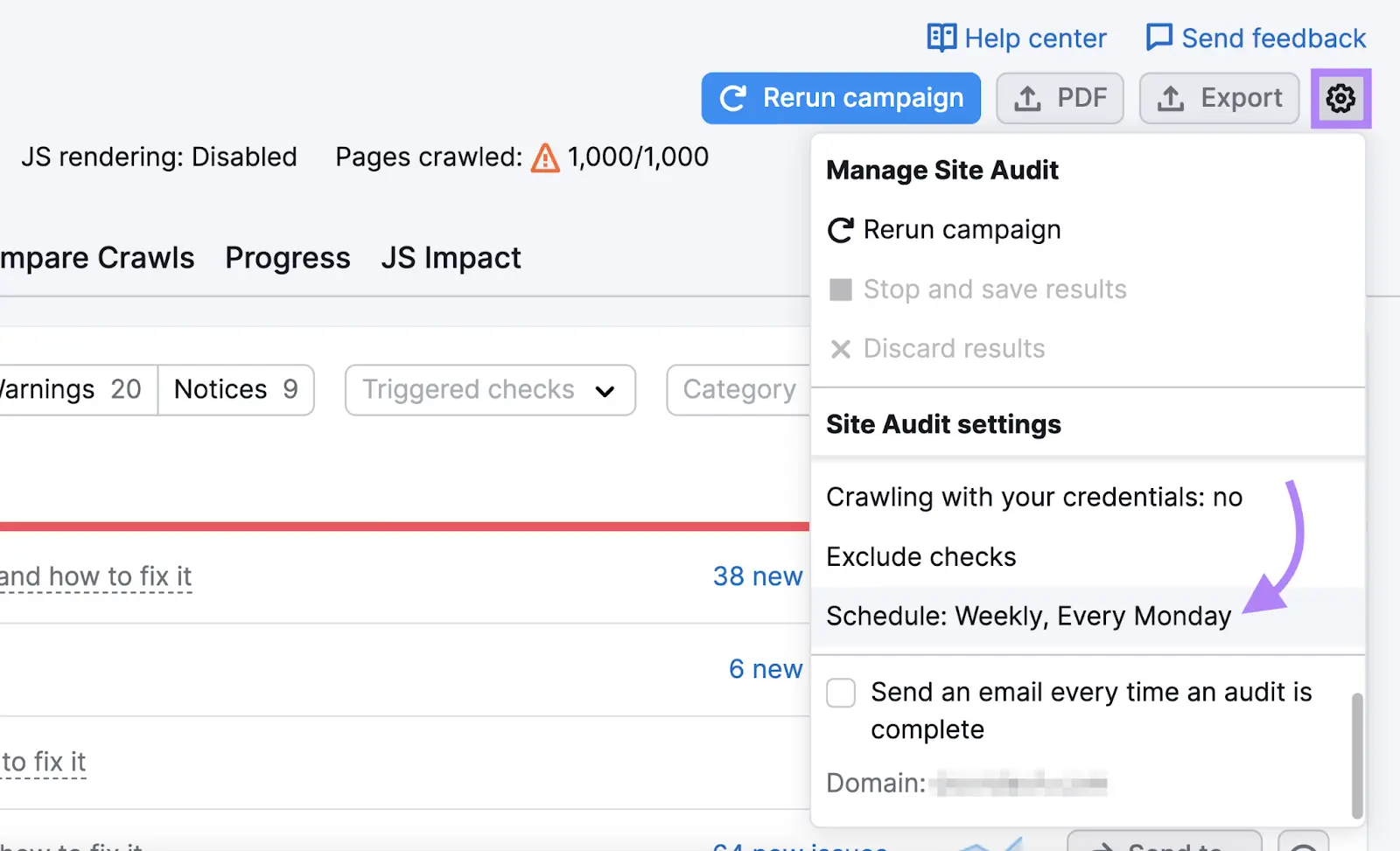

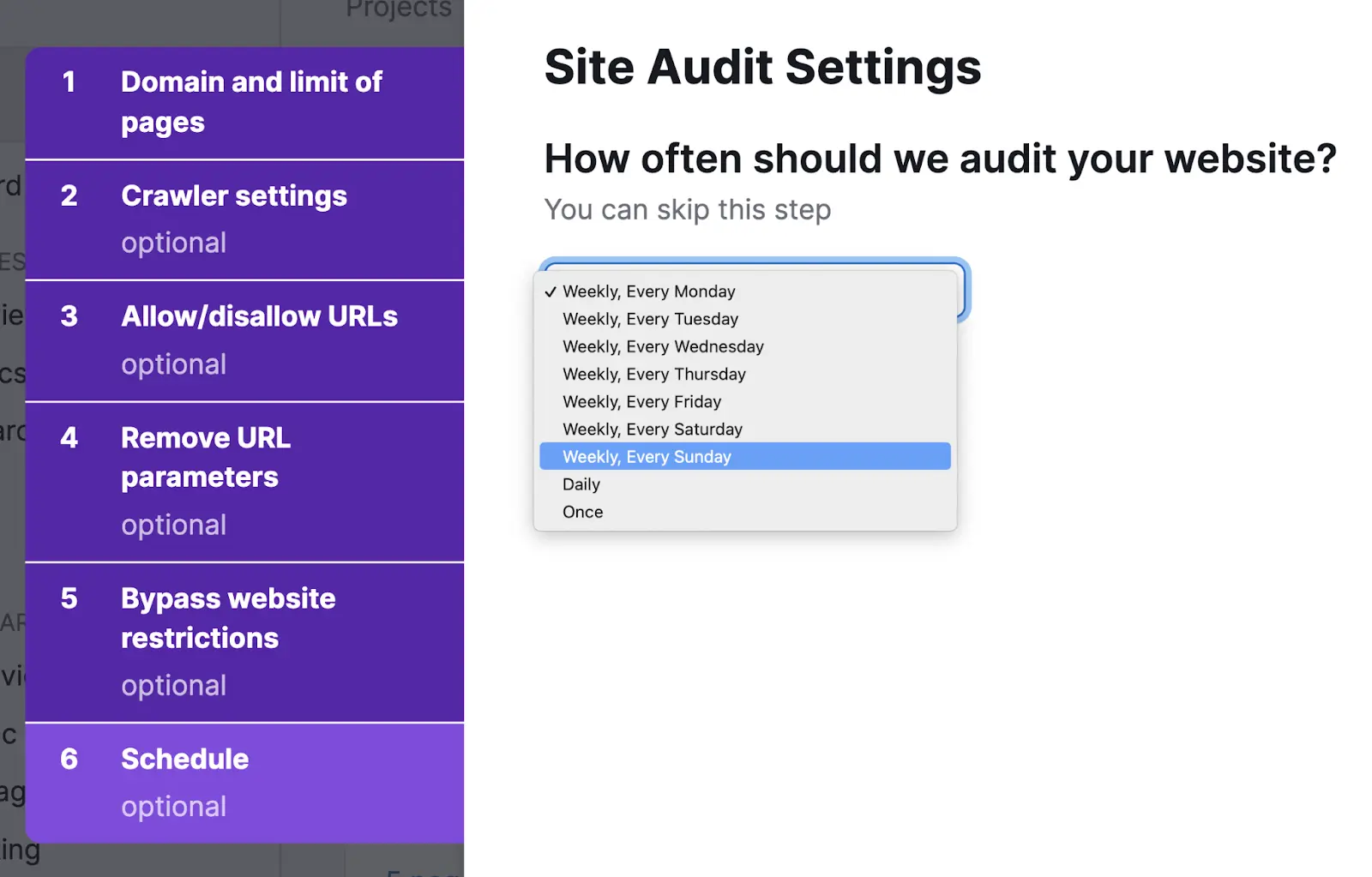

Monitor Your Web site for Indexing Points

Fixing your indexing points isn’t a one-time factor. New points would possibly crop up sooner or later—particularly everytime you add new content material or replace your web site’s construction.

Web site Audit may help you notice new technical issues early earlier than they escalate.

Merely choose periodic audits within the settings.

You’ll get an choice to arrange computerized scans on a each day or weekly foundation

We advocate configuring weekly scans to start out. You possibly can regulate the cadence later as wanted.

Web site Audit will shortly flag any technical issues. Which suggests you’ll be able to handle them earlier than they trigger severe points.

Google Indexing FAQs

How Lengthy Does It Take Google to Index a Web site?

The time Google must index your website varies tremendously, relying on the dimensions of your web site. It may well take a couple of days for smaller websites. And up to some months for big web sites.

How Can You Get Google to Index Your Web site Sooner?

You possibly can particularly ask Google to crawl and index your content material by:

- Submitting your sitemap (for indexing total web sites) in Google Search Console

- Requesting Google indexing (for a single URL) in Google Search Console

What’s the Distinction Between Crawling and Indexing?

Crawling is the invention course of Google’s bot makes use of to observe hyperlinks to search out new web sites and pages. Indexing is when Googlebot analyzes the content material of a web page to grasp it and retailer it for rating functions.

Why Are A few of Your Webpages Not Listed By Google?

Your pages is probably not listed on account of points like:

- Your robots.txt file is obstructing Googlebot from indexing sure pages

- Googlebot cannot discover the web page due to an absence of inside hyperlinks

- There are 404 points

- Your website would possibly has duplicate content material

Discover these points and extra utilizing Web site Audit.