Meta has shared its research on an AI model that can decode speech from noninvasive recordings of brain activity. It has the potential to help people after a traumatic brain injury that has left them unable to communicate through speech, typing, or gestures.

Decoding speech from brain activity has been a long-standing goal of neuroscientists and clinicians, but most of the progress has relied on invasive brain recording techniques, such as stereotactic electroencephalography and electrocorticography.

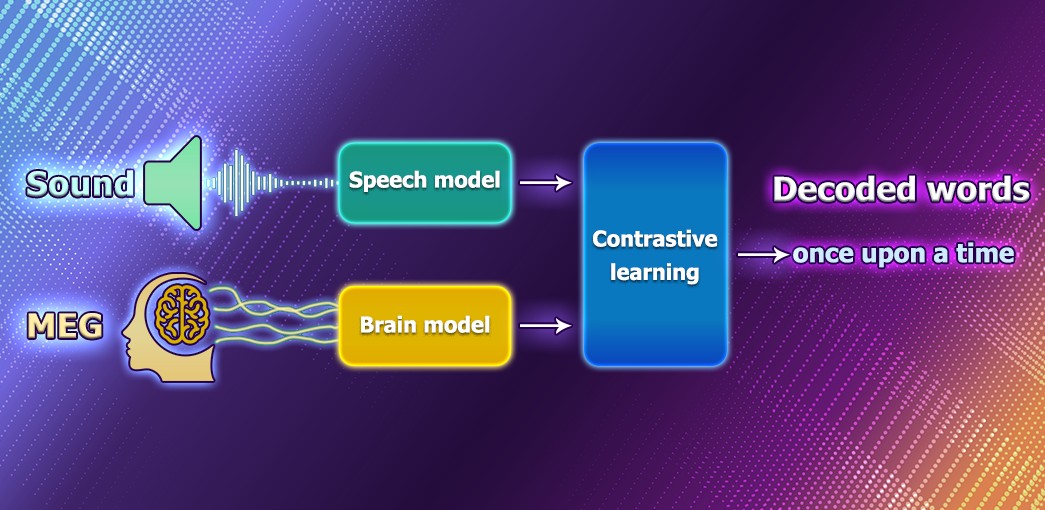

Meanwhile, researchers from Meta think that decoding speech with noninvasive methods would provide a safer, more scalable solution that could ultimately benefit more people. To that end, they created a deep learning model trained with contrastive learning and used it to align noninvasive brain recordings with speech sounds.

To do this, the scientists used wav2vec 2.0, an open source, self-supervised learning model, to identify the complex representations of speech in the brains of volunteers while they listened to audiobooks.

The approach feeds electroencephalography and magnetoencephalography recordings into a “brain” model, which consists of a standard deep convolutional network with residual connections. The resulting architecture then learns to align the output of this brain model with the deep representations of the speech sounds that were presented to the participants.
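The alignment step can be illustrated with a small contrastive objective. The sketch below is not Meta's implementation: it assumes an InfoNCE-style loss, a common choice for contrastive learning, and uses tiny hand-written vectors in place of real brain-model outputs and speech representations. The function name `contrastive_loss` and the `temperature` parameter are hypothetical.

```python
import math


def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def normalize(v):
    """Scale a vector to unit length so dot products become cosine similarities."""
    norm = math.sqrt(dot(v, v))
    return [x / norm for x in v]


def contrastive_loss(brain_embs, speech_embs, temperature=0.1):
    """InfoNCE-style loss (an assumed choice, not confirmed by the article).

    brain_embs[i] and speech_embs[i] are treated as a matching pair; every
    other speech embedding in the batch acts as a negative example.
    """
    brain = [normalize(v) for v in brain_embs]
    speech = [normalize(v) for v in speech_embs]
    total = 0.0
    for i, b in enumerate(brain):
        # Similarity of this brain segment to every speech segment in the batch.
        logits = [dot(b, s) / temperature for s in speech]
        # Numerically stable log-sum-exp over all candidates.
        m = max(logits)
        log_sum_exp = m + math.log(sum(math.exp(l - m) for l in logits))
        # Cross-entropy with the matching speech segment as the target.
        total += log_sum_exp - logits[i]
    return total / len(brain)


# Toy data: two speech segments and two possible sets of brain embeddings.
speech = [[0.9, 0.1], [0.1, 0.9]]
aligned_brain = [[1.0, 0.0], [0.0, 1.0]]   # each row points at its own speech segment
shuffled_brain = [[0.0, 1.0], [1.0, 0.0]]  # each row points at the wrong segment

print(contrastive_loss(aligned_brain, speech))
print(contrastive_loss(shuffled_brain, speech))
```

The loss is small when each brain embedding sits closest to its own speech segment and large when the pairs are mismatched, which is the signal that training would use to pull matching pairs together.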

After training, the system performs what’s known as zero-shot classification: given a snippet of brain activity, it can determine from a large pool of new audio files which one the person actually heard.
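As a rough illustration of that retrieval step, the sketch below ranks a pool of candidate audio clips by cosine similarity to a brain-derived embedding. The similarity measure, the clip names, the embedding values, and the helper `rank_candidates` are all assumptions made for the example; the article does not describe how the actual system scores candidates.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def rank_candidates(brain_emb, candidates):
    """Return candidate clip names ordered from most to least similar.

    `candidates` maps a (hypothetical) clip name to its speech embedding.
    """
    return sorted(
        candidates,
        key=lambda name: cosine(brain_emb, candidates[name]),
        reverse=True,
    )


# Made-up embeddings for three audio clips that were never seen in training.
candidates = {
    "clip_a": [1.0, 0.0, 0.0],
    "clip_b": [0.0, 1.0, 0.0],
    "clip_c": [0.0, 0.0, 1.0],
}
# Made-up output of the brain model for one snippet of recorded activity.
brain_emb = [0.1, 0.8, 0.2]

ranking = rank_candidates(brain_emb, candidates)
print(ranking[0])  # the clip the system would guess the person heard
```

Because the candidates are only compared against the embedding and never used as training labels, new audio files can be added to the pool without retraining, which is what makes the classification "zero-shot".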

According to Meta: “The results of our research are encouraging because they show that self-supervised trained AI can successfully decode perceived speech from noninvasive recordings of brain activity, despite the noise and variability inherent in those data. These results are only a first step, however. In this work, we focused on decoding speech perception, but the ultimate goal of enabling patient communication will require extending this work to speech production. This line of research could even reach beyond assisting patients to potentially include enabling new ways of interacting with computers.”

Learn more about the research here.