Large Language Models (LLMs) are pivotal in advancing natural language processing tasks because of their strong understanding and generation capabilities. These models are continually refined to better comprehend and execute complex instructions across varied applications. Despite significant progress in this field, a persistent issue remains: LLMs often produce outputs that only partially adhere to the given instructions. This misalignment can lead to inefficiencies, especially when the models are applied to specialized tasks requiring high accuracy.

Existing research includes fine-tuning LLMs with human-annotated data, as demonstrated by models like GPT-4. Frameworks such as WizardLM and its advanced iteration, WizardLM+, increase instruction complexity to improve model training. Studies by Zhao et al. and Zhou et al. affirm the importance of instruction complexity in model alignment. Additionally, Schick and Schütze advocate automating synthetic data generation by leveraging LLMs’ in-context learning capabilities. Techniques from knowledge distillation, introduced by Hinton et al., also contribute to refining LLMs for specific instructional tasks.

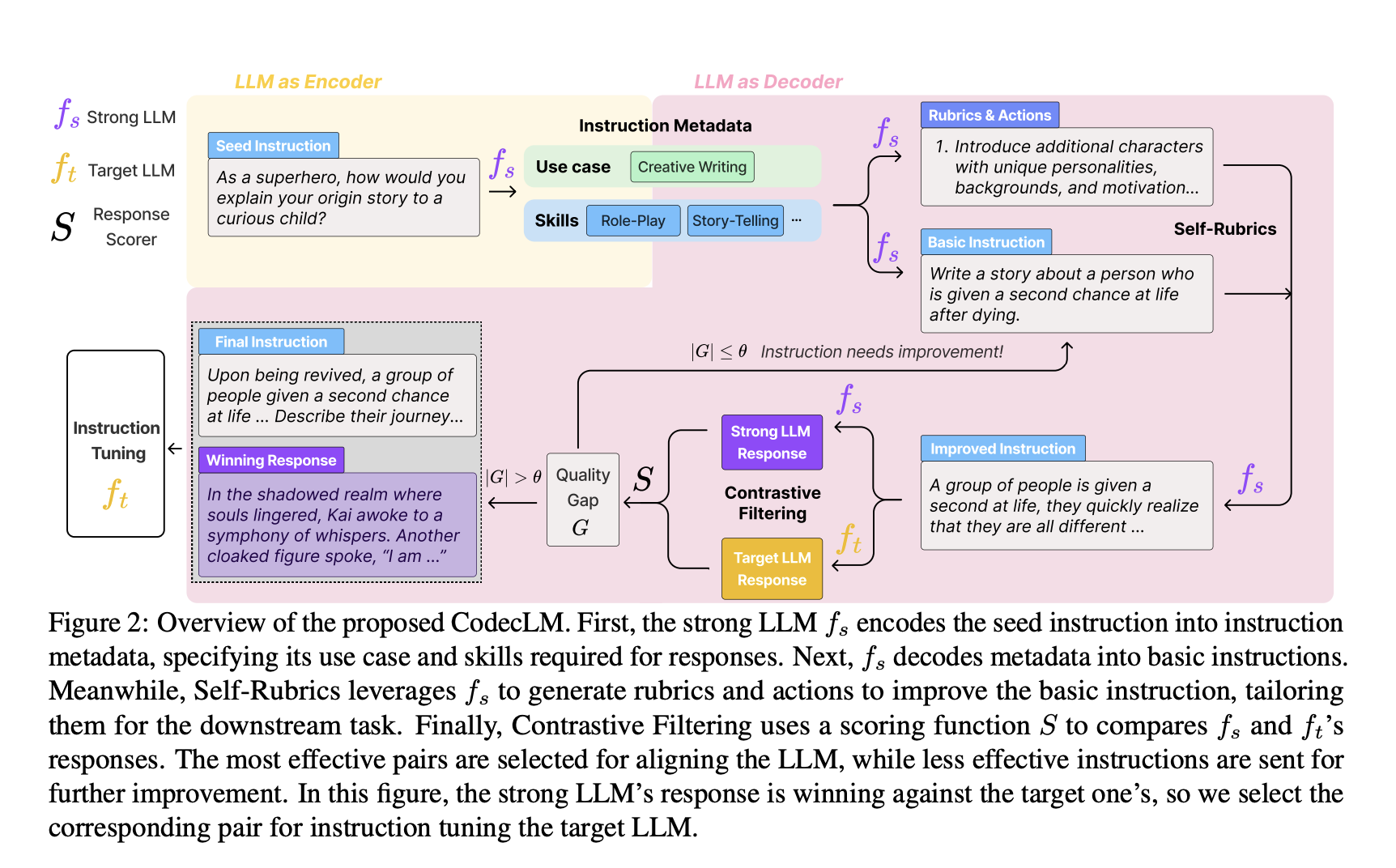

Researchers at Google Cloud AI have developed CodecLM, an innovative framework designed to align LLMs with specific user instructions through tailored synthetic data generation. CodecLM distinguishes itself by using an encode-decode mechanism to produce highly customized instructional data, so that LLMs perform well across diverse tasks. This method leverages Self-Rubrics and Contrastive Filtering techniques, enhancing the relevance and quality of synthetic instructions and significantly improving the models’ ability to follow complex instructions accurately.

CodecLM employs an encode-decode approach, transforming initial seed instructions into concise metadata that captures essential instruction characteristics. This metadata then guides the generation of synthetic instructions tailored to specific user tasks. To improve instruction quality and relevance, the framework uses Self-Rubrics to add complexity and specificity, and Contrastive Filtering to select the most effective instruction-response pairs based on performance metrics. The effectiveness of CodecLM is validated across several open-domain instruction-following benchmarks, demonstrating significant improvements in LLM alignment compared to traditional methods, without relying on extensive manual data annotation.
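
The flow described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the authors' implementation: the `Metadata` fields, the function names, and the toy stand-ins for LLM calls are all hypothetical, the Self-Rubrics step is omitted, and the filtering rule (keep a pair when a stronger model's response outscores the target model's response by at least `min_gap`) is one assumed reading of "based on performance metrics".

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass(frozen=True)
class Metadata:
    """Compact summary of a seed instruction (field names are hypothetical)."""
    use_case: str
    skills: Tuple[str, ...]


@dataclass(frozen=True)
class Pair:
    instruction: str
    response: str


def encode(seed: str, describe: Callable[[str], Metadata]) -> Metadata:
    """Encode step: compress a seed instruction into metadata."""
    return describe(seed)


def decode(meta: Metadata, generate: Callable[[Metadata], str]) -> str:
    """Decode step: expand metadata into a new synthetic instruction."""
    return generate(meta)


def contrastive_filter(
    instructions: List[str],
    strong_answer: Callable[[str], str],
    target_answer: Callable[[str], str],
    score: Callable[[str, str], float],
    min_gap: float,
) -> List[Pair]:
    """Keep instructions where the strong model clearly beats the target model.

    Assumption: a pair is "effective" when the score gap is at least min_gap.
    """
    kept = []
    for instruction in instructions:
        strong = strong_answer(instruction)
        target = target_answer(instruction)
        gap = score(instruction, strong) - score(instruction, target)
        if gap >= min_gap:
            kept.append(Pair(instruction, strong))
    return kept


# Toy stand-ins for LLM calls so the sketch runs without any model.
def toy_describe(seed: str) -> Metadata:
    words = seed.lower().rstrip(".").split()
    return Metadata(use_case=words[0], skills=tuple(words[1:3]))


def toy_generate(meta: Metadata) -> str:
    return f"Write a harder '{meta.use_case}' task involving {' '.join(meta.skills)}."


def toy_strong(instruction: str) -> str:
    return "strong:" + instruction


def toy_target(instruction: str) -> str:
    return "target:" + instruction


def toy_score(instruction: str, response: str) -> float:
    # Pretend the target model only struggles with the 'summarize' task.
    if response.startswith("strong:"):
        return 9.0
    return 4.0 if "summarize" in instruction else 8.5


seeds = ["Summarize legal contracts.", "Translate short poems."]
synthetic = [decode(encode(s, toy_describe), toy_generate) for s in seeds]
kept = contrastive_filter(synthetic, toy_strong, toy_target, toy_score, min_gap=2.0)
```

With these toy functions, only the 'summarize' instruction survives filtering, because it is the only one where the assumed score gap reaches `min_gap`. In a real setup, each callable would wrap a model call and the scorer would be a learned or LLM-based judge.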

CodecLM’s performance was rigorously evaluated across several benchmarks. On the Vicuna benchmark, CodecLM recorded a Capacity Recovery Ratio (CRR) of 88.75%, a 12.5% improvement over its nearest competitor. On the Self-Instruct benchmark, it achieved a CRR of 82.22%, a 15.2% increase over the closest competing model. These figures confirm CodecLM’s effectiveness in enhancing LLMs’ ability to follow complex instructions with higher accuracy and closer alignment to specific user tasks.
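
The article does not define CRR, so the following is a sketch under an assumption: that CRR is the percentage of pairwise comparisons against a stronger reference model in which the target model wins or ties. The function name and the win/tie/loss counts are illustrative and are not taken from the paper; the counts were chosen only so that the result matches the reported Vicuna figure.

```python
def capacity_recovery_ratio(wins: int, ties: int, losses: int) -> float:
    """Return CRR as a percentage, assuming CRR = (wins + ties) / total comparisons."""
    total = wins + ties + losses
    if total == 0:
        raise ValueError("at least one comparison is required")
    return 100.0 * (wins + ties) / total


# Illustrative counts only (not from the paper): 71 of 80 comparisons won or tied.
example_crr = capacity_recovery_ratio(wins=40, ties=31, losses=9)
print(f"CRR = {example_crr:.2f}%")  # prints "CRR = 88.75%"
```

Under this reading, a higher CRR means the tuned model more often matches or beats the stronger reference model on the benchmark prompts.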

In conclusion, CodecLM represents a significant advance in aligning LLMs with specific user instructions by generating tailored synthetic data. By leveraging an innovative encode-decode approach, enhanced by Self-Rubrics and Contrastive Filtering, CodecLM significantly improves the accuracy with which LLMs follow complex instructions. This improvement in LLM performance has practical implications, offering a scalable, efficient alternative to traditional, labor-intensive methods of LLM training and enhancing the models’ ability to align with specific user tasks.

Check out the Paper. All credit for this research goes to the researchers of this project.

Nikhil is an intern consultant at Marktechpost. He’s pursuing an integrated dual degree in Materials at the Indian Institute of Technology, Kharagpur. Nikhil is an AI/ML enthusiast who’s always researching applications in fields like biomaterials and biomedical science. With a strong background in Material Science, he’s exploring new advancements and creating opportunities to contribute.