What Is Bot Traffic?

Bot traffic is non-human traffic to websites and apps generated by automated software programs, or “bots,” rather than by human users.

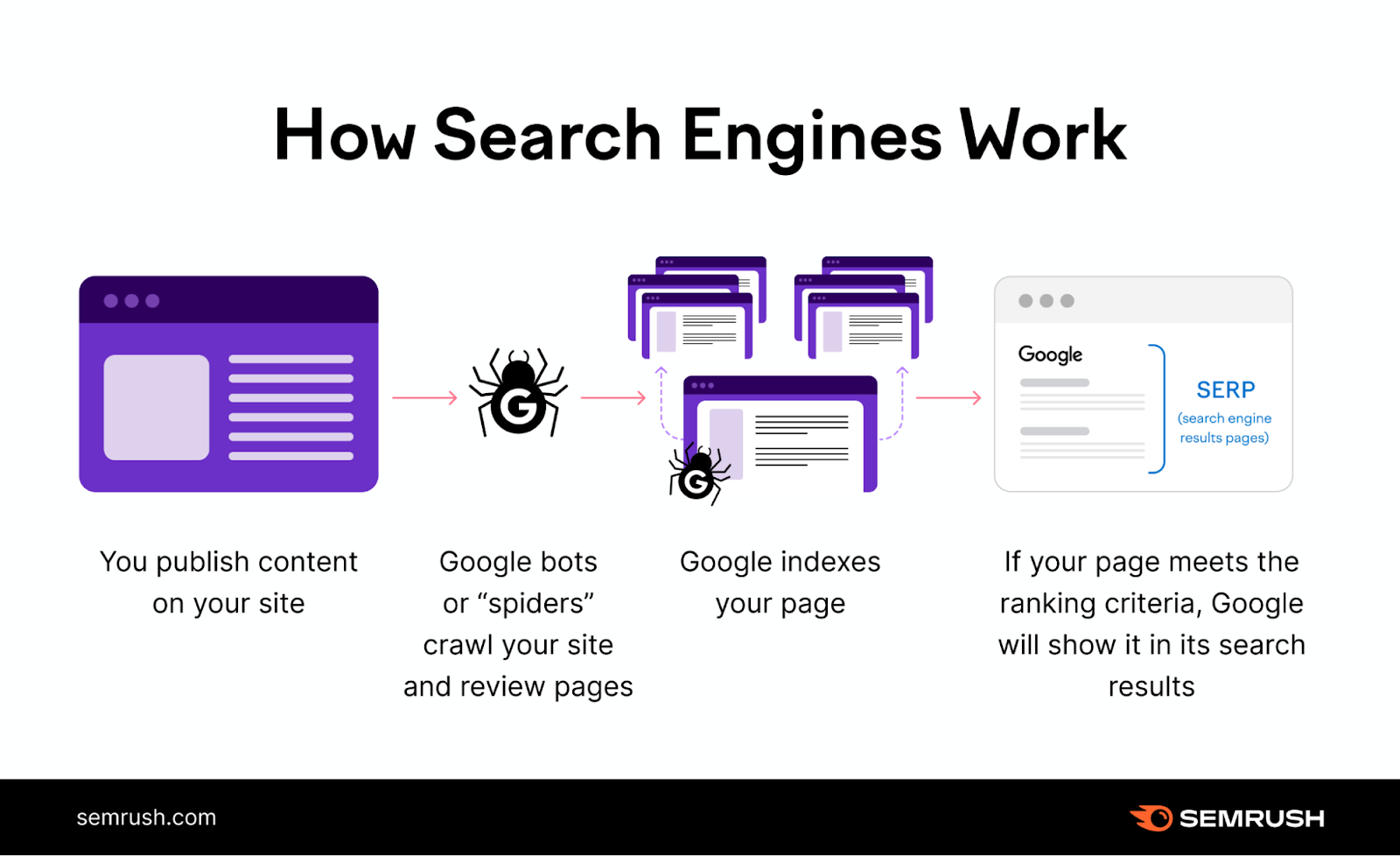

Bot traffic isn’t valuable traffic, but it’s common to see it. Search engine crawlers (also called “spiders”) may visit your site on a regular basis, for example.

Bot traffic typically won’t result in conversions or revenue for your business. The exception is ecommerce: you might see shopping bots that make purchases on behalf of their human creators.

However, this mostly applies to businesses that sell in-demand items like concert tickets or limited sneaker releases.

Some bots visit your site to crawl pages for search engine indexing or check site performance. Other bots may attempt to scrape (extract data) from your site or deliberately overwhelm your servers to attack your site’s accessibility.

Good Bots vs. Bad Bots: Identifying the Differences

There are both helpful and harmful bots. Below, we explain how they differ.

Good Bots

Common good bots include but aren’t limited to:

- Crawlers from SEO tools: Tool bots, such as the SemrushBot, crawl your site to help you make informed decisions, like optimizing meta tags and assessing the indexability of pages. These bots help you meet SEO best practices.

- Site monitoring bots: These bots can check for system outages and monitor the performance of your website. We use SemrushBot with tools like Site Audit and more to alert you of issues like downtime and slow response times. Continuous monitoring helps maintain optimal site performance and availability for your visitors.

- Search engine crawlers: Search engines use bots, such as Googlebot, to index and rank the pages of your website. Without these bots crawling your site, your pages wouldn’t get indexed, and people wouldn’t find your business in search results.

Unhealthy Bots

You could not see visitors from or proof of malicious bots regularly, however you need to all the time take into accout the potential of being focused.

Unhealthy bots embrace however aren’t restricted to:

- Scrapers: Bots can scrape and duplicate content material out of your web site with out your permission. Publishing that data elsewhere is mental property theft and copyright infringement. If individuals see your content material duplicated elsewhere on the internet, the integrity of your model could also be compromised.

- Spam bots: Bots may also create and distribute spam content material, corresponding to phishing emails, faux social media accounts, and discussion board posts. Spam can deceive customers and compromise on-line safety by tricking them into revealing delicate data.

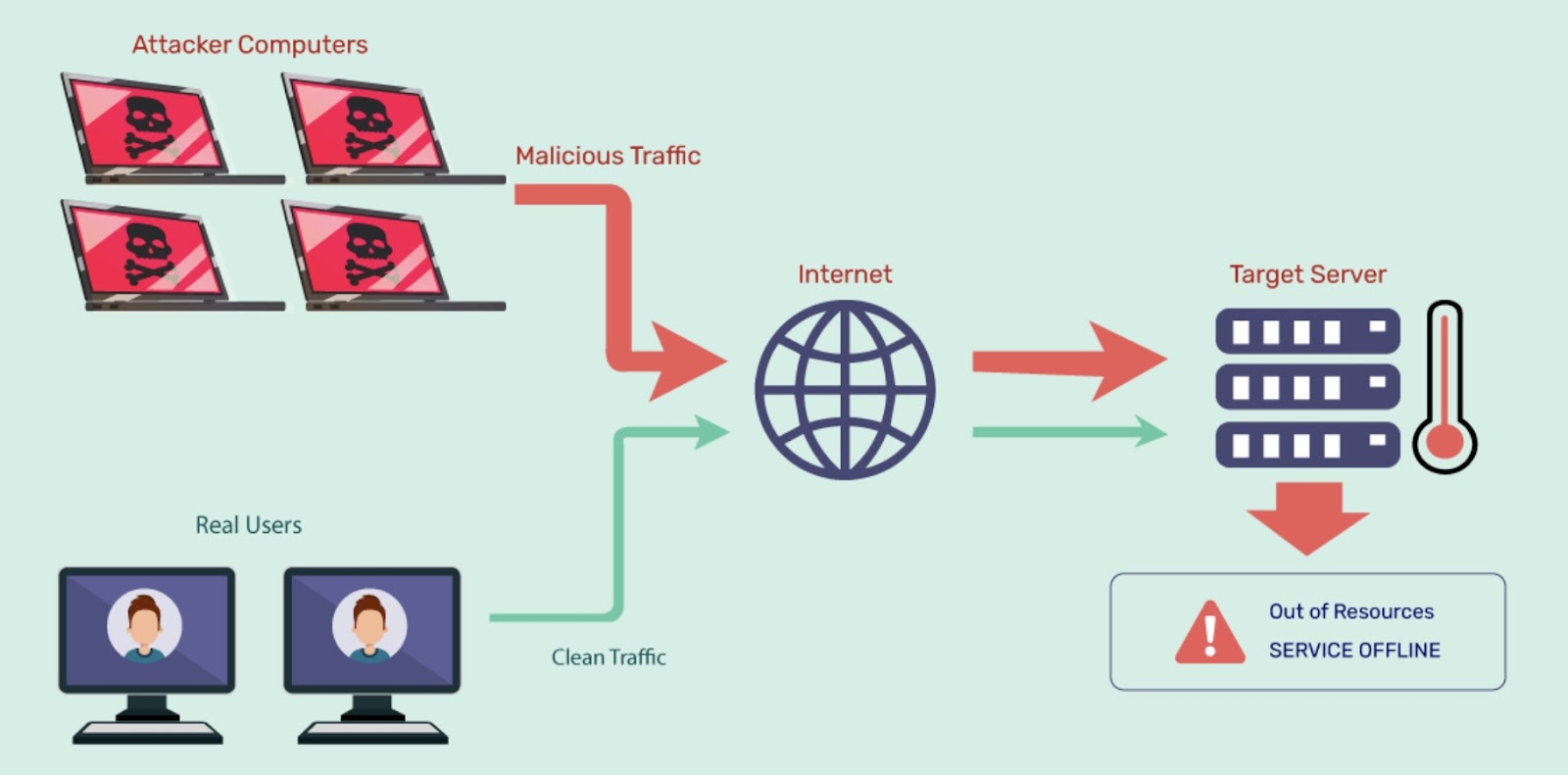

- DDoS bots: DDoS (Distributed Denial-of-Service) bots goal to overwhelm your servers and forestall individuals from accessing your web site by sending a flood of pretend visitors. These bots can disrupt your website’s availability, resulting in downtime and monetary losses if customers aren’t capable of entry or purchase what they want.

Picture Supply: Indusface

Further reading: 11 Crawlability Problems and How to Fix Them

How Bot Traffic Affects Websites and Analytics

Bot traffic can skew website analytics and lead to inaccurate data by affecting the following:

- Page views: Bot traffic can artificially inflate the number of page views, making it seem like users are engaging with your website more than they really are

- Session duration: Bots can affect the session duration metric, which measures how long users stay on your site. Bots that browse your website quickly or slowly can alter the average session duration, making it challenging to assess the true quality of the user experience.

- Location of users: Bot traffic creates a false impression of where your site’s visitors are coming from by masking their IP addresses or using proxies

- Conversions: Bots can interfere with your conversion goals, such as form submissions, purchases, or downloads, with fake information and email addresses

Bot traffic can also negatively impact your website’s performance and user experience by:

- Consuming server resources: Bots can consume bandwidth and server resources, especially if the traffic is malicious or high-volume. This can slow down page load times, increase hosting costs, and even cause your site to crash.

- Damaging your reputation and security: Bots can harm your site’s reputation and security by stealing or scraping content, prices, and data. An attack (such as DDoS) could cost you revenue and customer trust. With your site potentially inaccessible, your competitors may benefit if users turn to them instead.

Security Risks Associated with Malicious Bots

All websites are vulnerable to bot attacks, which can compromise security, performance, and reputation. Attacks can target all types of websites, regardless of size or popularity.

Bot traffic makes up nearly half of all internet traffic, and more than 30% of automated traffic is malicious.

Malicious bots can pose security threats to your website as they can steal data, spam, hijack accounts, and disrupt services.

Two common security threats are data breaches and DDoS attacks:

- Data breaches: Malicious bots can infiltrate your site to access sensitive information like personal data, financial records, and intellectual property. Data breaches from these bots can result in fraud, identity theft of people at your business or your site’s visitors, reputational damage to your brand, and more.

- DDoS attacks: Malicious bots can also launch DDoS attacks that make your site slow or unavailable for human users. These attacks can result in service disruption, revenue loss, and dissatisfied users.

How to Detect Bot Traffic

Detecting bot traffic is important for website security and accurate analytics.

Identify Bots with Tools and Techniques

There are various tools and techniques to help you detect bot traffic on your website.

Some of the most common ones are:

- IP analysis: Compare the IP addresses of your site’s visitors against known bot IP lists. Look for IP addresses with unusual characteristics, such as high request rates, low session durations, or geographic anomalies.

- Behavior analysis: Monitor the behavior of visitors and look for signs that indicate bot activity, such as repetitive patterns, unusual site navigation, and low session times
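As a rough illustration of the request-rate check, here is a minimal Python sketch. It assumes you already have a list of `(ip, timestamp)` pairs pulled from your own logs. The function name `flag_high_rate_ips` and the default threshold of 100 requests per minute are hypothetical choices for this example, not values recommended by any tool.

```python
from collections import defaultdict
from datetime import timedelta


def flag_high_rate_ips(requests, max_requests=100, window=timedelta(minutes=1)):
    """Return the set of IPs that sent more than max_requests within any window.

    requests: iterable of (ip, timestamp) pairs, where timestamp is a datetime.
    """
    by_ip = defaultdict(list)
    for ip, timestamp in requests:
        by_ip[ip].append(timestamp)

    flagged = set()
    for ip, times in by_ip.items():
        times.sort()
        start = 0
        for end, timestamp in enumerate(times):
            # Move the left edge forward until the span fits inside the window.
            while timestamp - times[start] > window:
                start += 1
            if end - start + 1 > max_requests:
                flagged.add(ip)
                break
    return flagged
```

A high request rate alone does not prove an address belongs to a bad bot (search engine crawlers can be busy too), so treat the result as a list of addresses to investigate rather than a block list.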

Log File Analysis

Analyze the log files of your web server. Log files record every request made to your site and provide valuable information about your website traffic, such as the user agent, referrer, response code, and request time.
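If you want to inspect a log file by hand before using a dedicated tool, a short script can pull out those fields. The sketch below assumes your server writes the Combined Log Format, a layout commonly used by Apache and nginx. Your own log format may differ, in which case the pattern needs adjusting. The helper names `parse_line` and `count_user_agents` are made up for this example.

```python
import re
from collections import Counter
from datetime import datetime

# Assumed layout: Combined Log Format.
# ip ident user [time] "method path protocol" status bytes "referrer" "user agent"
LOG_PATTERN = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "(?P<referrer>[^"]*)" "(?P<user_agent>[^"]*)"$'
)


def parse_line(line):
    """Parse one log line into a dict, or return None if it does not match."""
    match = LOG_PATTERN.match(line.strip())
    if match is None:
        return None
    entry = match.groupdict()
    entry["status"] = int(entry["status"])
    entry["time"] = datetime.strptime(entry["time"], "%d/%b/%Y:%H:%M:%S %z")
    return entry


def count_user_agents(lines):
    """Count requests per user agent string, skipping unparseable lines."""
    counts = Counter()
    for line in lines:
        entry = parse_line(line)
        if entry is not None:
            counts[entry["user_agent"]] += 1
    return counts
```

Keep in mind that the user agent is supplied by the client, so a request that claims to come from a well-known crawler may not actually be from it.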

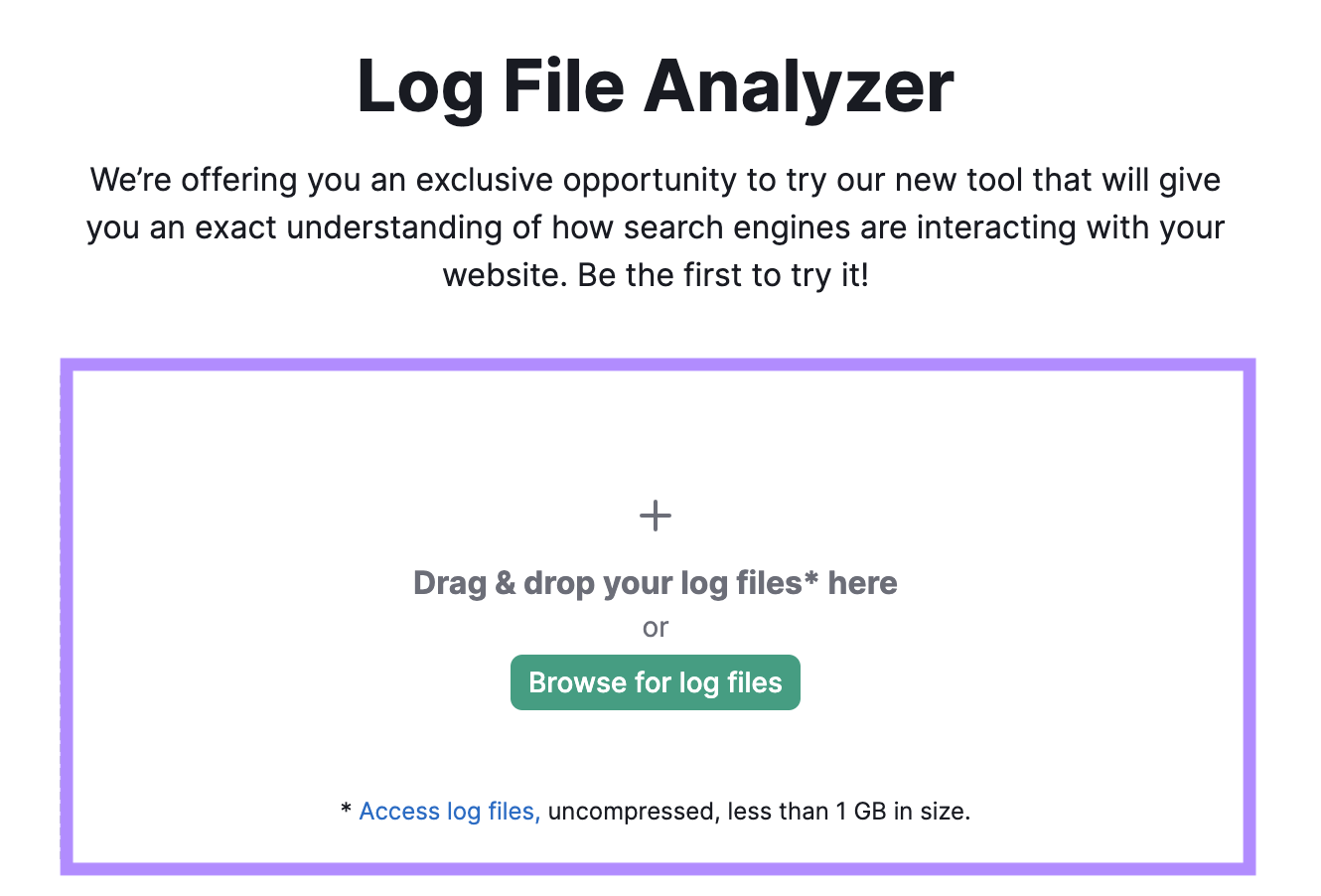

A log file analysis can also help you spot issues crawlers might face with your site. Semrush’s Log File Analyzer allows you to better understand how Google crawls your website.

Here’s how to use it:

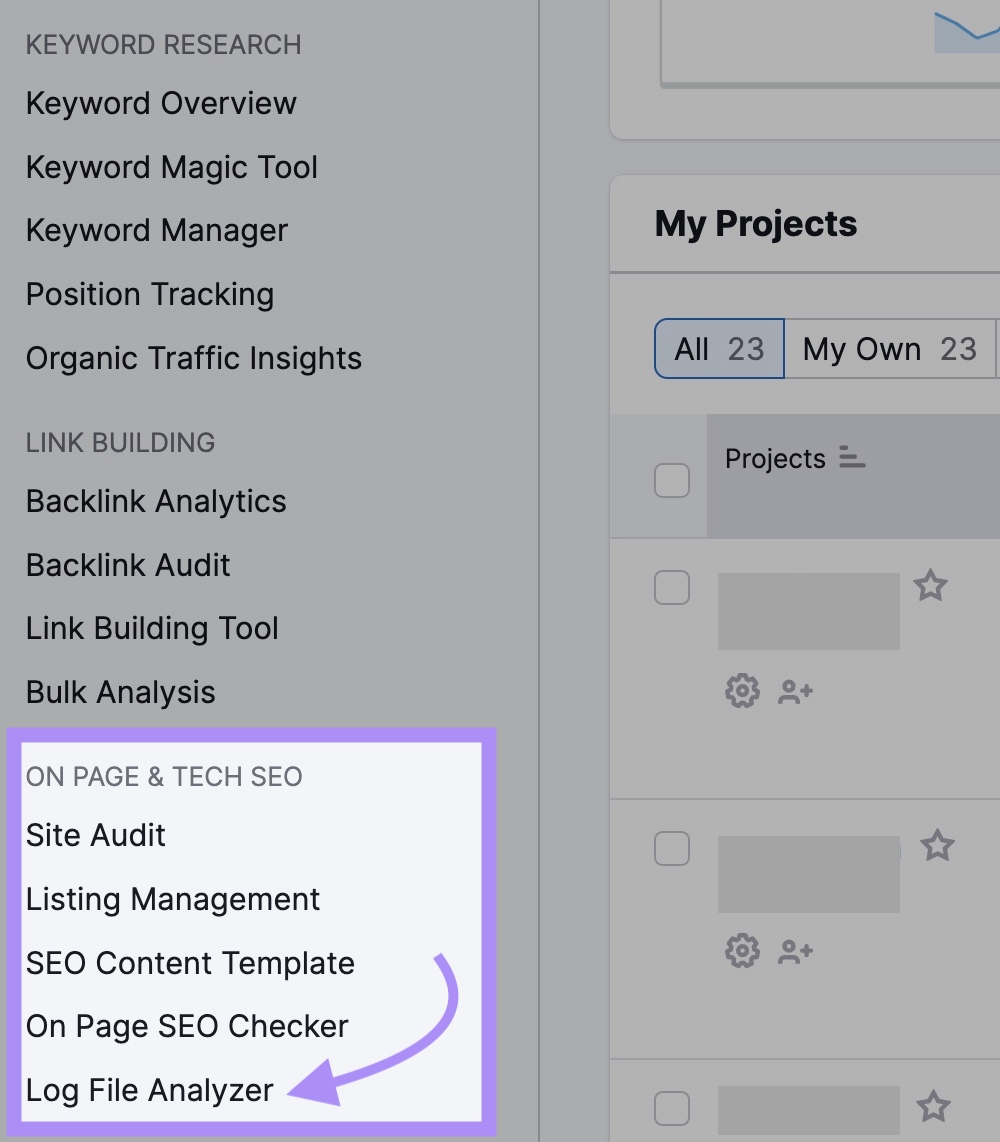

Go straight to the Log File Analyzer or log in to your Semrush account. Access the tool through the left navigation under “ON PAGE & TECH SEO.”

Before using the tool, get a copy of your site’s log file from your web server.

The most common way of accessing it is through a file transfer protocol (FTP) client like FileZilla. Or, ask your development or IT team for a copy of the file.

Once you have the log file, drop it into the analyzer.

Then click “Start Log File Analyzer.”

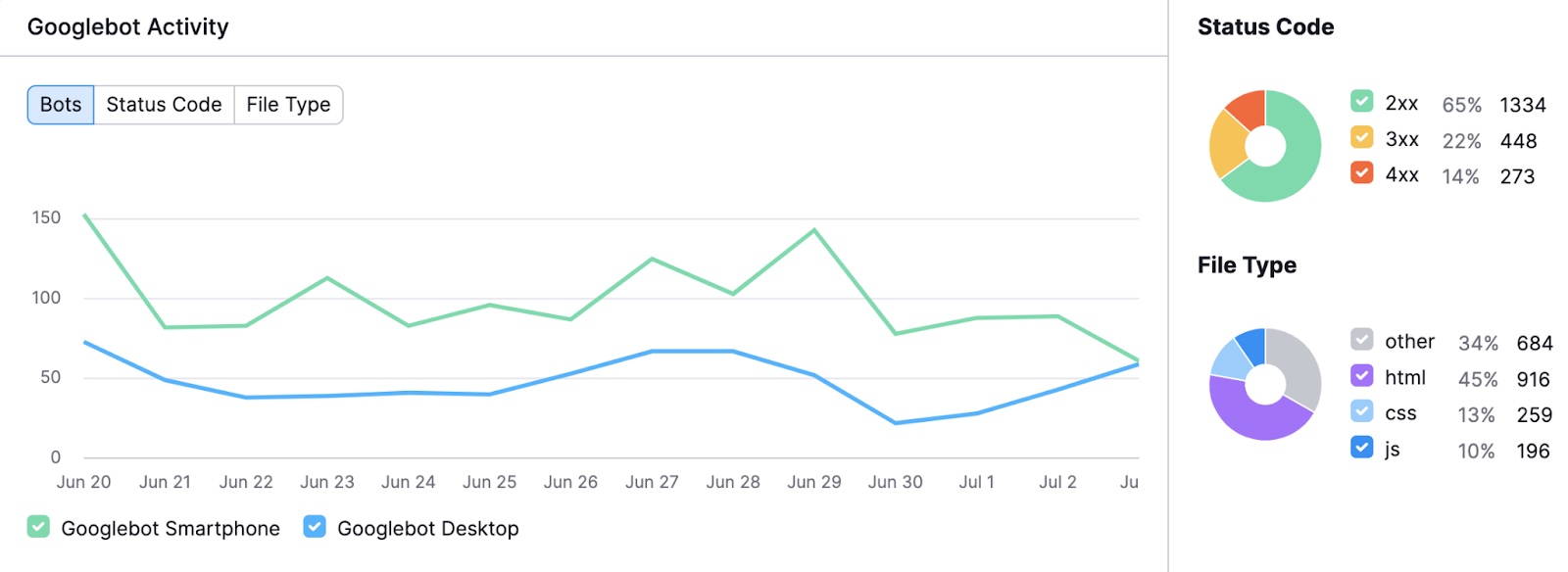

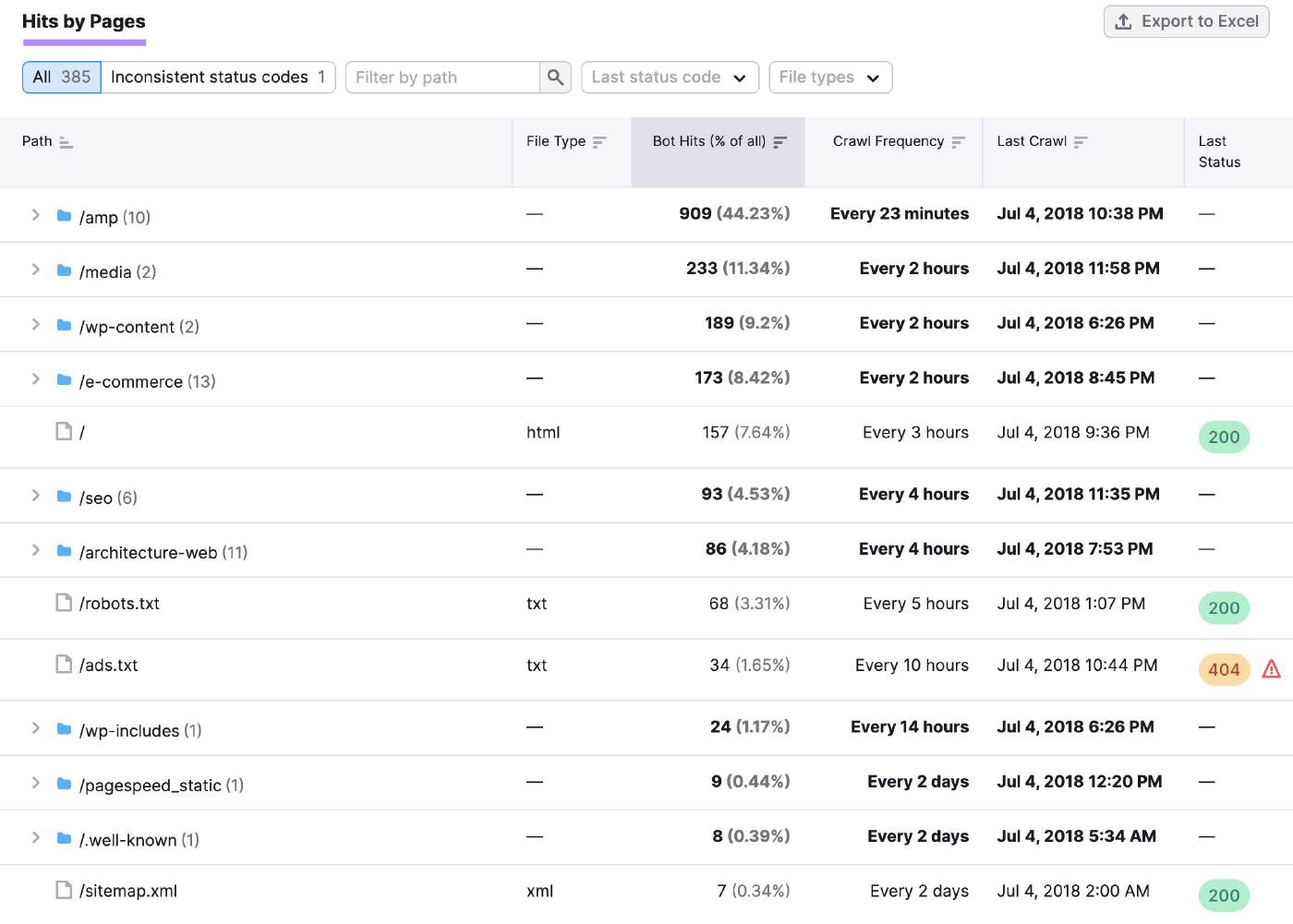

A chart will display smartphone and desktop Googlebot activity, showing daily hits, status codes, and the requested file types.

If you scroll down to “Hits by Pages,” there’s a table where you can drill down to specific pages and folders. This can help determine if you’re wasting crawl budget, as Google only crawls so many of your pages at a time.

The table shows the number of bot hits, which pages and folders are crawled the most, the time of the last crawl, and the last reported server status. The analysis gives you insights to improve your site’s crawlability and indexability.

Analyze Web Traffic Patterns

To learn how to identify bot traffic in Google Analytics and other platforms, analyze the traffic patterns of your website. Look for anomalies that might indicate bot activity.

Examples of suspicious patterns include:

Spikes or Drops in Traffic

Large changes in traffic could be a sign of bot activity. For example, a spike might indicate a DDoS attack. A drop might be the result of a bot scraping your content, which can reduce your rankings.

Duplication on the web can muddy your content’s uniqueness and authority, potentially leading to lower rankings and fewer clicks.

Low Number of Views per User

A large percentage of visitors landing on your site but only viewing one page might be a sign of click fraud. Click fraud is the act of clicking on links with disingenuous or malicious intent.

An average engagement time of zero to one second would help confirm that users with a low number of views are bots.

Zero Engagement Time

Bots don’t interact with your website like humans do, often arriving and then leaving immediately. If you see traffic with an average engagement time of zero seconds, it may be from bots.
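The two signals above (a single page view and near-zero engagement) are easy to combine once you have exported session data. The following Python sketch uses a hypothetical `Session` record with made-up field names. It is not tied to any analytics platform’s export format, and the one-second cutoff simply mirrors the range mentioned above.

```python
from dataclasses import dataclass


@dataclass
class Session:
    session_id: str
    page_views: int
    engagement_seconds: float


def likely_bot_sessions(sessions, max_views=1, max_engagement=1.0):
    """Return IDs of sessions with few page views and near-zero engagement."""
    return [
        s.session_id
        for s in sessions
        if s.page_views <= max_views and s.engagement_seconds <= max_engagement
    ]
```

Real visitors sometimes bounce immediately too, so this kind of filter is best used to spot clusters worth a closer look, not to label individual sessions with certainty.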

High Conversion Rate

An unusually large percentage of your visitors completing a desired action, such as buying an item or filling out a form, might indicate a credential stuffing attack. This type of attack is when your forms are filled out with stolen or fake user information in an attempt to breach your site.

Suspicious Sources and Referrals

Traffic coming from the “unassigned” medium, which means the traffic has no identifiable source, can be unusual for human visitors who usually come from search engines, social media, or other websites.

It may be bot traffic if you see irrelevant referrals to your website, such as spam domains or adult sites.

Suspicious Geographies

Traffic coming from cities, regions, or countries that aren’t consistent with your target audience or marketing efforts may be from bots that are spoofing their location.

Strategies to Combat Bot Traffic

To prevent bad bots from wreaking havoc on your website, here are several techniques to help you deter or slow them down.

Implement Effective Bot Management Solutions

One way to combat bot traffic is by using a bot management solution like Cloudflare or Akamai.

These solutions can help you identify, monitor, and block bot traffic on your website, using various techniques such as:

- Behavioral analysis: This studies how users interact with your website, such as how they scroll or click. By comparing the behavior of users and bots, the solution can block malicious bot traffic.

- Device fingerprinting: This collects unique information from a device, such as the browser and IP address. By creating a fingerprint for each device, the solution can block repeated bot requests.

- Machine learning: This uses algorithms to learn from data and make predictions. The solution can analyze the patterns and features of bot traffic.

Bot management algorithms can also differentiate between good and bad bots, with insights and analytics on the source, frequency, and impact.

If you use a bot management solution, you’ll be able to customize your response to different types of bots, such as:

- Challenging: Asking bots to prove their identity or legitimacy before accessing your site

- Redirecting: Sending bots to a different destination away from your website

- Throttling: Allowing bots to access your site, but at a limited frequency

Set Up Firewalls and Security Protocols

Another way to combat bot traffic is to set up firewalls and security protocols on your website, such as a web application firewall (WAF) or HTTPS.

These solutions can help you prevent unauthorized access and data breaches on your website, as well as filter out malicious requests and common web attacks.

To use a WAF, you should do the following:

- Sign up for an account with a provider (such as Cloudflare or Akamai), add your domain name, and change your DNS settings to point to the service’s servers

- Specify which ports, protocols, and IP addresses are allowed or denied access to your site

- Use a firewall plugin for your site platform, such as WordPress, to help you manage your firewall settings from your website dashboard

To use HTTPS for your site, obtain and install an SSL/TLS certificate from a trusted certificate authority, which proves your site’s identity and enables encryption.

By using HTTPS, you can:

- Ensure visitors connect to your real website and that their data is secure

- Prevent bots from modifying your site’s content in transit

Use Advanced Techniques: CAPTCHAs, Honeypots, and Rate Limiting

Image Source: Google

- CAPTCHAs are tests that require human input, such as checking a box or typing a word, to verify the user isn’t a bot. Use a third-party service like Google’s reCAPTCHA to generate challenges that require human intelligence and embed these in your web forms or pages.

- Honeypots are traps that lure bots into revealing themselves, such as hidden links or forms that only bots can see. Monitor any traffic that interacts with these elements.

- Rate limiting caps the number of requests or actions a user can perform on your site, such as logging in or commenting, within a certain time frame. Use a tool like Cloudflare to set limits on requests and reject or throttle any that exceed those limits.
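To make the rate-limiting idea concrete, here is a minimal in-memory sketch in Python. It is only an illustration of the concept under simple assumptions (a single process, clients identified by a string such as an IP address). It is not how Cloudflare or any other service implements its limits, and the class name `SlidingWindowRateLimiter` is invented for this example.

```python
import time
from collections import defaultdict, deque


class SlidingWindowRateLimiter:
    """Allow at most max_requests per client within window_seconds."""

    def __init__(self, max_requests, window_seconds, clock=time.monotonic):
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self.clock = clock  # injectable so the limiter can be tested without waiting
        self._hits = defaultdict(deque)

    def allow(self, client_id):
        """Record a request and return True, or return False if over the limit."""
        now = self.clock()
        hits = self._hits[client_id]
        # Drop timestamps that have aged out of the window.
        while hits and now - hits[0] >= self.window_seconds:
            hits.popleft()
        if len(hits) >= self.max_requests:
            return False
        hits.append(now)
        return True
```

For example, `SlidingWindowRateLimiter(5, 60)` would allow each client five login attempts per minute. A request for which `allow()` returns `False` could be rejected or delayed, which corresponds to the “reject or throttle” choice described above.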

Best Practices for Bot Traffic Prevention

Before you make any changes to prevent bots from reaching your website, consult with an expert to help ensure you don’t block good bots.

Here are several best practices for how to stop bot traffic and minimize your site’s exposure to risk.

Monitor and Update Security Measures

Monitoring web traffic can help you detect and analyze bot activity, such as the bots’ source, frequency, and impact.

Update your security measures to:

- Prevent or mitigate bot attacks

- Patch vulnerabilities

- Block malicious IP addresses

- Implement encryption and authentication

These tools, for example, can help you identify, monitor, and block bot traffic:

Educate Your Team on Bot Traffic Awareness

Awareness and training can help your team recognize and handle bot traffic, as well as prevent human errors that may expose your website to bot attacks.

Foster a culture of security and accountability among your team members to improve communication and collaboration. Consider conducting regular training sessions, sharing best practices, or creating a bot traffic policy.

Keep Up with Bot Traffic Trends

Bots are constantly evolving and adapting as developers use new techniques to bypass security measures. Keeping up with bot traffic trends can help you prepare for emerging bot threats.

By doing this, you can also learn from the experiences of other websites that have dealt with bot traffic issues.

Following industry news and blogs (such as the Cloudflare blog or the Barracuda blog), attending webinars and events, or joining online communities and forums can help you stay updated with the latest trends in bot management.

These are also opportunities to exchange ideas and tips with other website administrators.

How to Filter Bot Traffic in Google Analytics

In Google Analytics 4, the latest version of the platform, traffic from known bots and spiders is automatically excluded.

You can still create IP address filters to catch other potential bot traffic if you know or can identify the IP addresses the bots originate from. Google’s filtering feature is meant to filter internal traffic (the feature is called “Define internal traffic”), but you can still enter any IP address you like.

Here’s how to do it:

In Google Analytics, note the landing page, date, or time frame the traffic came in, and any other information (like city or device type) that may be helpful to reference later.

Check your website’s server logs for suspicious activity from certain IP addresses, like high request frequency or unusual request patterns during the same time frame.

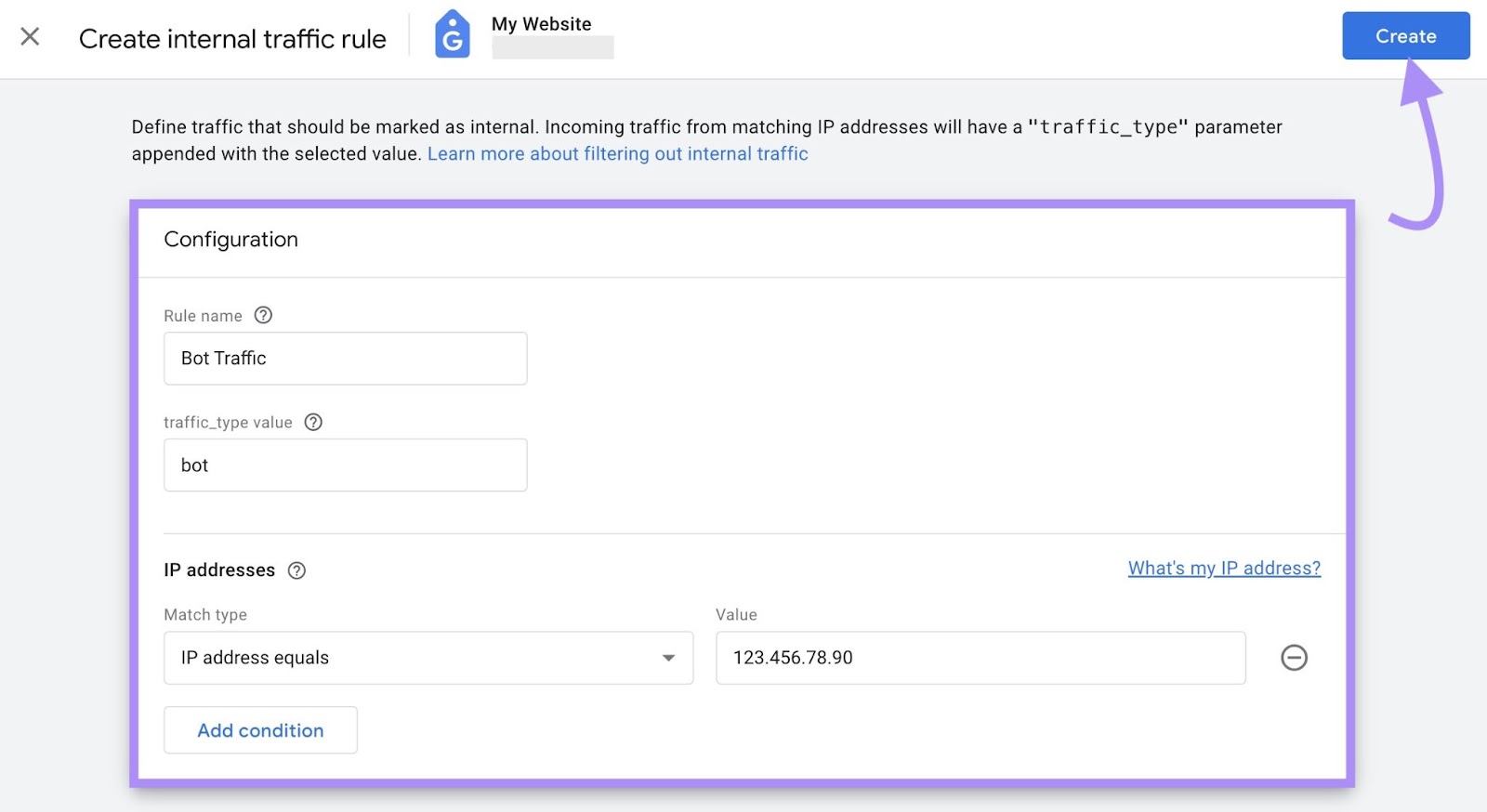

Once you’ve determined which IP address you want to block, copy it. For instance, it might look like 203.0.113.90.

Enter the IP address into an IP lookup tool, such as NordVPN’s IP Address Lookup. Look at the information that corresponds with the address, such as internet service provider (ISP), hostname, city, and country.

If the IP lookup tool confirms your suspicions about the IP address likely being that of a bot, continue to Google Analytics to begin the filtering process.

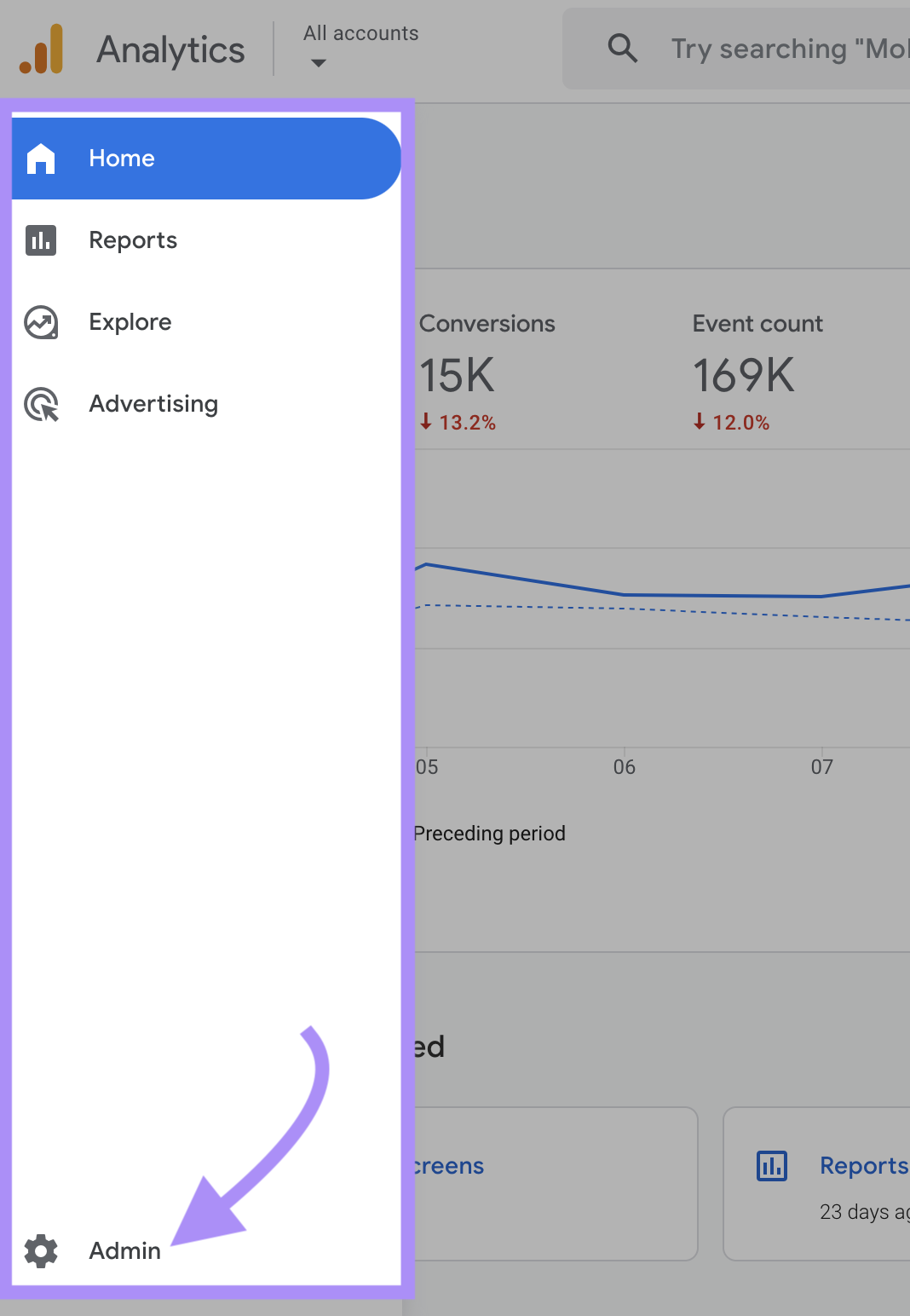

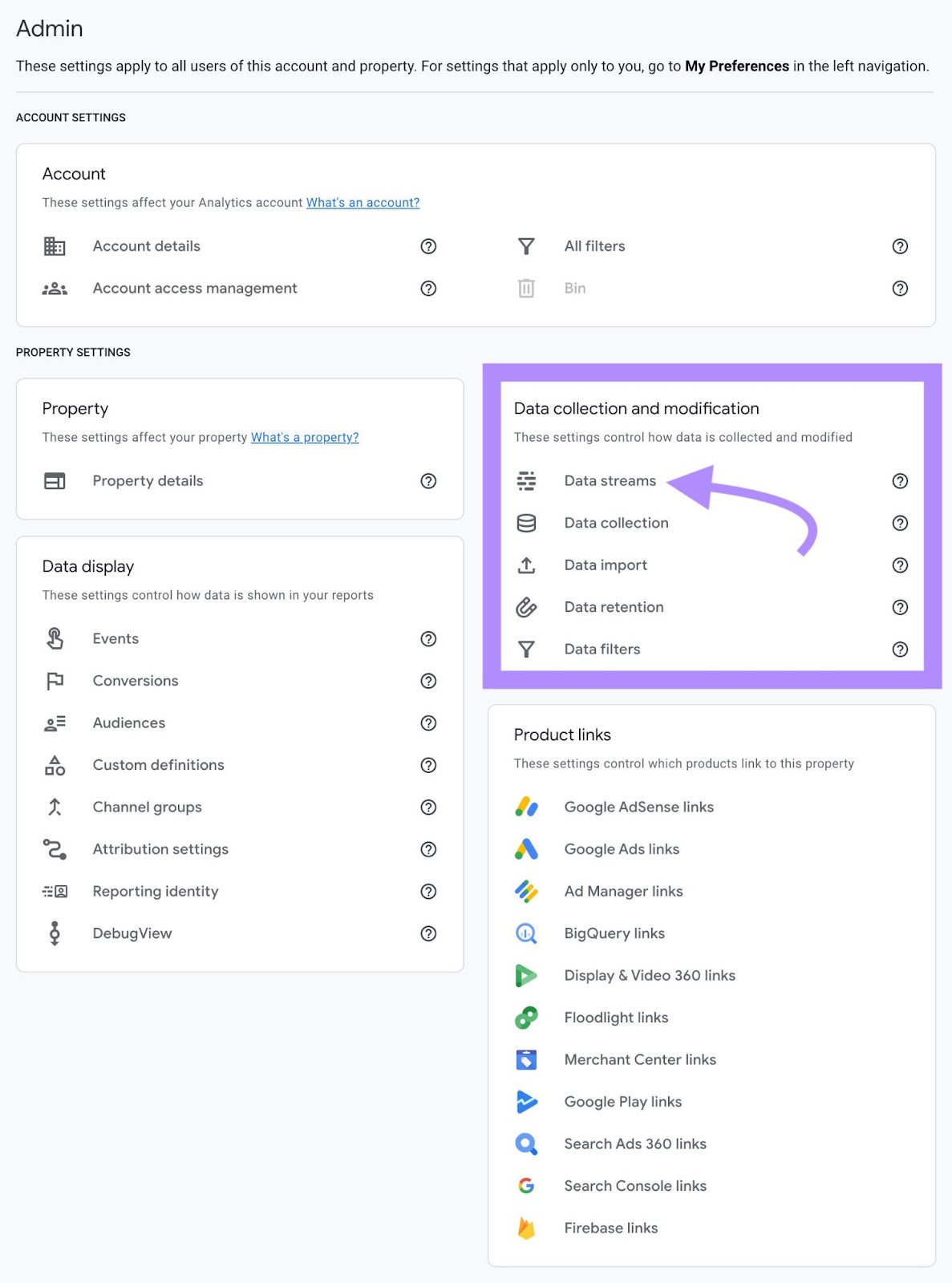

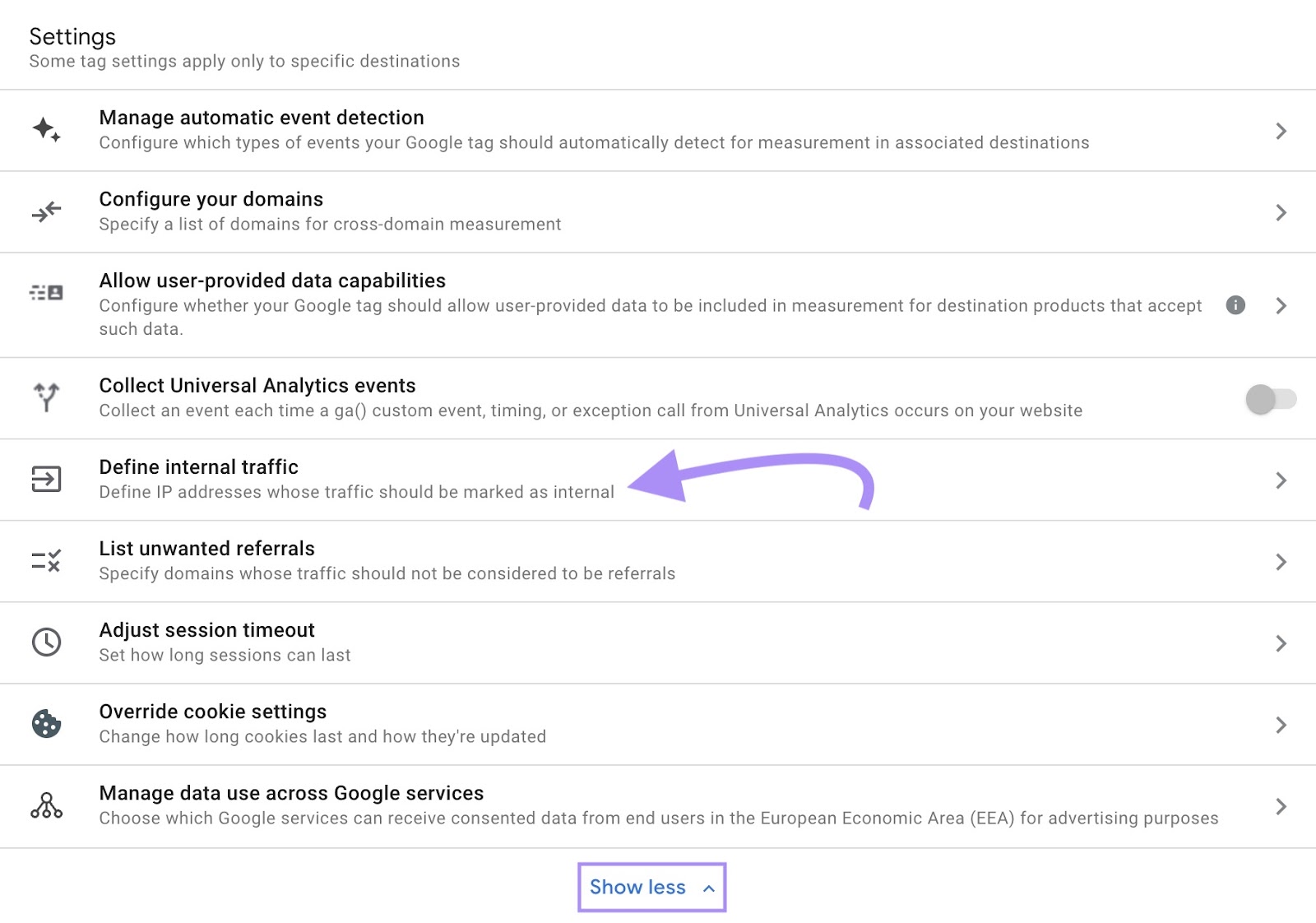

Navigate to “Admin” at the bottom left of the platform.

Under “Data collection and modification,” click “Data streams.”

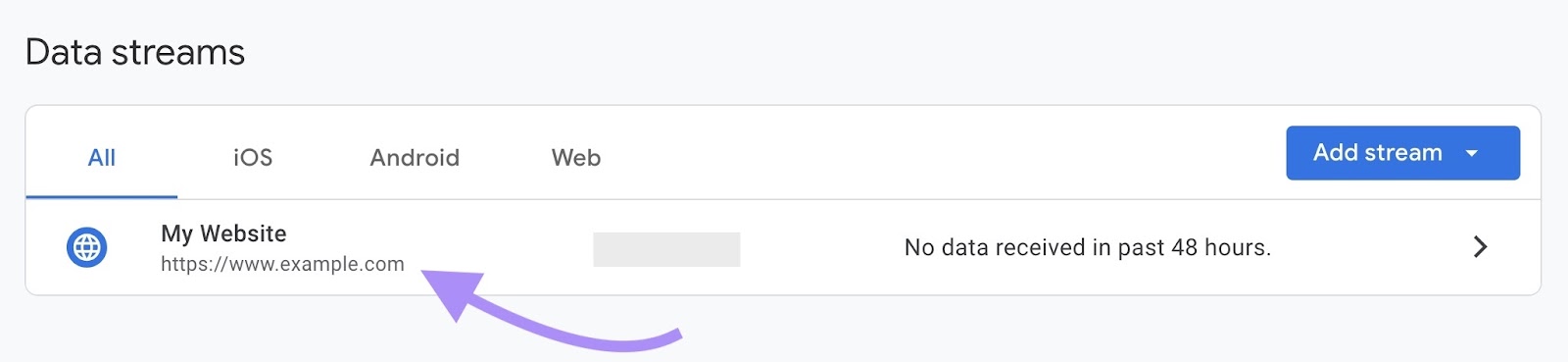

Choose the data stream you want to apply a filter to.

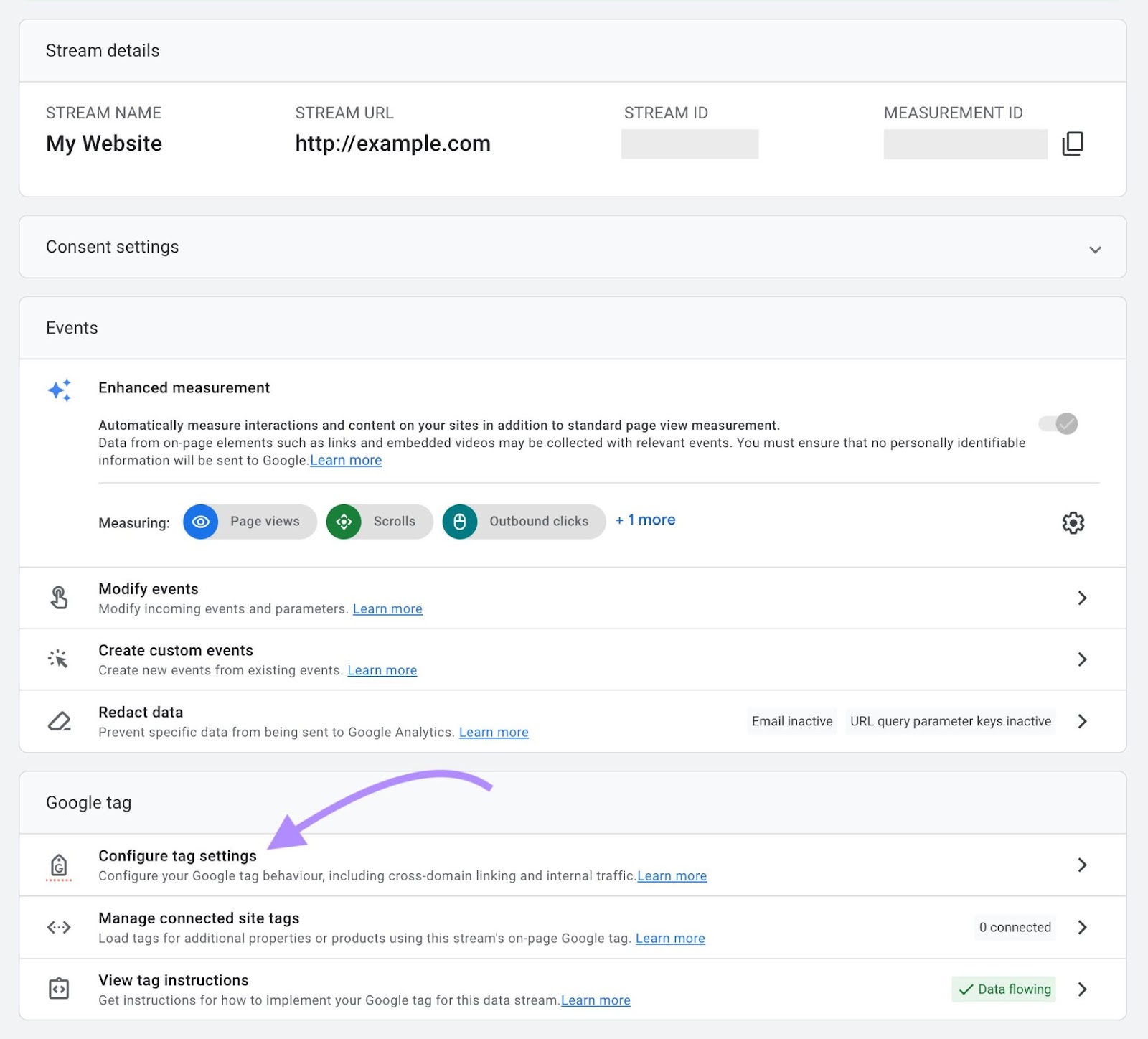

Navigate to “Configure tag settings.”

Click “Show more” and then navigate to “Define internal traffic.”

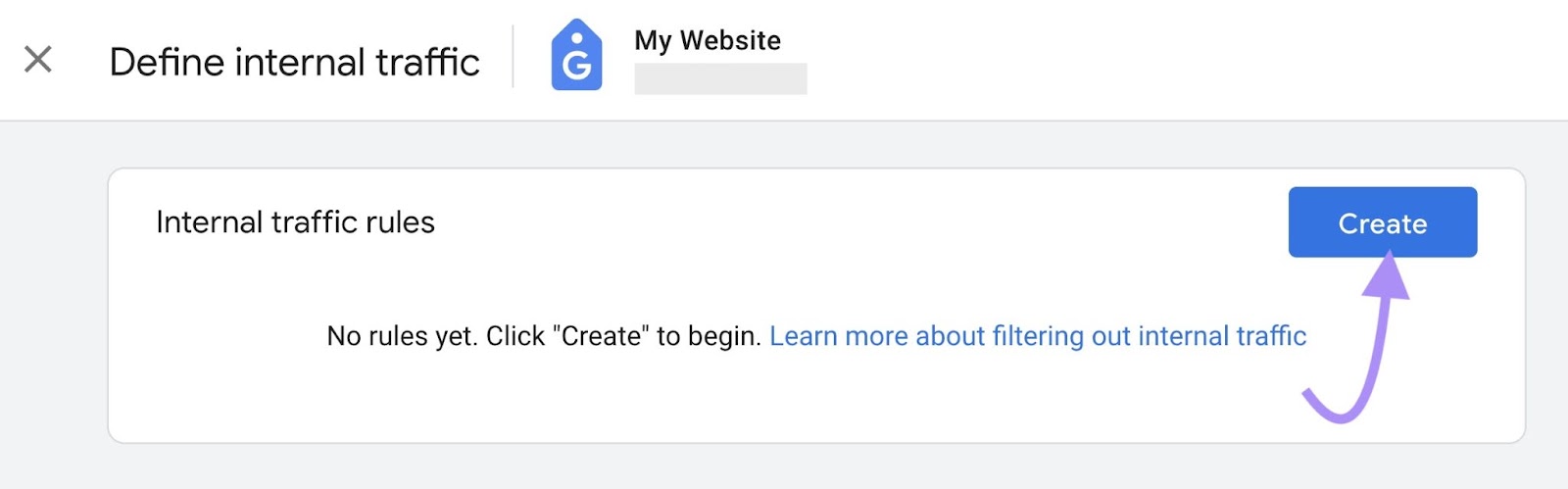

Click the “Create” button.

Enter a rule name, traffic type value (such as “bot”), and the IP address you want to filter. Choose from a variety of match types (equals, range, etc.) and add multiple addresses as conditions if you’d prefer not to create a separate filter for every address.
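If the difference between an “equals” rule and a “range” rule is unclear, the Python sketch below shows the underlying idea using the standard `ipaddress` module. It assumes ranges are written in CIDR notation (for example, `203.0.113.0/24`). The function `matches_rule` is a hypothetical helper for checking your own list of addresses before entering them, and it does not reproduce how Google Analytics evaluates its rules.

```python
import ipaddress


def matches_rule(ip, rule):
    """Return True if ip equals rule, or falls inside it when rule is a CIDR range."""
    address = ipaddress.ip_address(ip)
    if "/" in rule:
        # Range rule, e.g. "203.0.113.0/24" covers 203.0.113.0 to 203.0.113.255.
        return address in ipaddress.ip_network(rule, strict=False)
    # Exact-match rule.
    return address == ipaddress.ip_address(rule)
```

A single range rule can stand in for many exact-match rules when the suspicious addresses sit next to each other, which is the trade-off the match types are there to support.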

Click the “Create” button again, and you’re done. Allow for a processing delay of 24 to 48 hours.

Further reading: Crawl Errors: What They Are and How to Fix Them

How to Ensure Good Bots Can Crawl Your Site

Once you’ve blocked bad bots and filtered bot traffic in your analytics, ensure good bots can still easily crawl your site.

Do this by using the Site Audit tool to identify over 140 potential issues, including crawlability.

Here’s how:

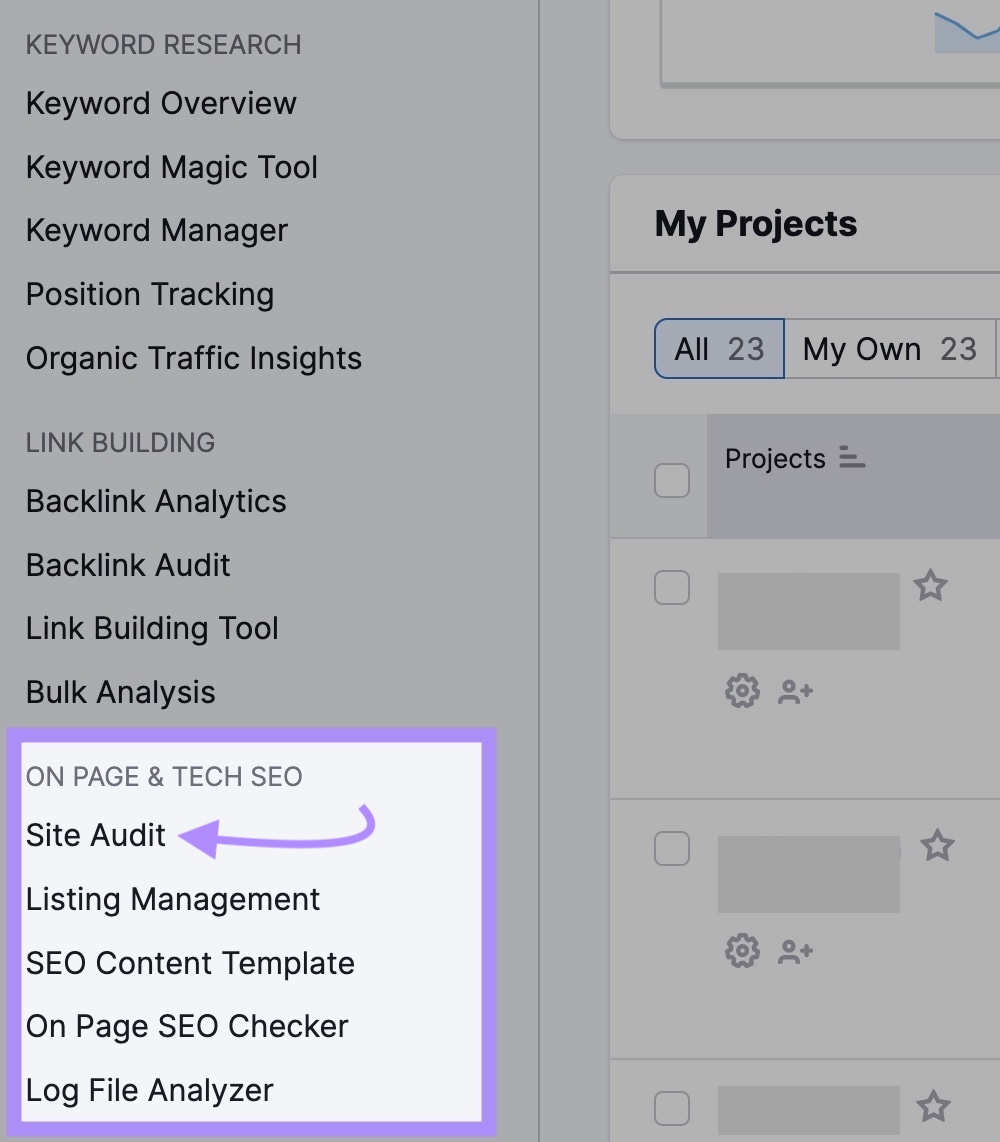

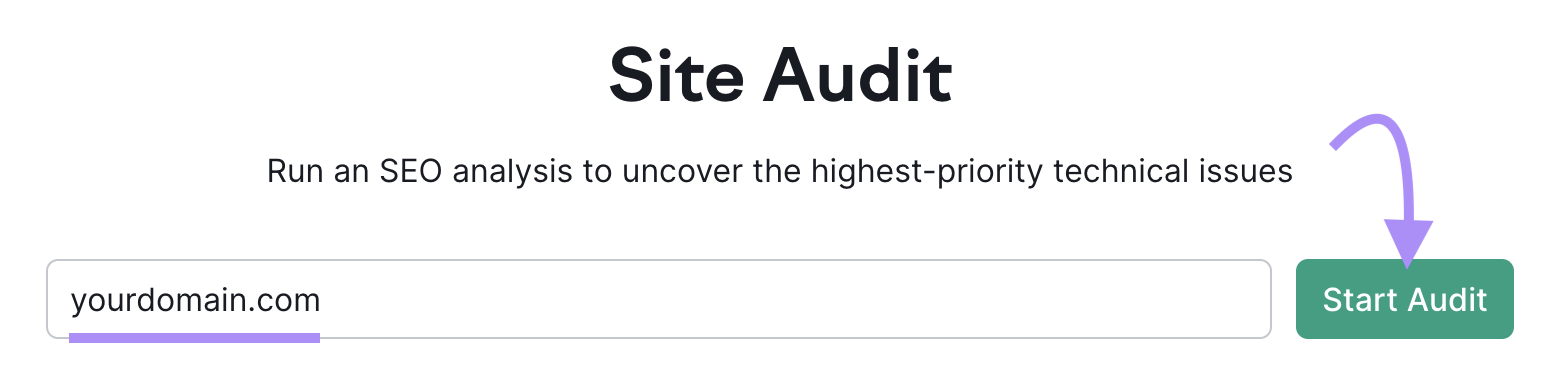

Navigate to Semrush and click on the Site Audit tool in the left-hand navigation under “ON PAGE & TECH SEO.”

Enter your domain and click the “Start Audit” button.

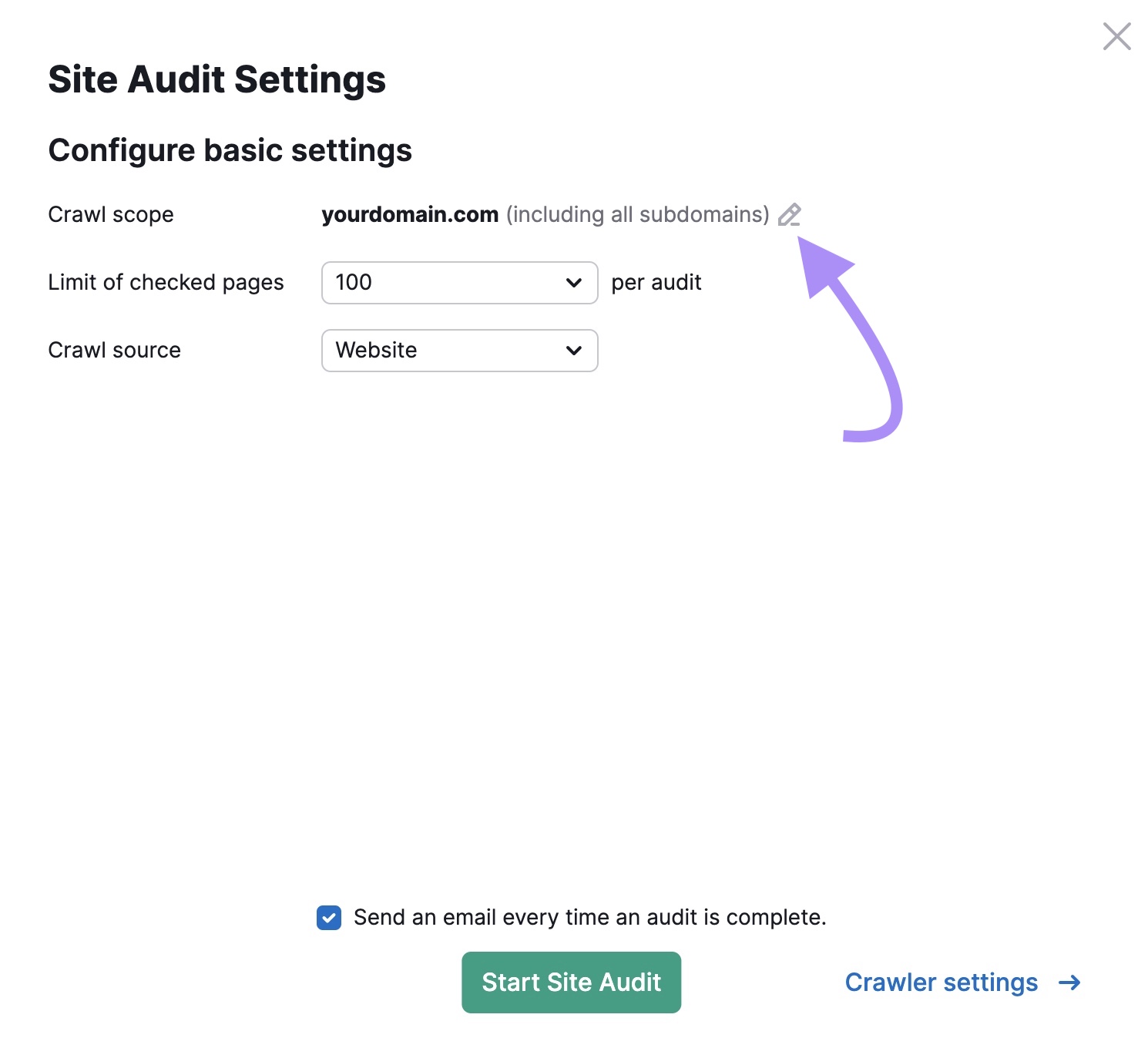

Next, you’ll be presented with the “Site Audit Settings” menu.

Click the pencil icon next to the “Crawl scope” line where your domain is.

Choose if you want to crawl your entire domain, a subdomain, or a folder.

If you want Site Audit to crawl the entire domain, which we recommend, leave everything as-is.

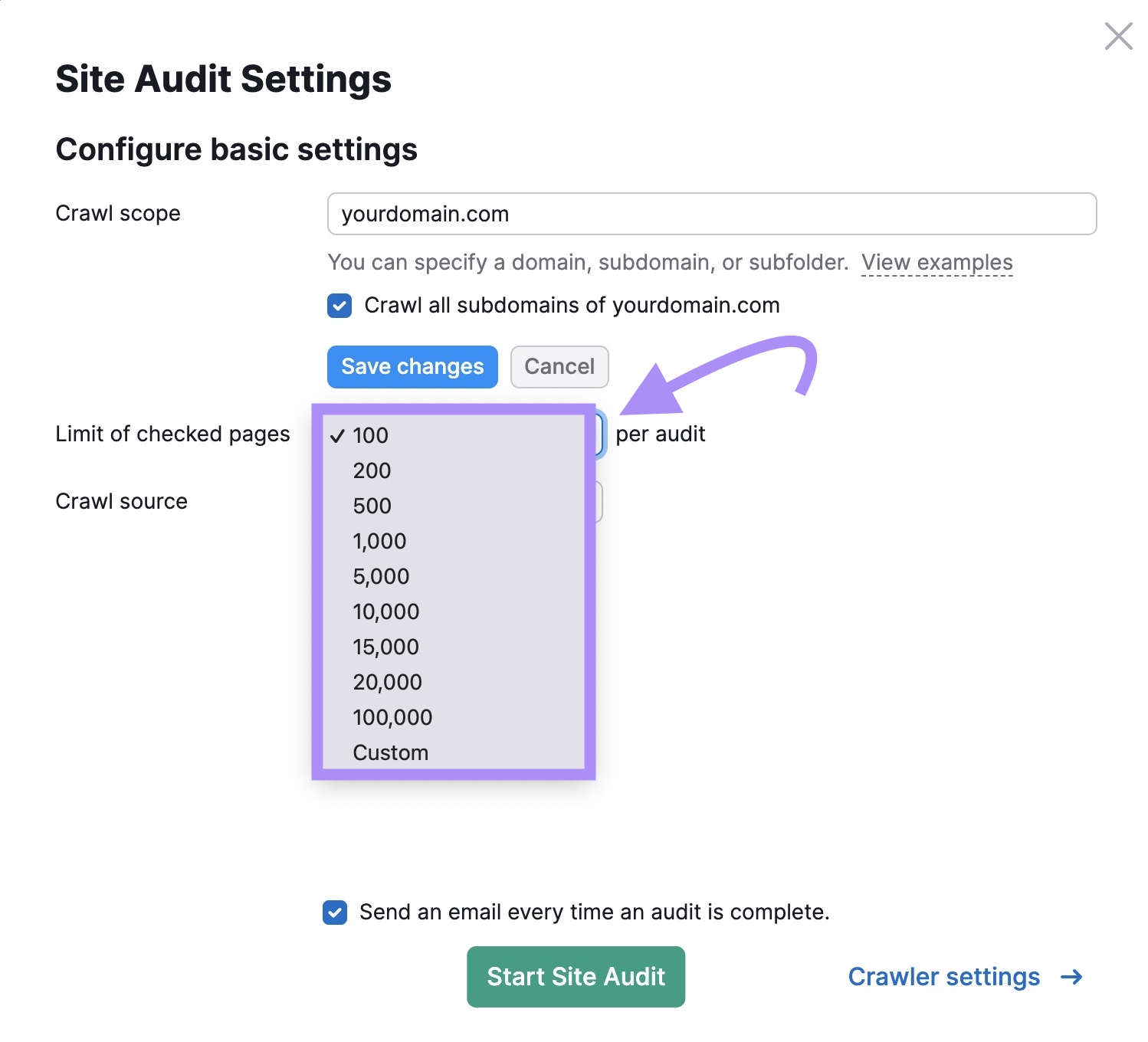

Next, choose the number of pages you want crawled from the limit drop-down.

Your choices depend on your Semrush subscription level:

- Free: 100 pages per audit and per month

- Pro: 20,000 pages

- Guru: 20,000 pages

- Business: 100,000 pages

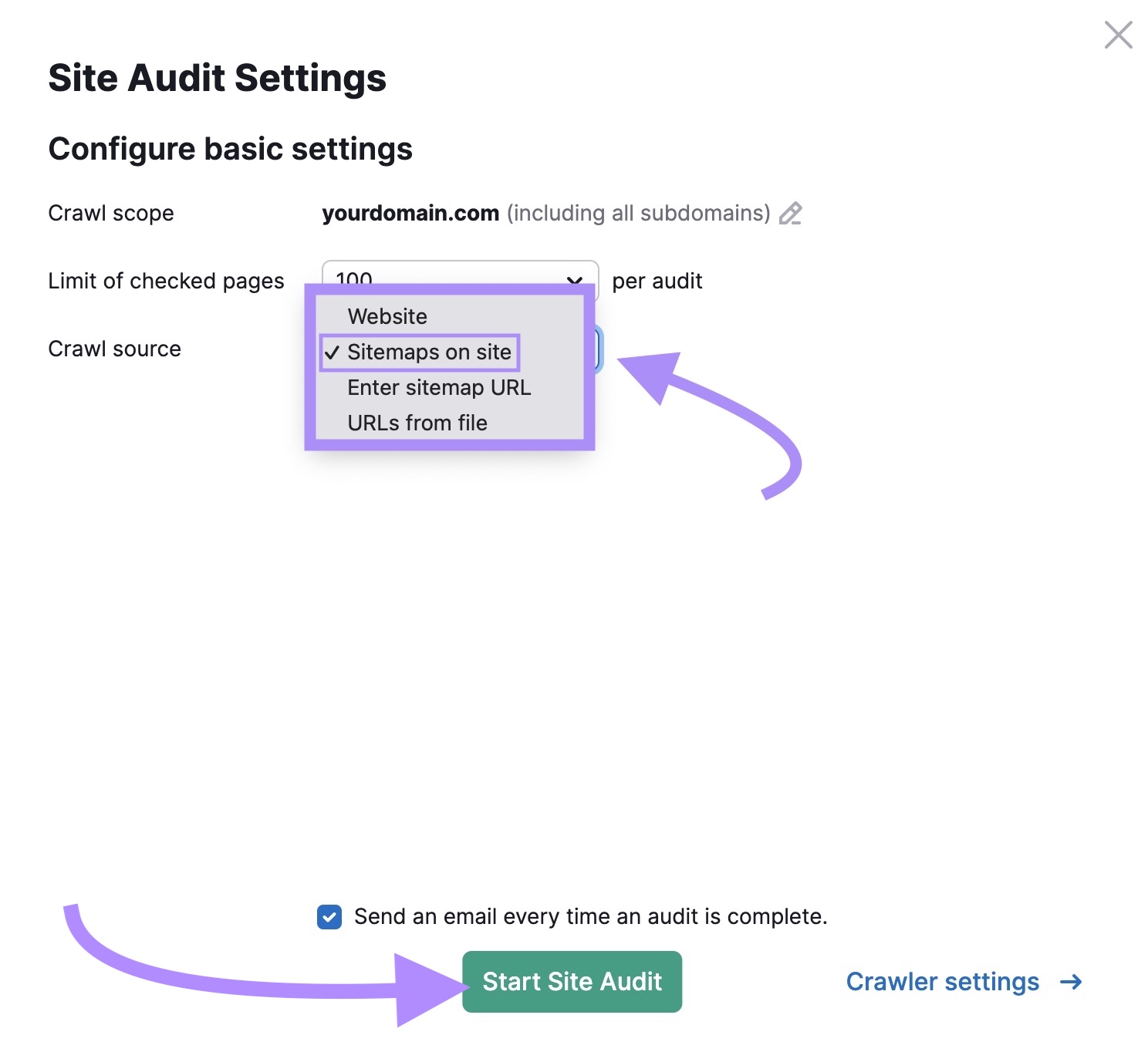

Finally, select the crawl source.

Since we’re interested in analyzing pages accessible to bots, choose “Sitemaps on site.”

The remainder of the settings, like “Crawler settings” and “Allow/disallow URLs,” are broken into six tabs on the left-hand side. These are optional.

When you’re ready, click the “Start Site Audit” button.

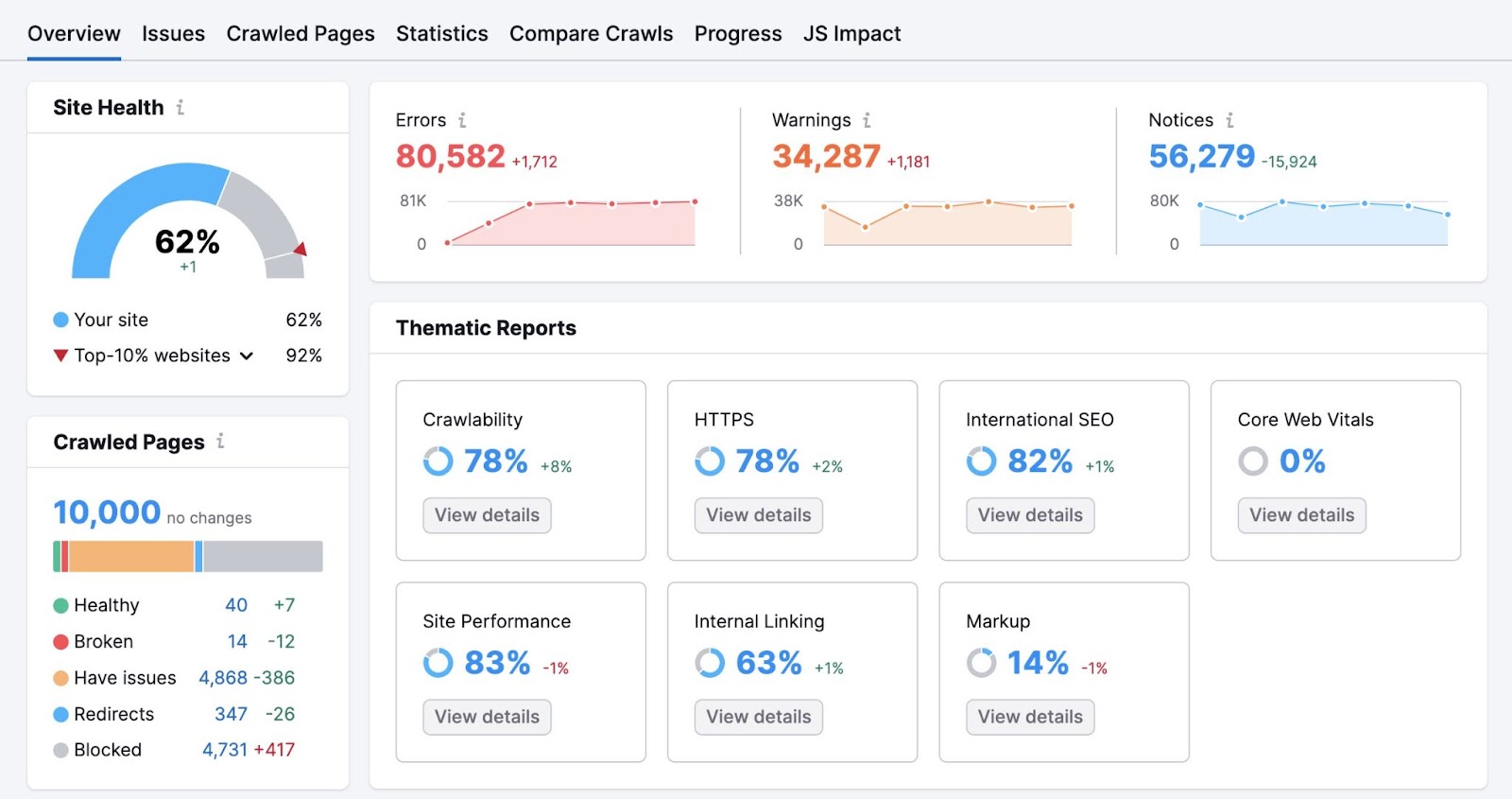

Now, you’ll see an overview that looks like this:

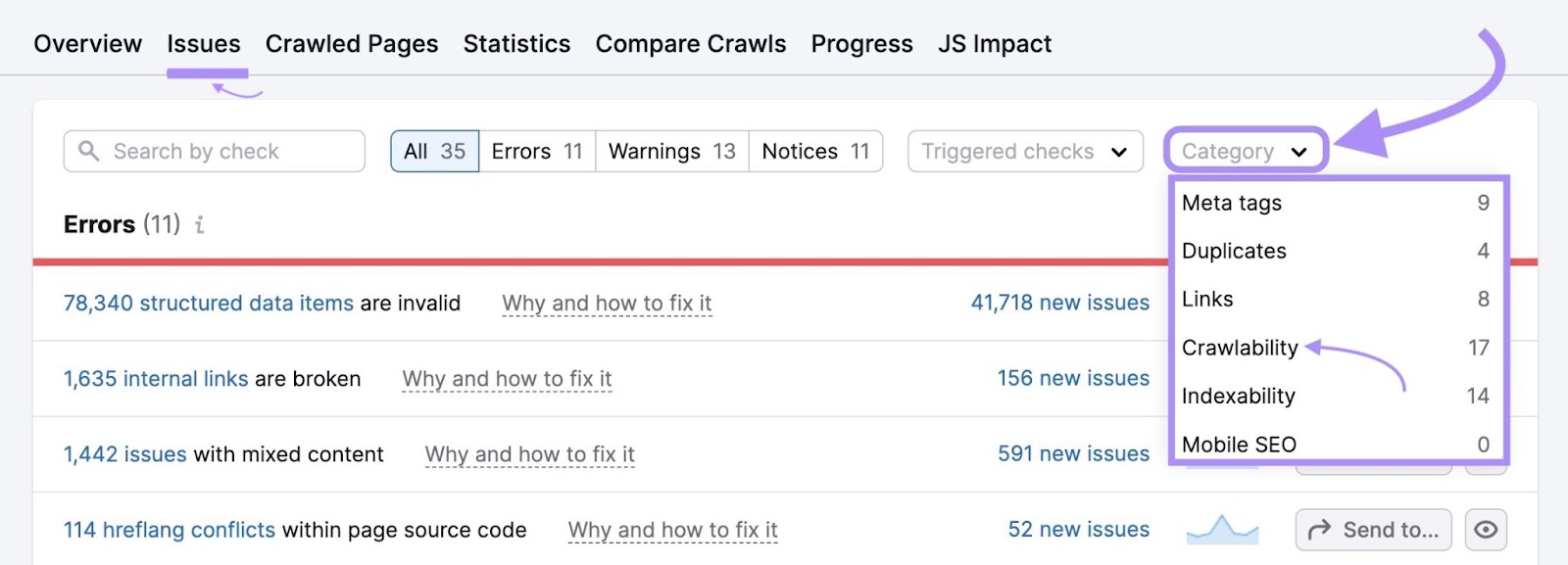

To identify issues affecting your site’s crawlability, go to the “Issues” tab.

In the “Category” drop-down, select “Crawlability.”

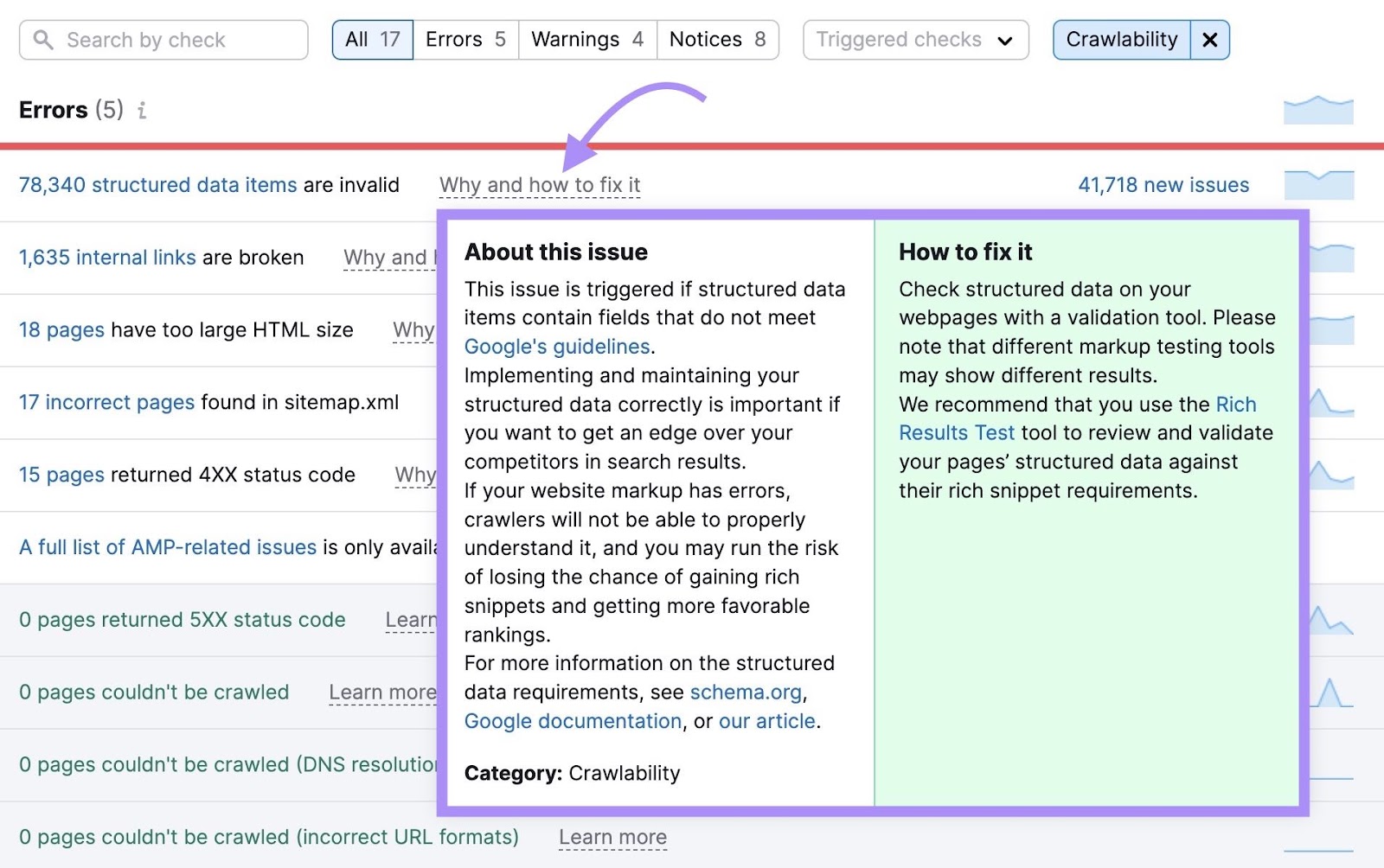

For details about any issue, click on “Why and how to fix it” for an explanation and recommendations.

To ensure good bots can crawl your site without any issues, pay special attention to any of the following errors.

Why?

Because these issues could hinder a bot’s ability to crawl:

- Broken internal links

- Format errors in robots.txt file

- Format errors in sitemap.xml files

- Incorrect pages found in sitemap.xml

- Malformed links

- No redirect or canonical to HTTPS homepage from HTTP version

- Pages couldn’t be crawled

- Pages couldn’t be crawled (DNS resolution issues)

- Pages couldn’t be crawled (incorrect URL formats)

- Pages returning 4XX status code

- Pages returning 5XX status code

- Pages with a WWW resolve issue

- Redirect chains and loops

The Site Audit issues list will provide more details about the above issues, including how to fix them.

Conduct a crawlability audit like this at any time. We recommend doing this monthly to fix issues that prevent good bots from crawling your site.
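Between audits, you can spot-check one item from the list, your robots.txt rules, with a few lines of Python. The sketch below uses the standard library’s `urllib.robotparser` to report which URLs a given crawler would be told not to fetch. The function name `blocked_urls` and the example rules are made up for illustration, and the standard parser may interpret some edge cases (such as wildcards) differently from individual search engines.

```python
from urllib.robotparser import RobotFileParser


def blocked_urls(robots_txt, user_agent, urls):
    """Return the URLs that robots_txt disallows for the given user agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


# Hypothetical rules: every crawler is kept out of /private/.
EXAMPLE_ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
"""
```

Calling `blocked_urls(EXAMPLE_ROBOTS_TXT, "Googlebot", [...])` with a handful of your most important pages is a quick way to confirm that a rule meant for bad bots isn’t also shutting out the crawlers you want.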

Protect Your Website from Bad Bots

While some bots are good, others are malicious and can skew your traffic analytics, negatively impact your website’s user experience, and pose security risks.

It’s important to monitor traffic to detect and block malicious bots and filter out the traffic from your analytics.

Experiment with some of the strategies and solutions in this article for preventing malicious bot traffic to see what works best for you and your team.

Try the Semrush Log File Analyzer to spot website crawlability issues and the Site Audit tool to address potential issues preventing good bots from crawling your pages.