The Coalition for Health AI this past Friday unveiled plans for how it would certify independent artificial intelligence assurance labs.

The draft frameworks come as the group – comprising health system heavy-hitters such as Mayo Clinic, Penn Medicine and Stanford, along with Amazon, Google, Microsoft and other Big Tech giants – set a timeline for its aim to standardize the output of testing labs by grading AI and ML models with so-called CHAI Model Cards, which the group likens to ingredient and nutrition labels on food products.

CHAI leaders say the certification rubric and model card designs can be expected – after incorporating stakeholder feedback from members, partners and the public – by the end of April 2025.

WHY IT MATTERS

Created with the ANSI National Accreditation Board and several emerging quality assurance labs using ISO 17025 – the predominant standard for testing and calibration laboratories worldwide – the draft CHAI certification program framework requires, among other things, mandatory disclosure of conflicts of interest between assurance labs and model developers, and the protection of data and intellectual property. (That standard was also used for ONC’s Electronic Health Record certification program.)

The testing and certification program also incorporates data quality and integrity requirements derived from FDA’s guidance on the use of high-quality real-world data, CHAI officials note, as well as testing and evaluation metrics sourced from the coalition’s various working groups – all in alignment with the National Academy of Medicine’s AI Code of Conduct.

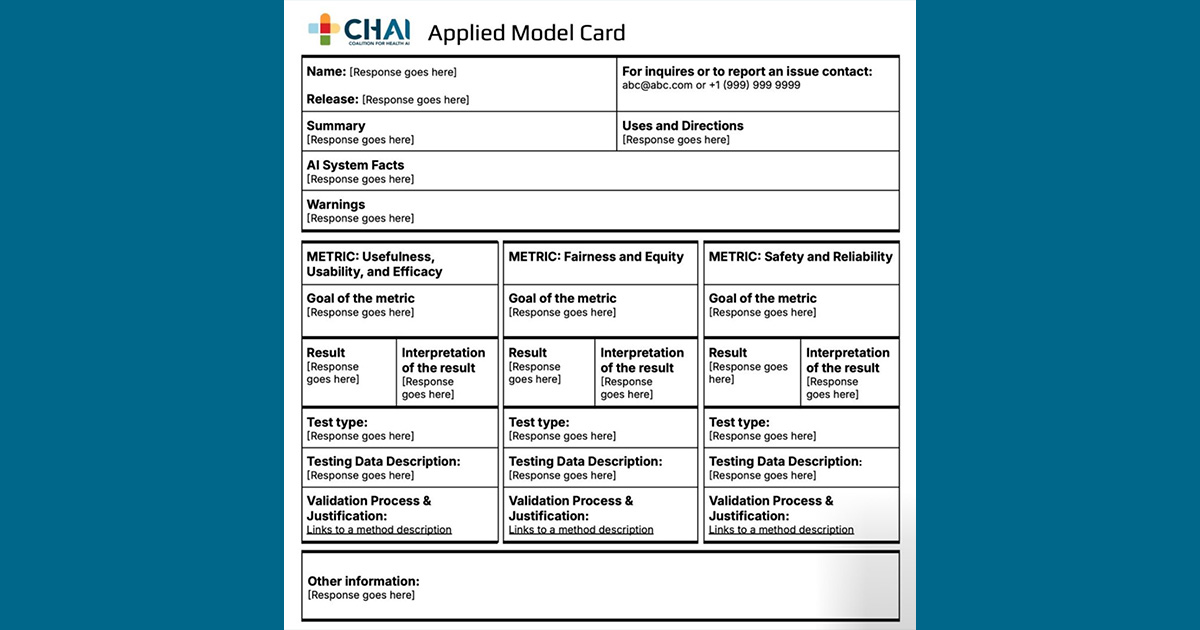

The draft model card – designed by a workgroup comprising experts from regional health systems, electronic health record vendors, medical device makers and others – offers a standardized template designed to show a degree of transparency about algorithms, presenting certain baseline information to help end users evaluate the performance and safety of AI tools.

That information includes the identity of the AI developer, the model’s intended uses, targeted patient populations and more. Other performance metrics include security and compliance accreditations and maintenance requirements. The cards also have information about known risks and out-of-scope uses, biases and other ethical considerations.

CHAI says it will continue to engage with healthcare stakeholders – including patient advocates, small and rural health systems and tech startups – for additional guidance on model card development. The group is also seeking feedback via its assurance lab and model card forms.

“The rapid evolution of AI in healthcare has created a landscape that can feel unregulated and fragmented,” said CHAI applied model card workgroup member Demetri Giannikopoulous, chief transformation officer at Aidoc, which helped lead design and development of the cards, in a statement.

“By establishing a common framework that aligns with federal regulations, we’re moving beyond theoretical discussions and building the foundation for scalable, reliable, and ethical AI solutions that can be adopted across the healthcare ecosystem.”

THE LARGER TREND

Since its founding in 2021, CHAI has been working, along with others who have similar goals, to be the go-to source for trusted AI – whether that refers to patient safety, privacy and security, fairness and equity, model transparency or utility and efficacy.

As the technology advances and proliferates, the coalition is growing too – convening an array of stakeholders from hospitals and health systems, Big Tech, startups, government agencies and patient advocates to address the privacy and security, fairness, transparency, usefulness and safety of AI algorithms.

In an interview in Boston this past month at the HIMSS AI in Healthcare Forum, CHAI CEO Dr. Brian Anderson noted that the group, which he co-founded as a side project when he was still chief digital health physician at MITRE, now has more than 4,000 members from across the healthcare and technology ecosystem, a big increase from 1,300 earlier this year.

Responsibility and transparency around AI models have been core to CHAI’s mission since it was founded in 2021. Toward that end, it has worked on projects such as its Blueprint for Trustworthy AI Implementation Guidance and Assurance in 2023 and its draft framework for responsible development and deployment in June 2024.

The new model cards were designed to comply with the HTI-1 requirements promulgated by ONC earlier this year. They are meant to be an easily legible starting point for organizations reviewing AI models during the procurement process, and for EHR vendors seeking to comply with the Health IT Certification Program.

Anderson noted that Micky Tripathi, Assistant Secretary for Technology Policy, National Coordinator for Health Information Technology and Acting Chief Artificial Intelligence Officer at HHS, has said previously that industry and the private sector should be the ones to define what goes into AI model cards.

“We have taken up that charge,” said Anderson, who says CHAI is actively seeking end user perspective and is looking for a wider variety of stakeholders and use cases as it considers the myriad applications of AI in healthcare.

The goal is to build an “easily digestible” but still “technically specific, detailed model card that the industry [can] align around,” he said.

As for AI assurance labs, Anderson notes that nearly every other sector of the economy uses similar independent evaluators, and healthcare should have the same.

“We want to build a network of labs that are trustworthy, that are competent and capable – that model customers can turn to, that health systems can trust and technology vendors can trust as well,” he said.

ON THE RECORD

“This has been a pivotal year for CHAI on our journey to help enable trusted independent assurance of AI solutions with local monitoring and validation,” said Anderson in a statement. “We’re thrilled with the progress of our CHAI workgroups who represent a diversity of perspectives and expertise across the health ecosystem.

“These frameworks for certification and basic transparency are building blocks of responsible health AI. They will help to streamline the path for AI innovation, build trust with patients and clinicians, and place health systems and solution innovators ahead of emerging state and federal regulations.”

Mike Miliard is executive editor of Healthcare IT News.


Healthcare IT News is a HIMSS publication.