Introduction

Welcome to our information of LlamaIndex!

In easy phrases, LlamaIndex is a useful instrument that acts as a bridge between your customized knowledge and huge language fashions (LLMs) like GPT-4 that are highly effective fashions able to understanding human-like textual content. Whether or not you’ve knowledge saved in APIs, databases, or in PDFs, LlamaIndex makes it straightforward to convey that knowledge into dialog with these good machines. This bridge-building makes your knowledge extra accessible and usable, paving the way in which for smarter functions and workflows.

Understanding LlamaIndex

Initially generally known as GPT Index, LlamaIndex has advanced into an indispensable ally for builders. It is like a multi-tool that helps in varied levels of working with knowledge and huge language fashions –

- Firstly, it helps in ‘ingesting’ knowledge, which implies getting the information from its authentic supply into the system.

- Secondly, it helps in ‘structuring’ that knowledge, which implies organizing it in a approach that the language fashions can simply perceive.

- Thirdly, it aids in ‘retrieval’, which implies discovering and fetching the appropriate items of knowledge when wanted.

- Lastly, it simplifies ‘integration’, making it simpler to meld your knowledge with varied utility frameworks.

Once we dive somewhat deeper into the mechanics of LlamaIndex, we discover three most important heroes doing the heavy lifting.

- The ‘knowledge connectors’ are the diligent gatherers, fetching your knowledge from wherever it resides, be it APIs, PDFs, or databases.

- The ‘knowledge indexes’ are the organized librarians, arranging your knowledge neatly in order that it is simply accessible.

- And the ‘engines’ are the translators (LLMs), making it doable to work together along with your knowledge utilizing pure language.

Within the subsequent sections, we’ll discover arrange LlamaIndex and begin utilizing it to supercharge your functions with the facility of huge language fashions.

What’s what in LlamaIndex

LlamaIndex is your go-to platform for creating sturdy functions powered by Massive Language Fashions (LLMs) over your personalized knowledge. Be it a classy Q&A system, an interactive chatbot, or clever brokers, LlamaIndex lays down the inspiration to your ventures into the realm of Retrieval Augmented Era (RAG).

RAG mechanism amplifies the prowess of LLMs with the essence of your customized knowledge. Elements of an RAG workflow –

- Data Base (Enter): The information base is sort of a library stuffed with helpful info resembling FAQs, manuals, and different related paperwork. When a query is requested, that is the place the system seems to search out the reply.

- Set off/Question (Enter): That is the spark that will get issues going. Usually, it is a query or request from a buyer that indicators the system to spring into motion.

- Process/Motion (Output): After understanding the set off or question, the system then performs a sure activity to handle it. As an illustration, if it is a query, the system will work on offering a solution, or if it is a request for a selected motion, it’s going to perform that motion accordingly.

Based mostly on the context of our weblog, we might want to implement the next two levels utilizing Llamaindex to supply the 2 inputs to our RAG mechanism –

- Indexing Stage: Getting ready a information base.

- Querying Stage: Harnessing the information base & the LLM to reply to your queries by producing the ultimate output / performing the ultimate activity.

Let’s take a more in-depth take a look at these levels underneath the magnifying lens of LlamaIndex.

The Indexing Stage: Crafting the Data Base

LlamaIndex equips you with a set of instruments to form your information base:

- Information Connectors: These entities, also referred to as Readers, ingest knowledge from various sources and codecs right into a unified Doc illustration.

- Paperwork / Nodes: A Doc is your container for knowledge, whether or not it springs from a PDF, an API, or a database. A Node, alternatively, is a snippet of a Doc, enriched with metadata and relationships, paving the way in which for exact retrieval operations.

- Information Indexes: Publish ingestion, LlamaIndex assists in arranging the information right into a retrievable format. This course of includes parsing, embedding, and metadata inference, and finally leads to the creation of the information base.

The Querying Stage: Partaking with Your Data

On this section, we fetch related context from the information base as per your question, and mix it with the LLM’s insights to generate a response. This not solely gives the LLM with up to date related information but additionally prevents hallucination. The core problem right here orbits round retrieval, orchestration, and reasoning throughout a number of information bases.

LlamaIndex provides modular constructs that will help you use it for Q&A, chatbots, or agent-driven functions.

These are the first components –

- Question Engines: These are your end-to-end conduits for querying your knowledge, taking a pure language question and returning a response together with the referenced context.

- Chat Engines: They elevate the interplay to a conversational stage, permitting back-and-forths along with your knowledge.

- Brokers: Brokers are your automated decision-makers, interacting with the world by means of a toolkit, and manoeuvring by means of duties with a dynamic motion plan relatively than a hard and fast logic.

These are few frequent constructing blocks of the first components current in all the components mentioned above –

- Retrievers: They dictate the strategy of fetching related context from the information base towards a question. For instance, Dense Retrieval towards a vector index is a prevalent strategy.

- Node Postprocessors: They refine the set of nodes by means of transformation, filtering, or re-ranking.

- Response Synthesizers: They channel the LLM to generate responses, mixing the person question with retrieved textual content chunks.

As we enterprise into LlamaIndex now, we’ll encounter and be taught extra in regards to the above components.

Join your knowledge and apps with Nanonets AI Assistant to speak with knowledge, deploy customized chatbots & brokers, and create AI workflows that carry out duties in your apps.

Set up and Setup

Earlier than exploring the thrilling options, let’s first set up LlamaIndex in your system. Should you’re accustomed to Python, this might be straightforward. Use this command to put in:

pip set up llama-index

Then comply with both of the 2 approaches under –

- By default, LlamaIndex makes use of OpenAI’s gpt-3.5-turbo for creating textual content and text-embedding-ada-002 for fetching and embedding. You want an OpenAI API Key to make use of these. Get your API key at no cost by signing up on OpenAI’s web site. Then set your atmosphere variable with the title OPENAI_API_KEY in your python file.

import os

os.environ["OPENAI_API_KEY"] = "your_api_key"- Should you’d relatively not use OpenAI, the system will swap to utilizing LlamaCPP and llama2-chat-13B for creating textual content and BAAI/bge-small-en for fetching and embedding. These all work offline. To arrange LlamaCPP, comply with its setup information right here. This can want about 11.5GB of reminiscence on each your CPU and GPU. Then, to make use of native embedding, set up this:

pip set up sentence-transformers

Creating Llamaindex Paperwork

Information connectors, additionally known as Readers, are important elements in LlamaIndex that facilitate the ingestion of knowledge from varied sources and codecs, changing them right into a simplified Doc illustration consisting of textual content and primary metadata.

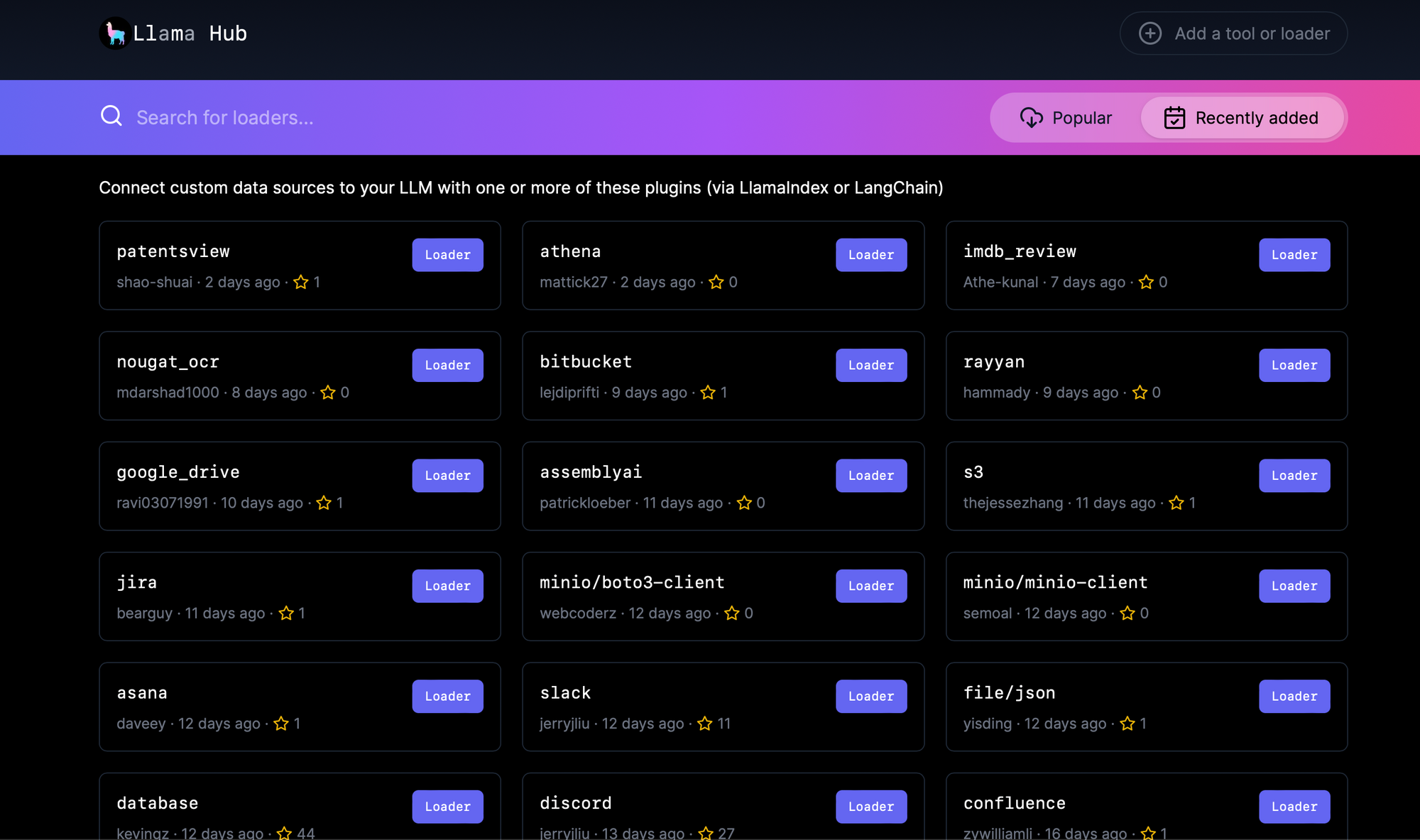

LlamaHub is an open-source repository internet hosting knowledge connectors which could be seamlessly built-in into any LlamaIndex utility. All of the connectors current right here can be utilized as follows –

from llama_index import download_loader

GoogleDocsReader = download_loader('GoogleDocsReader')

loader = GoogleDocsReader()

paperwork = loader.load_data(document_ids=[...])See the total checklist of knowledge connectors right here –

Llama Hub

A hub of knowledge loaders for GPT Index and LangChain

The number of knowledge connectors right here is fairly exhaustive, a few of which embrace:

- SimpleDirectoryReader: Helps a broad vary of file varieties (.pdf, .jpg, .png, .docx, and so forth.) from a neighborhood file listing.

- NotionPageReader: Ingests knowledge from Notion.

- SlackReader: Imports knowledge from Slack.

- ApifyActor: Able to net crawling, scraping, textual content extraction, and file downloading.

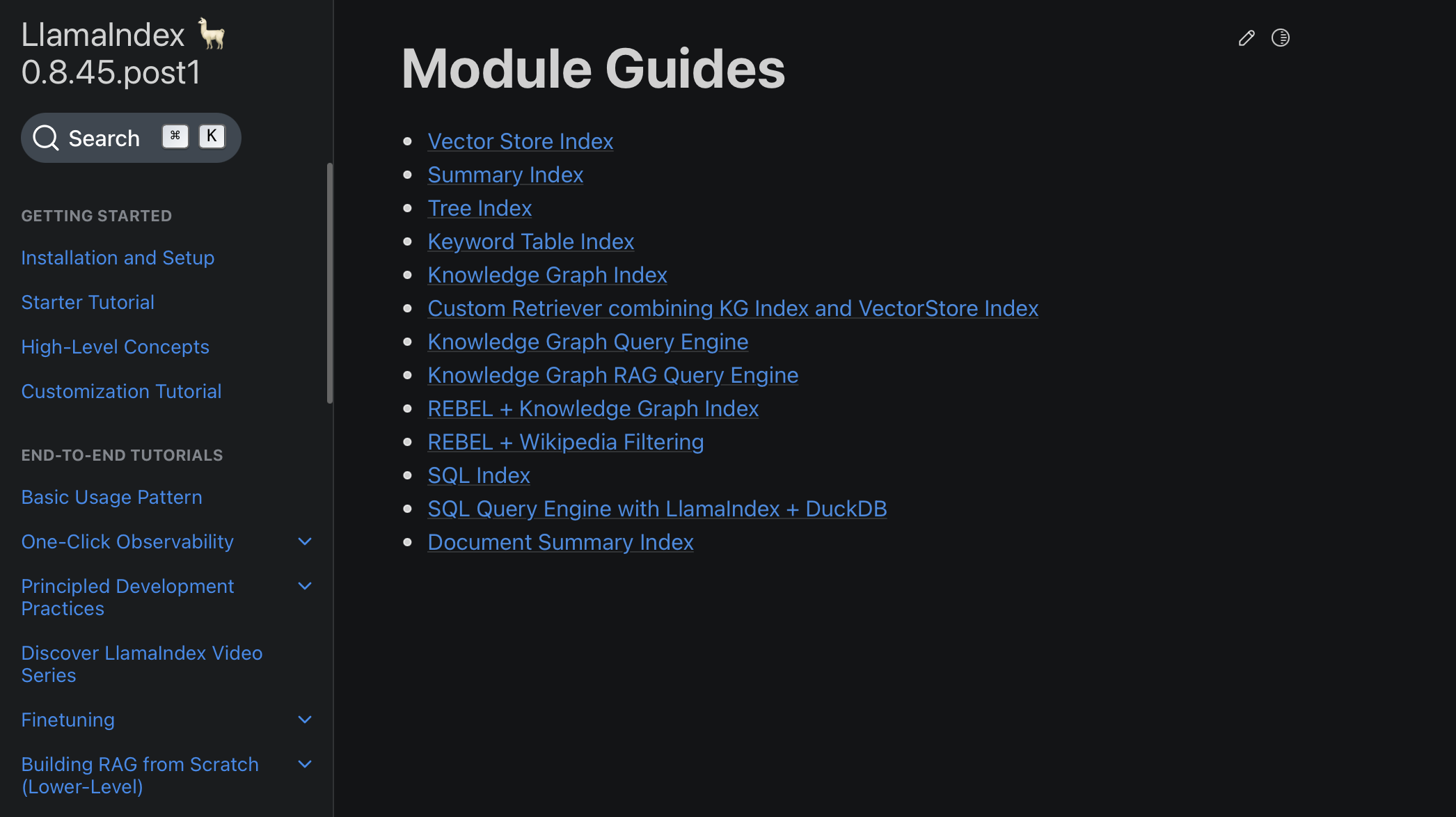

How you can discover the appropriate knowledge connector?

- First lookup and verify if a related knowledge connector is listed in Llamaindex documentation right here –

Module Guides – LlamaIndex 🦙 0.8.45.post1

- If not, then determine the related knowledge connector on Llamahub

For instance, allow us to do this on a few knowledge sources.

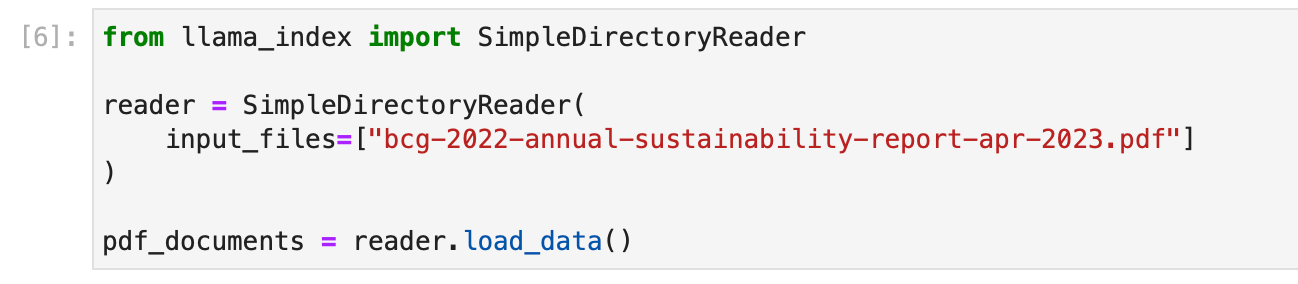

- PDF File : We use the SimpleDirectoryReader knowledge connector for this. The given instance under hundreds a BCG Annual Sustainability Report.

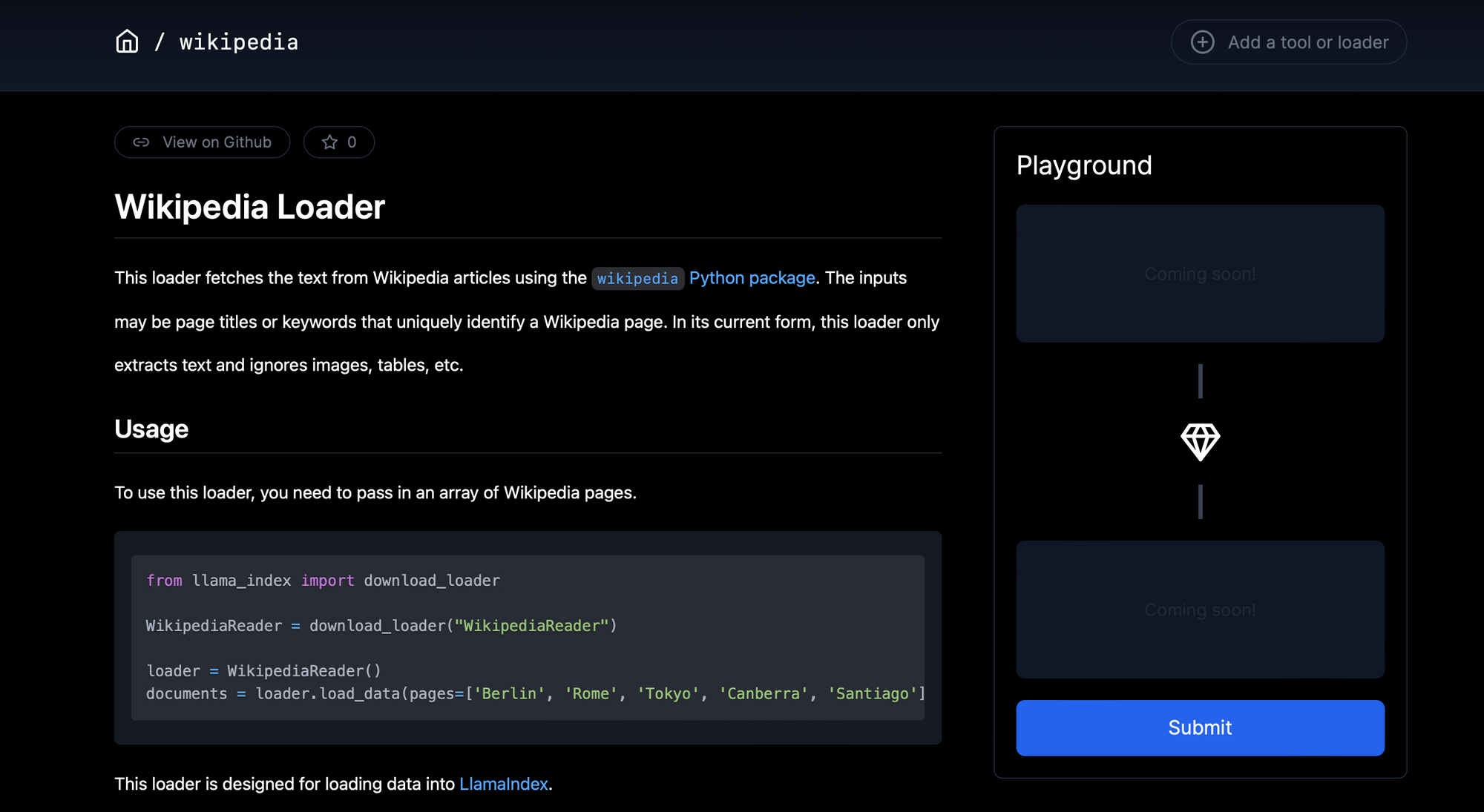

- Wikipedia Web page : We search Llamahub and discover a related connector for this.

The given instance under hundreds the wikipedia pages about just a few nations from across the globe. Principally, the the highest web page that seems within the search outcomes with every factor of the checklist as a search question is ingested.

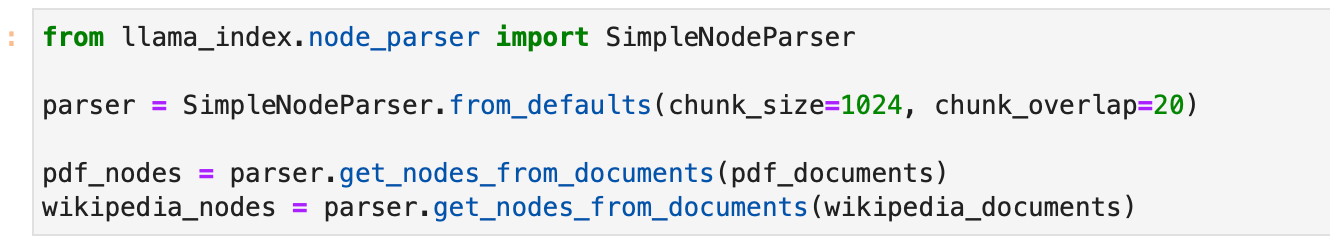

Creating LlamaIndex Nodes

In LlamaIndex, as soon as the information has been ingested and represented as Paperwork, there’s an choice to additional course of these Paperwork into Nodes. Nodes are extra granular knowledge entities that signify “chunks” of supply Paperwork, which might be textual content chunks, photographs, or different forms of knowledge. In addition they carry metadata and relationship info with different nodes, which could be instrumental in constructing a extra structured and relational index.

Fundamental

To parse Paperwork into Nodes, LlamaIndex gives NodeParser courses. These courses assist in mechanically reworking the content material of Paperwork into Nodes, adhering to a selected construction that may be utilized additional in index development and querying.

This is how you should utilize a SimpleNodeParser to parse your Paperwork into Nodes:

from llama_index.node_parser import SimpleNodeParser

# Assuming paperwork have already been loaded

# Initialize the parser

parser = SimpleNodeParser.from_defaults(chunk_size=1024, chunk_overlap=20)

# Parse paperwork into nodes

nodes = parser.get_nodes_from_documents(paperwork)

On this snippet, SimpleNodeParser.from_defaults() initializes a parser with default settings, and get_nodes_from_documents(paperwork) is used to parse the loaded Paperwork into Nodes.

Superior

Numerous customization choices embrace:

text_splitter(default: TokenTextSplitter)include_metadata(default: True)include_prev_next_rel(default: True)metadata_extractor(default: None)

Textual content Splitter Customization

Customise textual content splitter, utilizing both SentenceSplitter, TokenTextSplitter, or CodeSplitter from llama_index.text_splitter. Examples:

SentenceSplitter:

import tiktoken

from llama_index.text_splitter import SentenceSplitter

text_splitter = SentenceSplitter(

separator=" ", chunk_size=1024, chunk_overlap=20,

paragraph_separator="nnn", secondary_chunking_regex="[^,.;。]+[,.;。]?",

tokenizer=tiktoken.encoding_for_model("gpt-3.5-turbo").encode

)

node_parser = SimpleNodeParser.from_defaults(text_splitter=text_splitter)

TokenTextSplitter:

import tiktoken

from llama_index.text_splitter import TokenTextSplitter

text_splitter = TokenTextSplitter(

separator=" ", chunk_size=1024, chunk_overlap=20,

backup_separators=["n"],

tokenizer=tiktoken.encoding_for_model("gpt-3.5-turbo").encode

)

node_parser = SimpleNodeParser.from_defaults(text_splitter=text_splitter)

CodeSplitter:

from llama_index.text_splitter import CodeSplitter

text_splitter = CodeSplitter(

language="python", chunk_lines=40, chunk_lines_overlap=15, max_chars=1500,

)

node_parser = SimpleNodeParser.from_defaults(text_splitter=text_splitter)

SentenceWindowNodeParser

For particular scope embeddings, make the most of SentenceWindowNodeParser to separate paperwork into particular person sentences, additionally capturing surrounding sentence home windows.

import nltk

from llama_index.node_parser import SentenceWindowNodeParser

node_parser = SentenceWindowNodeParser.from_defaults(

window_size=3, window_metadata_key="window", original_text_metadata_key="original_sentence"

)

Guide Node Creation

For extra management, manually create Node objects and outline attributes and relationships:

from llama_index.schema import TextNode, NodeRelationship, RelatedNodeInfo

# Create TextNode objects

node1 = TextNode(textual content="<text_chunk>", id_="<node_id>")

node2 = TextNode(textual content="<text_chunk>", id_="<node_id>")

# Outline node relationships

node1.relationships[NodeRelationship.NEXT] = RelatedNodeInfo(node_id=node2.node_id)

node2.relationships[NodeRelationship.PREVIOUS] = RelatedNodeInfo(node_id=node1.node_id)

# Collect nodes

nodes = [node1, node2]

On this snippet, TextNode creates nodes with textual content content material whereas NodeRelationship and RelatedNodeInfo outline node relationships.

Allow us to create primary nodes for the PDF and Wikipedia web page paperwork we now have created.

Create Index with Nodes and Paperwork

The core essence of LlamaIndex lies in its capability to construct structured indices over ingested knowledge, represented as both Paperwork or Nodes. This indexing facilitates environment friendly querying over the information. Let’s delve into construct indices with each Doc and Node objects, and what occurs underneath the hood throughout this course of.

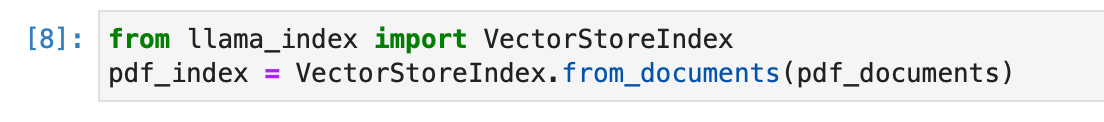

- Constructing Index from Paperwork

This is how one can construct an index straight from Paperwork utilizing the VectorStoreIndex:

from llama_index import VectorStoreIndex

# Assuming docs is your checklist of Doc objects

index = VectorStoreIndex.from_documents(docs)

Several types of indices in LlamaIndex deal with knowledge in distinct methods:

- Abstract Index: Shops Nodes as a sequential chain, and through question time, all Nodes are loaded into the Response Synthesis module if no different question parameters are specified.

- Vector Retailer Index: Shops every Node and a corresponding embedding in a Vector Retailer, and queries contain fetching the top-k most related Nodes.

- Tree Index: Builds a hierarchical tree from a set of Nodes, and queries contain traversing from root nodes right down to leaf nodes.

- Key phrase Desk Index: Extracts key phrases from every Node to construct a mapping, and queries extract related key phrases to fetch corresponding Nodes.

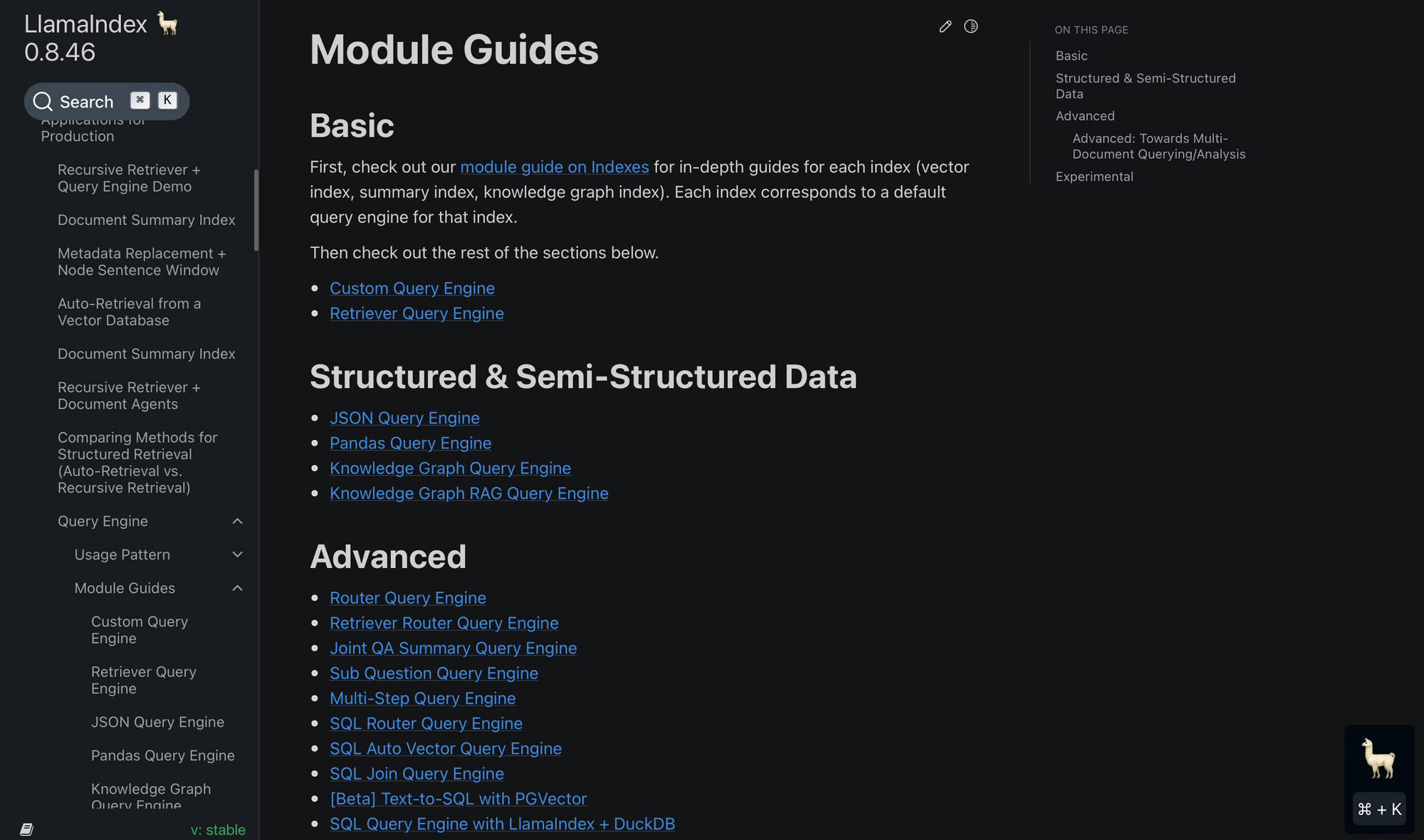

To decide on your index, you must fastidiously consider the module guides right here and make a alternative right here based on your use case.

Beneath the Hood:

- The Paperwork are parsed into Node objects, that are light-weight abstractions over textual content strings that moreover maintain monitor of metadata and relationships.

- Index-specific computations are carried out so as to add Node into the index knowledge construction. For instance:

- For a vector retailer index, an embedding mannequin is known as (both through API or domestically) to compute embeddings for the Node objects.

- For a doc abstract index, an LLM (Language Mannequin) is known as to generate a abstract.

Allow us to create an index for the PDF File utilizing the above code.

- Constructing Index from Nodes

You may also construct an index straight from Node objects, following the parsing of Paperwork into Nodes or guide Node creation:

from llama_index import VectorStoreIndex

# Assuming nodes is your checklist of Node objects

index = VectorStoreIndex(nodes)

Allow us to go forward and create a VectorStoreIndex for the PDF nodes.

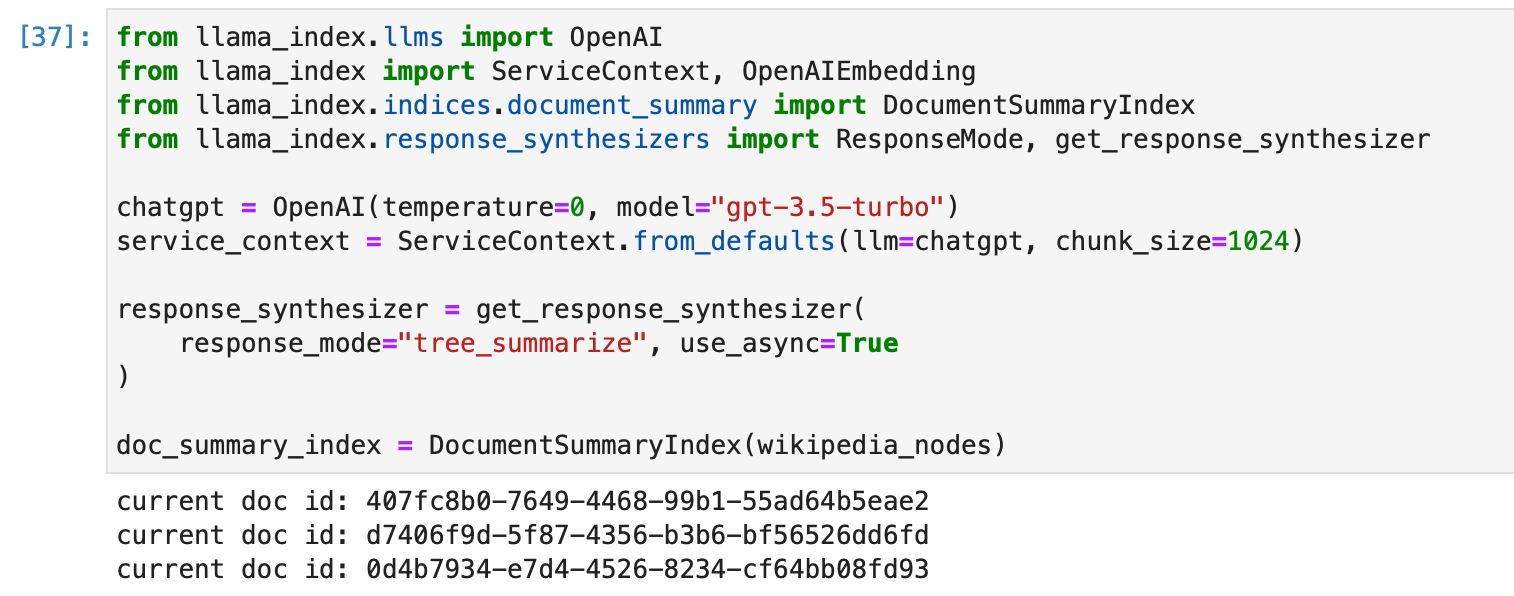

Allow us to now create a abstract index for the Wikipedia nodes. We discover the related index from the checklist of supported indices, and decide on the Doc Abstract Index.

We create the index with some customization as follows –

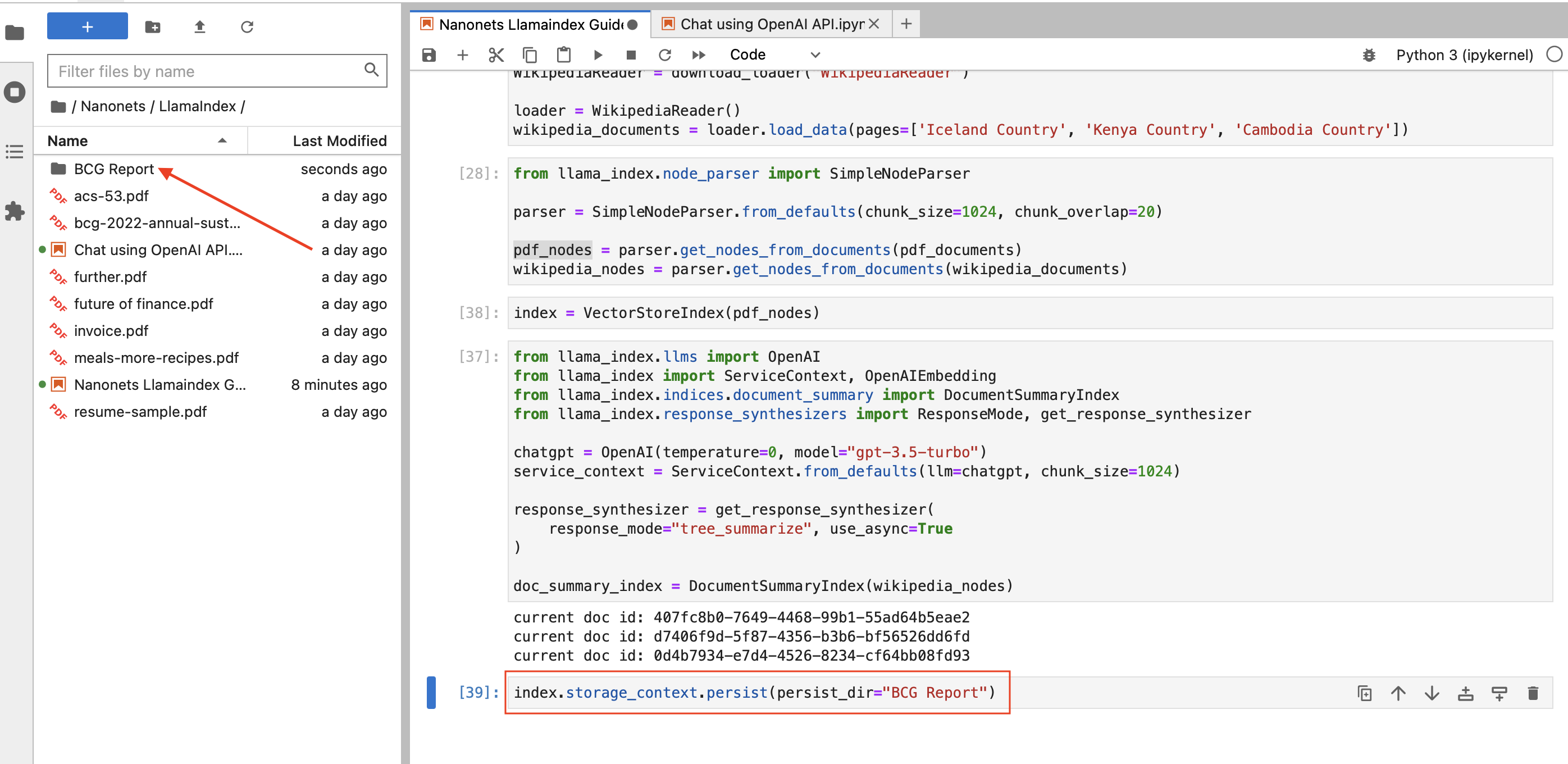

Storing an Index

LlamaIndex’s storage functionality is constructed for adaptability, particularly when coping with evolving knowledge sources. This part outlines the functionalities supplied for managing knowledge storage, together with customization and persistence options.

Persistence (Fundamental)

There may be cases the place you may wish to save the index for future use, and LlamaIndex makes this easy. With the persist() methodology, you may retailer knowledge, and with the load_index_from_storage() methodology, you may retrieve knowledge effortlessly.

# Persisting to disk

index.storage_context.persist(persist_dir="<persist_dir>")

# Loading from disk

from llama_index import StorageContext, load_index_from_storage

storage_context = StorageContext.from_defaults(persist_dir="<persist_dir>")

index = load_index_from_storage(storage_context)

For instance, we are able to save the PDF index as follows –

Storage Elements (Superior)

At its core, LlamaIndex gives customizable storage elements enabling customers to specify the place varied knowledge components are saved. These elements embrace:

- Doc Shops: The repositories for storing ingested paperwork represented as Node objects.

- Index Shops: The locations the place index metadata are saved.

- Vector Shops: The storages for holding embedding vectors.

LlamaIndex is flexible in its storage backend help, with confirmed help for:

- Native filesystem

- AWS S3

- Cloudflare R2

These backends are facilitated by means of the usage of the fsspec library, which permits for quite a lot of storage backends.

For a lot of vector shops, each knowledge and index embeddings are saved collectively, eliminating the necessity for separate doc or index shops. This association additionally auto-handles knowledge persistence, simplifying the method of constructing new indexes or reloading current ones.

LlamaIndex has integrations with varied vector shops that deal with your entire index (vectors + textual content). Examine the information right here.

Creating or reloading an index is illustrated under:

# construct a brand new index

from llama_index import VectorStoreIndex, StorageContext

from llama_index.vector_stores import DeepLakeVectorStore

vector_store = DeepLakeVectorStore(dataset_path="<dataset_path>")

storage_context = StorageContext.from_defaults(vector_store=vector_store)

index = VectorStoreIndex.from_documents(paperwork, storage_context=storage_context)

# reload an current index

index = VectorStoreIndex.from_vector_store(vector_store=vector_store)

To leverage storage abstractions, a StorageContext object must be outlined, as proven under:

from llama_index.storage.docstore import SimpleDocumentStore

from llama_index.storage.index_store import SimpleIndexStore

from llama_index.vector_stores import SimpleVectorStore

from llama_index.storage import StorageContext

storage_context = StorageContext.from_defaults(

docstore=SimpleDocumentStore(),

vector_store=SimpleVectorStore(),

index_store=SimpleIndexStore(),

)

Study extra about storage and utilizing customized storage components right here.

Utilizing Index to Question Information

After having established a well-structured index utilizing LlamaIndex, the following pivotal step is querying this index to extract significant insights or solutions to particular inquiries. This section elucidates the method and strategies out there for querying the information listed in LlamaIndex.

Earlier than diving into querying, guarantee that you’ve a well-constructed index as mentioned within the earlier part. Your index might be constructed on paperwork or nodes, and might be a single index or composed of a number of indices.

Excessive-Stage Question API

LlamaIndex gives a high-level API that facilitates easy querying, splendid for frequent use circumstances.

# Assuming 'index' is your constructed index object

query_engine = index.as_query_engine()

response = query_engine.question("your_query")

print(response)

On this simplistic strategy, the as_query_engine() methodology is utilized to create a question engine out of your index, and the question() methodology to execute a question.

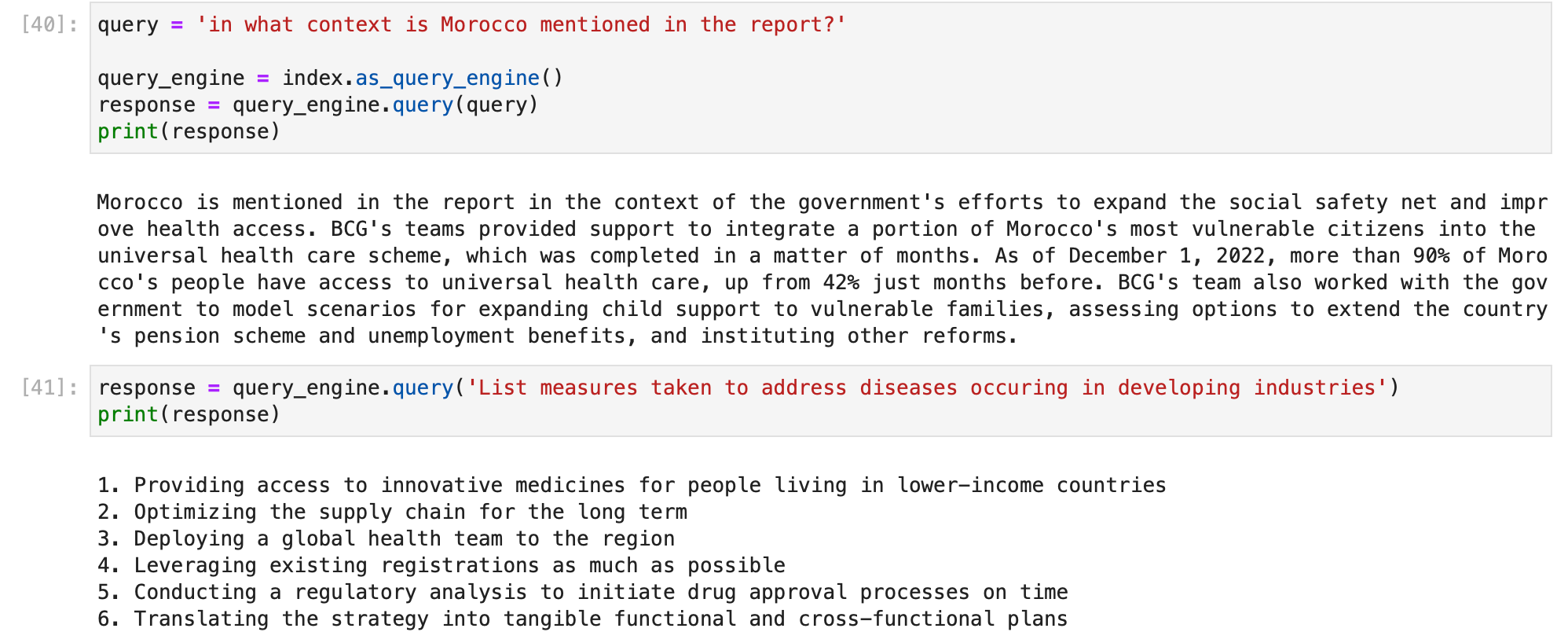

We will do this out on our PDF index –

By default, index.as_query_engine() creates a question engine with the desired default settings in LlamaIndex.

You may select your individual question engine primarily based in your use case from the checklist right here and use that to question your index –

Module Guides – LlamaIndex 🦙 0.8.46

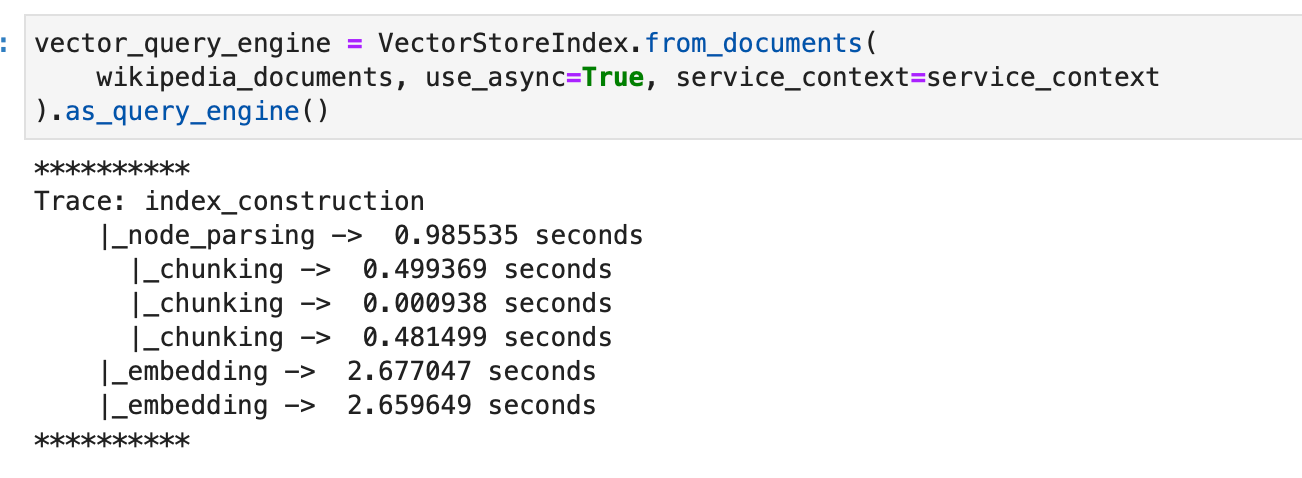

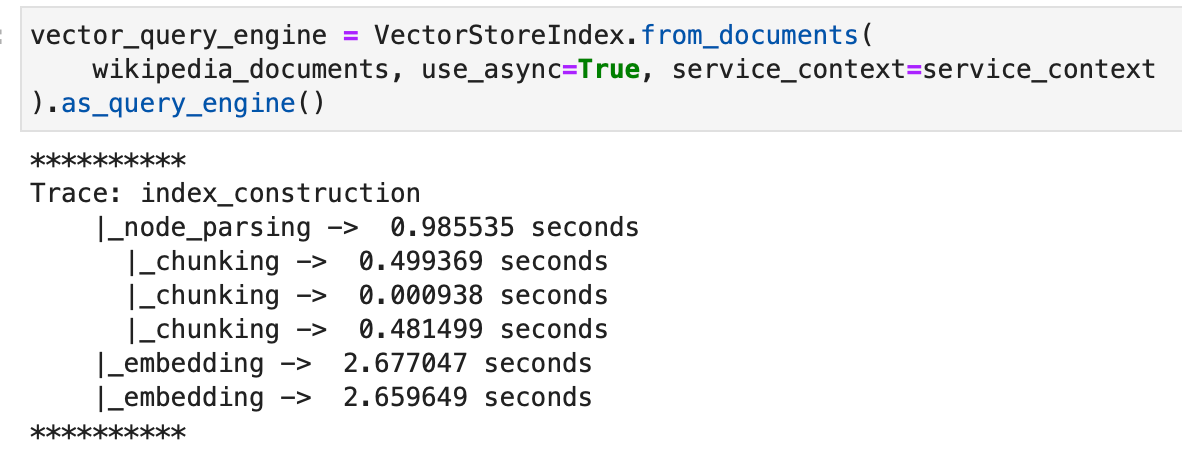

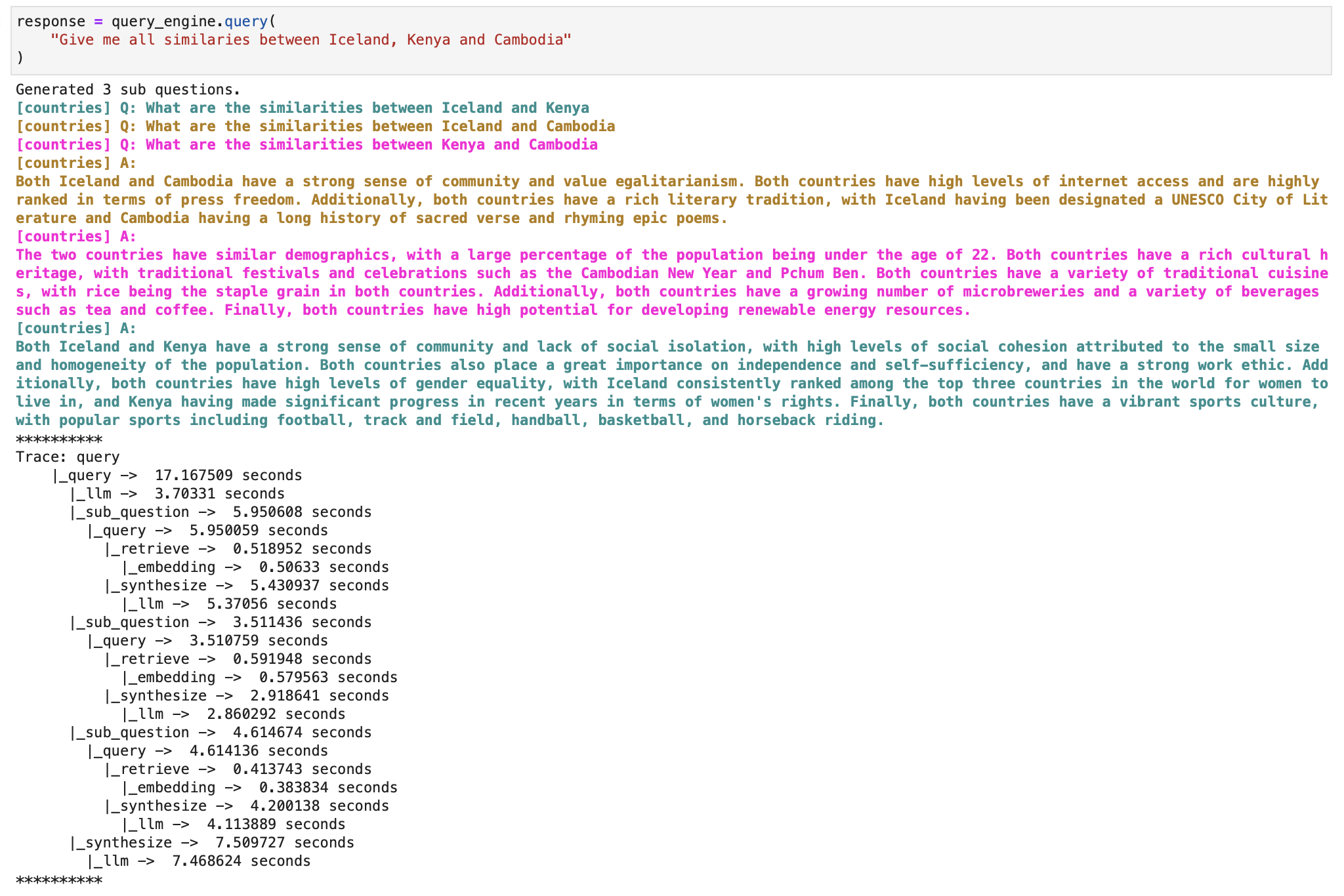

For instance, allow us to use a sub query question engine to deal with the issue of answering a posh question utilizing a number of knowledge sources. It first breaks down the complicated question into sub questions for every related knowledge supply, then collect all of the intermediate reponses and synthesizes a closing response. We’ll apply it to our Wikipedia index.

We’ll comply with the sub query question engine documentation.

Allow us to import required libraries and set context variable to make sure we are able to print the subtasks undertaken by the question engine as a substitute of simply printing the ultimate response.

import nest_asyncio

from llama_index import VectorStoreIndex, SimpleDirectoryReader

from llama_index.instruments import QueryEngineTool, ToolMetadata

from llama_index.query_engine import SubQuestionQueryEngine

from llama_index.callbacks import CallbackManager, LlamaDebugHandler

from llama_index import ServiceContext

nest_asyncio.apply()

# We're utilizing the LlamaDebugHandler to print the hint of the sub questions captured by the SUB_QUESTION callback occasion sort

llama_debug = LlamaDebugHandler(print_trace_on_end=True)

callback_manager = CallbackManager([llama_debug])

service_context = ServiceContext.from_defaults(

callback_manager=callback_manager

)

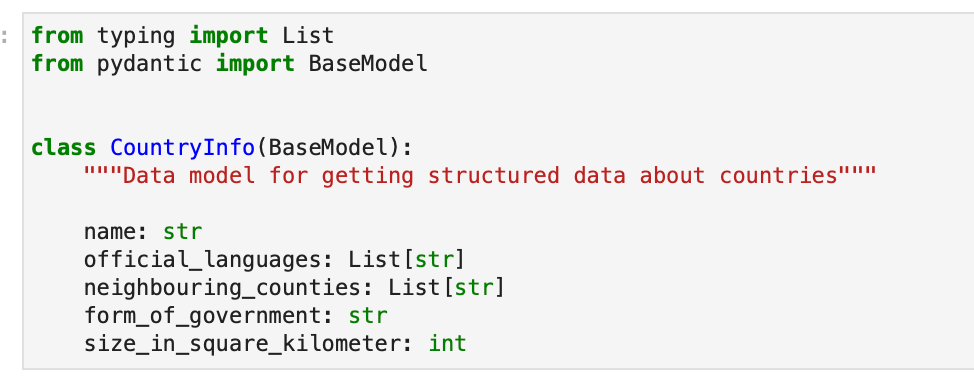

We first create a primary vector index as instructed by the documentation.

Now we create the sub query question engine.

Now, querying and asking for the response traces the subquestions that the question engine internally computed to get to the ultimate response.

And the ultimate response given by the engine can now be printed.

Join your knowledge and apps with Nanonets AI Assistant to speak with knowledge, deploy customized chatbots & brokers, and create AI workflows that carry out duties in your apps.

Low-Stage Composition API

In right now’s data-driven world, structured knowledge is pivotal for a streamlined workflow. LlamaIndex understands this and faucets into the capabilities of Massive Language Fashions (LLMs) to ship structured outcomes. Let’s discover how.

Structured outcomes aren’t only a fancy approach of presenting knowledge; they’re essential for functions that depend upon the precision and stuck construction of parsed values.

LLMs may give structured outputs in two methods:

Technique 1 : Pydantic Applications

With operate calling APIs, you get a naturally structured end result, which then will get molded into the specified format, utilizing Pydantic Applications. These nifty modules convert a immediate right into a well-structured output utilizing a Pydantic object. They will both name features or use textual content completions together with output parsers. Plus, they gel nicely with search instruments. LlamaIndex additionally provides ready-to-use Pydantic applications that change sure inputs into particular output varieties, like knowledge tables.

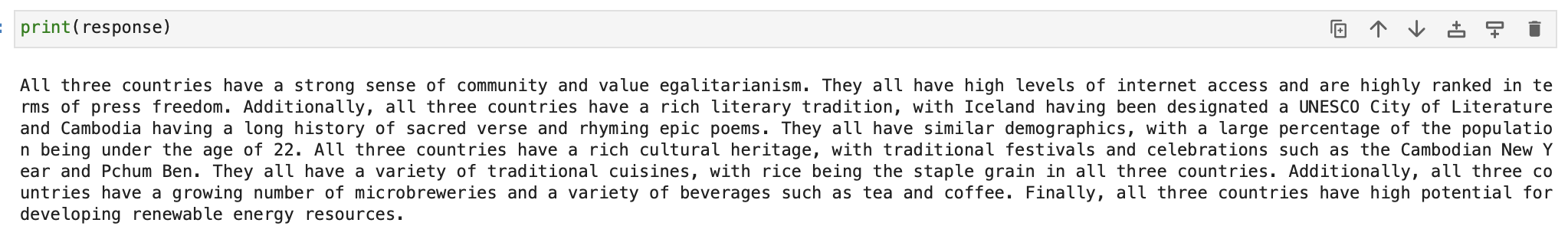

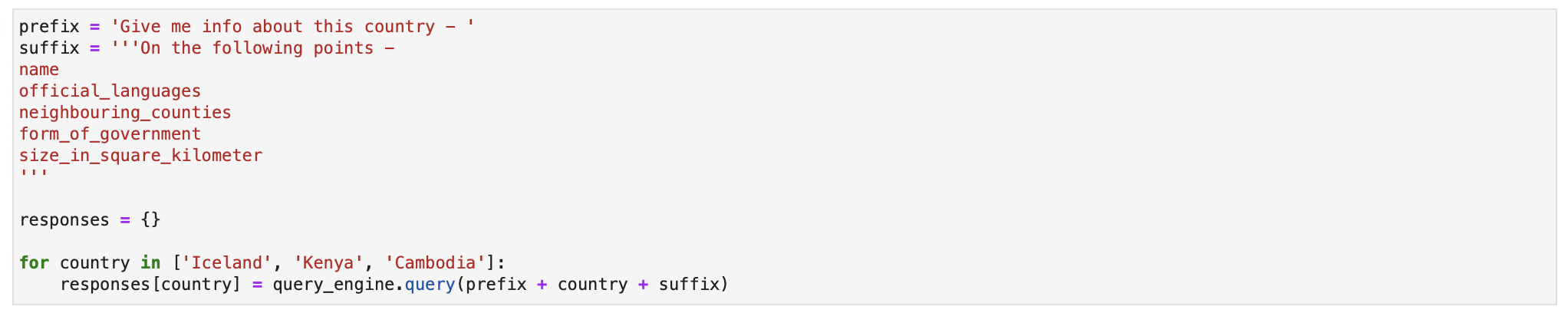

Allow us to use the Pydantic Applications documentation to extract structured knowledge about these nations from the unstructured Wikipedia articles.

We create our pydantic output object –

We then create our index utilizing the wikipedia doc objects.

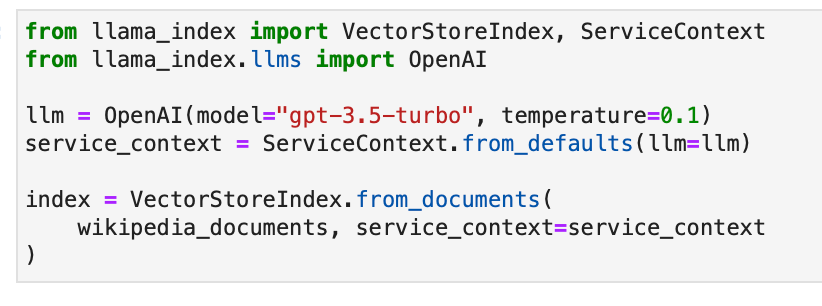

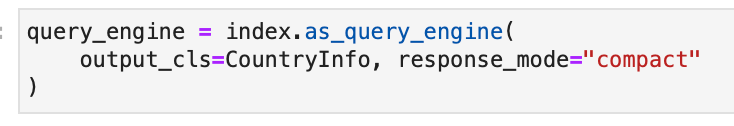

We provoke our question engine and specify the Pydantic output class.

We will now count on structured response from the question engine. Allow us to retrieve this info for the three nations.

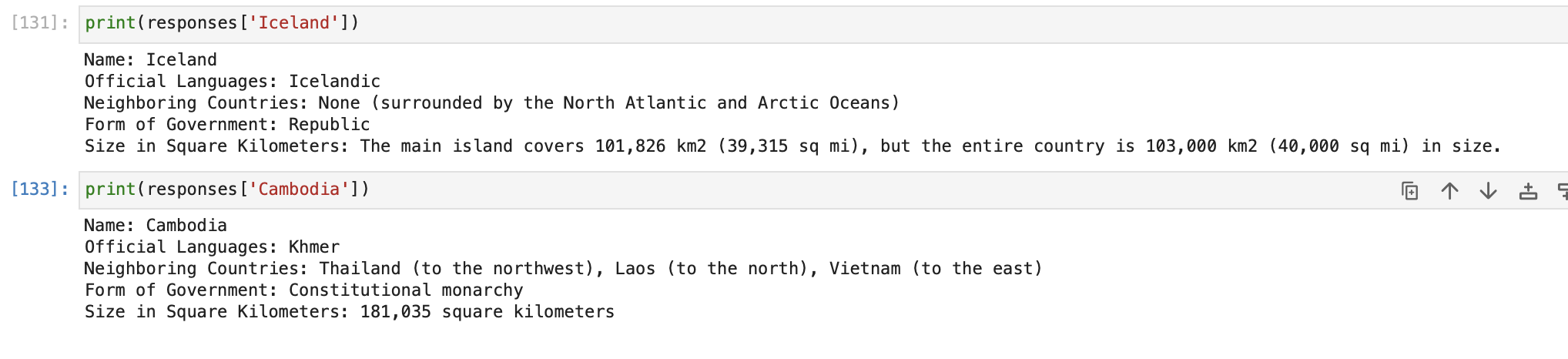

Allow us to examine the responses object now.

Keep in mind, whereas any LLM can technically produce these structured outputs, integration outdoors of OpenAI LLMs continues to be a piece in progress.

Technique 2 : Output Parsers

We will additionally use generic completion APIs, the place textual content prompts dictate inputs and outputs. Right here, the output parser ensures the ultimate product aligns with the specified construction, guiding the LLM earlier than and after its activity. That is accomplished with the assistance of Output Parsers, which acts as gatekeepers simply earlier than the ultimate response is generated. They sit earlier than and after an LLM textual content response and guarantee every little thing’s so as.

Nonetheless, in case you’re utilizing LLM features mentioned above that already give structured outputs, you will not want these.

We’ll comply with the Output Parsers documentation right here.

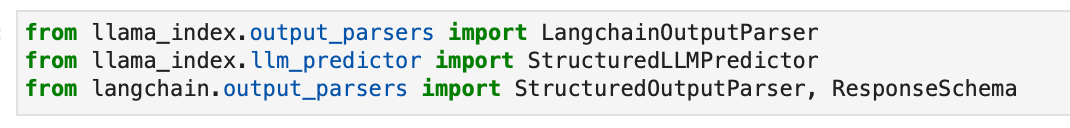

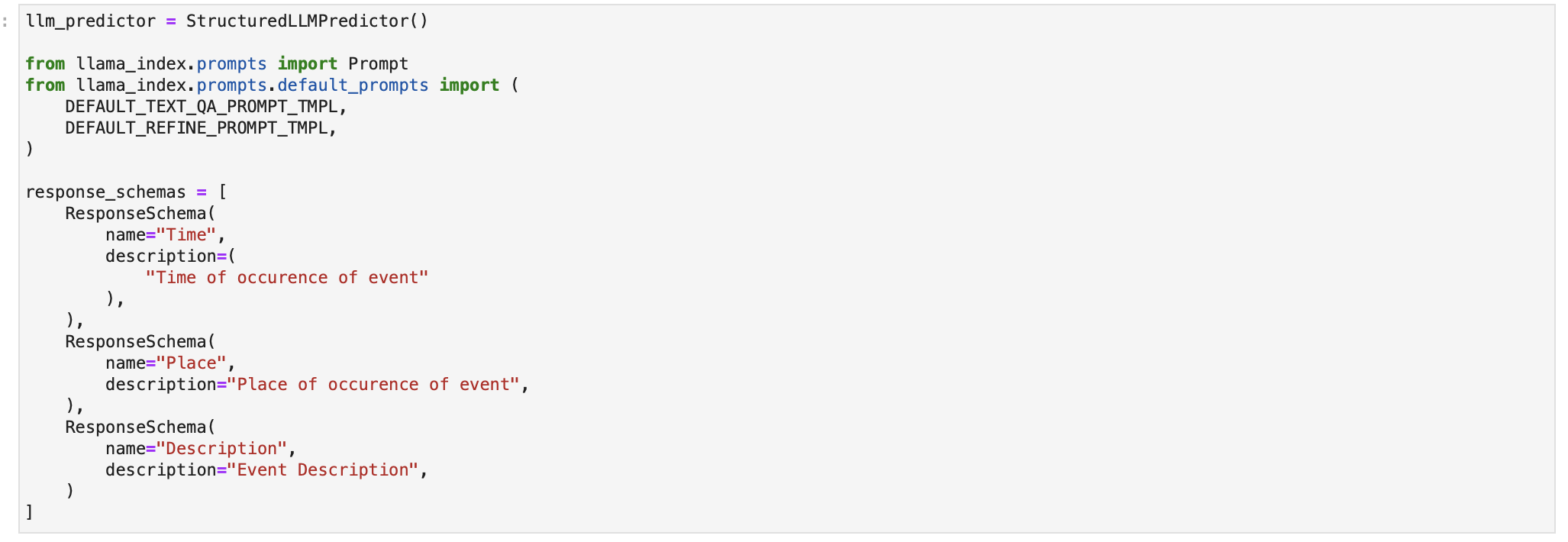

Allow us to import the LangChain output parser now.

We now outline the structured LLM and the response format as proven within the documentation.

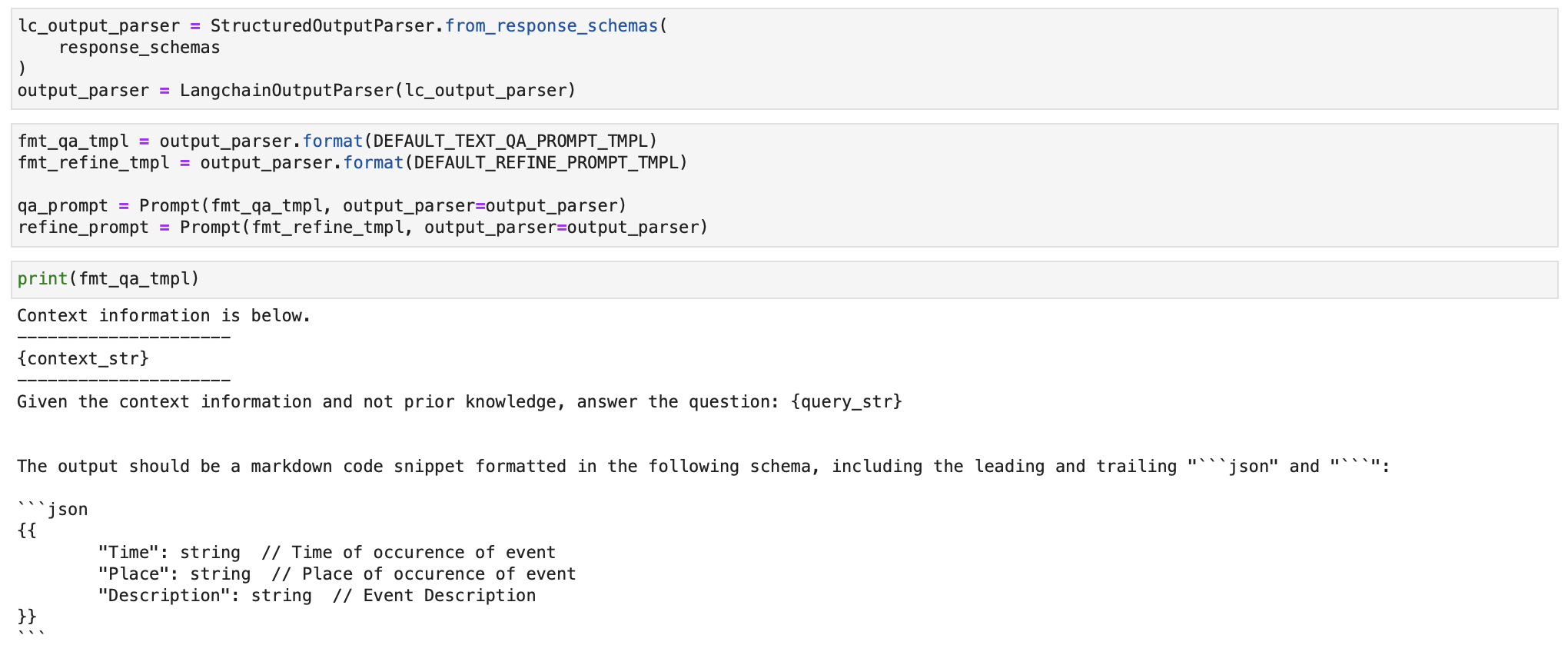

We outline the output parser and it is question template utilizing the response_schemas outlined above.

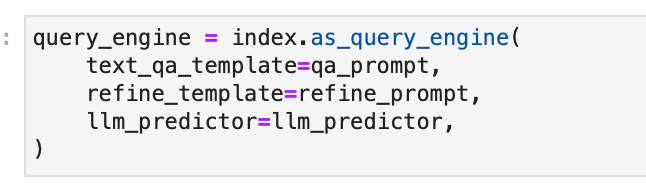

We outline the question engine and cross the structured output parser template to it whereas creating it.

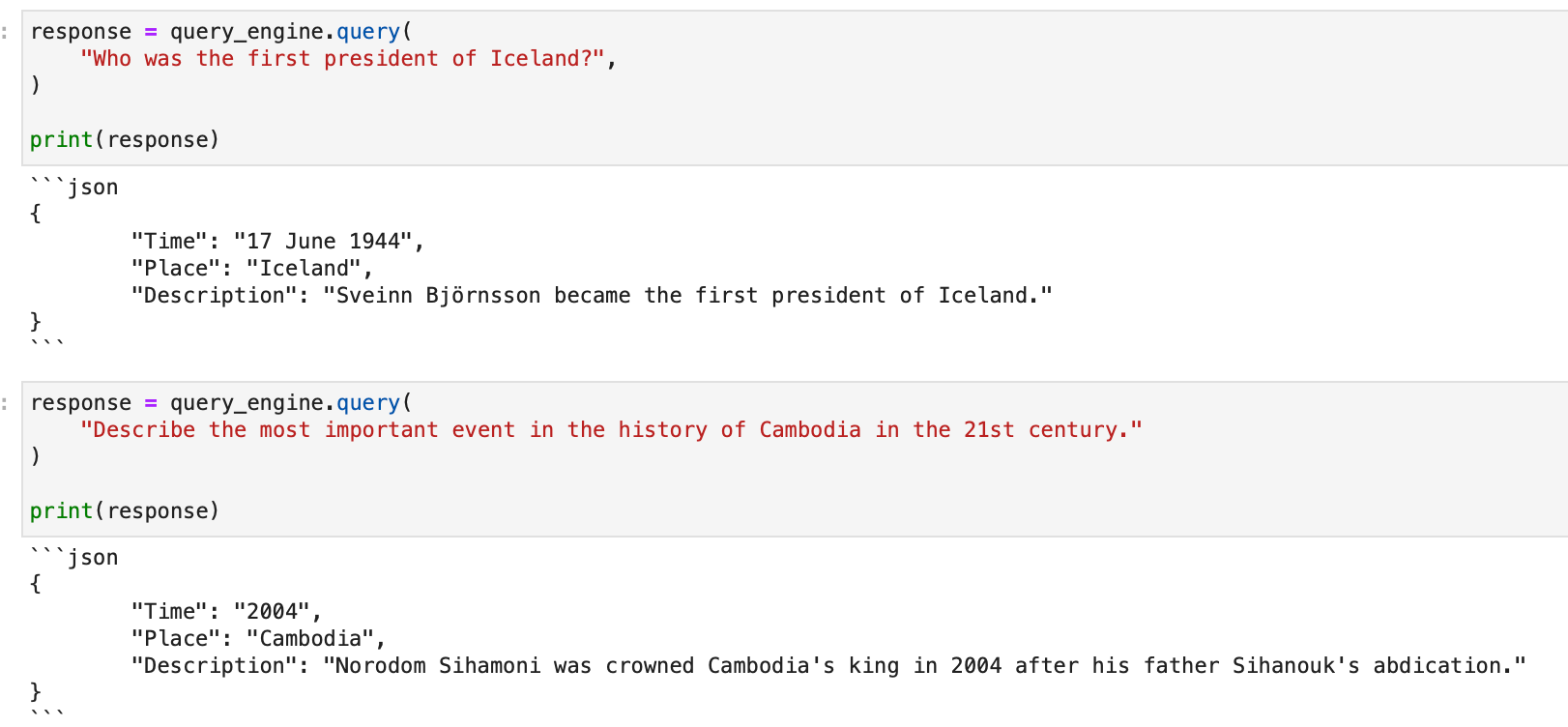

Operating any question now fetches a structured json output!

Structured outputs with LlamaIndex make parsing and downstream processes a breeze, guaranteeing you get essentially the most out of your LLMs.

Join your knowledge and apps with Nanonets AI Assistant to speak with knowledge, deploy customized chatbots & brokers, and create AI workflows that carry out duties in your apps.

Utilizing Index to Chat with Information

Partaking in a dialog along with your knowledge takes querying a step additional. LlamaIndex introduces the idea of a Chat Engine to facilitate a extra interactive and contextual dialogue along with your knowledge. This part elaborates on organising and using the Chat Engine for a richer interplay along with your listed knowledge.

Understanding the Chat Engine

A Chat Engine gives a high-level interface to have a back-and-forth dialog along with your knowledge, versus a single question-answer interplay facilitated by the Question Engine. By sustaining a historical past of the dialog, the Chat Engine can present solutions which might be contextually conscious of earlier interactions.

Tip: For standalone queries with out dialog historical past, use Question Engine.

Getting Began with Chat Engine

Initiating a dialog is easy. Right here’s how one can get began:

# Construct a chat engine from the index

chat_engine = index.as_chat_engine()

# Begin a dialog

response = chat_engine.chat("Inform me a joke.")

# For streaming responses

streaming_response = chat_engine.stream_chat("Inform me a joke.")

for token in streaming_response.response_gen:

print(token, finish="")

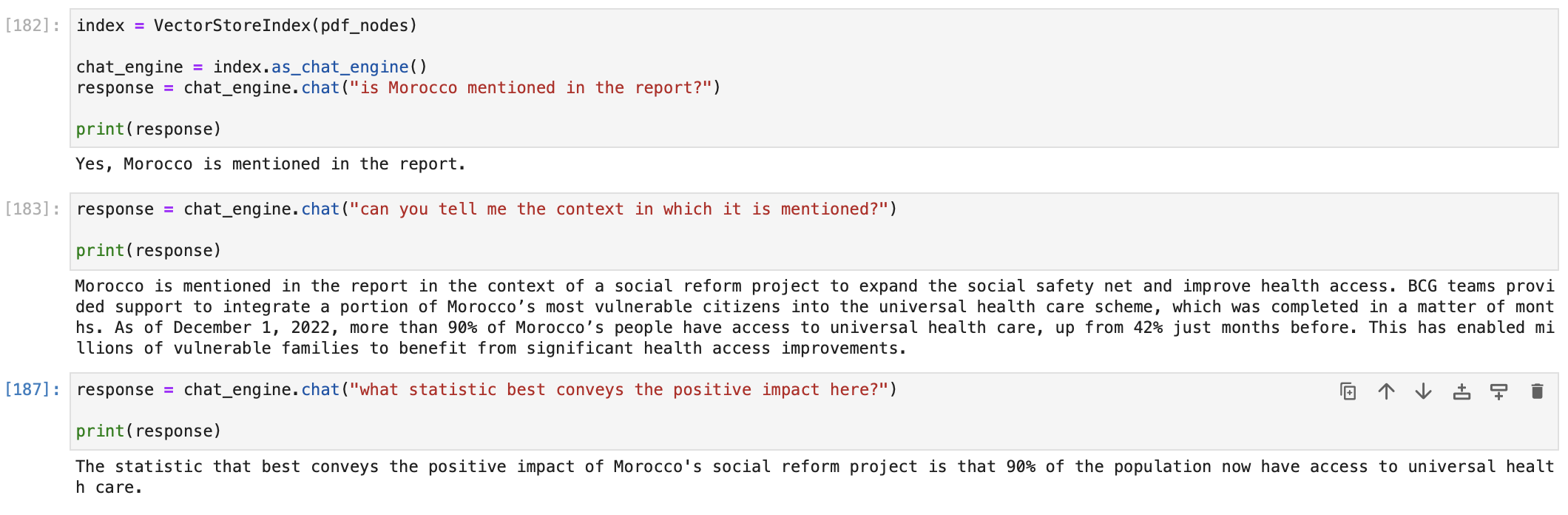

For instance, we are able to begin a chat with our PDF doc on the BCG Annual Sustainability Report as follows.

You may select the chat engine primarily based in your use case. LlamaIndex provides a variety of chat engine implementations, catering to totally different wants and ranges of sophistication. These engines are designed to facilitate conversations and interactions with customers, every providing a singular set of options.

- SimpleChatEngine

The SimpleChatEngine is a primary chat mode that doesn’t depend on a information base. It gives a place to begin for chatbot growth. Nonetheless, it won’t deal with complicated queries nicely as a result of its lack of a information base.

- ReAct Agent Mode

ReAct is an agent-based chat mode constructed on prime of a question engine over your knowledge. It follows a versatile strategy the place the agent decides whether or not to make use of the question engine instrument to generate responses or not. This mode is flexible however extremely depending on the standard of the language mannequin (LLM) and will require extra management to make sure correct responses. You may customise the LLM utilized in ReAct mode.

Implementation Instance:

# Load knowledge and construct index

from llama_index import VectorStoreIndex, SimpleDirectoryReader, ServiceContext

from llama_index.llms import OpenAI

service_context = ServiceContext.from_defaults(llm=OpenAI())

knowledge = SimpleDirectoryReader(input_dir="../knowledge/paul_graham/").load_data()

index = VectorStoreIndex.from_documents(knowledge, service_context=service_context)

# Configure chat engine

chat_engine = index.as_chat_engine(chat_mode="react", verbose=True)

# Chat along with your knowledge

response = chat_engine.chat("What did Paul Graham do in the summertime of 1995?")

- OpenAI Agent Mode

OpenAI Agent Mode leverages OpenAI’s highly effective language fashions like GPT-3.5 Turbo. It is designed for normal interactions and may carry out particular features like querying a information base. It is significantly appropriate for a variety of chat functions.

Implementation Instance:

# Load knowledge and construct index

from llama_index import VectorStoreIndex, SimpleDirectoryReader, ServiceContext

from llama_index.llms import OpenAI

service_context = ServiceContext.from_defaults(llm=OpenAI(mannequin="gpt-3.5-turbo-0613"))

knowledge = SimpleDirectoryReader(input_dir="../knowledge/paul_graham/").load_data()

index = VectorStoreIndex.from_documents(knowledge, service_context=service_context)

# Configure chat engine

chat_engine = index.as_chat_engine(chat_mode="openai", verbose=True)

# Chat along with your knowledge

response = chat_engine.chat("Hello")

- Context Mode

The ContextChatEngine is a straightforward chat mode constructed on prime of a retriever over your knowledge. It retrieves related textual content from the index primarily based on the person’s message, units this retrieved textual content as context within the system immediate, and returns a solution. This mode is right for questions associated to the information base and normal interactions.

Implementation Instance:

# Load knowledge and construct index

from llama_index import VectorStoreIndex, SimpleDirectoryReader

knowledge = SimpleDirectoryReader(input_dir="../knowledge/paul_graham/").load_data()

index = VectorStoreIndex.from_documents(knowledge)

# Configure chat engine

from llama_index.reminiscence import ChatMemoryBuffer

reminiscence = ChatMemoryBuffer.from_defaults(token_limit=1500)

chat_engine = index.as_chat_engine(

chat_mode="context",

reminiscence=reminiscence,

system_prompt=(

"You're a chatbot, capable of have regular interactions, in addition to speak"

" about an essay discussing Paul Graham's life."

),

)

# Chat along with your knowledge

response = chat_engine.chat("Hiya!")

- Condense Query Mode

The Condense Query mode generates a standalone query from the dialog context and the final message. It then queries the question engine with this condensed query to supply a response. This mode is appropriate for questions straight associated to the information base.

Implementation Instance:

# Load knowledge and construct index

from llama_index import VectorStoreIndex, SimpleDirectoryReader

knowledge = SimpleDirectoryReader(input_dir="../knowledge/paul_graham/").load_data()

index = VectorStoreIndex.from_documents(knowledge)

# Configure chat engine

chat_engine = index.as_chat_engine(chat_mode="condense_question", verbose=True)

# Chat along with your knowledge

response = chat_engine.chat("What did Paul Graham do after YC?")

Configuring the Chat Engine

LlamaIndex Information Brokers take pure language as enter, and carry out actions as a substitute of producing responses.

The essence of developing proficient knowledge brokers lies within the artwork of instrument abstractions.

However what precisely is a instrument on this context? Consider Instruments as API interfaces, tailor-made for agent interactions relatively than human touchpoints.

Core Ideas:

- Instrument: At its primary stage, a Instrument comes with a generic interface and a few elementary metadata like title, description, and performance schema.

- Instrument Spec: This dives deeper into the API particulars. It outlines a complete service API specification, able to be translated into an assortment of Instruments.

There are totally different flavors of Instruments out there:

- FunctionTool: Remodel any user-defined operate right into a Instrument. Plus, it could actually well infer the operate’s schema.

- QueryEngineTool: Wraps round an current question engine, and given our agent abstractions are derived from BaseQueryEngine, this instrument may also embrace brokers (which we’ll talk about later).

You may both customized design LlamaHub Instrument Specs and Instruments or effortlessly import them from the llama-hub package deal. Integrating them into brokers is easy.

For an in depth assortment of Instruments and Instrument Specs, make your technique to LlamaHub.

Llama Hub

A hub of knowledge loaders for GPT Index and LangChain

Information Brokers in LlamaIndex are powered by Language Studying Fashions (LLMs) and act as clever information employees over your knowledge, executing each “learn” and “write” operations. They automate search and retrieval throughout various knowledge varieties—unstructured, semi-structured, and structured. In contrast to our question engines which solely “learn” from a static knowledge supply, Information Brokers can dynamically ingest, modify, and work together with knowledge throughout varied instruments. They will name exterior service APIs, course of the returned knowledge, and retailer it for future reference.

The 2 constructing blocks of Information Brokers are:

- A Reasoning Loop: Dictates the agent’s decision-making course of on which instruments to make use of, their sequence, and the parameters for every instrument name primarily based on the enter activity.

- Instrument Abstractions: A set of APIs or Instruments that the agent interacts with to fetch info or alter states.

The kind of reasoning loop depends upon the agent; supported varieties embrace OpenAI Perform agent and a ReAct agent (which operates throughout any chat/textual content completion endpoint).

This is use an OpenAI Perform API-based Information Agent:

from llama_index.agent import OpenAIAgent

from llama_index.llms import OpenAI

... # import and outline instruments

llm = OpenAI(mannequin="gpt-3.5-turbo-0613")

agent = OpenAIAgent.from_tools(instruments, llm=llm, verbose=True)

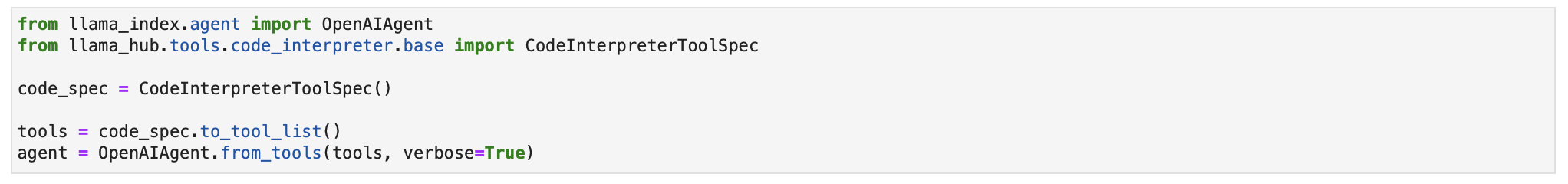

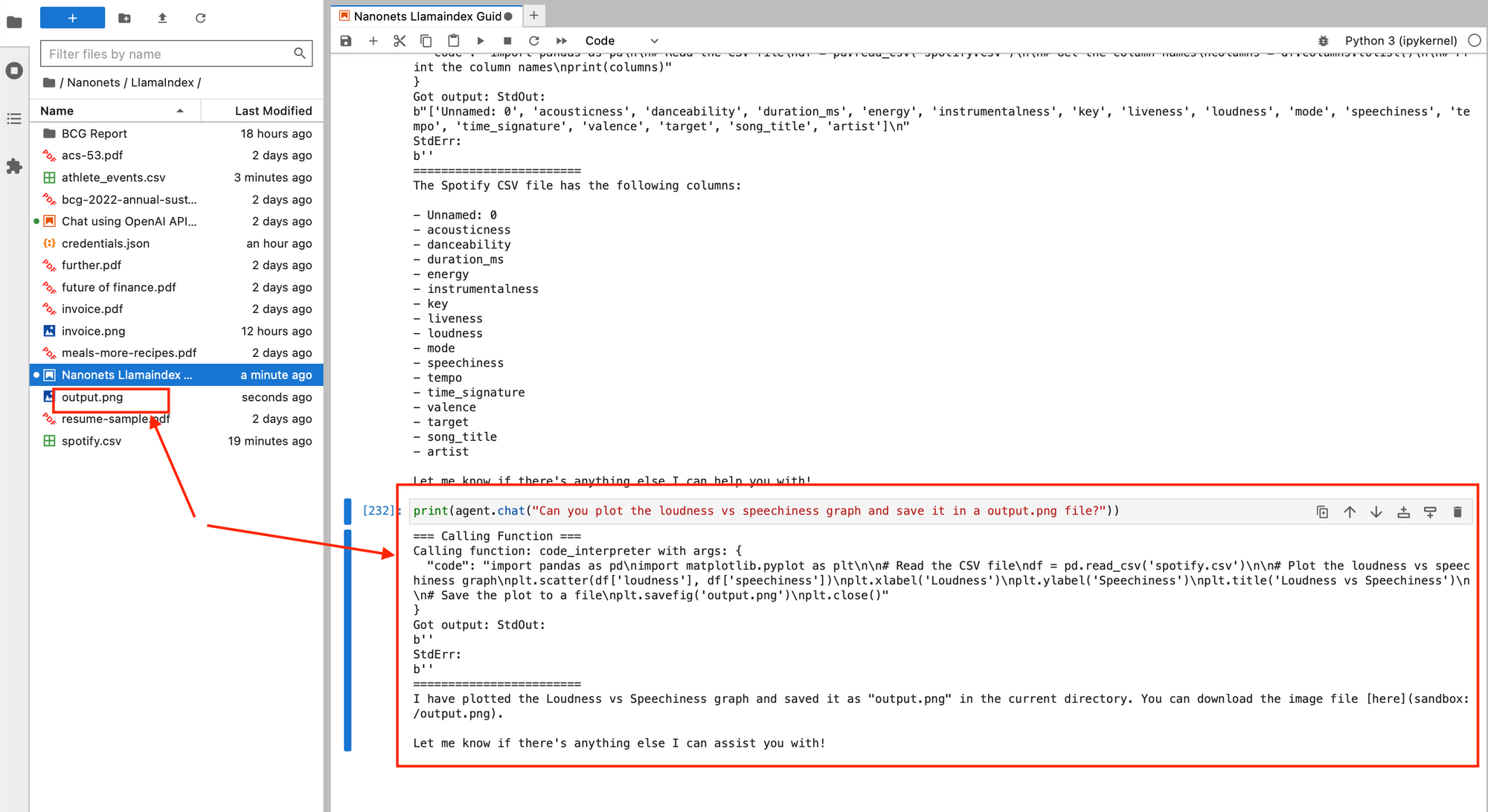

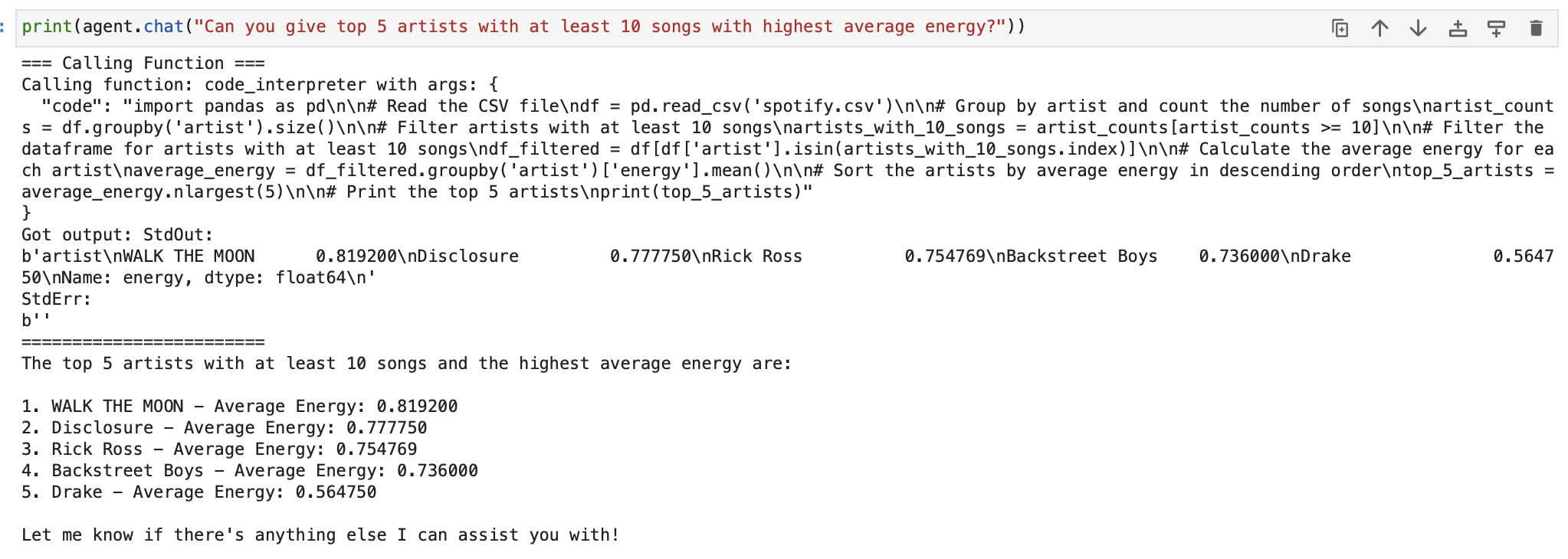

Now, allow us to go forward and use the Code Interpreter instrument out there in LlamaHub to write down and execute code straight by giving pure language directions. We’ll use this Spotify dataset (which is a .csv file) and carry out knowledge evaluation by making our agent execute python code to learn and manipulate the information in pandas.

We first import the instrument.

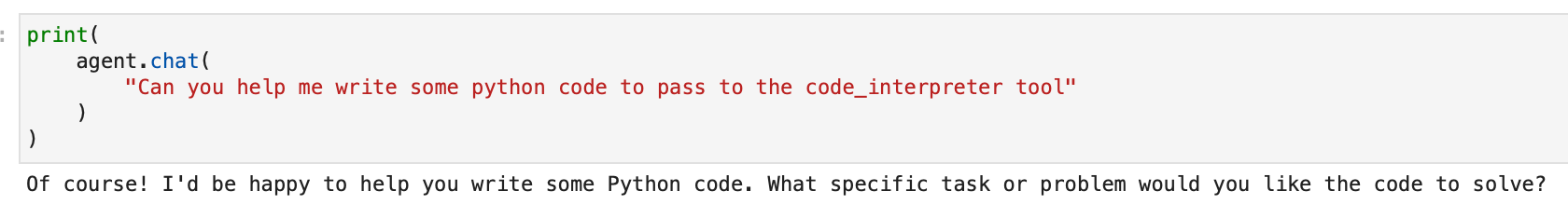

Let’s begin chatting.

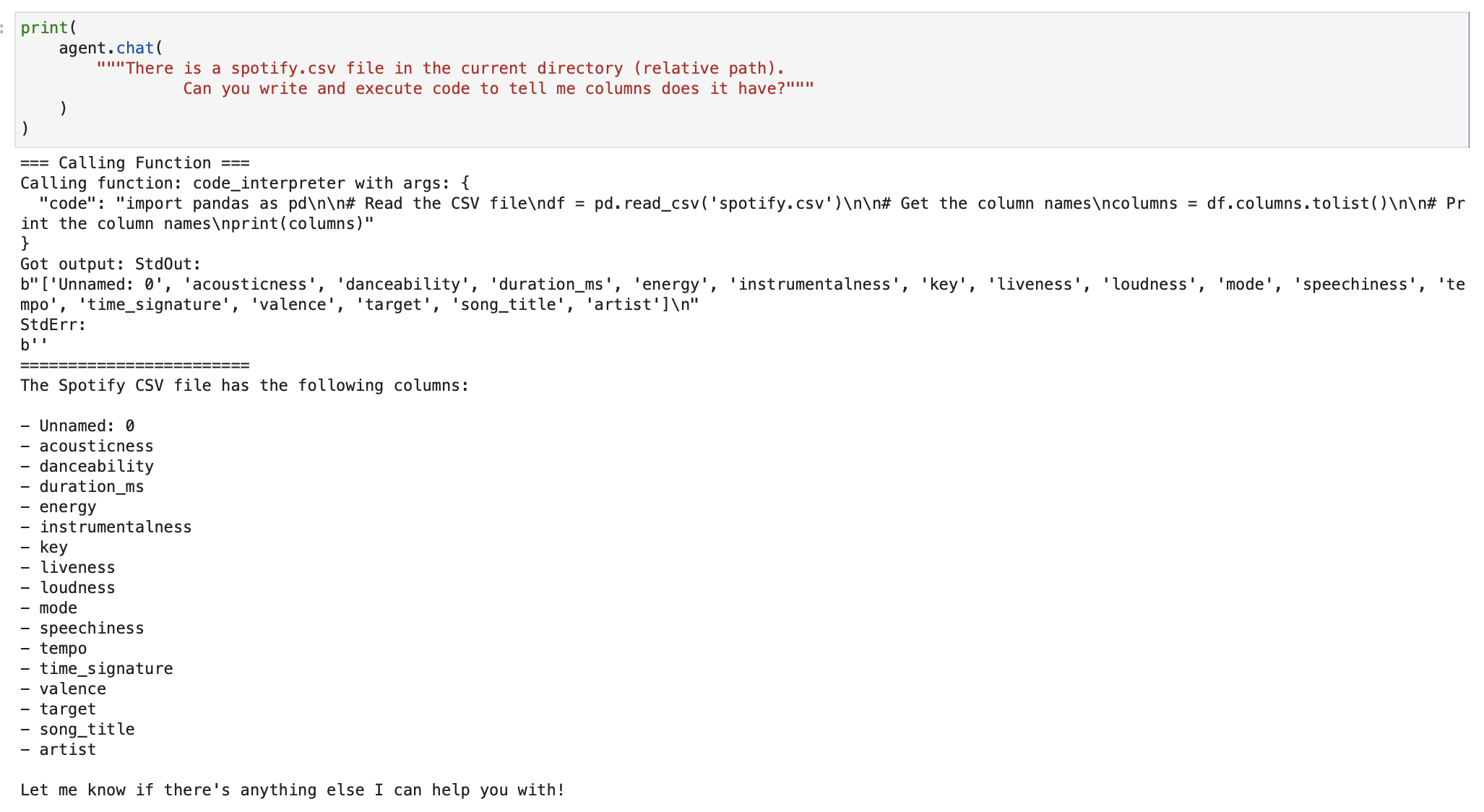

We first ask it to fetch the checklist of columns. Our agent executes python code and makes use of pandas to learn the column names.

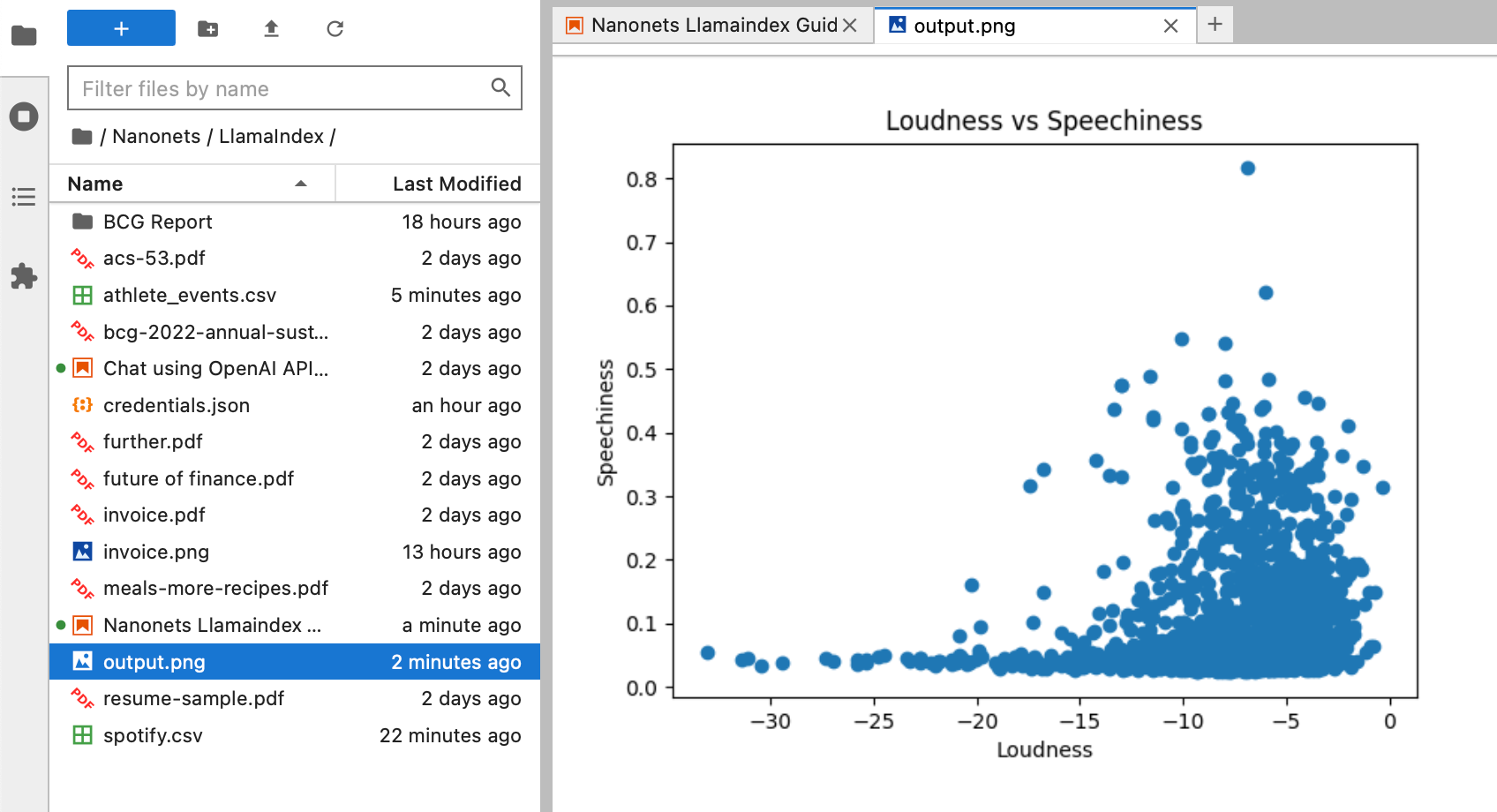

We now ask it to plot a graph of loudness vs ‘speechiness’ and put it aside in a output.png file on our system, all by simply chatting with our agent.

We will carry out EDA intimately as nicely.

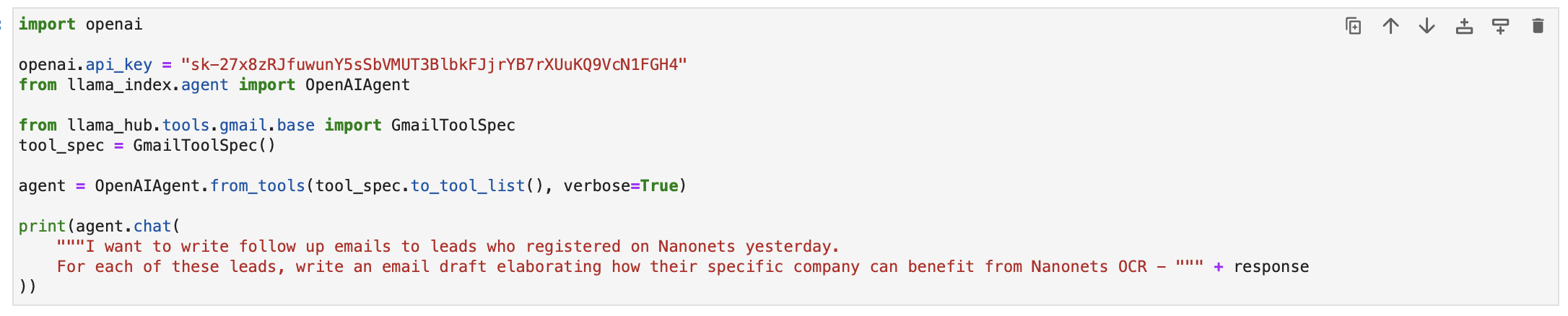

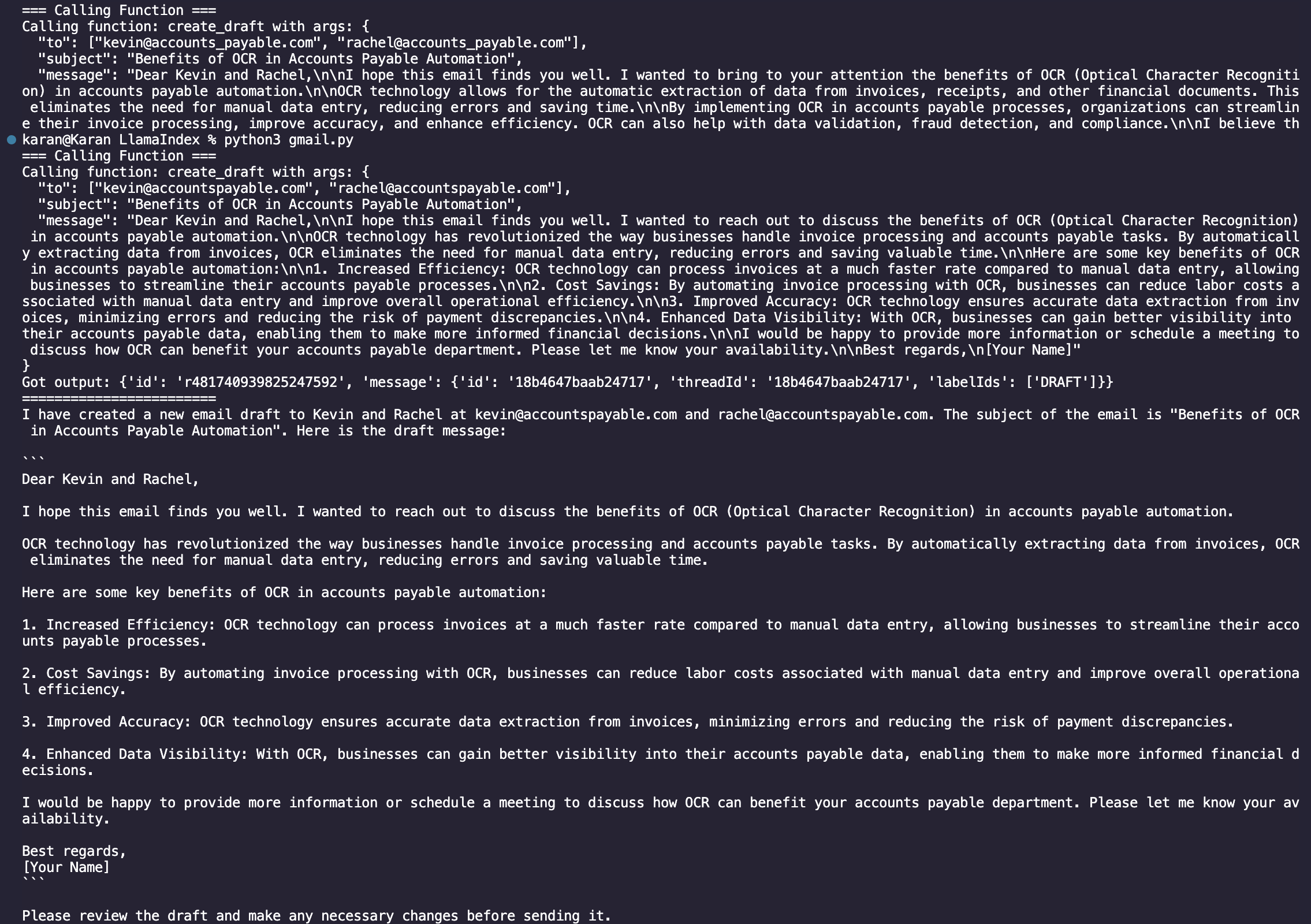

Now, allow us to go forward and use one other instrument to ship emails from our Gmail account with an agent utilizing pure language enter.

We use the information connector with Hubspot from LlamaHub to fetch a structured checklist of leads which had been created yesterday.

We now go to the GmailSpecTool Documentation in LlamaHub to create a Gmail Agent to write down and ship sooner or later follow-up emails to those of us.

We begin by making a credentials.json file by following the documentation and add it to our working listing.

We then proceed to supply the e-mail writing immediate.

Upon operating the code, we are able to see the execution chain writing the emails and saving them as drafts in your Gmail account.

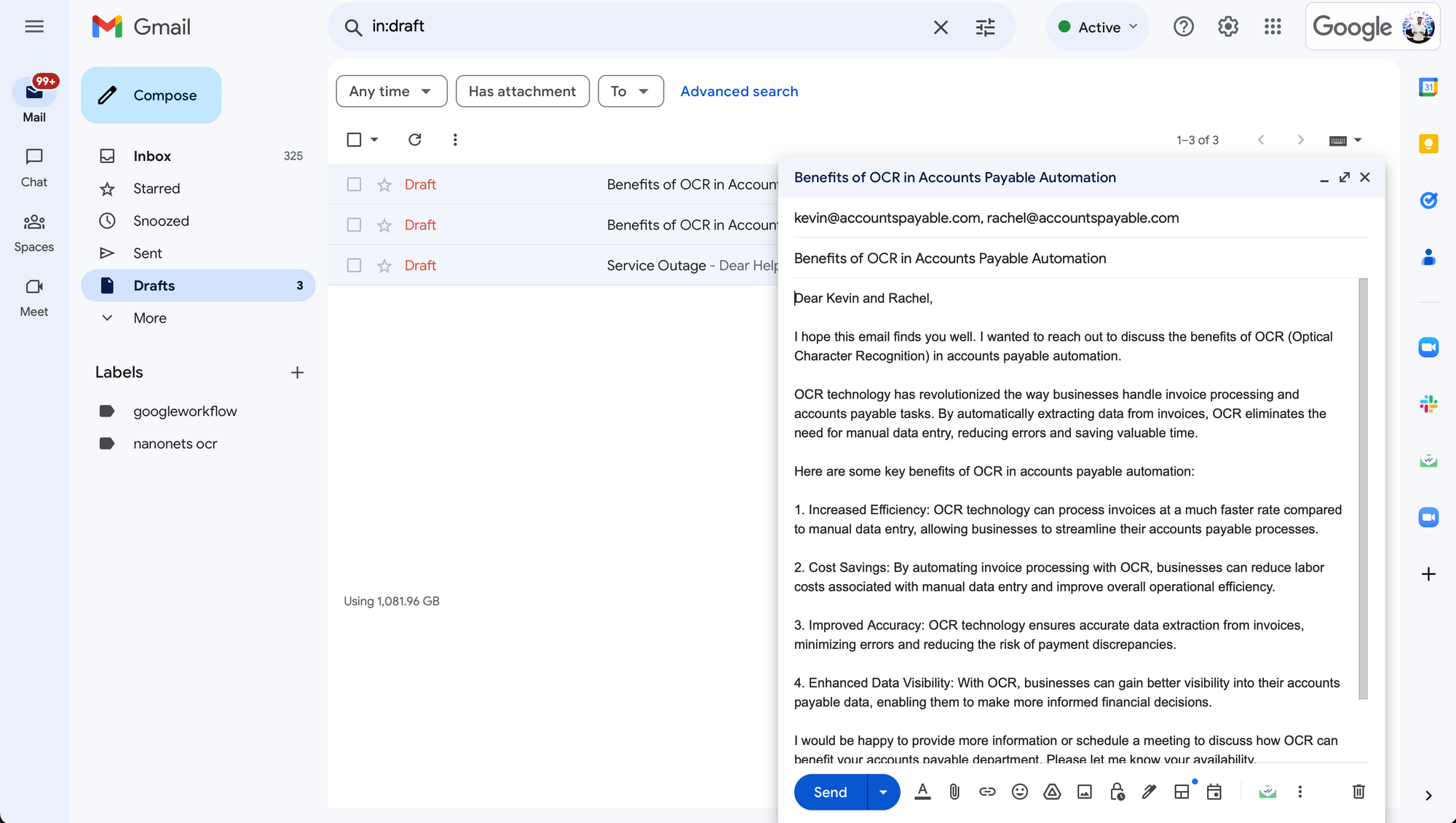

Once we go to our Gmail Draft field, we are able to see the draft that the agent created!

In truth, we may have additionally scheduled this script to run each day and instructed the Gmail agent to ship these emails straight as a substitute of saving them as drafts, leading to a end-to-end totally automated workflow that –

- connects with hubspot each day to fetch yesterday’s leads

- makes use of the area from the e-mail addresses to create customized comply with up emails

- sends the emails out of your Gmail account straight.

Thus, along with querying and chatting with knowledge, LlamaIndex could be even be used to completely execute duties by interacting with functions and knowledge sources.

And that is a wrap!

Join your knowledge and apps with Nanonets AI Assistant to speak with knowledge, deploy customized chatbots & brokers, and create AI workflows that carry out duties in your apps.

Go Past LlamaIndex with Nanonets

LlamaIndex is a superb instrument to attach your knowledge, no matter it is format, with LLMs and harness their prowess to work together with it. We have now seen use pure language along with your knowledge and apps to be able to generate responses / carry out duties.

Nonetheless, for companies, utilizing LlamaIndex for enterprise functions may pose just a few issues –

- Whereas LlamaHub is a superb repository to search out a big number of knowledge connectors, this checklist continues to be not exhaustive and misses out on offering connections with a few of the main workspace apps.

- This development is much more more and more prevalent within the context of instruments, which take pure language as enter to carry out duties (write and ship emails, create CRM entries, execute SQL queries and fetch outcomes, and so forth). This limits the variety of supported apps and methods wherein the brokers can work together with them.

- Finetuning the configuration of every factor of the LlamaIndex pipeline (retrievers, synthesizers, indices, and so forth) is a cumbersome course of. On prime of that, figuring out the perfect pipeline for a given dataset and activity is time consuming and never all the time intuitive.

- Every activity wants a singular implementation. There isn’t a one-stop resolution for connecting your knowledge with LLMs.

- LlamaIndex fetches static knowledge with knowledge connectors, which isn’t up to date with new knowledge flowing into the supply database.

Enter Nanonets AI Assistant.

Nanonets AI Assistant is a safe multi-purpose AI Assistant that connects your and your organization’s information with LLMs, in a straightforward to make use of person interface.

- Information Connectors for

- 100+ most generally used workspace apps (Slack, Notion, Google Suite, Salesforce, Zendesk, and so forth.)

- unstructured knowledge varieties – PDFs, TXTs, Photos, Audio Recordsdata, Video Recordsdata, and so forth.

- structured knowledge varieties – CSVs, Spreadsheets, MongoDB, SQL databases, and so forth.

- Set off / Motion brokers for most generally used workspace apps that hear for occasions in functions / carry out actions in functions. For instance, you may arrange a workflow to hear for brand new emails at help@your_company.com, use your documentation and previous electronic mail conversations as a information base, draft an electronic mail reply with the decision, and ship the e-mail reply.

- Optimized knowledge ingestion and indexing for every corresponding knowledge ingestion, all of which is straight dealt with within the background by the Nanonets AI Assistant.

- Actual-time sync with knowledge sources.

To get began, you may get on a name with one among our AI specialists and we may give you a customized demo & trial of the Nanonets AI Assistant primarily based in your use case.

As soon as arrange, you should utilize your Nanonets AI Assistant to –

Chat with Information

Empower your groups with complete, real-time info from all of your knowledge sources.

Create AI Workflows

Use pure language to create and run complicated workflows powered by LLMs that work together with all of your apps and knowledge.

Deploy Chatbots

Construct and Deploy prepared to make use of Customized AI Chatbots that know you inside minutes.

Supercharge your groups with Nanonets AI to attach your apps and knowledge with AI and begin automating duties so your groups can concentrate on what actually issues.

Join your knowledge and apps with Nanonets AI Assistant to speak with knowledge, deploy customized chatbots & brokers, and create AI workflows that carry out duties in your apps.