Web crawlers (also known as spiders or bots) are programs that visit (or “crawl”) pages across the web.

Search engines use crawlers to discover content that they can then index, meaning store in their enormous databases.

These programs discover your content by following links on your site.

But the process doesn’t always go smoothly because of crawl errors.

Before we dive into these errors and how to address them, let’s start with the basics.

What Are Crawl Errors?

Crawl errors occur when search engine crawlers can’t navigate through your webpages the way they normally do.

When this occurs, search engines like Google can’t fully explore and understand your website’s content or structure.

This is a problem because crawl errors can prevent your pages from being discovered. Which means they can’t be indexed, appear in search results, or drive organic (unpaid) traffic to your site.

Google separates crawl errors into two categories: site errors and URL errors.

Let’s explore both.

Site Errors

Site errors are crawl errors that can impact your whole website.

Server, DNS, and robots.txt errors are the most common.

Server Errors

Server errors (which return a 5xx HTTP status code) happen when the server prevents the page from loading.

Here are the most common server errors:

- Internal server error (500): The server can’t complete the request. It can also be returned when no more specific error code applies.

- Bad gateway error (502): One server acts as a gateway and receives an invalid response from another server

- Service unavailable error (503): The server is currently unavailable, usually because it is under repair or being updated

- Gateway timeout error (504): One server acts as a gateway and doesn’t receive a response from another server in time. Like when there’s too much traffic on the website.

When search engines constantly encounter 5xx errors, they can slow a website’s crawling rate.

That means search engines like Google might be unable to discover and index all your content.
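
To make the 5xx family concrete, here is a minimal Python sketch that labels a status code using the four errors listed above. The function name, the labels, and the "other server error" fallback are illustrative assumptions, not part of any crawler or Semrush tool.

```python
# Hypothetical helper for illustration only.
# Maps the server errors named in this article to short labels.
SERVER_ERRORS = {
    500: "Internal server error",
    502: "Bad gateway",
    503: "Service unavailable",
    504: "Gateway timeout",
}


def describe_status(code: int) -> str:
    """Return a label for a 5xx status code, or say it is not a server error."""
    if code in SERVER_ERRORS:
        return SERVER_ERRORS[code]
    if 500 <= code <= 599:
        return "Other server error"
    return "Not a server error"


if __name__ == "__main__":
    for code in (200, 404, 500, 503):
        print(code, "->", describe_status(code))
```

You could run a check like this over the status codes in your server logs to see how often crawlers hit 5xx responses.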

DNS Errors

A domain name system (DNS) error occurs when search engines can’t connect with your domain.

All websites and devices have at least one internet protocol (IP) address uniquely identifying them on the web.

The DNS makes it easier for people and computers to talk to each other by matching domain names to their IP addresses.

Without the DNS, we would have to manually enter a website’s IP address instead of typing its URL.

So, instead of entering “www.semrush.com” in your URL bar, you would have to use our IP address: “34.120.45.191.”

DNS errors are less common than server errors. But here are the ones you might encounter:

- DNS timeout: Your DNS server didn’t reply to the search engine’s request in time

- DNS lookup: The search engine couldn’t reach your website because your DNS server failed to locate your domain name
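
If you want to see the domain-to-IP matching for yourself, the following Python sketch resolves a hostname with the standard library. The function name `resolve_domain` is an assumption made for this example, and the sketch does not distinguish a timeout from a failed lookup: it simply returns an empty list when resolution fails.

```python
import socket


def resolve_domain(hostname: str) -> list[str]:
    """Return the sorted, unique IP addresses for hostname, or [] if lookup fails."""
    try:
        infos = socket.getaddrinfo(hostname, None)
    except socket.gaierror:
        # The resolver could not turn the name into an address.
        return []
    # Each entry ends with a socket address whose first item is the IP.
    return sorted({info[4][0] for info in infos})


if __name__ == "__main__":
    # Replace with your own domain; results depend on your network and DNS setup.
    print(resolve_domain("www.semrush.com"))
```

An empty result for your own domain is a hint that crawlers may be running into the same DNS problem.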

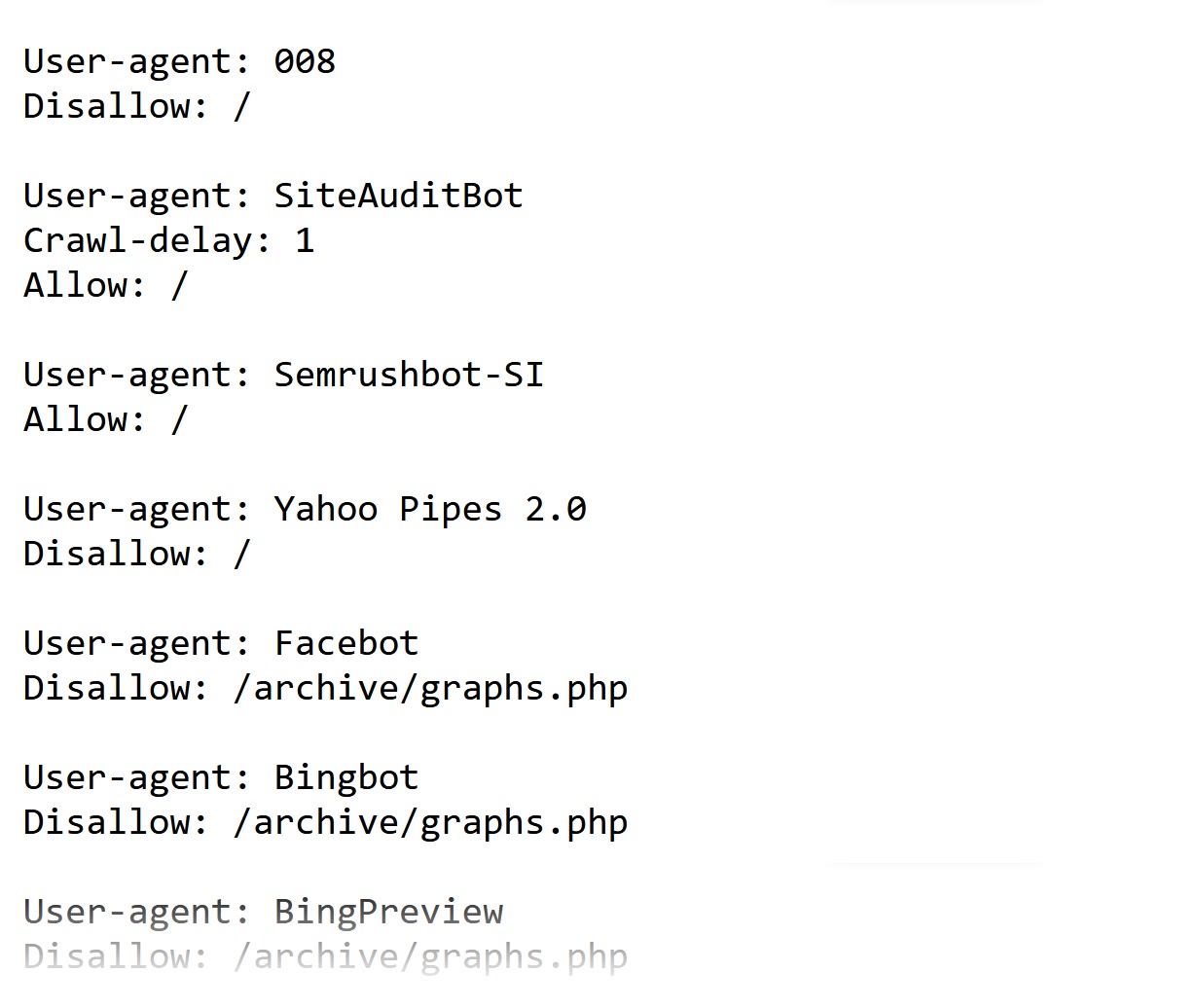

Robots.txt Errors

Robots.txt errors arise when search engines can’t retrieve your robots.txt file.

Your robots.txt file tells search engines which pages they can crawl and which they can’t.

Here’s what a robots.txt file looks like.

Here are the three main parts of this file and what each does:

- User-agent: This line identifies the crawler. And “*” means that the rules are for all search engine bots.

- Disallow/allow: This line tells search engine bots whether they should crawl your website or certain sections of your website

- Sitemap: This line indicates your sitemap location
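
The three parts above can be tried out with Python’s built-in `urllib.robotparser`. The sample file contents and the `yoursite.com` URLs below are made up for illustration. This is a sketch of how a crawler might read the rules, not how any particular search engine implements them.

```python
from urllib.robotparser import RobotFileParser

# Made-up robots.txt content for illustration.
SAMPLE_ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Allow: /
Sitemap: https://www.yoursite.com/sitemap.xml
"""


def load_rules(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text into a RobotFileParser without any network access."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


if __name__ == "__main__":
    rules = load_rules(SAMPLE_ROBOTS_TXT)
    # Whether any bot ("*") may crawl each URL under the rules above.
    print(rules.can_fetch("*", "https://www.yoursite.com/blog/"))
    print(rules.can_fetch("*", "https://www.yoursite.com/admin/settings"))
    # Sitemap locations declared in the file (requires Python 3.8+).
    print(rules.site_maps())
```

Note that Python’s parser applies the first matching rule, so the order of the Disallow and Allow lines matters in this sketch.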

URL Errors

Unlike site errors, URL errors only affect the crawlability of specific pages on your site.

Here’s an overview of the different types:

404 Errors

A 404 error means that the search engine bot couldn’t find the URL. And it’s one of the most common URL errors.

It happens when:

- You’ve changed the URL of a page without updating old links pointing to it

- You’ve deleted a page or article from your site without adding a redirect

- You have broken links, e.g., there are errors in the URL

Here’s what a basic 404 page looks like on an Nginx server.

But most companies use custom 404 pages today.

These custom pages improve the user experience. And allow you to remain consistent with your website’s design and branding.

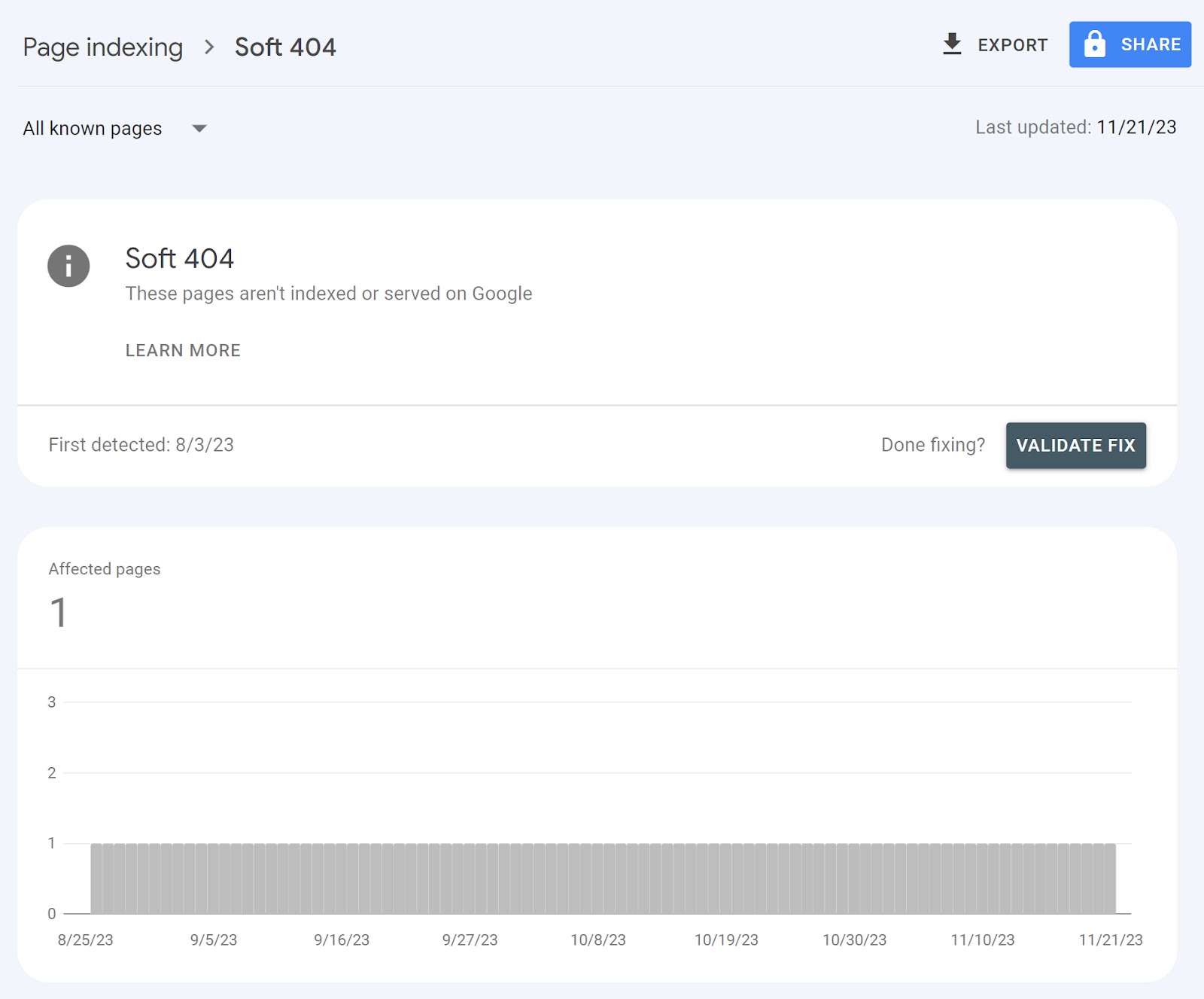

Soft 404 Errors

Soft 404 errors happen when the server returns a 200 code but Google thinks it should be a 404 error.

The 200 code means everything is OK. It’s the expected HTTP response code if there are no issues.

So, what causes soft 404 errors?

- JavaScript file issue: The JavaScript resource is blocked or can’t be loaded

- Thin content: The page has too little content to provide enough value to the user. Like an empty internal search result page.

- Low-quality or duplicate content: The page isn’t useful to users or is a copy of another page. For example, placeholder pages that shouldn’t be live, like those that contain “lorem ipsum” content. Or duplicate content that doesn’t use canonical URLs, which tell search engines which page is the primary one.

- Other reasons: Missing files on the server or a broken connection to your database

Here’s what you see in Google Search Console (GSC) when you find pages with these.
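
As a rough illustration of the idea, the Python sketch below flags a page as a possible soft 404 when it returns 200 but its text looks empty or like an error page. The phrases and the word-count threshold are arbitrary assumptions chosen for this example. Google does not publish its exact criteria, so treat this as a way to triage your own pages rather than a reproduction of its logic.

```python
# Assumed signals for illustration; tune these for your own site.
NOT_FOUND_PHRASES = ("page not found", "no results found", "lorem ipsum")
MIN_WORDS = 50  # arbitrary "thin content" threshold


def looks_like_soft_404(status_code: int, body_text: str) -> bool:
    """Heuristic: a 200 response whose text looks like an error or empty page."""
    if status_code != 200:
        # A real 404 (or any other non-200 code) is not a *soft* 404.
        return False
    text = body_text.lower()
    if any(phrase in text for phrase in NOT_FOUND_PHRASES):
        return True
    return len(text.split()) < MIN_WORDS


if __name__ == "__main__":
    print(looks_like_soft_404(200, "Sorry, page not found."))
    print(looks_like_soft_404(404, "Sorry, page not found."))
```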

403 Forbidden Errors

The 403 forbidden error means the server denied a crawler’s request. Meaning the server understood the request, but the crawler isn’t able to access the URL.

Here’s what a 403 forbidden error looks like on an Nginx server.

Problems with server permissions are the main reasons behind the 403 error.

Server permissions define users’ and admins’ rights on a folder or file.

We can divide the permissions into three categories: read, write, and execute.

For example, you won’t be able to access a URL if you don’t have the read permission.

A faulty .htaccess file is another recurring cause of 403 errors.

An .htaccess file is a configuration file used on Apache servers. It’s helpful for configuring settings and implementing redirects.

But any error in your .htaccess file can result in issues like a 403 error.
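
On a Unix-like server, you can inspect these three permissions with a few lines of Python. The sketch below reports the read, write, and execute bits for the “others” class of a file. That this is the class your web server process falls under is an assumption that depends on your hosting setup, and the function name is hypothetical.

```python
import os
import stat


def permission_summary(path: str) -> dict[str, bool]:
    """Report read/write/execute bits for the 'others' class of a file."""
    mode = os.stat(path).st_mode
    return {
        "read": bool(mode & stat.S_IROTH),
        "write": bool(mode & stat.S_IWOTH),
        "execute": bool(mode & stat.S_IXOTH),
    }


if __name__ == "__main__":
    # Inspect this script itself; point it at a file in your web root instead.
    print(permission_summary(__file__))
```

If “read” comes back false for a public file, that file is a candidate cause for a 403.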

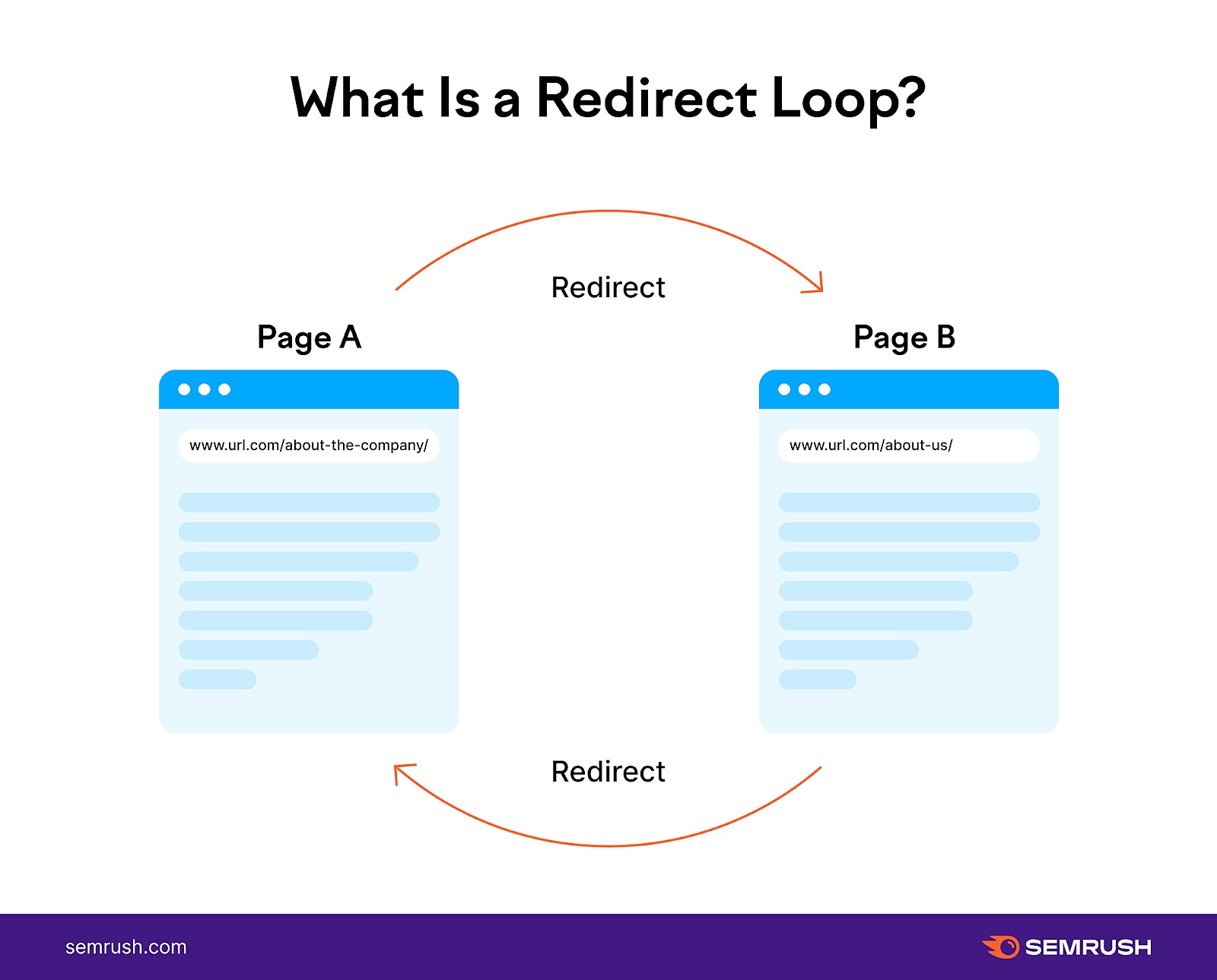

Redirect Loops

A redirect loop happens when page A redirects to page B. And page B redirects back to page A.

The result?

An infinite loop of redirects that prevents visitors and crawlers from accessing your content. Which can hinder your rankings.
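
If you keep your redirects in a simple mapping, a loop like this is easy to detect. The Python sketch below follows a chain of redirects and returns the looping part if it revisits a URL. The dictionary format and the function name are assumptions for illustration.

```python
def find_redirect_loop(redirects: dict[str, str], start: str) -> list[str]:
    """Follow redirects from start; return the looping chain, or [] if none."""
    seen: list[str] = []
    current = start
    while current in redirects:
        if current in seen:
            # We are back at a URL we already visited: report the cycle.
            return seen[seen.index(current):] + [current]
        seen.append(current)
        current = redirects[current]
    return []


if __name__ == "__main__":
    rules = {"/page-a": "/page-b", "/page-b": "/page-a"}
    print(find_redirect_loop(rules, "/page-a"))
```

An empty list means the chain from that starting URL ends at a page that doesn’t redirect anywhere else.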

How to Find Crawl Errors

Site Audit

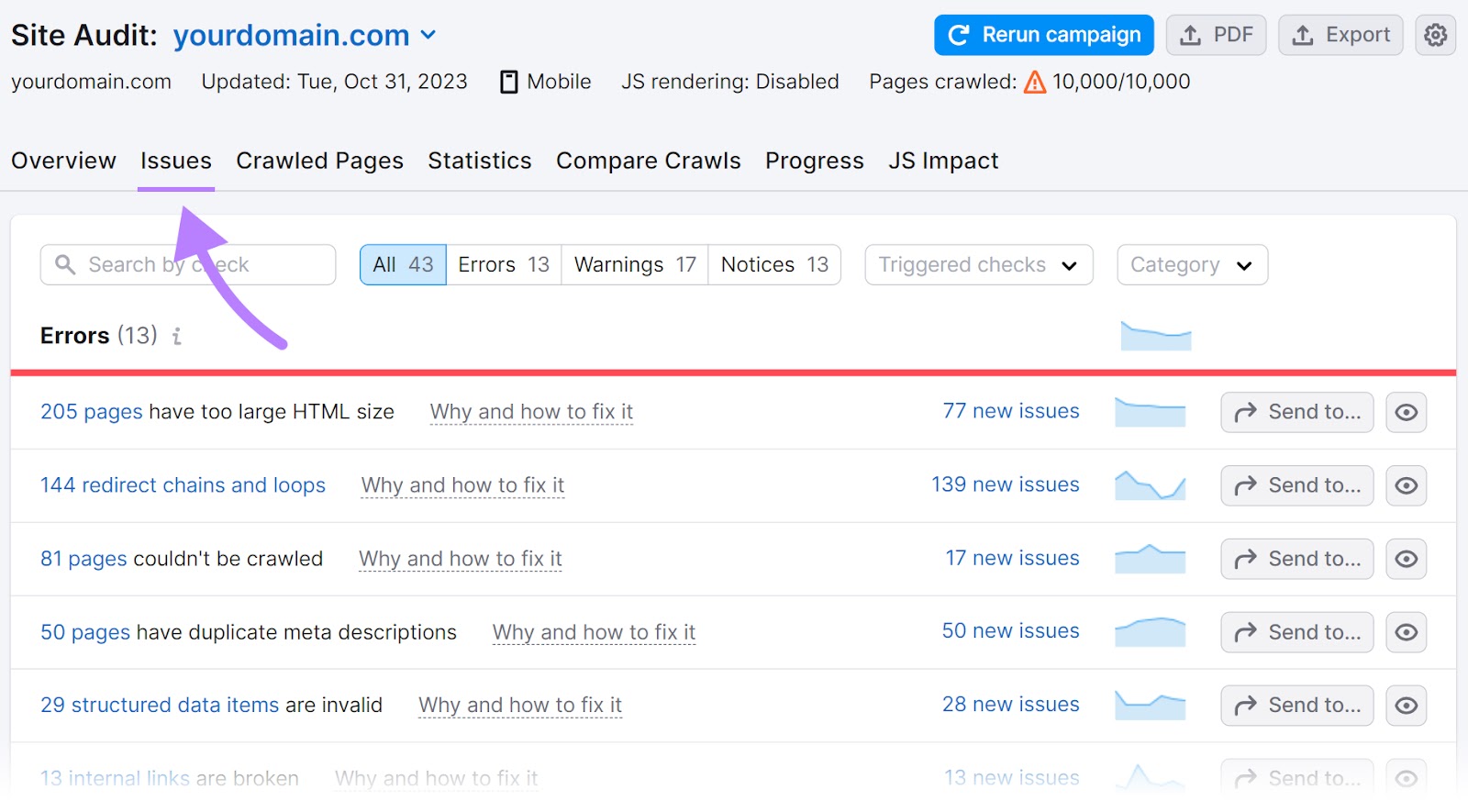

Semrush’s Site Audit allows you to easily discover issues affecting your site’s crawlability. And provides suggestions on how to address them.

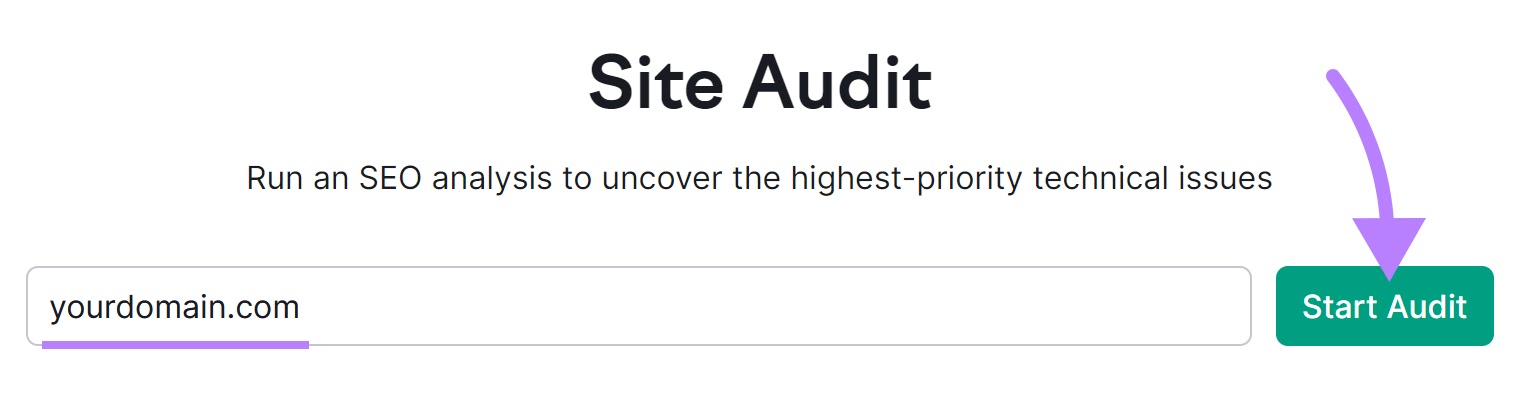

Open the tool, enter your domain name, and click “Start Audit.”

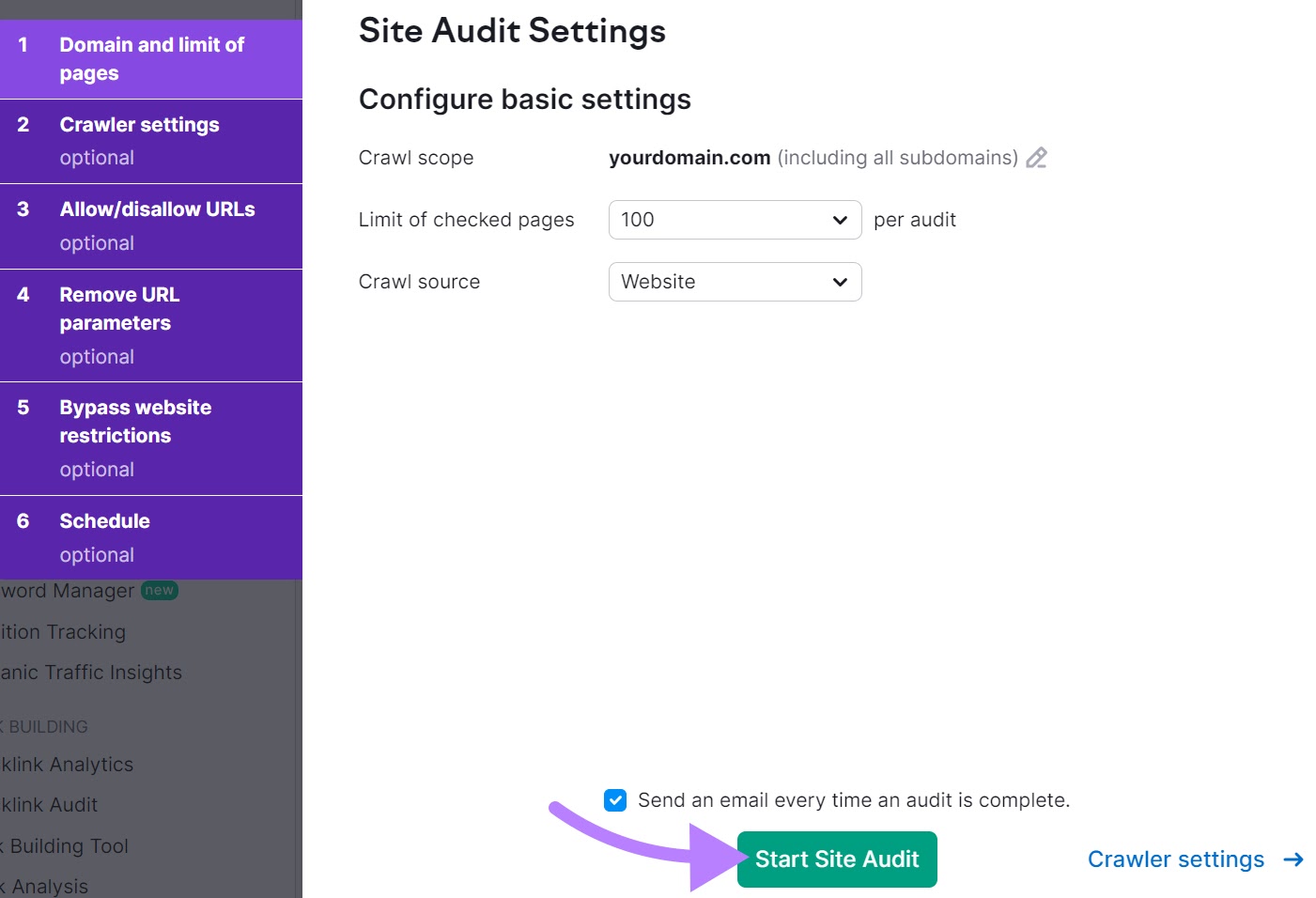

Then, follow the Site Audit configuration guide to adjust your settings. And click “Start Site Audit.”

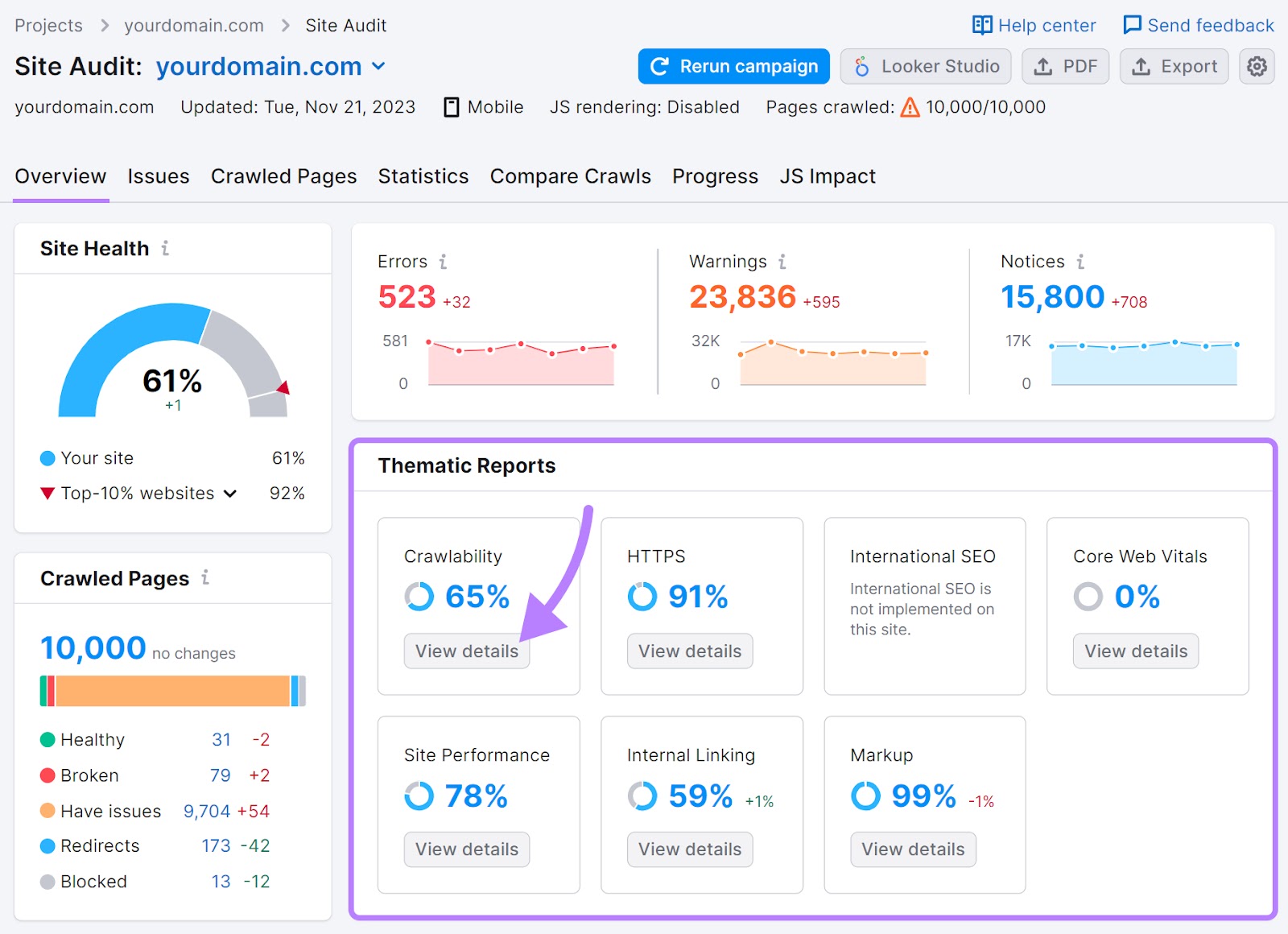

You’ll be taken to the “Overview” report.

Click on “View details” in the “Crawlability” module under “Thematic Reports.”

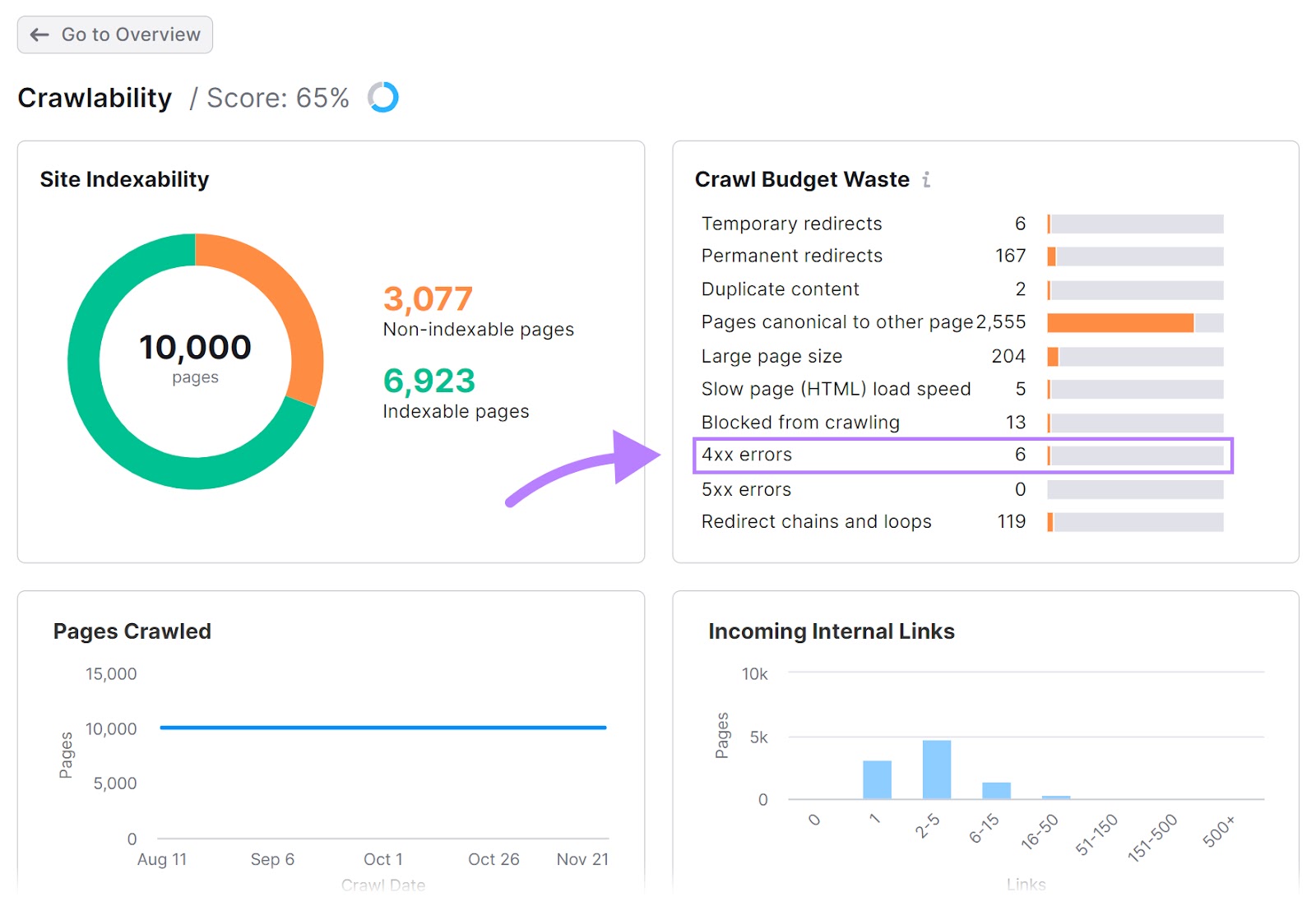

You’ll get an overall understanding of how you’re doing in terms of crawl errors.

Then, select a specific error you want to solve. And click on the corresponding bar next to it in the “Crawl Budget Waste” module.

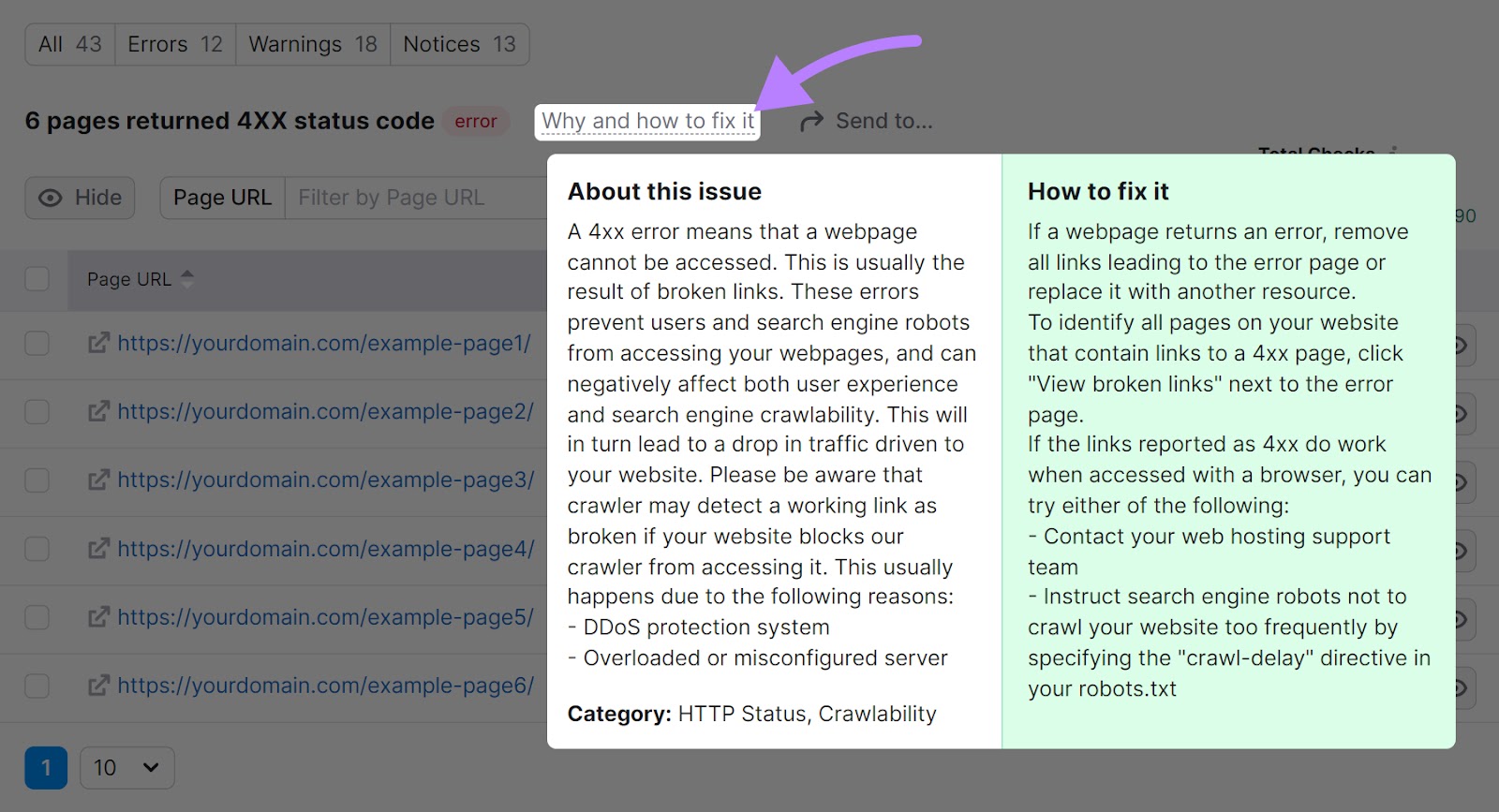

We’ve chosen the 4xx errors for our example.

On the next screen, click “Why and how to fix it.”

You’ll get the information required to understand the issue. And advice on how to solve it.

Google Search Console

Google Search Console is also an excellent tool for identifying crawl errors.

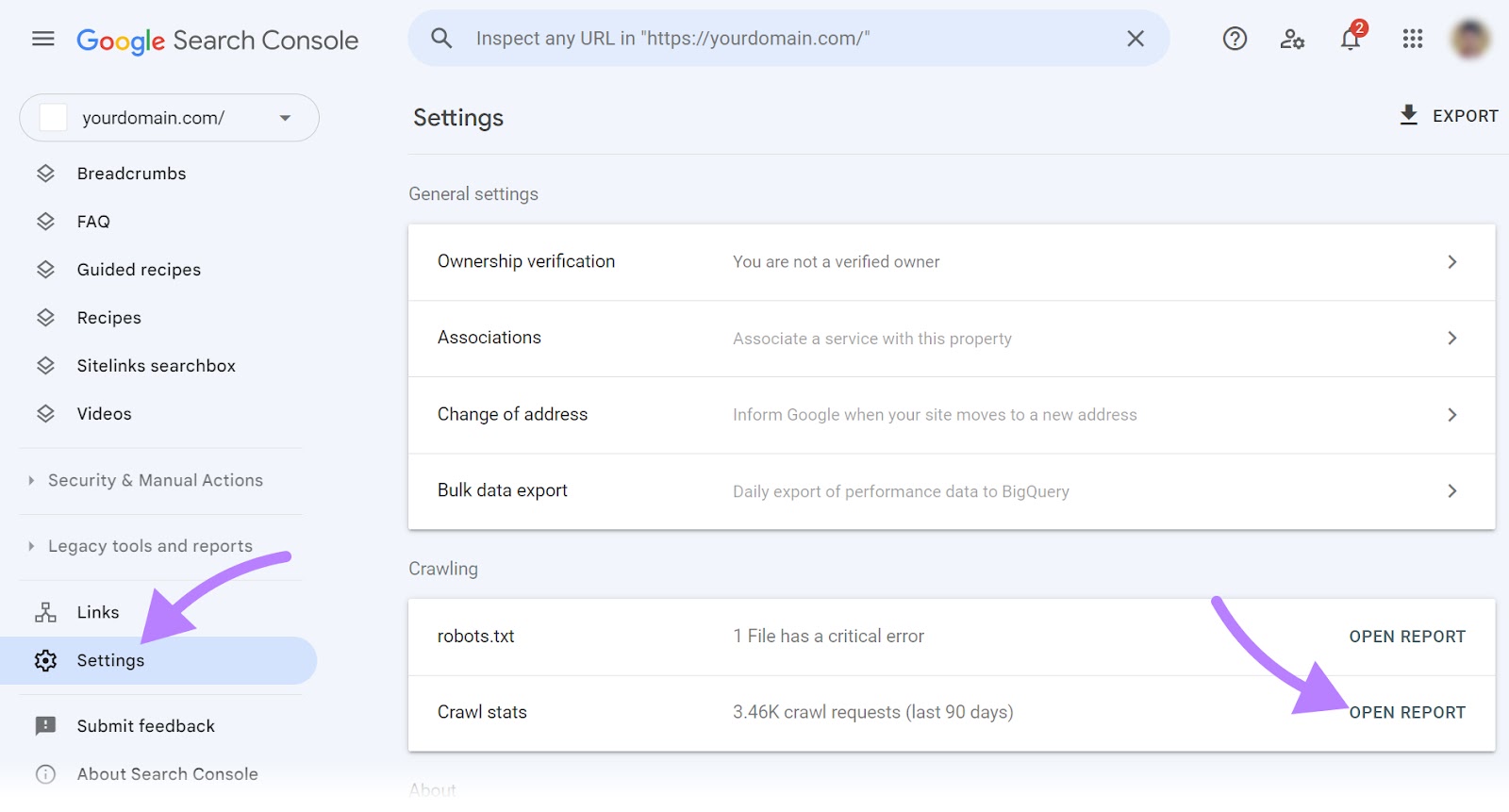

Head to your GSC account and click on “Settings” on the left sidebar.

Then, click on “OPEN REPORT” next to the “Crawl stats” tab.

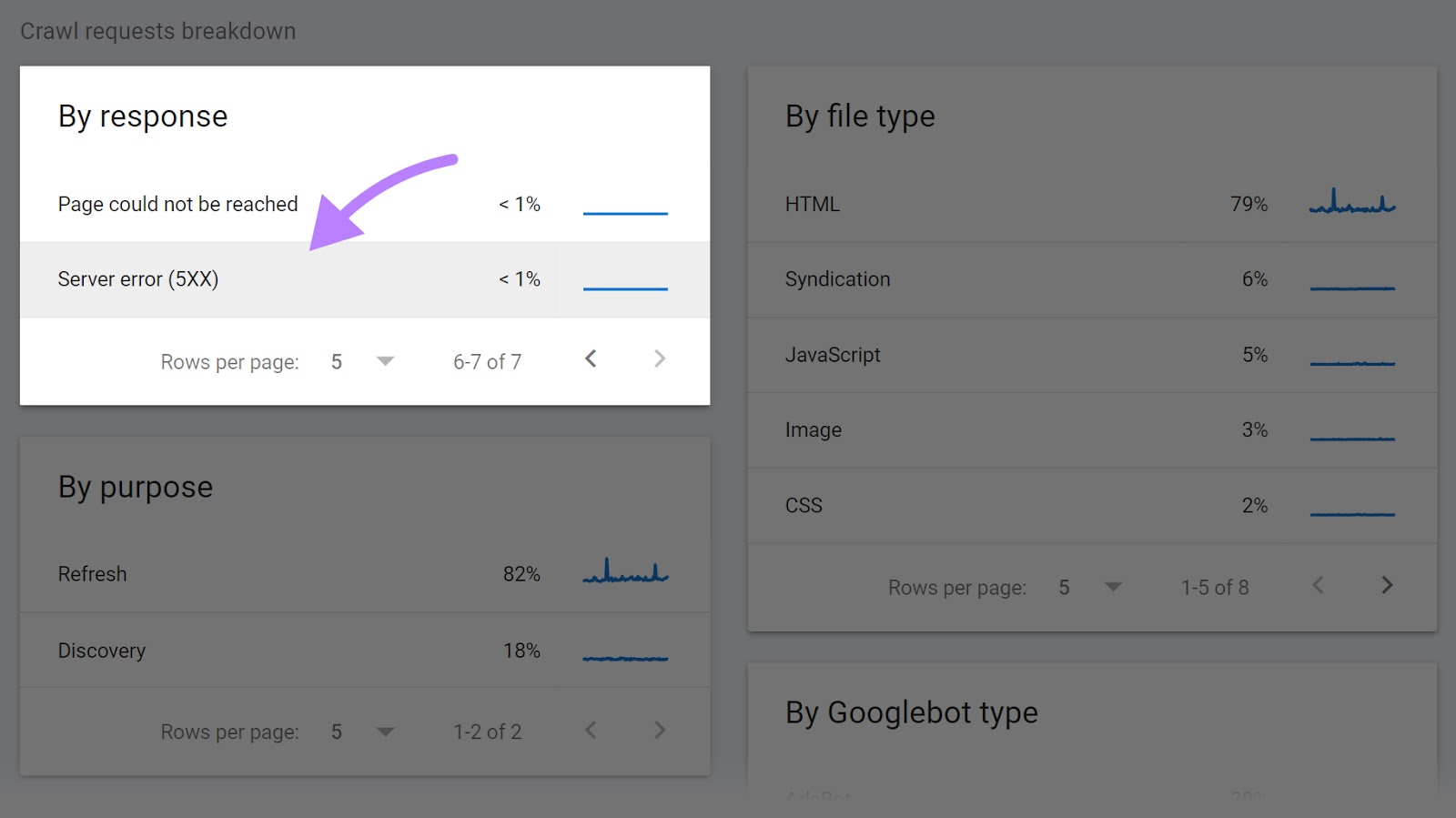

Scroll down to see if Google noticed crawling issues on your site.

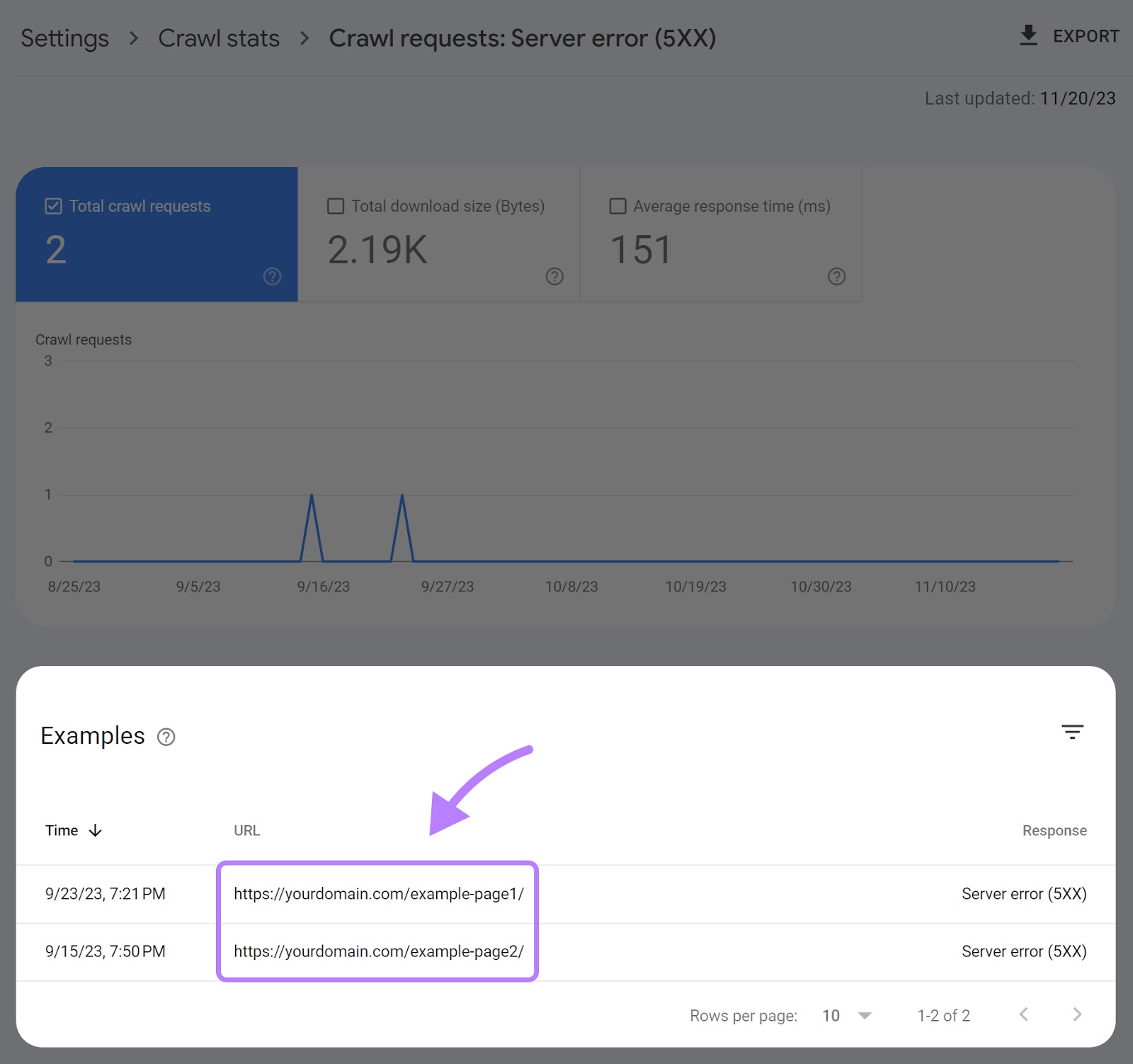

Click on any issue, like the 5xx server errors.

You’ll see the full list of URLs matching the error you selected.

Now, you can address them one by one.

How to Fix Crawl Errors

We now know how to identify crawl errors.

The next step is understanding how to fix them.

Fixing 404 Errors

You’ll probably encounter 404 errors frequently. And the good news is they’re easy to fix.

You can use redirects to fix 404 errors.

Use 301 redirects for permanent redirects because they allow you to retain some of the original page’s authority. And use 302 redirects for temporary redirects.

How do you choose the destination URL for your redirects?

Here are some best practices:

- Add a redirect to the new URL if the content still exists

- Add a redirect to a page addressing the same or a highly similar topic if the content no longer exists

There are three main ways to deploy redirects.

The first method is to use a plugin.

Several popular redirect plugins are available for WordPress.

The second method is to add redirects directly in your server configuration file.

Here’s what a 301 redirect would look like in an .htaccess file on an Apache server.

Redirect 301 /old-page/ https://www.yoursite.com/new-page/

You can break this line down into four parts:

- Redirect: Specifies that we want to redirect the traffic

- 301: Indicates the redirect code, stating that it’s a permanent redirect

- /old-page/: Identifies the URL path to redirect from. Apache expects a path beginning with a slash here, not a full URL.

- https://www.yoursite.com/new-page/: Identifies the URL to redirect to

We don’t recommend this option if you’re a beginner. Because it can negatively impact your site if you’re unsure of what you’re doing. So, make sure to work with a developer if you opt to go this route.
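
If you have many pages to redirect, writing each rule by hand invites typos. Here is a small Python sketch that builds an Apache `Redirect` line from an old path and a new URL. The function name and its validation rules are assumptions for illustration. It relies on Apache’s `Redirect` directive taking the old location as a URL path that starts with a slash.

```python
def build_redirect_rule(old_path: str, new_url: str, status: int = 301) -> str:
    """Build one .htaccess Redirect line; hypothetical helper for illustration."""
    if not old_path.startswith("/"):
        raise ValueError("old_path must be a URL path starting with '/'")
    if status not in (301, 302):
        raise ValueError("this sketch only handles 301 and 302 redirects")
    return f"Redirect {status} {old_path} {new_url}"


if __name__ == "__main__":
    print(build_redirect_rule("/old-page/", "https://www.yoursite.com/new-page/"))
```

Review the generated lines with a developer before adding them to a live .htaccess file.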

Finally, you can add redirects directly from the backend if you use Wix or Shopify.

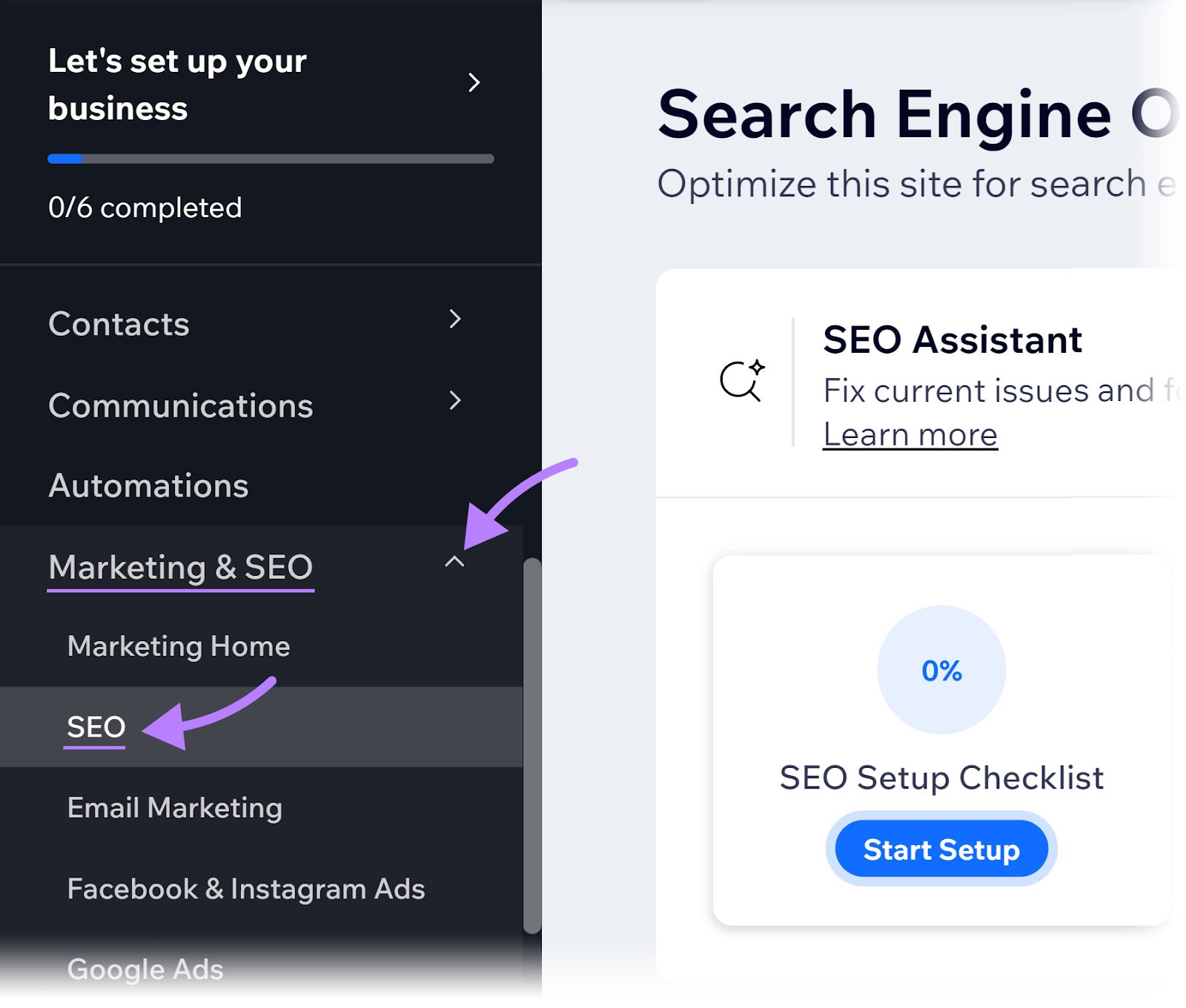

If you’re using Wix, scroll to the bottom of your website control panel. Then click on “SEO” under “Marketing & SEO.”

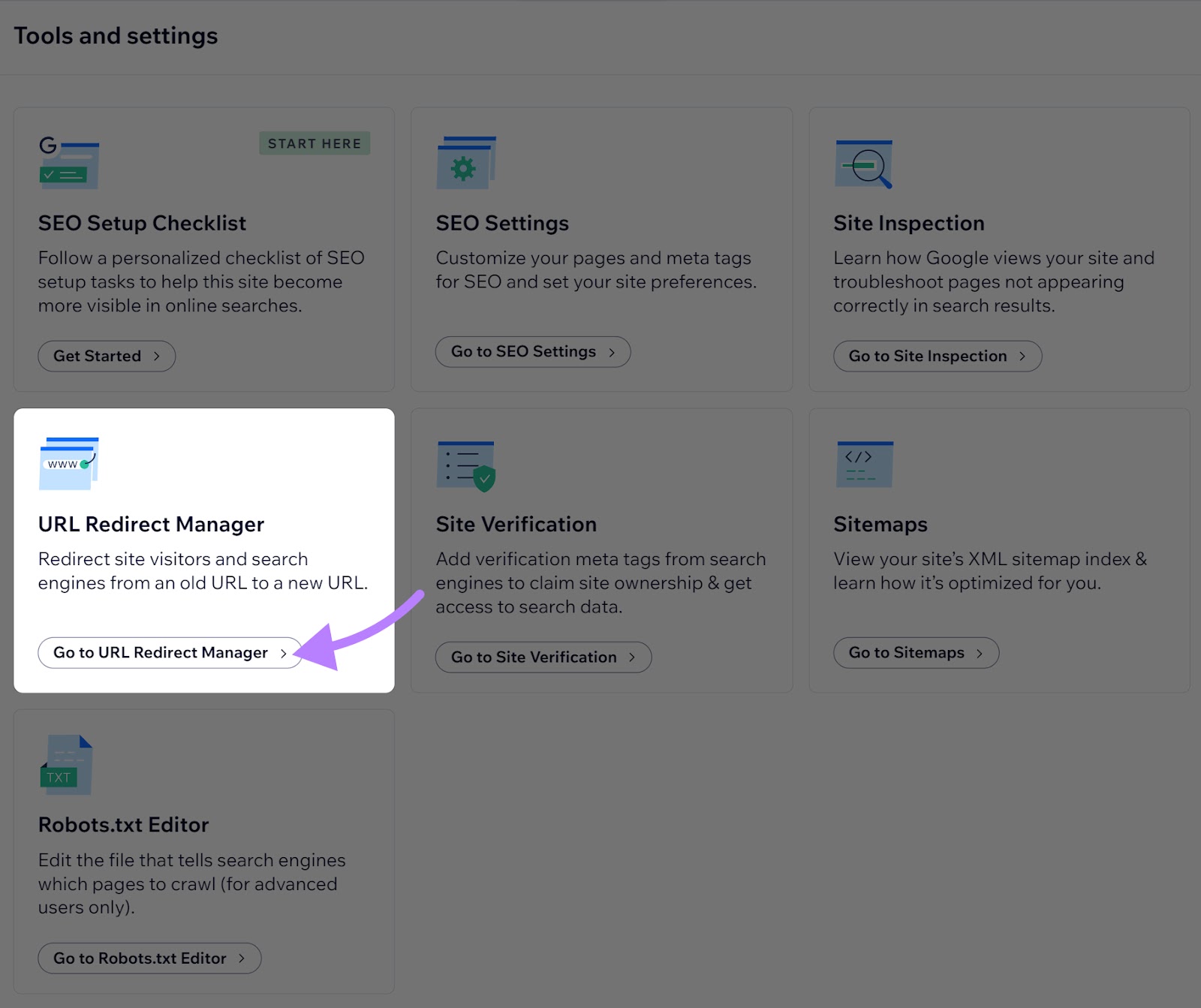

Click “Go to URL Redirect Manager” located under the “Tools and settings” section.

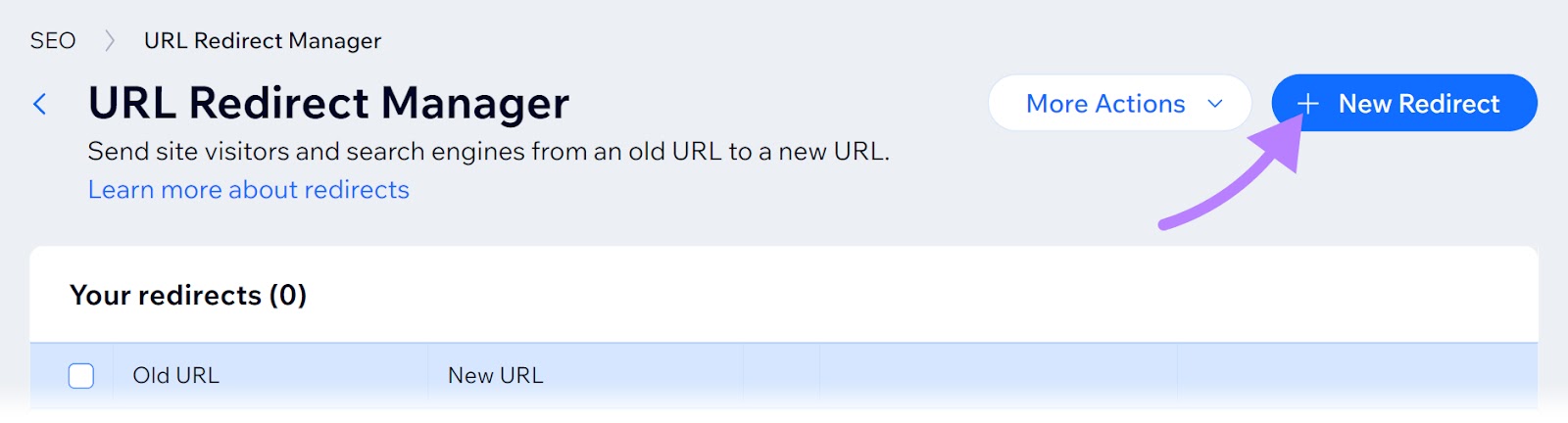

Then, click the “+ New Redirect” button in the top right corner.

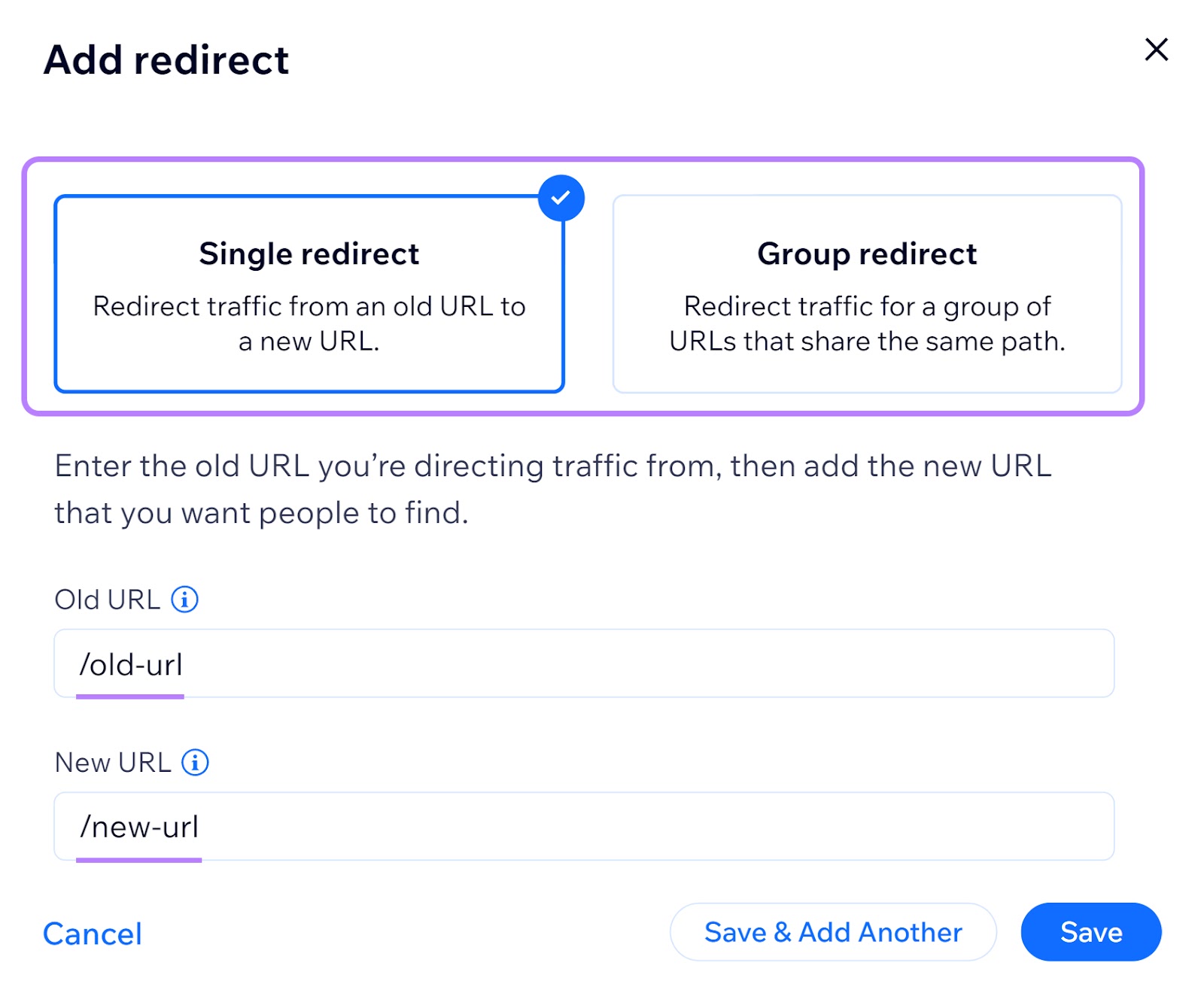

A pop-up window will appear. Here, you can choose the type of redirect and enter the old URL you want to redirect from and the new URL you want to redirect to.

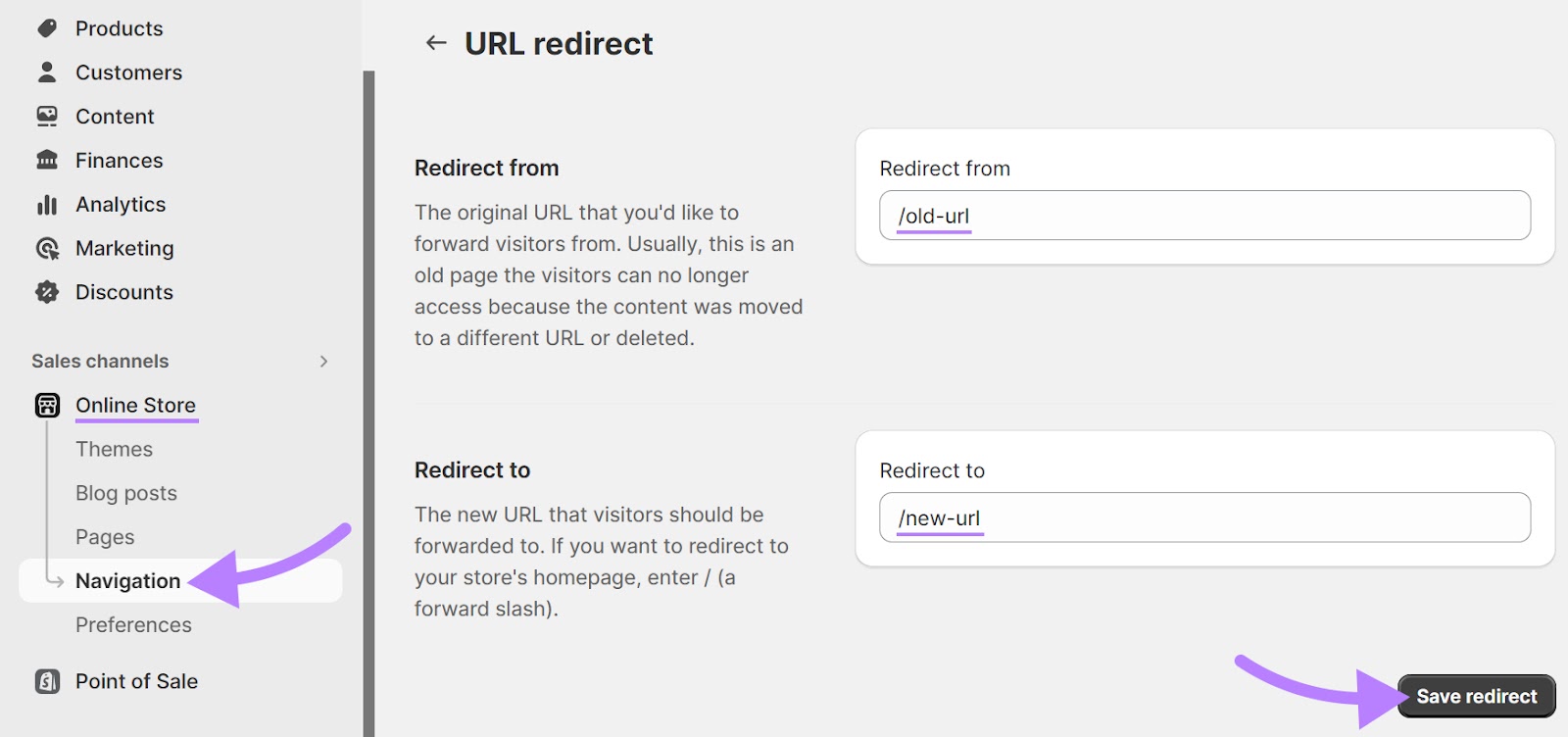

Here are the steps to follow if you’re using Shopify:

Log into your account and click on “Online Store” under “Sales channels.”

Then, select “Navigation.”

From here, go to “View URL Redirects.”

Click the “Create URL redirect” button.

Enter the old URL that you wish to redirect visitors from and the new URL that you want to redirect your visitors to. (Enter “/” to target your store’s home page.)

Finally, save the redirect.

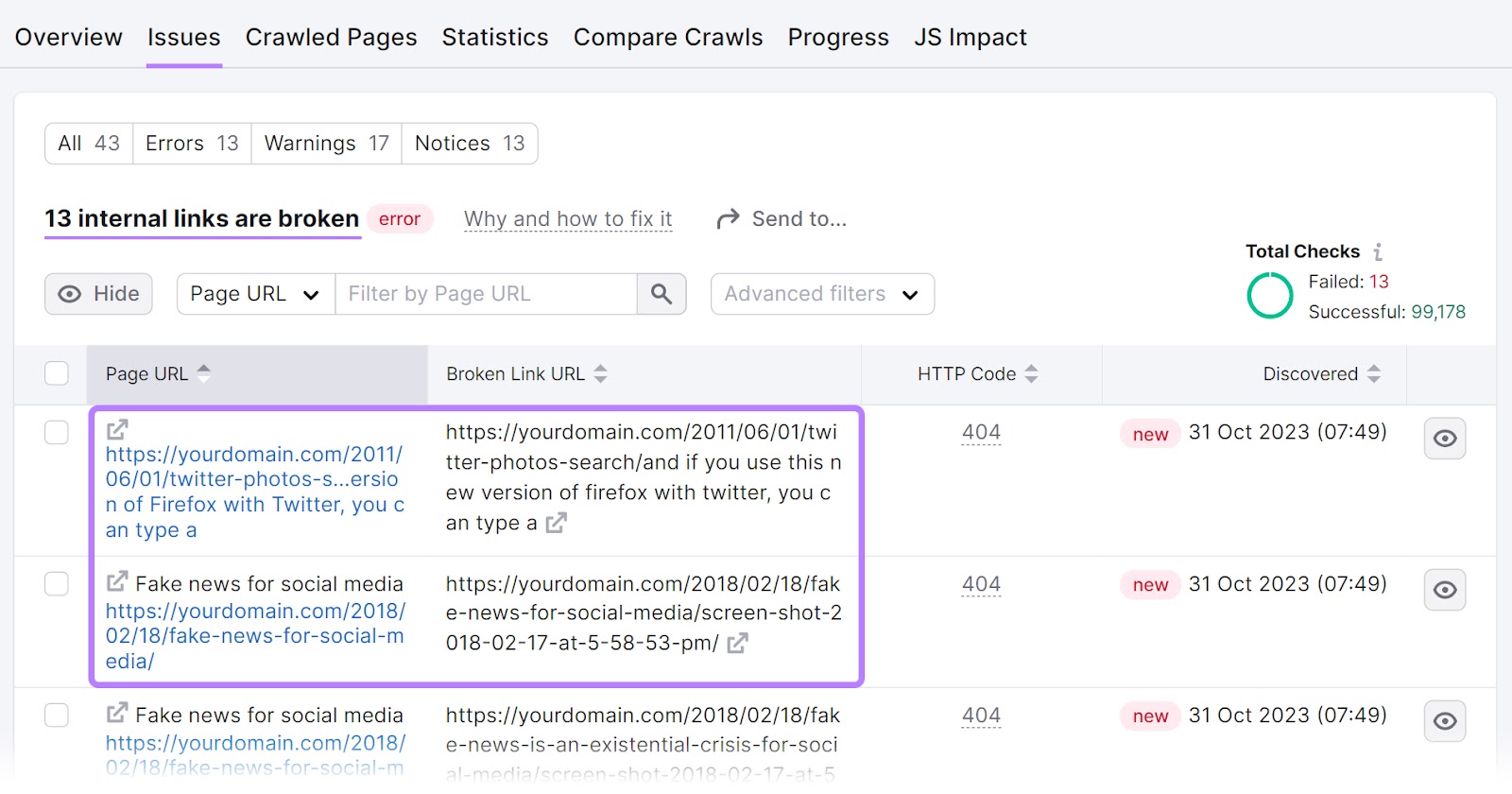

Broken links (links that point to pages that can’t be found) can also be a reason behind 404 errors. So, let’s see how we can quickly identify broken links with the Site Audit tool and fix them.

Fixing Broken Links

A broken link points to a page or resource that doesn’t exist.

Let’s say you’ve been working on a new article and want to add an internal link to your about page at “yoursite.com/about.”

Any typos in your link will create broken links.

So, you’ll get a broken link error if you’ve forgotten the letter “b” and enter “yoursite.com/aout” instead of “yoursite.com/about.”

Broken links can be either internal (pointing to another page on your site) or external (pointing to another website).

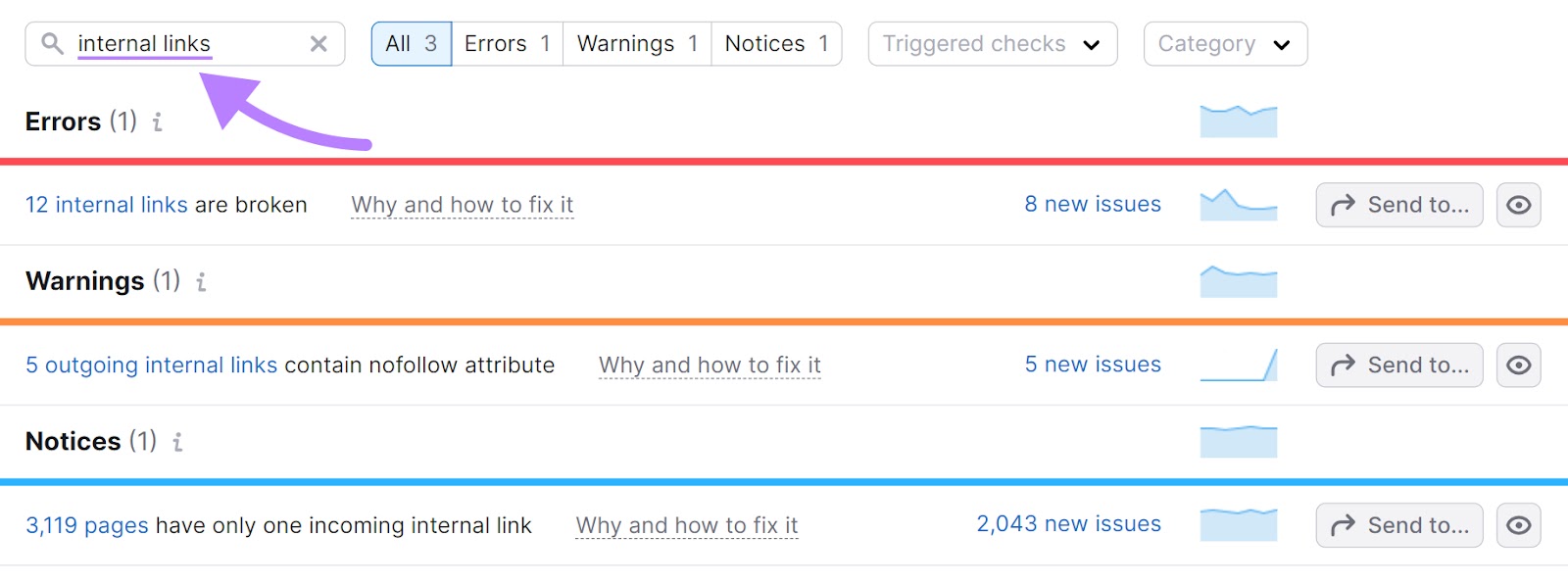

To find broken links, configure Site Audit if you haven’t yet.

Then, go to the “Issues” tab.

Now, type “internal links” in the search bar at the top of the table to find issues related to broken links.

And click on the blue, clickable text in the issue to see the complete list of affected URLs.

To fix these, change the link, restore the missing page, or add a 301 redirect to another relevant page on your site.
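
For a quick manual check of a single page, the Python sketch below collects the links in a piece of HTML and reports internal ones that don’t match any known page. The set of known paths, the sample HTML, and the function names are assumptions for illustration. The sketch only looks at links written as site-relative paths (starting with “/”), and it is not how Site Audit works internally.

```python
from html.parser import HTMLParser


class LinkCollector(HTMLParser):
    """Collect the href value of every <a> tag in a document."""

    def __init__(self) -> None:
        super().__init__()
        self.links: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)


def find_broken_internal_links(html: str, known_paths: set[str]) -> list[str]:
    """Return site-relative links that are not in the set of known paths."""
    collector = LinkCollector()
    collector.feed(html)
    return [
        link for link in collector.links
        if link.startswith("/") and link not in known_paths
    ]


if __name__ == "__main__":
    page = '<a href="/about">About</a> <a href="/aout">About (typo)</a>'
    print(find_broken_internal_links(page, {"/", "/about"}))
```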

Fixing Robots.txt Errors

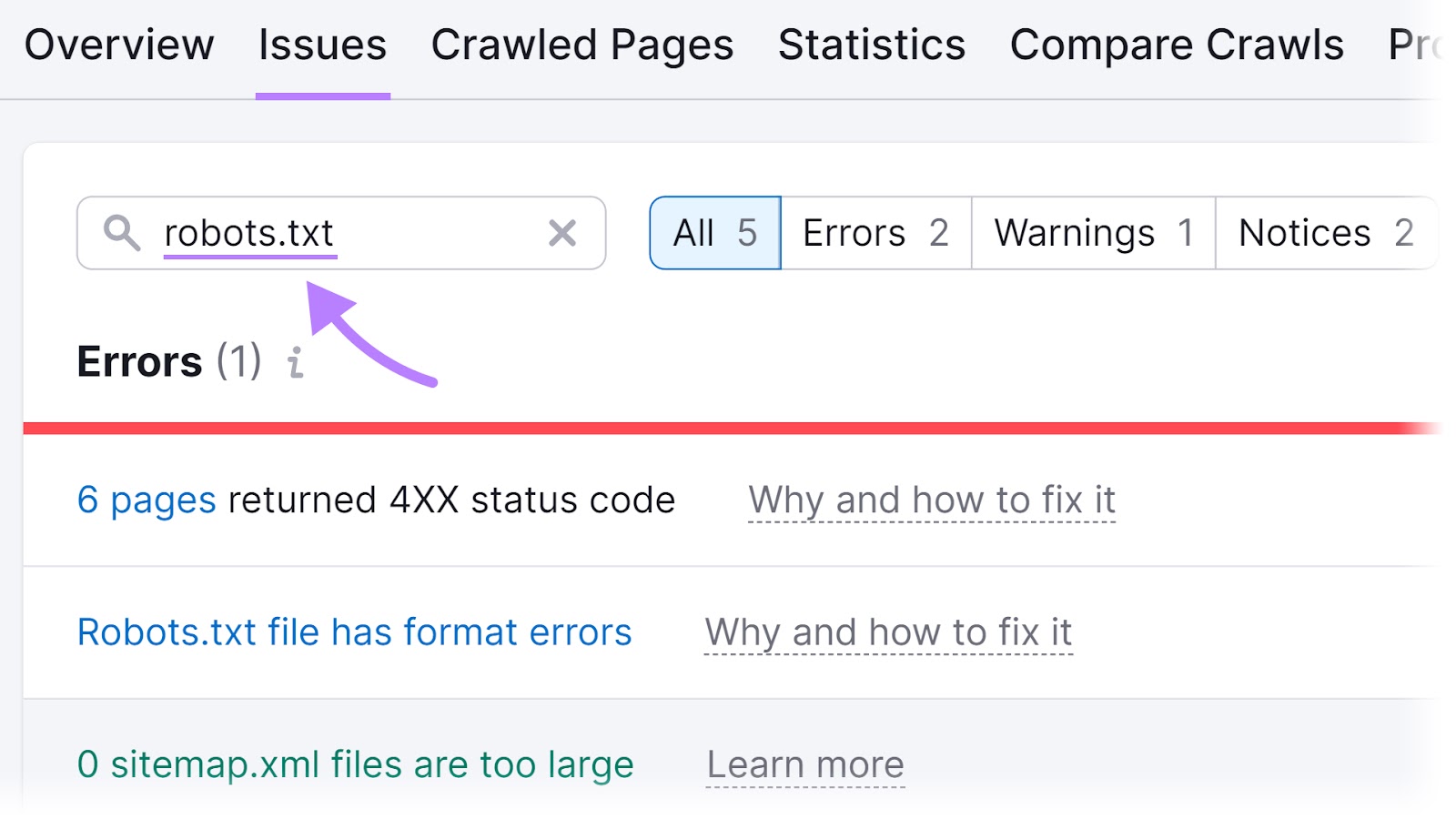

Semrush’s Site Audit tool can also help you resolve issues regarding your robots.txt file.

First, set up a project in the tool and run your audit.

Once complete, navigate to the “Issues” tab and search for “robots.txt.”

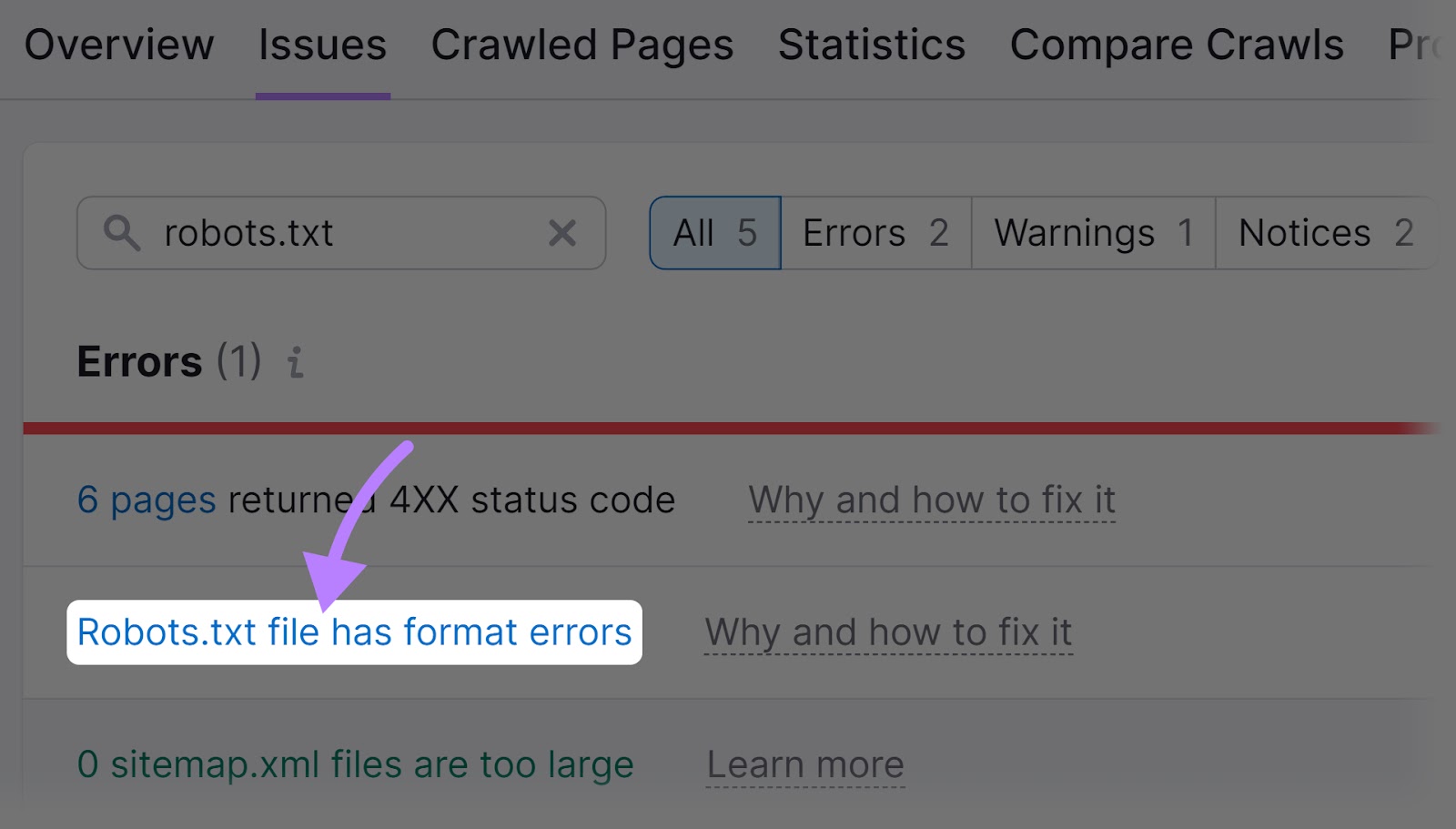

You’ll now see any issues related to your robots.txt file that you can click on. For example, you might see a “Robots.txt file has format errors” link if it turns out that your file has format errors.

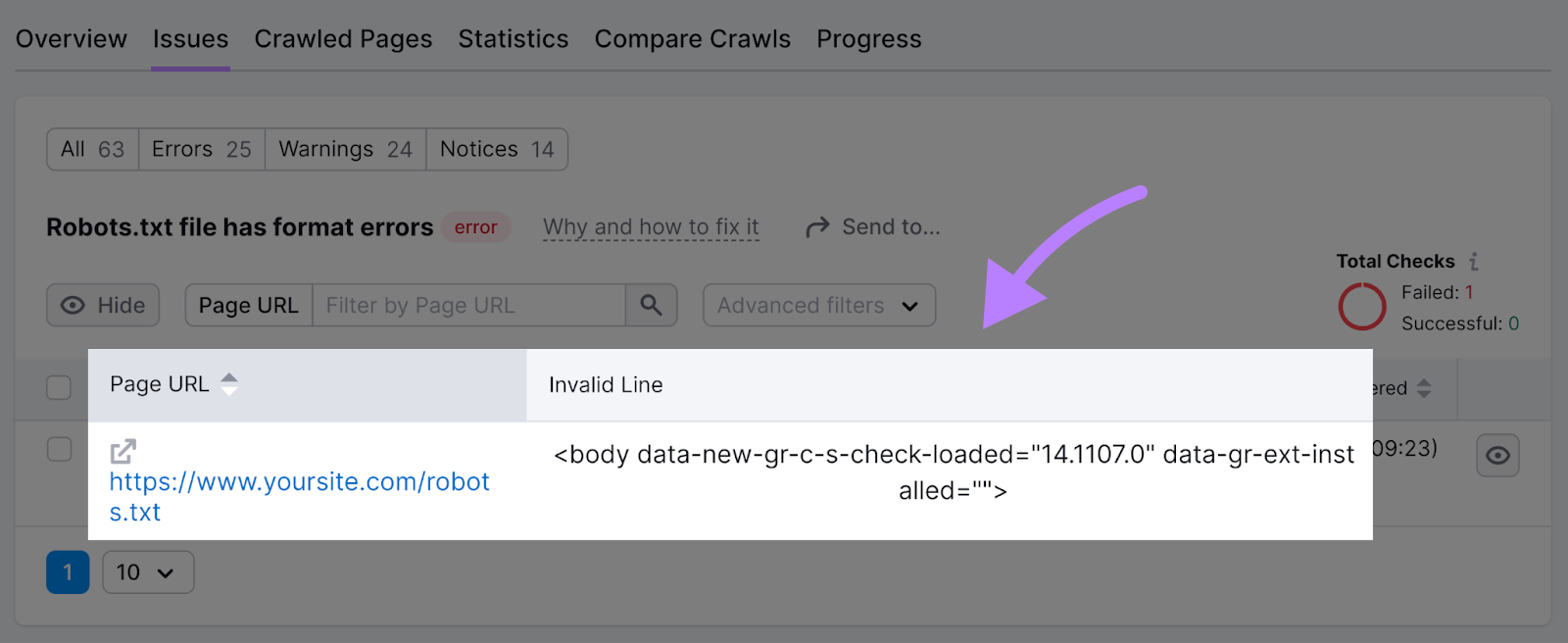

Go ahead and click the blue, clickable text.

And you’ll see a list of invalid lines in the file.

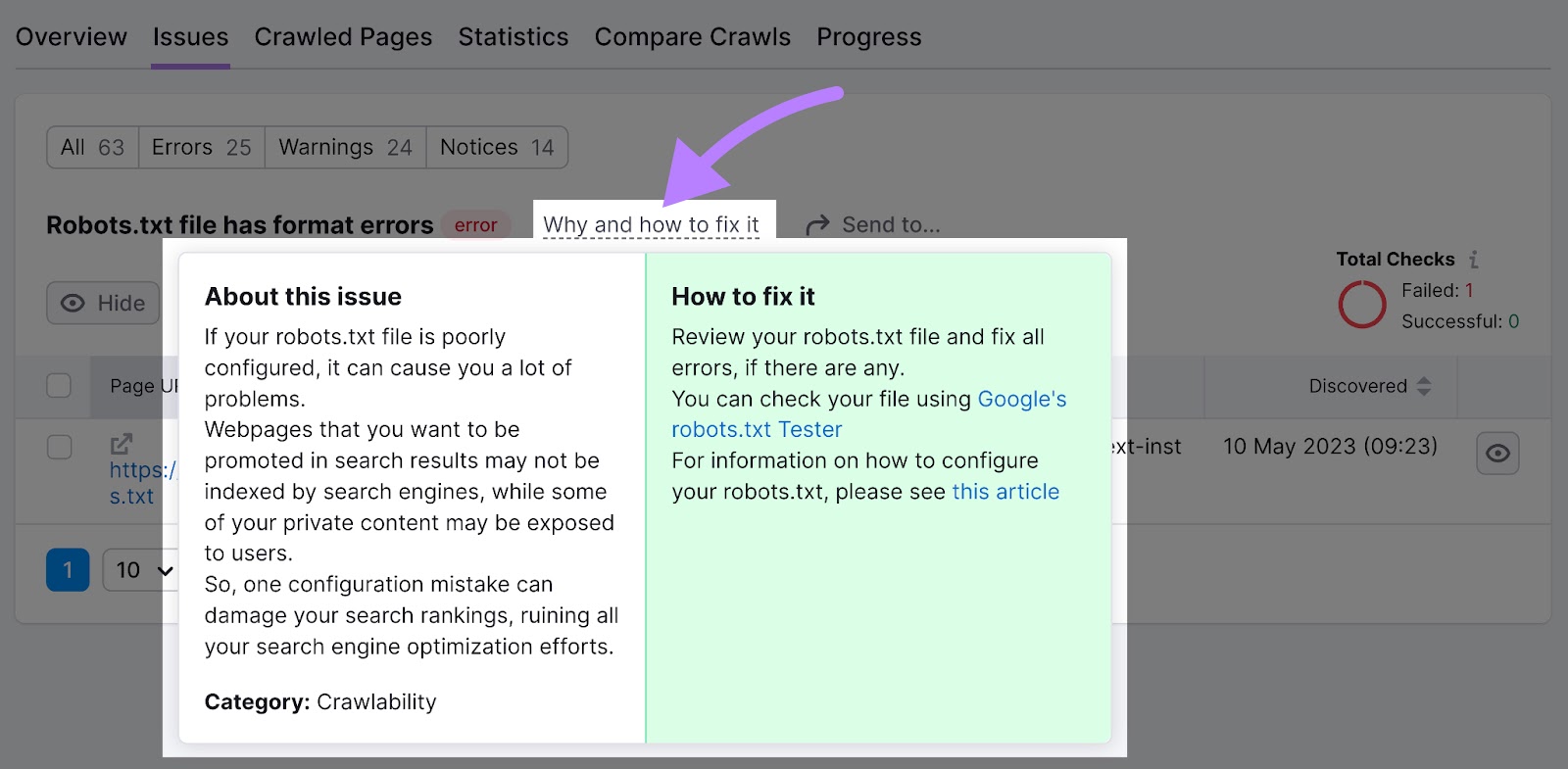

You can click “Why and how to fix it” to get specific instructions on how to fix the error.

Monitor Crawlability to Ensure Success

To make sure your site can be crawled (and indexed and ranked), you should first make it search engine-friendly.

Your pages might not show up in search results if it isn’t. So, you won’t drive any organic traffic.

Finding and fixing problems with crawlability and indexability is easy with the Site Audit tool.

You can even set it up to crawl your site automatically on a recurring basis. To ensure you stay aware of any crawl errors that need to be addressed.