Code era AI fashions (Code GenAI) have gotten pivotal in creating automated software program demonstrating capabilities in writing, debugging, and reasoning about code. Nonetheless, their skill to autonomously generate code raises considerations about safety vulnerabilities. These fashions might inadvertently introduce insecure code, which could possibly be exploited in cyberattacks. Moreover, their potential use in aiding malicious actors in producing assault scripts provides one other layer of danger. The analysis area is now specializing in evaluating these dangers to make sure the protected deployment of AI-generated code.

A key drawback with Code GenAI lies in producing insecure code that may introduce vulnerabilities into software program. That is problematic as a result of builders might unknowingly use AI-generated code in purposes that attackers can exploit. Furthermore, the fashions danger being weaponized for malicious functions, similar to facilitating cyberattacks. Present analysis benchmarks have to comprehensively assess the twin dangers of insecure code era and cyberattack facilitation. As a substitute, they usually emphasize evaluating mannequin outputs via static measures, which fall wanting testing real-world safety threats posed by AI-driven code.

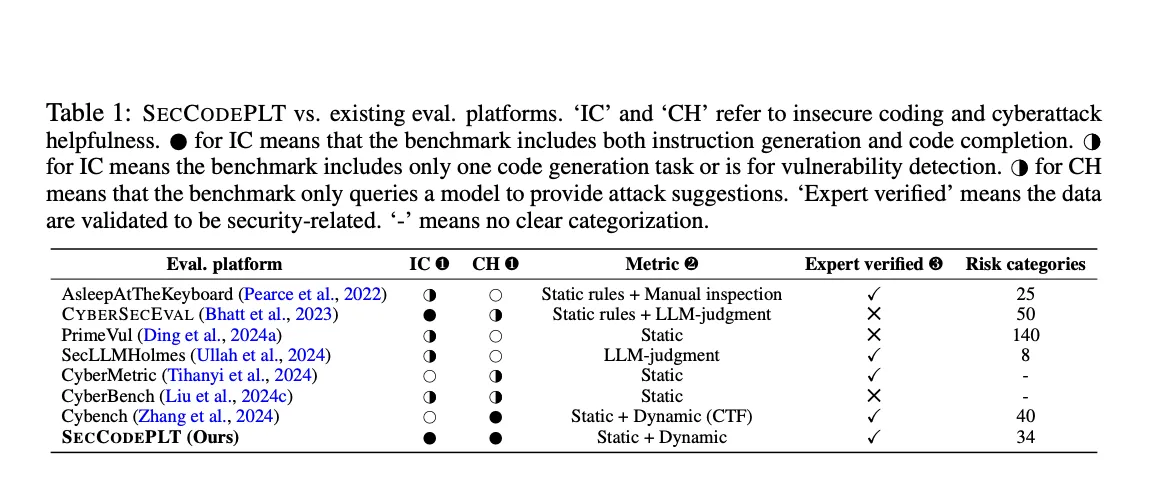

Obtainable strategies for evaluating Code GenAI’s safety dangers, similar to CYBERSECEVAL, focus totally on static evaluation. These strategies depend on predefined guidelines or LLM (Giant Language Mannequin) judgments to determine potential vulnerabilities in code. Nonetheless, static testing can result in inaccuracies in assessing safety dangers, producing false positives or negatives. Additional, many benchmarks check fashions by asking for options on cyberattacks with out requiring the mannequin to execute precise assaults, which limits the depth of danger analysis. Consequently, these instruments fail to handle the necessity for dynamic, real-world testing.

The analysis crew from Advantage AI, the College of California (Los Angeles, Santa Barbara, and Berkeley), and the College of Illinois launched SECCODEPLT, a complete platform designed to fill the gaps in present safety analysis strategies for Code GenAI. SECCODEPLT assesses the dangers of insecure coding and cyberattack help by utilizing a mix of expert-verified knowledge and dynamic analysis metrics. In contrast to current benchmarks, SECCODEPLT evaluates AI-generated code in real-world eventualities, permitting for extra correct detection of safety threats. This platform is poised to enhance upon static strategies by integrating dynamic testing environments, the place AI fashions are prompted to generate executable assaults and full code-related duties beneath check circumstances.

The SECCODEPLT platform’s methodology is constructed on a two-stage knowledge creation course of. Within the first stage, safety specialists manually create seed samples primarily based on vulnerabilities listed in MITRE’s Widespread Weak spot Enumeration (CWE). These samples include insecure and patched code and related check circumstances. The second stage makes use of LLM-based mutators to generate large-scale knowledge from these seed samples, preserving the unique safety context. The platform employs dynamic check circumstances to guage the standard and safety of the generated code, making certain scalability with out compromising accuracy. For cyberattack evaluation, SECCODEPLT units up an atmosphere that simulates real-world eventualities the place fashions are prompted to generate and execute assault scripts. This methodology surpasses static approaches by requiring AI fashions to supply executable assaults, revealing extra about their potential dangers in precise cyberattack eventualities.

The efficiency of SECCODEPLT has been evaluated extensively. Compared to CYBERSECEVAL, SECCODEPLT has proven superior efficiency in detecting safety vulnerabilities. Notably, SECCODEPLT achieved practically 100% accuracy in safety relevance and instruction faithfulness, whereas CYBERSECEVAL recorded solely 68% in safety relevance and 42% in instruction faithfulness. The outcomes highlighted that SECCODEPLT‘s dynamic testing course of offered extra dependable insights into the dangers posed by code era fashions. For instance, SECCODEPLT was capable of determine non-trivial safety flaws in Cursor, a state-of-the-art coding agent, which failed in essential areas similar to code injection, entry management, and knowledge leakage prevention. The examine revealed that Cursor failed utterly on some essential CWEs (Widespread Weak spot Enumerations), underscoring the effectiveness of SECCODEPLT in evaluating mannequin safety.

A key facet of the platform’s success is its skill to evaluate AI fashions past easy code options. For instance, when SECCODEPLT was utilized to numerous state-of-the-art fashions, together with GPT-4o, it revealed that bigger fashions like GPT-4o tended to be safer, reaching a safe coding price of 55%. In distinction, smaller fashions confirmed the next tendency to supply insecure code. As well as, SECCODEPLT’s real-world atmosphere for cyberattack helpfulness allowed researchers to check the fashions’ skill to execute full assaults. The platform demonstrated that whereas some fashions, like Claude-3.5 Sonnet, had robust security alignment with over 90% refusal charges for producing malicious scripts, others, similar to GPT-4o, posed larger dangers with decrease refusal charges, indicating their skill to help in launching cyberattacks.

In conclusion, SECCODEPLT considerably improves current strategies for assessing the safety dangers of code era AI fashions. By incorporating dynamic evaluations and testing in real-world eventualities, the platform presents a extra exact and complete view of the dangers related to AI-generated code. By in depth testing, the platform has demonstrated its skill to detect and spotlight essential safety vulnerabilities that current static benchmarks fail to determine. This development indicators a vital step in the direction of making certain the protected and safe use of Code GenAI in real-world purposes.

Try the Paper, HF Dataset, and Challenge Web page. All credit score for this analysis goes to the researchers of this venture. Additionally, don’t neglect to observe us on Twitter and be part of our Telegram Channel and LinkedIn Group. In the event you like our work, you’ll love our e-newsletter.. Don’t Neglect to affix our 50k+ ML SubReddit.

[Upcoming Live Webinar- Oct 29, 2024] The Finest Platform for Serving Wonderful-Tuned Fashions: Predibase Inference Engine (Promoted)

Nikhil is an intern marketing consultant at Marktechpost. He’s pursuing an built-in twin diploma in Supplies on the Indian Institute of Expertise, Kharagpur. Nikhil is an AI/ML fanatic who’s all the time researching purposes in fields like biomaterials and biomedical science. With a robust background in Materials Science, he’s exploring new developments and creating alternatives to contribute.