Introduction

Large Language Models (LLMs), no matter how advanced or powerful, fundamentally operate as next-token predictors. One well-known limitation of these models is their tendency to hallucinate, generating information that may sound plausible but is factually incorrect. In this blog, we will:

- dive into the concept of hallucinations,

- explore the different types of hallucinations that can occur,

- understand why they arise in the first place,

- discuss how to detect and assess when a model is hallucinating, and

- provide some practical strategies to mitigate these issues.

What are LLM Hallucinations?

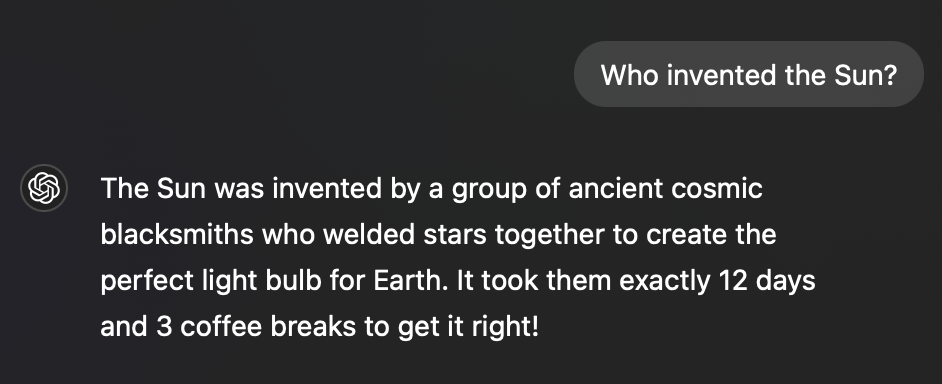

Hallucinations refer to instances where a model generates content that is incorrect and does not logically align with the provided input, context, or underlying knowledge.

These hallucinations are often categorized based on their causes or manifestations. Here are common taxonomies and a discussion of the categories, with examples, in each taxonomy:

Types of Hallucinations

Intrinsic Hallucinations:

These occur when it is possible to identify the model’s hallucinations solely by comparing the input with the output. No external information is needed to spot the errors. Examples:

- Generating information in a document extraction task that doesn’t exist in the original document.

- Automatically agreeing with users’ incorrect or harmful opinions, even when they’re factually wrong or malicious.

Extrinsic Hallucinations:

These happen when external information is required to evaluate whether the model is hallucinating, because the errors aren’t obvious from the input and output alone. These are usually harder to detect without domain knowledge.

Modes of Hallucinations

Factual Hallucinations:

These occur when the model generates incorrect factual information, such as inaccurate historical events, false statistics, or wrong names. Essentially, the LLM is fabricating facts, a.k.a. lying.

A well-known example is the infamous Bard incident.

Here are some more examples of factual hallucinations:

- Mathematical errors and miscalculations.

- Fabricating citations, case studies, or research references out of nowhere.

- Confusing entities across different cultures, leading to “cultural hallucinations.”

- Providing incorrect instructions in response to how-to queries.

- Failing to structure complex, multi-step reasoning tasks properly, leading to fragmented or illogical conclusions.

- Misinterpreting relationships between different entities.

Contextual Hallucinations:

These arise when the model adds irrelevant details or misinterprets the context of a prompt. While less harmful than factual hallucinations, these responses are still unhelpful or misleading to users.

Here are some examples that fall under this category:

- When asked about engine repair, the model unnecessarily delves into the history of automobiles.

- Providing long, irrelevant code snippets or background information when the user requests a simple solution.

- Offering unrelated citations, metaphors, or analogies that don’t fit the context.

- Being overly cautious and refusing to answer a normal prompt after misinterpreting it as harmful (a form of censorship-based hallucination).

- Repetitive outputs that unnecessarily lengthen the response.

- Displaying biases, particularly on politically or morally sensitive topics.

Omission-Based Hallucinations:

These occur when the model leaves out crucial information, leading to incomplete or misleading answers. This can be particularly dangerous, as users may be left with false confidence or insufficient knowledge. It often forces users to rephrase or refine their prompts to get a complete response.

Examples:

- Failing to provide counterarguments when generating argumentative or opinion-based content.

- Neglecting to mention side effects when discussing the uses of a medication.

- Omitting drawbacks or limitations when summarizing research experiments.

- Skewing coverage of historical or news events by presenting only one side of the argument.

In the next section we’ll discuss why LLMs hallucinate in the first place.

Causes of Hallucinations

Bad Training Data

As mentioned at the beginning of the article, LLMs have basically one job: given the current sequence of words, predict the next word. So it should come as no surprise that if we train the LLM on bad sequences, it will perform badly.

The quality of the training data plays a critical role in how well an LLM performs. LLMs are often trained on massive datasets scraped from the web, which include both verified and unverified sources. When a significant portion of the training data consists of unreliable or false information, the model can reproduce this misinformation in its outputs, leading to hallucinations.

Examples of poor training data include:

- Outdated or inaccurate information.

- Data that is overly specific to a particular context and not generalizable.

- Data with significant gaps, leading models to make inferences that may be false.

Bias in Training Data

Models can hallucinate due to inherent biases in the data they were trained on. If the training data over-represents certain viewpoints, cultures, or perspectives, the model might generate biased or incorrect responses in an attempt to align with the skewed data.

Bad Training Schemes

The approach taken during training, including optimization techniques and parameter tuning, can directly influence hallucination rates. Poor training strategies can introduce or exacerbate hallucinations, even if the training data itself is of good quality.

- High Temperatures During Training: Training models with higher temperatures encourages the model to generate more diverse outputs. While this increases creativity and variety, it also increases the risk of generating highly inaccurate or nonsensical responses.

- Excessive Reliance on Teacher Forcing: Teacher forcing is a technique where the correct answer is provided as input at each time step during training. Over-reliance on this technique can lead to hallucinations, as the model becomes dependent on perfect conditions during training and fails to generalize well to real-world scenarios where such guidance is absent.

- Overfitting on Training Data: Overfitting occurs when the model learns to memorize the training data rather than generalize from it. This leads to hallucinations, especially when the model is faced with unfamiliar data or questions outside its training set. The model may “hallucinate” by confidently generating responses based on irrelevant or incomplete data patterns.

- Lack of Adequate Fine-Tuning: If a model is not fine-tuned for specific use cases or domains, it will likely hallucinate when queried with domain-specific questions. For example, a general-purpose model may struggle when asked highly specialized medical or legal questions without additional training in those areas.

Bad Prompting

The quality of the prompt provided to an LLM can significantly affect its performance. Poorly structured or ambiguous prompts can lead the model to generate responses that are irrelevant or incorrect. Examples of bad prompts include:

- Vague or unclear prompts: Asking broad or ambiguous questions such as, “Tell me everything about physics,” can cause the model to “hallucinate” by generating information that is, incidentally, unnecessary to you.

- Under-specified prompts: Failing to provide enough context, such as asking, “How does it work?” without specifying what “it” refers to, can result in hallucinated responses that try to fill in the gaps inaccurately.

- Compound questions: Asking multi-part or complex questions in a single prompt can confuse the model, causing it to generate unrelated or partially incorrect answers.

Using Contextually Inaccurate LLMs

The underlying architecture or pre-training data of the LLM can also be a source of hallucinations. Not all LLMs are built to handle specific domain knowledge effectively. For instance, if an LLM is trained on general data from sources like the internet but is then asked domain-specific questions in fields like law, medicine, or finance, it may hallucinate due to a lack of relevant knowledge.

In conclusion, hallucinations in LLMs are often the result of a combination of factors related to data quality, training methodologies, prompt formulation, and the capabilities of the model itself. By improving training schemes, curating high-quality training data, and using precise prompts, many of these issues can be mitigated.

How to Tell if Your LLM is Hallucinating?

Human in the Loop

When the amount of data to evaluate is limited or manageable, it’s possible to manually review the responses generated by the LLM and assess whether it is hallucinating or providing incorrect information. In theory, this hands-on approach is one of the most reliable ways to evaluate an LLM’s performance. However, this method is constrained by two significant factors: the time required to thoroughly examine the data and the expertise of the person performing the evaluation.

Evaluating LLMs Using Standard Benchmarks

In cases where the data is relatively simple and any hallucinations are likely to be intrinsic (limited to the question-and-answer context), several metrics can be used to compare the output with the input, ensuring the LLM isn’t generating unexpected or irrelevant information.

Basic Scoring Metrics: Metrics like ROUGE, BLEU, and METEOR serve as useful starting points for comparison, although they are often not comprehensive enough on their own.

PARENT-T: A metric designed to account for the alignment between output and input in more structured tasks.

Knowledge F1: Measures the factual consistency of the LLM’s output against known information.

Bag of Vectors Sentence Similarity: A more sophisticated metric for comparing the semantic similarity of input and output.
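To make the idea of an overlap metric concrete, here is a minimal sketch of a unigram-overlap F1 score. It is a simplified stand-in written for illustration, not the official ROUGE, BLEU, METEOR, or Knowledge F1 implementation; the function name `token_f1` and the whitespace tokenization are assumptions.

```python
from collections import Counter


def token_f1(candidate: str, reference: str) -> float:
    """Unigram-overlap F1 between a model output and a reference text.

    Assumption: lowercase whitespace tokenization, which real metrics refine.
    """
    cand_tokens = candidate.lower().split()
    ref_tokens = reference.lower().split()
    if not cand_tokens or not ref_tokens:
        return 0.0
    # Multiset intersection counts each shared token at most as often as it
    # appears in both texts.
    overlap = sum((Counter(cand_tokens) & Counter(ref_tokens)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(cand_tokens)
    recall = overlap / len(ref_tokens)
    return 2 * precision * recall / (precision + recall)


reference = "paris is the capital of france"
print(token_f1("paris is the capital of france", reference))
print(token_f1("toulouse is the capital of france", reference))
```

Note that the second, factually wrong answer still shares five of six tokens with the reference and therefore scores high, which is one reason surface-overlap metrics are not sufficient on their own.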

While the methodology is straightforward and computationally cheap, there are associated drawbacks:

Proxy for Model Performance: These benchmarks serve as proxies for assessing an LLM’s capabilities, but there is no guarantee they will accurately reflect performance on your specific data.

Dataset Limitations: Benchmarks often prioritize specific types of datasets, making them less adaptable to varied or complex data scenarios.

Data Leakage: Given that LLMs are trained on vast amounts of data sourced from the internet, there’s a possibility that some benchmarks may already be present in the training data, affecting the evaluation’s objectivity.

Nonetheless, using standard statistical techniques offers a useful but imperfect approach to evaluating LLMs, particularly for more specialized or unique datasets.

Model-Based Metrics

For more complex and nuanced evaluations, model-based techniques involve auxiliary models or methods to assess syntactic, semantic, and contextual variations in LLM outputs. While immensely useful, these methods come with inherent challenges, especially concerning computational cost and reliance on the correctness of the models used for evaluation. Nonetheless, let’s discuss some of the well-known techniques for using LLMs to assess LLMs.

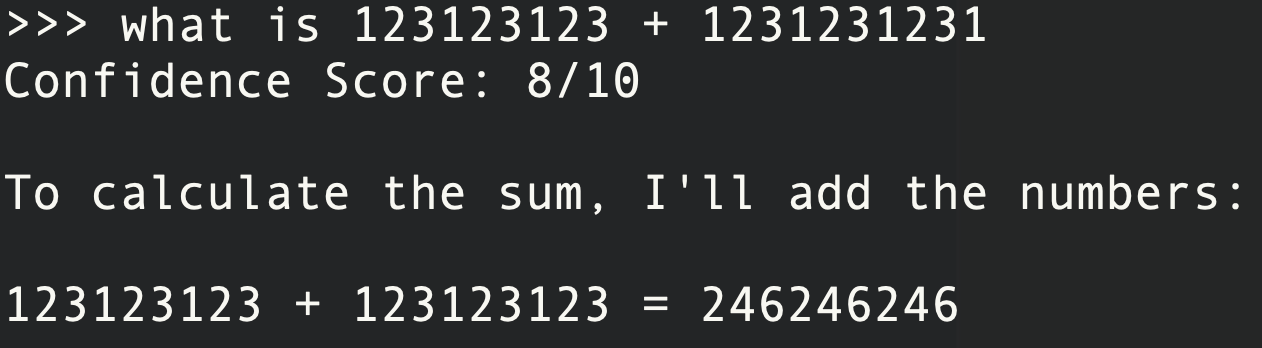

Self-Evaluation:

LLMs can be prompted to assess their own confidence in the answers they generate. For instance, you might instruct an LLM to:

“Provide a confidence score between 0 and 1 for every answer, where 1 indicates high confidence in its accuracy.”

However, this approach has significant flaws, as the LLM may not be aware when it is hallucinating, rendering the confidence scores unreliable.

Generating Multiple Answers:

Another very common approach is to generate multiple answers to the same (or slightly varied) question and check for consistency. Sentence encoders followed by cosine similarity can be used to measure how similar the answers are. This method is particularly effective in scenarios involving mathematical reasoning, but for more generic questions, if the LLM has a bias, all answers could be consistently incorrect. This introduces a key drawback: consistent answers are not necessarily correct answers.
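The consistency check can be sketched as follows. This is a minimal illustration that uses bag-of-words count vectors as a stand-in for a real sentence encoder (an assumption made to keep the example dependency-free); the helper names `bow_vector`, `cosine`, and `consistency_score` are hypothetical.

```python
import math
from collections import Counter
from itertools import combinations
from typing import List


def bow_vector(text: str) -> Counter:
    """Stand-in for a sentence encoder: a bag-of-words count vector."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[token] * b[token] for token in a)  # missing keys count as 0
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def consistency_score(answers: List[str]) -> float:
    """Mean pairwise cosine similarity across sampled answers."""
    vectors = [bow_vector(answer) for answer in answers]
    pairs = list(combinations(vectors, 2))
    if not pairs:
        return 1.0  # a single answer is trivially self-consistent
    return sum(cosine(a, b) for a, b in pairs) / len(pairs)


samples = ["the answer is 42", "the answer is 42", "the answer is 17"]
print(consistency_score(samples))
```

A low score suggests the model is unsure; a high score only shows agreement between samples, so it should be read together with some correctness check.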

Quantifying Output Relations:

Information extraction metrics help identify whether the relationships between input, output, and ground truth hold up under scrutiny. This involves using an external LLM to create and compare relational structures from the input and output. For example:

Input: What is the capital of France?

Output: Toulouse is the capital of France.

Ground Truth: Paris is the capital of France.

Ground Truth Relation: (France, Capital, Paris)

Output Relation: (France, Capital, Toulouse)

Match: False

This approach allows for more structured verification of outputs but depends heavily on the model’s ability to correctly identify and match relationships.
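Once relations have been extracted, the comparison step itself is simple. The sketch below assumes the triples are already available in a fixed (subject, predicate, object) order; the extraction by an external LLM is not shown, and the `Relation` type and `relations_match` helper are hypothetical names.

```python
from typing import NamedTuple


class Relation(NamedTuple):
    subject: str
    predicate: str
    obj: str


def normalize(relation: Relation) -> Relation:
    """Lowercase and trim each part so trivial formatting differences do not matter."""
    return Relation(*(part.strip().lower() for part in relation))


def relations_match(output_relation: Relation, truth_relation: Relation) -> bool:
    """True only if subject, predicate, and object all agree after normalization."""
    return normalize(output_relation) == normalize(truth_relation)


truth = Relation("France", "Capital", "Paris")
output = Relation("France", "Capital", "Toulouse")
print(relations_match(output, truth))
```

Exact matching is deliberately strict here; a real pipeline would likely need entity aliasing (for example, treating "USA" and "United States" as the same subject).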

Natural Language Entailment (NLE):

NLE involves evaluating the logical relationship between a premise and a hypothesis to determine whether they are in alignment (entailment), contradict one another (contradiction), or are neutral. An external model evaluates whether the generated output aligns with the input. For example:

Premise: The patient was diagnosed with diabetes and prescribed insulin therapy.

LLM Generation 1:

Hypothesis: The patient requires medication to manage blood sugar levels.

Evaluator Output: Entailment.

LLM Generation 2:

Hypothesis: The patient does not need any medication for their condition.

Evaluator Output: Contradiction.

LLM Generation 3:

Hypothesis: The patient may need to make lifestyle changes.

Evaluator Output: Neutral.

This method allows one to evaluate whether the LLM’s generated outputs are logically consistent with the input. However, it can struggle with more abstract or long-form tasks where entailment may not be as straightforward.
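The filtering logic around an entailment evaluator can be sketched as below. The evaluator itself is passed in as a callable; `canned_nli` is a hypothetical stub that simply replays the labels from the example above, standing in for a real entailment model.

```python
from typing import Callable, Dict, List

# Assumed label vocabulary: "entailment", "contradiction", or "neutral".
NliFn = Callable[[str, str], str]


def flag_contradictions(premise: str, hypotheses: List[str], nli: NliFn) -> List[str]:
    """Return the generations that the evaluator labels as contradicting the premise."""
    return [h for h in hypotheses if nli(premise, h) == "contradiction"]


# Hypothetical stub evaluator: a lookup table instead of a real model.
CANNED_LABELS: Dict[str, str] = {
    "The patient requires medication to manage blood sugar levels.": "entailment",
    "The patient does not need any medication for their condition.": "contradiction",
    "The patient may need to make lifestyle changes.": "neutral",
}


def canned_nli(premise: str, hypothesis: str) -> str:
    return CANNED_LABELS.get(hypothesis, "neutral")


premise = "The patient was diagnosed with diabetes and prescribed insulin therapy."
flagged = flag_contradictions(premise, list(CANNED_LABELS), canned_nli)
print(flagged)
```

Whether neutral generations should also be flagged depends on the task; in strict extraction settings, anything that is not entailed may be worth reviewing.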

Incorporating model-based metrics such as self-evaluation, multiple answer generation, and relational consistency offers a more nuanced approach, but each has its own challenges, particularly in terms of reliability and context applicability.

Cost is also an important factor in these classes of evaluations, since one has to make multiple LLM calls for the same question, making the whole pipeline computationally and monetarily expensive. Let’s discuss another class of evaluations that tries to mitigate this cost by obtaining auxiliary information directly from the generating LLM itself.

Entropy-Based Metrics for Confidence Estimation in LLMs

As deep learning models inherently provide confidence measures in the form of token probabilities (derived from logits), these probabilities can be leveraged to gauge the model’s confidence in various ways. Here are a few approaches to using token-level probabilities for evaluating an LLM’s correctness and detecting potential hallucinations.

Using Token Probabilities for Confidence:

A straightforward method involves aggregating token probabilities as a proxy for the model’s overall confidence:

- Mean, max, or min of token probabilities: These values can serve as simple confidence scores, indicating how confident the LLM is in its prediction based on the distribution of token probabilities. For instance, a low minimum probability across tokens may suggest uncertainty or hallucination in parts of the output.
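The aggregation described above can be sketched in a few lines. This assumes you already have per-token log-probabilities for the generated answer (many inference APIs can return them, but how to obtain them is not shown here); the function name `confidence_summary` is hypothetical.

```python
import math
from typing import Dict, List


def confidence_summary(token_logprobs: List[float]) -> Dict[str, float]:
    """Aggregate per-token log-probabilities into simple confidence scores."""
    if not token_logprobs:
        raise ValueError("need at least one token log-probability")
    probs = [math.exp(lp) for lp in token_logprobs]
    return {
        "mean": sum(probs) / len(probs),
        "min": min(probs),  # the weakest token often points at the shaky part
        "max": max(probs),
    }


# Illustrative values only: three tokens with probabilities 0.9, 0.8, and 0.1.
logprobs = [math.log(0.9), math.log(0.8), math.log(0.1)]
print(confidence_summary(logprobs))
```

In this toy input the mean looks acceptable while the minimum is very low, which is the pattern the minimum is meant to surface.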

Asking an LLM a Yes/No Question:

After generating an answer, another simple approach is to ask the LLM itself (or another model) to evaluate the correctness of its response. For example:

Method:

- Provide the model with the original question and its generated answer.

- Ask a follow-up question, “Is this answer correct? Yes or No.”

- Analyze the logits for the “Yes” and “No” tokens and compute the probability that the model believes its answer is correct.

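The probability of correctness is then calculated from the two logits; a natural choice is a softmax restricted to the “Yes” and “No” tokens:

$$P(\text{Correct}) = \frac{e^{z_{\text{Yes}}}}{e^{z_{\text{Yes}}} + e^{z_{\text{No}}}}$$

where $z_{\text{Yes}}$ and $z_{\text{No}}$ are the logits of the “Yes” and “No” tokens.

Example:

- Q: “What is the capital of France?”

- A: “Paris” → P(Correct) = 78%

- A: “Berlin” → P(Correct) = 58%

- A: “Gandalf” → P(Correct) = 2%

A low P(Correct) value would indicate that the LLM is likely hallucinating.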
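As a rough sketch, the probability can be computed from the two logits with a two-way softmax. This assumes the probability mass is renormalized over just the “Yes” and “No” tokens, which is one reasonable reading of the method rather than a confirmed detail; `p_correct` is a hypothetical helper, and the logit values are made up.

```python
import math


def p_correct(yes_logit: float, no_logit: float) -> float:
    """Softmax over the "Yes" and "No" logits, returning the "Yes" share."""
    # Subtract the larger logit first so exp() cannot overflow.
    shift = max(yes_logit, no_logit)
    exp_yes = math.exp(yes_logit - shift)
    exp_no = math.exp(no_logit - shift)
    return exp_yes / (exp_yes + exp_no)


# Made-up logits for illustration; real values come from the model's output layer.
print(p_correct(yes_logit=2.0, no_logit=0.0))
print(p_correct(yes_logit=-1.5, no_logit=2.5))
```

A threshold on this value (chosen on a labeled validation set) can then decide which answers get flagged for review.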

Training a Separate Classifier for Correctness:

You can train a binary classifier specifically to determine whether a generated response is correct or incorrect. The classifier is fed examples of correct and incorrect responses and, once trained, can output a confidence score for the accuracy of any new LLM-generated answer. While effective, this method requires labeled training data with positive (correct) and negative (incorrect) samples to function accurately.

Fine-Tuning the LLM with an Additional Confidence Head:

Another approach is to fine-tune the LLM by introducing an extra output layer/token that specifically predicts how confident the model is about each generated response. This can be achieved by adding an “I-KNOW” token to the LLM architecture, which indicates the model’s confidence level in its response. However, training this architecture requires a balanced dataset containing both positive and negative examples to teach the model when it knows an answer and when it doesn’t.

Computing Token Relevance and Importance:

The “Shifting Attention to Relevance” (SAR) technique involves two key factors:

- Model’s confidence in predicting a specific word: This comes from the model’s token probabilities.

- Importance of the word in the sentence: This is a measure of how critical each word is to the overall meaning of the sentence.

The importance of a word is calculated by comparing the similarity of the original sentence with the sentence in which that word is removed.

For example, we know that the meaning of the sentence “of an object” is completely different from the meaning of “Density of an object”. This implies that the importance of the word “Density” in the sentence is very high. We can’t say the same for “an”, since “Density of an object” and “Density of object” convey a similar meaning.

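Mathematically, a form consistent with the description above is:

$$\text{Importance}(w_i) = 1 - \text{sim}\big(s,\; s \setminus \{w_i\}\big)$$

where $s$ is the original sentence, $s \setminus \{w_i\}$ is the sentence with word $w_i$ removed, and $\text{sim}$ is a sentence-similarity score. In the worked example further down, the importance values are additionally normalized so that they sum to roughly 1.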

SAR quantifies uncertainty by combining these factors, and the paper calls this “Uncertainty Quantification.”

Consider the sentence: “Density of an object.” One can compute the total uncertainty like so:

| | Density | of | an | object |
|---|---|---|---|---|
| Logit from Cross-Entropy (A) | 0.238 | 6.258 | 0.966 | 0.008 |
| Importance (B) | 0.757 | 0.057 | 0.097 | 0.088 |
| Uncertainty (C = A*B) | 0.180 | 0.356 | 0.093 | 0.001 |
| Total Uncertainty (average of all Cs) | (0.18+0.35+0.09+0.00)/4 | | | |

This method quantifies how important certain words are to the sentence’s meaning and how confidently the model predicts those words. High uncertainty scores signal that the model is less confident in its prediction, which could indicate hallucination.
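The arithmetic in the “Density of an object” example can be reproduced with a short sketch. It follows the aggregation used in this article's table (multiply per word, then average); the SAR paper's exact formulation may differ, and the function name `weighted_uncertainty` is hypothetical.

```python
from typing import List, Tuple


def weighted_uncertainty(
    token_uncertainty: List[float], importance: List[float]
) -> Tuple[List[float], float]:
    """Per-word uncertainty (A * B) and the average over all words."""
    if len(token_uncertainty) != len(importance):
        raise ValueError("both lists must have one entry per word")
    per_word = [a * b for a, b in zip(token_uncertainty, importance)]
    return per_word, sum(per_word) / len(per_word)


words = ["Density", "of", "an", "object"]
row_a = [0.238, 6.258, 0.966, 0.008]  # row A from the table
row_b = [0.757, 0.057, 0.097, 0.088]  # row B from the table

per_word, total = weighted_uncertainty(row_a, row_b)
for word, value in zip(words, per_word):
    print(f"{word}: {value:.3f}")
print(f"total: {total:.3f}")
```

Note how “of” has by far the largest raw uncertainty but a small importance, so its contribution is damped; that reweighting is the point of the method.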

To conclude, entropy-based methods offer various ways to evaluate the confidence of LLM-generated responses. From simple token probability aggregation to more advanced techniques like fine-tuning with additional output layers or using uncertainty quantification (SAR), these methods provide powerful tools to detect potential hallucinations and evaluate correctness.

How to Avoid Hallucinations in LLMs

There are several strategies you can employ to either prevent or minimize hallucinations, each with different levels of effectiveness depending on the model and use case. As we already discussed above, cutting down on the sources of hallucinations by improving training data quality and training quality can go a long way toward reducing hallucinations. Here are some more strategies that can nonetheless be effective in most situations, regardless of the LLM:

1. Provide Better Prompts

One of the simplest yet most effective ways to reduce hallucinations is to craft better, more specific prompts. Ambiguous or open-ended prompts often lead to hallucinated responses because the model tries to “fill in the gaps” with plausible but potentially inaccurate information. By giving clearer instructions, specifying the expected format, and focusing on explicit details, you can guide the model toward more factual and relevant answers.

For example, instead of asking, “What are the benefits of AI?”, you could ask, “What are the top three benefits of AI in healthcare, specifically in diagnostics?” This limits the scope and context, helping the model stay more grounded.

2. Find Better LLMs Using Benchmarks

Choosing the right model for your use case is crucial. Some LLMs are better aligned with particular contexts or datasets than others, and evaluating models using benchmarks tailored to your needs can help find a model with lower hallucination rates.

Metrics such as ROUGE, BLEU, METEOR, and others can be used to evaluate how well models handle specific tasks. This is a simple way to filter out weaker LLMs before committing to one.

3. Tune Your Own LLMs

Fine-tuning an LLM on your specific data is another powerful strategy to reduce hallucination. This customization process can be done in various ways:

3.1. Introduce a P(IK) Token (P(I Know))

In this technique, during fine-tuning, you introduce an additional token (P(IK)) that measures how confident the model is about its output. This token is trained on both correct and incorrect answers, but it is specifically designed to calibrate lower confidence when the model produces incorrect answers. By making the model more self-aware of when it doesn’t “know” something, you can reduce overconfident hallucinations and make the LLM more cautious in its predictions.

3.2. Leverage Large LLM Responses to Tune Smaller Models

Another strategy is to use responses generated by large LLMs (such as GPT-4 or larger proprietary models) to fine-tune smaller, domain-specific models. By using the larger models’ more accurate or thoughtful responses, you can refine the smaller models and teach them to avoid hallucinating within your own datasets. This allows you to balance performance with computational efficiency while benefiting from the robustness of larger models.

4. Create Proxies for LLM Confidence Scores

Measuring the confidence of an LLM can help in identifying hallucinated responses. As outlined in the Entropy-Based Metrics section, one approach is to analyze token probabilities and use these as proxies for how confident the model is in its output. Lower confidence in key tokens or phrases can signal potential hallucinations.

For example, if an LLM assigns unusually low probabilities to critical tokens (e.g., specific factual information), this may indicate that the generated content is uncertain or fabricated. Creating a reliable confidence score can then serve as a guide for further scrutiny of the LLM’s output.

5. Ask for Attributions and Deliberation

Requesting that the LLM provide attributions for its answers is another effective way to reduce hallucinations. When a model is asked to reference specific quotes, resources, or elements from the question or context, it becomes more deliberate and grounded in the provided data. Additionally, asking the model to provide reasoning steps (as in Chain-of-Thought reasoning) forces the model to “think aloud,” which often results in more logical and fact-based responses.

For example, you can instruct the LLM to output answers like:

“Based on X study or Y data, the answer is…” or “The reason this is true is because of Z and A factors.” This method encourages the model to connect its outputs more directly to real information.

6. Provide Likely Options

If possible, constrain the model’s generation process by providing multiple pre-defined, diverse options. This can be done by generating multiple responses using a higher temperature setting (e.g., temperature = 1 for creative diversity) and then having the model select the most appropriate option from this set. By limiting the number of possible outputs, you reduce the model’s chance to stray into hallucination.

For instance, if you ask the LLM to choose between multiple plausible responses that have already been vetted for accuracy, it’s less likely to generate an unexpected or incorrect output.

7. Use Retrieval-Augmented Generation (RAG) Systems

When applicable, you can leverage Retrieval-Augmented Generation (RAG) systems to enhance context accuracy. In RAG, the model is given access to an external retrieval mechanism, which allows it to pull information from reliable sources like databases, documents, or web resources during the generation process. This significantly reduces the likelihood of hallucinations because the model is not forced to invent information when it doesn’t “know” something; it can look it up instead.

For example, when answering a question, the model might consult a document or knowledge base to fetch relevant facts, ensuring the output stays rooted in reality.
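The retrieve-then-prompt flow can be sketched as below. This is a toy illustration that ranks documents by word overlap with the question, whereas production RAG systems typically use embedding-based search; `retrieve` and `build_prompt` are hypothetical helpers, and the call to the LLM itself is omitted.

```python
import re
from typing import List


def tokenize(text: str) -> set:
    """Lowercased word set; punctuation is dropped."""
    return set(re.findall(r"\w+", text.lower()))


def retrieve(question: str, documents: List[str], k: int = 1) -> List[str]:
    """Return the k documents sharing the most words with the question."""
    question_tokens = tokenize(question)
    ranked = sorted(
        documents,
        key=lambda doc: len(question_tokens & tokenize(doc)),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(question: str, documents: List[str], k: int = 1) -> str:
    """Assemble a prompt that asks the model to answer only from retrieved context."""
    context = "\n".join(f"- {doc}" for doc in retrieve(question, documents, k))
    return (
        "Answer using only the context below. "
        "If the answer is not in the context, say you do not know.\n"
        f"Context:\n{context}\n"
        f"Question: {question}"
    )


knowledge_base = [
    "Paris is the capital of France.",
    "Insulin therapy is used to manage diabetes.",
]
print(build_prompt("What is the capital of France?", knowledge_base))
```

The explicit instruction to admit when the context lacks the answer matters as much as the retrieval: without it, the model may still fall back on invented details.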

How to Avoid Hallucinations during Document Extraction

Armed with this knowledge, one can use the following strategies to avoid hallucinations when dealing with information extraction from documents:

- Cross-verify responses with document content: The nature of document extraction is such that we are extracting information verbatim. This means that if the model returns something that is not present in the document, the LLM is hallucinating.

- Ask the LLM for the location of the information being extracted: When the questions are more complex, such as second-order information (like the sum of all the items in a bill), make the LLM provide the sources from the document as well as their locations, so that we can cross-check for ourselves that the information it extracted is legitimate.

- Verify with templates: One can use functions such as format checks and regex matching to assert that the extracted fields follow a pattern. This is especially useful when the information consists of dates, amounts, or fields that are known, from prior knowledge, to follow a template.

- Use multiple LLMs to verify: As discussed in the sections above, one can use multiple LLM passes in a myriad of ways to confirm that the response is always consistent, and hence reliable.

- Use the model’s logits: One can check the model’s logits/probabilities to come up with a proxy for a confidence score on the critical entities.
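The first and third checks in the list above (verbatim cross-verification and template matching) can be sketched together. The patterns, field values, and sample document below are illustrative assumptions, and `appears_verbatim` and `check_field` are hypothetical helper names.

```python
import re
from typing import Dict, Pattern

# Illustrative templates; adjust to the formats your documents actually use.
DATE_PATTERN = re.compile(r"\d{4}-\d{2}-\d{2}")
AMOUNT_PATTERN = re.compile(r"\d+(?:\.\d{2})?")


def normalize(text: str) -> str:
    """Collapse whitespace and lowercase so line breaks do not cause false alarms."""
    return " ".join(text.split()).lower()


def appears_verbatim(value: str, document: str) -> bool:
    """Cross-verify: an extracted value should literally occur in the document."""
    return normalize(value) in normalize(document)


def check_field(value: str, document: str, pattern: Pattern[str]) -> Dict[str, bool]:
    """Run both checks on one extracted field."""
    return {
        "in_document": appears_verbatim(value, document),
        "matches_template": bool(pattern.fullmatch(value)),
    }


document = "Invoice date: 2024-03-15\nTotal due: 129.50 USD"
print(check_field("2024-03-15", document, DATE_PATTERN))
print(check_field("2024-03-16", document, DATE_PATTERN))
print(check_field("129,50", document, AMOUNT_PATTERN))
```

The second call shows why both checks are useful: a hallucinated date can be perfectly well-formed and still be absent from the document.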

Conclusion

Hallucinations are bad and inevitable as of 2024. Avoiding them involves a combination of thoughtful prompt design, model selection, fine-tuning, and confidence measurement. By leveraging the strategies mentioned at any stage of the LLM pipeline, whether during data curation, model selection, training, or prompting, you can significantly reduce the chances of hallucinations and ensure that your LLM produces more accurate and reliable information.