Optimization problems involve finding the best feasible solution from a wide range of options. They arise frequently both in real-life situations and in most areas of scientific research. However, many complex problems cannot be solved with simple computational methods, or would take an unreasonable amount of time to solve.

Because simple algorithms are ineffective at solving these problems, experts around the world have worked to develop more effective techniques that can solve them within realistic time frames. Artificial neural networks (ANNs) are at the heart of some of the most promising techniques explored so far.

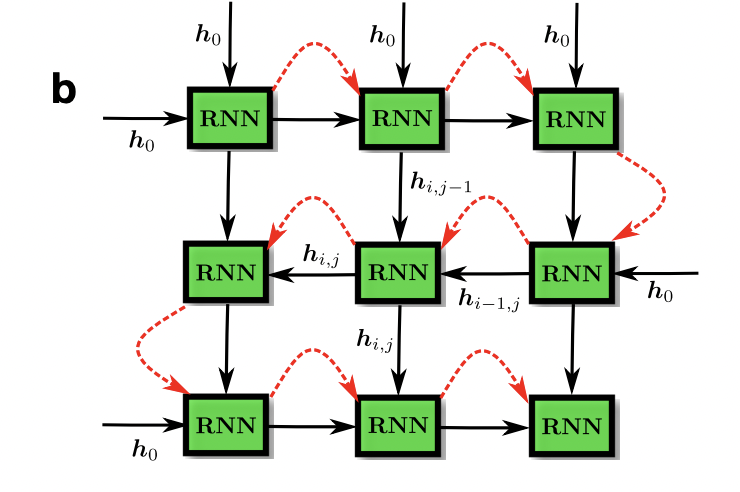

A new study by the Vector Institute, the University of Waterloo and the Perimeter Institute for Theoretical Physics in Canada presents variational neural annealing. This new optimization method combines recurrent neural networks (RNNs) with the principle of annealing. The technique uses a parameterized model to represent the probability distribution over candidate solutions to a given problem. Its goal is to solve real-world optimization problems with a novel algorithm that draws on annealing theory and on the RNNs used in natural language processing (NLP).

The proposed framework is based on the principle of annealing, inspired by metallurgical annealing: a material is heated and then cooled slowly so that it settles into a lower-energy, more resistant and more stable state. Simulated annealing was developed from this process and uses it to search numerically for solutions to optimization problems.
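To make the idea concrete, here is a minimal Python sketch of classical simulated annealing. It is not taken from the paper: the toy problem (a 12-spin open Ising chain with hypothetical coupling `J = 1.0` and field `h = 0.5`), the geometric cooling schedule and all parameter values are illustrative assumptions. At high temperature the search accepts some uphill moves and can escape poor configurations; as the temperature falls, it settles into a low-energy state.

```python
import math
import random


def ising_energy(bits, J=1.0, h=0.5):
    """Toy energy (hypothetical example): open Ising chain, bit 1 -> spin +1, bit 0 -> spin -1."""
    spins = [2 * b - 1 for b in bits]
    bonds = sum(spins[i] * spins[i + 1] for i in range(len(spins) - 1))
    return -J * bonds - h * sum(spins)


def simulated_annealing(n=12, T0=3.0, T_end=0.01, n_steps=5000, seed=0):
    """Metropolis single-bit-flip search with a slowly decreasing temperature."""
    rng = random.Random(seed)
    state = [rng.randint(0, 1) for _ in range(n)]
    energy = ising_energy(state)
    best_state, best_energy = state[:], energy
    for k in range(n_steps):
        # Geometric cooling: the "slow cooling" of metallurgical annealing.
        T = T0 * (T_end / T0) ** (k / (n_steps - 1))
        i = rng.randrange(n)
        state[i] ^= 1  # propose flipping one bit
        new_energy = ising_energy(state)
        delta = new_energy - energy
        if delta <= 0 or rng.random() < math.exp(-delta / T):
            energy = new_energy  # accept: always downhill, sometimes uphill
        else:
            state[i] ^= 1  # reject: undo the flip
        if energy < best_energy:
            best_state, best_energy = state[:], energy
    return best_state, best_energy


state, energy = simulated_annealing()
print(state, energy)
```

Because the cooling is finite, a run can still end in a local minimum of this toy chain (the all-down configuration) rather than the all-up ground state, which illustrates why better annealing strategies are of interest.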

The main distinguishing feature of this optimization method is that it combines the efficiency and processing power of ANNs with the advantages of simulated annealing. The team used RNNs, a class of models that has shown particular promise in NLP applications. While these models are typically used in NLP to interpret human language, the researchers repurposed them to solve optimization problems.

Compared with more traditional digital implementations of annealing, their RNN-based method produced better solutions, improving the efficiency of both classical and quantum annealing procedures. Using autoregressive networks, the researchers were able to encode the annealing paradigm. Their method advances optimization problem solving by directly exploiting the infrastructure used to train modern neural networks, such as TensorFlow or PyTorch, accelerated by GPUs and TPUs.
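The authors' own code is linked below; what follows is only a simplified, hypothetical Python illustration of the variational idea, loosely in the spirit of the method's classical variant. Assumptions: instead of an RNN it uses a tiny autoregressive model in which each bit's conditional probability is a logistic function of the earlier bits, so `p(s) = p(s_1) p(s_2 | s_1) ... p(s_n | s_1..s_{n-1})` is normalized by construction. The parameters are trained by gradient descent on the variational free energy `F = <E> - T*S = sum_s p(s) * (E(s) + T*log p(s))` while the temperature `T` is lowered linearly to zero. For clarity, the expectation is computed exactly by enumerating all 2^n configurations of a 4-spin toy chain; a realistic implementation would sample from the network instead.

```python
import itertools
import math


def log_sigmoid(x):
    """Numerically stable log(1 / (1 + exp(-x)))."""
    if x >= 0:
        return -math.log1p(math.exp(-x))
    return x - math.log1p(math.exp(x))


def ising_energy(bits, J=1.0, h=0.5):
    """Toy energy (hypothetical example): open Ising chain, bit 1 -> spin +1, bit 0 -> spin -1."""
    spins = [2 * b - 1 for b in bits]
    bonds = sum(spins[i] * spins[i + 1] for i in range(len(spins) - 1))
    return -J * bonds - h * sum(spins)


class AutoregressiveBernoulli:
    """Stand-in for an RNN: p(s_i = 1 | s_<i) = sigmoid(b_i + sum_{j<i} W_ij s_j)."""

    def __init__(self, n):
        self.n = n
        self.b = [0.0] * n
        self.W = [[0.0] * n for _ in range(n)]  # only entries W[i][j] with j < i are used

    def logits(self, bits):
        return [self.b[i] + sum(self.W[i][j] * bits[j] for j in range(i)) for i in range(self.n)]

    def log_prob(self, bits):
        # log(1 - sigmoid(a)) == log_sigmoid(-a)
        return sum(log_sigmoid(a) if s else log_sigmoid(-a) for a, s in zip(self.logits(bits), bits))

    def grad_log_prob(self, bits):
        """Gradients of log p(bits) with respect to b and W."""
        gb = [0.0] * self.n
        gW = [[0.0] * self.n for _ in range(self.n)]
        for i, a in enumerate(self.logits(bits)):
            d = bits[i] - math.exp(log_sigmoid(a))  # s_i - sigmoid(a_i)
            gb[i] = d
            for j in range(i):
                gW[i][j] = d * bits[j]
        return gb, gW


def anneal(n=4, T0=1.0, n_temps=20, steps_per_temp=50, lr=0.2):
    model = AutoregressiveBernoulli(n)
    configs = list(itertools.product((0, 1), repeat=n))
    for k in range(n_temps + 1):
        T = T0 * (1 - k / n_temps)  # linear schedule ending at T = 0
        for _ in range(steps_per_temp):
            logps = [model.log_prob(c) for c in configs]
            probs = [math.exp(lp) for lp in logps]
            local = [ising_energy(c) + T * lp for c, lp in zip(configs, logps)]
            F = sum(p * f for p, f in zip(probs, local))  # variational free energy
            gb = [0.0] * n
            gW = [[0.0] * n for _ in range(n)]
            for c, p, f in zip(configs, probs, local):
                db, dW = model.grad_log_prob(c)
                w = p * (f - F)  # exact score-function gradient with baseline F
                for i in range(n):
                    gb[i] += w * db[i]
                    for j in range(i):
                        gW[i][j] += w * dW[i][j]
            for i in range(n):
                model.b[i] -= lr * gb[i]
                for j in range(i):
                    model.W[i][j] -= lr * gW[i][j]
    return model, configs


model, configs = anneal()
probs = {c: math.exp(model.log_prob(c)) for c in configs}
print(max(probs, key=probs.get))
```

After the schedule reaches `T = 0`, the model's most probable configuration should be the all-up chain, the toy problem's ground state. Replacing exact enumeration with sampled estimates of the same gradient is what lets this kind of approach scale, and it is where frameworks like TensorFlow or PyTorch and GPU/TPU acceleration come in.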

The team carried out several tests comparing the method's performance with that of traditional annealing techniques based on numerical simulations. On many paradigmatic optimization problems, the proposed approach outperformed all of the methods it was compared against.

In the future, this algorithm could be applied to a wide variety of real-world optimization problems, allowing experts in different fields to solve difficult problems faster.

The researchers plan to further evaluate their algorithm on more realistic problems and to compare it with existing state-of-the-art optimization techniques. They also intend to improve their technique by replacing certain components or incorporating new ones.

You can view the full article here.

The code is also available on GitHub:

Variational Neural Annealing

Simulated Classical and Quantum Annealing