Introduction

In the world of AI, where data drives decisions, choosing the right tools can make or break your project. For Retrieval-Augmented Generation systems, more commonly known as RAG systems, PDFs are a goldmine of information, if you can unlock their contents. But PDFs are tricky: they are often packed with complex layouts, embedded images, and hard-to-extract data.

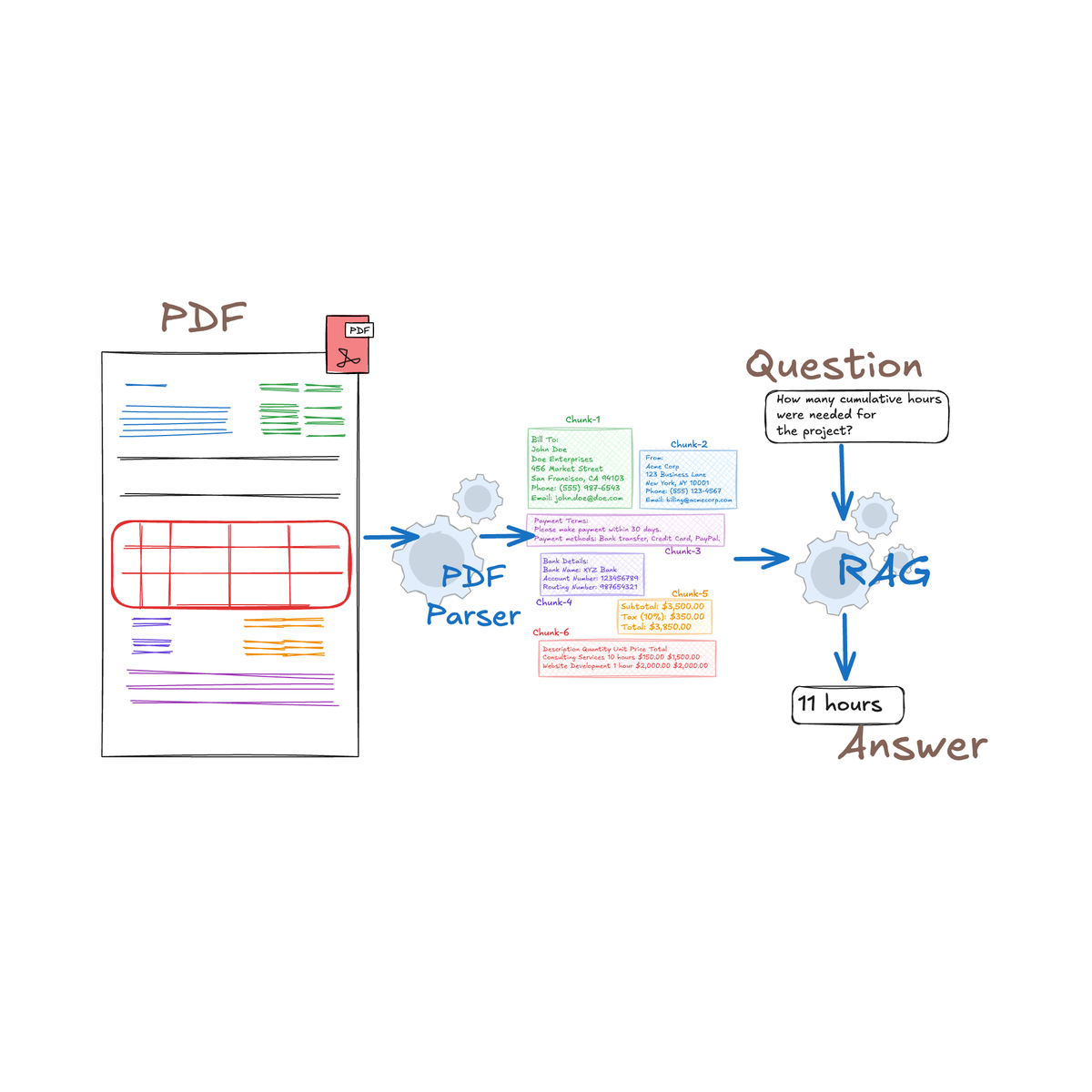

If you're not familiar with RAG systems, they work by enhancing an AI model's ability to provide accurate answers by retrieving relevant information from external documents. Large Language Models (LLMs), such as GPT, use this data to deliver more informed, contextually aware responses. This makes RAG systems especially powerful for handling complex sources like PDFs, which often contain hard-to-access but valuable content.

The right PDF parser doesn't just read files; it turns them into a wealth of actionable insights for your RAG applications. In this guide, we'll dive into the essential features of top PDF parsers, helping you find the right fit to power your next RAG breakthrough.

Understanding PDF Parsing for RAG

What is PDF Parsing?

PDF parsing is the process of extracting and converting the content within PDF files into a structured format that can be easily processed and analyzed by software applications. This includes text, images, and tables that are embedded within the document.

Why is PDF Parsing Crucial for RAG Applications?

RAG systems rely on high-quality, structured data to generate accurate and contextually relevant outputs. PDFs, often used for official documents, business reports, and legal contracts, contain a wealth of information but are notorious for their complex layouts and unstructured data. Effective PDF parsing ensures that this information is accurately extracted and structured, providing the RAG system with the reliable data it needs to function well. Without robust PDF parsing, critical data could be misinterpreted or lost, leading to inaccurate results and undermining the effectiveness of the RAG application.

The Role of PDF Parsing in Enhancing RAG Performance

Tables are a prime example of the complexities involved in PDF parsing. Consider the S-1 document used in the registration of securities. The S-1 contains detailed financial information about a company's business operations, use of proceeds, and management, often presented in tabular form. Accurately extracting these tables is crucial because even a minor error can lead to significant inaccuracies in financial reporting or in compliance with the regulations of the SEC (Securities and Exchange Commission), the U.S. government agency responsible for regulating the securities markets and protecting investors. The SEC ensures that companies provide accurate and transparent information, particularly through documents like the S-1, which are filed when a company plans to go public or offer new securities.

A well-designed PDF parser can handle these complex tables, maintaining the structure and the relationships between the data points. This precision ensures that when the RAG system retrieves and uses this information, it does so accurately, leading to more reliable outputs.

For example, we can present the following table from our financial S-1 PDF to an LLM and ask it to perform a specific analysis based on the data provided.

By improving extraction accuracy and preserving the integrity of complex layouts, PDF parsing plays a vital role in raising the performance of RAG systems, particularly in use cases like financial document analysis, where precision is non-negotiable.

Key Considerations When Choosing a PDF Parser for RAG

When selecting a PDF parser for use in a RAG system, it's essential to evaluate several critical factors to ensure that the parser meets your specific needs. Below are the key considerations to keep in mind:

Accuracy is key to making sure that the data extracted from PDFs is trustworthy and can be easily used in RAG applications. Poor extraction can lead to misunderstandings and hurt the performance of AI models.

Ability to Maintain Document Structure

- Preserving the original structure of the document is important to make sure that the extracted data keeps its original meaning. This includes preserving the layout, order, and connections between different parts (e.g., headers, footnotes, tables).

Support for Various PDF Types

- PDFs come in various forms, including digitally created PDFs, scanned PDFs, interactive PDFs, and those with embedded media. A parser's ability to handle different types of PDFs ensures flexibility in working with a wide range of documents.

Integration Capabilities with RAG Frameworks

- For a PDF parser to be useful in a RAG system, it needs to work well with the existing setup. This includes being able to send extracted data directly into the system for indexing, searching, and generating results.
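One common way to hand parser output to a RAG index is to split the extracted text into overlapping chunks before embedding. The sketch below is a minimal illustration of that step, not a prescribed pipeline: the function name `chunk_text` and the default sizes are assumptions chosen for the example, and real systems often split on sentences or sections instead of raw character windows.

```python
def chunk_text(text: str, chunk_size: int = 500, overlap: int = 50) -> list[str]:
    """Split extracted text into overlapping character windows for indexing.

    chunk_size and overlap are illustrative defaults, not recommendations.
    """
    if chunk_size <= 0 or not 0 <= overlap < chunk_size:
        raise ValueError("need chunk_size > 0 and 0 <= overlap < chunk_size")

    step = chunk_size - overlap  # how far each new window advances
    chunks = []
    for start in range(0, len(text), step):
        chunk = text[start:start + chunk_size]
        if chunk.strip():  # skip windows that contain only whitespace
            chunks.append(chunk)
        if start + chunk_size >= len(text):
            break  # the current window already reached the end of the text
    return chunks


if __name__ == "__main__":
    sample = "abcdefghij" * 10  # 100 characters standing in for parser output
    pieces = chunk_text(sample, chunk_size=40, overlap=10)
    print(len(pieces))  # 3
```

The overlap means a value that straddles a chunk boundary still appears whole in at least one chunk, which matters for figures pulled from tables.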

Challenges in PDF Parsing for RAG

RAG systems rely heavily on accurate, structured data to function effectively. PDFs, however, often present significant challenges due to their complex formatting, varied content types, and inconsistent structures. Here are the primary challenges in PDF parsing for RAG:

Dealing with Complex Layouts and Formatting

PDFs often include multi-column layouts, mixed text and images, footnotes, and headers, all of which make it difficult to extract information in a linear, structured format. The non-linear nature of many PDFs can confuse parsers, leading to jumbled or incomplete data extraction.

A financial report might have tables, charts, and multiple columns of text on the same page. Take the layout above as an example: extracting the relevant information while maintaining the context and order can be challenging for standard parsers.

Wrongly Extracted Data:

Handling Scanned Documents and Images

Many PDFs contain scanned images of documents rather than digital text. These documents usually require Optical Character Recognition (OCR) to convert the images into text, but OCR can struggle with poor image quality, unusual fonts, or handwritten notes, and most PDF parsers do not offer image data extraction at all.

Extracting Tables and Structured Data

Tables are a gold mine of data. However, extracting tables from PDFs is notoriously difficult because of the varied ways tables are formatted. Tables may span multiple pages, include merged cells, or have irregular structures, making it hard for parsers to correctly identify and extract the data.

An S-1 filing might include complex tables with financial data that must be extracted accurately for analysis. Standard parsers may misinterpret rows and columns, leading to incorrect data extraction.

Before expecting your RAG system to analyze numerical data stored in critical tables, it's essential to first evaluate how effectively this data is extracted and sent to the LLM. Accurate extraction largely determines how reliable the model's calculations will be.
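A quick way to run that kind of evaluation is to check whether the cells of a known table row still sit together on one line of the extracted text. The sketch below is only an illustration: `row_intact` is a hypothetical helper, and the two sample strings are hand-written stand-ins modeled on a registration fee table, not real parser output.

```python
def row_intact(extracted_text: str, cells: list[str]) -> bool:
    """Return True if every cell appears, in order, on a single extracted line."""
    for line in extracted_text.splitlines():
        position = 0
        for cell in cells:
            found = line.find(cell, position)
            if found == -1:
                break  # this line is missing a cell; try the next line
            position = found + len(cell)
        else:
            return True  # all cells were found left to right on this line
    return False


# Hypothetical extractions of the same table row.
row_wise = (
    "CALCULATION OF REGISTRATION FEE\n"
    "Class A common stock, $0.0001 par value per share $100,000,000 $10,910"
)
cell_wise = (
    "Class A common stock, $0.0001 par value per share\n"
    "$100,000,000\n"
    "$10,910"
)

expected_row = ["Class A common stock", "$100,000,000", "$10,910"]

if __name__ == "__main__":
    print(row_intact(row_wise, expected_row))   # True
    print(row_intact(cell_wise, expected_row))  # False
```

A failed check does not prove the LLM will answer incorrectly, but it flags rows whose label and values have been pulled apart before they ever reach the model.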

Comparative Analysis of Popular PDF Parsers for RAG

In this section of the article, we will compare some of the best-known PDF parsers on the challenging aspects of PDF extraction, using the Allbirds S-1 form. Keep in mind that the Allbirds S-1 PDF is 700 pages long and highly complex, which poses significant challenges and makes this comparison a real test of the five parsers mentioned below. On more common and less complex PDF documents, these parsers might perform better when extracting the needed data.

Multi-Column Layouts Comparison

Below is an example of a multi-column layout extracted from the Allbirds S-1 form. While this format is simple for human readers, who can easily track the data in each column, many PDF parsers struggle with such layouts. Some parsers misread the content by treating the page as a single column, rather than recognizing the logical flow across multiple columns. This misinterpretation can lead to errors in data extraction, making it hard to accurately retrieve and analyze the information in such documents. Proper handling of multi-column formats is essential for accurate data extraction from complex PDFs.
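To make the reading-order problem concrete, here is a minimal sketch of column-aware ordering. It assumes the parser can report a position for each text fragment; the `Box` type, the coordinates, and the `gap` threshold are all hypothetical values chosen for illustration, and real layout analysis is considerably more involved.

```python
from dataclasses import dataclass


@dataclass
class Box:
    x: float   # left edge of the text fragment (hypothetical units)
    y: float   # top edge, growing downward
    text: str


def read_by_columns(boxes: list[Box], gap: float = 50.0) -> list[str]:
    """Order fragments column by column, then top to bottom within each column."""
    columns: list[list[Box]] = []
    for box in sorted(boxes, key=lambda b: b.x):
        # Start a new column when the left edge jumps by more than `gap`.
        if columns and box.x - columns[-1][-1].x <= gap:
            columns[-1].append(box)
        else:
            columns.append([box])

    ordered: list[str] = []
    for column in columns:
        ordered.extend(b.text for b in sorted(column, key=lambda b: b.y))
    return ordered


if __name__ == "__main__":
    page = [
        Box(50, 100, "Nicole Brookshire"), Box(250, 100, "Daniel Li"),
        Box(450, 100, "Stelios G. Saffos"), Box(52, 120, "Peter Werner"),
        Box(251, 120, "VP, Legal"), Box(449, 120, "Richard A. Kline"),
    ]
    print(read_by_columns(page))
```

Sorting the same fragments by `y` alone would interleave the three columns on every line, which is the failure mode described above.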

PDF Parsers in Action

Now let's check how some PDF parsers extract multi-column layout data.

a) PyPDF1 (Multi-Column Layouts Comparison)

Nicole BrookshirePeter WernerCalise ChengKatherine DenbyCooley LLP3 Embarcadero Center, 20th FloorSan Francisco, CA 94111(415) 693-2000Daniel LiVP, LegalAllbirds, Inc.730 Montgomery StreetSan Francisco, CA 94111(628) 225-4848Stelios G. SaffosRichard A. KlineBenjamin J. CohenBrittany D. RuizLatham & Watkins LLP1271 Avenue of the AmericasNew York, New York 10020(212) 906-1200

The primary issue with the PyPDF1 parser is its inability to neatly separate the extracted data into distinct lines, leading to cluttered and confusing output. Additionally, while the parser recognizes the concept of multiple columns, it fails to insert spaces between them. This misalignment of text can cause significant problems for RAG systems, making it difficult for the model to accurately interpret and process the information. The lack of clear separation and spacing ultimately hampers the effectiveness of the RAG system, because the extracted data does not reflect the structure of the original document.

b) PyPDF2 (Multi-Column Layouts Comparison)

Nicole Brookshire Daniel Li Stelios G. Saffos

Peter Werner VP, Legal Richard A. Kline

Calise Cheng Allbirds, Inc. Benjamin J. Cohen

Katherine Denby 730 Montgomery Street Brittany D. Ruiz

Cooley LLP San Francisco, CA 94111 Latham & Watkins LLP

3 Embarcadero Center, 20th Floor (628) 225-4848 1271 Avenue of the Americas

San Francisco, CA 94111 New York, New York 10020

(415) 693-2000 (212) 906-1200

As shown above, even though the PyPDF2 parser splits the extracted data into separate lines, which makes it easier to read, it still struggles with multi-column layouts. Instead of following the logical flow of text within each column, it reads straight across the page, so each output line mixes entries from all three columns. The result is jumbled text that fails to preserve the intended structure of the content, making it difficult to read or analyze the extracted information accurately. A proper parsing tool should be able to identify and correctly process such layouts so that the original document's structure stays intact.

c) PDFMiner (Multi-Column Layouts Comparison)

Nicole Brookshire

Peter Werner

Calise Cheng

Katherine Denby

Cooley LLP

3 Embarcadero Center, 20th Floor

San Francisco, CA 94111

(415) 693-2000

Copies to:

Daniel Li

VP, Legal

Allbirds, Inc.

730 Montgomery Street

San Francisco, CA 94111

(628) 225-4848

Stelios G. Saffos

Richard A. Kline

Benjamin J. Cohen

Brittany D. Ruiz

Latham & Watkins LLP

1271 Avenue of the Americas

New York, New York 10020

(212) 906-1200

The PDFMiner parser handles the multi-column layout with precision, extracting the data as intended. It correctly identifies the flow of text across columns, preserving the document's original structure and keeping the extracted content clear and logically organized. This makes PDFMiner a reliable choice for parsing complex layouts, where maintaining the integrity of the original format is crucial.

d) Tika-Python (Multi-Column Layouts Comparison)

Copies to:

Nicole Brookshire

Peter Werner

Calise Cheng

Katherine Denby

Cooley LLP

3 Embarcadero Center, 20th Floor

San Francisco, CA 94111

(415) 693-2000

Daniel Li

VP, Legal

Allbirds, Inc.

730 Montgomery Street

San Francisco, CA 94111

(628) 225-4848

Stelios G. Saffos

Richard A. Kline

Benjamin J. Cohen

Brittany D. Ruiz

Latham & Watkins LLP

1271 Avenue of the Americas

New York, New York 10020

(212) 906-1200

Though the Tika-Python parser does not match the precision of PDFMiner in extracting data from multi-column layouts, it still demonstrates a strong ability to understand and interpret the structure of such data. While the output may not be as polished, Tika-Python recognizes the multi-column format and preserves the overall structure of the content to a reasonable extent. This makes it a dependable option for complex layouts, even if some refinement may be necessary after extraction.

e) Llama Parser (Multi-Column Layouts Comparison)

Nicole Brookshire Daniel Lilc.Street1 Stelios G. Saffosen

Peter Werner VP, Legany A 9411 Richard A. Kline

Katherine DenCalise Chengby 730 Montgome C848Allbirds, Ir Benjamin J. CohizLLPcasBrittany D. Rus meri20

3 Embarcadero Center 94111Cooley LLP, 20th Floor San Francisco,-4(628) 225 1271 Avenue of the Ak 100Latham & Watkin

San Francisco, CA0(415) 693-200 New York, New Yor0(212) 906-120

The Llama Parser struggled with the multi-column layout, extracting the data in a linear, vertical format rather than recognizing the logical flow across the columns. This results in disjointed and hard-to-follow output, which reduces its effectiveness for documents with complex layouts.

Table Comparison

Extracting data from tables, especially when they contain financial information, is critical for ensuring that important calculations and analyses can be performed accurately. Financial data, such as balance sheets, profit and loss statements, and other quantitative information, is often structured in tables within PDFs. The ability of a PDF parser to correctly extract this data is essential for maintaining the integrity of financial reports and for any subsequent analysis. Below is a comparison of how different PDF parsers handle the extraction of such data.

Below is an example table from the same Allbirds S-1 form, which we use to test our parsers.

Now let's check how some PDF parsers extract tabular data.

a) PyPDF1 (Table Comparison)

☐CALCULATION OF REGISTRATION FEETitle of Each Class ofSecurities To Be RegisteredProposed MaximumAggregate Offering PriceAmount ofRegistration FeeClass A common stock, $0.0001 par value per share$100,000,000$10,910(1)Estimated solely for the purpose of calculating the registration fee pursuant to Rule 457(o) under the Securities Act of 1933, as amended.(2)

Similar to its handling of multi-column layout data, the PyPDF1 parser struggles with extracting data from tables. Just as it misreads the structure of multi-column text by reading it as a single vertical line, it fails to maintain the correct formatting and alignment of table data, often leading to disorganized and inaccurate output. This limitation makes PyPDF1 less reliable for tasks that require precise extraction of structured data, such as financial tables.

b) PyPDF2 (Table Comparison)

Similar to its handling of multi-column layout data, the PyPDF2 parser struggles with extracting data from tables, misreading the table structure much as it misreads multi-column text. Unlike the PyPDF1 parser, however, PyPDF2 splits the data into separate lines.

CALCULATION OF REGISTRATION FEE

Title of Each Class of Proposed Maximum Amount of

Securities To Be Registered Aggregate Offering Price(1)(2) Registration Fee

Class A common stock, $0.0001 par value per share $100,000,000 $10,910

c) PDFMiner (Table Comparison)

Although the PDFMiner parser understands the basics of extracting data from individual cells, it still struggles to maintain the correct order of column data. This becomes apparent when certain cells are misplaced, such as the “Class A common stock, $0.0001 par value per share” cell, which can end up in the wrong sequence. This misalignment compromises the accuracy of the extracted data, making it less reliable for precise analysis or reporting.

CALCULATION OF REGISTRATION FEE

Class A common stock, $0.0001 par value per share

Title of Each Class of

Securities To Be Registered

Proposed Maximum

Aggregate Offering Price

(1)(2)

$100,000,000

Amount of

Registration Fee

$10,910

d) Tika-Python (Table Comparison)

As demonstrated below, the Tika-Python parser misreads the multi-column header, extracting it vertically, which makes it not much better than the PyPDF1 and PyPDF2 parsers.

CALCULATION OF REGISTRATION FEE

Title of Each Class of

Securities To Be Registered

Proposed Maximum

Aggregate Offering Price

Amount of

Registration Fee

Class A common stock, $0.0001 par value per share $100,000,000 $10,910

e) Llama Parser (Table Comparison)

CALCULATION OF REGISTRATION FEE

Securities To Be RegisteTitle of Each Class ofred Aggregate Offering PriceProposed Maximum(1)(2) Registration Amount ofFee

Class A common stock, $0.0001 par value per share $100,000,000 $10,910

The Llama Parser had trouble extracting data from tables and failed to capture the structure accurately. This resulted in misaligned or incomplete data, making the table's contents difficult to interpret.

Image Comparison

In this section, we evaluate how well our PDF parsers extract data from images embedded within the document.

Llama Parser

Text: Table of Contents

allbids

Betler Things In A Better Way applies

nof only to our products, but to

everything we do. That'$ why we're

pioneering the first Sustainable Public

Equity Offering

The PyPDF1, PyPDF2, PDFMiner, and Tika-Python libraries are all limited to extracting text and metadata from PDFs; they cannot extract data from images. The Llama Parser, on the other hand, demonstrated the ability to accurately extract data from images embedded within the PDF, providing reliable results for image-based content.

Note that the summary below is based on how the PDF parsers handled the challenges presented by the Allbirds S-1 form.

Best Practices for PDF Parsing in RAG Applications

Effective PDF parsing in RAG systems relies heavily on pre-processing techniques that improve the accuracy and structure of the extracted data. Applying methods tailored to the specific challenges of scanned documents, complex layouts, or low-quality images can significantly improve parsing quality.

Pre-processing Techniques to Improve Parsing Quality

Pre-processing PDFs before parsing can significantly improve the accuracy and quality of the extracted data, especially when dealing with scanned documents, complex layouts, or low-quality images.

Here are some reliable techniques:

- Text Normalization: Standardize the text before parsing by removing unwanted characters, correcting encoding issues, and normalizing font sizes and styles.

- Converting PDFs to HTML: Converting PDFs to HTML adds valuable HTML elements, such as <p>, <br>, and <table>, which inherently preserve the structure of the document, like headers, paragraphs, and tables. This helps organize the content more effectively than raw PDF output. For example, converting a PDF to HTML can result in structured output like:

<html><body><p>Table of Contents<br>As filed with the Securities and Exchange Commission on August 31, 2021<br>Registration No. 333-<br>UNITED STATES<br>SECURITIES AND EXCHANGE COMMISSION<br>Washington, D.C. 20549<br>FORM S-1<br>REGISTRATION STATEMENT<br>UNDER<br>THE SECURITIES ACT OF 1933<br>Allbirds, Inc.<br>

- Page Selection: Extract only the relevant pages of a PDF to reduce processing time and focus on the most important sections. This can be done by manually or programmatically selecting the pages that contain the required information. If you're extracting data from a 700-page PDF, selecting only the pages with balance sheets can save significant processing time.

- Image Enhancement: Image enhancement techniques can improve the clarity of the text in scanned PDFs. This includes adjusting contrast, brightness, and resolution, all of which make OCR more effective. These steps help ensure that the extracted data is more accurate and reliable.
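As an illustration of the text normalization step, here is a minimal sketch using only the Python standard library. The function name `normalize_text` and the specific cleanup rules are assumptions for this example; it covers character-level cleanup only (font sizes and styles are not visible in plain extracted text), and the hyphen rule can wrongly merge genuine hyphenated compounds that happen to break across lines.

```python
import re
import unicodedata


def normalize_text(raw: str) -> str:
    """Clean up common character-level debris in extracted PDF text."""
    # NFKC folds ligatures ("ﬁ" -> "fi") and non-breaking spaces into plain forms.
    text = unicodedata.normalize("NFKC", raw)
    # Rejoin words hyphenated across a line break: "re-\nport" -> "report".
    text = re.sub(r"(\w)-\n(\w)", r"\1\2", text)
    # Drop control and format characters (form feeds, soft hyphens), keeping \n and \t.
    text = "".join(
        ch for ch in text
        if ch in "\n\t" or unicodedata.category(ch)[0] != "C"
    )
    # Collapse runs of spaces and tabs into a single space.
    text = re.sub(r"[ \t]+", " ", text)
    # Trim each line, then limit consecutive blank lines to one.
    text = "\n".join(line.strip() for line in text.split("\n"))
    text = re.sub(r"\n{3,}", "\n\n", text)
    return text.strip()


if __name__ == "__main__":
    messy = "\ufb01nancial  re-\nport\x0c\n\n\n\nNet\u00a0revenue"
    print(repr(normalize_text(messy)))  # 'financial report\n\nNet revenue'
```

Running this kind of cleanup before chunking keeps search terms such as "financial" matchable even when the PDF stored them with ligatures or stray control characters.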

Testing Our PDF Parser Inside a RAG System

In this section, we take our testing to the next level by integrating each of our PDF parsers into a fully functional RAG system, using the Llama 3 model as the system's LLM.

We evaluate the model's responses to specific questions and assess how the quality of each parser's extraction affects the accuracy of the RAG system's replies. This lets us gauge each parser's performance on a complex document like the S-1 filing, which is long, highly detailed, and difficult to parse. Even a minor error in data extraction can significantly impair the RAG model's ability to generate accurate responses.

This method allows us to push the parsers to their limits, testing their robustness and accuracy on intricate legal and financial documentation.
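When reading results like these, it helps to separate two kinds of failure: the correct value never reached the model, or it was retrieved but the model still answered wrongly. The sketch below shows one simple way to label each test case. The `diagnose` helper and its labels are hypothetical, and the plain substring match will miss reformatted values (for example "$219.3 million" versus "$219,296").

```python
def diagnose(expected: str, retrieved_chunks: list[str], answer: str) -> str:
    """Label a RAG test case by where the expected value shows up."""

    def squash(s: str) -> str:
        # Ignore case and whitespace so spacing differences do not matter.
        return "".join(s.lower().split())

    target = squash(expected)
    in_context = any(target in squash(chunk) for chunk in retrieved_chunks)
    in_answer = target in squash(answer)

    if in_answer:
        return "correct"
    if in_context:
        # The value was retrieved, but the model did not use it.
        return "generation failure"
    # The value never reached the model.
    return "retrieval failure"


if __name__ == "__main__":
    label = diagnose(
        "$100,000,000",
        ["Class A common stock $100,000,000 $10,910"],
        "the conversion ratio is subject to adjustment",
    )
    print(label)  # generation failure
```

A parser that scatters a table across many lines tends to show up as either label: the value may be missing from the retrieved chunks, or present but buried in noise the model cannot interpret.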

1) Question from Text: “What is the total net revenue for Allbirds for the year ended December 31, 2020?”

Correct Answer: $219.3 million

PyPDF

- Answer: the conversion ratio is subject to adjustment upon the occurrence of certain events, as described below.

- Explanation: Incorrect answer. Because of the unstructured data extracted by PyPDF, the RAG system retrieves incorrect information.

PyPDF2

- Answer: in our Annual Report on Form 10-K for the year ended December 31, 2020. and $5.4 million and $7.4 million for the six months ended June 30, 2020 and 2021, respectively. (2) Includes depreciation and amortization expense of ………..

- Explanation: Incorrect answer. Although the RAG system retrieved the correct data, “Allbirds, Inc. Net revenue $193,673 $219,296 $92,779 $117,542 December 31,” the model was unable to provide an accurate response because the table was poorly parsed from the PDF. The messy extraction hindered the system's ability to interpret the numbers and deliver a precise answer.

PDFMiner

- Answer: $219.3 million. According to the given context, Allbirds' net revenue grew from $126.0 million in 2018 to $219.3 million in 2020, representing a compound annual growth rate. Therefore, the total net revenue for Allbirds for the year ended December 31, 2020 is $219.3 million.

- Explanation: Correct answer.

Tika Python

- Answer: According to the 10-K filing of Allbirds, Inc. for the year ended December 31, 2020, the total net revenue for the year was $266.7 million. [1]

- Explanation: Incorrect answer. The retrieved data did not contain the correct response, so the model fell back on its pre-stored knowledge.

Llama Parser

- Answer: The total net revenue for Allbirds for the year ended December 31, 2020 is $219,296. This is stated in the given context.

- Explanation: Correct answer.

Question from Table: What is the proposed maximum aggregate offering price for Allbirds, Inc.'s Class A common stock in this registration statement?

Correct answer: $100,000,000.

PyPDF

- Answer: the conversion ratio is subject to adjustment upon the occurrence of certain events, as described below.

- Explanation: Incorrect answer. While the value “$100,000,000” was present in the retrieved data, the large amount of retrieved information resulting from the poor PDF parsing overwhelmed the LLM, causing confusion and leading to an inaccurate response.

PyPDF2

- Answer: The proposed maximum aggregate offering price for Allbirds, Inc.'s Class A common stock is $100,000,000.

- Explanation: Correct answer. The correct value was returned in the retrieved data.

PDFMiner

- Answer: The proposed maximum aggregate offering price for Allbirds, Inc.'s Class A common stock in this registration statement is not specified in the context.

- Explanation: Incorrect answer. The correct answer “$100,000,000” was not returned in the retrieved data, due to poor PDF parsing.

Tika Python

- Answer: The proposed maximum aggregate offering price for Allbirds, Inc.'s Class A common stock is not specified in the given context.

- Explanation: Incorrect answer. The correct answer “$100,000,000” was not returned in the retrieved data, due to poor PDF parsing.

Llama Parser

- Answer: The exact answer is not provided in the given context.

- Explanation: Incorrect answer. The correct answer “$100,000,000” was not returned in the retrieved data, due to poor PDF parsing.

Question from Image: How many company-operated stores did Allbirds have as of June 30, 2021?

Correct answer: 100%

For this question, we only test the Llama Parser, since it is the only parser capable of reading data in images.

- Answer: Not mentioned in the provided context.

- Explanation: Incorrect answer. The RAG system failed to retrieve the actual value because the data extracted from the PDF image, “35′, ‘ 27 countries’, ‘ Company-operatedstores as 2.5B”, was too messy for the system to retrieve.

We asked 10 such questions pertaining to content in text and tables and summarized the results below.

Summary of all results

PyPDF: Struggles with both structured and unstructured data, leading to frequent incorrect answers. Data extraction is messy, causing confusion in RAG model responses.

PyPDF2: Performs better with table data but struggles with large datasets that confuse the model. It managed to return correct answers for some structured text data.

PDFMiner: Generally correct with text-based questions but struggles with structured data like tables, often missing key information.

Tika Python: Extracts some data but relies on pre-stored knowledge if the correct data is not retrieved, leading to frequent incorrect answers for both text and table questions.

Llama Parser: Best at handling structured text, but struggles with complex image data and messy table extractions.

From all these experiments, it is fair to say that PDF parsers have yet to catch up with complex layouts and can cause trouble for downstream applications that require clear layout awareness and separation of blocks. Nonetheless, we found PDFMiner and PyPDF2 to be good starting points.

Enhancing Your RAG System with Advanced PDF Parsing Solutions

As shown above, PDF parsers, while extremely versatile and easy to apply, can sometimes struggle with complex document layouts, such as multi-column text or embedded images, and may fail to extract information accurately. One effective solution to these challenges is using Optical Character Recognition (OCR) to process scanned documents or PDFs with intricate structures. Nanonets, a leading provider of AI-powered OCR solutions, offers advanced tools to enhance PDF parsing for RAG systems.

Nanonets combines multiple PDF parsers with AI and machine learning to efficiently extract structured data from complex PDFs, making it a powerful tool for enhancing RAG systems. It handles various document types, including scanned and multi-column PDFs, with high accuracy.

Benefits for RAG Applications

- Accuracy: Nanonets provides precise data extraction, crucial for reliable RAG outputs.

- Automation: It automates PDF parsing, reducing manual errors and speeding up data processing.

- Versatility: Supports a wide range of PDF types, ensuring consistent performance across different documents.

- Easy Integration: Nanonets integrates smoothly with existing RAG frameworks via APIs.

Nanonets handles complex layouts, integrates OCR for scanned documents, and accurately extracts table data, ensuring that the parsed information is both reliable and ready for analysis.

Takeaways

In conclusion, selecting the most suitable PDF parser for your RAG system is vital to ensure accurate and reliable data extraction. Throughout this guide, we have reviewed various PDF parsers, highlighting their strengths and weaknesses, particularly in handling complex layouts such as multi-column formats and tables.

For effective RAG applications, it's essential to choose a parser that not only excels in text extraction accuracy but also preserves the original document's structure. This is crucial for maintaining the integrity of the extracted data, which directly affects the performance of the RAG system.

Ultimately, the best choice of PDF parser will depend on the specific needs of your RAG application. Whether you prioritize accuracy, layout preservation, or ease of integration, selecting a parser that aligns with your objectives will significantly improve the quality and reliability of your RAG outputs.