A meta robots tag is a bit of HTML code that tells search engine robots how one can crawl, index, and show a web page’s content material.

It goes within the <head> part of the web page and may seem like this:

<meta identify="robots" content material="noindex">

The meta robots tag within the instance above tells all search engine crawlers to not index the web page.

Let’s talk about what you need to use robots meta tags for, why they’re vital for search engine marketing, and how one can use them correctly.

Meta robots tags and robots.txt information have comparable capabilities however serve completely different functions.

A robots.txt file is a single textual content file that applies to all the website. And tells serps which pages to crawl.

A meta robotstag applies to solely the web page containing the tag. And tells serps how one can crawl, index, and show info from that web page solely.

Robots meta tags assist management how Google crawls and indexes a web page’s content material. Together with whether or not to:

- Embody a web page in search outcomes

- Comply with the hyperlinks on a web page

- Index the pictures on a web page

- Present cached outcomes of the web page on the search engine outcomes pages (SERPs)

- Present a snippet of the web page on the SERPs

Under, we’ll discover the attributes you need to use to inform serps how one can work together along with your pages.

However first, let’s talk about why robots meta tags are vital and the way they’ll have an effect on your website’s search engine marketing.

Robots meta tags assist Google and different serps crawl and index your pages effectively.

Particularly for big or regularly up to date websites.

In any case, you doubtless don’t want each web page in your website to rank.

For instance, you most likely don’t need serps to index:

- Pages out of your staging website

- Affirmation pages, corresponding to thanks pages

- Admin or login pages

- Inner search end result pages

- Pages with duplicate content material

Combining robots meta tags with different directives and information, corresponding to sitemaps and robots.txt, can subsequently be a helpful a part of your technical search engine marketing technique. As they may help forestall points that might in any other case maintain again your web site’s efficiency.

What Are the Title and Content material Specs for Meta Robots Tags?

Meta robots tags comprise two attributes: identify and content material. Each are required.

Title Attribute

This attribute signifies which crawler ought to observe the directions within the tag.

Like this:

identify="crawler"

If you wish to tackle all crawlers, insert “robots” because the “identify” attribute.

Like this:

identify="robots"

If you wish to prohibit crawling to particular serps, the identify attribute helps you to do this. And you’ll select as many (or as few) as you need.

Listed below are a number of widespread crawlers:

- Google: Googlebot (or Googlebot-news for information outcomes)

- Bing: Bingbot (see the record of all Bing crawlers)

- DuckDuckGo: DuckDuckBot

- Baidu: Baiduspider

- Yandex: YandexBot

Content material Attribute

The “content material” attribute comprises directions for the crawler.

It appears like this:

content material="instruction"

Google helps the next “content material” values:

Default Content material Values

And not using a robots meta tag, crawlers will index content material and observe hyperlinks by default (until the hyperlink itself has a “nofollow” tag).

This is similar as including the next “all” worth (though there isn’t a must specify it):

<meta identify="robots" content material="all"

So, for those who don’t need the web page to look in search outcomes or for serps to crawl its hyperlinks, it’s essential add a meta robots tag. With correct content material values.

Noindex

The meta robots “noindex” worth tells crawlers to not embody the web page within the search engine’s index or show it within the SERPs.

<meta identify="robots" content material="noindex">

With out the noindex worth, serps could index and serve the web page within the search outcomes.

Typical use instances for “noindex” are cart or checkout pages on an ecommerce web site.

Nofollow

This tells crawlers to not crawl the hyperlinks on the web page.

<meta identify="robots" content material="nofollow">

Google and different serps typically use hyperlinks on pages to find these linked pages. And hyperlinks may help move authority from one web page to a different.

Use the nofollow rule for those who don’t need the crawler to observe any hyperlinks on the web page or move any authority to them.

This is likely to be the case for those who don’t have management over the hyperlinks positioned in your web site. Akin to in an unmoderated discussion board with largely user-generated content material.

Noarchive

The “noarchive” content material worth tells Google to not serve a duplicate of your web page within the search outcomes.

<meta identify="robots" content material="noarchive">

If you happen to don’t specify this worth, Google could present a cached copy of your web page that searchers may even see within the SERPs.

You could possibly use this worth for time-sensitive content material, inner paperwork, PPC touchdown pages, or some other web page you don’t need Google to cache.

Noimageindex

This worth instructs Google to not index the pictures on the web page.

<meta identify="robots" content material="noimageindex">

Utilizing “noimageindex” might damage potential natural site visitors from picture outcomes. And if customers can nonetheless entry the web page, they’ll nonetheless have the ability to discover the pictures. Even with this tag in place.

Notranslate

“Notranslate” prevents Google from serving translations of the web page in search outcomes.

<meta identify="robots" content material="notranslate">

If you happen to don’t specify this worth, Google can present a translation of the title and snippet of a search end result for pages that aren’t in the identical language because the search question.

If the searcher clicks the translated hyperlink, all additional interplay is thru Google Translate. Which mechanically interprets any adopted hyperlinks.

Use this worth for those who favor to not have your web page translated by Google Translate.

For instance, you probably have a product web page with product names you don’t need translated. Or for those who discover Google’s translations aren’t at all times correct.

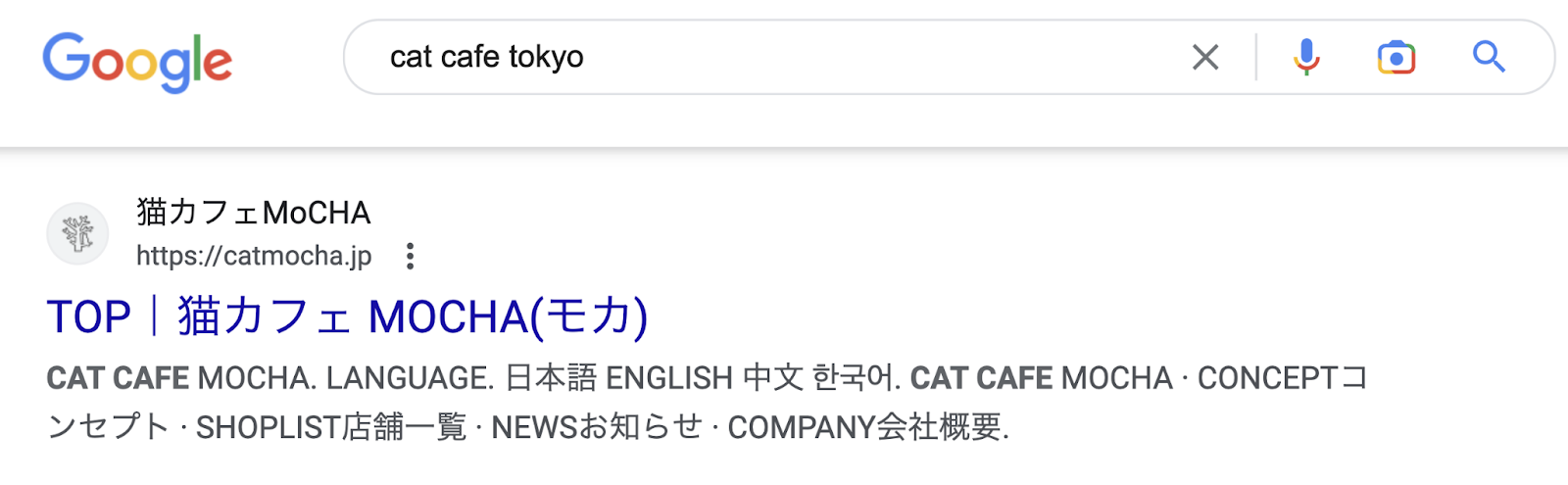

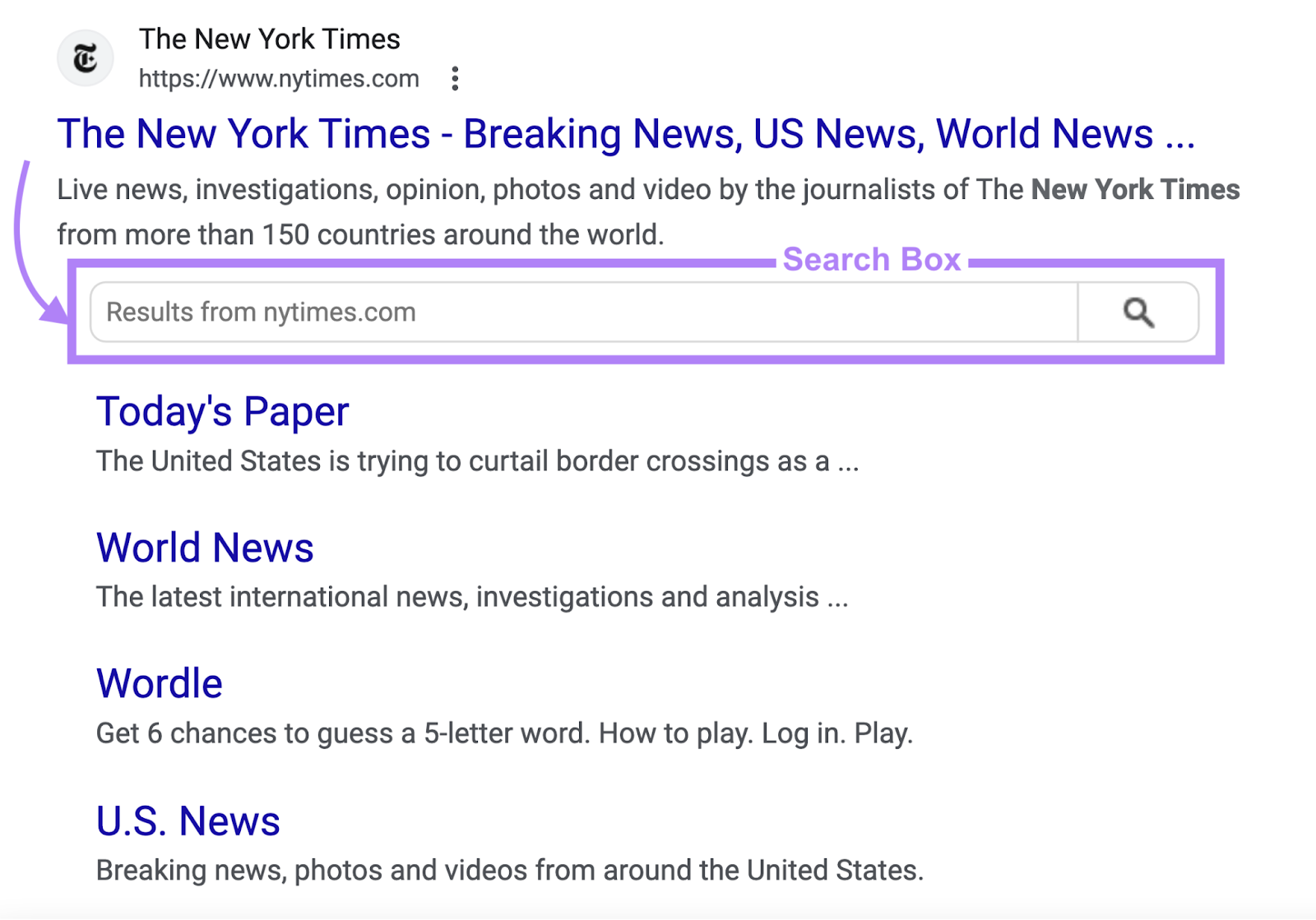

Nositelinkssearchbox

This worth tells Google to not generate a search field in your website in search outcomes.

<meta identify="robots" content material="nositelinkssearchbox">

If you happen to don’t use this worth, Google can present a search field in your website within the SERPs.

Like this:

Use this worth for those who don’t need the search field to look.

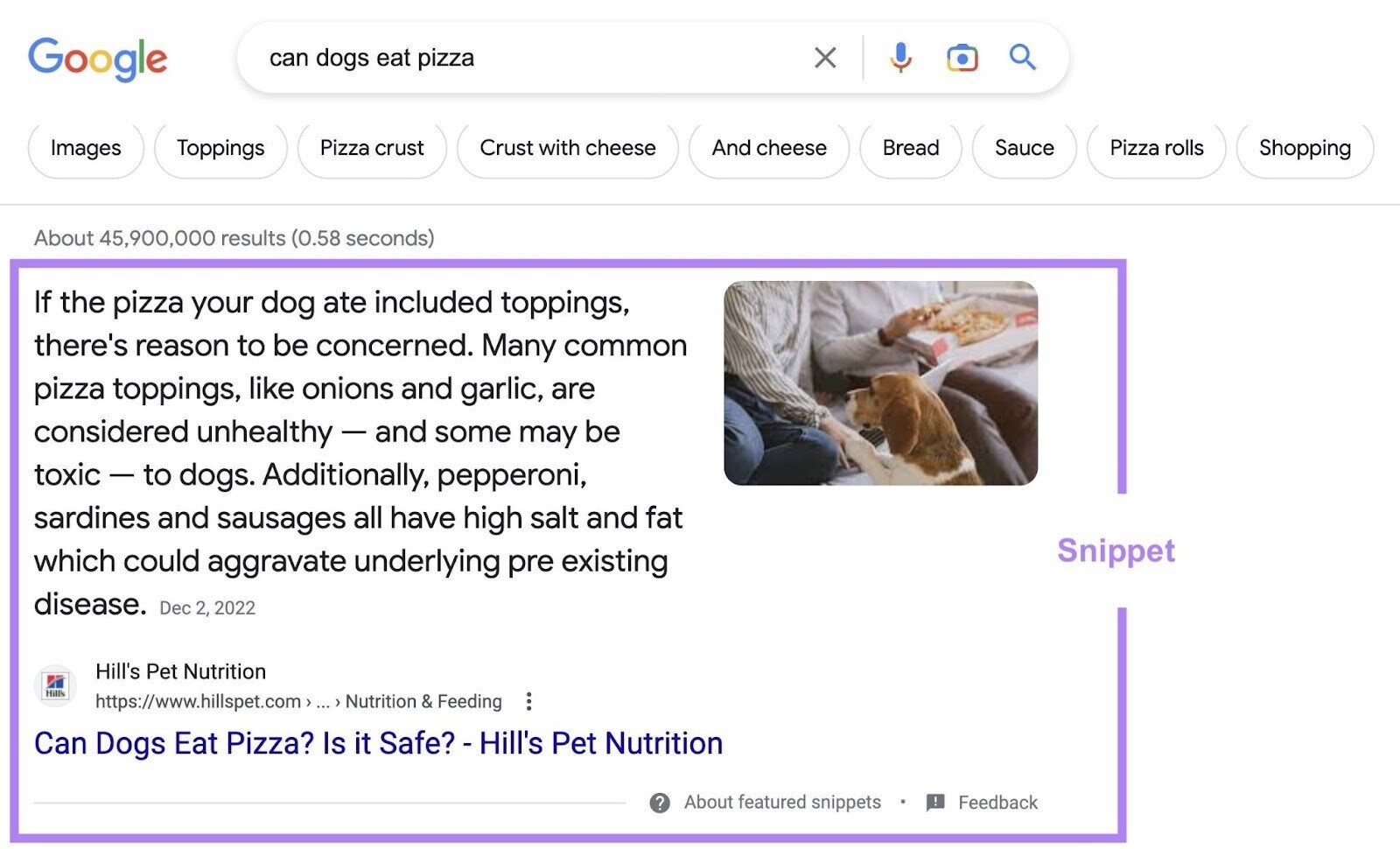

Nosnippet

“Nosnippet” stops Google from exhibiting a textual content snippet or video preview of the web page in search outcomes.

<meta identify="robots" content material="nosnippet">

With out this worth, Google can produce snippets of textual content or video primarily based on the web page’s content material.

The worth “nosnippet” additionally prevents Google from utilizing your content material as a “direct enter” for AI Overviews. But it surely’ll additionally forestall meta descriptions, wealthy snippets, and video previews. So use it with warning.

Whereas not a meta robots tag, you need to use the “data-nosnippet” attribute to forestall particular sections of your pages from exhibiting in search outcomes.

Like this:

<p>This textual content might be proven in a snippet

<span data-nosnippet>however this half would not be proven</span>.</p>

Max-snippet

“Max-snippet” tells Google the utmost character size it will possibly present as a textual content snippet for the web page in search outcomes.

This attribute has two vital instances to concentrate on:

- 0: Opts your web page out of textual content snippets (as with “nosnippet”)

- -1: Signifies there’s no restrict

For instance, to forestall Google from displaying a textual content snippet within the SERPs, you might use:

<meta identify="robots" content material="max-snippet:0">

Or, if you wish to permit as much as 100 characters:

<meta identify="robots" content material="max-snippet:100">

To point there’s no character restrict:

<meta identify="robots" content material="max-snippet:-1">

Max-image-preview

This tells Google the utmost measurement of a preview picture for the web page within the SERPs.

There are three values for this directive:

- None: Google gained’t present a preview picture

- Normal: Google could present a default preview

- Massive: Google could present a bigger preview picture

<meta identify="robots" content material="max-image-preview:giant">

Max-video-preview

This worth tells Google the utmost size you need it to make use of for a video snippet within the SERPs (in seconds).

As with “max-snippet,” there are two vital values for this directive:

- 0: Opts your web page out of video snippets

- -1: Signifies there’s no restrict

For instance, the tag under permits Google to serve a video preview of as much as 10 seconds:

<meta identify="robots" content material="max-video-preview:10">

Use this rule if you wish to restrict your snippet to point out sure elements of your movies. If you happen to don’t, Google could present a video snippet of any size.

Indexifembedded

When used together with noindex, this (pretty new) tag lets Google index the web page’s content material if it’s embedded in one other web page by means of HTML parts corresponding to iframes.

(It wouldn’t have an impact with out the noindex tag.)

<meta identify="robots" content material="noindex, indexifembedded">

“Indexifembedded” has been created with media publishers in thoughts:

They typically have media pages that shouldn’t be listed. However they do need the media listed when it’s embedded in one other web page’s content material.

Beforehand, they’d have used “noindex” on the media web page. Which might forestall it from being listed on the embedding pages too. “Indexifembedded” solves this.

Unavailable_after

The “unavailable_after” worth prevents Google from exhibiting a web page within the SERPs after a particular date and time.

<meta identify="robots" content material="unavailable_after: 2024-10-21">

You could specify the date and time utilizing RFC 822, RFC 850, or ISO 8601 codecs. Google ignores this rule for those who don’t specify a date/time. By default, there isn’t a expiration date for content material.

You should utilize this worth for limited-time occasion pages, time-sensitive pages, or pages you now not deem vital. This capabilities like a timed noindex tag, so use it with warning. Or you might find yourself with indexing points later down the road.

Combining Robots Meta Tag Guidelines

There are two methods in which you’ll mix robots meta tag guidelines:

- Writing a number of comma-separated values into the “content material” attribute

- Offering two or extra robots meta parts

A number of Values Contained in the ‘Content material’ Attribute

You may combine and match the “content material” values we’ve simply outlined. Simply be sure to separate them by comma. As soon as once more, the values are usually not case-sensitive.

For instance:

<meta identify="robots" content material="noindex, nofollow">

This tells serps to not index the web page or crawl any of the hyperlinks on the web page.

You may mix noindex and nofollow utilizing the “none” worth:

<meta identify="robots" content material="none">

However some serps, like Bing, don’t help this worth.

Two or Extra Robots Meta Parts

Use separate robots meta parts if you wish to instruct completely different crawlers to behave in another way.

For instance:

<meta identify="robots" content material="nofollow"><meta identify="YandexBot" content material="noindex">

This mix instructs all crawlers to keep away from crawling hyperlinks on the web page. But it surely additionally tells Yandex particularly to not index the web page (along with not crawling the hyperlinks).

The desk under reveals the supported meta robots values for various serps:

|

Worth |

|

Bing |

Yandex |

|

noindex |

Y |

Y |

Y |

|

noimageindex |

Y |

N |

N |

|

nofollow |

Y |

N |

Y |

|

noarchive |

Y |

Y |

Y |

|

nocache |

N |

Y |

N |

|

nosnippet |

Y |

Y |

N |

|

nositelinkssearchbox |

Y |

N |

N |

|

notranslate |

Y |

N |

N |

|

max-snippet |

Y |

Y |

N |

|

max-video-preview |

Y |

Y |

N |

|

max-image-preview |

Y |

Y |

N |

|

indexifembedded |

Y |

N |

N |

|

unavailable_after |

Y |

N |

N |

Including Robots Meta Tags to Your HTML Code

If you happen to can edit your web page’s HTML code, add your robots meta tags into the <head> part of the web page.

For instance, in order for you serps to keep away from indexing the web page and to keep away from crawling hyperlinks, use:

<meta identify="robots" content material="noindex, nofollow">

Implementing Robots Meta Tags in WordPress

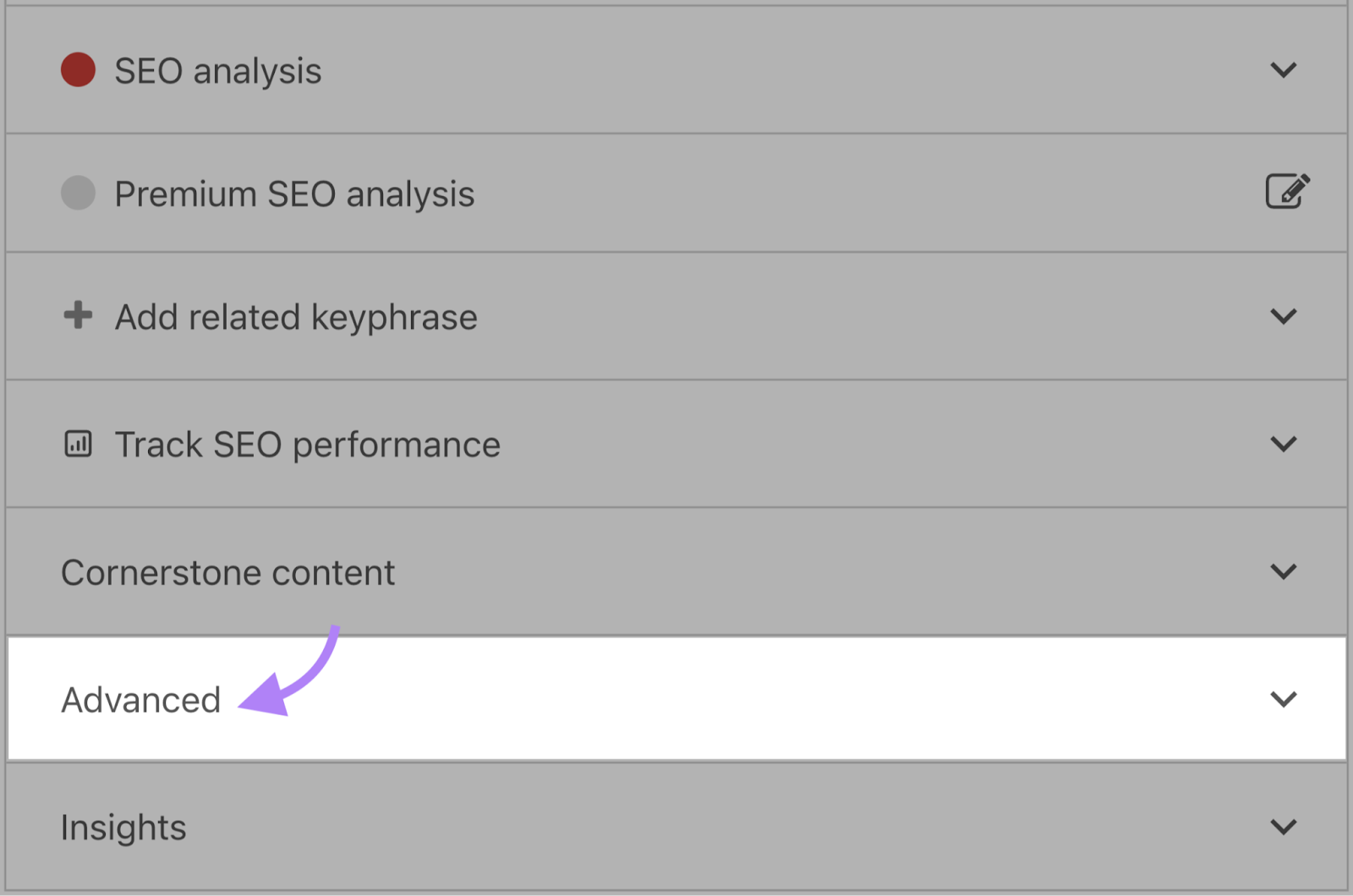

If you happen to’re utilizing a WordPress plugin like Yoast search engine marketing, open the “Superior” tab within the block under the web page editor.

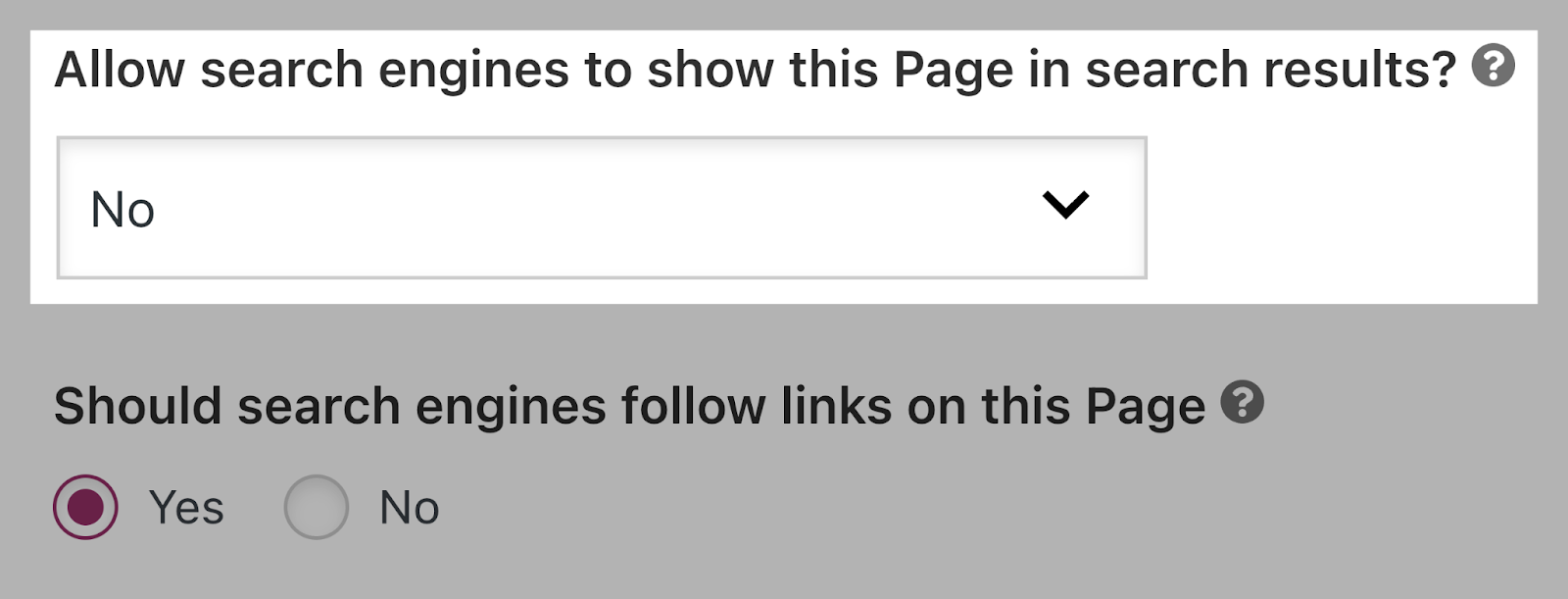

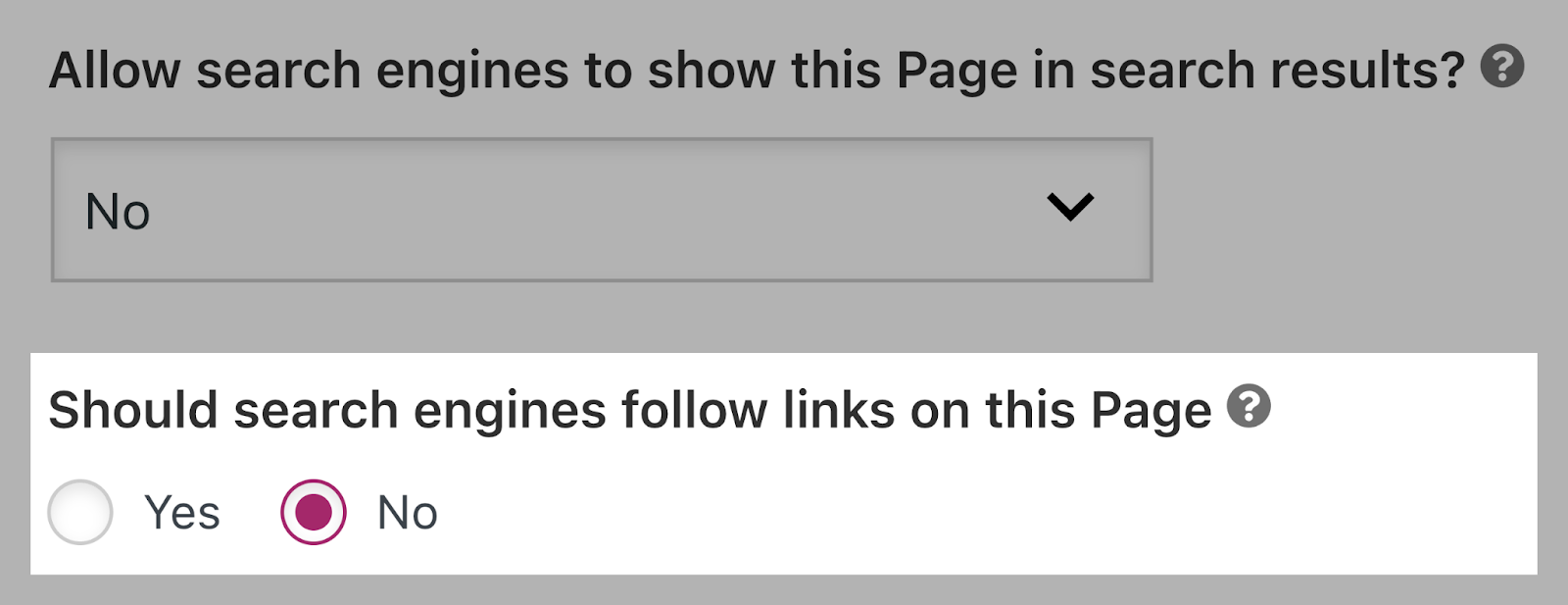

Set the “noindex” directive by switching the “Enable serps to point out this web page in search outcomes?” drop-down to “No.”

Or forestall serps from following hyperlinks by switching the “Ought to serps observe hyperlinks on this web page?” to “No.”

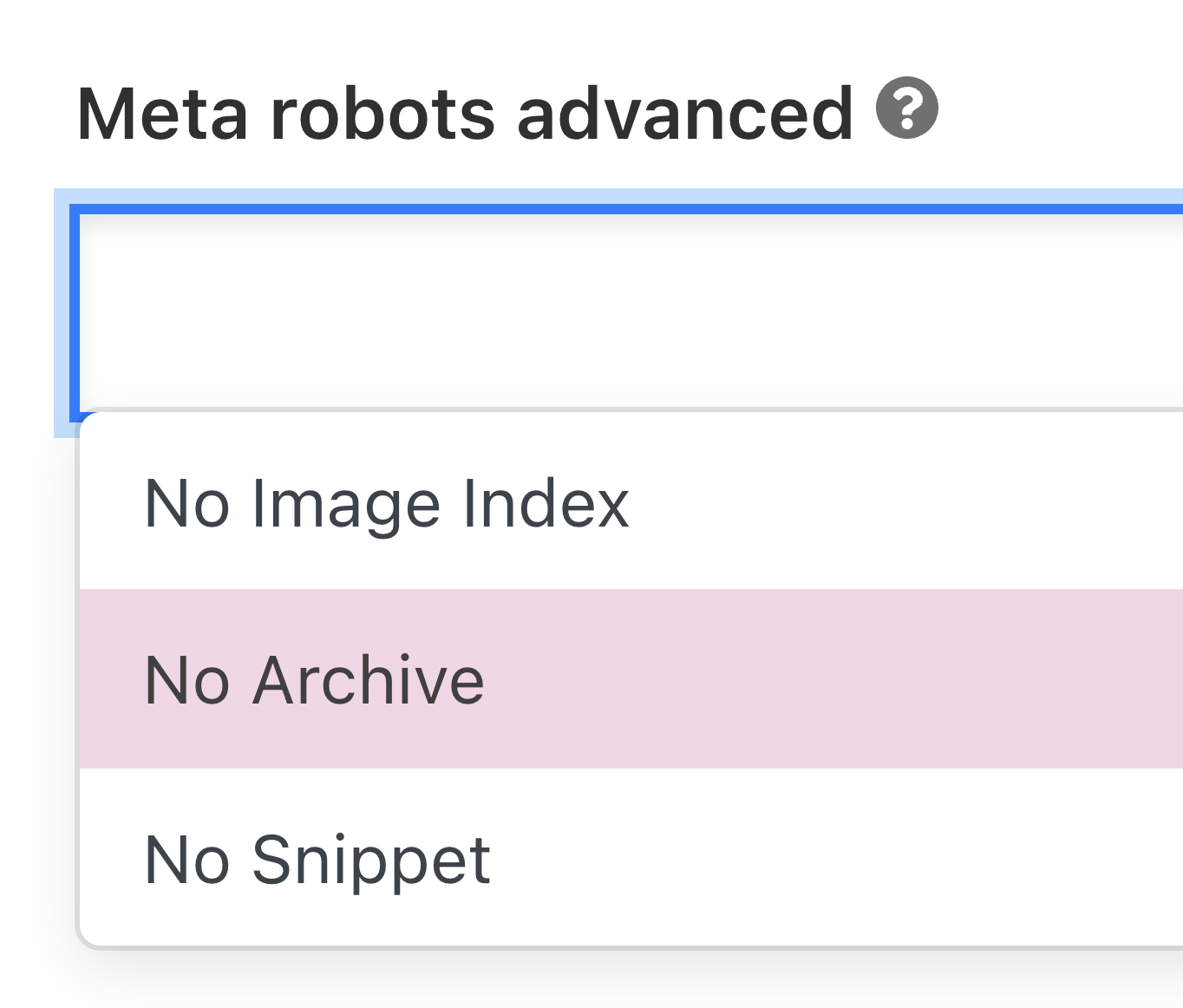

For different directives, you need to implement them within the “Meta robots superior” area.

Like this:

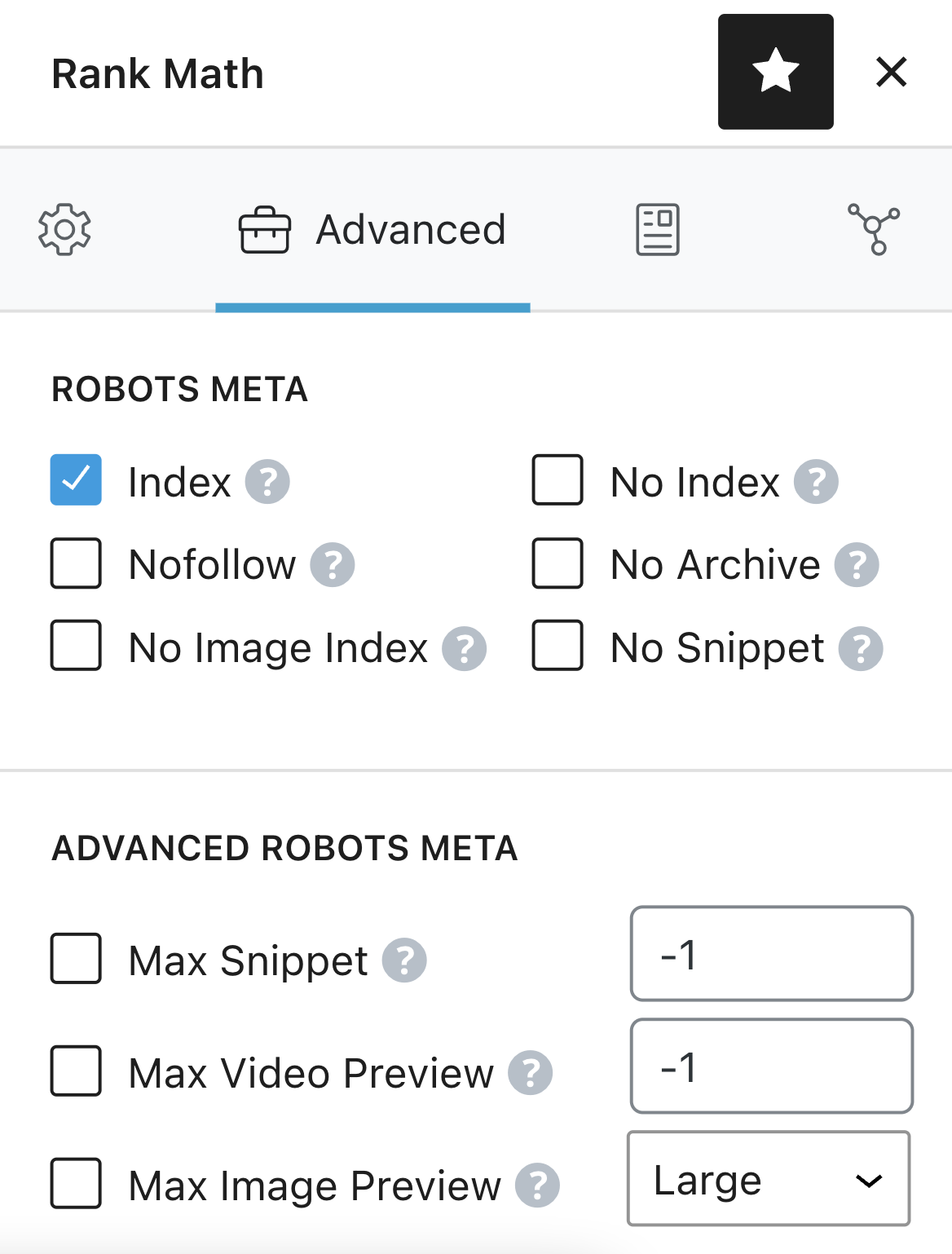

If you happen to’re utilizing Rank Math, choose the robots directives straight from the “Superior” tab of the meta field.

Like so:

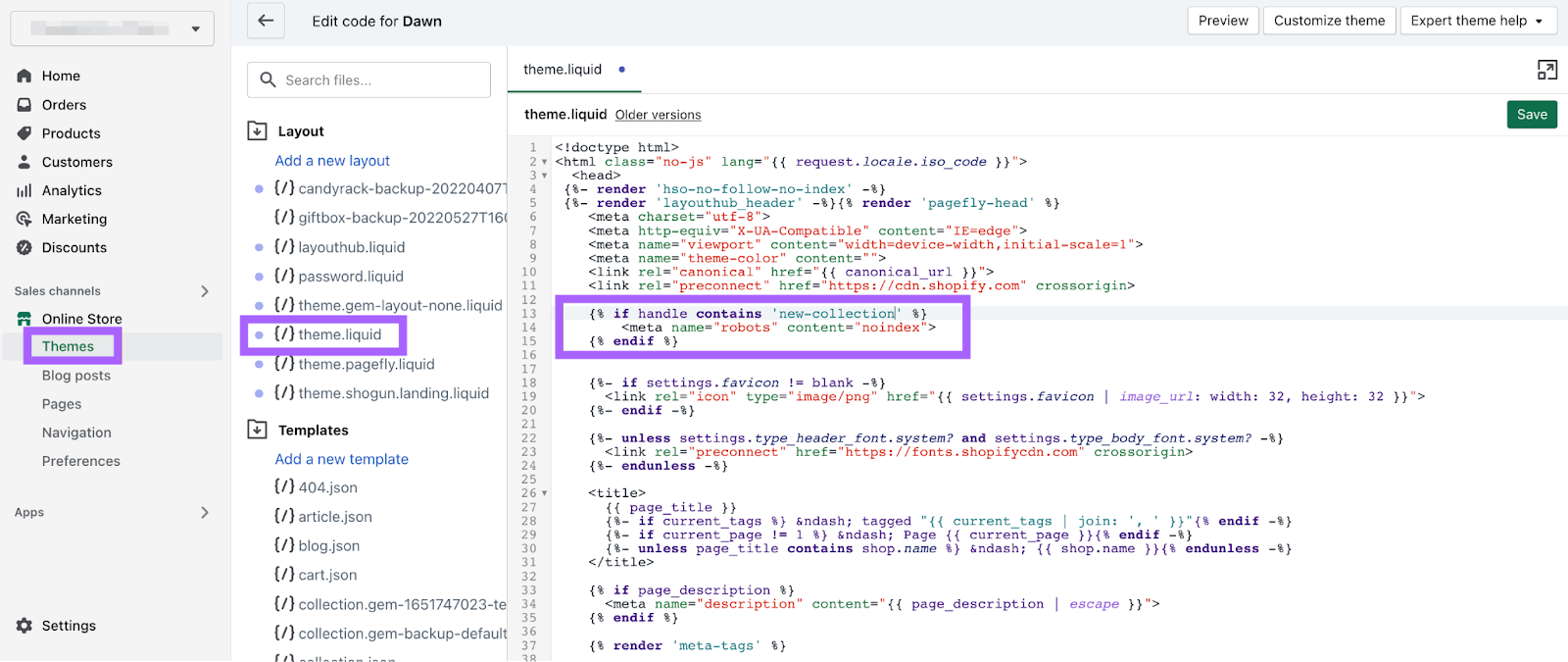

Including Robots Meta Tags in Shopify

To implement robots meta tags in Shopify, edit the <head> part of your theme.liquid format file.

To set the directives for a particular web page, add the code under to the file:

{% if deal with comprises 'page-name' %}

<meta identify="robots" content material="noindex">

{% endif %}

This instance instructs serps to not index /page-name/ (however to nonetheless observe all of the hyperlinks on the web page).

You could create separate entries to set the directives throughout completely different pages.

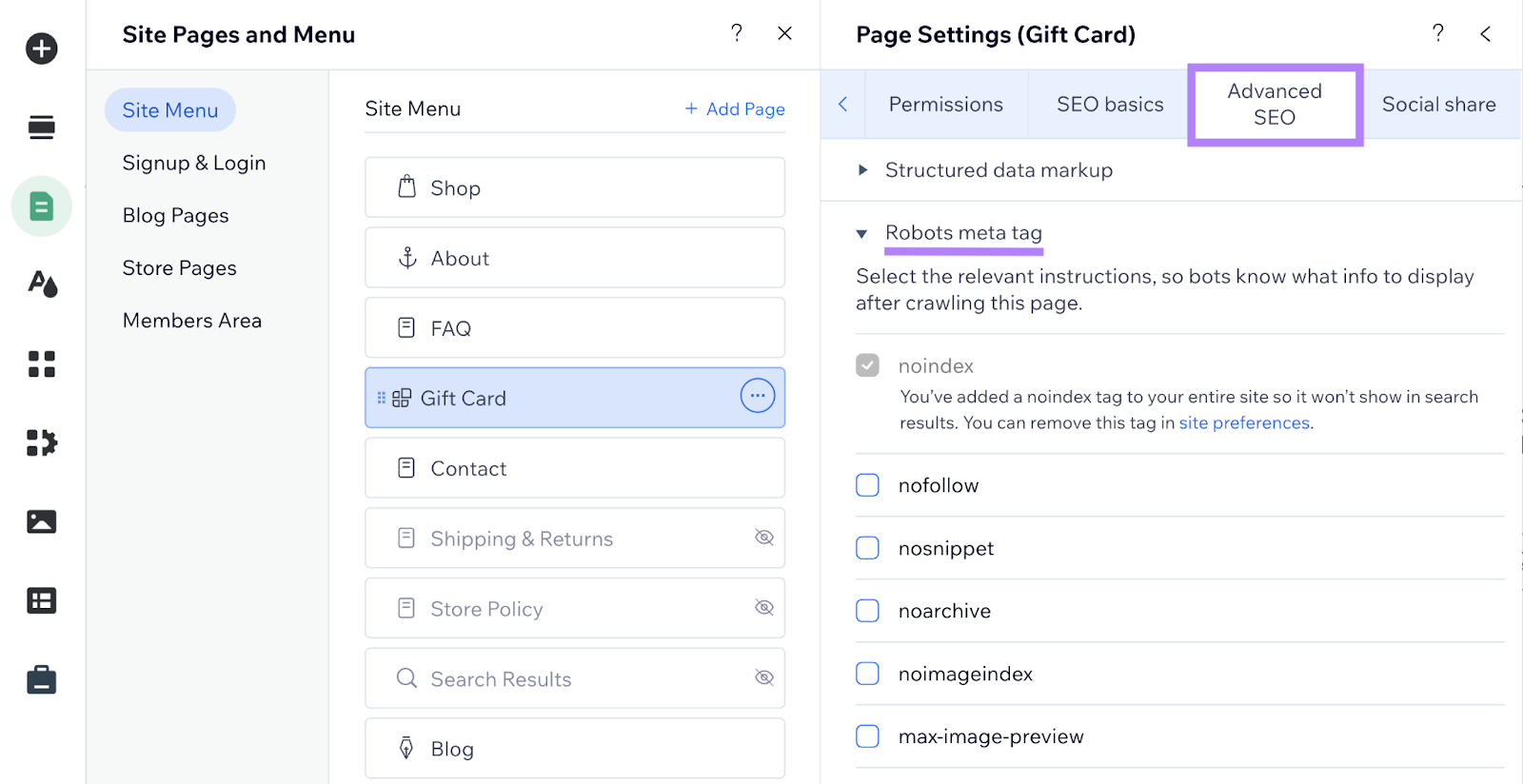

Implementing Robots Meta Tags in Wix

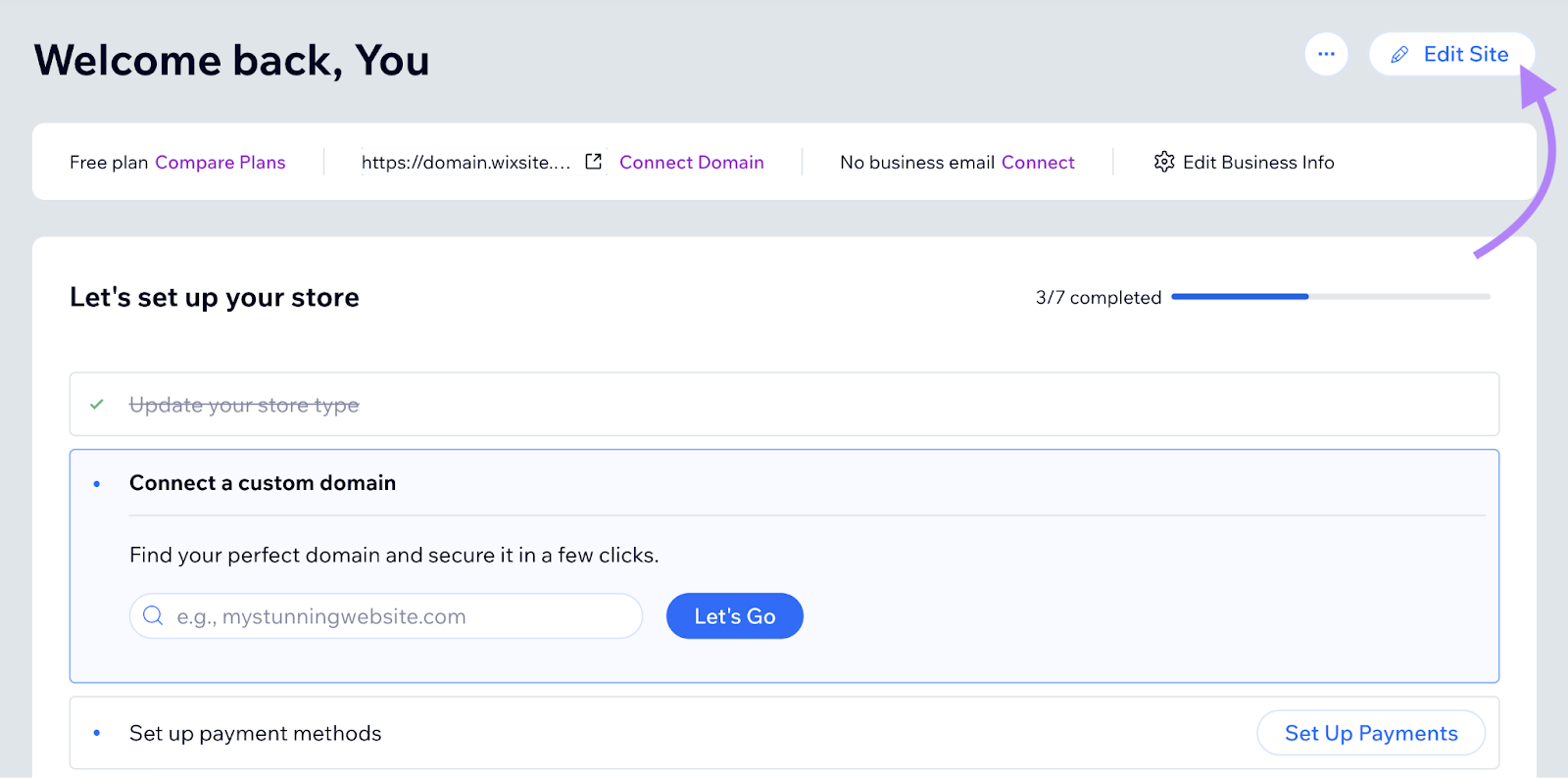

Open your Wix dashboard and click on “Edit Website.”

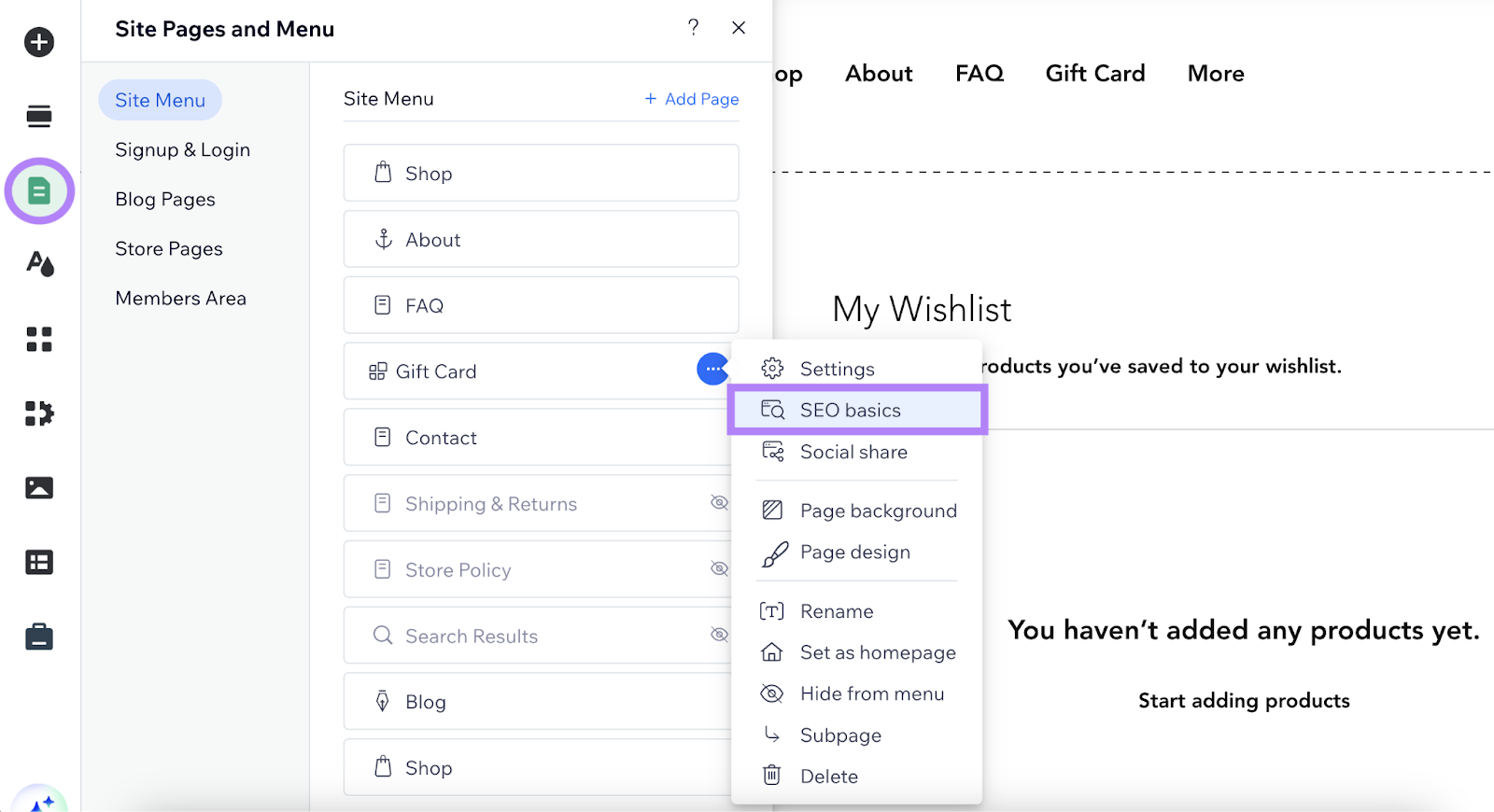

Click on “Pages & Menu” within the left-hand navigation.

Within the tab that opens, click on “…” subsequent to the web page you wish to set robots meta tags for. Select “search engine marketing fundamentals.”

Then click on “Superior search engine marketing” and click on on the collapsed merchandise “Robots meta tag.”

Now you possibly can set the related robots meta tags in your web page by clicking the checkboxes.

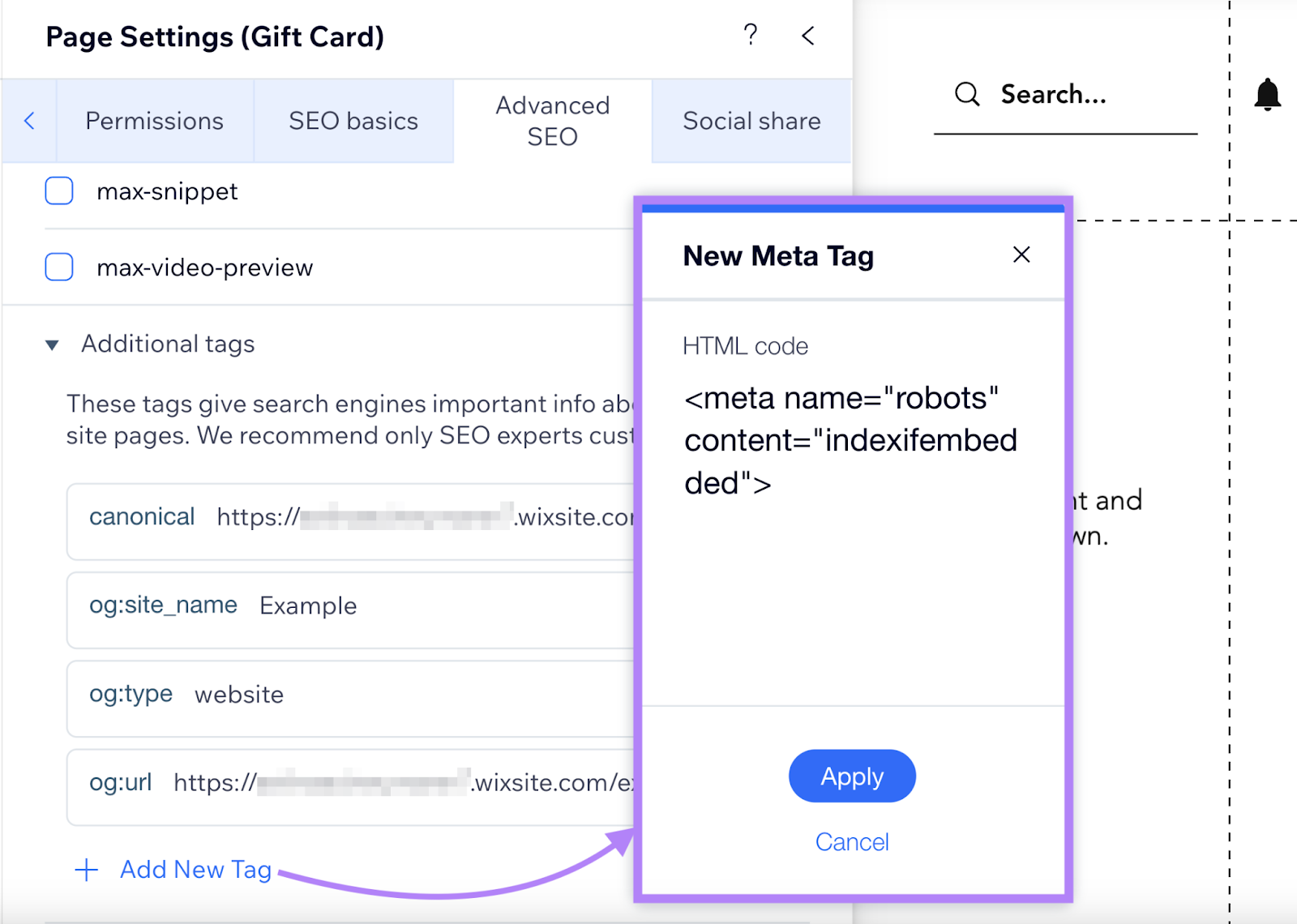

If you happen to want “notranslate,” “nositelinkssearchbox,” “indexifembedded,” or “unavailable_after,” click on “Extra tags”and “Add New Tags.”

Now you possibly can paste your meta tag in HTML format.

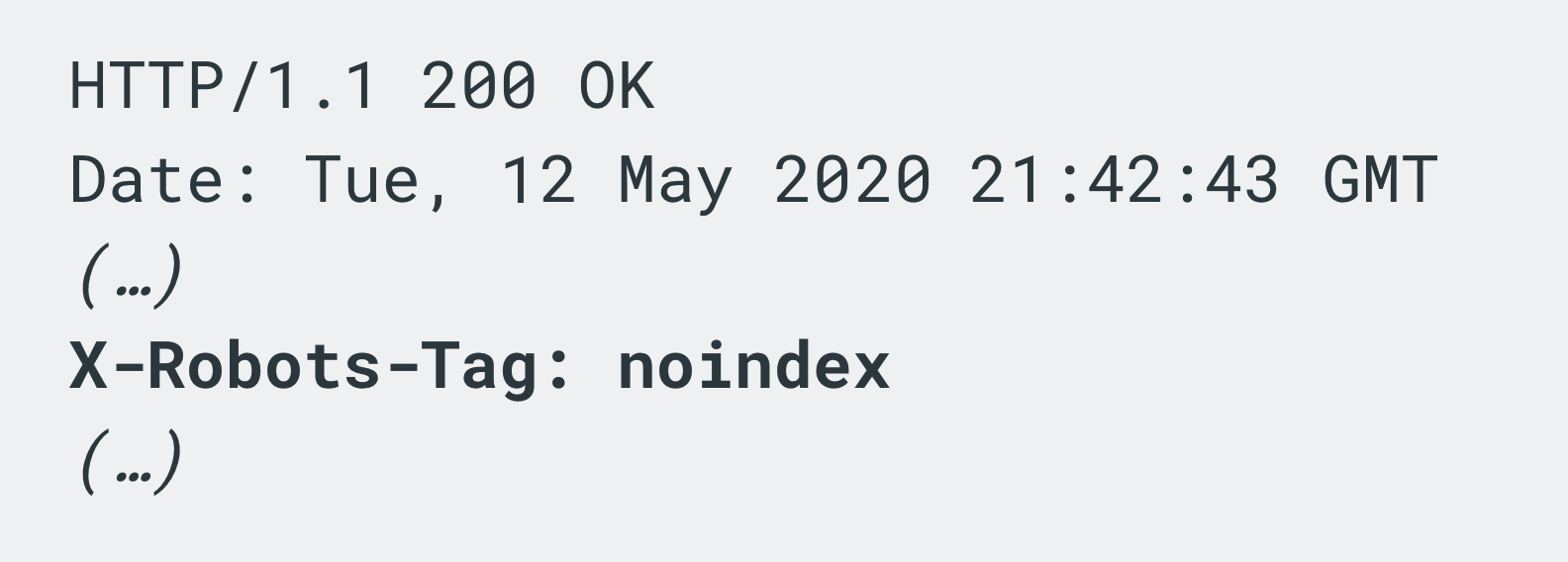

What Is the X-Robots-Tag?

An x-robots-tag serves the identical operate as a meta robots tag however for non-HTML information. Akin to photographs and PDFs.

You embody it as a part of the HTTP header response for a URL.

Like this:

To implement the x-robots-tag, you will must entry your web site’s header.php, .htaccess, or server configuration file. You should utilize the identical guidelines as these we mentioned earlier for meta robots tags.

Utilizing X-Robots-Tag on an Apache Server

To make use of the x-robots-tag on an Apache internet server, add the next to your website’s .htaccess file or httpd.conf file.

<Information ~ ".pdf$">

Header set X-Robots-Tag "noindex, nofollow"

</Information>

For instance, the code above instructs serps to not index or to observe any hyperlinks on all PDFs throughout all the website.

Utilizing X-Robots-Tag on an Nginx Server

If you happen to’re working an Nginx server, add the code under to your website’s .conf file:

location ~* .pdf$ {

add_header X-Robots-Tag "noindex, nofollow";

}

The instance code above will apply noindex and nofollow values to the entire website’s PDFs.

Let’s check out some widespread errors to keep away from when utilizing meta robots and x-robots-tags:

Utilizing Meta Robots Directives on a Web page Blocked by Robots.txt

If you happen to disallow crawling of a web page in your robots.txt file, main search engine bots gained’t crawl it. So any meta robots tags or x-robots-tags on that web page will likely be ignored.

Guarantee serps can crawl any pages with meta robots tags or x-robots-tags.

Including Robots Directives to the Robots.txt File

Though by no means formally supported by Google, you had been as soon as ready so as to add a “noindex” directive to your website’s robots.txt file.

That is now not an choice, as confirmed by Google.

The “noindex” rule in robots meta tags is the best solution to take away URLs from the index whenever you do permit crawling.

Eradicating Pages with a Noindex Directive from Sitemaps

If you happen to’re making an attempt to take away a web page from the index utilizing a “noindex” directive, go away the web page in your sitemap till it has been eliminated.

Eradicating the web page earlier than it’s deindexed may cause delays in deindexing.

Not Eradicating the ‘Noindex’ Directive from a Staging Setting

Stopping robots from crawling pages in your staging website is a finest apply. But it surely’s straightforward to neglect to take away “noindex” as soon as the location strikes into manufacturing.

And the outcomes will be disastrous. As serps could by no means crawl and index your website.

To keep away from these points, examine that your robots meta tags are appropriate earlier than transferring your website from a staging platform to a dwell surroundings.

Discovering and fixing crawlability points (and different technical search engine marketing errors) in your website can dramatically enhance efficiency.

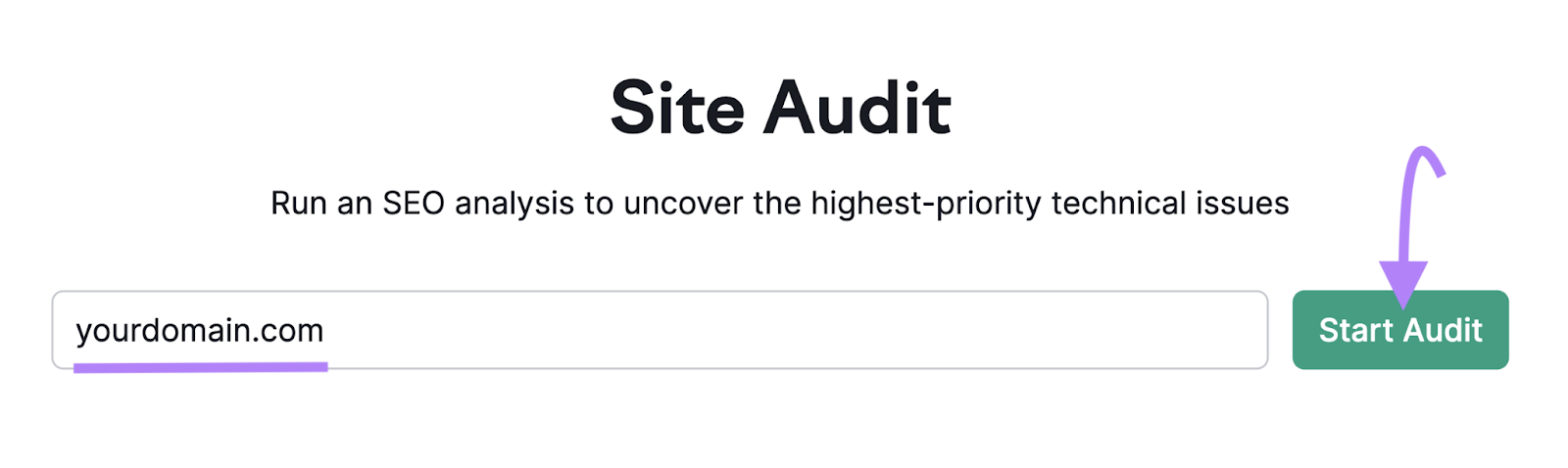

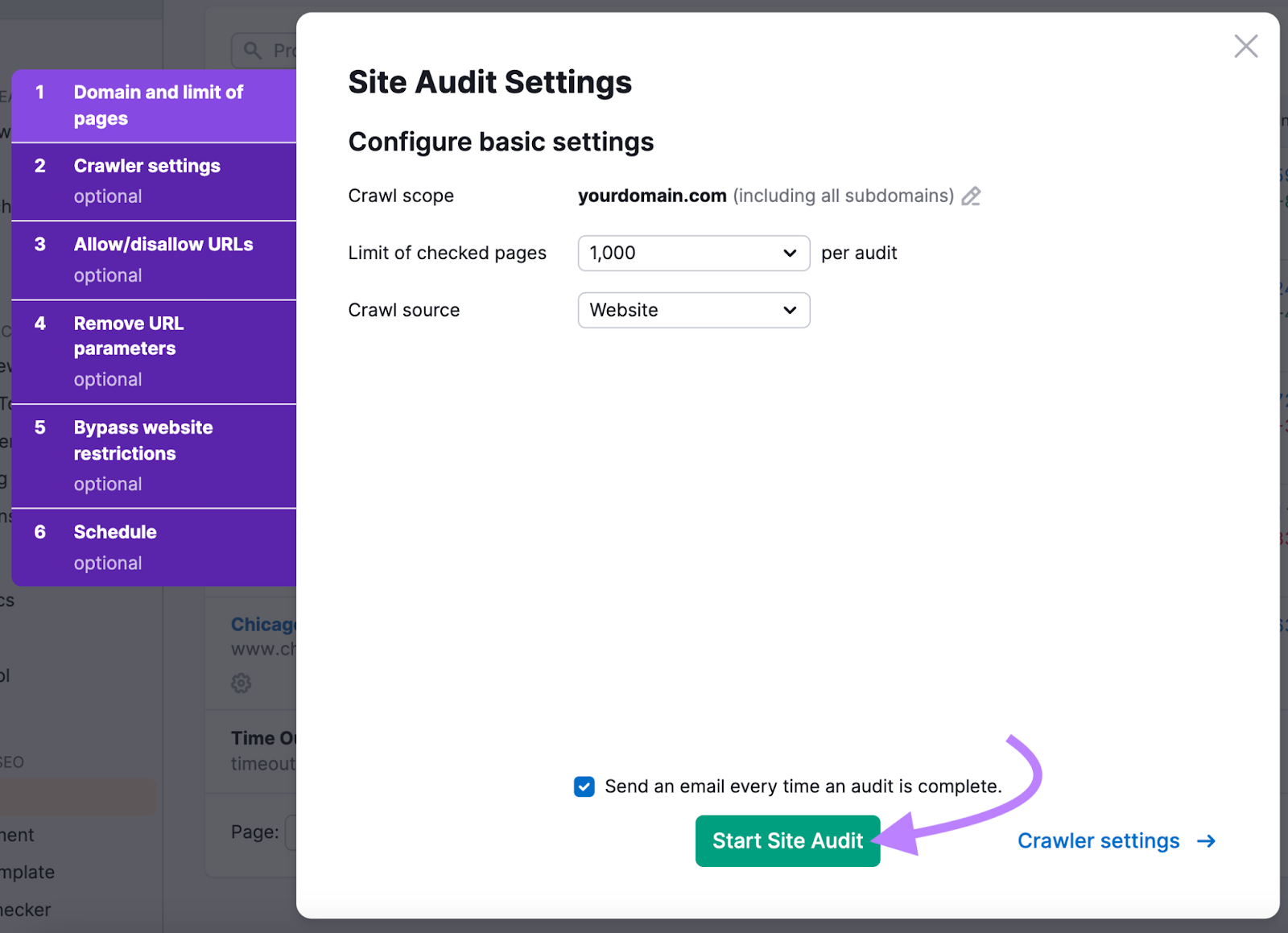

If you happen to don’t know the place to begin, use Semrush’s Website Audit instrument.

Simply enter your area and click on “Begin Audit.”

You may configure numerous settings, just like the variety of pages to crawl and which crawler you’d like to make use of. However you too can simply go away them as their defaults.

If you’re prepared, click on “Begin Website Audit.”

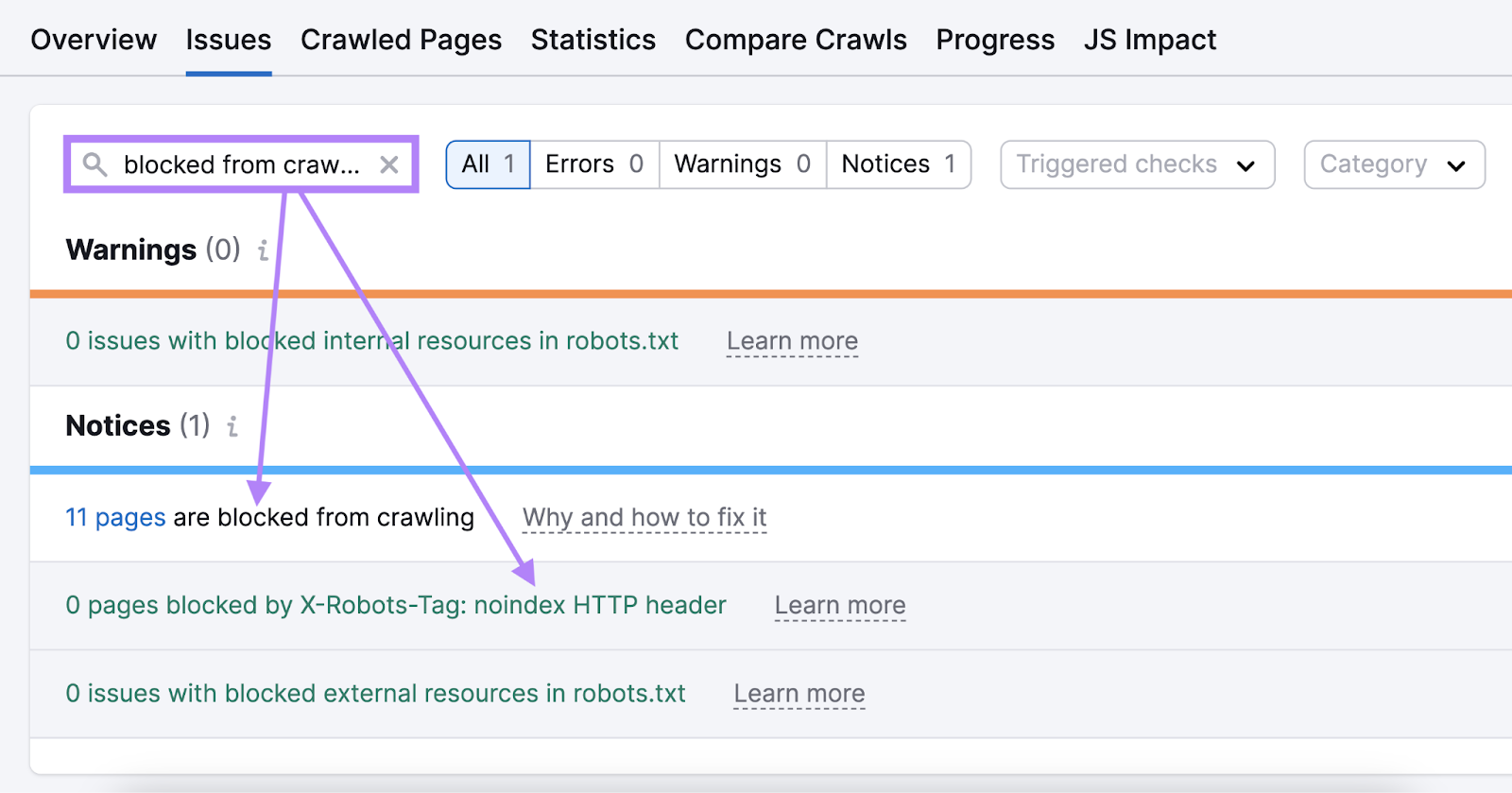

When the audit is full, head to the “Points” tab.

Within the search field, sort “blocked from crawling” to see errors relating to your meta robots tags or x-robots-tags.

Like this:

Click on on “Why and how one can repair it” subsequent to a problem to learn extra in regards to the difficulty and how one can repair it.

Repair every of those points to enhance your website’s crawlability. And to make it simpler for Google to search out and index your content material.

FAQs

When Ought to You Use the Robots Meta Tag vs. X-Robots-Tag?

Use the robots meta tag for HTML pages and the x-robots-tag for different non-HTML sources. Like PDFs and pictures.

This isn’t a technical requirement. You could possibly inform crawlers what to do along with your webpages by way of x-robots-tags. But it surely’s simpler to attain the identical factor by implementing the robots meta tags on a webpage.

You may also use x-robots-tags to use directives in bulk. Relatively than merely on a web page stage.

Do You Have to Use Each Meta Robots Tag and X-Robots-Tag?

You don’t want to make use of each meta robots tags and x-robots-tags. Telling crawlers how one can index your web page utilizing both a meta robots or x-robots-tag is sufficient.

Repeating the instruction gained’t improve the possibilities that Googlebot or some other crawlers will observe it.

What Is the Best Strategy to Implement Robots Meta Tags?

Utilizing a plugin is normally the simplest approach so as to add robots meta tags to your webpages. As a result of it doesn’t normally require you to edit any of your website’s code.

Which plugin you need to use is dependent upon the content material administration system (CMS) you’re utilizing.

Robots meta tags ensure that the content material you’re placing a lot effort into will get listed. If serps don’t index your content material, you possibly can’t generate any natural site visitors.

So, getting the fundamental robots meta tag parameters proper (like noindex and nofollow) is totally essential.

Test that you just’re implementing these tags accurately utilizing Semrush Website Audit.

This put up was up to date in 2024. Excerpts from the unique article by Carlos Silva could stay.