On April 2, the World Health Organization launched a chatbot named SARAH to raise health awareness about things like how to eat well, quit smoking, and more.

But like any other chatbot, SARAH started giving incorrect answers. That led to a lot of internet trolling and, finally, the usual disclaimer: the answers from the chatbot might not be accurate. This tendency to make things up, known as hallucination, is one of the biggest obstacles chatbots face. Why does this happen? And why can't we fix it?

Let's explore why large language models hallucinate by looking at how they work. First, making stuff up is exactly what LLMs are designed to do. The chatbot draws its responses from the large language model without looking up information in a database or using a search engine.

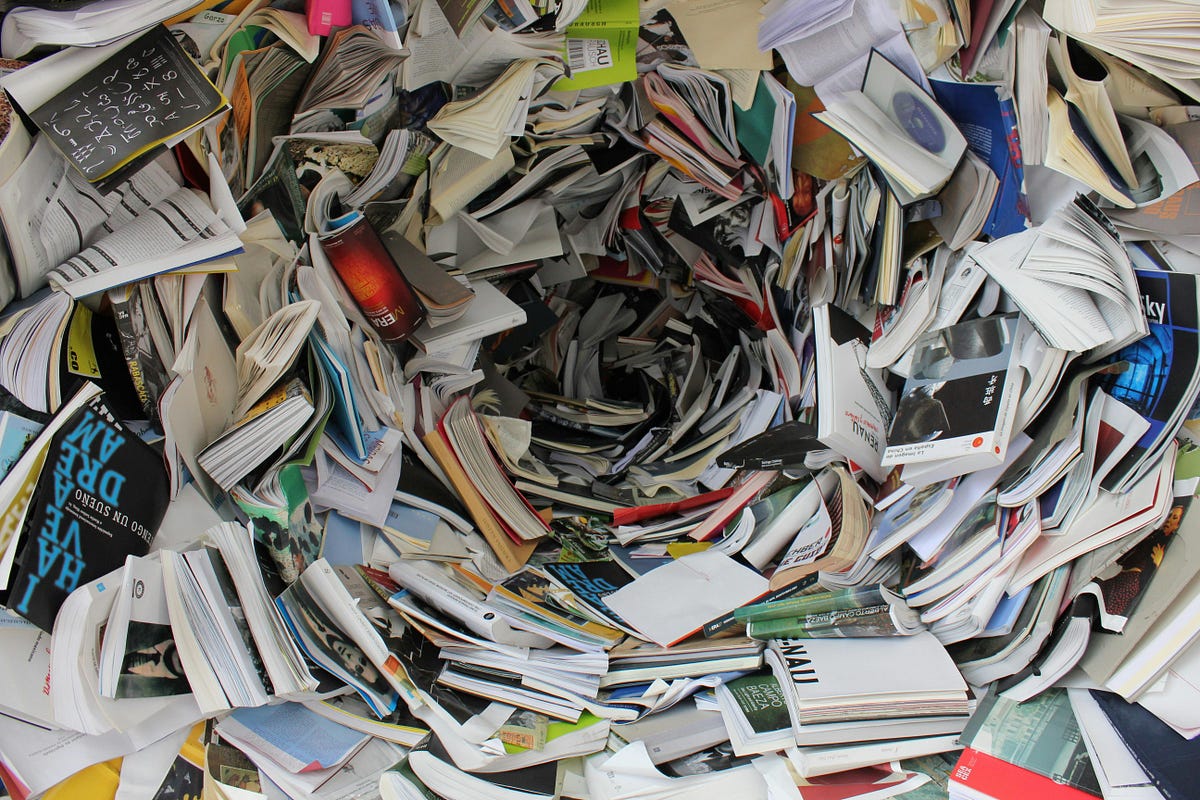

A large language model contains billions and billions of numbers. It uses these numbers to calculate its responses from scratch, producing new sequences of words on the fly. A large language model is more like a vector than an encyclopedia.

Large language models generate text by predicting the next word in the sequence. Then the new sequence is fed back into the model, which guesses the next word. This cycle goes on and on, producing almost any kind of text possible. LLMs just love dreaming.
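The predict-and-feed-back loop described above can be sketched in a few lines of Python. This is only a toy under stated assumptions: the `NEXT_WORD_PROBS` table and the `generate` function are made up for illustration, and the toy looks only at the last word, whereas a real LLM considers the whole sequence and computes its probabilities instead of reading them from a table.

```python
import random

# Hypothetical toy "model": for each word, the words that may follow it
# and how likely each one is. A real LLM calculates these from billions
# of learned numbers and from the whole sequence, not just the last word.
NEXT_WORD_PROBS = {
    "the": {"cat": 0.6, "dog": 0.4},
    "cat": {"sat": 0.7, "ran": 0.3},
    "dog": {"sat": 0.2, "ran": 0.8},
    "sat": {"down": 1.0},
    "ran": {"away": 1.0},
}


def generate(start_word, max_words=5, seed=None):
    """Repeatedly pick a next word and feed the longer sequence back in."""
    rng = random.Random(seed)
    words = [start_word]
    while len(words) < max_words:
        options = NEXT_WORD_PROBS.get(words[-1])
        if options is None:  # no known continuation, so stop
            break
        candidates = list(options)
        weights = [options[word] for word in candidates]
        # Sample the next word in proportion to its probability.
        words.append(rng.choices(candidates, weights=weights)[0])
    return " ".join(words)


print(generate("the", seed=0))
```

Run it with different seeds and you get different sentences. Nothing is looked up anywhere: every sentence is made up on the spot from the probabilities.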

The model captures the statistical likelihood of a word appearing alongside certain other words. These likelihoods are set when a model is trained: the values in the model are adjusted over and over until they match the linguistic patterns of the training data. Once trained, the model calculates a score for every word in its vocabulary, which reflects how likely that word is to come next.
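To make the "score for every word" idea concrete, here is a minimal sketch of how raw scores can be turned into probabilities. It assumes the softmax function, which is the usual way to do this but is not named in this article, and the four-word vocabulary and its scores are invented for the example.

```python
import math


def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    highest = max(scores.values())
    # Subtracting the highest score keeps exp() from overflowing.
    exps = {word: math.exp(s - highest) for word, s in scores.items()}
    total = sum(exps.values())
    return {word: e / total for word, e in exps.items()}


# Made-up scores for what might follow "The sky is".
scores = {"blue": 4.0, "clear": 2.5, "falling": 1.0, "banana": -2.0}
probs = softmax(scores)

# Print the words from most likely to least likely.
for word, p in sorted(probs.items(), key=lambda item: -item[1]):
    print(f"{word:>8}: {p:.3f}")
```

Notice that even "banana" ends up with a probability above zero. Unlikely words are rare, not impossible.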

So basically, all these hyped-up large language models do is hallucinate. But we only notice when they're wrong. And the problem is that you often won't notice, because these models are so good at what they do. That makes trusting them hard.

Can we control what these large language models generate? Even though these models are too complicated to be tinkered with directly, some believe that training them on even more data will reduce the error rate.

You can also improve performance by having the model break its responses down step by step. This method, known as chain-of-thought prompting, can make the model's outputs more reliable and keep them from going off track.
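Here is a rough idea of what that looks like in practice. The sketch below only builds the text of two prompts and does not call any model. The `build_prompts` helper is hypothetical, and "Let's think step by step." is just one commonly used phrasing, not the only way to ask for step-by-step reasoning.

```python
def build_prompts(question):
    """Return a direct prompt and a step-by-step version of the same question."""
    direct = f"Q: {question}\nA:"
    # The added sentence nudges the model to write out its reasoning
    # before it commits to a final answer.
    step_by_step = f"Q: {question}\nA: Let's think step by step."
    return direct, step_by_step


direct, step_by_step = build_prompts(
    "A pack has 12 pencils. How many pencils are in 3 packs?"
)
print(direct)
print()
print(step_by_step)
```

The question is the same in both prompts. The only difference is the nudge at the end of the second one.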

But this doesn't guarantee 100 percent accuracy. As long as the models are probabilistic, there's a chance that they will produce the wrong output. It's like rolling a die: even if you tamper with it to favor one result, there's a small chance it will produce something else.
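The die analogy is easy to try out. The sketch below simulates a loaded die; the 95 percent weight on six and the `roll_loaded_die` name are assumptions picked for illustration, not figures from any real chatbot.

```python
import random
from collections import Counter


def roll_loaded_die(rolls, seed=0):
    """Roll a die that is heavily weighted toward six and count the faces."""
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    faces = [1, 2, 3, 4, 5, 6]
    weights = [1, 1, 1, 1, 1, 95]  # six should come up about 95% of the time
    return Counter(rng.choices(faces, weights=weights, k=rolls))


counts = roll_loaded_die(10_000)
others = sum(counts.values()) - counts[6]
print(f"sixes: {counts[6]}, anything else: {others}")
```

Six wins almost every time, but over thousands of rolls the other faces still show up. A chatbot answering millions of questions is in the same position.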

Another thing is that people trust these models and let their guard down, so these errors go unnoticed. Perhaps the best fix for hallucinations is to manage the expectations we have of these chatbots and cross-check the facts.