Introduction

In today’s fast-paced world, customer service is a crucial aspect of any business. A Zendesk Answer Bot, powered by Large Language Models (LLMs) like GPT-4, can significantly improve the efficiency and quality of customer support by automating responses. This blog post will guide you through building and deploying your own Zendesk Auto Responder using LLMs, implementing RAG-based workflows in GenAI to streamline the process.

What are RAG-based workflows in GenAI

RAG (Retrieval Augmented Generation) based workflows in GenAI (Generative AI) combine the benefits of retrieval and generation to enhance the AI system’s capabilities, particularly in handling real-world, domain-specific data. In simple terms, RAG enables the AI to pull in relevant information from a database or other sources to support the generation of more accurate and informed responses. This is particularly useful in business settings where accuracy and context are critical.

What are the components in a RAG-based workflow

- Knowledge base: The knowledge base is a centralized repository of information that the system refers to when answering queries. It can include FAQs, manuals, and other relevant documents.

- Trigger/Query: This component is responsible for initiating the workflow. It’s usually a customer’s question or request that needs a response or action.

- Task/Action: Based on the analysis of the trigger/query, the system performs a specific task or action, such as generating a response or performing a backend operation.
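The three components above can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not the implementation built later in this post: the knowledge base is a hard-coded list, retrieval is plain word overlap, and `generate` is a hypothetical stand-in for an LLM call that only assembles the prompt.

```python
import re

# Knowledge base: a hypothetical, hard-coded stand-in for FAQs and manuals.
KNOWLEDGE_BASE = [
    "Refunds are processed within 5 business days.",
    "Passwords can be reset from the account settings page.",
    "Invoices are emailed on the first day of each month.",
]


def tokenize(text):
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query, documents, top_k=1):
    """Return the top_k documents sharing the most words with the query."""
    query_words = tokenize(query)
    ranked = sorted(
        documents,
        key=lambda doc: len(query_words & tokenize(doc)),
        reverse=True,
    )
    return ranked[:top_k]


def generate(query, context):
    """Hypothetical stand-in for an LLM call: it only assembles the prompt."""
    return "Context:\n" + "\n".join(context) + "\n\nQuestion: " + query


# Trigger/Query: an incoming customer question.
query = "How do I reset my password?"
# Task/Action: retrieve supporting text, then generate a response from it.
context = retrieve(query, KNOWLEDGE_BASE)
print(generate(query, context))
```

In a real workflow the word-overlap ranking would be replaced by a vector search and `generate` by a call to a model such as GPT-4.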

A few examples of RAG-based workflows

- Customer Interaction Workflow in Banking:

  - Chatbots powered by GenAI and RAG can significantly improve engagement rates in the banking industry by personalizing interactions.

  - Through RAG, the chatbots can retrieve and use relevant information from a database to generate personalized responses to customer inquiries.

  - For instance, during a chat session, a RAG-based GenAI system could pull in the customer’s transaction history or account information from a database to provide more informed and personalized responses.

  - This workflow not only enhances customer satisfaction but also potentially increases the retention rate by providing a more personalized and informative interaction experience.

- Email Campaigns Workflow:

  - In marketing and sales, creating targeted campaigns is crucial.

  - RAG can be employed to pull in the latest product information, customer feedback, or market trends from external sources to help generate more informed and effective marketing and sales material.

  - For example, when crafting an email campaign, a RAG-based workflow could retrieve recent positive reviews or new product features to include in the campaign content, thus potentially improving engagement rates and sales outcomes.

- Automated Code Documentation and Modification Workflow:

  - Initially, a RAG system can pull existing code documentation, the codebase, and coding standards from the project repository.

  - When a developer needs to add a new feature, RAG can generate a code snippet following the project’s coding standards by referencing the retrieved information.

  - If a modification is needed in the code, the RAG system can propose changes by analyzing the existing code and documentation, ensuring consistency and adherence to coding standards.

  - After a code modification or addition, RAG can automatically update the code documentation to reflect the changes, pulling in the necessary information from the codebase and existing documentation.

How to download and index all Zendesk tickets for retrieval

Let us now get started with the tutorial. We will build a bot to answer incoming Zendesk tickets, using a custom database of past Zendesk tickets and responses to generate the answer with the help of LLMs.

- Access Zendesk API: Use the Zendesk API to access and download all the tickets. Ensure you have the necessary permissions and API keys to access the data.

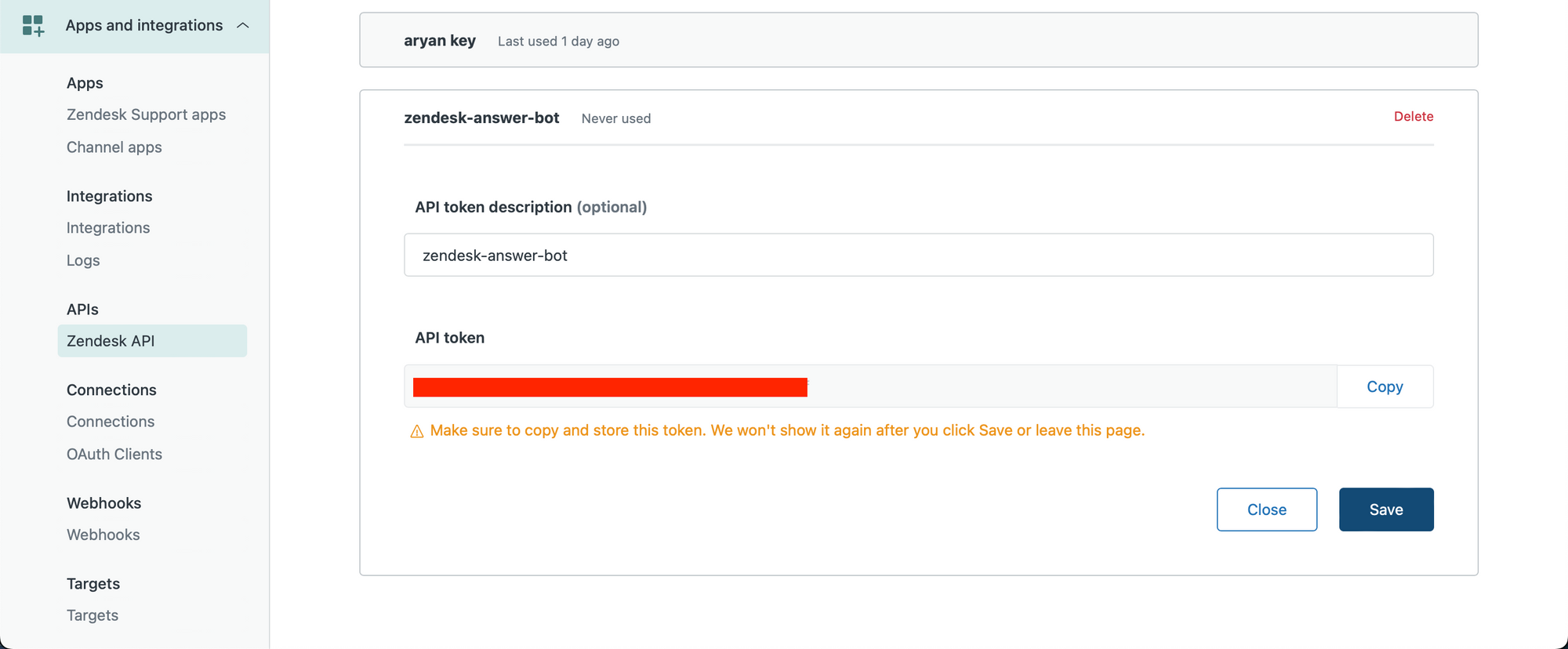

We first create our Zendesk API key. Make sure you are an Admin user and visit the following link to create your API key: https://YOUR_SUBDOMAIN.zendesk.com/admin/apps-integrations/apis/zendesk-api/settings/tokens

Create an API key and copy it to your clipboard.

Let us now get started in a Python notebook.

We enter our Zendesk credentials, including the API key we just obtained.

subdomain = "YOUR_SUBDOMAIN"
username = "ZENDESK_USERNAME"
password = "ZENDESK_API_KEY"

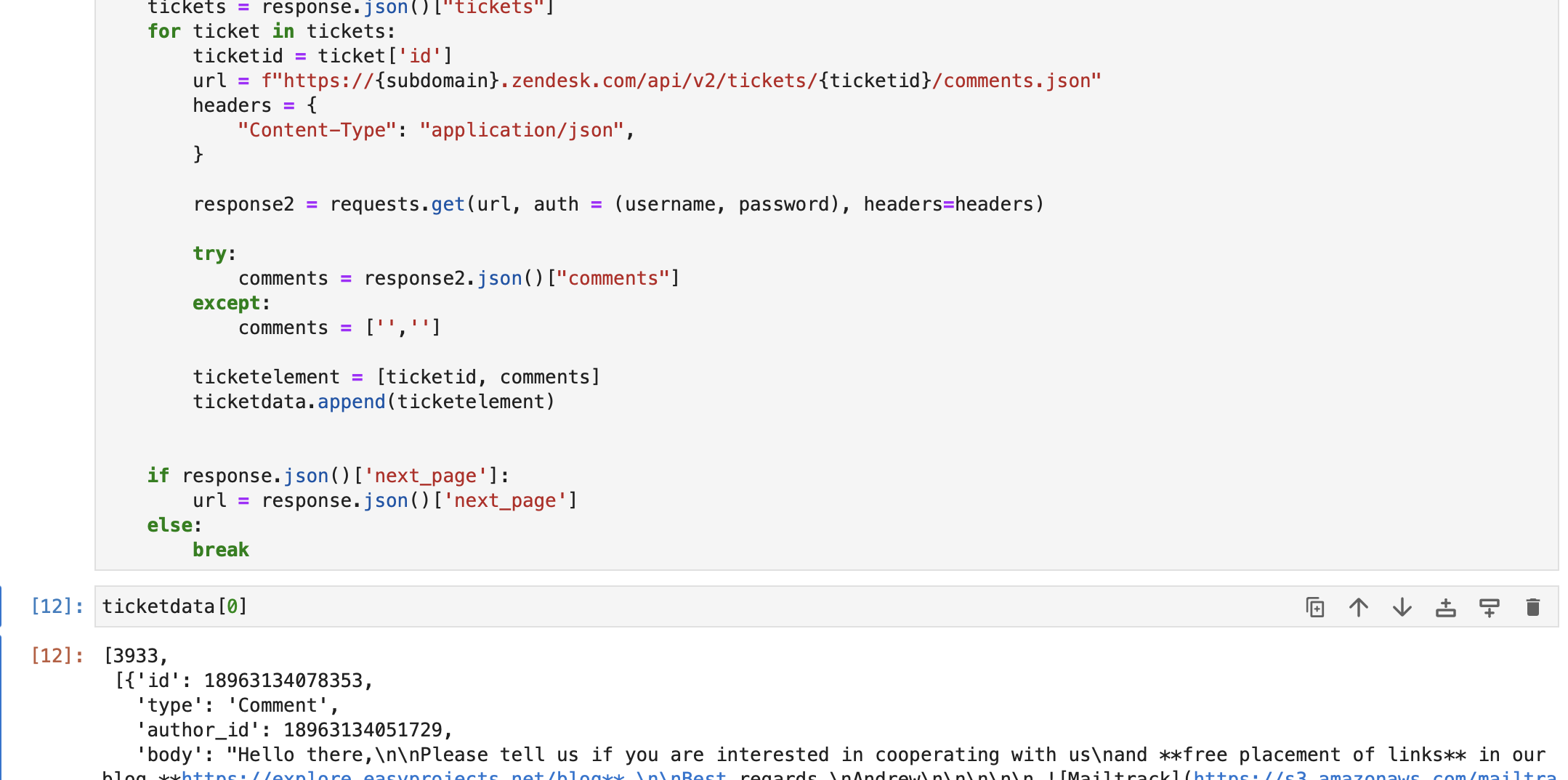

# Zendesk API token authentication expects "<email>/token" as the username.
username = "{}/token".format(username)

We now retrieve ticket data. In the code below, we retrieve the queries and replies from each ticket, and store each set [query, array of replies] representing a ticket in an array called ticketdata.

We are only fetching the latest 1000 tickets. You can modify this as required.

import requests

ticketdata = []
url = f"https://{subdomain}.zendesk.com/api/v2/tickets.json"
params = {"sort_by": "created_at", "sort_order": "desc"}
headers = {"Content-Type": "application/json"}
tickettext = ""

# Keep requesting pages until we have collected 1000 tickets.
while len(ticketdata) < 1000:
    response = requests.get(
        url, auth=(username, password), params=params, headers=headers
    )
    tickets = response.json()["tickets"]
    for ticket in tickets:
        ticketid = ticket["id"]
        # Separate variable so the page URL above is not overwritten.
        comments_url = f"https://{subdomain}.zendesk.com/api/v2/tickets/{ticketid}/comments.json"
        response2 = requests.get(
            comments_url, auth=(username, password), headers=headers
        )
        try:
            comments = response2.json()["comments"]
        except (KeyError, ValueError):
            # Fall back to placeholders if the comments cannot be read.
            comments = ["", ""]
        ticketelement = [ticketid, comments]
        ticketdata.append(ticketelement)
    # Follow the pagination link, or stop when there are no more pages.
    if response.json()["next_page"]:
        url = response.json()["next_page"]
    else:
        break

As you can see below, we have retrieved ticket data from the Zendesk database. Each element in ticketdata contains:

a. Ticket ID

b. All comments / replies in the ticket.
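The `next_page` pagination used in the loop above can also be isolated into a small generator. The following is a minimal sketch, assuming a caller-supplied `fetch_json` function (a hypothetical name) that takes a URL and returns the decoded JSON body. Fake pages are used here so the example runs without network access.

```python
def iter_pages(first_url, fetch_json, items_key):
    """Yield items from every page, following `next_page` until it is empty.

    `fetch_json` is a caller-supplied function (hypothetical here) that takes
    a URL and returns the decoded JSON body as a dict.
    """
    url = first_url
    while url:
        page = fetch_json(url)
        for item in page.get(items_key, []):
            yield item
        url = page.get("next_page")


# Offline demonstration with two fake pages instead of real HTTP calls.
FAKE_PAGES = {
    "page-1": {"tickets": [{"id": 1}, {"id": 2}], "next_page": "page-2"},
    "page-2": {"tickets": [{"id": 3}], "next_page": None},
}

ticket_ids = [t["id"] for t in iter_pages("page-1", FAKE_PAGES.__getitem__, "tickets")]
print(ticket_ids)
```

With the `requests` library, `fetch_json` could be something like `lambda u: requests.get(u, auth=(username, password)).json()`.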

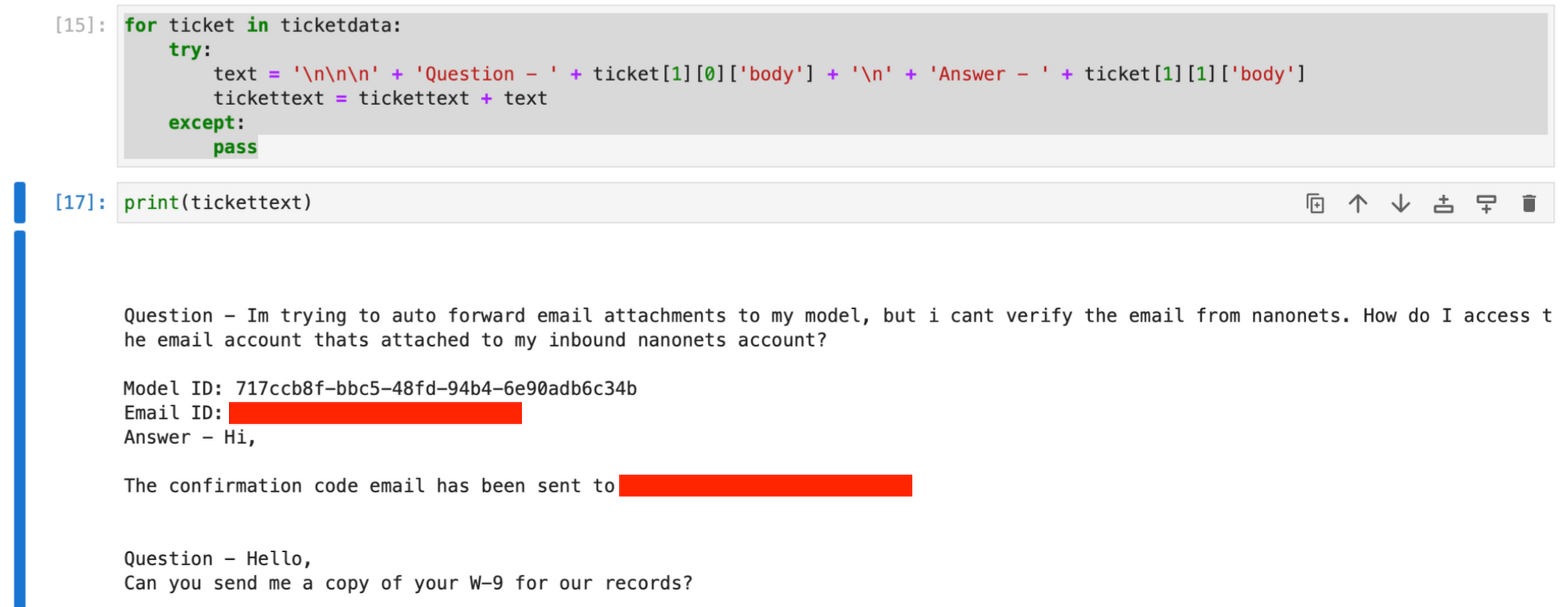

We then move on to create a text string containing the queries and first responses from all retrieved tickets, using the ticketdata array.

for ticket in ticketdata:
    try:
        text = (
            "\n\n\n"
            + "Question - "
            + ticket[1][0]["body"]
            + "\n"
            + "Answer - "
            + ticket[1][1]["body"]
        )
        tickettext = tickettext + text
    except (IndexError, KeyError, TypeError):
        # Skip tickets that have no reply yet or whose comments are missing.
        pass

The tickettext string now contains all tickets and first responses, with each ticket’s data separated by newline characters.

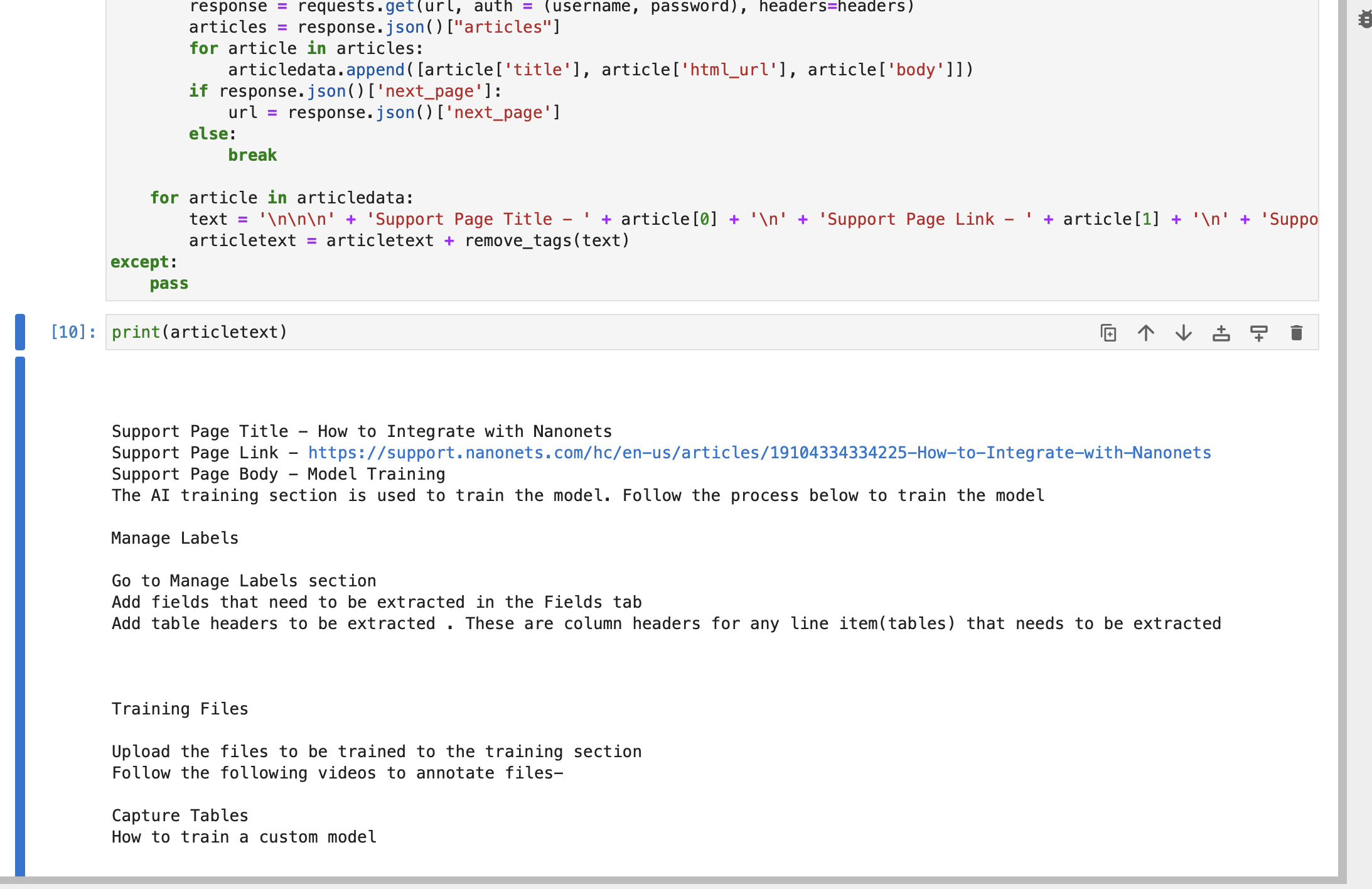
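The same formatting step can be written with an explicit check instead of relying on exceptions to skip unanswered tickets. This is only a sketch: `format_ticket` and the sample data are hypothetical names introduced for illustration, shaped like the entries of ticketdata.

```python
def format_ticket(comments):
    """Return a 'Question/Answer' block, or "" if the ticket has no reply yet."""
    if len(comments) < 2 or not all(isinstance(c, dict) for c in comments[:2]):
        return ""
    question = comments[0].get("body", "")
    answer = comments[1].get("body", "")
    return "\n\n\n" + "Question - " + question + "\n" + "Answer - " + answer


# Hypothetical sample data shaped like the entries of ticketdata.
sample_ticketdata = [
    [101, [{"body": "Where is my invoice?"}, {"body": "It was emailed today."}]],
    [102, [{"body": "Unanswered question"}]],
]

sample_text = "".join(format_ticket(comments) for _, comments in sample_ticketdata)
print(sample_text)
```

Ticket 102 contributes nothing to the output because it has only one comment, which makes the skipping rule visible in the code.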

Optional: You can also fetch data from your Zendesk Support articles to expand the knowledge base further, by running the code below.

import re

def remove_tags(text):
    # Strip anything that looks like an HTML tag.
    clean = re.compile("<.*?>")
    return re.sub(clean, "", text)

articletext = ""
try:
    articledata = []
    url = f"https://{subdomain}.zendesk.com/api/v2/help_center/en-us/articles.json"
    headers = {"Content-Type": "application/json"}
    while True:
        response = requests.get(url, auth=(username, password), headers=headers)
        articles = response.json()["articles"]
        for article in articles:
            articledata.append([article["title"], article["html_url"], article["body"]])
        # Follow the pagination link, or stop when there are no more pages.
        if response.json()["next_page"]:
            url = response.json()["next_page"]
        else:
            break
    for article in articledata:
        text = (
            "\n\n\n"
            + "Support Page Title - "
            + article[0]
            + "\n"
            + "Support Page Link - "
            + article[1]
            + "\n"
            + "Support Page Body - "
            + article[2]
        )
        articletext = articletext + remove_tags(text)
except Exception:
    # The articles are optional, so continue with whatever was collected.
    pass

The string articletext contains the title, link and body of each article in your Zendesk support pages.
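The regular expression in `remove_tags` strips tags but leaves HTML entities such as `&amp;` in the text. If that matters for your articles, one alternative is the standard library's `html.parser`. The sketch below is an assumption about how this could be done, not part of the original workflow; `html_to_text` is a hypothetical helper name.

```python
from html.parser import HTMLParser


class _TextExtractor(HTMLParser):
    """Collect only the text nodes of an HTML fragment."""

    def __init__(self):
        super().__init__(convert_charrefs=True)
        self.parts = []

    def handle_data(self, data):
        self.parts.append(data)


def html_to_text(html):
    """Return the visible text of an HTML string, with entities decoded."""
    extractor = _TextExtractor()
    extractor.feed(html)
    extractor.close()
    return "".join(extractor.parts)


print(html_to_text("<p>Billing &amp; refunds</p> <p>See the FAQ.</p>"))
```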

Optional: You can connect your customer database or any other relevant database, and then use it while creating the index store.

Combine the fetched data.

knowledge = tickettext + "\n\n\n" + articletext

- Index Tickets: Once downloaded, index the tickets using a suitable indexing method to facilitate quick and efficient retrieval.

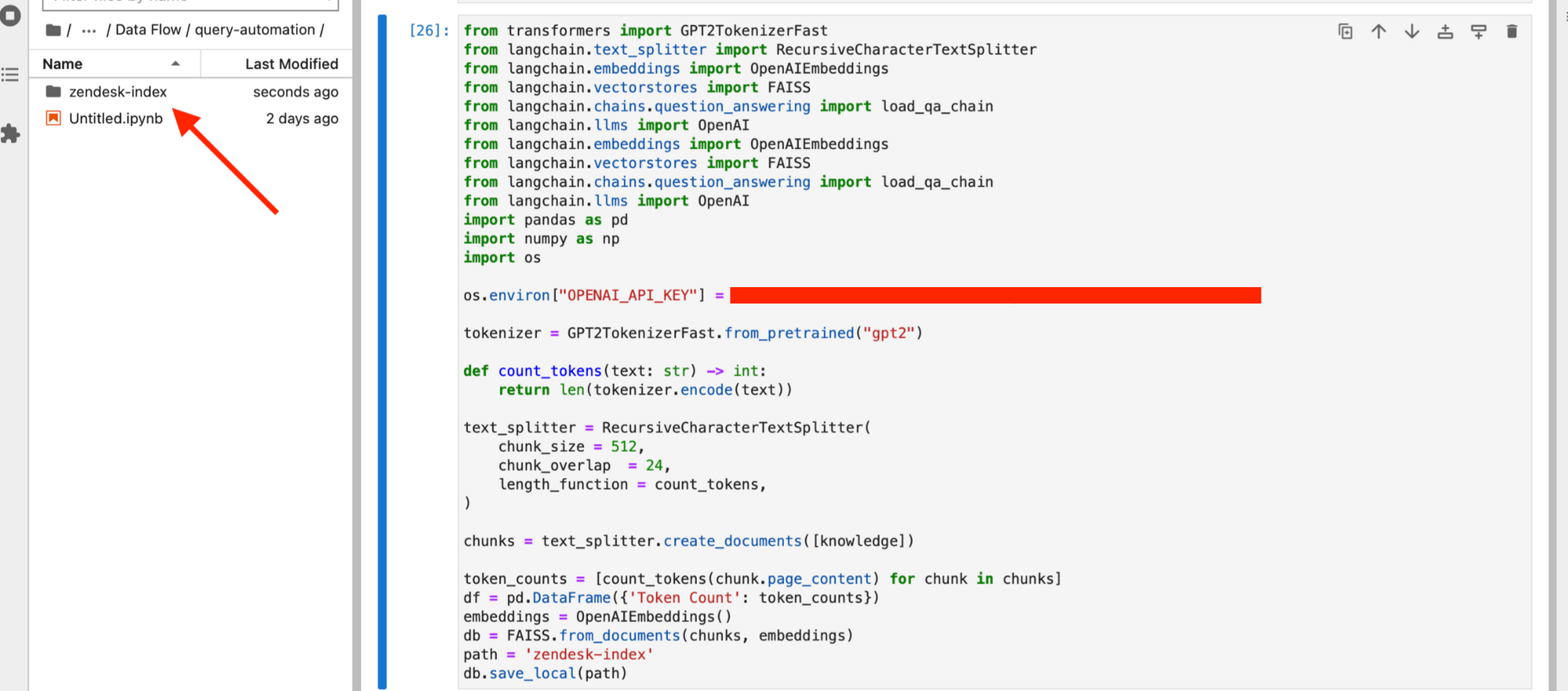

To do this, we first install the dependencies required for creating the vector store.

pip install langchain openai pypdf faiss-cpu transformers pandas

Create an index store using the fetched data. This will act as our knowledge base when we attempt to answer new tickets via GPT.

import os

os.environ["OPENAI_API_KEY"] = "YOUR_OPENAI_API_KEY"

from langchain.document_loaders import PyPDFLoader
from langchain.vectorstores import FAISS
from langchain.chat_models import ChatOpenAI
from langchain.embeddings.openai import OpenAIEmbeddings
from langchain.text_splitter import RecursiveCharacterTextSplitter
from langchain.chains import RetrievalQA, ConversationalRetrievalChain
from transformers import GPT2TokenizerFast
import pandas as pd
import numpy as np

# Measure chunk length in GPT-2 tokens rather than characters.
tokenizer = GPT2TokenizerFast.from_pretrained("gpt2")

def count_tokens(text: str) -> int:
    return len(tokenizer.encode(text))

text_splitter = RecursiveCharacterTextSplitter(
    chunk_size=512,
    chunk_overlap=24,
    length_function=count_tokens,
)

# Split the combined text into chunks and record their token counts.
chunks = text_splitter.create_documents([knowledge])
token_counts = [count_tokens(chunk.page_content) for chunk in chunks]
df = pd.DataFrame({"Token Count": token_counts})

# Embed the chunks and save the FAISS index to disk.
embeddings = OpenAIEmbeddings()
db = FAISS.from_documents(chunks, embeddings)
path = "zendesk-index"
db.save_local(path)

Your index gets saved on your local system.
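To see what `chunk_size` and `chunk_overlap` control, here is a simplified sliding-window splitter. It is only an illustration under stated assumptions: `RecursiveCharacterTextSplitter` actually splits on separators and merges the pieces, whereas this sketch uses fixed windows, and whitespace-separated words stand in for GPT-2 tokens. `chunk_tokens` is a hypothetical helper name.

```python
def chunk_tokens(tokens, chunk_size, chunk_overlap):
    """Split a token list into windows of chunk_size that share chunk_overlap tokens."""
    if not 0 <= chunk_overlap < chunk_size:
        raise ValueError("chunk_overlap must be non-negative and smaller than chunk_size")
    step = chunk_size - chunk_overlap
    chunks = []
    for start in range(0, len(tokens), step):
        chunks.append(tokens[start:start + chunk_size])
        # Stop once a window has reached the end of the token list.
        if start + chunk_size >= len(tokens):
            break
    return chunks


# Whitespace-separated words stand in for GPT-2 tokens in this illustration.
words = "one two three four five six seven eight nine ten".split()
for chunk in chunk_tokens(words, chunk_size=4, chunk_overlap=1):
    print(chunk)
```

Each window repeats the last word of the previous one, which is how overlap keeps a sentence that straddles a boundary retrievable from either chunk.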

- Update Index Regularly: Regularly update the index to include new tickets and changes to existing ones, ensuring the system has access to the most current data.

We can schedule the above script to run every week, or at any other desired frequency, to update our ‘zendesk-index’.

How to perform retrieval when a new ticket comes in

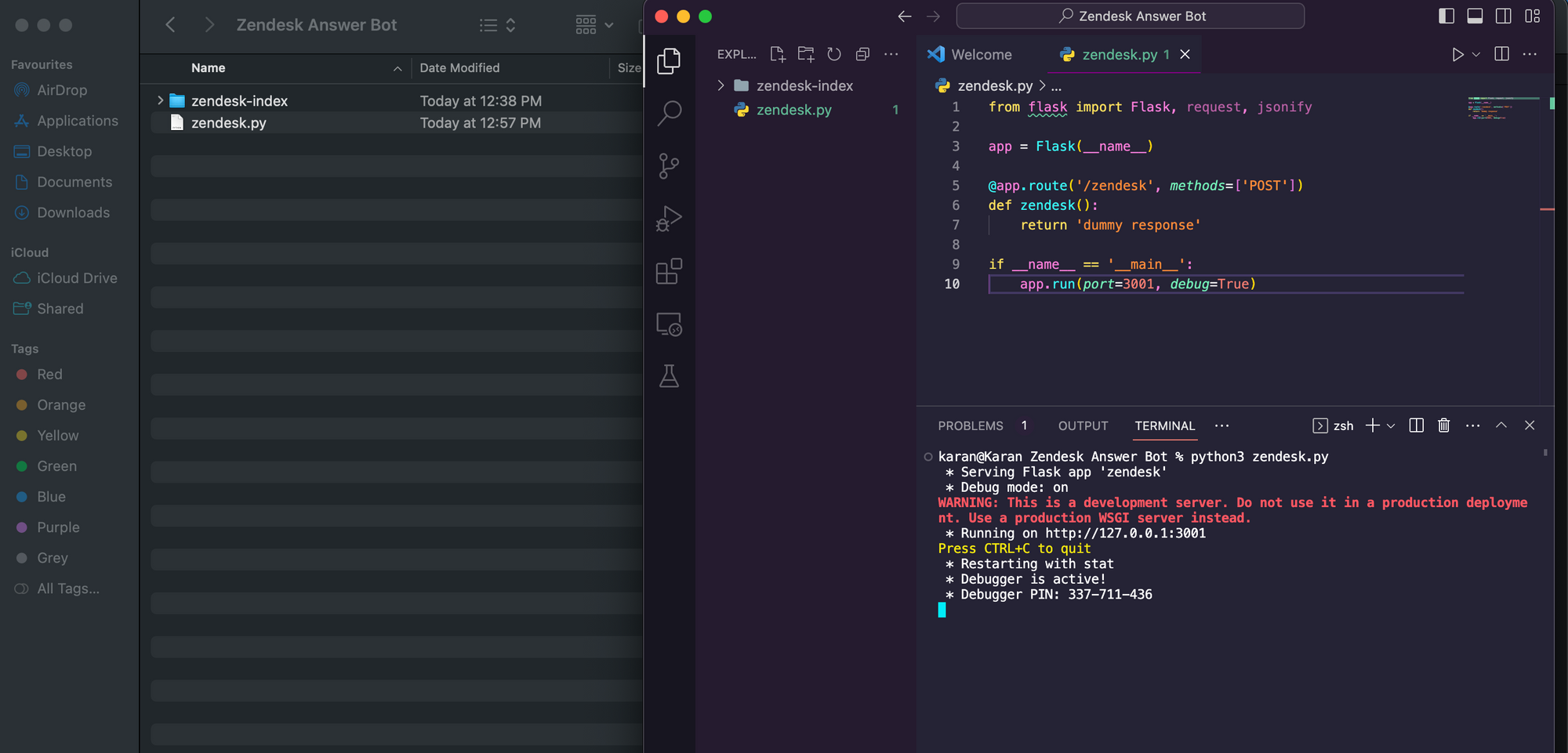

- Monitor for New Tickets: Set up a system to monitor Zendesk for new tickets continuously.

We will create a basic Flask API and host it. To get started,

- Create a new folder called ‘Zendesk Answer Bot’.

- Add your FAISS db folder ‘zendesk-index’ to the ‘Zendesk Answer Bot’ folder.

- Create a new Python file zendesk.py and copy the code below into it.

from flask import Flask, request, jsonify

app = Flask(__name__)

@app.route('/zendesk', methods=['POST'])
def zendesk():
    return 'dummy response'

if __name__ == '__main__':
    app.run(port=3001, debug=True)

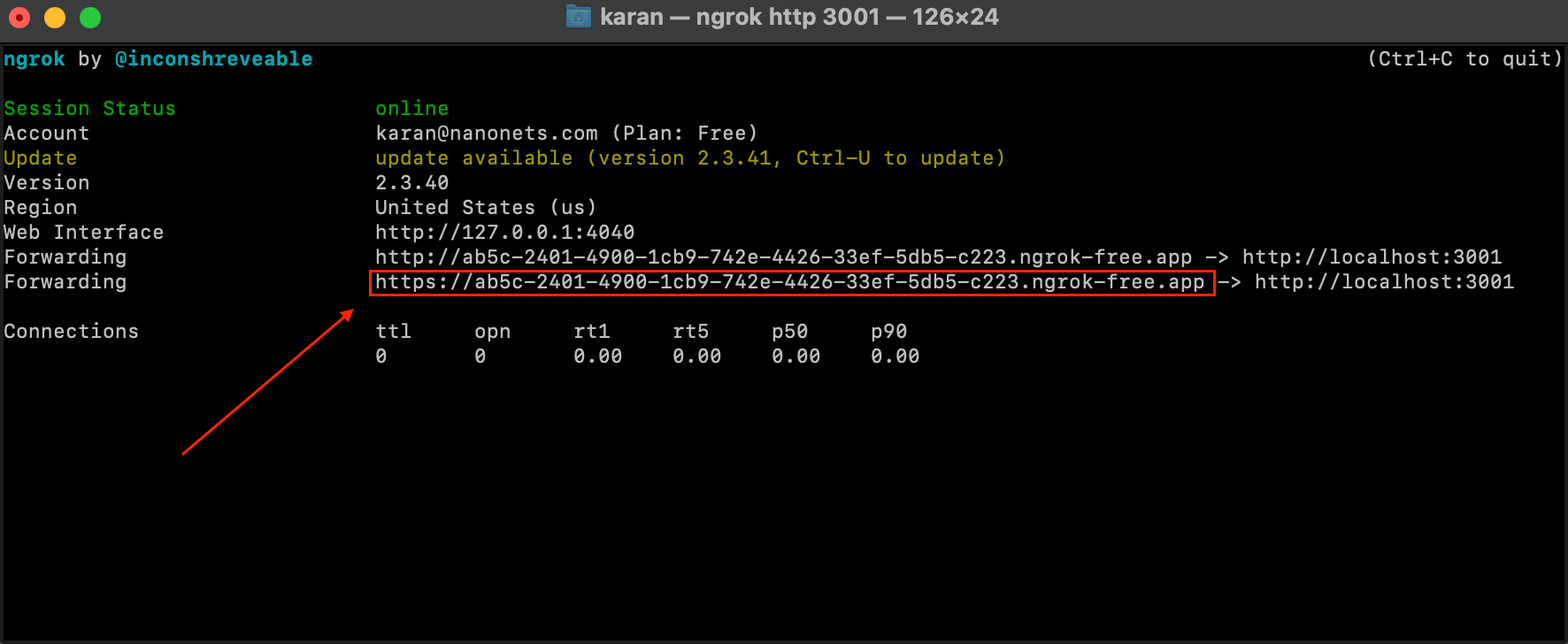

- Download and configure ngrok using the instructions here. Make sure to configure the ngrok authtoken in your terminal as directed on the link.

- Open a new terminal instance and run the command below.

ngrok http 3001

- We now have our Flask service exposed over an external IP, which lets us make API calls to our service from anywhere.

- We then set up a Zendesk Webhook, by either visiting the following link: https://YOUR_SUBDOMAIN.zendesk.com/admin/apps-integrations/webhooks/webhooks OR directly running the code below in our original Jupyter notebook.

NOTE: While ngrok is good for testing purposes, it is strongly recommended to move the Flask API service to a server instance. In that case, the static IP of the server becomes the Zendesk Webhook endpoint, and you will need to configure the endpoint in your Zendesk Webhook to point towards this address: https://YOUR_SERVER_STATIC_IP:3001/zendesk

# The forwarding address should point at the /zendesk route of the Flask service.
zendesk_workflow_endpoint = "HTTPS_NGROK_FORWARDING_ADDRESS"

url = "https://" + subdomain + ".zendesk.com/api/v2/webhooks"
payload = {
    "webhook": {
        "endpoint": zendesk_workflow_endpoint,
        "http_method": "POST",
        "name": "Nanonets Workflows Webhook v1",
        "status": "active",
        "request_format": "json",
        "subscriptions": ["conditional_ticket_events"],
    }
}
headers = {"Content-Type": "application/json"}
auth = (username, password)

response = requests.post(url, json=payload, headers=headers, auth=auth)
webhook = response.json()
# Keep the webhook ID; the trigger needs it to call this webhook.
webhookid = webhook["webhook"]["id"]

- We now set up a Zendesk Trigger, which will trigger the webhook we just created whenever a new ticket appears. We can set up the Zendesk trigger by either visiting the following link: https://YOUR_SUBDOMAIN.zendesk.com/admin/objects-rules/rules/triggers OR by directly running the code below in our original Jupyter notebook.

import json

url = "https://" + subdomain + ".zendesk.com/api/v2/triggers.json"
trigger_payload = {
    "trigger": {
        "title": "Nanonets Workflows Trigger v1",
        "active": True,
        # Fire only when a ticket is created.
        "conditions": {"all": [{"field": "update_type", "value": "Create"}]},
        "actions": [
            {
                "field": "notification_webhook",
                "value": [
                    webhookid,
                    # JSON body sent to the webhook; Zendesk fills in the placeholders.
                    json.dumps(
                        {
                            "ticket_id": "{{ticket.id}}",
                            "org_id": "{{ticket.url}}",
                            "subject": "{{ticket.title}}",
                            "body": "{{ticket.description}}",
                        }
                    ),
                ],
            }
        ],
    }
}

response = requests.post(url, auth=(username, password), json=trigger_payload)
trigger = response.json()
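To make the role of the placeholders concrete, the sketch below simulates how a body template like the one in the trigger turns into the JSON that the Flask service receives. The substitution is plain string replacement written for illustration only: Zendesk performs the real rendering, and its escaping rules are not modelled here. `render_placeholders` and the sample values are hypothetical.

```python
import json

# A body template like the one the trigger sends to the webhook.
body_template = json.dumps(
    {
        "ticket_id": "{{ticket.id}}",
        "subject": "{{ticket.title}}",
        "body": "{{ticket.description}}",
    }
)


def render_placeholders(template, values):
    """Simulate placeholder substitution with plain string replacement.

    This is only an illustration; Zendesk performs the real rendering and
    its escaping rules are not modelled here.
    """
    rendered = template
    for name, value in values.items():
        rendered = rendered.replace("{{" + name + "}}", str(value))
    return rendered


# Hypothetical ticket values.
sample_values = {
    "ticket.id": 42,
    "ticket.title": "Cannot log in",
    "ticket.description": "I forgot my password.",
}
received = json.loads(render_placeholders(body_template, sample_values))
print(received["ticket_id"], received["body"])
```

Because the placeholder sits inside quotes in the template, the ticket ID arrives as a string in this simulation.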

- Retrieve Relevant Information: When a new ticket comes in, use the indexed knowledge base to retrieve relevant information and past tickets that can help in generating a response.

After the trigger and webhook have been set up, Zendesk will ensure that our currently running Flask service gets an API call on the /zendesk route with the ticket ID, subject and body whenever a new ticket arrives.

We now need to configure our Flask service to

a. generate a response using our vector store ‘zendesk-index’.

b. update the ticket with the generated response.

We replace our existing Flask service code in zendesk.py with the code below:

from flask import Flask, request, jsonify
from langchain.document_loaders import PyPDFLoader
from langchain.vectorstores import FAISS
from langchain.chat_models import ChatOpenAI
from langchain.embeddings.openai import OpenAIEmbeddings
from langchain.text_splitter import RecursiveCharacterTextSplitter
from langchain.chains import RetrievalQA, ConversationalRetrievalChain
from transformers import GPT2TokenizerFast
import os
import pandas as pd
import numpy as np

app = Flask(__name__)

@app.route('/zendesk', methods=['POST'])
def zendesk():
    updatedticketjson = request.get_json()
    zenembeddings = OpenAIEmbeddings()
    query = updatedticketjson['body']
    # Load the saved index and fetch the chunks most similar to the ticket body.
    zendb = FAISS.load_local('zendesk-index', zenembeddings)
    docs = zendb.similarity_search(query)
    # Placeholder return so the route is valid until a response is generated.
    return jsonify({"retrieved": len(docs)})

if __name__ == '__main__':
    app.run(port=3001, debug=True)

As you can see, we have run a similarity search on our vector index and retrieved the most relevant tickets and articles to help generate a response.
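For intuition about what a similarity search does, here is a toy version that runs without any API key. It is a sketch under loud assumptions: it ranks by cosine similarity over bag-of-words counts, whereas the actual store compares dense OpenAI embedding vectors inside FAISS. All names below are hypothetical.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy embedding: a bag-of-words count vector (not a real LLM embedding)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[word] * b[word] for word in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def toy_similarity_search(query, documents, k=2):
    """Return the k documents whose vectors are closest to the query vector."""
    query_vec = embed(query)
    ranked = sorted(documents, key=lambda d: cosine(query_vec, embed(d)), reverse=True)
    return ranked[:k]


# Hypothetical indexed chunks.
chunks = [
    "Question - How do I reset my password? Answer - Use the reset link.",
    "Question - Where is my invoice? Answer - Check your email.",
    "Question - Can I change my plan? Answer - Yes, in settings.",
]
print(toy_similarity_search("I need to reset my password", chunks, k=1))
```

Real embeddings capture meaning rather than exact word matches, so they can also retrieve a relevant ticket that is phrased with different words.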

How to generate a response and post to Zendesk

- Generate Response: Use the LLM to generate a coherent and accurate response based on the retrieved information and analyzed context.

Let us now continue setting up our API endpoint. We further modify the code as shown below to generate a response based on the relevant information retrieved.

# Additional imports needed at the top of zendesk.py.
from langchain.chains.question_answering import load_qa_chain
from langchain.llms import OpenAI

@app.route("/zendesk", methods=["POST"])
def zendesk():
    updatedticketjson = request.get_json()
    zenembeddings = OpenAIEmbeddings()
    query = updatedticketjson["body"]
    zendb = FAISS.load_local("zendesk-index", zenembeddings)
    docs = zendb.similarity_search(query)
    # Pass the retrieved documents and the ticket body to the LLM.
    zenchain = load_qa_chain(OpenAI(temperature=0.7), chain_type="stuff")
    answer = zenchain.run(input_documents=docs, question=query)

The answer variable will contain the generated response.
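The "stuff" chain type places all retrieved documents into a single prompt. The sketch below shows that idea in plain Python; the template wording is hypothetical and is not LangChain's own prompt, and `build_stuff_prompt` is a name introduced only for illustration.

```python
def build_stuff_prompt(documents, question):
    """Concatenate every retrieved document into one prompt (the "stuff" idea).

    The wording of this template is hypothetical and is not LangChain's own.
    """
    context = "\n\n".join(documents)
    return (
        "Use the following context to answer the question.\n\n"
        + context
        + "\n\nQuestion: "
        + question
        + "\nAnswer:"
    )


retrieved = [
    "Question - How do I reset my password? Answer - Use the reset link.",
    "Support Page Title - Account security",
]
print(build_stuff_prompt(retrieved, "I forgot my password, what should I do?"))
```

Since everything goes into one prompt, the retrieved chunks together with the question have to fit within the model's context window.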

- Review Response: Optionally, have a human agent review the generated response for accuracy and appropriateness before posting.

We ensure this by NOT posting the response generated by GPT directly as the Zendesk reply. Instead, we will create a function to update new tickets with an internal note containing the GPT-generated response.

Add the following function to the zendesk.py Flask service:

import requests

def update_ticket_with_internal_note(
    subdomain, ticket_id, username, password, comment_body
):
    url = f"https://{subdomain}.zendesk.com/api/v2/tickets/{ticket_id}.json"
    email = username
    headers = {"Content-Type": "application/json"}
    comment_body = "Suggested Response - " + comment_body
    # "public": False makes the comment an internal note, hidden from the customer.
    data = {"ticket": {"comment": {"body": comment_body, "public": False}}}
    response = requests.put(url, json=data, headers=headers, auth=(email, password))

- Post to Zendesk: Use the Zendesk API to post the generated response to the corresponding ticket, ensuring timely communication with the customer.

Let us now incorporate the internal note creation function into our API endpoint.

# subdomain, username and password must also be defined in zendesk.py,
# with the same values used in the notebook.
@app.route("/zendesk", methods=["POST"])
def zendesk():
    updatedticketjson = request.get_json()
    zenembeddings = OpenAIEmbeddings()
    query = updatedticketjson["body"]
    # The ticket ID is sent by the trigger in the webhook body.
    ticket = updatedticketjson["ticket_id"]
    zendb = FAISS.load_local("zendesk-index", zenembeddings)
    docs = zendb.similarity_search(query)
    zenchain = load_qa_chain(OpenAI(temperature=0.7), chain_type="stuff")
    answer = zenchain.run(input_documents=docs, question=query)
    update_ticket_with_internal_note(subdomain, ticket, username, password, answer)
    return answer
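The endpoint above assumes the incoming JSON always contains the ticket ID and body. A small validation step, sketched below, makes that assumption explicit. `parse_ticket_event` is a hypothetical helper; the field names are the ones configured in the trigger.

```python
def parse_ticket_event(payload):
    """Return (ticket_id, body) from the webhook JSON, or raise ValueError."""
    if not isinstance(payload, dict):
        raise ValueError("expected a JSON object")
    missing = [key for key in ("ticket_id", "body") if not payload.get(key)]
    if missing:
        raise ValueError("missing fields: " + ", ".join(missing))
    return str(payload["ticket_id"]), payload["body"]


print(parse_ticket_event({"ticket_id": "42", "subject": "Hi", "body": "Help me"}))
```

Inside the Flask route, one option would be to call this on `request.get_json(silent=True)` and return a 400 response when a `ValueError` is raised, so that malformed calls never reach the LLM.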

This completes our workflow!

Let us review the workflow we have set up:

- Our Zendesk Trigger starts the workflow when a new Zendesk ticket appears.

- The trigger sends the new ticket’s data to our Webhook.

- Our Webhook sends a request to our Flask service.

- Our Flask service queries the vector store created from past Zendesk data to retrieve relevant past tickets and articles for answering the new ticket.

- The relevant past tickets and articles are passed to GPT along with the new ticket’s data to generate a response.

- The new ticket is updated with an internal note containing the GPT-generated response.

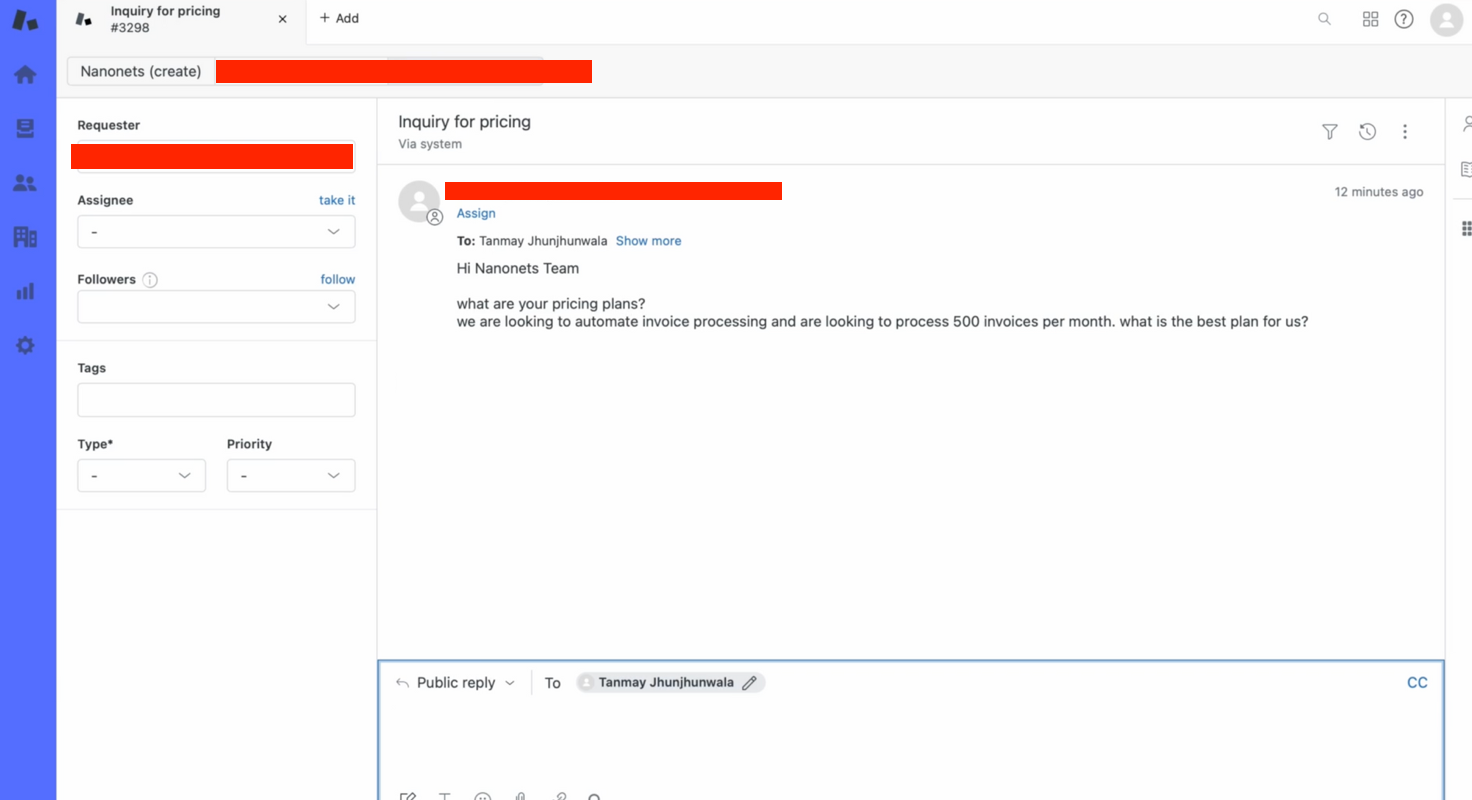

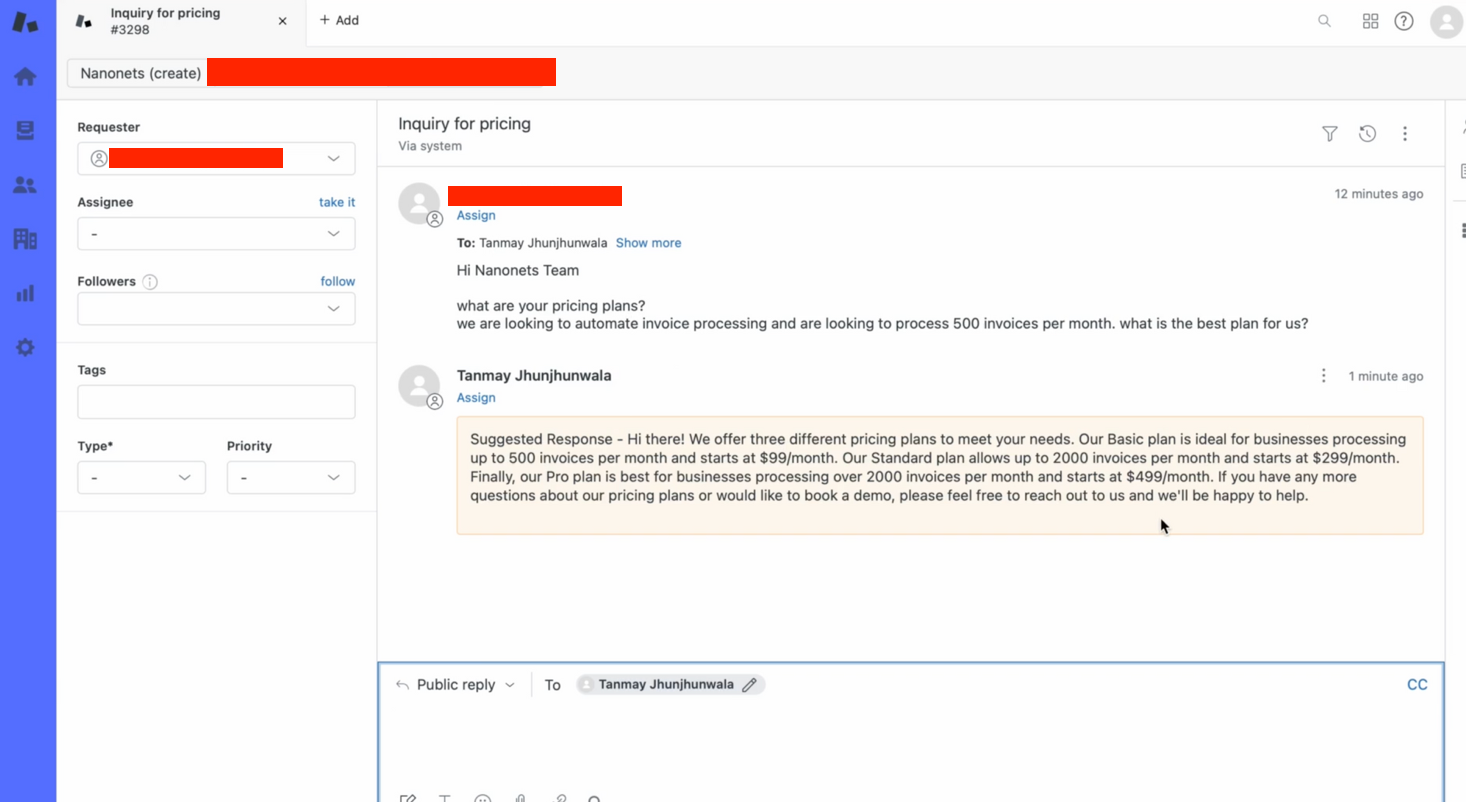

We can test this manually:

- We create a ticket on Zendesk manually to test the flow.

- Within seconds, our bot provides a relevant answer to the ticket query!

How to do this entire workflow with Nanonets

Nanonets offers a powerful platform to implement and manage RAG-based workflows seamlessly. Here’s how you can use Nanonets for this workflow:

- Integrate with Zendesk: Connect Nanonets with Zendesk to monitor and retrieve tickets efficiently.

- Build and Train Models: Use Nanonets to build and train LLMs to generate accurate and coherent responses based on the knowledge base and analyzed context.

- Automate Responses: Set up automation rules in Nanonets to automatically post generated responses to Zendesk or forward them to human agents for review.

- Monitor and Optimize: Continuously monitor the performance of the workflow and optimize the models and rules to improve accuracy and efficiency.

By integrating LLMs with RAG-based workflows in GenAI and using the capabilities of Nanonets, businesses can significantly improve their customer support operations, providing swift and accurate responses to customer queries on Zendesk.