In the world of artificial intelligence, the role of deep learning is becoming central. Artificial intelligence paradigms have traditionally taken inspiration from the functioning of the human brain, but it appears that deep learning has surpassed the learning capabilities of the human brain in some respects. Deep learning has undoubtedly made impressive strides, but it has its drawbacks, including high computational complexity and the need for large amounts of data.

In light of these concerns, scientists from Bar-Ilan University in Israel are raising an important question: should artificial intelligence incorporate deep learning? They presented their new paper, published in the journal Scientific Reports, which continues their previous research on the advantage of tree-like architectures over convolutional networks. The main goal of the new study was to find out whether complex classification tasks can be learned effectively by shallower neural networks based on brain-inspired principles, while reducing the computational load. In this article, we'll outline the key findings that could reshape the artificial intelligence industry.

As we already know, successfully solving complex classification tasks requires training deep neural networks consisting of dozens or even hundreds of convolutional and fully connected hidden layers. This is quite different from how the human brain functions. In deep learning, the first convolutional layer detects localized patterns in the input data, and subsequent layers identify larger-scale patterns until a reliable class characterization of the input data is achieved.

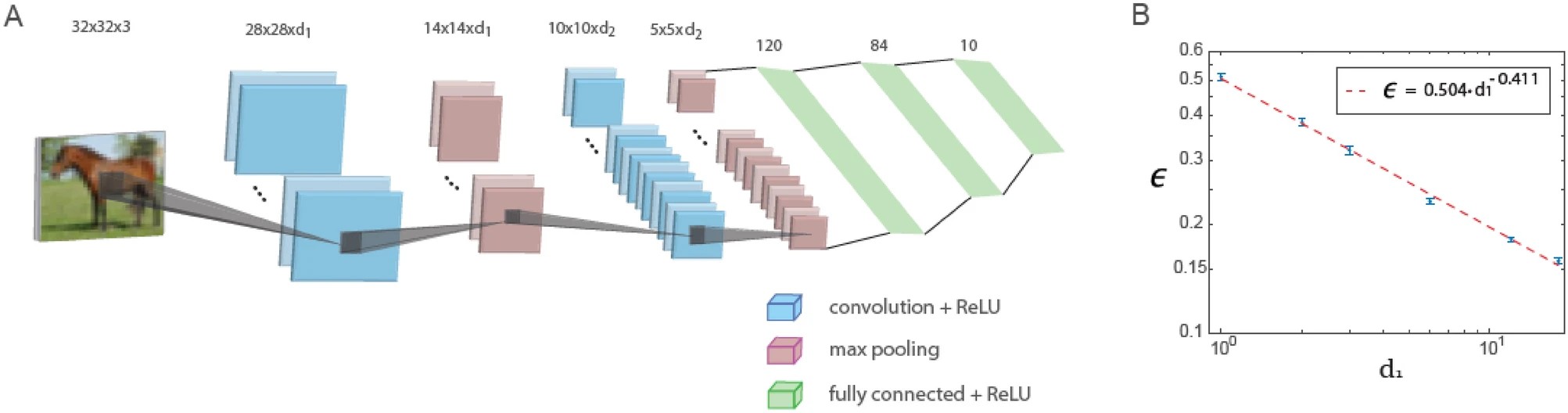

This study shows that, when the depth ratio of the first and second convolutional layers is held fixed, the error of a small LeNet architecture consisting of only five layers decreases with the number of filters in the first convolutional layer according to a power law. Extrapolating this power law suggests that the generalized LeNet architecture can reach low error rates similar to those obtained with deep neural networks on the CIFAR-10 data.
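To make the idea of a power law and its extrapolation concrete, here is a minimal sketch of how such a relationship could be fitted. It assumes the form ϵ = A · d1^(−ρ). The exponent 0.41 is the value the article reports for the generalized LeNet experiment, while the prefactor `A = 0.6`, the list of filter counts, and the function names are hypothetical and chosen only for illustration. The error values are synthetic, generated from the formula itself, and are not results from the paper.

```python
import math

def fit_power_law(ds, errors):
    """Fit eps = A * d**(-rho) by least squares on log(eps) vs. log(d).

    Returns the pair (A, rho).
    """
    xs = [math.log(d) for d in ds]
    ys = [math.log(e) for e in errors]
    n = len(xs)
    x_mean = sum(xs) / n
    y_mean = sum(ys) / n
    sxx = sum((x - x_mean) ** 2 for x in xs)
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    slope = sxy / sxx                      # slope of the log-log line equals -rho
    intercept = y_mean - slope * x_mean    # intercept equals log(A)
    return math.exp(intercept), -slope

def predict_error(a, rho, d):
    """Extrapolate the fitted power law to a filter count d."""
    return a * d ** (-rho)

# Synthetic data only: A is a made-up prefactor, rho is the reported exponent.
TRUE_A, TRUE_RHO = 0.6, 0.41
filters = [6, 12, 24, 48, 96]
errors = [TRUE_A * d ** (-TRUE_RHO) for d in filters]

a_fit, rho_fit = fit_power_law(filters, errors)
print(f"fitted A = {a_fit:.3f}, fitted rho = {rho_fit:.3f}")
print(f"extrapolated error at d1 = 384: {predict_error(a_fit, rho_fit, 384):.4f}")
```

On a log-log plot a power law appears as a straight line, which is why an ordinary linear fit on the logarithms is enough to recover the exponent.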

The figure below shows training in a generalized LeNet architecture. The generalized LeNet architecture for the CIFAR-10 database (input size 32 x 32 x 3 pixels) consists of five layers: two convolutional layers with max pooling and three fully connected layers. The first and second convolutional layers contain d1 and d2 filters, respectively, where d1 / d2 ≃ 6 / 16. The plot shows the test error, denoted ϵ, versus d1 on a logarithmic scale, indicating a power-law dependence with an exponent ρ ∼ 0.41. The neuron activation function is ReLU.

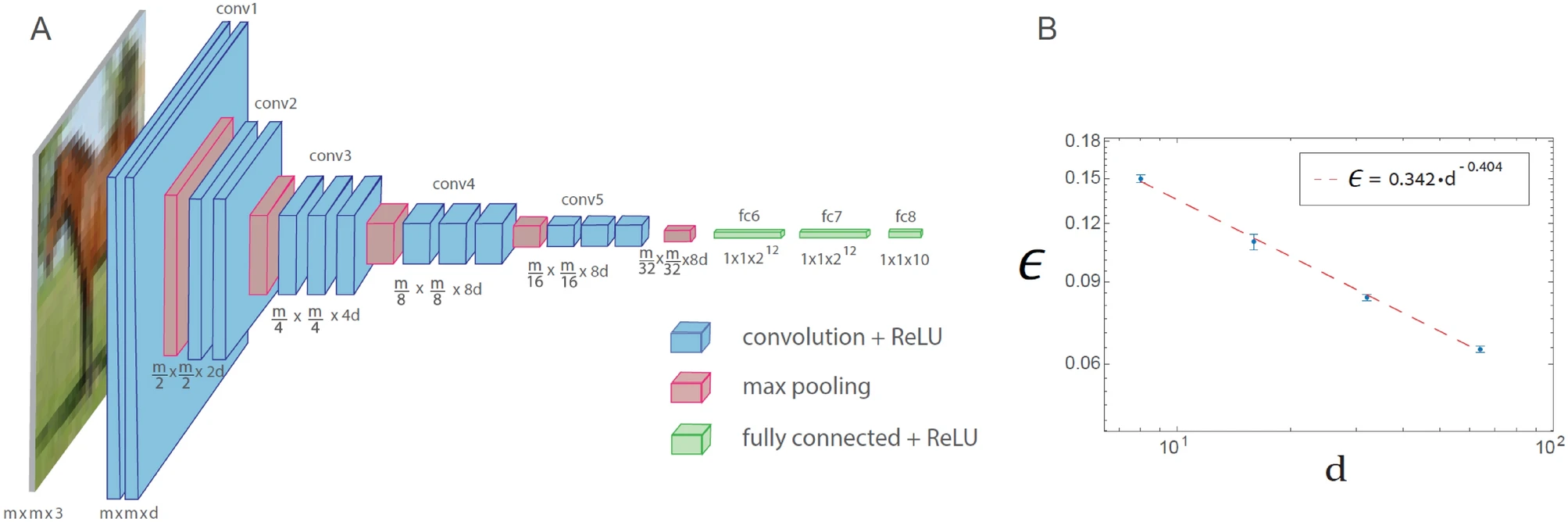
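The layer sizes of such a generalized LeNet can be worked out with a few lines of arithmetic. The sketch below keeps the ratio d1 / d2 = 6 / 16 from the description above. The 5 x 5 kernels without padding and the 2 x 2 max pooling are assumptions borrowed from the classic LeNet-5 design, since the article does not state them, and the function name is hypothetical.

```python
def generalized_lenet_shapes(d1, input_size=32, kernel=5, pool=2):
    """Return filter counts and feature-map shapes for a widened LeNet.

    Assumes unpadded kernel x kernel convolutions followed by
    pool x pool max pooling (classic LeNet-5 choices, not from the article).
    """
    d2 = round(d1 * 16 / 6)                  # keep d1 / d2 close to 6 / 16
    side1 = (input_size - kernel + 1) // pool  # after conv1 + max pooling
    side2 = (side1 - kernel + 1) // pool       # after conv2 + max pooling
    return {
        "d1": d1,
        "d2": d2,
        "conv1_out": (side1, side1, d1),
        "conv2_out": (side2, side2, d2),
        "flattened": side2 * side2 * d2,       # input size of the first fully connected layer
    }

for d1 in (6, 12, 24):
    print(generalized_lenet_shapes(d1))

shapes = generalized_lenet_shapes(6)
```

With d1 = 6 this reproduces the familiar LeNet-5 dimensions, and larger d1 values widen both convolutional layers while the depth of the network stays at five layers.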

A similar power-law phenomenon is also observed for the generalized VGG-16 architecture. However, it requires more operations than LeNet to achieve a given error rate.

Training in the generalized VGG-16 architecture is demonstrated in the figure below. The generalized VGG-16 architecture consists of 16 layers, where the number of filters in the nth set of convolutions is d x 2^(n − 1) (n ≤ 4), and the square root of the filter size is m x 2^−(n − 1) (n ≤ 5), where m x m x 3 is the size of each input (d = 64 in the original VGG-16 architecture). The plot shows the test error, denoted ϵ, versus d on a logarithmic scale for the CIFAR-10 database (m = 32), indicating a power-law dependence with an exponent ρ ∼ 0.4. The neuron activation function is ReLU.
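The two formulas above can be tabulated directly. In this sketch the "square root of the filter size" is read as the side length of the feature maps in each set, which is an interpretation rather than something the article spells out. The filter count of the fifth set is not given by the formula (it only covers n ≤ 4), so the code assumes it matches the fourth set, as in the original VGG-16. The function name is hypothetical.

```python
def generalized_vgg16_blocks(d=64, m=32):
    """List filter count and feature-map side length for the five conv sets.

    filters = d * 2**(n - 1) for n <= 4 (from the article);
    the n = 5 value is assumed equal to n = 4, as in the original VGG-16.
    side = m * 2**-(n - 1) for n <= 5 (from the article).
    """
    blocks = []
    for n in range(1, 6):
        filters = d * 2 ** (min(n, 4) - 1)
        side = m // 2 ** (n - 1)
        blocks.append({
            "set": n,
            "filters": filters,
            "side": side,
            "side_times_depth": side * filters,
        })
    return blocks

blocks = generalized_vgg16_blocks(d=64, m=32)
for block in blocks:
    print(block)
```

For the first four sets the product of side length and depth stays constant at m x d, because the factors 2^(n − 1) and 2^−(n − 1) cancel. This appears to be the quantity the article's closing paragraph describes as conserved across convolutional layers.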

The power-law phenomenon covers various generalized LeNet and VGG-16 architectures, indicating its universal behavior and suggesting a quantitative hierarchical complexity in machine learning. In addition, a conservation law for the convolutional layers, in which the square root of their size multiplied by their depth is held constant, is found to asymptotically minimize errors. The efficient approach to shallow learning demonstrated in this study requires further quantitative study using different databases and architectures, as well as accelerated implementation with future specialized hardware designs.