In recent years, large language models (LLMs) have made remarkable strides in their ability to understand and generate human-like text. These models, such as OpenAI’s GPT and Anthropic’s Claude, have demonstrated impressive performance on a wide range of natural language processing tasks. However, when it comes to complex reasoning tasks that require multiple steps of logical thinking, traditional prompting methods often fall short. This is where Chain-of-Thought (CoT) prompting comes into play, offering a powerful prompt engineering technique to improve the reasoning capabilities of large language models.

Key Takeaways

- CoT prompting enhances reasoning capabilities by producing intermediate steps.

- It breaks down complex problems into smaller, manageable sub-problems.

- Benefits include improved performance, interpretability, and generalization.

- CoT prompting applies to arithmetic, commonsense, and symbolic reasoning.

- It has the potential to significantly impact AI across diverse domains.

Chain-of-Thought prompting is a technique that aims to improve the performance of large language models on complex reasoning tasks by encouraging the model to generate intermediate reasoning steps. Unlike traditional prompting methods, which typically provide a single prompt and expect a direct answer, CoT prompting breaks down the reasoning process into a series of smaller, interconnected steps.

At its core, CoT prompting involves prompting the language model with a question or problem and then guiding it to generate a chain of thought: a sequence of intermediate reasoning steps that lead to the final answer. By explicitly modeling the reasoning process, CoT prompting enables the language model to tackle complex reasoning tasks more effectively.
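To make the contrast concrete, here is a minimal sketch of the two prompt styles as plain strings. The function names, the "Q:/A:" layout, and the exact wording of the step-by-step instruction are illustrative assumptions, not a fixed standard; the sketch only builds the prompt text and does not call any model.

```python
def standard_prompt(question: str) -> str:
    """Traditional prompt: ask the question and expect a direct answer."""
    return f"Q: {question}\nA:"


def cot_prompt(question: str) -> str:
    """CoT-style prompt: ask for intermediate reasoning steps before the answer.

    The instruction wording below is an assumption made for illustration.
    """
    return (
        f"Q: {question}\n"
        "A: Let's think step by step, then give the final answer "
        "on its own line as 'Answer: <value>'."
    )


question = (
    "If John has 5 apples and Mary has 3 times as many apples as John, "
    "how many apples does Mary have?"
)

print(standard_prompt(question))
print()
print(cot_prompt(question))
```

The only difference between the two prompts is the request for intermediate steps, which is the core idea of CoT prompting.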

One of the key advantages of CoT prompting is that it allows the language model to decompose a complex problem into more manageable sub-problems. By generating intermediate reasoning steps, the model can break down the overall reasoning task into smaller, more focused steps. This approach helps the model maintain coherence and reduces the chances of losing track of the reasoning process.

CoT prompting has shown promising results in improving the performance of large language models on a variety of complex reasoning tasks, including arithmetic reasoning, commonsense reasoning, and symbolic reasoning. By working through intermediate reasoning steps, language models can engage more fully with the problem at hand and generate more accurate and coherent responses.

Standard vs. CoT prompting (Wei et al., Google Research, Brain Team)

CoT prompting works by generating a series of intermediate reasoning steps that guide the language model through the reasoning process. Instead of simply providing a prompt and expecting a direct answer, CoT prompting encourages the model to break down the problem into smaller, more manageable steps.

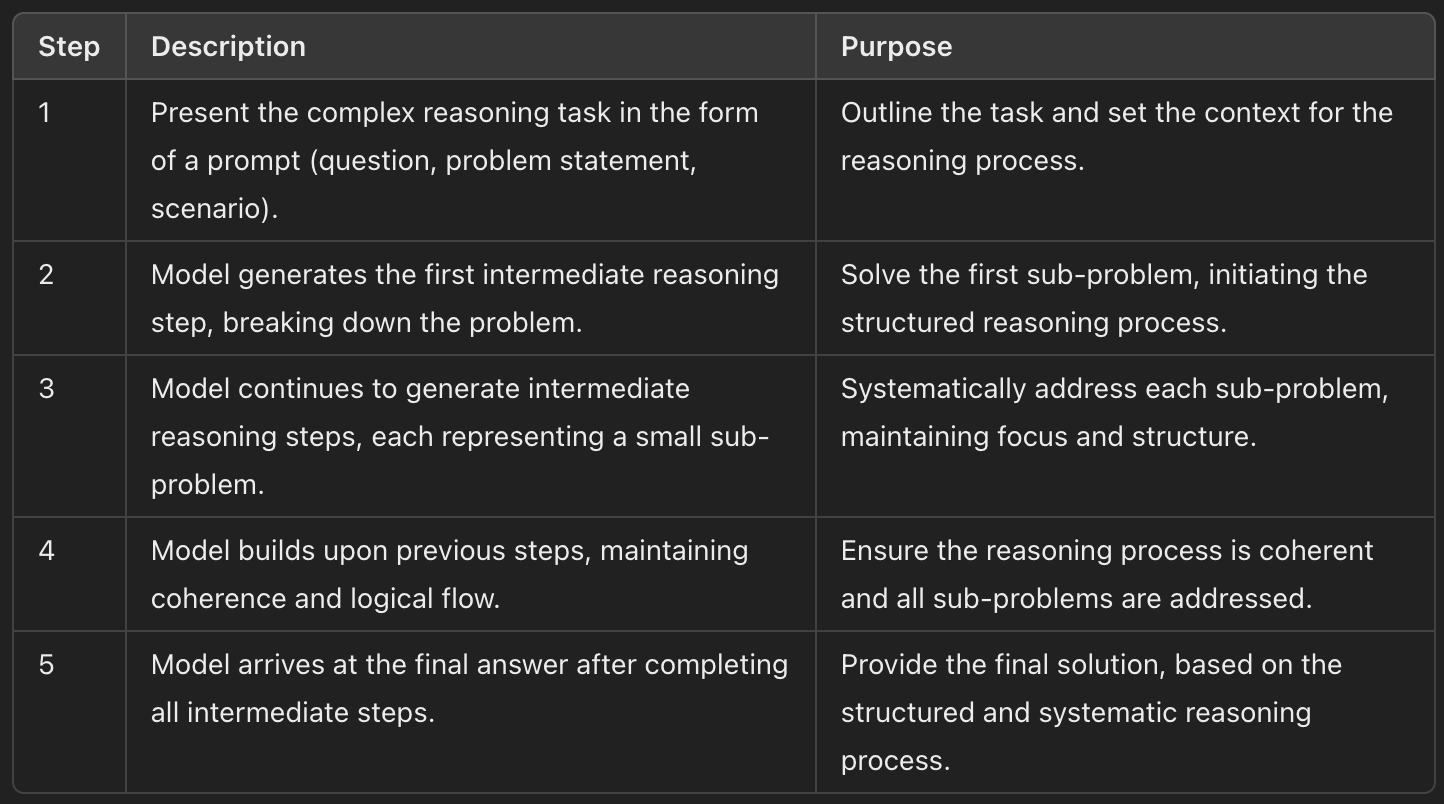

The process begins by presenting the language model with a prompt that outlines the complex reasoning task at hand. This prompt can be in the form of a question, a problem statement, or a scenario that requires logical thinking. Once the prompt is provided, the model generates a sequence of intermediate reasoning steps that lead to the final answer.

Each intermediate reasoning step in the chain of thought represents a small, focused sub-problem that the model needs to solve. By generating these steps, the model can approach the overall reasoning task in a more structured and systematic manner. The intermediate steps allow the model to maintain coherence and keep track of the reasoning process, reducing the chances of losing focus or generating irrelevant information.

As the model progresses through the chain of thought, it builds upon the previous reasoning steps to arrive at the final answer. Each step in the chain is connected to the previous and subsequent steps, forming a logical flow of reasoning. This step-by-step approach enables the model to tackle complex reasoning tasks more effectively, as it can focus on one sub-problem at a time while still maintaining the overall context.

The generation of intermediate reasoning steps in CoT prompting is typically achieved through carefully designed prompts and training techniques. Researchers and practitioners can use various methods to encourage the model to produce a chain of thought, such as providing examples of step-by-step reasoning, using special tokens to indicate the start and end of each reasoning step, or fine-tuning the model on datasets that demonstrate the desired reasoning process.
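The first two methods can be sketched together: a worked example whose steps are wrapped in start and end markers, followed by the new question. The `<step>` and `</step>` markers, the `Exemplar` class, and the second question are hypothetical choices for illustration. A real setup would use whatever delimiters the model or its training data was built around.

```python
from dataclasses import dataclass

# Hypothetical step markers, chosen only for this sketch.
STEP_OPEN, STEP_CLOSE = "<step>", "</step>"


@dataclass
class Exemplar:
    """One worked example: a question, its reasoning steps, and the answer."""
    question: str
    steps: list[str]
    answer: str


def render_exemplar(ex: Exemplar) -> str:
    """Format a worked example with each reasoning step wrapped in markers."""
    lines = [f"Q: {ex.question}", "A:"]
    lines += [f"{STEP_OPEN}{step}{STEP_CLOSE}" for step in ex.steps]
    lines.append(f"Answer: {ex.answer}")
    return "\n".join(lines)


def few_shot_cot_prompt(exemplars: list[Exemplar], question: str) -> str:
    """Place the worked examples first, then the new question left open."""
    blocks = [render_exemplar(ex) for ex in exemplars]
    blocks.append(f"Q: {question}\nA:")
    return "\n\n".join(blocks)


apples = Exemplar(
    question="If John has 5 apples and Mary has 3 times as many apples "
             "as John, how many apples does Mary have?",
    steps=[
        "John has 5 apples.",
        "Mary has 3 times as many apples as John.",
        "5 apples × 3 = 15 apples.",
    ],
    answer="15",
)

# The new question is a made-up example in the same style.
prompt = few_shot_cot_prompt(
    [apples],
    "A shelf holds 4 books and a cabinet holds 6 times as many. "
    "How many books does the cabinet hold?",
)
print(prompt)
```

Because the exemplar shows its reasoning in a fixed shape, a model that continues the pattern will tend to produce its own steps in the same shape before answering.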

5-step CoT prompting process

By guiding the language model through the reasoning process using intermediate steps, CoT prompting enables the model to solve complex reasoning tasks more accurately. The explicit modeling of the reasoning process also enhances the interpretability of the model’s outputs, as the generated chain of thought provides insights into how the model arrived at its final answer.

CoT prompting has been successfully applied to a variety of complex reasoning tasks, demonstrating its effectiveness in improving the performance of large language models.

Let’s explore a few examples of how CoT prompting can be used in different domains.

Arithmetic Reasoning

One of the most straightforward applications of CoT prompting is in arithmetic reasoning tasks. By generating intermediate reasoning steps, CoT prompting can help language models solve multi-step arithmetic problems more accurately.

For example, consider the following problem:

"If John has 5 apples and Mary has 3 times as many apples as John, how many apples does Mary have?"

Using CoT prompting, the language model can generate a chain of thought like this:

1. John has 5 apples.

2. Mary has 3 times as many apples as John.

3. To find the number of apples Mary has, we need to multiply John's apples by 3.

4. 5 apples × 3 = 15 apples

5. Therefore, Mary has 15 apples.

By breaking down the problem into smaller steps, CoT prompting allows the language model to reason through the arithmetic problem more effectively.
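One practical consequence of having the steps written out is that the arithmetic in them can be checked mechanically. The sketch below scans each step for statements of the form "a × b = c" and verifies the product. The regular expression and the function name are assumptions made for this example, and the pattern covers only simple whole-number multiplication.

```python
import re

# Matches e.g. "5 apples × 3 = 15 apples" or "12 * 3 = 36".
MULTIPLICATION = re.compile(
    r"(\d+)\s*\w*\s*[×*]\s*(\d+)\s*\w*\s*=\s*(\d+)"
)


def check_multiplications(steps):
    """Return (a, b, claimed, is_correct) for each 'a × b = c' found in the steps."""
    results = []
    for step in steps:
        for a, b, claimed in MULTIPLICATION.findall(step):
            a, b, claimed = int(a), int(b), int(claimed)
            results.append((a, b, claimed, a * b == claimed))
    return results


chain = [
    "John has 5 apples.",
    "Mary has 3 times as many apples as John.",
    "To find the number of apples Mary has, we need to multiply John's apples by 3.",
    "5 apples × 3 = 15 apples",
    "Therefore, Mary has 15 apples.",
]

print(check_multiplications(chain))  # [(5, 3, 15, True)]
```

A chain containing a slip such as "5 apples × 3 = 16 apples" would be flagged with `False`, which is harder to detect when the model returns only a final number.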

Commonsense Reasoning

CoT prompting has also shown promise in tackling commonsense reasoning tasks, which require a deep understanding of everyday knowledge and logical thinking.

For instance, consider the following question:

"If a person is allergic to dogs and their friend invites them over to a house with a dog, what should the person do?"

A language model using CoT prompting might generate the following chain of thought:

1. The person is allergic to dogs.

2. The friend's house has a dog.

3. Being around dogs can trigger the person's allergies.

4. To avoid an allergic reaction, the person should decline the invitation.

5. The person can suggest an alternative location to meet their friend.

By generating intermediate reasoning steps, CoT prompting allows the language model to demonstrate a clearer understanding of the situation and provide a logical solution.

Symbolic Reasoning

CoT prompting has also been applied to symbolic reasoning tasks, which involve manipulating and reasoning with abstract symbols and concepts.

For example, consider the following problem:

"If A implies B, and B implies C, does A imply C?"

Using CoT prompting, the language model can generate a chain of thought like this:

1. "A implies B" means that if A is true, then B must also be true.

2. "B implies C" means that if B is true, then C must also be true.

3. If A is true, then B is true (from step 1).

4. If B is true, then C is true (from step 2).

5. Therefore, if A is true, then C must also be true.

6. So, A does imply C.

By generating intermediate reasoning steps, CoT prompting enables the language model to handle abstract symbolic reasoning tasks more effectively.
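The conclusion of this chain can be confirmed independently with a truth table. The sketch below enumerates every assignment of A, B, and C and checks that whenever both premises hold, the conclusion holds too. The helper names `implies` and `follows` are made up for this example. This is a brute-force check of the logic, not a description of how a language model reasons.

```python
from itertools import product


def implies(p: bool, q: bool) -> bool:
    """Material implication: 'p implies q' is false only when p is true and q is false."""
    return (not p) or q


def follows(premises, conclusion, n_vars: int) -> bool:
    """True if the conclusion holds in every assignment that satisfies all premises."""
    return all(
        conclusion(*values)
        for values in product([False, True], repeat=n_vars)
        if all(premise(*values) for premise in premises)
    )


premises = [
    lambda a, b, c: implies(a, b),  # A implies B
    lambda a, b, c: implies(b, c),  # B implies C
]
conclusion = lambda a, b, c: implies(a, c)  # A implies C

print(follows(premises, conclusion, 3))  # True
```

The same helper rejects invalid arguments: from "A implies B" alone it does not follow that "B implies A", because the assignment A false, B true satisfies the premise but not the conclusion.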

These examples demonstrate the versatility and effectiveness of CoT prompting in improving the performance of large language models on complex reasoning tasks across different domains. By explicitly modeling the reasoning process through intermediate steps, CoT prompting enhances the model’s ability to tackle challenging problems and generate more accurate and coherent responses.

Advantages of Chain-of-Thought Prompting

Chain-of-Thought prompting offers several significant benefits in advancing the reasoning capabilities of large language models. Let’s explore some of the key advantages:

Improved Performance on Complex Reasoning Tasks

One of the primary benefits of CoT prompting is its ability to improve the performance of language models on complex reasoning tasks. By generating intermediate reasoning steps, CoT prompting allows models to break down intricate problems into more manageable sub-problems. This step-by-step approach helps the model maintain focus and coherence throughout the reasoning process, leading to more accurate and reliable results.

Studies such as Wei et al. have shown that large language models prompted with CoT can outperform the same models under standard prompting on arithmetic, commonsense, and symbolic reasoning tasks. Explicitly modeling the reasoning process through intermediate steps has proven to be a powerful technique for improving a model’s ability to handle challenging problems that require multi-step reasoning.

Enhanced Interpretability of the Reasoning Process

Another significant benefit of CoT prompting is the enhanced interpretability of the reasoning process. By generating a chain of thought, the language model provides a clear and transparent explanation of how it arrived at its final answer. This step-by-step breakdown of the reasoning process allows users to understand the model’s thought process and assess the validity of its conclusions.

The interpretability offered by CoT prompting is particularly valuable in domains where the reasoning process itself is of interest, such as in educational settings or in systems that require explainable AI. By providing insights into the model’s reasoning, CoT prompting facilitates trust and accountability in the use of large language models.
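For a reviewer to inspect the reasoning, the model output first has to be separated into its steps and its final answer. The sketch below assumes a hypothetical output format in which each step sits on its own line, optionally numbered, and the final answer appears on a line starting with "Answer:". Real model outputs vary, so the parsing rules here are an assumption rather than a general solution.

```python
import re


def split_chain(output: str):
    """Split a chain-of-thought output into (steps, final_answer).

    Assumes one step per line and a final line of the form 'Answer: ...'.
    Returns None for the answer if no such line is present.
    """
    steps, answer = [], None
    for line in output.splitlines():
        line = line.strip()
        if not line:
            continue
        match = re.match(r"Answer:\s*(.+)$", line)
        if match:
            answer = match.group(1)
        else:
            # Drop a leading "1." or "1)" style number, if any.
            steps.append(re.sub(r"^\d+[.)]\s*", "", line))
    return steps, answer


sample_output = """\
1. The person is allergic to dogs.
2. The friend's house has a dog.
3. Being around dogs can trigger the person's allergies.
Answer: Decline the invitation and suggest another place to meet.
"""

steps, answer = split_chain(sample_output)
for number, step in enumerate(steps, start=1):
    print(f"Step {number}: {step}")
print(f"Final answer: {answer}")
```

With the steps separated out, each one can be read, logged, or challenged on its own, which is what makes the reasoning auditable.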

Potential for Generalization to Various Reasoning Tasks

CoT prompting has demonstrated its potential to generalize to a wide range of reasoning tasks. While the technique has been successfully applied to specific domains like arithmetic reasoning, commonsense reasoning, and symbolic reasoning, the underlying principles of CoT prompting can be extended to other types of complex reasoning tasks.

The ability to generate intermediate reasoning steps is a fundamental skill that can be leveraged across different problem domains. By supplying task-specific examples of step-by-step reasoning, or by fine-tuning language models on datasets that demonstrate the desired reasoning process, CoT prompting can be adapted to tackle novel reasoning tasks, expanding its applicability and impact.

Facilitating the Development of More Capable AI Systems

CoT prompting plays a crucial role in facilitating the development of more capable and intelligent AI systems. By improving the reasoning capabilities of large language models, CoT prompting contributes to the creation of AI systems that can tackle complex problems and exhibit higher levels of understanding.

As AI systems become more sophisticated and are deployed in various domains, the ability to perform complex reasoning tasks becomes increasingly important. CoT prompting provides a powerful tool for enhancing the reasoning skills of these systems, enabling them to handle more challenging problems and make more informed decisions.

A Quick Summary

CoT prompting is a powerful technique that enhances the reasoning capabilities of large language models by generating intermediate reasoning steps. By breaking down complex problems into smaller, more manageable sub-problems, CoT prompting enables models to tackle challenging reasoning tasks more effectively. This approach improves performance, enhances interpretability, and facilitates the development of more capable AI systems.

FAQ

How does Chain-of-Thought prompting (CoT) work?

CoT prompting works by generating a series of intermediate reasoning steps that guide the language model through the reasoning process, breaking down complex problems into smaller, more manageable sub-problems.

What are the benefits of using chain-of-thought prompting?

The benefits of CoT prompting include improved performance on complex reasoning tasks, enhanced interpretability of the reasoning process, potential for generalization to various reasoning tasks, and facilitating the development of more capable AI systems.

What are some examples of tasks that can be improved with chain-of-thought prompting?

Some examples of tasks that can be improved with CoT prompting include arithmetic reasoning, commonsense reasoning, symbolic reasoning, and other complex reasoning tasks that require multiple steps of logical thinking.