Data scientists and engineers regularly collaborate on machine learning (ML) tasks, making incremental improvements, iteratively refining ML pipelines, and checking the model's generalizability and robustness. Data traceability and reproducibility are major concerns because, unlike code changes, data modifications do not always record enough information about the exact source data used to create the published data and the transformations applied to each source.

Data traceability is crucial for building a well-documented ML pipeline. It ensures that the data used to train the models is accurate and helps the models comply with rules and best practices. Without adequate documentation, it becomes difficult to track the original data's usage, transformation, and compliance with licensing requirements. Datasets can be found on data.gov and Accutus1, two open data portals and sharing platforms; however, the data transformations are rarely provided. Because of this missing information, replicating results is harder, and people are less likely to accept the data.

A data repository can change in exponentially many ways because of the myriad of possible transformations. New columns, new tables, a wide variety of functions, and new data types are commonplace in such updates. Transformation discovery methods are commonly used to explain the differences between versions of tables in a data repository. The programming-by-example (PBE) approach is typically used when the goal is to create a program that turns a given input into a given output. However, the inflexibility of these methods makes them ill-suited to complicated and varied data types and transformations. They also struggle to adapt to changing data distributions or unfamiliar domains.
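As a rough illustration of the programming-by-example idea, the sketch below searches a tiny, hand-written library of string functions for one that reproduces every input/output example. The `CANDIDATES` library and the `synthesize` helper are hypothetical names chosen for this example, not part of any system discussed here. Real PBE tools search far larger program spaces, which is part of why they become rigid and expensive.

```python
from typing import Callable, Dict, Optional, Sequence

# Hypothetical, deliberately tiny library of candidate transformations.
CANDIDATES: Dict[str, Callable[[str], str]] = {
    "upper": str.upper,
    "lower": str.lower,
    "strip": str.strip,
    "first_token": lambda s: s.split()[0] if s.split() else "",
}


def synthesize(inputs: Sequence[str], outputs: Sequence[str]) -> Optional[str]:
    """Return the name of the first candidate consistent with every example."""
    for name, fn in CANDIDATES.items():
        if all(fn(x) == y for x, y in zip(inputs, outputs)):
            return name
    return None  # no candidate explains the examples


if __name__ == "__main__":
    print(synthesize(["Ada Lovelace", "Alan Turing"], ["Ada", "Alan"]))
```

A fixed library like this cannot explain a transformation it does not already contain, which is the limitation the paragraph above describes.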

A team of AI researchers and engineers at Amazon worked together to build ML pipelines using DATALORE, a new machine learning system that automatically generates data transformations among tables in a shared data repository. DATALORE takes a generative approach to the missing data transformation problem. First, DATALORE uses Large Language Models (LLMs), which have been trained on billions of lines of code, as a data transformation synthesis tool to reduce semantic ambiguity and manual work. Second, for each provided base table T, the researchers use data discovery algorithms to find possibly related candidate tables. This makes it possible to recover a series of data transformations and improves the effectiveness of the proposed LLM-based system. Third, to obtain the enhanced table, DATALORE follows the Minimum Description Length principle, which reduces the number of linked tables. This improves DATALORE's efficiency by avoiding costly exploration of the search space.
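The second and third steps can be sketched in a few lines, under loud assumptions: candidate tables are found here by Jaccard overlap of column values, and the "fewest linked tables" preference is approximated with a greedy set cover. Both are stand-ins chosen for illustration, not the discovery algorithm or the Minimum Description Length formulation used by DATALORE, and all function names are hypothetical.

```python
from typing import Dict, List, Set

Table = Dict[str, List[object]]  # column name -> column values


def jaccard(a: List[object], b: List[object]) -> float:
    """Jaccard similarity between the value sets of two columns."""
    sa, sb = set(a), set(b)
    union = sa | sb
    return len(sa & sb) / len(union) if union else 0.0


def related_candidates(
    base: Table, repo: Dict[str, Table], threshold: float = 0.5
) -> Dict[str, Set[str]]:
    """Map each candidate table to the base columns it overlaps with."""
    related: Dict[str, Set[str]] = {}
    for name, table in repo.items():
        covered = {
            bcol
            for bcol, bvals in base.items()
            if any(jaccard(bvals, cvals) >= threshold for cvals in table.values())
        }
        if covered:
            related[name] = covered
    return related


def select_minimal(base_columns: Set[str], related: Dict[str, Set[str]]) -> List[str]:
    """Greedy set cover: prefer few tables that explain many base columns."""
    remaining = set(base_columns)
    chosen: List[str] = []
    while remaining:
        best = max(
            sorted(related), key=lambda n: len(related[n] & remaining), default=None
        )
        if best is None or not (related[best] & remaining):
            break  # nothing left that explains the remaining columns
        chosen.append(best)
        remaining -= related[best]
    return chosen


if __name__ == "__main__":
    base = {"id": [1, 2, 3], "name": ["a", "b", "c"], "score": [10, 20, 30]}
    repo = {
        "users": {"uid": [1, 2, 3], "uname": ["a", "b", "c"]},
        "scores": {"uid": [1, 2, 3], "points": [10, 20, 30]},
        "ids": {"k": [1, 2, 3]},
        "other": {"x": [99]},
    }
    related = related_candidates(base, repo)
    print(select_minimal(set(base), related))
```

In this toy repository the `ids` table is related to the base table but redundant, so the selection step drops it. Pruning redundant candidates in this way is what keeps the later transformation search small.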

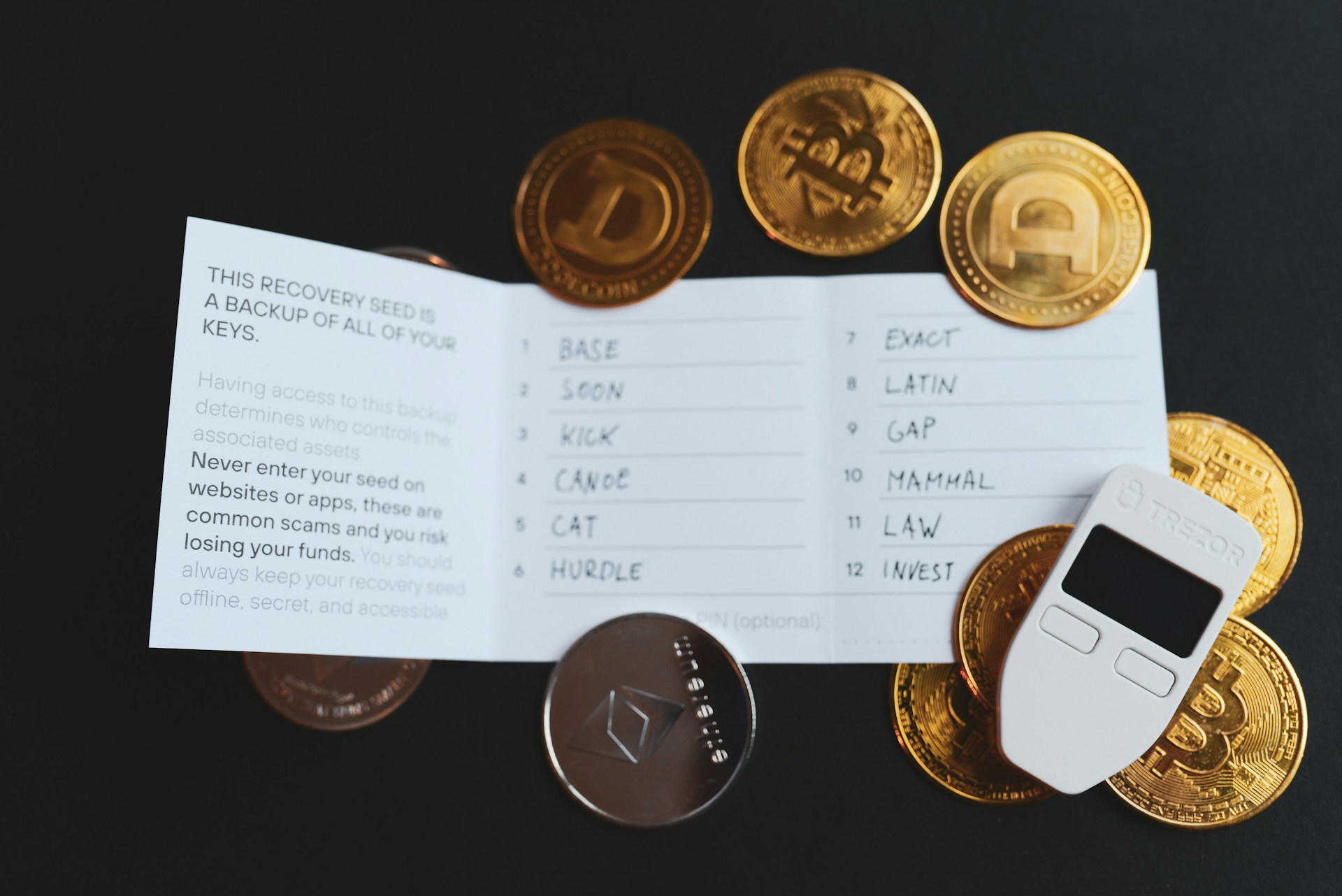

Examples of DATALORE usage.

Users can take advantage of DATALORE alongside the data governance, data integration, and machine learning services, among others, offered on cloud computing platforms like Amazon Web Services, Microsoft Azure, and Google Cloud. However, finding tables or datasets that match a search query and manually checking their validity and usefulness can be time-consuming for users of these services.

There are three ways in which DATALORE enhances the user experience:

- DATALORE's related table discovery can improve search results by sorting relevant tables (both semantically related and transformation-based) into distinct categories. Run offline, DATALORE can be used to find datasets derived from the ones users currently have. This information can then be indexed as part of a data catalog.

- Adding more details about linked tables in a database to the data catalog helps statistics-based search algorithms overcome their limitations.

- Furthermore, by displaying the potential transformations between multiple tables, DATALORE's LLM-based data transformation generation can significantly improve the explainability of the returned results, which is particularly helpful for users interested in any linked table.

- Bootstrapping ETL pipelines from the provided data transformations greatly reduces the user's burden of writing their own code. To minimize the potential for errors, the user must reproduce and check each step of the machine learning workflow.

- DATALORE's table selection refinement recovers data transformations across multiple linked tables to ensure that the user's dataset can be reproduced and to prevent errors in the ML workflow.
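To make the catalog idea in the list above more concrete, here is a minimal sketch of how discovered lineage could be indexed and then surfaced next to search results. The `LineageEntry` and `Catalog` classes, the table names, and the transformation text are all hypothetical; the source does not describe DATALORE's catalog format.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class LineageEntry:
    derived: str          # name of the derived table
    sources: List[str]    # tables it was derived from
    transformation: str   # human-readable description or code snippet


@dataclass
class Catalog:
    # Maps a source table name to the lineage entries that use it.
    entries: Dict[str, List[LineageEntry]] = field(default_factory=dict)

    def index(self, entry: LineageEntry) -> None:
        """Register a lineage entry under each of its source tables."""
        for src in entry.sources:
            self.entries.setdefault(src, []).append(entry)

    def derived_from(self, table: str) -> List[str]:
        """Explain which tables were derived from `table`, and how."""
        return [
            f"{e.derived} <- {', '.join(e.sources)}: {e.transformation}"
            for e in self.entries.get(table, [])
        ]


if __name__ == "__main__":
    catalog = Catalog()
    catalog.index(
        LineageEntry("sales_monthly", ["sales"], "group by month, sum(amount)")
    )
    print(catalog.derived_from("sales"))
```

Storing the transformation alongside the link is what lets a search result explain why two tables are related, instead of only reporting that they are.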

The team evaluates on the Auto-Pipeline Benchmark (APB) and the Semantic Data Versioning Benchmark (SDVB). Note that pipelines comprising multiple tables are handled using a join. To ensure that both datasets cover all forty types of transformation functions, the researchers modify them to add extra transformations. DATALORE is compared with Explain-DaV (EDV), a state-of-the-art method that produces data transformations to explain the changes between two supplied dataset versions. Because generating transformations has exponential worst-case time complexity in both DATALORE and EDV, the researchers set a 60-second timeout for both methods, mirroring EDV's default. Additionally, for DATALORE, they cap the maximum number of columns used in a multi-column transformation at 3.
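The two limits mentioned above, a cap on columns per transformation and a wall-clock timeout, can be illustrated with a small search loop. This is a sketch of the general technique only: `column_subsets`, `search_with_budget`, and the `is_match` callback are hypothetical names, and the actual search procedures in DATALORE and EDV are not described in this article.

```python
import itertools
import time
from typing import Callable, Iterator, Optional, Sequence, Tuple


def column_subsets(
    columns: Sequence[str], max_cols: int = 3
) -> Iterator[Tuple[str, ...]]:
    """Yield every combination of 1..max_cols input columns."""
    for k in range(1, max_cols + 1):
        yield from itertools.combinations(columns, k)


def search_with_budget(
    columns: Sequence[str],
    is_match: Callable[[Tuple[str, ...]], bool],
    budget_seconds: float = 60.0,
    max_cols: int = 3,
) -> Optional[Tuple[str, ...]]:
    """Return the first matching column subset, or None once the budget expires."""
    deadline = time.monotonic() + budget_seconds
    for subset in column_subsets(columns, max_cols):
        if time.monotonic() > deadline:
            return None  # give up instead of exploring the full space
        if is_match(subset):
            return subset
    return None


if __name__ == "__main__":
    cols = ["a", "b", "c", "d"]
    print(search_with_budget(cols, lambda s: set(s) == {"b", "d"}))
```

Without the cap, the number of subsets of n columns grows as 2^n; with a cap of 3 it grows only polynomially, and the timeout bounds whatever cost remains.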

In the SDVB benchmark, 32% of the test cases involve numerical-to-numerical transformations. Because it can handle numeric, textual, and categorical data, DATALORE generally beats EDV in every category. Because transformations involving a join are supported only by DATALORE, the researchers also see a larger performance margin on the APB dataset. When DATALORE was compared with EDV across the transformation categories, the researchers found that it excels at text-to-text and text-to-numerical transformations. The complexity of DATALORE means there is still room for improvement on numeric-to-numeric and numeric-to-categorical transformations.

Check out the Paper. All credit for this research goes to the researchers of this project.

Dhanshree Shenwai is a Computer Science Engineer with experience in FinTech companies covering the Financial, Cards & Payments, and Banking domains, and a keen interest in applications of AI. She is enthusiastic about exploring new technologies and advancements that make everyone's life easier in today's evolving world.