What Is Technical SEO?

Technical SEO is about improving your website to make it easier for search engines to find, understand, and store your content.

It also involves user experience factors, such as making your website faster and easier to use on mobile devices.

Done right, technical SEO can boost your visibility in search results.

In this post, you’ll learn the fundamentals and best practices to optimize your website for technical SEO.

Let’s dive in.

Why Is Technical SEO Important?

Technical SEO can make or break your SEO performance.

If pages on your site aren’t accessible to search engines, they won’t appear in search results, no matter how valuable your content is.

This results in a loss of traffic to your website and potential revenue to your business.

Plus, a website’s speed and mobile-friendliness are confirmed ranking factors.

If your pages load slowly, users may get annoyed and leave your site. User behaviors like this may signal that your site doesn’t create a positive user experience. As a result, search engines may not rank your site well.

To understand technical SEO better, we need to discuss two important processes: crawling and indexing.

Understanding Crawling and How to Optimize for It

Crawling is an essential component of how search engines work.

Crawling happens when search engines follow links on pages they already know about to find pages they haven’t seen before.

For example, every time we publish new blog posts, we add them to our main blog page.

So, the next time a search engine like Google crawls our blog page, it sees the recently added links to new blog posts.

And that’s one of the ways Google discovers our new blog posts.

There are a few ways to ensure your pages are accessible to search engines:

Create an SEO-Friendly Site Architecture

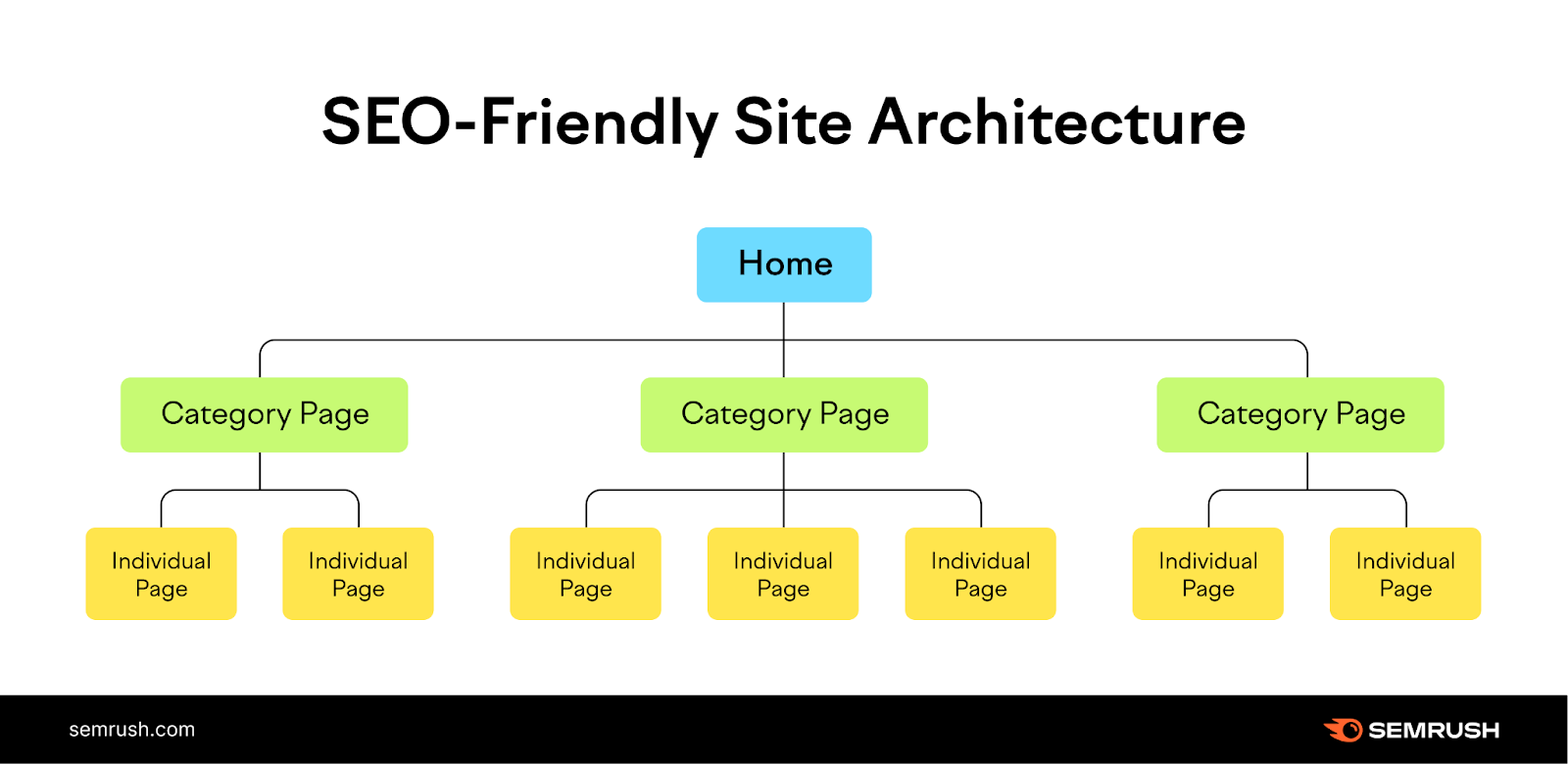

Site architecture (also called site structure) is the way pages are linked together within your site.

An effective site structure organizes pages in a way that helps crawlers find your website content quickly and easily.

So, ensure all the pages are just a few clicks away from your homepage when structuring your site.

Like this:

In the site structure above, all the pages are organized in a logical hierarchy.

The homepage links to category pages. And the category pages link to individual subpages on the site.

This structure also reduces the number of orphan pages.

Orphan pages are pages with no internal links pointing to them, making it difficult (or sometimes impossible) for crawlers and users to find them.

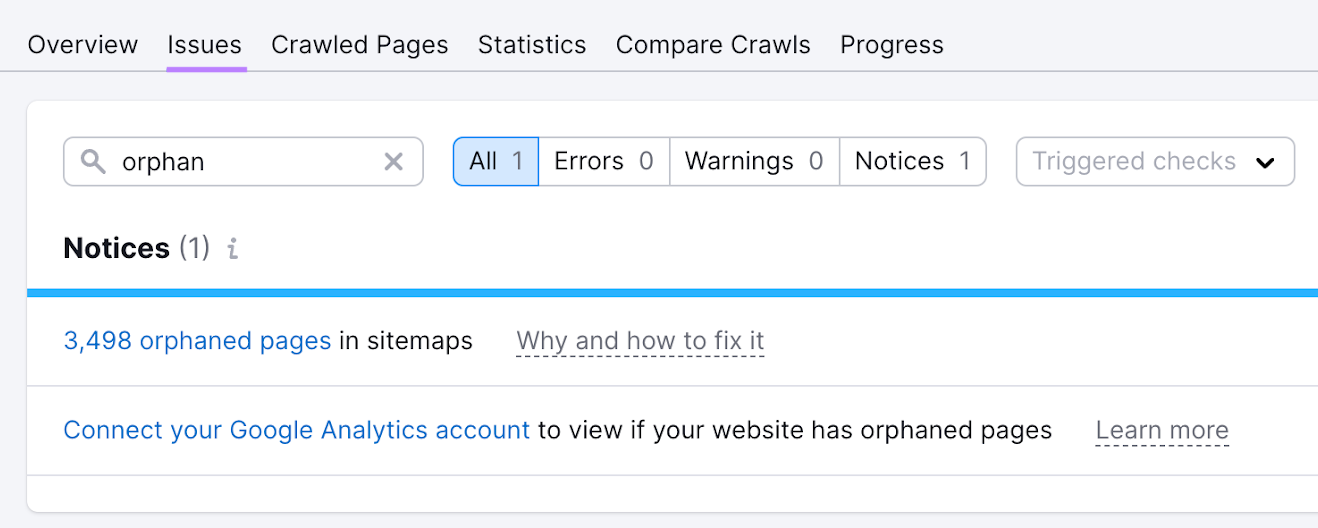

For those who’re a Semrush person, you’ll be able to simply discover whether or not your web site has any orphan pages.

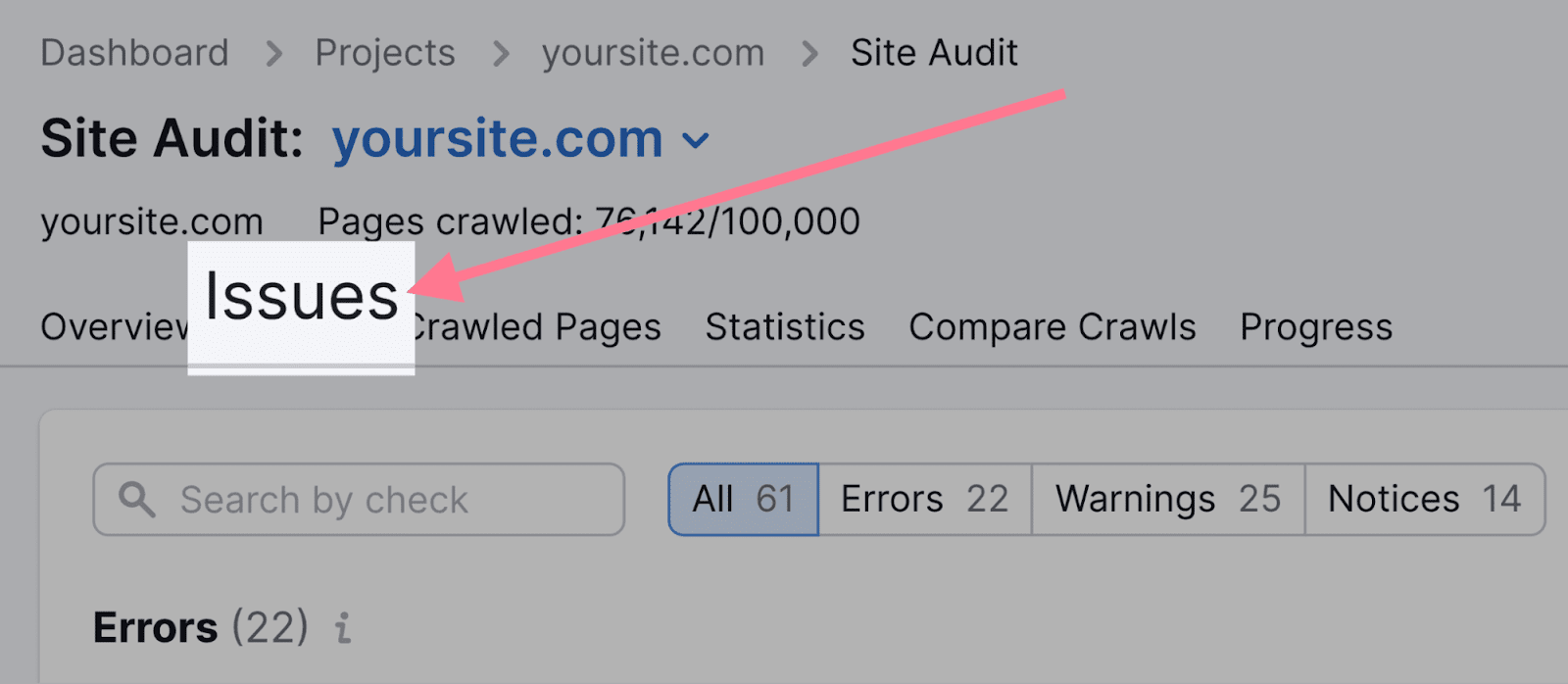

Arrange a mission within the Website Audit instrument and crawl your web site.

As soon as the crawl is full, navigate to the “Points” tab and seek for “orphan.”

The instrument reveals whether or not your web site has any orphan pages. Click on the blue hyperlink to see which of them they’re.

To repair the difficulty, add inside hyperlinks on non-orphan pages that time to the orphan pages.
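The idea behind orphan-page detection can be sketched in a few lines of Python. This is a minimal illustration, not how Site Audit works internally: the function name, the page list, and the link map are all hypothetical, and a real check would build them from a crawl and a sitemap.

```python
def find_orphan_pages(all_pages, links):
    """Return pages that no other page links to (the homepage is excluded)."""
    linked = set()
    for source, targets in links.items():
        for target in targets:
            if target != source:  # a self-link does not make a page discoverable
                linked.add(target)
    return sorted(p for p in all_pages if p not in linked and p != "/")


# Hypothetical site: every URL and link here is made up for illustration.
pages = ["/", "/blog/", "/blog/post-1/", "/blog/post-2/", "/old-landing-page/"]
internal_links = {
    "/": ["/blog/"],
    "/blog/": ["/blog/post-1/", "/blog/post-2/"],
    "/blog/post-1/": ["/blog/"],
}

print(find_orphan_pages(pages, internal_links))  # ['/old-landing-page/']
```

Any page the function returns needs at least one internal link from a page that crawlers can already reach.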

Submit Your Sitemap to Google

Using an XML sitemap can help Google find your webpages.

An XML sitemap is a file containing a list of important pages on your site. It lets search engines know which pages you have and where to find them.

This is especially important if your site contains a lot of pages. Or if they’re not linked together well.
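To make the file format concrete, here is a small Python sketch that builds a bare-bones sitemap and reads the URLs back out of it. The function names and the yoursite.com URLs are placeholders. Most CMS platforms and SEO plugins generate the sitemap for you, so treat this as an illustration of the structure rather than a recommended workflow.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls):
    """Return a minimal XML sitemap (as a string) listing the given URLs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        url_el = ET.SubElement(urlset, "url")
        ET.SubElement(url_el, "loc").text = url
    return ET.tostring(urlset, encoding="unicode")


def list_sitemap_urls(xml_text):
    """Return every <loc> value found in a sitemap string."""
    root = ET.fromstring(xml_text)
    return [loc.text for loc in root.iter("{%s}loc" % SITEMAP_NS)]


# Hypothetical pages used only for illustration.
sitemap_xml = build_sitemap(["https://yoursite.com/", "https://yoursite.com/blog/"])
print(list_sitemap_urls(sitemap_xml))
```

A production sitemap would normally also start with an XML declaration and may include optional tags such as `<lastmod>`.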

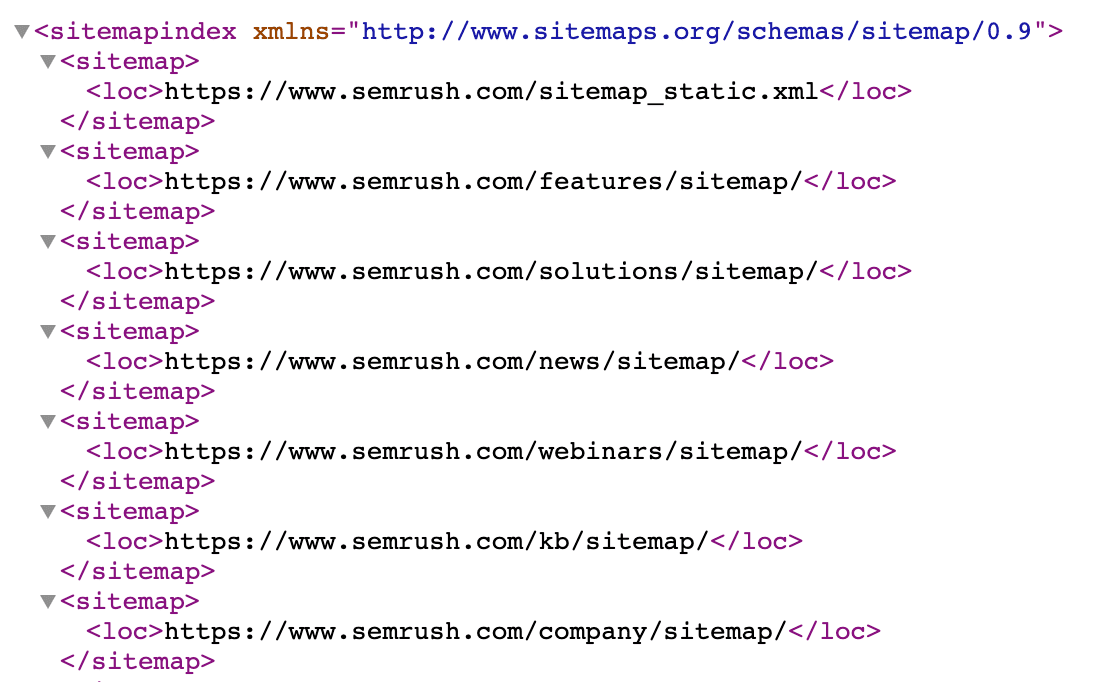

Here’s what Semrush’s XML sitemap looks like:

Your sitemap is usually located at one of these two URLs:

- yoursite.com/sitemap.xml

- yoursite.com/sitemap_index.xml

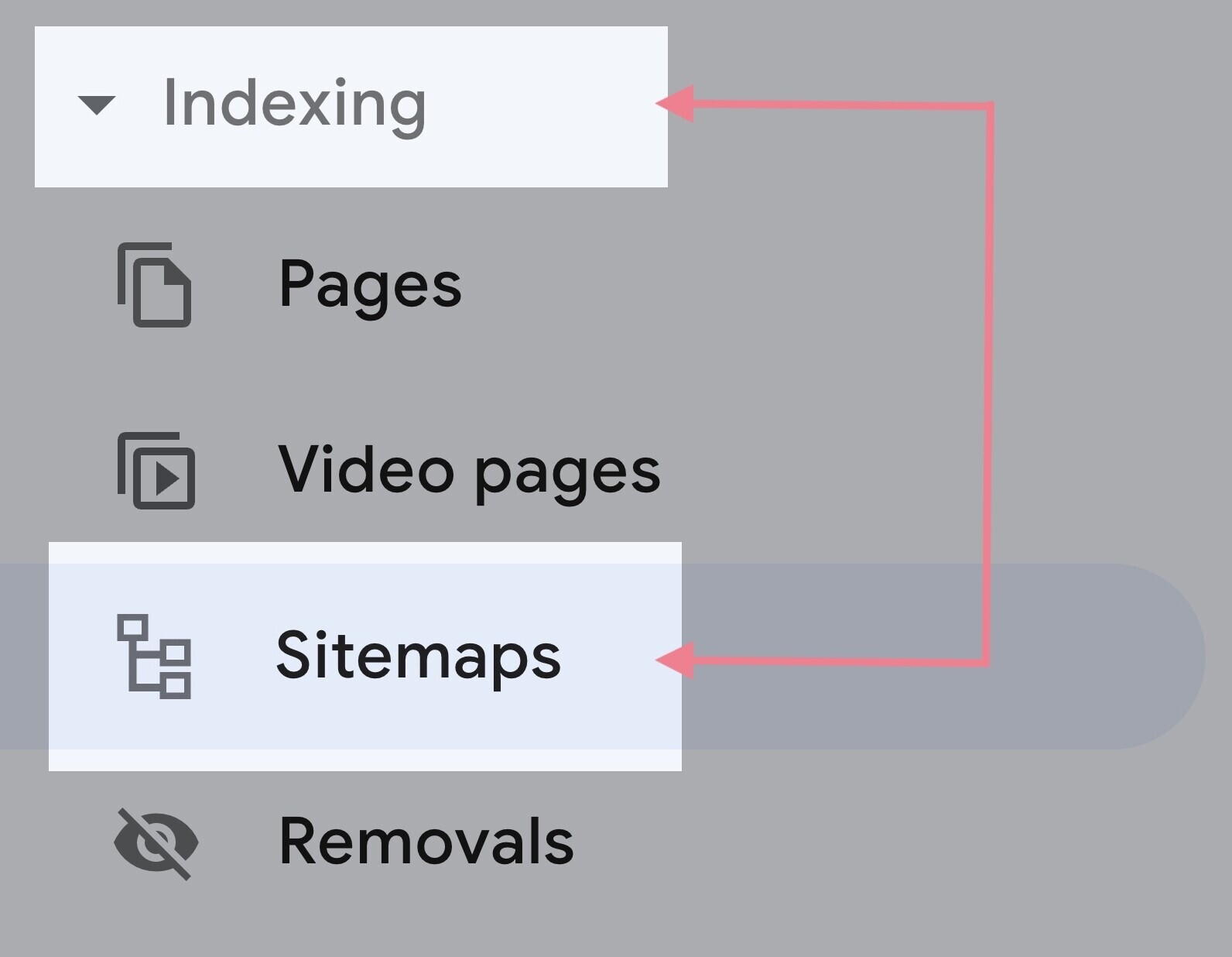

Once you locate your sitemap, submit it to Google via Google Search Console (GSC).

Go to GSC and click “Indexing” > “Sitemaps” from the sidebar.

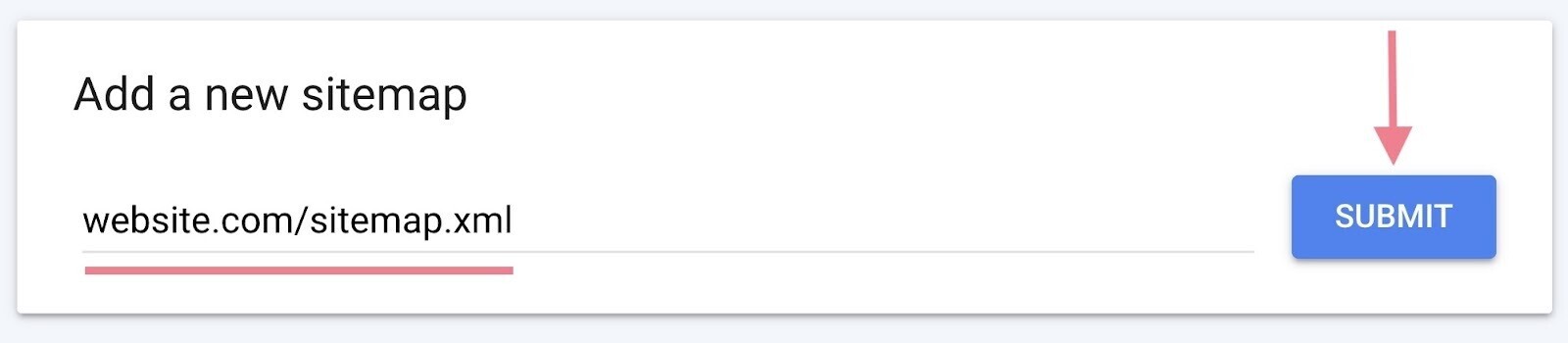

Then, paste your sitemap URL in the blank field and click “Submit.”

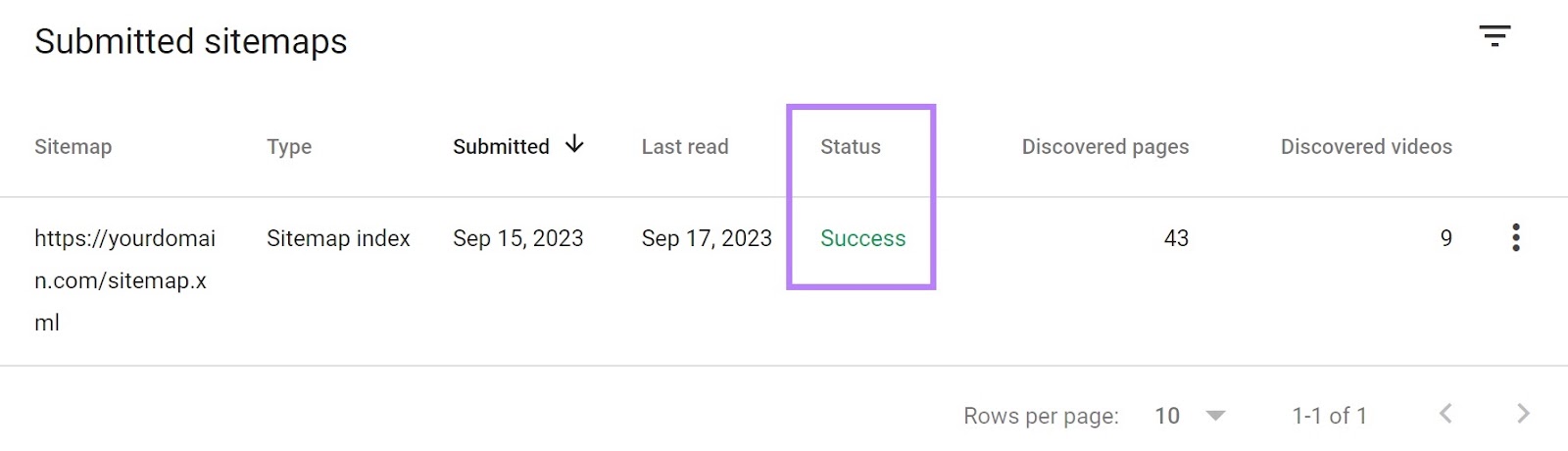

After Google is done processing your sitemap, you should see a confirmation message like this:

Understanding Indexing and How to Optimize for It

Once search engines crawl your pages, they then try to analyze and understand the content on those pages.

And then the search engine stores those pieces of content in its search index, a huge database containing billions of webpages.

Your webpages must be indexed by search engines to appear in search results.

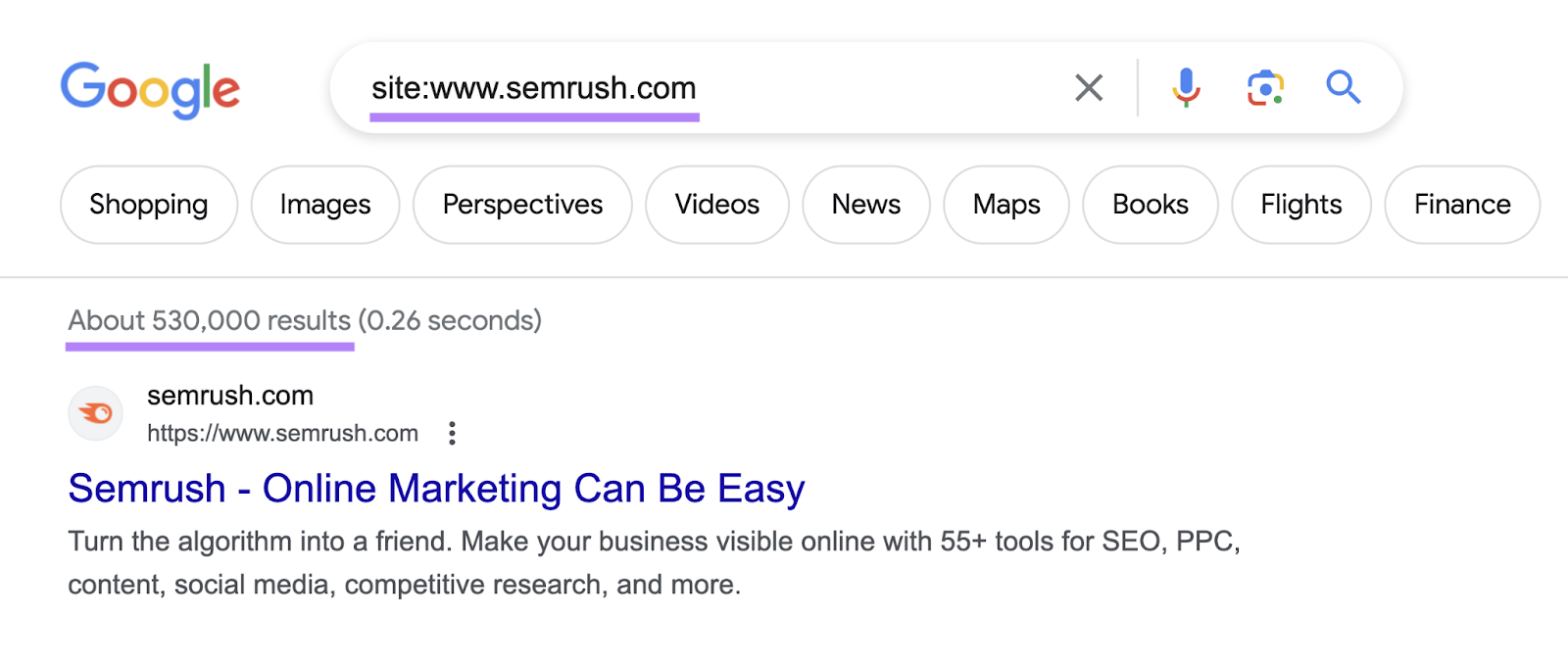

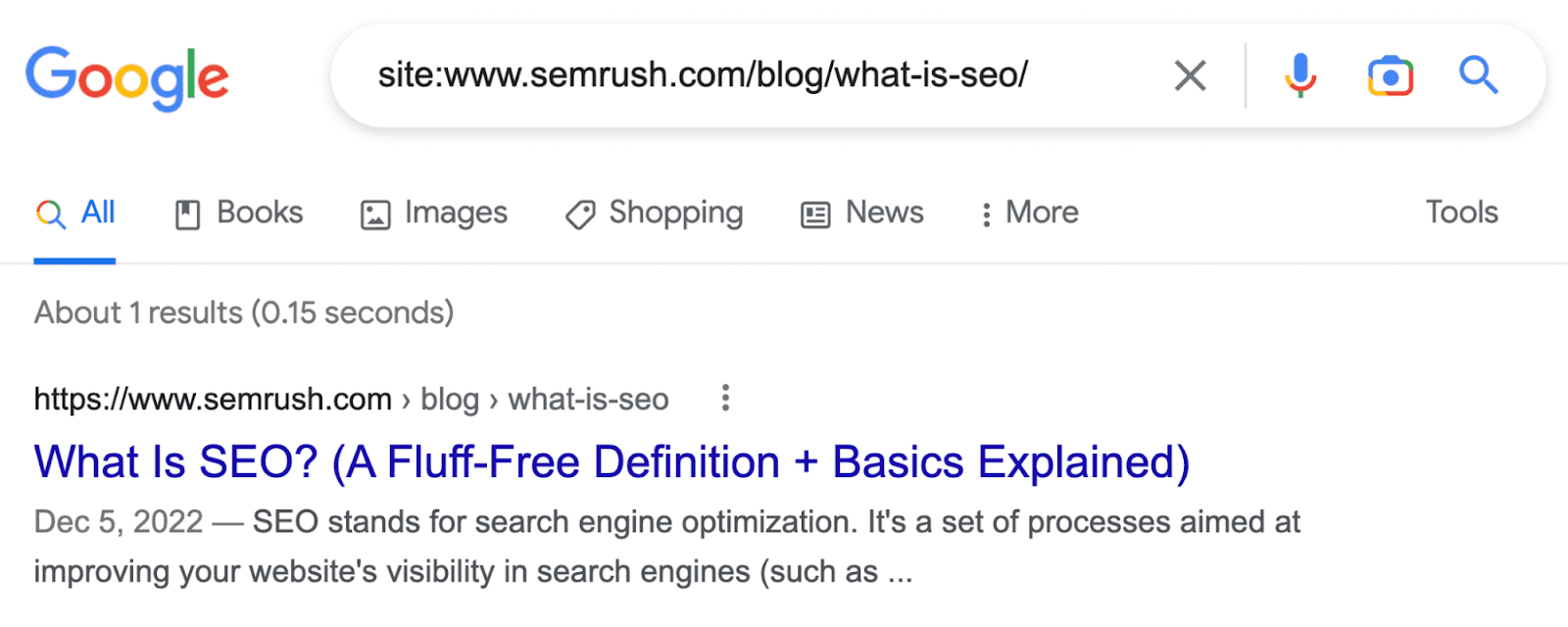

The simplest way to check whether your pages are indexed is to perform a “site:” operator search.

For example, if you want to check the index status of semrush.com, you’ll type “site:www.semrush.com” into Google’s search box.

This tells you (roughly) how many pages from the site Google has indexed.

You can also check whether individual pages are indexed by searching the page URL with the “site:” operator.

Like this:

There are a few things you should do to ensure Google doesn’t have trouble indexing your webpages:

Use the Noindex Tag Carefully

The “noindex” tag is an HTML snippet that keeps your pages out of Google’s index.

It’s placed within the <head> section of your webpage and looks like this:

<meta name="robots" content="noindex">

Ideally, you’d want all your important pages to get indexed. So use the noindex tag only when you want to exclude certain pages from indexing.

These could be:

- Thank you pages

- PPC landing pages

To learn more about using noindex tags and how to avoid common implementation mistakes, read our guide to robots meta tags.
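A stray noindex tag on an important page is easy to miss, so a quick automated check can help. The sketch below uses Python’s standard `html.parser` module to look for a robots meta tag containing “noindex” in a page’s HTML. The class and function names are hypothetical. It only inspects the HTML you pass in and does not cover noindex rules sent through the `X-Robots-Tag` HTTP header.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collects the directives found in <meta name="robots"> tags."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {key: (value or "") for key, value in attrs}
        if attr.get("name", "").lower() == "robots":
            for directive in attr.get("content", "").split(","):
                if directive.strip():
                    self.directives.add(directive.strip().lower())


def is_noindexed(html):
    """Return True if the HTML contains a robots meta tag with 'noindex'."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return "noindex" in parser.directives


# Hypothetical thank-you page markup.
page = '<html><head><meta name="robots" content="noindex, follow"></head></html>'
print(is_noindexed(page))  # True
```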

Implement Canonical Tags Where Needed

When Google finds similar content on multiple pages on your site, it sometimes doesn’t know which of the pages to index and show in search results.

That’s when “canonical” tags come in handy.

The canonical tag (rel="canonical") identifies a link as the original version, which tells Google which page it should index and rank.

The tag is nested within the <head> of a duplicate page (but it’s a good idea to use it on the main page as well) and looks like this:

<link rel="canonical" href="https://example.com/original-page/" />
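To confirm which URL a page declares as canonical, you can read the tag out of the page’s HTML. The following is a minimal Python sketch with hypothetical class and function names. It returns the first canonical link it finds and does not check whether that URL is correct.

```python
from html.parser import HTMLParser


class CanonicalParser(HTMLParser):
    """Remembers the href of the first <link rel="canonical"> tag."""

    def __init__(self):
        super().__init__()
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        if tag != "link" or self.canonical is not None:
            return
        attr = dict(attrs)
        rel_values = (attr.get("rel") or "").lower().split()
        if "canonical" in rel_values:
            self.canonical = attr.get("href")


def get_canonical(html):
    """Return the canonical URL declared in the HTML, or None if there is none."""
    parser = CanonicalParser()
    parser.feed(html)
    return parser.canonical


# Hypothetical duplicate page pointing at the original version.
duplicate = '<head><link rel="canonical" href="https://example.com/original-page/" /></head>'
print(get_canonical(duplicate))  # https://example.com/original-page/
```

Running this over a group of near-identical pages should return the same URL for every one of them.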

Additional Technical SEO Best Practices

Creating an SEO-friendly site structure, submitting your sitemap to Google, and using noindex and canonical tags appropriately should get your pages crawled and indexed.

But if you want your website to be fully optimized for technical SEO, consider these additional best practices.

1. Use HTTPS

Hypertext transfer protocol secure (HTTPS) is a secure version of hypertext transfer protocol (HTTP).

It helps protect sensitive user information like passwords and credit card details from being compromised.

And it’s been a ranking signal since 2014.

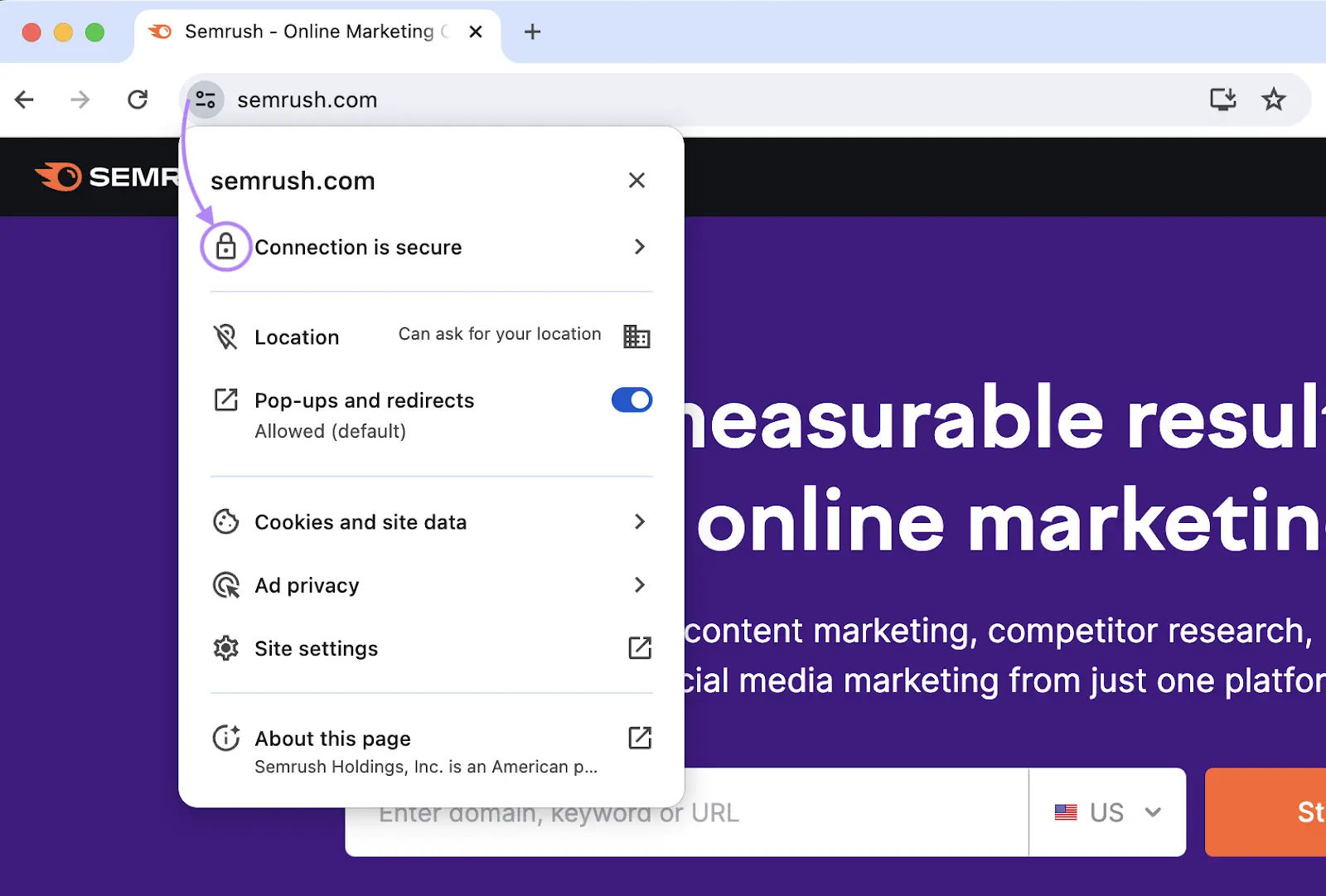

You can check whether your site uses HTTPS by simply visiting it.

Just look for the “lock” icon to confirm.

If you see the “Not secure” warning, you’re not using HTTPS.

In this case, you need to install a secure sockets layer (SSL) or transport layer security (TLS) certificate.

An SSL/TLS certificate authenticates the identity of the website and establishes a secure connection when users are accessing it.

You can get an SSL/TLS certificate for free from Let’s Encrypt.

2. Find & Fix Duplicate Content Issues

Duplicate content is when you have the same or nearly the same content on multiple pages on your site.

For example, Buffer had these two different URLs for pages that are nearly identical:

- https://buffer.com/assets/social-media-manager-checklist/

- https://buffer.com/library/social-media-manager-checklist/

Google doesn’t penalize sites for having duplicate content.

But duplicate content can cause issues like:

- Undesirable URLs ranking in search results

- Backlink dilution

- Wasted crawl budget

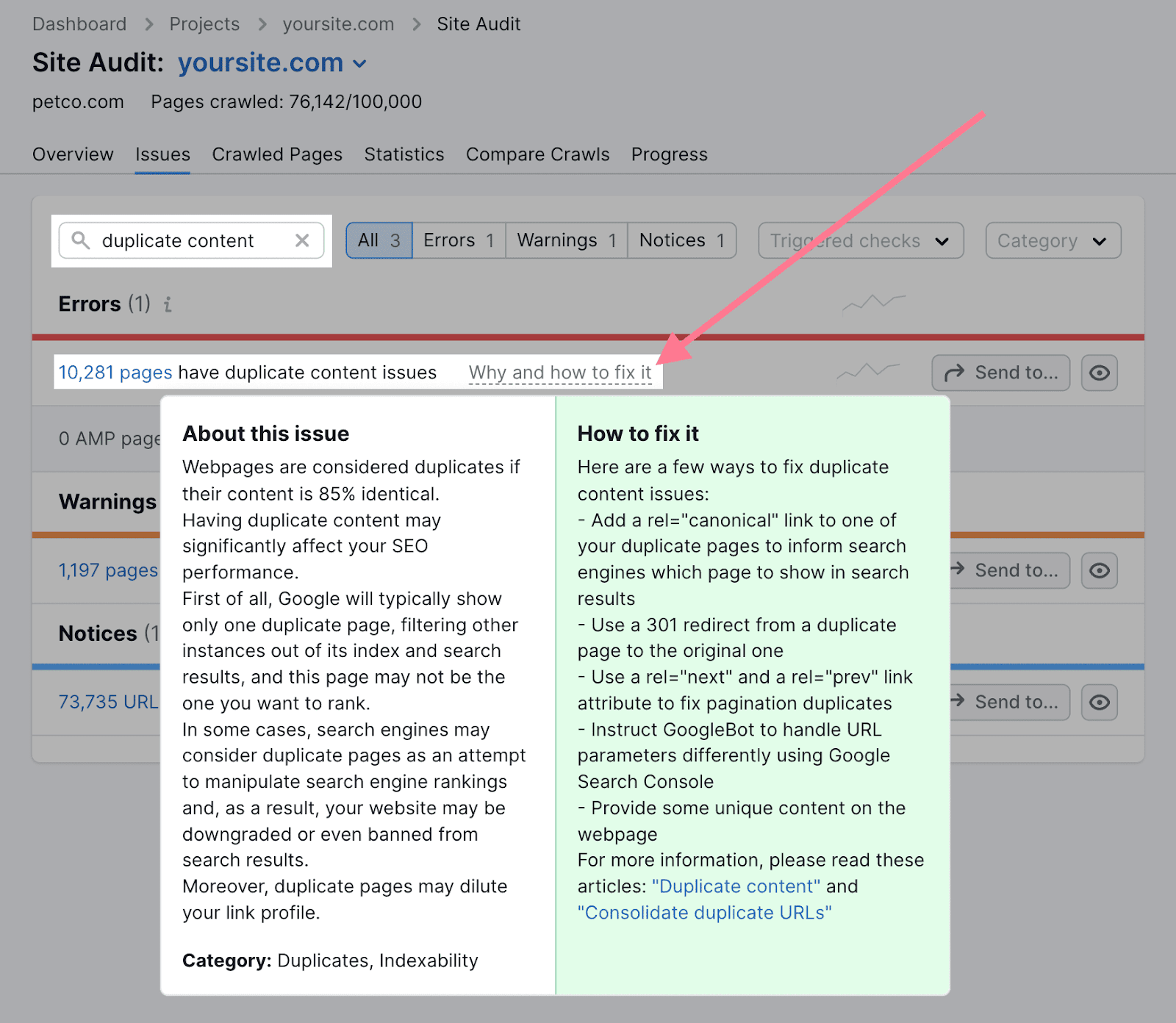

With Semrush’s Site Audit tool, you can find out whether your site has duplicate content issues.

Start by running a full crawl of your site and then going to the “Issues” tab.

Then, search for “duplicate content.”

The tool will show the error if you have duplicate content. And offer advice on how to address it when you click “Why and how to fix it.”

3. Make Sure Only One Version of Your Website Is Accessible to Users and Crawlers

Users and crawlers should only be able to access one of these two versions of your site:

- https://yourwebsite.com

- https://www.yourwebsite.com

Having both versions accessible creates duplicate content issues.

And reduces the effectiveness of your backlink profile. Because some websites may link to the www version, while others link to the non-www version.

This can negatively affect your performance in Google.

So, only use one version of your website. And redirect the other version to your main website.
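The redirect rule itself is usually configured on your web server, CDN, or hosting platform. The logic behind it can be sketched in Python as shown here. The example assumes the www version over HTTPS is the preferred one, which is an arbitrary choice for illustration, and the function name and domain are placeholders.

```python
from urllib.parse import urlsplit, urlunsplit

# Assumption for this sketch: the www version is the one you want to keep.
PREFERRED_HOST = "www.yourwebsite.com"


def redirect_target(url, preferred_host=PREFERRED_HOST):
    """Return the URL a request should be 301-redirected to.

    Returns None when the URL is already on the preferred version
    or belongs to a different site.
    """
    parts = urlsplit(url)
    bare = preferred_host[4:] if preferred_host.startswith("www.") else preferred_host
    known_hosts = {bare, "www." + bare}
    host = (parts.hostname or "").lower()
    if host not in known_hosts:
        return None  # not one of our hostnames, nothing to do
    if parts.scheme == "https" and host == preferred_host:
        return None  # already the preferred version
    return urlunsplit(("https", preferred_host, parts.path or "/", parts.query, parts.fragment))


print(redirect_target("http://yourwebsite.com/blog/?page=2"))
# https://www.yourwebsite.com/blog/?page=2
```

Keeping the path and query string intact matters: redirecting every URL to the homepage would throw away the value of links that point to deeper pages.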

4. Improve Your Page Speed

Page speed is a ranking factor both on mobile and desktop devices.

So, make sure your site loads as fast as possible.

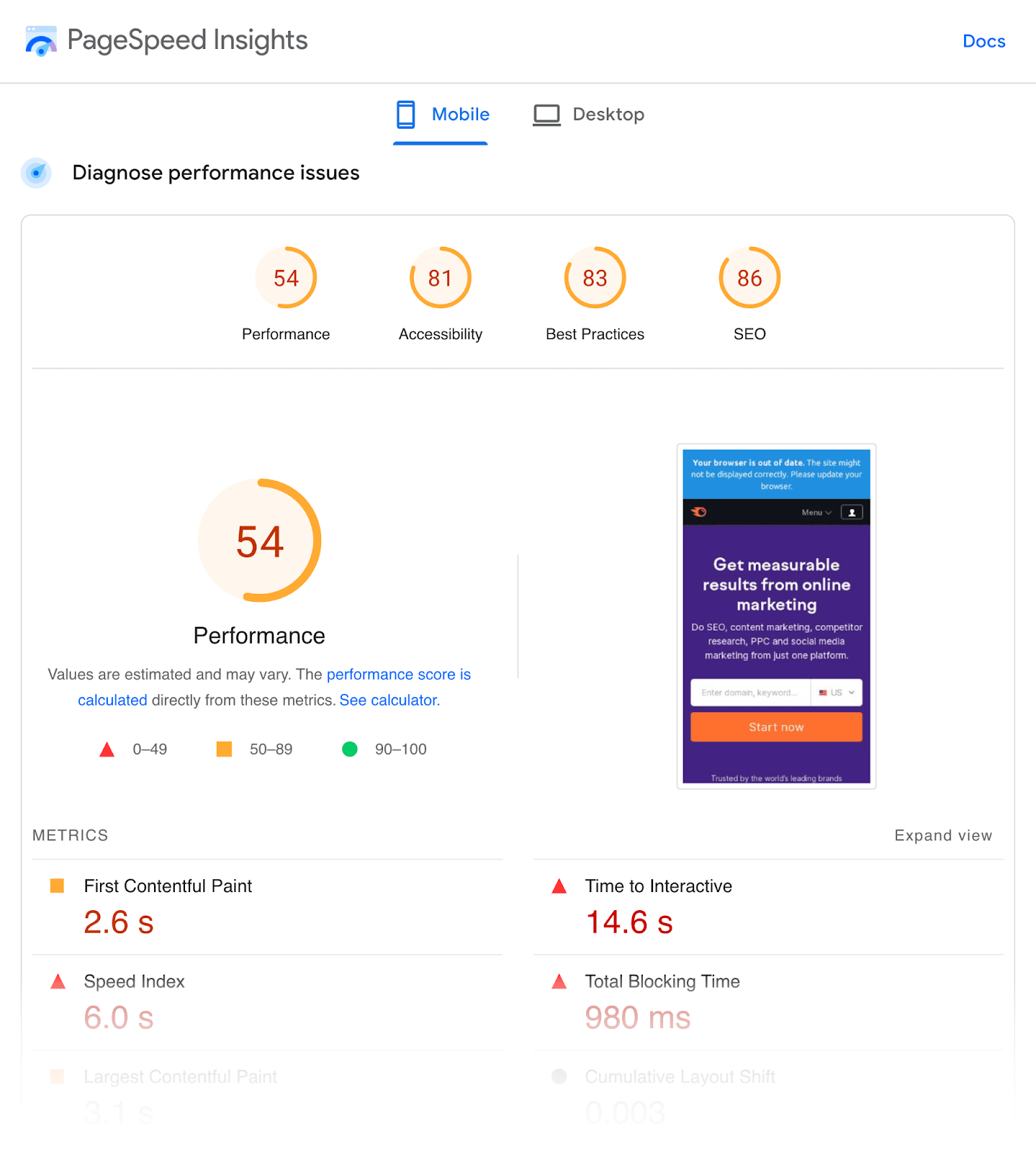

You can use Google’s PageSpeed Insights tool to check your website’s current speed.

It gives you a performance score from 0 to 100. The higher the number, the better.

Here are a few ideas for improving your website speed:

- Compress your images: Images are usually the biggest files on a webpage. Compressing them with image optimization tools like ShortPixel will reduce their file sizes so they take as little time to load as possible.

- Use a content distribution network (CDN): A CDN stores copies of your webpages on servers around the globe. It then connects visitors to the nearest server, so there’s less distance for the requested files to travel.

- Minify HTML, CSS, and JavaScript files: Minification removes unnecessary characters and whitespace from code to reduce file sizes, which improves page load time.
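To show what minification actually does, here is a deliberately naive CSS minifier in Python. It is a toy sketch with a hypothetical function name: it does not understand strings or every edge case in CSS syntax, so use an established minifier or your build tool for real sites.

```python
import re


def minify_css(css):
    """Strip comments and unnecessary whitespace from a CSS string (naive)."""
    css = re.sub(r"/\*.*?\*/", "", css, flags=re.DOTALL)  # remove comments
    css = re.sub(r"\s+", " ", css)                        # collapse whitespace runs
    css = re.sub(r"\s*([{};,])\s*", r"\1", css)           # trim around punctuation
    css = re.sub(r":\s+", ":", css)                       # trim after colons
    return css.replace(";}", "}").strip()                 # drop last semicolon in a block


# Hypothetical stylesheet fragment.
original = """
/* Header styles */
h1 {
    color: red;
    margin: 0 auto;
}
"""

minified = minify_css(original)
print(minified)                        # h1{color:red;margin:0 auto}
print(len(original), "->", len(minified), "characters")
```

The browser treats both versions the same way, but the minified one is a fraction of the size.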

5. Ensure Your Website Is Mobile-Friendly

Google uses mobile-first indexing. This means that it looks at mobile versions of webpages to index and rank content.

So, make sure your website is compatible with mobile devices.

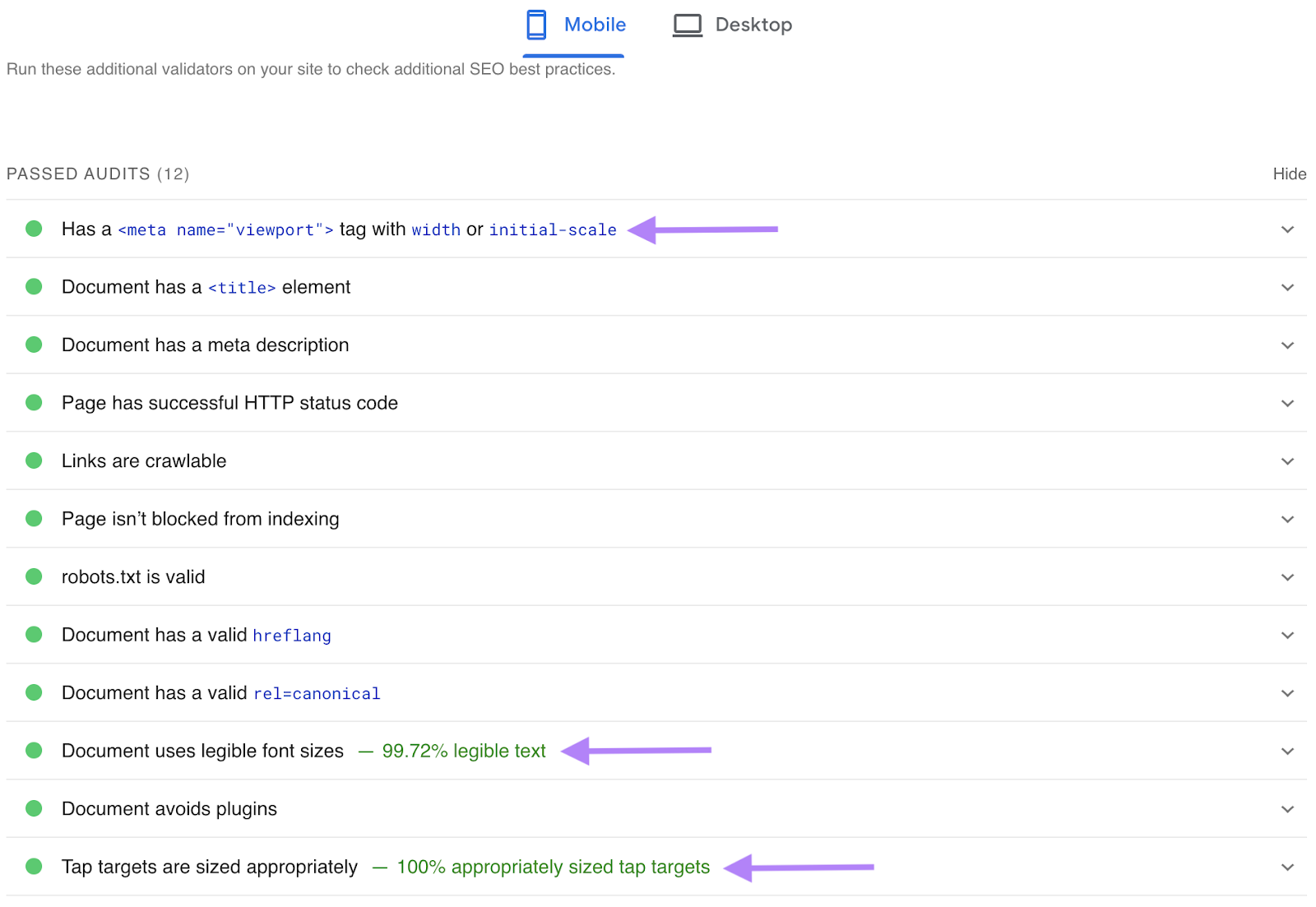

To see if that’s the case for your site, use the same PageSpeed Insights tool.

Once you run a webpage through it, navigate to the “SEO” section of the report. And then the “Passed Audits” section.

Here, you’ll see whether mobile-friendly elements or features are present on your site:

- Meta viewport tags: code that tells browsers how to control sizing on a page’s visible area

- Legible font sizes

- Adequate spacing around buttons and clickable elements

If you take care of these things, your website is optimized for mobile devices.

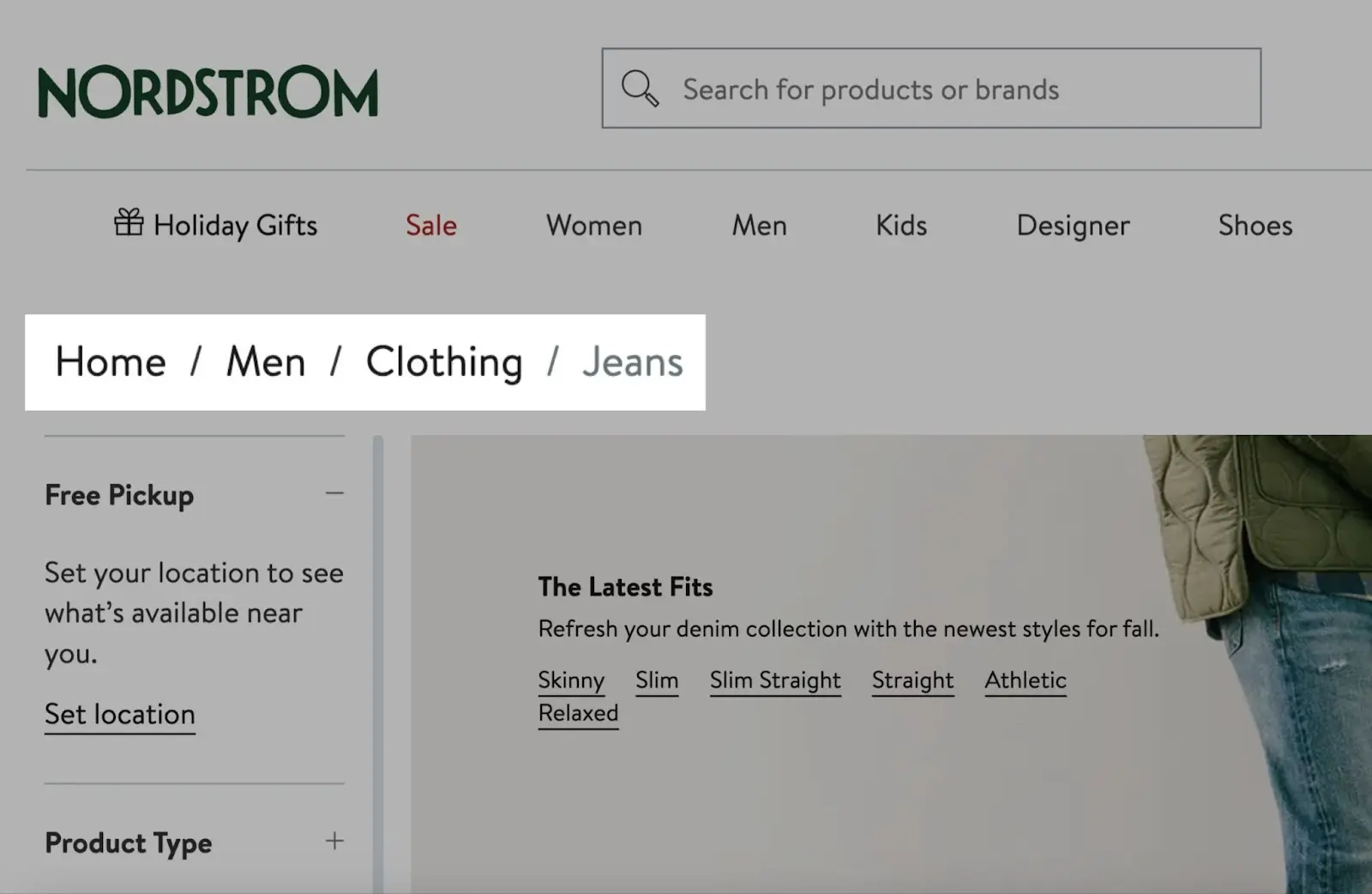

6. Use Breadcrumb Navigation

Breadcrumb navigation (or “breadcrumbs”) is a trail of text links that show users where they are on the website and how they reached that point.

Here’s an example:

These links make site navigation easier.

How?

Users can easily navigate to higher-level pages without the need to repeatedly use the back button or go through complex menu structures.

So, you should definitely implement breadcrumbs. Especially if your site is very large. Like an ecommerce site.

They also benefit SEO.

These additional links distribute link equity (PageRank) throughout your site. Which helps your site rank higher.

If your website is on WordPress or Shopify, implementing breadcrumb navigation is particularly easy.

Some themes may include breadcrumbs out of the box. If your theme doesn’t, you can use the Yoast SEO plugin and it’ll set up everything for you.
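If you build your own templates instead, a breadcrumb trail can often be derived from the URL path. The Python sketch below assumes your URL structure mirrors your site hierarchy and that slugs can be turned into readable labels, which will not hold for every site. The function name and the example path are hypothetical.

```python
def breadcrumb_trail(path, home_label="Home"):
    """Turn a URL path into a list of (label, url) breadcrumb pairs."""
    crumbs = [(home_label, "/")]
    url = "/"
    for segment in path.strip("/").split("/"):
        if not segment:
            continue  # handles the homepage path "/"
        url += segment + "/"
        label = segment.replace("-", " ").title()
        crumbs.append((label, url))
    return crumbs


# Hypothetical ecommerce category page.
for label, url in breadcrumb_trail("/shoes/running/trail-runners/"):
    print(label, "->", url)
```

Each pair can then be rendered as a text link, with the last item usually shown as plain text because it is the current page.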

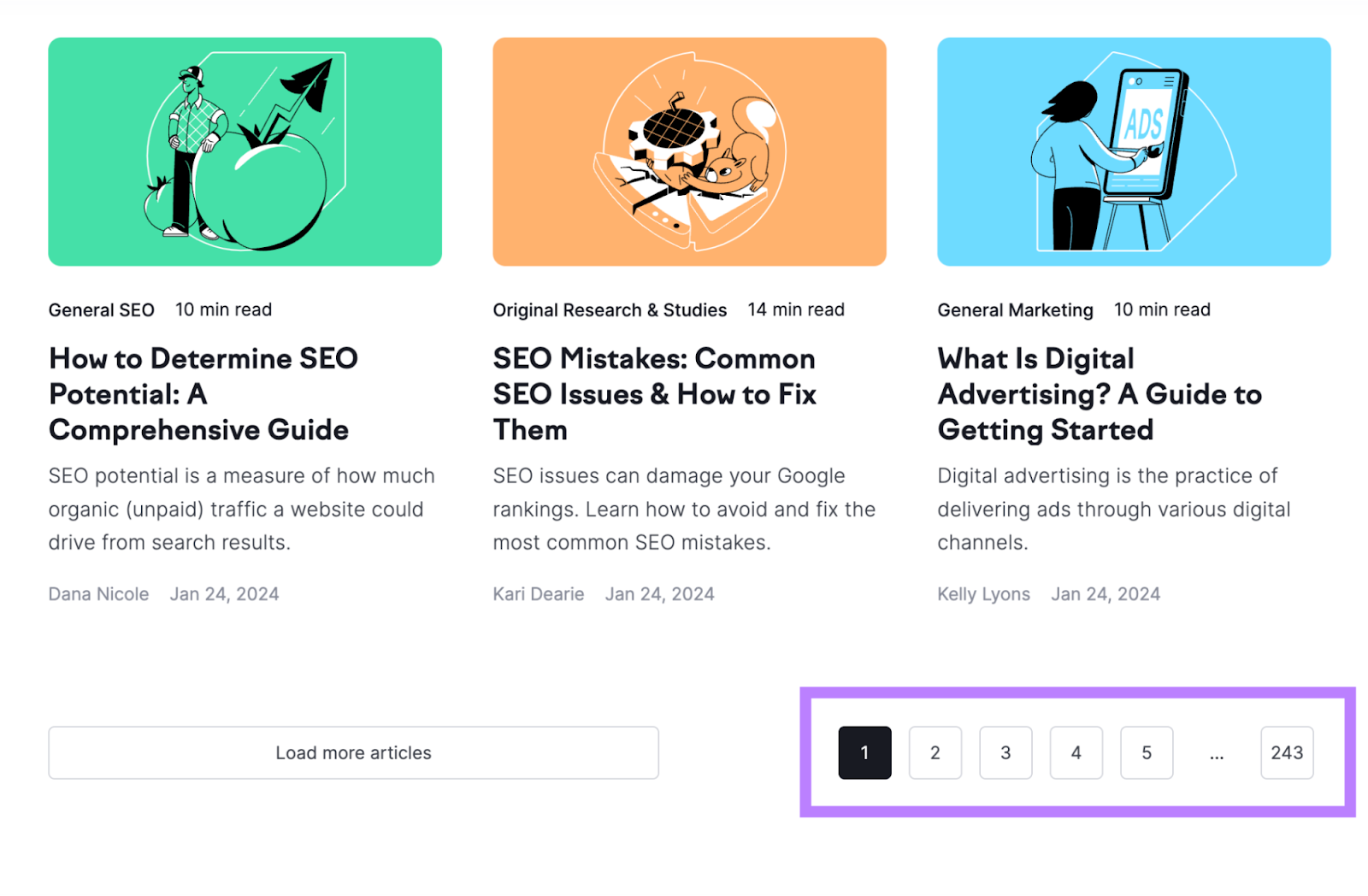

7. Use Pagination

Pagination is a navigation technique that’s used to divide a long list of content into multiple pages.

For example, we’ve used pagination on our blog.

This approach is favored over infinite scrolling.

In infinite scrolling, content loads dynamically as users scroll down the page.

This creates a problem for Google. Because it may not be able to access all the content that loads dynamically.

And if Google can’t access your content, it won’t appear in search results.

Implemented correctly, pagination will reference links to the next series of pages. Which Google can follow to discover your content.
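The key point is that every page in the series is reachable through a plain `<a href>` link. A minimal Python sketch of generating those links follows. The `/page/N/` URL pattern, the function names, and the yoursite.com address are assumptions for illustration, so adapt them to however your site numbers its pages.

```python
def page_url(base_url, page):
    """Return the URL for a page number; page 1 lives at the base URL."""
    return base_url if page == 1 else f"{base_url}page/{page}/"


def pagination_links(base_url, current, total):
    """Build crawlable pagination links as HTML strings."""
    links = []
    if current > 1:
        links.append(f'<a href="{page_url(base_url, current - 1)}">Previous</a>')
    for page in range(1, total + 1):
        if page == current:
            links.append(f'<span aria-current="page">{page}</span>')
        else:
            links.append(f'<a href="{page_url(base_url, page)}">{page}</a>')
    if current < total:
        links.append(f'<a href="{page_url(base_url, current + 1)}">Next</a>')
    return links


# Hypothetical blog with three pages of posts, viewed on page 2.
for link in pagination_links("https://yoursite.com/blog/", current=2, total=3):
    print(link)
```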

Learn more: Pagination: What Is It & How to Implement It Properly

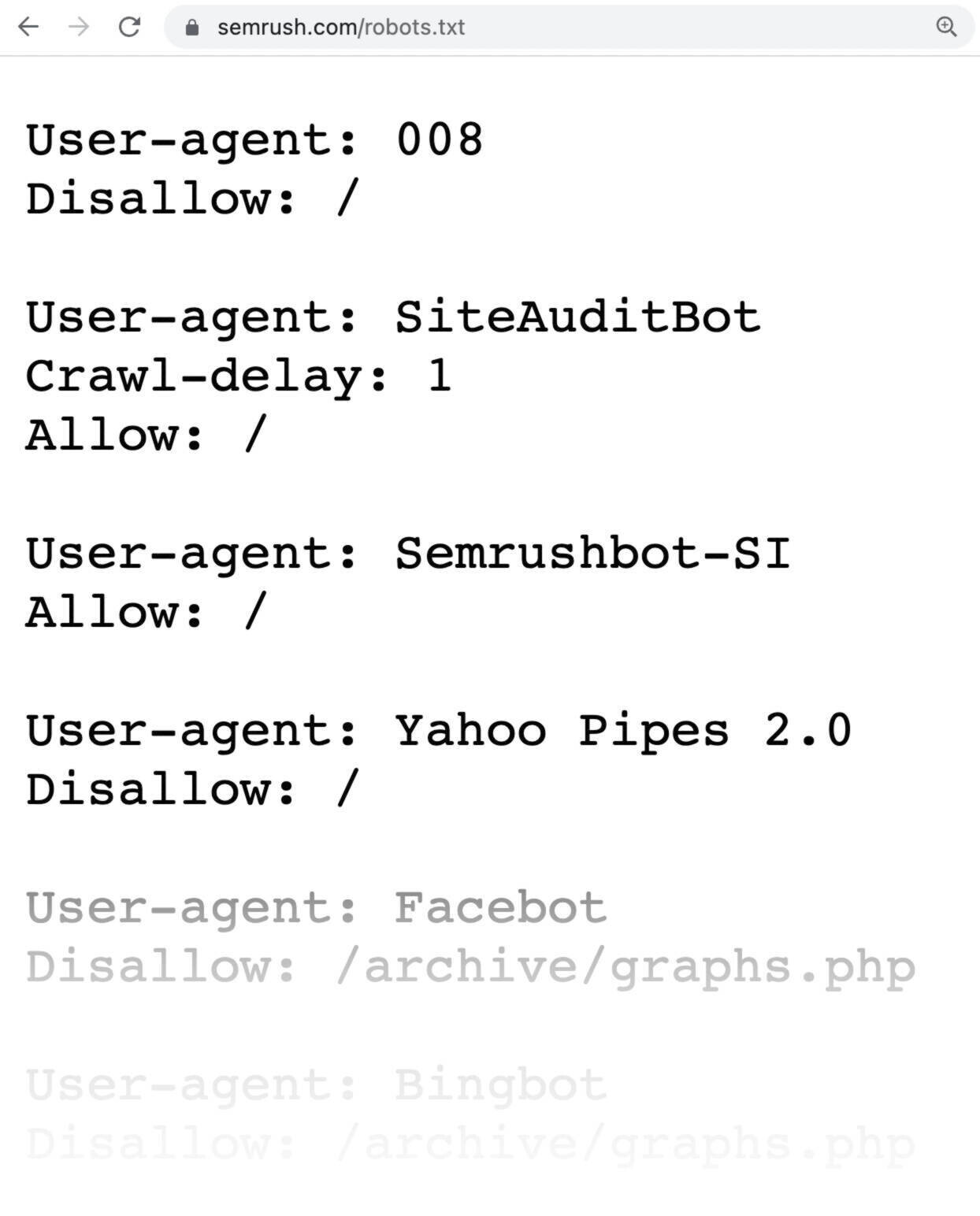

8. Review Your Robots.txt File

A robots.txt file tells Google which parts of the site it should access and which ones it shouldn’t.

Here’s what Semrush’s robots.txt file looks like:

Your robots.txt file is available at your homepage URL with “/robots.txt” at the end.

Here’s an example: yoursite.com/robots.txt

Check it to ensure you’re not accidentally blocking access to important pages that Google should crawl via the disallow directive.

For example, you wouldn’t want to block your blog posts and regular website pages. Because then they’ll be hidden from Google.
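You can test a set of important URLs against your rules with Python’s built-in `urllib.robotparser` module. In the sketch below, the robots.txt content, the URLs, and the `blocked_urls` helper are hypothetical. Python’s parser also does not resolve conflicting allow and disallow rules in exactly the same way Google does, so treat the result as a first check rather than a final verdict.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt that accidentally blocks the whole blog.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /blog/
"""


def blocked_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs that the given robots.txt rules disallow."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


important_pages = [
    "https://yoursite.com/blog/post-1/",
    "https://yoursite.com/pricing/",
]
print(blocked_urls(ROBOTS_TXT, important_pages))
# ['https://yoursite.com/blog/post-1/']
```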

Further reading: Robots.txt: What It Is & How It Matters for SEO

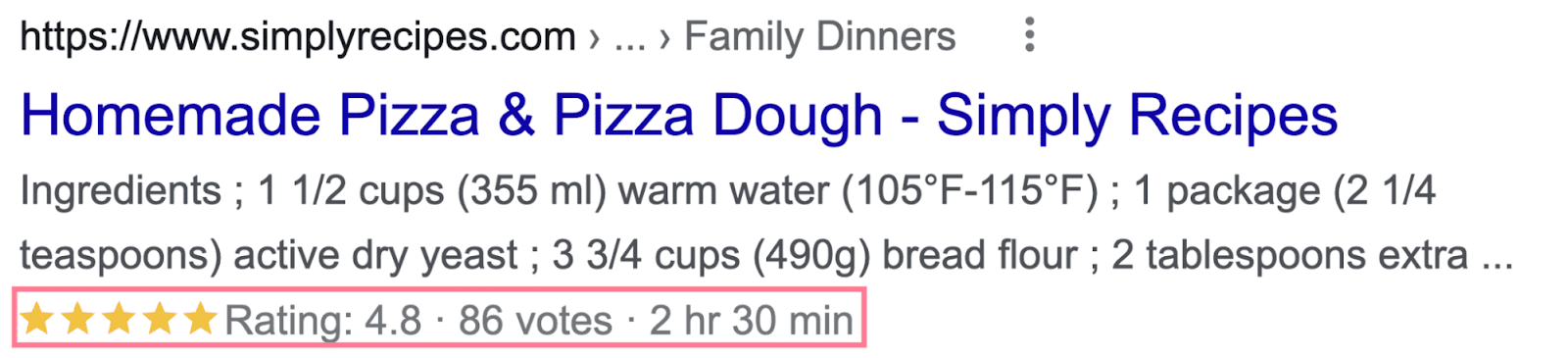

9. Implement Structured Data

Structured data (also called schema markup) is code that helps Google better understand a page’s content.

And by adding the right structured data, your pages can win rich snippets.

Rich snippets are more appealing search results with additional information appearing under the title and description.

Here’s an example:

The benefit of rich snippets is that they make your pages stand out from others. Which can improve your click-through rate (CTR).

Google supports dozens of structured data markups, so choose one that best fits the nature of the pages you want to add structured data to.

For example, if you run an ecommerce store, adding product structured data to your product pages makes sense.

Here’s what the sample code might look like for a page selling the iPhone 15 Pro:

<script type="application/ld+json">
{
  "@context": "https://schema.org/",
  "@type": "Product",
  "name": "iPhone 15 Pro",
  "image": "iphone15.jpg",
  "brand": {
    "@type": "Brand",
    "name": "Apple"
  },
  "offers": {
    "@type": "Offer",
    "url": "",
    "priceCurrency": "USD",
    "price": "1099",
    "availability": "https://schema.org/InStock",
    "itemCondition": "https://schema.org/NewCondition"
  },
  "aggregateRating": {
    "@type": "AggregateRating",
    "ratingValue": "4.8"
  }
}
</script>

There are plenty of free structured data generator tools, so you don’t have to write the code by hand.

And if you’re using WordPress, you can use the Yoast SEO plugin to implement structured data.
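If your product pages are rendered from a database, generating the markup in code helps keep it in sync with the page. Here is a minimal Python sketch that builds a Product snippet with the standard `json` module. The function name and its parameters are hypothetical, it covers only a handful of properties, and the output should still be run through a structured data validator.

```python
import json


def product_json_ld(name, image, brand, price, currency="USD"):
    """Return a <script> tag containing Product structured data as JSON-LD."""
    data = {
        "@context": "https://schema.org/",
        "@type": "Product",
        "name": name,
        "image": image,
        "brand": {"@type": "Brand", "name": brand},
        "offers": {
            "@type": "Offer",
            "priceCurrency": currency,
            "price": str(price),
            "availability": "https://schema.org/InStock",
        },
    }
    body = json.dumps(data, indent=2)
    # Escape "</" so a value can never close the script tag early.
    body = body.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'


snippet = product_json_ld("iPhone 15 Pro", "iphone15.jpg", "Apple", 1099)
print(snippet)
```

Using `json.dumps` instead of string concatenation means quotes and special characters in product names are escaped correctly.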

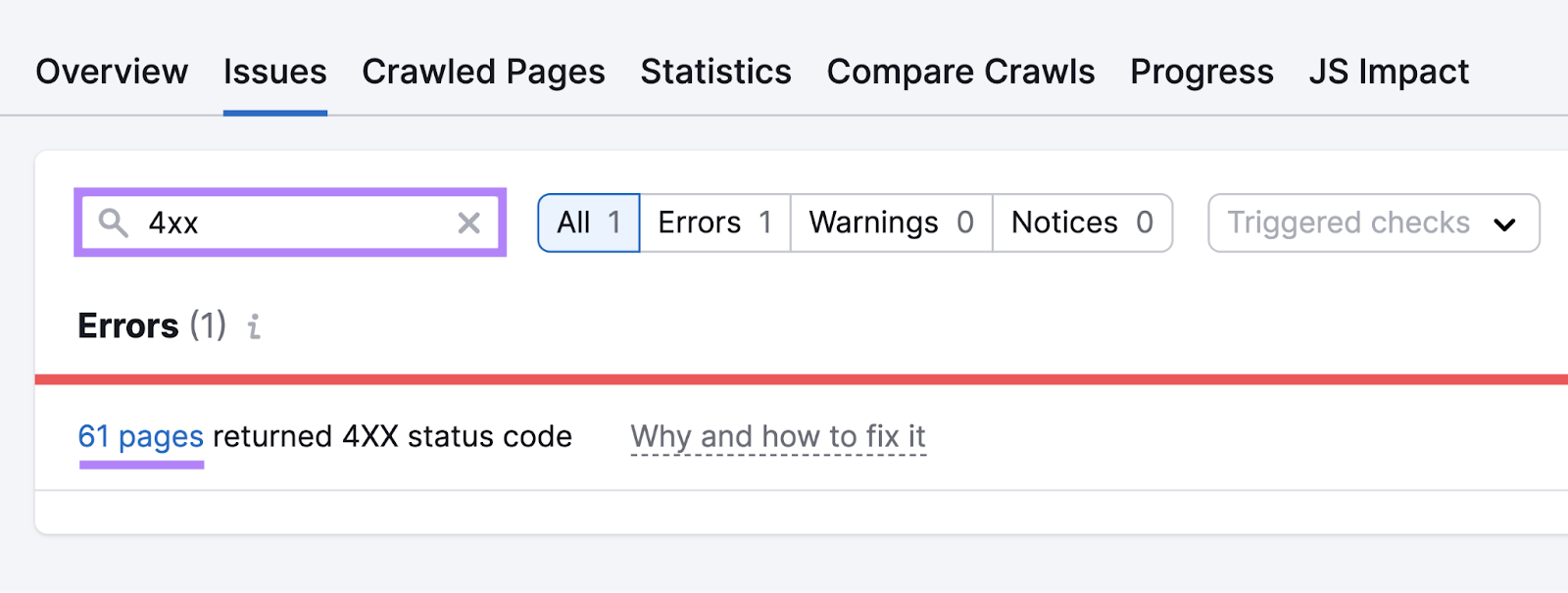

10. Find & Fix Broken Pages

Having broken pages on your website negatively affects user experience.

Here’s an example of what one looks like:

And if those pages have backlinks, they go wasted because they point to dead resources.

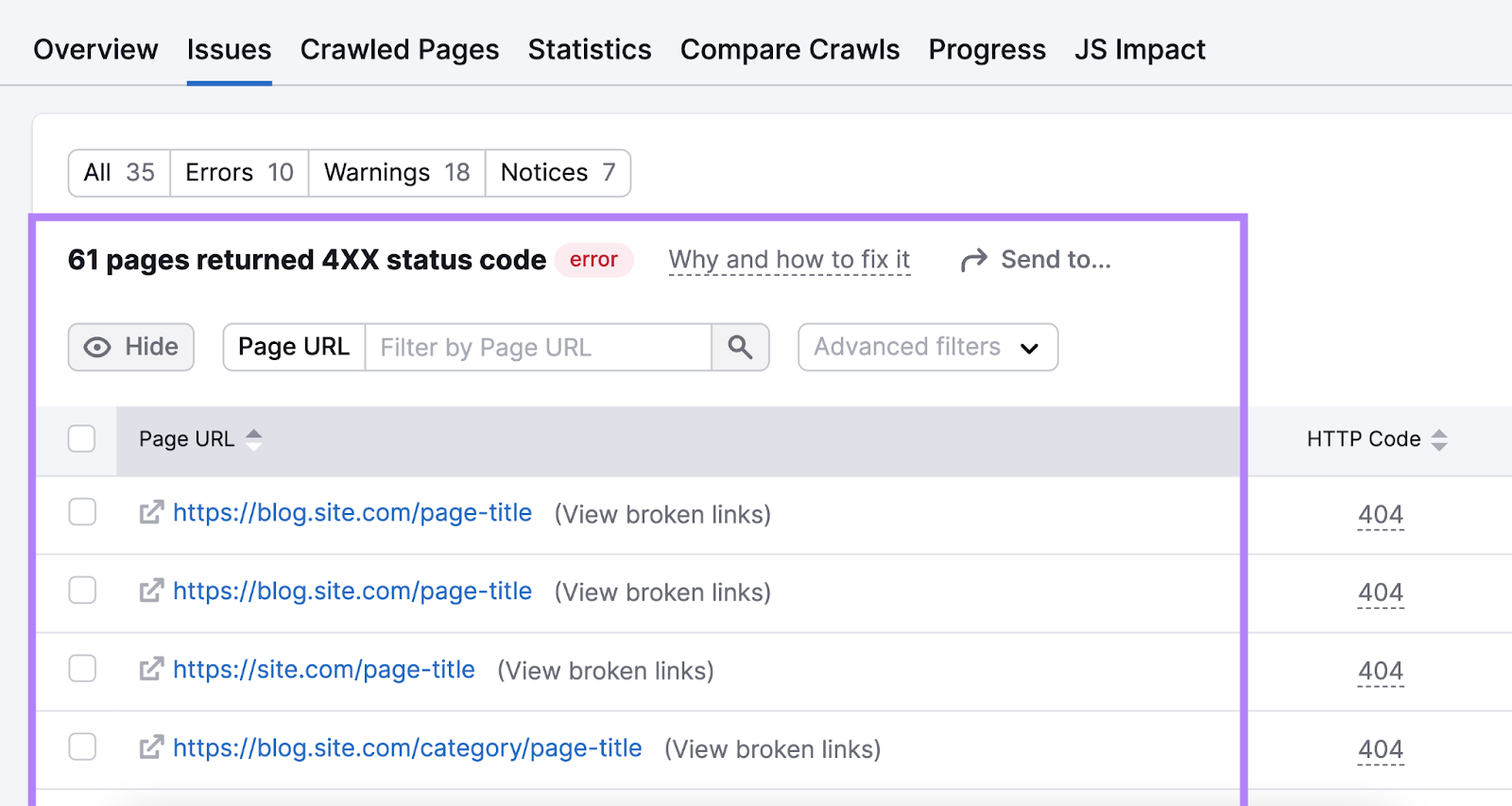

To find broken pages on your site, crawl your site using Semrush’s Site Audit.

Then, go to the “Issues” tab. And search for “4xx.”

It’ll show you if you have broken pages on your site. Click on the “# pages” link to get a list of pages that are dead.

To fix broken pages, you have two options:

- Reinstate pages that were accidentally deleted

- Redirect old pages you no longer want to other relevant pages on your site

After fixing your broken pages, you need to remove or update any internal links that point to your old pages.

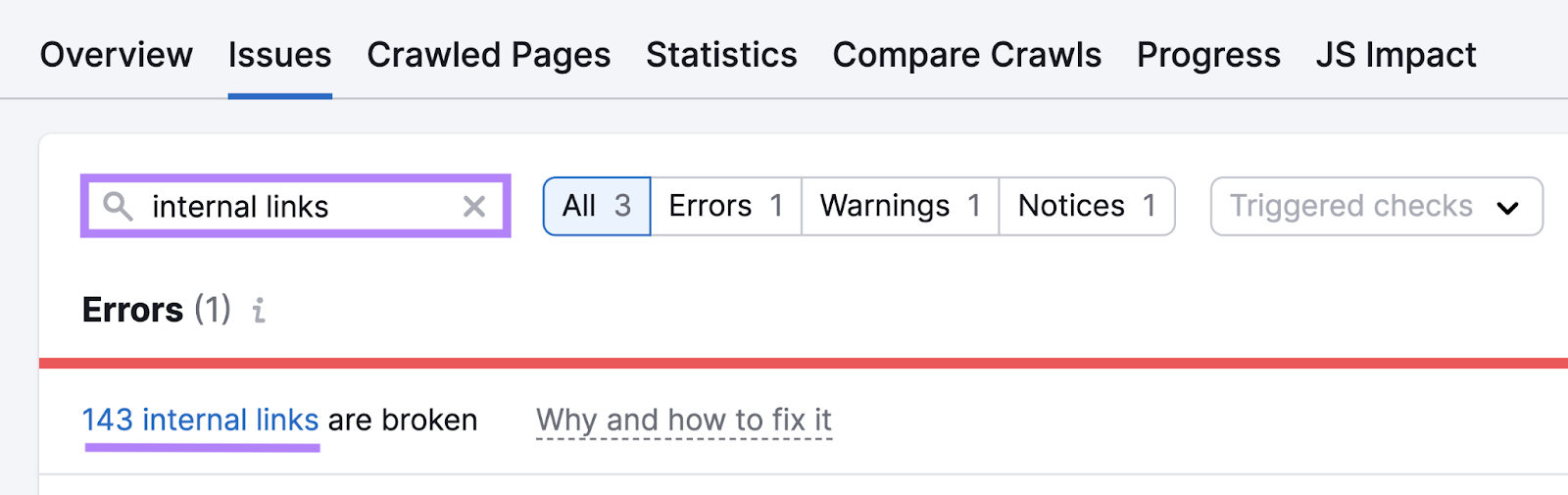

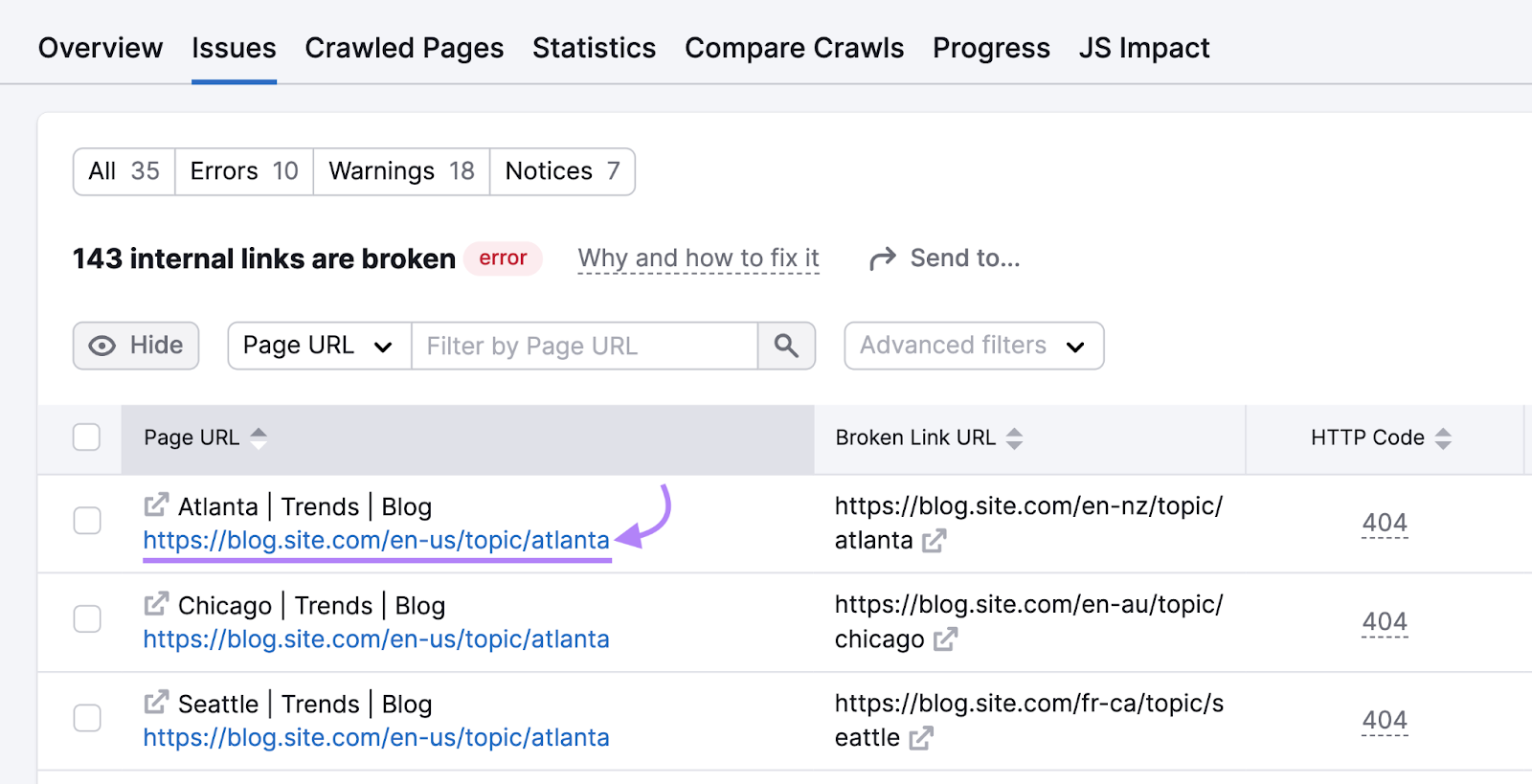

To do that, go back to the “Issues” tab. And search for “internal links.” The tool will show you if you have broken internal links.

If you do, click on the “# internal links” button to see a full list of broken pages with links pointing to them. And click on a specific URL to learn more.

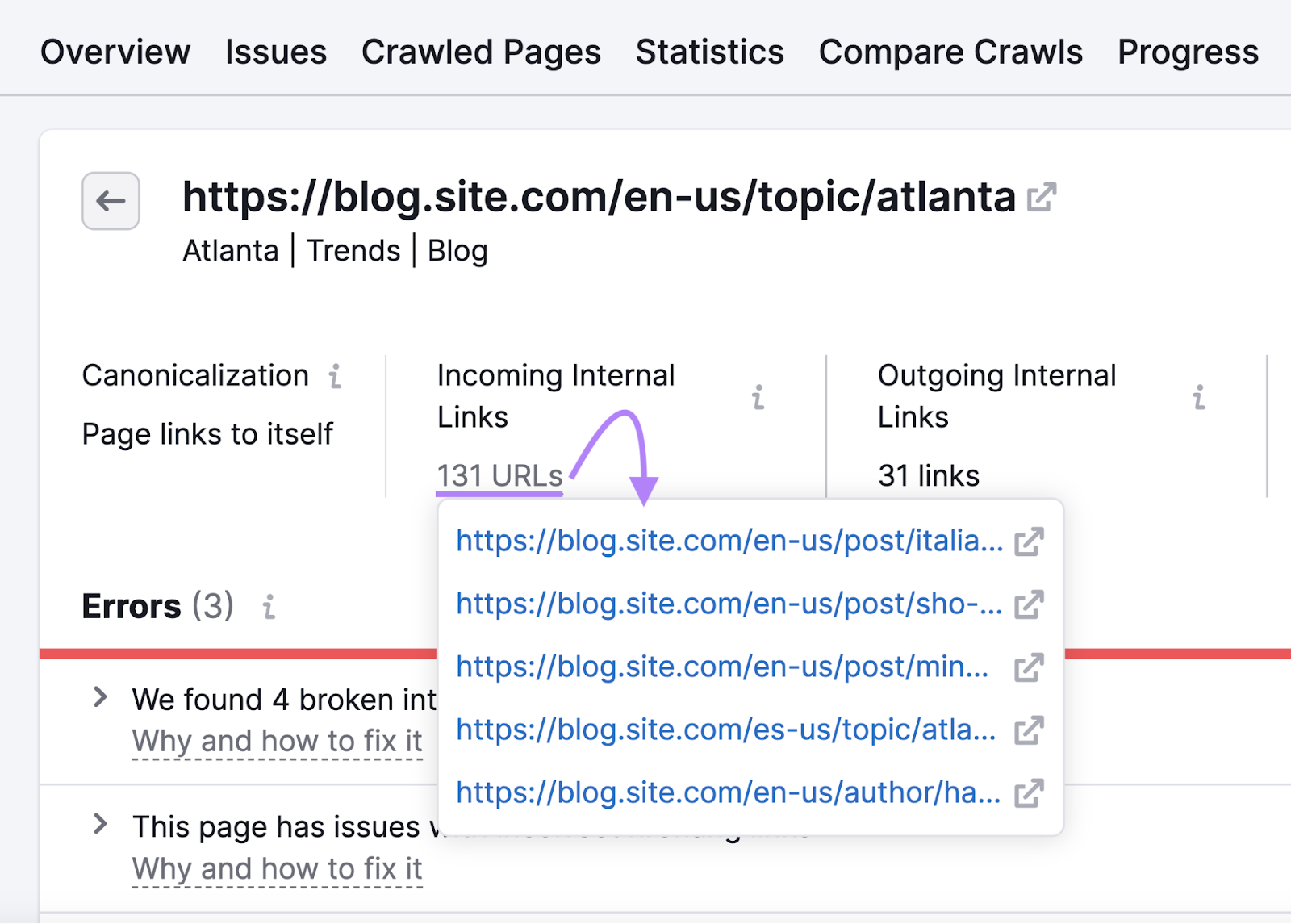

On the next page, click the “# URLs” button, found under “Incoming Internal Links,” to get a list of pages pointing to that broken page.

Update internal links pointing to broken pages with links to their updated locations.
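The same lookup can be sketched in Python if you have crawl data exported from any tool. The example assumes two hypothetical inputs: a mapping of each URL to its HTTP status code, and a mapping of each page to the internal links it contains. The function name and all of the data are made up for illustration.

```python
def broken_internal_links(status_by_url, links):
    """Map each broken (4xx) URL to the pages that link to it."""
    broken = {url for url, status in status_by_url.items() if 400 <= status < 500}
    report = {}
    for source, targets in links.items():
        for target in targets:
            if target in broken:
                report.setdefault(target, []).append(source)
    return report


# Hypothetical crawl results.
statuses = {"/": 200, "/pricing/": 200, "/old-page/": 404}
internal_links = {
    "/": ["/pricing/", "/old-page/"],
    "/pricing/": ["/old-page/"],
}

print(broken_internal_links(statuses, internal_links))
# {'/old-page/': ['/', '/pricing/']}
```

The pages listed for each broken URL are the ones whose links need to be removed or updated.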

11. Optimize for the Core Web Vitals

The Core Web Vitals are speed metrics that Google uses to measure user experience.

These metrics include:

- Largest Contentful Paint (LCP): Calculates the time a webpage takes to load its largest element for a user

- First Input Delay (FID): Measures the time it takes to react to a user’s first interaction with a webpage

- Cumulative Layout Shift (CLS): Measures the unexpected shifts in layouts of various elements on a webpage

To ensure your website is optimized for the Core Web Vitals, you need to aim for the following scores:

- LCP: 2.5 seconds or less

- FID: 100 milliseconds or less

- CLS: 0.1 or less
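These targets are easy to encode as a quick pass/fail check. The Python sketch below uses the thresholds listed above; the function name and the measurement values are hypothetical and would normally come from a tool such as PageSpeed Insights or Google Search Console.

```python
# Targets from the list above: LCP in seconds, FID in milliseconds, CLS unitless.
THRESHOLDS = {"LCP": 2.5, "FID": 100, "CLS": 0.1}


def failing_metrics(measurements):
    """Return the names of the metrics that exceed their target."""
    return [
        metric
        for metric, limit in THRESHOLDS.items()
        if measurements[metric] > limit
    ]


# Hypothetical measurements for one page.
page_metrics = {"LCP": 3.1, "FID": 80, "CLS": 0.1}
print(failing_metrics(page_metrics))  # ['LCP']
```

A value exactly at the target counts as passing, because the targets are stated as “or less.”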

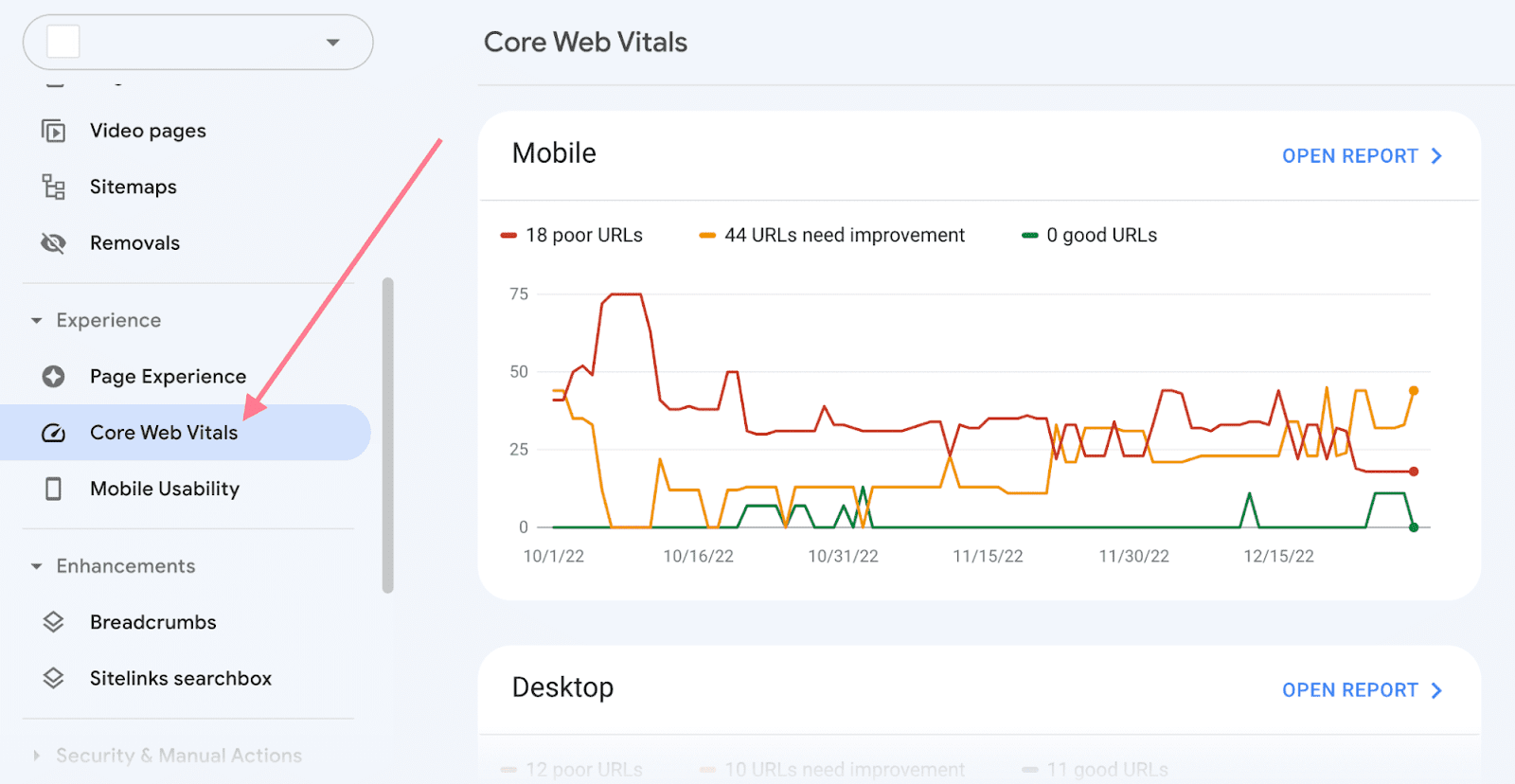

You can check your website’s performance for the Core Web Vitals metrics in Google Search Console.

To do this, go to the “Core Web Vitals” report.

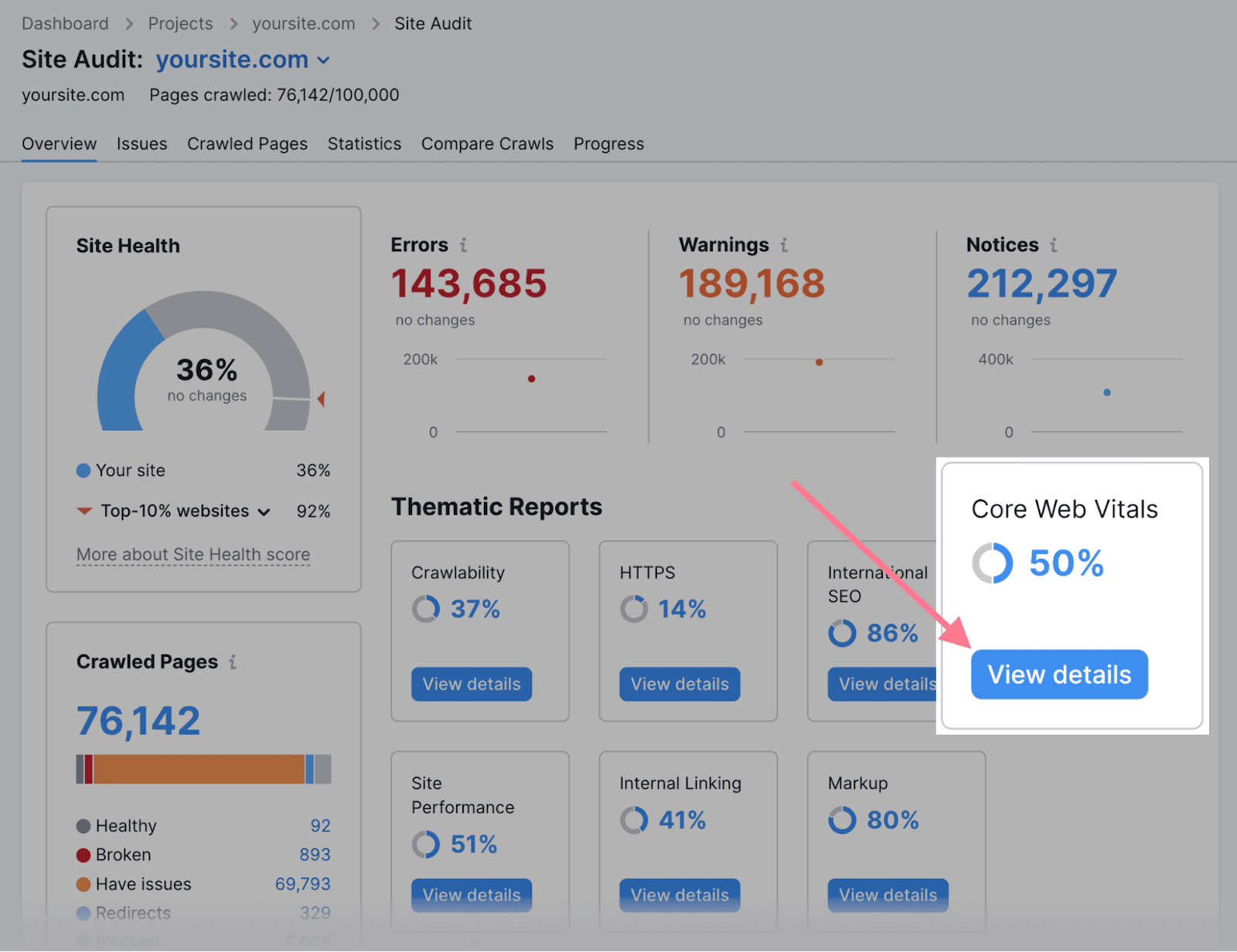

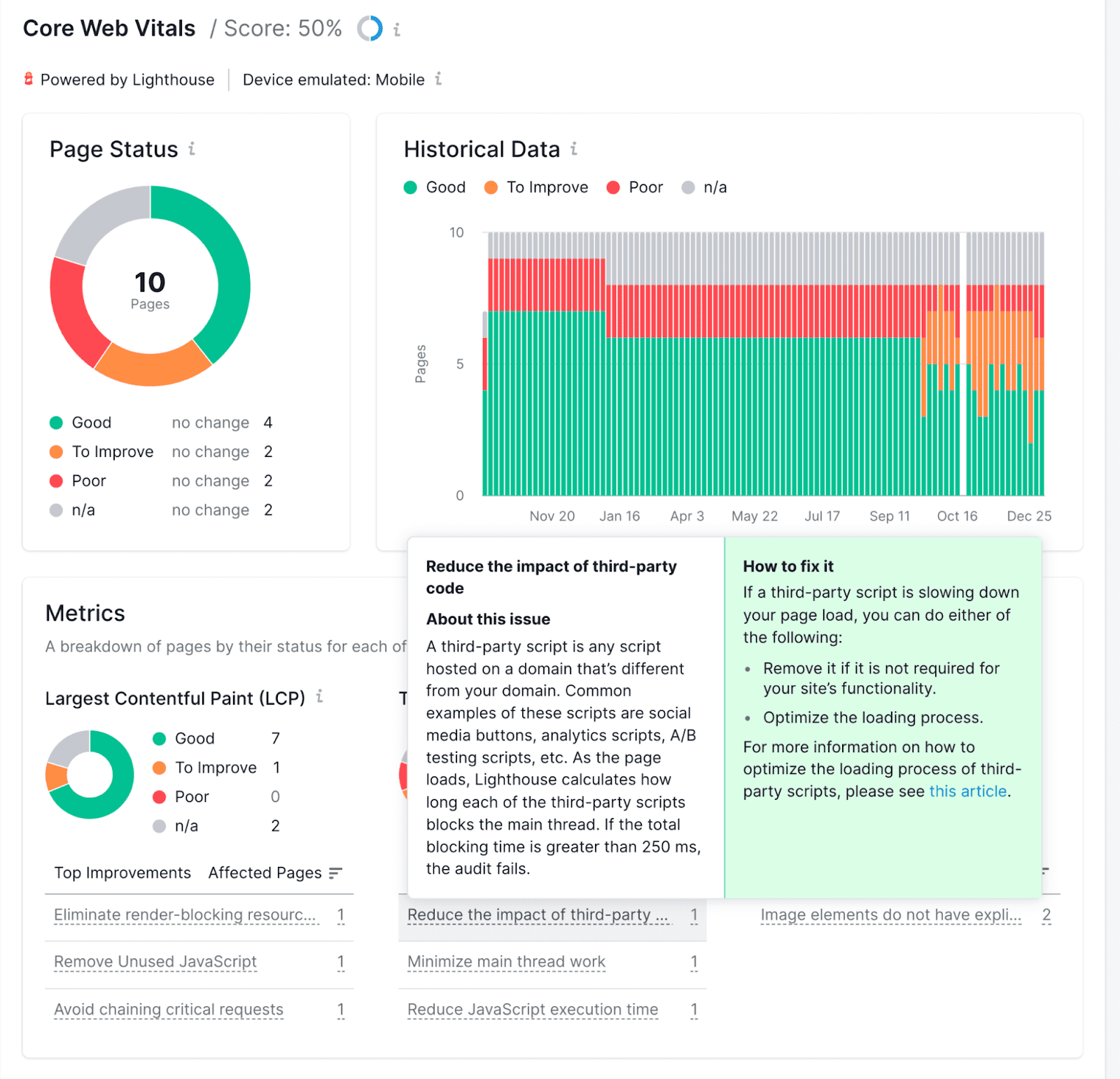

You can also use Semrush to see a report specifically created around the Core Web Vitals.

In the Site Audit tool, navigate to “Core Web Vitals” and click “View details.”

This will open a report with a detailed record of your site’s Core Web Vitals performance and recommendations for fixing any issues.

Further reading: Core Web Vitals: A Guide to Improving Page Speed

12. Use Hreflang for Content in Multiple Languages

If your site has content in multiple languages, you need to use hreflang tags.

Hreflang is an HTML attribute used for specifying a webpage’s language and geographical targeting. And it helps Google serve the correct versions of your pages to different users.

For example, we have multiple versions of our homepage in different languages. This is our homepage in English:

And here’s our homepage in Spanish:

Each of our different versions uses hreflang tags to tell Google who the intended audience is.

This tag is pretty simple to implement.

Just add the appropriate hreflang tags in the <head> section of all versions of the page.

For example, if you have your homepage in English, Spanish, and Portuguese, you’ll add these hreflang tags to all of those pages:

<link rel="alternate" hreflang="x-default" href="https://yourwebsite.com" />

<link rel="alternate" hreflang="es" href="https://yourwebsite.com/es/" />

<link rel="alternate" hreflang="pt" href="https://yourwebsite.com/pt/" />

<link rel="alternate" hreflang="en" href="https://yourwebsite.com" />
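Because every language version needs the same full set of tags, generating them from a single mapping helps avoid mismatches. Here is a minimal Python sketch: the function name is hypothetical, and the URLs are the placeholder addresses from the example above.

```python
from html import escape


def hreflang_tags(versions, default_url):
    """Build the hreflang <link> tags to place on every version of a page."""
    tags = [
        f'<link rel="alternate" hreflang="x-default" href="{escape(default_url, quote=True)}" />'
    ]
    for lang, url in versions.items():
        tags.append(
            f'<link rel="alternate" hreflang="{escape(lang, quote=True)}" '
            f'href="{escape(url, quote=True)}" />'
        )
    return tags


# One entry per language version of the homepage.
homepage_versions = {
    "en": "https://yourwebsite.com",
    "es": "https://yourwebsite.com/es/",
    "pt": "https://yourwebsite.com/pt/",
}

for tag in hreflang_tags(homepage_versions, default_url="https://yourwebsite.com"):
    print(tag)
```

The same output goes into the `<head>` of the English, Spanish, and Portuguese pages alike.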

13. Stay On Top of Technical SEO Issues

Technical optimization is not a one-off thing. New problems will likely pop up over time as your website grows in complexity.

That’s why regularly monitoring your technical SEO health and fixing issues as they arise is important.

You can do this using Semrush’s Site Audit tool. It monitors over 140 technical SEO issues.

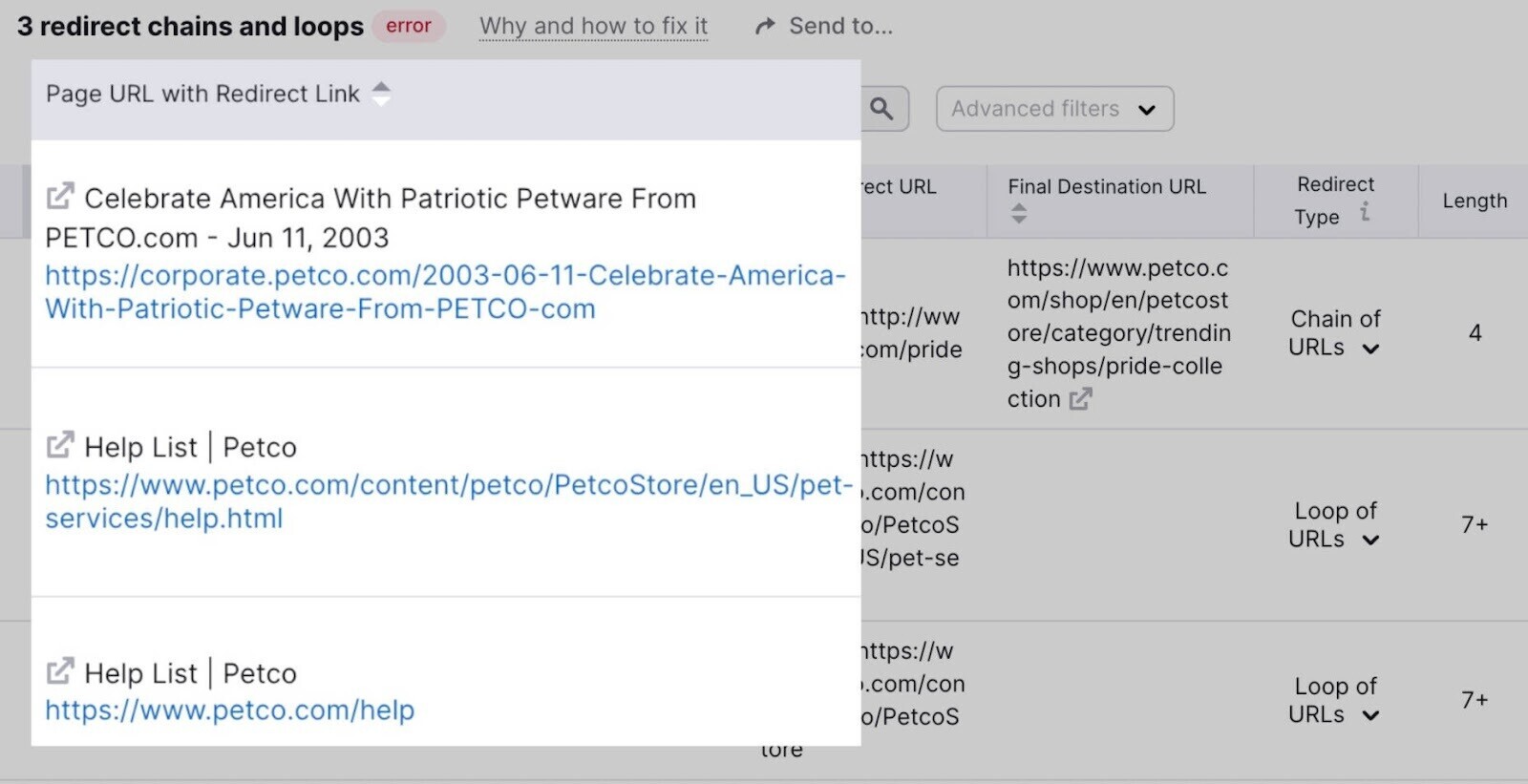

For example, if we audit Petco’s website, we find three issues related to redirect chains and loops.

Redirect chains and loops are bad for SEO because they contribute to a negative user experience.

And you’re unlikely to spot them by chance. So, this issue would have likely gone unnoticed without a crawl-based audit.
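To illustrate what a crawler looks for, the Python sketch below follows redirects through a simple mapping of source URL to destination URL and reports whether the path is a chain or a loop. The function name and the redirect map are hypothetical, and this is not a description of how Site Audit detects these issues.

```python
def trace_redirects(start, redirects, max_hops=10):
    """Follow redirects from a URL and return (chain, is_loop)."""
    chain = [start]
    seen = {start}
    current = start
    while current in redirects and len(chain) <= max_hops:
        current = redirects[current]
        chain.append(current)
        if current in seen:
            return chain, True  # we came back to a URL already visited
        seen.add(current)
    return chain, False


# Hypothetical redirect rules: /a -> /b -> /c is a chain, /x <-> /y is a loop.
redirect_map = {"/a": "/b", "/b": "/c", "/x": "/y", "/y": "/x"}

print(trace_redirects("/a", redirect_map))  # (['/a', '/b', '/c'], False)
print(trace_redirects("/x", redirect_map))  # (['/x', '/y', '/x'], True)
```

A chain with more than one hop is fixed by pointing the first URL straight at the final destination, and a loop is fixed by removing one of the conflicting rules.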

Regularly running these technical SEO audits gives you action items to improve your search performance.