The emergence of Large Language Models (LLMs) has transformed the landscape of natural language processing (NLP). The introduction of the transformer architecture marked a pivotal moment, ushering in a new era in NLP. While a universal definition for LLMs is lacking, they are generally understood as versatile machine learning models adept at handling various natural language processing tasks simultaneously, which reflects these models' rapid evolution and impact on the field.

Four essential tasks for LLMs are natural language understanding, natural language generation, knowledge-intensive tasks, and reasoning ability. The evolving landscape includes various architectural strategies, such as models employing both encoders and decoders, encoder-only models like BERT, and decoder-only models like GPT-4. GPT-4's decoder-only approach excels in natural language generation tasks. Despite the improved performance of GPT-4 Turbo, its reported 1.7 trillion parameters raise concerns about substantial energy consumption, emphasizing the need for sustainable AI solutions.

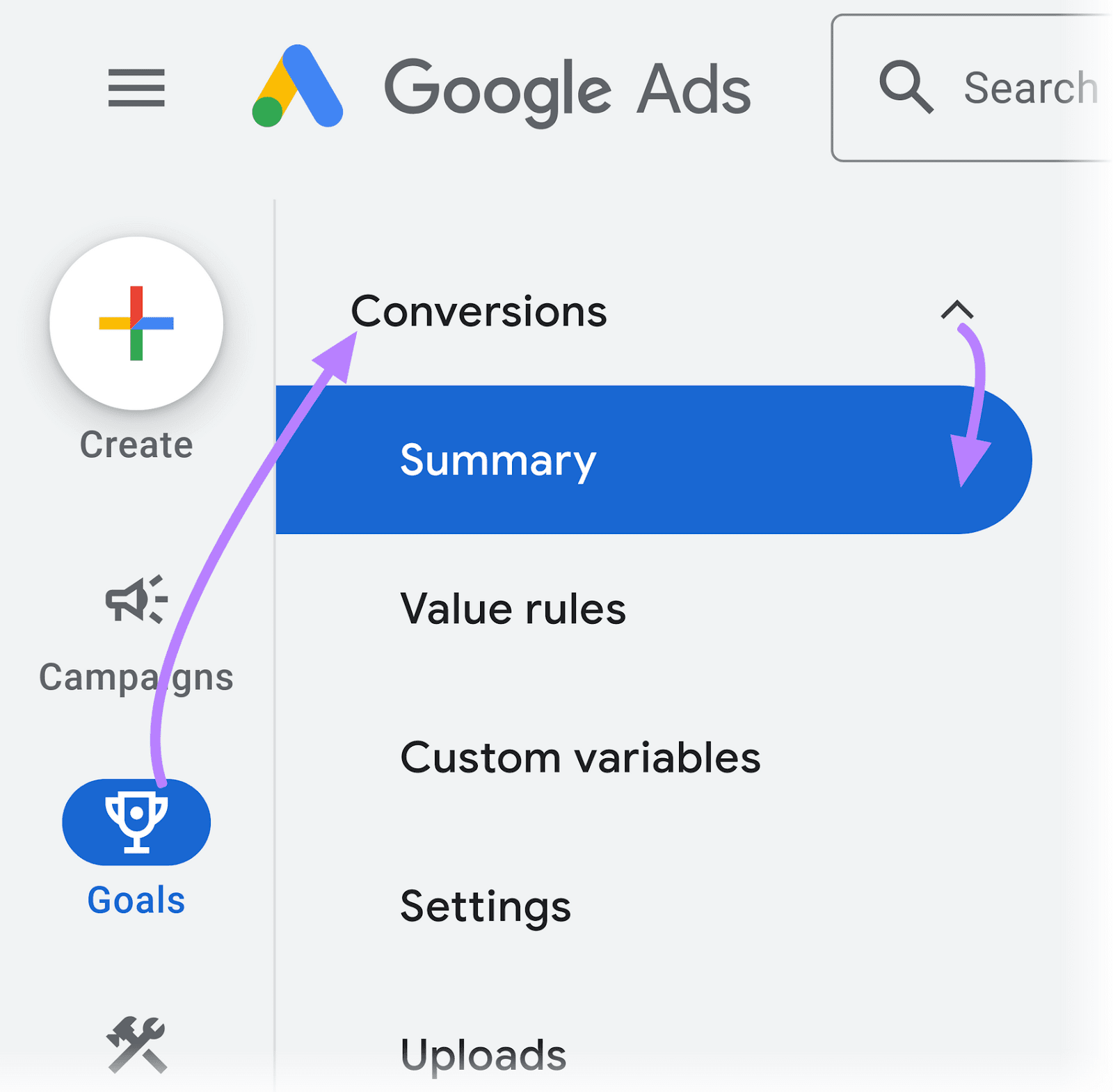

The researchers from McGill University have proposed a pioneering approach, demonstrated on the Pythia 70M model, to enhancing the efficiency of LLM pre-training by advocating knowledge distillation for cross-architecture transfer. Drawing inspiration from the efficient Hyena mechanism, the method replaces attention heads in transformer models with Hyena, providing a cost-effective alternative to conventional pre-training. This approach tackles the intrinsic challenge of processing long contextual information with attention mechanisms whose cost grows quadratically with sequence length, offering a promising avenue for more efficient and scalable LLMs.

Because Hyena takes the place of the attention heads, the resulting model avoids the quadratic cost that attention incurs on long contexts. The researchers report that this approach improves inference speed and outperforms traditional pre-training in accuracy and efficiency. The method strives to balance computational power and environmental impact, offering a cost-effective and environmentally conscious alternative to conventional pre-training methods.
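To make the efficiency argument concrete, here is a minimal sketch of the kind of long convolution that Hyena-style operators rely on, computed with an FFT in O(L log L) time instead of the O(L^2) cost of a direct loop. It is an illustration under stated assumptions, not the authors' implementation: the function names are hypothetical, the filter is random (Hyena parameterizes its filters implicitly with a small network), and the gating step is reduced to a single element-wise product.

```python
import numpy as np

def causal_fft_conv(u, h):
    """Causal convolution of a length-L signal u with a length-L filter h.

    Zero-padding to 2L prevents circular wrap-around, so the first L outputs
    equal a direct causal convolution while costing O(L log L).
    """
    L = u.shape[0]
    n = 2 * L
    y = np.fft.irfft(np.fft.rfft(u, n=n) * np.fft.rfft(h, n=n), n=n)
    return y[:L]

def direct_causal_conv(u, h):
    """Reference O(L^2) implementation, used only to check the FFT version."""
    L = u.shape[0]
    y = np.zeros(L)
    for t in range(L):
        for s in range(t + 1):
            y[t] += h[s] * u[t - s]
    return y

def gated_long_conv(v, gate, h):
    """Toy Hyena-style step (assumption): element-wise gate times long convolution."""
    return gate * causal_fft_conv(v, h)

# Placeholder inputs: random values stand in for learned projections and filters.
rng = np.random.default_rng(0)
L = 64
v = rng.normal(size=L)
gate = rng.normal(size=L)
h = rng.normal(size=L)

out = gated_long_conv(v, gate, h)
fft_matches_direct = np.allclose(causal_fft_conv(v, h), direct_causal_conv(v, h))
```

The check against `direct_causal_conv` confirms that the FFT route produces the same values as the quadratic loop, which is the property that lets a convolution-based layer scale to long contexts more cheaply than attention.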

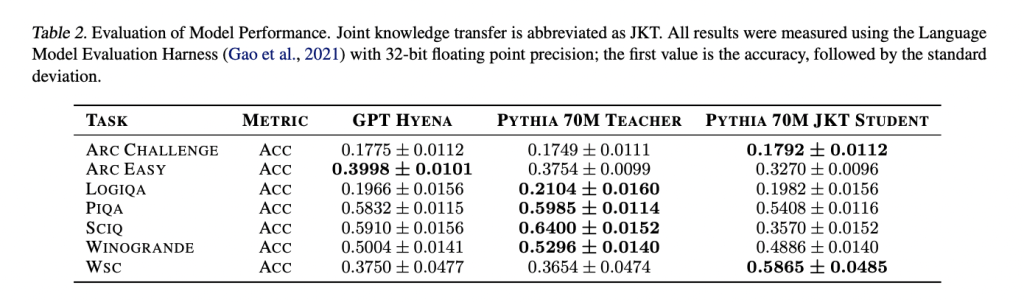

The study presents perplexity scores for four models: Pythia-70M, a pre-trained Hyena model, a Hyena student model distilled with MSE loss, and a Hyena student model fine-tuned after distillation. The pre-trained Hyena model shows improved perplexity compared to Pythia-70M. Distillation further enhances performance, with the lowest perplexity achieved by the Hyena student model after fine-tuning. In language evaluation tasks using the Language Model Evaluation Harness, the Hyena-based models demonstrate competitive performance across various natural language tasks compared to the attention-based Pythia-70M teacher model.
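As a rough illustration of distillation with an MSE loss, the sketch below scores how far a student's output vectors are from a teacher's at the same positions. The article does not say which outputs are matched, so the choice of per-position vectors is an assumption, and the function name and toy values are hypothetical.

```python
def mse_distillation_loss(teacher_out, student_out):
    """Mean squared error between teacher and student outputs.

    Both arguments are lists of equal-length vectors, one vector per position.
    Which activations are compared is an assumption made for illustration.
    """
    if len(teacher_out) != len(student_out):
        raise ValueError("teacher and student must cover the same positions")
    total, count = 0.0, 0
    for t_vec, s_vec in zip(teacher_out, student_out):
        if len(t_vec) != len(s_vec):
            raise ValueError("teacher and student vectors must have equal width")
        for t, s in zip(t_vec, s_vec):
            total += (t - s) ** 2
            count += 1
    return total / count

# Toy values: two positions, two features each.
teacher = [[1.0, 2.0], [3.0, 4.0]]
student = [[1.0, 2.5], [2.0, 4.0]]
loss = mse_distillation_loss(teacher, student)  # (0 + 0.25 + 1 + 0) / 4 = 0.3125
```

During training, the student's parameters would be updated to reduce this value, pulling its outputs toward the teacher's.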

To conclude, the researchers from McGill University have proposed a knowledge-transfer approach built on the Pythia 70M model. Using joint knowledge transfer with Hyena operators as a substitute for attention enhances the computational efficiency of LLMs during training. Evaluated by perplexity on the OpenWebText and WikiText datasets, the Pythia 70M Hyena model that undergoes progressive knowledge transfer outperforms its pre-trained counterpart. Fine-tuning after knowledge transfer further reduces perplexity, indicating improved model performance. Although the student Hyena model shows slightly lower accuracy in natural language tasks than the teacher model, the results suggest that joint knowledge transfer with Hyena offers a promising alternative for training more computationally efficient LLMs.
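For readers unfamiliar with the metric, perplexity is the exponential of the average negative log-likelihood a model assigns to the tokens of a text, so lower values are better. The sketch below computes it from per-token log-probabilities; the function name and the toy inputs are illustrative and are not taken from the paper.

```python
import math

def perplexity(token_log_probs):
    """Perplexity from natural-log probabilities of each observed token."""
    if not token_log_probs:
        raise ValueError("need at least one token")
    avg_nll = -sum(token_log_probs) / len(token_log_probs)
    return math.exp(avg_nll)

# A model that assigns probability 1/4 to every token has perplexity 4:
# it is as uncertain as a uniform choice among four options.
uniform_four = [math.log(0.25)] * 8
ppl_uniform = perplexity(uniform_four)

# A more confident model (probability 1/2 per token) scores lower.
confident = [math.log(0.5)] * 8
ppl_confident = perplexity(confident)
```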
